The Scala script in the previous section, which used the DbUtils package to create the mount, exercised only a small portion of that package's functionality. In this section, I would like to introduce some more features of the DbUtils package and the Databricks File System (DBFS). The package's help option can be called from a Notebook connected to a Databricks cluster to learn more about its structure and functionality. As the following screenshot shows, executing dbutils.fs.help() in a Scala Notebook provides help on the fsutils, cache, and mount-based functionality:

It is also possible to obtain help on individual functions, as the text in the previous screenshot shows. The example in the following screenshot explains the cacheTable function, providing descriptive text and a sample function call with the parameter and return types:
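Since the screenshots show only output, the two help calls can be sketched as follows. This assumes a Scala Notebook attached to a running cluster, where the dbutils object is predefined. The function name cp is my choice for illustration; any function name listed by the overall help output (such as cacheTable on the runtime described here) can be passed in the same way.

```scala
// Assumes a Databricks Scala Notebook, where `dbutils` is predefined.

// Print an overview of the file system utilities
dbutils.fs.help()

// Print the description and signature of a single function.
// "cp" is an illustrative choice, not taken from the original text.
dbutils.fs.help("cp")
```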

The next section will briefly examine the DBFS before moving on to examining more of the dbutils functionality.

The DBFS can be accessed using URLs of the form dbfs:/*, and using the functions available within dbutils.fs.

The previous screenshot shows the /mnt file system being examined using the ls function, which lists the mount directories s3data and s3data1. These were the directories created during the previous Scala S3 mount example.
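A minimal sketch of such a listing is given here, assuming a Databricks Scala Notebook in which dbutils is predefined and the /mnt directory exists. The variable name mountDirs is my own; the directories printed will depend on what has been mounted on your cluster.

```scala
// Assumes a Databricks Scala Notebook, where `dbutils` is predefined.

// List the contents of /mnt; each entry is returned as a FileInfo record
val mountDirs = dbutils.fs.ls("dbfs:/mnt")

// Print the path, name, and size held in each FileInfo
mountDirs.foreach(info => println(s"${info.path}  ${info.name}  ${info.size}"))
```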

The fsutils group of functions within the dbutils package covers functions such as cp, head, mkdirs, mv, put, and rm. The help calls shown previously provide more information about them. You can create a directory on DBFS using the mkdirs call, as shown next. Note that I have created a number of directories under dbfs:/ in this session, named data*. The following example creates the directory called data2:
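The directory creation might look like the following sketch, again assuming a Databricks Scala Notebook with dbutils predefined. The variable names are mine, and the listing of dbfs:/ is added only to confirm the result.

```scala
// Assumes a Databricks Scala Notebook, where `dbutils` is predefined.

// Create the data2 directory under the DBFS root; mkdirs returns a Boolean
val created: Boolean = dbutils.fs.mkdirs("dbfs:/data2")

// List the DBFS root to confirm that the new directory is present
val rootEntries = dbutils.fs.ls("dbfs:/")
rootEntries.foreach(info => println(info.path))
```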

As the ls call in the previous screenshot shows, many default directories already exist on DBFS. For instance, see the following:

- /tmp is a temporary area
- /mnt is a mount point for remote directories (that is, S3)
- /user is a user storage area that currently contains Hive
- /mount is an empty directory
- /FileStore is a storage area for tables, JARs, and job JARs
- /databricks-datasets contains datasets provided by Databricks

The dbutils cp command, shown next, copies a file to a DBFS location. In this instance, the external1.txt file has been copied to the /data2 directory, as shown in the following screenshot:

The head function returns up to the first maxBytes bytes from the start of a file on DBFS. The following example shows the format of the external1.txt file. This is useful because it tells me that this is a CSV file, and therefore how to process it.
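The copy and head calls can be sketched together as follows, assuming a Databricks Scala Notebook with dbutils predefined. The original location of external1.txt is not given in the text, so the staging path under /tmp and the CSV contents written with put are hypothetical, included only to make the sketch self-contained.

```scala
// Assumes a Databricks Scala Notebook, where `dbutils` is predefined.

// Hypothetical staging file and contents, created only for this illustration
val source = "dbfs:/tmp/external1.txt"
val target = "dbfs:/data2/external1.txt"
dbutils.fs.put(source, "id,name\n1,alpha\n2,beta\n", true) // true = overwrite

// Make sure the target directory exists, then copy the file into it
dbutils.fs.mkdirs("dbfs:/data2")
val copied: Boolean = dbutils.fs.cp(source, target)

// Return at most the first 100 bytes of the copied file as a String
val preview: String = dbutils.fs.head(target, 100)
println(preview)
```

Keeping maxBytes small is usually enough to recognise a file's format without pulling a large file back into the Notebook.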

It is also possible to move files within DBFS. The following screenshot shows the mv command being used to move the external1.txt file from the directory data2 to the directory called data1. The ls command is then used to confirm the move.

Finally, the remove function (rm) is used to remove the file called external1.txt, which was just moved. The following ls function call shows that the file no longer exists within the data1 directory, because there is no FileInfo record in the function output:
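The move and remove steps can be sketched as follows, assuming a Databricks Scala Notebook with dbutils predefined. So that the sketch stands alone, it first writes a small file into data2 using put; the file contents and the variable names are hypothetical.

```scala
// Assumes a Databricks Scala Notebook, where `dbutils` is predefined.

// Hypothetical setup: make sure both directories and the file exist
dbutils.fs.mkdirs("dbfs:/data1")
dbutils.fs.mkdirs("dbfs:/data2")
dbutils.fs.put("dbfs:/data2/external1.txt", "id,name\n1,alpha\n", true)

// Move the file from data2 to data1, then list data1 to confirm the move
dbutils.fs.mv("dbfs:/data2/external1.txt", "dbfs:/data1/external1.txt")
val afterMove = dbutils.fs.ls("dbfs:/data1").map(_.name)

// Remove the file, then list data1 again; no FileInfo should remain for it
dbutils.fs.rm("dbfs:/data1/external1.txt")
val afterRemove = dbutils.fs.ls("dbfs:/data1").map(_.name)

println(s"after mv: $afterMove, after rm: $afterRemove")
```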

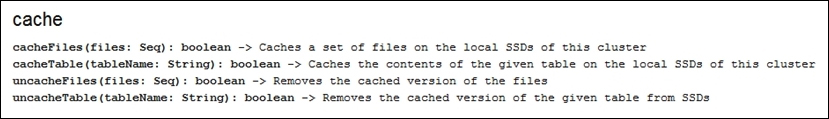

The cache functionality within DbUtils provides the means to cache (and uncache) both tables and files to DBFS. In fact, the tables are also saved as files in the DBFS directory called /FileStore. The following screenshot shows the cache functions that are available:

The mount functionality allows you to mount remote file systems, refresh mounts, display mount details, and unmount specific mounted directories. An example of an S3 mount was given in the previous sections, so I won't repeat it here. The following screenshot shows the output from the mounts function. I created the s3data and s3data1 mounts; the other two mounts, for root and datasets, already existed. The mounts are returned as a sequence of MountInfo objects. I have rearranged the text to be more meaningful, and to be better presented on the page.
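A minimal sketch of listing the mounts is given here, assuming a Databricks Scala Notebook with dbutils predefined, and assuming that each MountInfo exposes mountPoint and source fields. The variable name mountList is my own, and the entries printed will depend on your cluster.

```scala
// Assumes a Databricks Scala Notebook, where `dbutils` is predefined.

// Retrieve the current mounts as a sequence of MountInfo objects
val mountList = dbutils.fs.mounts()

// Print one line per mount: the DBFS mount point and the remote source
mountList.foreach(m => println(s"${m.mountPoint} -> ${m.source}"))
```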