Chapter 2: Purple Teaming – a Generic Approach and a New Model

Purple teaming is an under-documented process. There is no official documentation for it, and at the time of writing even Wikipedia has no dedicated article on the subject. The problem is amplified because many vendors explain and develop their own vision of purple teaming activities based on the products they market.

This global issue leads to a situation where a vendor-agnostic approach, built by parties and researchers with no financial interest in a product, is required to help people understand and implement purple team strategies in their companies.

In this chapter, we are proposing our own purple teaming vision; we don't pretend it is the best or the official one, but our vision is result-centric, based on our various purple teaming experiences using different scopes and approaches. We have also tried to leverage existing efforts that the community has made to help the industry mature the purple teaming process. We wanted to offer you practical processes and models that are as generic as possible, with documentation, collaboration tools, and continuous improvement capabilities in mind.

In this chapter, we will cover the following topics:

- A purple teaming definition

- Roles and responsibilities

- A purple teaming process description

- The purple teaming maturity model

- Purple teaming eXtended

- Purple teaming exercise types

- Purple teaming templates

A purple teaming definition

You might have noticed that we didn't define purple teaming in the first chapter. Therefore, let's start this chapter by defining what purple teaming is and what it is not.

First of all, purple teaming is not a dedicated team. So, be reassured that you don't need to hire additional, hard-to-find security experts to build a new team. In fact, teaming is simply the act of working together as a team. As we've seen in the previous chapter, there are issues currently faced by the traditional approach to red (offensive) and blue (defensive) concepts around security. Purple teaming joins both the red and the blue teams together to act as a virtual team during an exercise called purple teaming. This will ensure that both teams' goals are aligned and that both teams have incentives to help each other.

Purple teaming solves the issue of the "success of one means the failure of the other" mindset and helps an organization to optimize its security efforts in a common direction. It is a collaborative approach that creates a bond between red and blue members to enhance an organization's overall security posture, of course, but also to improve people's skills and communication.

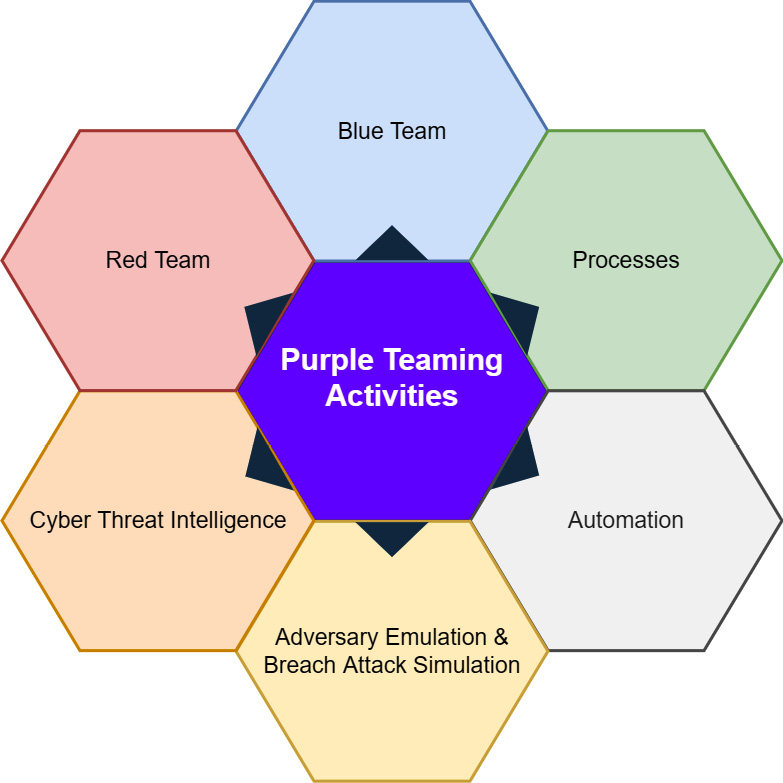

This historical approach can also be enhanced thanks to purple teaming technical solutions. The purple teaming activities flower was eventually introduced, which describes the different components of purple teaming:

Figure 2.1 – A purple teaming activities model

From the previous figure, we can see that the activity is not limited to human interactions with blue and red teams and can also involve the following:

- Processes: Different processes are involved in the activity to provide a continuous improvement life cycle, including activity logs, reporting, and change management.

- Automation: Custom development of continuous security controls based on attacks.

- Breach Attack Simulation (BAS): An operation consisting of replaying one or multiple existing attack techniques manually or relying on an existing tool.

- Adversary Emulation: Identifying different techniques used by a specific attacker, leveraging CTI, then building a plan to replay them in order to be able to test the organization's defenses.

Before digging into the purple teaming process, we need to describe the overall organizational structure encountered during a purple teaming exercise.

Roles and responsibilities

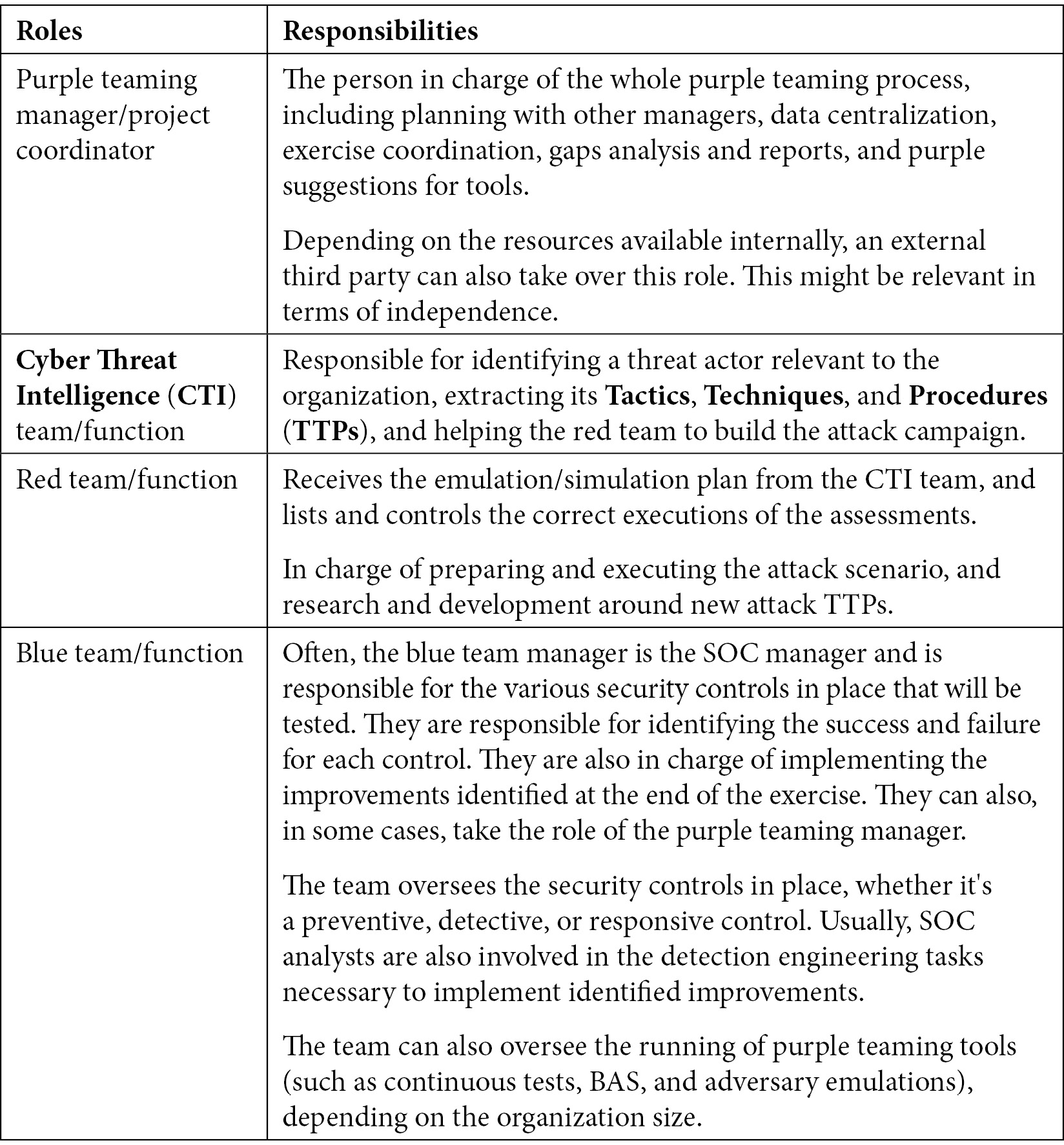

As usual in security, organization is key, especially for purple teaming success. Roles and responsibilities have to be clearly defined to avoid confusion, failure, and tension between teams and to optimize the success of the exercise.

A standard structure would look like this:

Table 2.1 – Purple teaming roles and responsibilities

Of course, the structure may be adapted according to an organization's resources, needs, and objectives.

Indeed, it is common to see companies where a purple team manager or dedicated project manager is missing or merged with other roles. Most of the time, the blue team manager will take the lead on a purple teaming activity; this will ensure that the incident response is not disproportionate and does not block production assets. On the other hand, we might want to introduce independence for the assessment; in that case, it can be necessary to hire an external consultant who will lead the exercise as a coordinator and handle the reporting activities.

In addition, it is also common to see companies that do not have a red team in place (or at least use external resources for scheduled activities).

We may think that the purple teaming process cannot be implemented if no internal red team exists. This is not correct. External resources can still be used collaboratively, and they can be complemented with internal developments and solutions (open source or commercial) for continuous controls and improvements.

The same applies to a Cyber Threat Intelligence (CTI) team, which most organizations don't have. Leveraging external third-party companies and resources is a must-have. Some might still be able to dedicate a Security Operations Center (SOC) analyst to perform this duty.

Now that we have a good understanding of the roles necessary for performing a purple teaming exercise, let's see how the process works.

A purple teaming process description

As we have seen previously, the purple teaming process combines red and blue activities across a joint-venture exercise supported by the CTI team and an exercise coordinator. This combined approach allows global company security to be improved thanks to failure and gap identification.

The Prepare, Execute, Identify, and Remediate approach

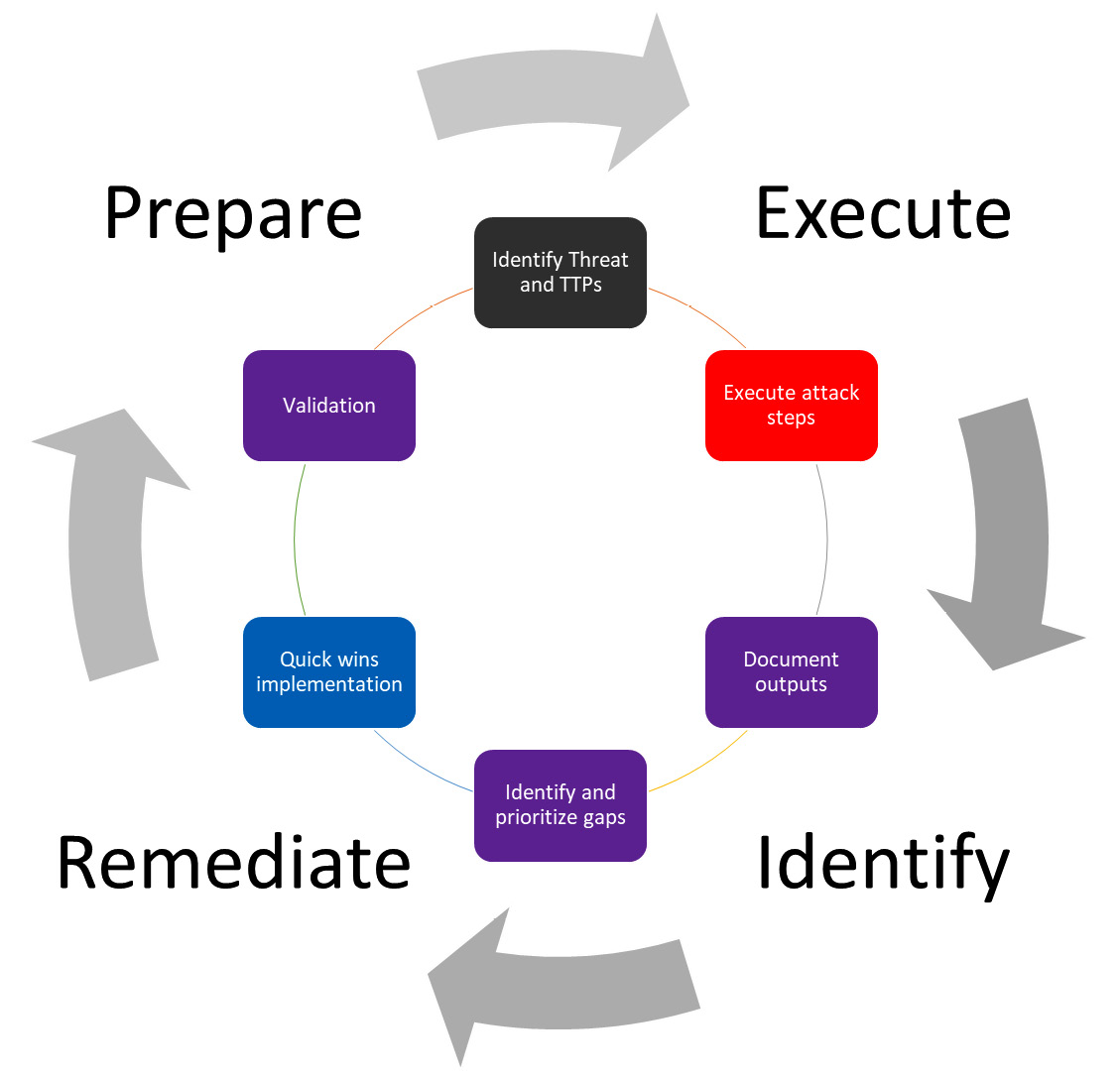

Everyone should be familiar with the Plan-Do-Check-Act (PDCA) process, also called the Deming wheel, which is a generic management tool used to verify and continuously improve processes and products over time. This seems to perfectly fit what purple teaming is trying to achieve, and that is why we have based the purple teaming process on this method, resulting in a more tailored Prepare, Execute, Identify, and Remediate (PEIR) model.

This high-level process is represented in the following figure:

Figure 2.2 – The PEIR process of purple teaming

This scheme represents a high-level purple teaming approach where both blue and red team managers are involved. In such a situation, blue team members may or may not be informed about the exercises. Without crossing the boundaries of red teaming, whose goal is to be stealthy and assess response capabilities, a purple teaming exercise can still be performed in a blind way where most of the blue team members are not informed in order to also assess detection and response capabilities. Indeed, it is possible to simulate red team activities such as injecting logs or deploying unweaponized techniques to evaluate the blue team's overall capabilities and controls, especially investigation, escalation, and response.

Let's now see in a bit more detail each step of the process:

- Prepare: The purple process is initiated by a plan to run security tests (offensive actions, attacks, and scans) on a predefined scope and security controls. This plan can be manually defined (at least for the first iteration) or automated using advanced implementation or solutions (Breach Attack Simulation (BAS), custom developments, adversary emulation, and so on).

The following is a workflow example of this process step:

- All members sit at the same table for this phase.

- The CTI team starts by selecting a threat actor and the TTPs of the attack that are relevant to the organization, depending on its context and environment.

- The CTI team presents the TTPs that the red team will prepare to perform the selected scenario.

- The CTI and the red team present the detailed TTPs to the blue team, which documents and identifies expected security controls (prevention, detection, and hunts) for each presented TTP. This step can be skipped if the blind approach is selected.

- Execute: Attacks are executed in person by a red team or emulated with a tool (continuously or temporarily). The current active defense systems are expected to detect TTPs partially or totally to provide security-related information.

The following is a workflow example of this process step:

- The red team starts executing the selected attack scenario.

- The blue team will detect and respond to these TTPs.

- The blue team manager will report the findings to the purple team manager.

- Identify: Gap detection and prioritization are performed. All related information will be reported to the purple teaming process owner (the project manager or SOC manager) or to a technical solution that will identify detection gaps and new unseen security risks.

The following is a workflow example of this process step:

- All members sit at the same table for this phase.

- All teams go through each step of the attack and describe all issues, successes, and failures to document the efficiency of all security controls identified at the beginning of the exercise.

- The purple team manager documents all findings.

- All members assess and prioritize improvements according to the risk reduction and the implementation effort.

- Remediate: Implement and validate improvements. Prevention and detection gaps will be identified and then transmitted to the blue team manager to prioritize the implementation of a corresponding remediation. The blue team will perform detection engineering in accordance with the identified risks and then implement new detection rules or change existing configurations. As a continuous improvement process, detection will be checked afterward to ensure it is implemented and working properly.

The following is a workflow example of this process step:

- The blue team implements the quick wins.

- The red team replays TTPs related to the newly implemented quick wins to ensure immediate efficiency.

- The blue team together with the purple team manager document and plan the rest of the identified improvements on a roadmap.

This workflow is vendor-independent and can cover any type of purple teaming activities. It can be used as a generic purple teaming workflow approach.

For the veterans among you: in 1993, a document called Improving the security of your site by breaking into it, published by D. Farmer, described various attack methods and suggested defending by thinking like an attacker. It could be the first public resource describing an approach for purple teaming, even if the team, in that case, was composed of one person only.

Purple teaming exercises can be considered as a continuous security improvement process by mixing offensive and defensive skills. This exercise is not purely focused on technology but can also be shaped in different forms to improve the overall security posture (that is, people and processes too).

The foundation of cybersecurity is often described with three pillars, which are the people, the processes, and the technology (or products). Let's now see how purple teaming can address each of them.

Improving the people

Improving the people with purple teaming is a must. Regardless of the types and goals of the purple teaming exercise, people will always benefit from it because it gives them the opportunity to see the other side of security. The red team will learn and understand what kind of security controls are in place within their organization, how they can bypass them, and therefore think about ways to strengthen them to increase the overall security posture of the organization. On the other hand, the blue team will learn and understand how the red team, and therefore adversaries, approach and operate during an attack scenario. They will also better understand the strengths and weaknesses of their controls, again to improve the defense strategy.

Nevertheless, it can be useful to assess how people react and handle security alerts and incidents within an organization.

Even if it is not pure purple teaming, some professionals may also implement a blind approach where the blue team is not initially informed. It can be interesting for the blue team manager to determine whether all the members of its team can investigate and handle alerts and incidents in a consistent manner and not depend on people's interests, skills, and experience.

The following criteria should be taken into account:

- Mean Time to Detect (MTTD), which is measured from the beginning of the attack until the first event or alert is handled by the blue team.

- Mean Time to Respond (MTTR), which is measured from the beginning of the attack until the full containment of the attack by the blue team. This one can be tricky, as it might lead the team to select the alerts and incidents that they are most comfortable with. Other key points can be monitored, such as whether blue team analysts have effectively followed the steps described in Standard Operating Procedures (SOP) and/or incident response playbooks.

Then, the purple team manager can use those Key Performance Indicators (KPIs) to create charts in order to identify improvements and benchmark against other purple teaming exercises over time. This approach is fully described in Chapter 14, Exercise Wrap-Up and KPIs.
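As a rough illustration of how these two KPIs could be computed, here is a minimal Python sketch. The record layout (`start`, `first_handled`, `contained`) and the sample timestamps are hypothetical; they stand in for whatever the exercise log actually captures.

```python
from datetime import datetime
from statistics import mean

# Hypothetical exercise log: one record per emulated attack.
ATTACKS = [
    {
        "start": datetime(2024, 1, 10, 9, 0),
        "first_handled": datetime(2024, 1, 10, 9, 20),
        "contained": datetime(2024, 1, 10, 11, 0),
    },
    {
        "start": datetime(2024, 1, 10, 13, 0),
        "first_handled": datetime(2024, 1, 10, 13, 40),
        "contained": datetime(2024, 1, 10, 14, 0),
    },
]


def mean_minutes(records, end_key):
    """Average delay, in minutes, from attack start to the given milestone."""
    delays = [(r[end_key] - r["start"]).total_seconds() / 60 for r in records]
    return mean(delays)


# MTTD: attack start until the first event or alert is handled.
mttd = mean_minutes(ATTACKS, "first_handled")
# MTTR: attack start until full containment.
mttr = mean_minutes(ATTACKS, "contained")
print(f"MTTD: {mttd:.0f} min, MTTR: {mttr:.0f} min")
```

Keeping the raw timestamps, rather than only the averages, makes it possible to recompute the KPIs per analyst or per technique when comparing exercises over time.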

When considering assessing people, other parameters must be considered, such as the following:

- Analyst skills

- Adequate resources for incident response

Thus, to evaluate those points, a purple approach would be to open critical cases and measure whether the blue team (especially level 1) is able to manage and respond to cases in a timely and effective manner (using a service-level agreement or an average handling time).

The capacity to adapt to TTP variations is also important; perhaps your blue team is highly trained to handle specific incidents, but what if slightly different TTPs are applied or, even worse, a different threat actor with radically different TTPs starts considering your business a potential target? This is exactly why simulation is also a key concept that needs to be applied and developed. Testing your organization's controls against non-related threat actors may add value in case threat actors decide to shift targets or motivations.

Improving the processes

In addition to people, processes are the second key pillar of any organization's cybersecurity practice; for this reason, it is important to assess several aspects, such as the following:

- Creating defense from newly tested attackers' tools using a shared methodological approach: This is maybe one of the best examples of a powerful collaboration between the red and blue teams thanks to purple teaming. The concept is quite simple – new tools and TTPs are published every day and evaluated by the red team to improve their internal knowledge, but the same TTPs are also reviewed by the blue team to implement security controls.

- As the purple team is focused on collaboration, both team members should work together to evaluate TTPs to create not only new attack methods but also new security controls (or validate existing ones) to detect and mitigate these methods.

- Reducing the amount of work with automated controls.

- Assessing incident response processes: Performing purple teaming exercises can help measure the efficiency of your whole Incident Response (IR) process; you can review reports generated from these exercises and assess the quality of your IR at each point (analysis, containment, remediation, recovery, and lessons learned).

All these aspects should be taken into consideration when improving the processes around cybersecurity within an organization.

Improving the technology

Technical solutions are implemented at different layers; therefore, being able to assess them is an absolute requirement to ensure the safety of your data. Purple teaming can help us with the following:

- Improving perimeters and endpoint security.

- Continuously testing Security Information and Event Management (SIEM) detection rules to ensure the system's health.

- Diffing the reports that security tools generate at different points in time to monitor and alert on evolutions and changes. This topic will be discussed in Part 4: Assessing and improving of the book. In practice, this means frequently generating automated reports from security tools such as vulnerability scanners, Active Directory security audits, and network port scanners, and then automatically diffing the previous and current reports to generate alarms and insights. These technical implementations will be covered in Chapter 12, Purple Teaming eXtended, to provide practical usage examples.

- Being able to answer the C-level question, "Are we prepared for a New_Strange_Name attack?"

So, clearly, the old approach of red versus blue, even if still applicable, can be greatly improved. This book was created for that purpose – giving us new concepts, tools, opportunities, and ideas to leverage purple teaming in order to improve our overall security posture.

Each of us co-authors has had experience in different environments with multiple positions, providing various visions and tried-and-tested methods of purple teaming for multiple layers of security.

Now that we understand the standard purple teaming process, the next obvious question to ask ourselves is, where do we start? That's why we believe that a maturity model is key to enabling all organizations, whether Fortune 100 or small-to-medium businesses, to start applying purple teaming within.

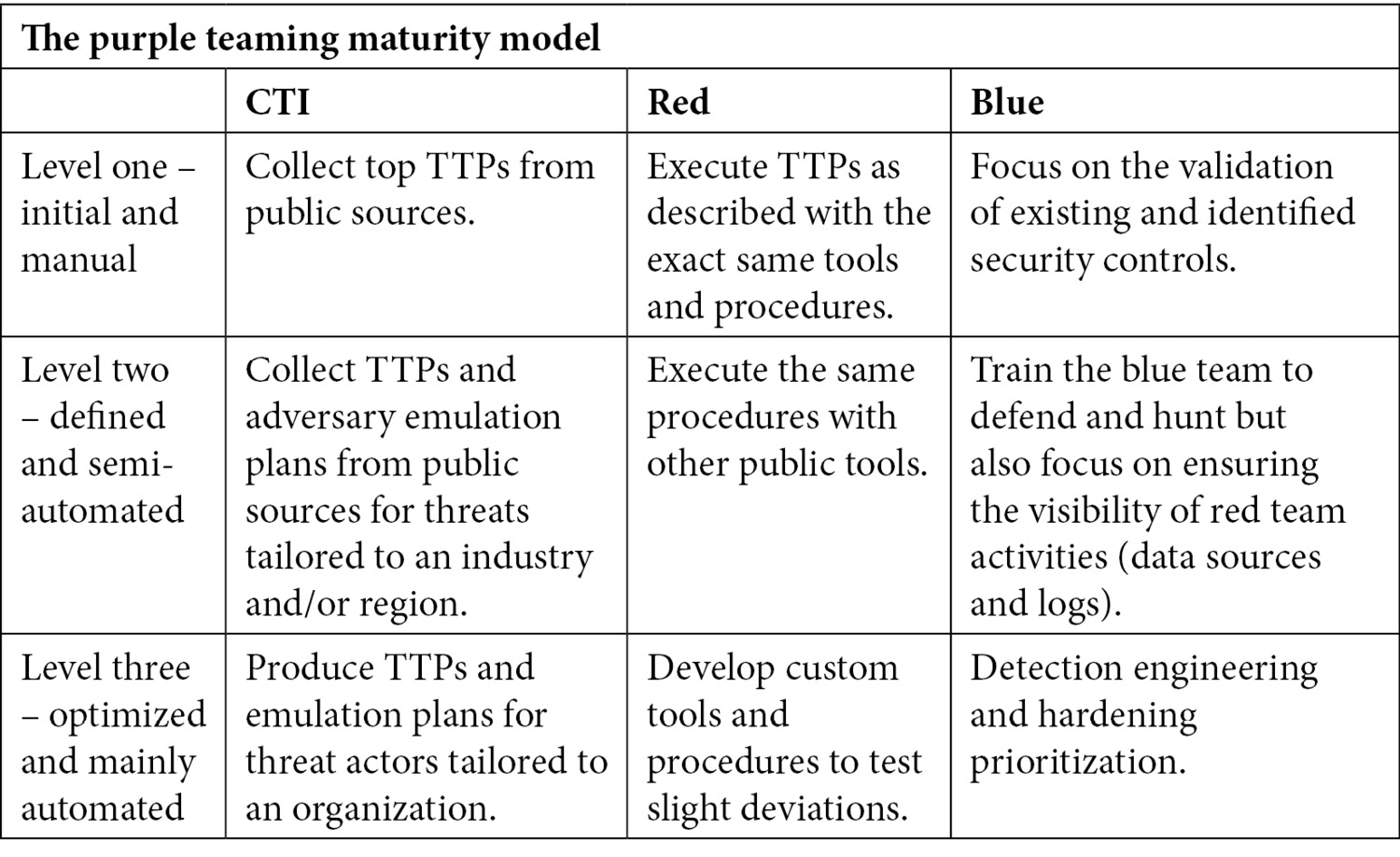

The purple teaming maturity model

Whether our blue team is composed of one person or a full SOC and Computer Security Incident Response Team (CSIRT), the maturity model should give us a place to start and help us make our way up to the top.

We, humbly, tried to develop a new approach while having in mind that the industry is overwhelmed with new tools, acronyms, frameworks, and models every day. So, we tried to stick to something simple and applicable to any kind of organization. We strongly believe that this practical model to purple teaming will help anyone succeed:

Table 2.2 – The purple teaming maturity model

As we can see here, the model is meant to fit any organization's size. Of course, third-party tools or services can help in fulfilling a role, as stated previously. Maturity levels are not meant to be aligned between all teams. It is also important to keep in mind automation as we mature; repeated activities must be automated as much as possible to ease the repetition of exercises.

As an example of maturity levels, we can rely on our CTI inputs on public reports describing the most used TTPs as a start (level one), having a red team executing the TTPs exactly as described in the provided CTI report (level one), and having the blue team already looking at improving and developing new alerts (level three).

But how can collaboration work between the three teams? We will suggest a tool in the next section. Let's introduce here the purple teaming templates.

PTX – purple teaming extended

We strongly believe that the purple teaming mindset could benefit organizations by being extended for broader use. The approach remains the same and follows the PEIR process, but it could be applied not only for adversary emulation but also for various types of exercises, as we will see later in this chapter. Indeed, any offensive activity that builds on the attack, audit, or scan steps can be automated to perform continuous testing to assess, measure, and control security controls based on active detections or blocking mechanisms at any layer of an infrastructure. This approach will be detailed more in the next section, and multiple examples of this approach will be covered in Chapter 12, PTX – Purple Teaming eXtended.

Let's now see some types of exercise that can be performed based on the generic purple teaming process and the Purple Teaming eXtended approach.

Purple teaming exercise types

In the previous sections, we have seen how a standard purple teaming exercise operates, but we have also seen that the concept of purple teaming could and should include a broader usage of PTX to benefit organizations. We will now see different exercise types that can be defined using the five Ws and one H framework:

- Who: The who defines the functions during the exercise; it could be in-person (teams, managers, or coordinators) or automated (for example, with a breach attack simulation tool). We must think about filling the following functions:

- The defensive function

- The offensive function

- The CTI function

- The purple coordinator

- What: The what defines the threat(s) that will be tested, such as the Advanced Persistent Threat (APT) group, vulnerability exploitation, specific TTP, and threat campaign.

- Where: The where defines the scope of controls to be assessed, such as people, processes, products, and technologies.

- When: The when defines the planning and frequency of the exercise; it can be scheduled or continuous.

- Why: The why defines the reason to perform this control – for example, is it to prevent an existing risk, a future risk, or check the health of existing controls?

- How: The how defines the methodology and approach, such as informed-based exercises and an emulation plan.
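The six questions above can be captured as a small record so that every exercise is described the same way before it starts. The following Python sketch is only one possible shape; the class name, field types, and sample values are assumptions made for illustration.

```python
from dataclasses import dataclass, asdict


@dataclass
class ExerciseDefinition:
    """Hypothetical container for the five Ws and one H of an exercise."""
    who: list    # functions involved, in person or automated
    what: str    # threat to be tested
    where: list  # scope of controls to assess
    when: str    # "scheduled" or "continuous"
    why: str     # reason for running the exercise
    how: str     # methodology and approach


def missing_fields(definition):
    """Return the names of the questions that have not been answered yet."""
    return [name for name, value in asdict(definition).items() if not value]


exercise = ExerciseDefinition(
    who=["defensive function", "offensive function", "CTI function", "purple coordinator"],
    what="specific TTP",
    where=["people", "processes", "technologies"],
    when="scheduled",
    why="check the health of existing controls",
    how="emulation plan",
)
print(missing_fields(exercise))
```

A check such as `missing_fields` can be run during the preparation phase to make sure no question is left open before scheduling the teams.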

Next, we'll describe some exercises and the processes linked to them.

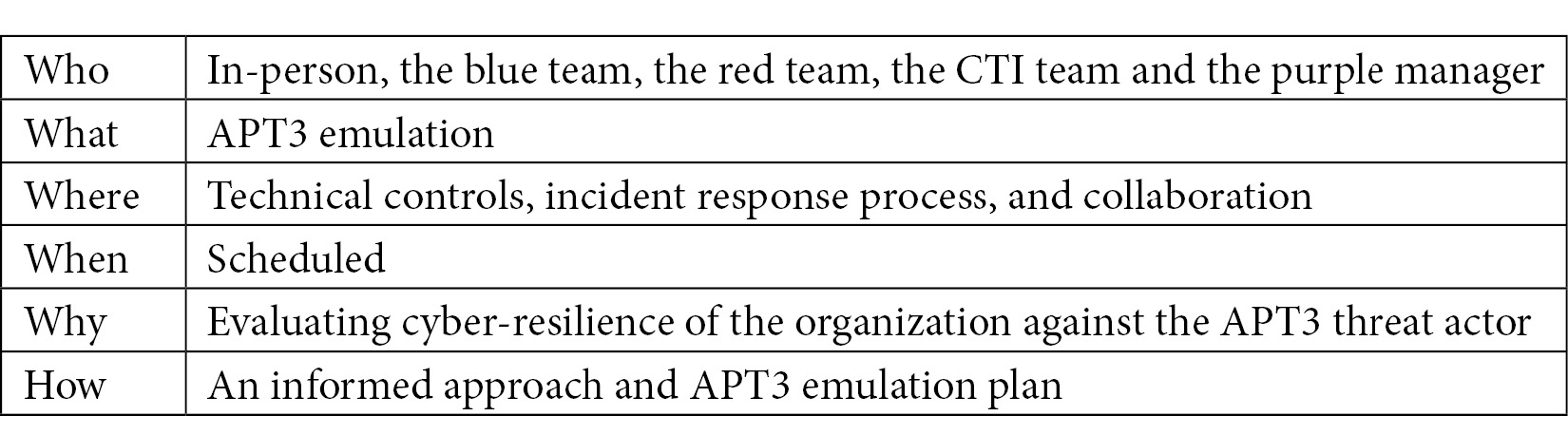

Example one – APT3 emulation

Let's start by defining the five Ws and one H of the emulation of the threat actor APT3:

Table 2.3 – The five Ws and one H for the APT3 emulation

Adversary emulation is probably the most common purple teaming exercise with the collaboration of red and blue teams. So, how do we handle such an exercise?

To make it easier with a concrete example, we suppose that after producing our CTI, which will be described in the next chapter, we can select the APT3 threat actor as a potential adversary to our organization. Let's assume we have both a red and a blue team internally, and that we need to make them work together to run a purple teaming exercise in order to assess our cyber-resilience against this threat actor.

Step one – preparation

The process begins with the preparation phase; at this stage, we will use available information and intelligence regarding this adversary.

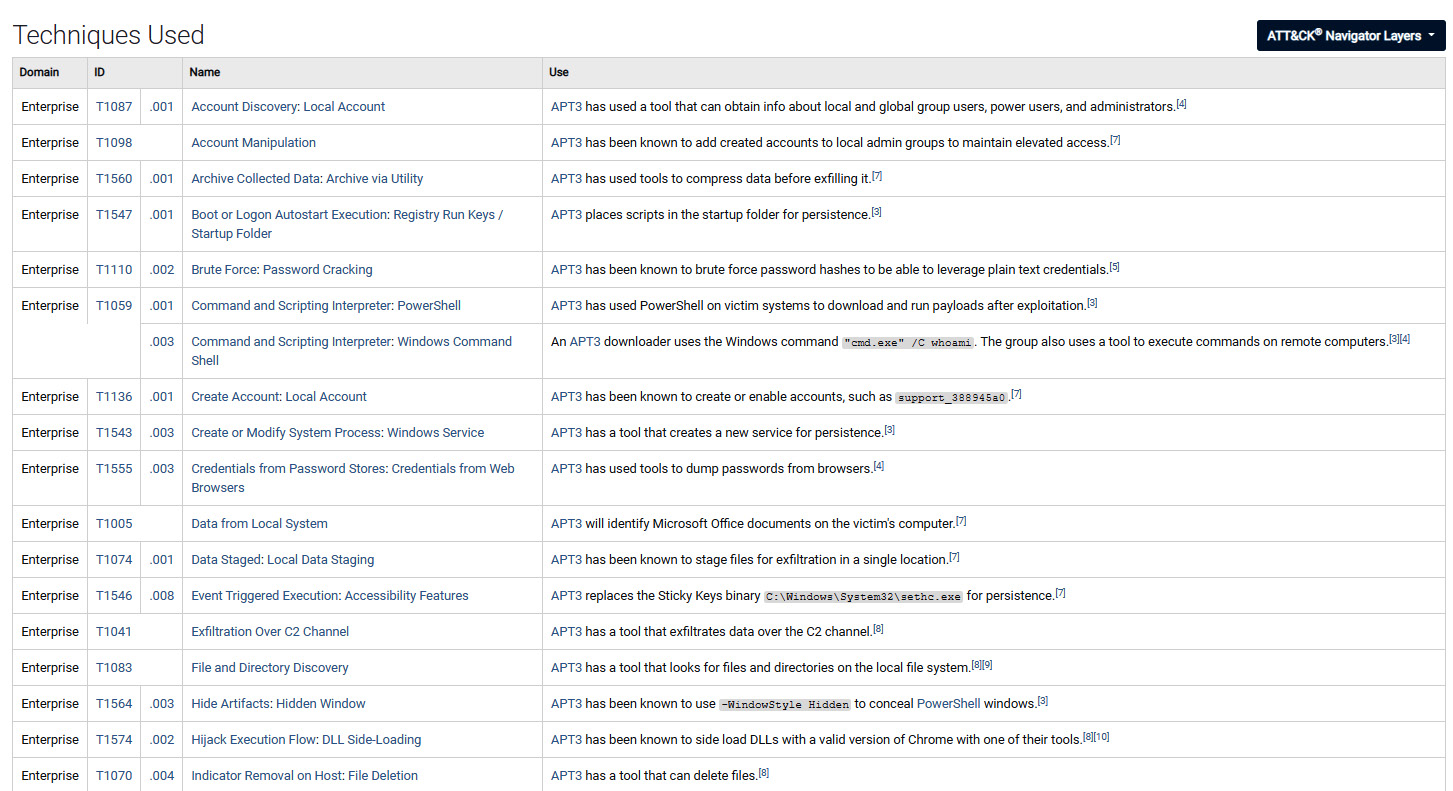

An initial approach would be to use the MITRE ATT&CK framework, https://attack.mitre.org/groups/, to gather initial information on the adversary. Indeed, this would be faster than reading and aggregating multiple threat intelligence reports:

Figure 2.3 – MITRE ATT&CK showing the APT3 adversary techniques used

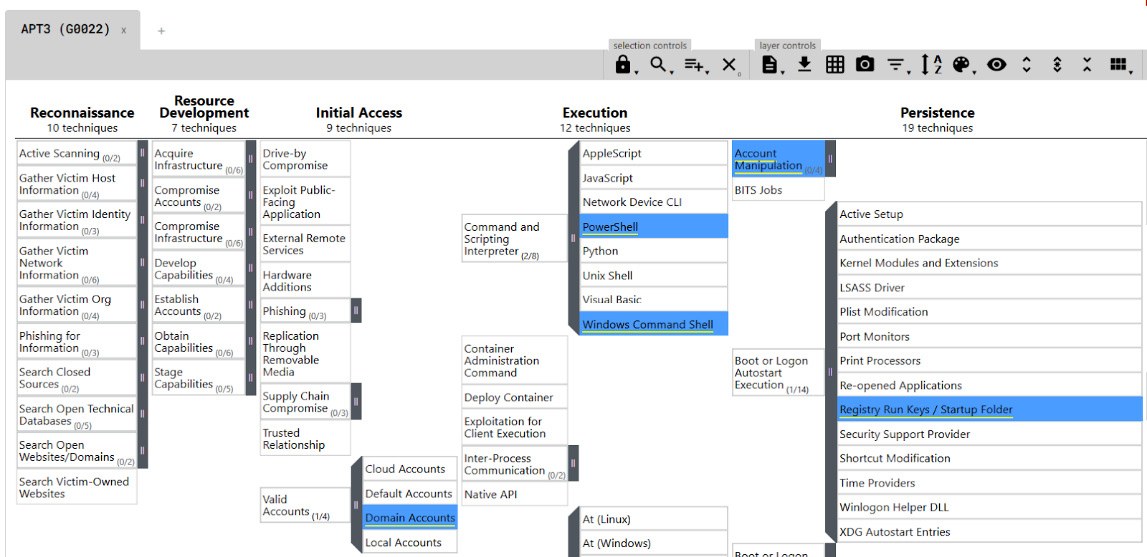

Another interesting feature is the MITRE ATT&CK Navigator; this web application allows an analyst to clearly view the attack steps (tactics) and techniques used:

Figure 2.4 – The MITRE ATT&CK Navigator for APT3
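The Navigator can also load layers from JSON files, which is convenient for sharing the scope of an exercise between teams. The Python sketch below builds a minimal layer; it assumes the commonly used layer keys (`name`, `domain`, `techniques`, `techniqueID`, `score`, `comment`) and omits the version metadata that full layer files usually carry, so it should be checked against the layer format documentation of the Navigator version in use. The two technique IDs are illustrative placeholders, not a verified list for APT3.

```python
import json


def build_layer(name, technique_ids):
    """Build a minimal Navigator-style layer (assumed subset of the format)."""
    return {
        "name": name,
        "domain": "enterprise-attack",
        "techniques": [
            {"techniqueID": tid, "score": 1, "comment": "planned for emulation"}
            for tid in technique_ids
        ],
    }


# Illustrative technique IDs only; replace with the CTI team's selection.
layer = build_layer("Exercise scope (illustrative)", ["T1087", "T1069"])
layer_json = json.dumps(layer, indent=2)
print(layer_json)
```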

Each technique is detailed and usually has an interesting detection section. It will provide a generic approach to detect each specific technique. It requires detection engineering skills to be converted into practical usage – for example, monitoring net.exe or net1.exe usage, which can be technically translated to the following:

- The required data source: Sysmon

- Sysmon EventID to collect: 1

- Specific fields to analyze: Image or CommandLine

- Pattern match (pseudocode): Image == "*\net.exe*" or Image == "*\net1.exe*"

As we can see, detection recommendations require additional work to be effective.
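To make that additional work concrete, the pattern match above could be sketched in Python as follows. The event is assumed to be a dictionary already parsed from a Sysmon record with `EventID` and `Image` keys; this layout and the function name are hypothetical. The sketch compares the executable name exactly rather than using wildcards, which is slightly stricter than the pseudocode.

```python
import ntpath

# Executables named in the detection recommendation.
NET_BINARIES = {"net.exe", "net1.exe"}


def is_net_execution(event):
    """Return True for a process-creation event (EventID 1) that runs net.exe or net1.exe."""
    if event.get("EventID") != 1:
        return False
    image = event.get("Image", "")
    # ntpath handles Windows-style paths regardless of the analysis host OS.
    return ntpath.basename(image).lower() in NET_BINARIES


sample = {"EventID": 1, "Image": r"C:\Windows\System32\net.exe"}
print(is_net_execution(sample))
```

Matching on the file name alone is easy to evade by renaming the binary, so a production rule would typically also inspect fields such as CommandLine.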

From this pre-analysis, an adversary emulation plan can be defined. This document should contain details on all identified techniques and how to reproduce them. A sample of such a document is provided by MITRE at https://attack.mitre.org/docs/APT3_Adversary_Emulation_Plan.pdf.

Obviously, to be able to create reports from this adversary emulation, we need to have an overview of all the actions to perform. For this, multiple approaches can be used, such as regular spreadsheets or dedicated collaboration tools. (The latter option will be described later in Chapter 9, Purple Team Infrastructure.) For a first-time scenario, we will rely on an existing spreadsheet provided by MITRE for this specific APT group.

We modified it slightly to add columns for test results and reasons/comments. Ideally, tests should not be performed on a production environment. A cyber range infrastructure or a pre-production environment similar to the real production environment should be used to prevent disruptions that may be caused by an attack. While riskier, executing the TTPs in the production environment would give the most accurate results.

It is also important to schedule operations with both blue and red teams to have dedicated resources working simultaneously on the exercise.

So, the global output of this phase is as follows:

- Define the adversary TTPs.

- Create the emulation plan.

- Create a spreadsheet to be filled with expected attack results.

- Define the scope of the tests.

- Schedule operations with both teams.

Once everything is prepared, the next phase can be applied – execution.

Step two – execution

This step will be the starting point of the attack scenario. Both teams start the exercise.

The red team plays the TTPs one by one corresponding to the emulation plan defined previously. In the meantime, the blue team checks the expected security controls (prevention, detection, and hunting) in tools such as SIEM, Endpoint Detection and Response (EDR), and eXtended Detection and Response (XDR) to ensure that each technique is properly prevented, detected, or at least logged.

The blue team will have to fill in the emulation plan results.

The output is as follows:

- Emulation plan results

- Results sent to the purple team manager
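Once the results spreadsheet is filled in, a short script can summarize it before it is sent to the purple team manager. The Python sketch below assumes a CSV export with hypothetical column names (`technique`, `test_result`, `comment`) and hypothetical result labels; the technique IDs are illustrative. Treating a technique that was only logged as a gap is also an assumption of this sketch.

```python
import csv
import io
from collections import Counter

# Hypothetical export of the emulation plan results spreadsheet.
RESULTS_CSV = """technique,test_result,comment
T1087,detected,SIEM alert raised
T1069,logged,events present but no alert
T1059,missed,no telemetry collected
T1082,prevented,blocked by EDR
"""


def summarize(csv_text):
    """Count outcomes and list techniques that were neither prevented nor detected."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    counts = Counter(row["test_result"] for row in rows)
    gaps = [row["technique"] for row in rows if row["test_result"] in {"logged", "missed"}]
    return counts, gaps


counts, gaps = summarize(RESULTS_CSV)
print(dict(counts))
print("Gaps to review:", gaps)
```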

Now, we can move on to the next step – identification.

Step three – identification

The emulation plan will be analyzed to determine gaps, failures, and improvements on each expected security control. A remediation plan will be created with prioritized actions based on implementation effort and risk reduction.

Once done, this information will be transmitted to the blue team to improve prevention, detection, and logging capabilities.

The output is as follows:

- A remediation plan with prioritized improvement actions
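One simple way to order the remediation plan is to rank each action by its risk reduction relative to its implementation effort. The Python sketch below uses hypothetical 1-to-5 scales and sample actions; the scoring formula is an assumption for illustration, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass
class Improvement:
    title: str
    risk_reduction: int  # hypothetical scale: 1 (low) to 5 (high)
    effort: int          # hypothetical scale: 1 (low) to 5 (high)


def prioritize(items):
    """Sort by risk reduction per unit of effort, lowest effort first on ties."""
    return sorted(items, key=lambda i: (-(i.risk_reduction / i.effort), i.effort))


plan = prioritize([
    Improvement("Enable command-line logging", 4, 1),
    Improvement("Deploy new EDR sensor", 5, 5),
    Improvement("Add SIEM rule for net.exe", 3, 1),
])
for rank, item in enumerate(plan, start=1):
    print(rank, item.title)
```

Actions with low effort and high risk reduction rise to the top of the list, which matches the idea of implementing quick wins first and placing the rest on a roadmap.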

Let's move on to the final step – remediation.

Step four – remediation

Once the remediation plan is received, the blue team manager asks detection engineers, SOC analysts, or SIEM/SOC engineers to implement new detection rules and/or change the existing configuration to close identified gaps.

As a continuous improvement process, once implemented, these failed detections should be tested again with the same tests to ensure newly modified security controls work properly.

Some KPIs and reports of the operation will be provided to different managers to show the process relevance and demonstrate the security improvements.

The output is as follows:

- Configuration changes and/or new use cases

- Reporting

- A new iteration of the process to ensure everything was implemented correctly

As discussed previously, different types of exercise can be performed to leverage the purple teaming approach. We will describe other common and uncommon exercises next.

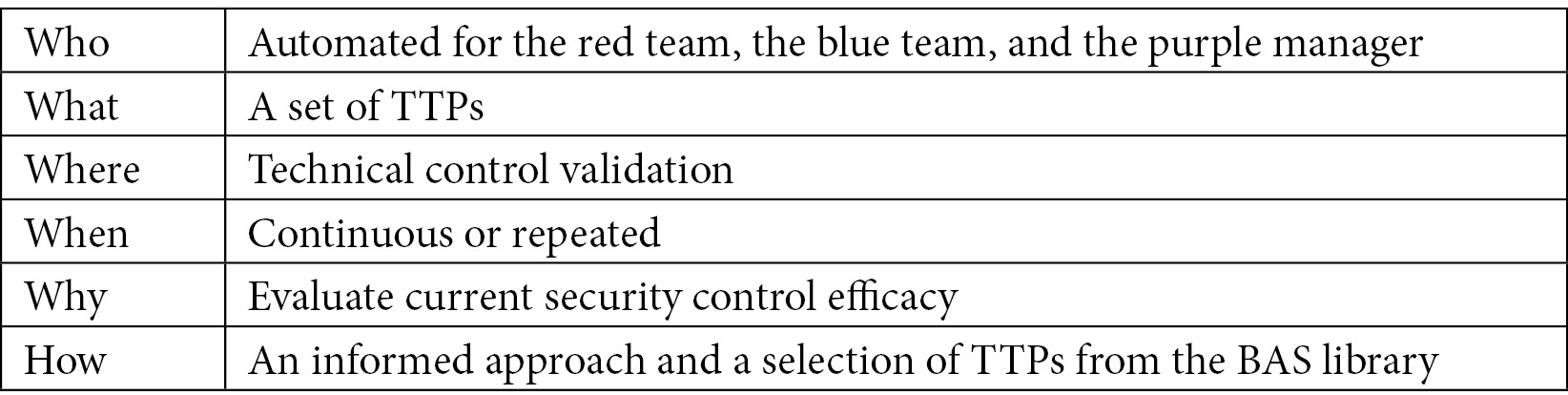

A breach attack simulation exercise

Let's define the five Ws and one H for a BAS exercise:

Table 2.4 – The five Ws and one H for the BAS exercise

An approach using existing BAS solutions is common nowadays; indeed, attackers' techniques are mapped in the MITRE ATT&CK framework in a standardized way.

From this postulate, it becomes possible to apply a model similar to the previous one.

Step one – preparation

Even if part of the job is automated, the preparation phase remains key to success.

In such a situation, multiple elements have to be considered and configured.

Once again, you have to define your tests (if not defined by default in the BAS solution), based on CTI or the most common trends (see next chapter).

From there, you will build your emulation plan and pay special attention to technique tags that can be extracted from the MITRE ATT&CK framework.
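Technique tags follow a regular pattern (a `T` followed by four digits, with an optional three-digit sub-technique suffix), so they can be pulled out of test descriptions or reports with a regular expression. The following Python sketch shows one way to do this; the function name and the sample text are hypothetical.

```python
import re

# ATT&CK technique IDs look like T1059 or T1059.001 (sub-technique).
TECHNIQUE_ID = re.compile(r"\bT\d{4}(?:\.\d{3})?\b")


def extract_technique_ids(text):
    """Return technique IDs found in the text, in order of first appearance."""
    seen = []
    for match in TECHNIQUE_ID.findall(text):
        if match not in seen:
            seen.append(match)
    return seen


notes = "Run the T1059.001 test, then T1087, then repeat T1059.001."
print(extract_technique_ids(notes))
```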

In this specific configuration, the red team will be potentially involved only in the last step (remediate); instead, the blue team will work by itself with the BAS tool.

As usual, a test machine using the same production conditions (such as audit policies and log collection) will have to be used.

The output is as follows:

- The simulation plan (based on the same model as the emulation plan)

- The simulation results spreadsheet (for results analysis)

- The test machine (ideally virtual and snapshotted for reuse later on)

- BAS software (such as Atomic Red Team) installed on a dedicated machine

Let's now go to the execution step.

Step two – execution

In this situation, the blue team will work by itself and will run tests locally to ensure security detection.

For each test, the blue team will check on required security devices (SIEM, EDR, and so on) to ensure prevention and detection happens correctly.

All elements will be reported on the simulation results spreadsheet for use in the identification phase.

The output is as follows:

- The simulation results spreadsheet (updated)

Once the execution has been performed, we need to document the results and identify necessary remediations.

Step three – identification

At this step, the purple team manager (or, more generally, the blue team manager) will analyze the simulation results spreadsheet and identify gaps. These gaps will be output for the last step – remediation.

The output is as follows:

- A summary of the simulation plan results with identified gaps and possible improvements

Now we move on to the last step, remediation.

Step four – remediation

At this stage, remediations will be handled by the blue team to add new detection capabilities. To follow the control process, the red team can then be included in the process to perform collaborative tests with exact techniques and small variations to ensure the detection of identified gaps.

The output is as follows:

- Implemented changes

- A request for a new human-based control with the red team to ensure the correct detection (a new purple teaming exercise loop)

- Reports to management

We will now see another type of exercise that slightly deviates from the original definition but still retains the purple mindset. It is not an exercise anymore but rather a continuous assessment.

Continuous vulnerability detection

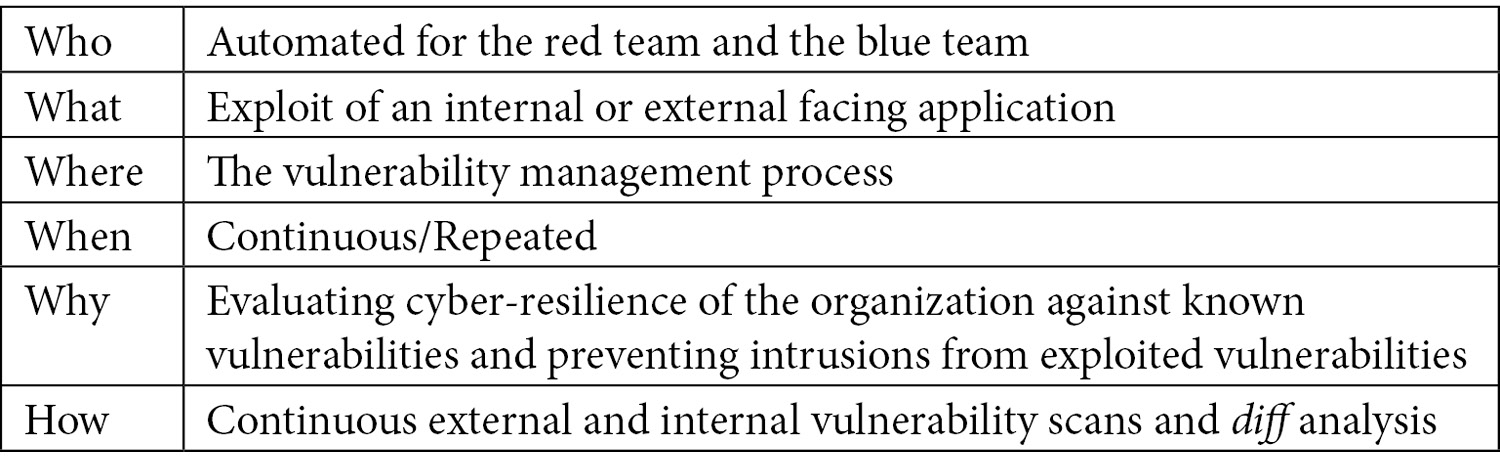

Let's now see the five Ws and one H of continuous vulnerability detection:

Table 2.5 – The five Ws and one H for continuous vulnerability detection

This specific use case will be fully described in the next chapters; the global concept we will introduce is vulnerability diffing (also known as a purple scan).

This is the same concept as patch diffing where a reverse engineer will try to find the differences between an existing portion of reverse-engineered code before and after an applied patch to discover a zero-day vulnerability. This same diffing approach can be applied to an infinite number of security solutions (such as vulnerability scanning, AD audits, and network scans).

In this specific scenario, a vulnerability scanning solution is implemented, reports are collected automatically and normalized, and then an algorithm is applied to detect differences between previous and current vulnerability scans. These differences are considered as new vulnerabilities to investigate and will generate an alert to the blue team.

This approach can be implemented without the red team.

Step one – preparation

The interesting part of this scenario is that, thanks to automation, human activity and document handling are kept to a minimum.

Basically, the main requirement is to set up the correct technical components – a vulnerability scanner, a scheduled scan on a specific scope, and a script that handles data collection and diffing (which can also be done with a SIEM that has real analytic capabilities).

- A configured vulnerability scanner (scheduled scans)

- Data collection, normalization, and a vulnerability diffing algorithm implementation thanks to a custom script

- Alerting (email, instant messaging (IM), and so on)
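To give an idea of what the normalization component might look like, here is a minimal sketch that reduces one scanner report to a set of (vulnerability name, host) tuples. The CSV layout and the `Host` and `Name` column names are assumptions made for the example; each scanner has its own export format, so the parsing would need to be adapted.

```python
import csv
import io


def normalize_report(csv_text):
    """Turn one scanner report into a set of (vulnerability, host) tuples.

    Names and hosts are trimmed and lowercased so that the same finding
    reported with different casing is only counted once.
    """
    findings = set()
    for row in csv.DictReader(io.StringIO(csv_text)):
        name = row["Name"].strip().lower()
        host = row["Host"].strip().lower()
        if name and host:
            findings.add((name, host))
    return findings


# Hypothetical report content, for illustration only.
SAMPLE_REPORT = """Host,Name,Severity
SRV-DB01,OpenSSL Outdated Version,High
srv-db01 ,openssl outdated version,High
SRV-WEB02,SMB Signing Not Required,Medium
"""

findings = normalize_report(SAMPLE_REPORT)
print(sorted(findings))
```

Using a set means that duplicate lines in a report collapse into a single finding, which keeps the later comparison between scans simple.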

Let's now go to the execution phase.

Step two – execution

Contrary to the other scenarios presented previously, execution is automated and repeated (scheduled once a week, for example). This frequency greatly reduces the window during which a new vulnerability can remain unnoticed.

Once executed, reports are generated and then collected by a SIEM or using custom code.

The purple scan code or the SIEM will handle the identification step.

The output is as follows:

- Generated reports

Now let's move on to the identification step.

Step three – identification

As already shown, the main idea of this step is to perform an automated comparison between the previous scan and the new one. The comparison can use the (vulnerability name, impacted host) tuple as its key, checked against the previous reference scan.

Once a difference (diff) is identified by the detection algorithm, an alert event is sent to the SIEM, analyzed by the blue team as a newly identified vulnerability, and handled as a security threat.

Whether it produces positive or null results, the new report is considered as the new reference model.
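The identification logic described above can be sketched in a few lines. This is only one possible implementation, assuming that each scan has already been normalized into a set of (vulnerability name, host) tuples; the function name, the event fields, and the sample data are hypothetical.

```python
import json


def purple_scan_diff(reference, current):
    """Compare two scans and return (alerts, new_reference).

    Any (vulnerability, host) tuple present in the current scan but absent
    from the reference is reported as a new vulnerability. The current scan
    always becomes the new reference, whether or not a diff was found.
    """
    new_findings = current - reference
    alerts = [
        {"event": "new_vulnerability", "vulnerability": name, "host": host}
        for name, host in sorted(new_findings)
    ]
    return alerts, set(current)


# Hypothetical data: last week's scan and this week's scan.
reference = {("smb signing not required", "srv-web02")}
current = {
    ("smb signing not required", "srv-web02"),
    ("openssl outdated version", "srv-db01"),
}

alerts, reference = purple_scan_diff(reference, current)
for alert in alerts:
    # In practice, this event would be forwarded to the SIEM.
    print(json.dumps(alert))
```

Note that this sketch only reports additions. Findings that disappear between two scans (for example, after patching) are ignored here, although they could be tracked in the same way to feed dashboards and KPIs.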

The output is as follows:

- An alert for each newly identified vulnerability

- An updated reference report

Finally, let's tackle the remediation step.

Step four – remediation

Once the blue team receives this alert, the internal vulnerability management process begins in order to prioritize patching.

The output is as follows:

- Vulnerability identified

- Applied patches

- A new manual scan after a patch to ensure that it is correctly patched

- Automatic updates of dashboards, reports, and KPIs

The next section requires the collaboration of both attack and defense teams to protect the company from new attacker TTPs.

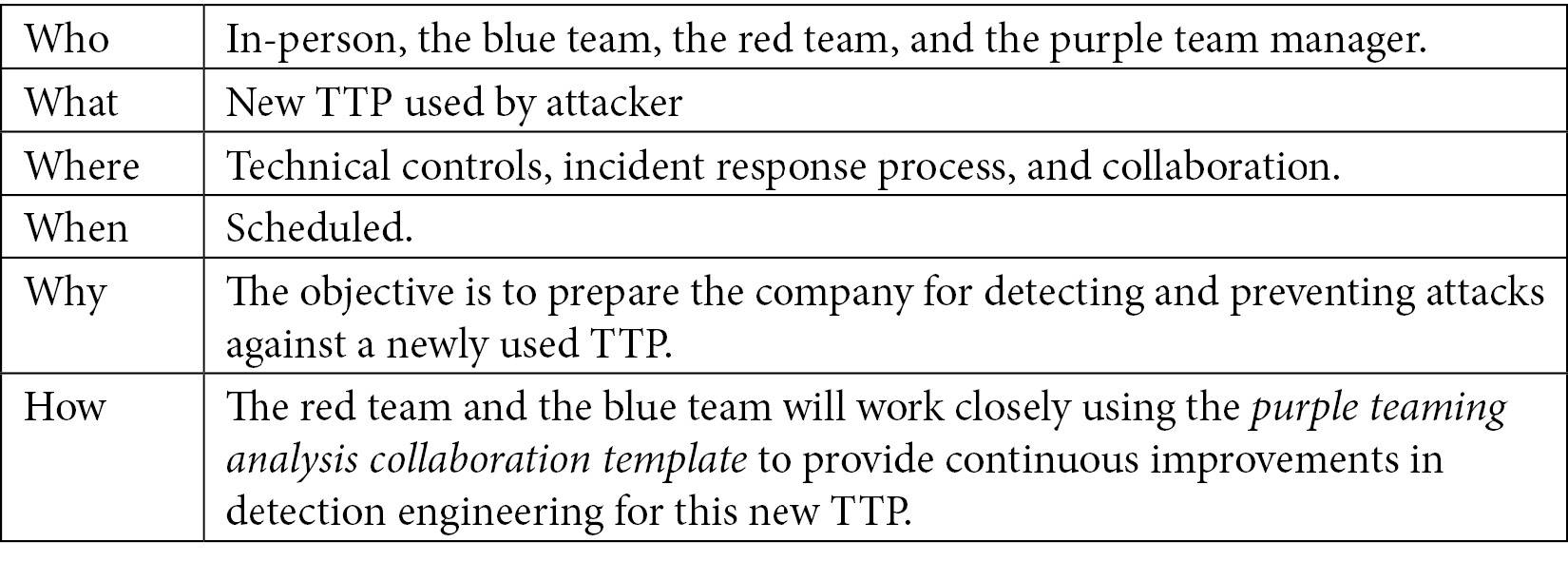

A new TTP or threat analysis

Let's now see the five Ws and one H for an exercise focusing on a new TTP:

Table 2.6 – The five Ws and one H for the new TTP exercise

In this scenario, the company is facing another problem – it needs to create detection for an existing public threat, TTP, or piece of offensive software. The same model can be applied to published exploits when no patch is available for the vulnerability or when no team is available to patch quickly. The red and blue teams will work together to build detection rules collaboratively.

Let's take a practical example.

The red team, as part of its research and development, analyzed a threat report to discover a new potential TTP to use. This report disclosed that PingCastle is used by an attacker group to perform malicious operations. PingCastle is a tool developed by Vincent Le Toux (who is also a well-known Mimikatz co-author), which allows any domain user to get an exhaustive overview of Active Directory security risks and exploitation possibilities. It has the main advantage of being trusted by antivirus/EDR vendors and can be run from the command line. A quick search on the internet did not reveal any technique that could be used to detect such a tool.

This issue is very common because most of the time, attackers will try to use TTPs that are as stealthy as possible to evade detection.

Now that we've understood the overall process and some practical applications of purple teaming, let's talk about the purple teaming report and collaboration templates.

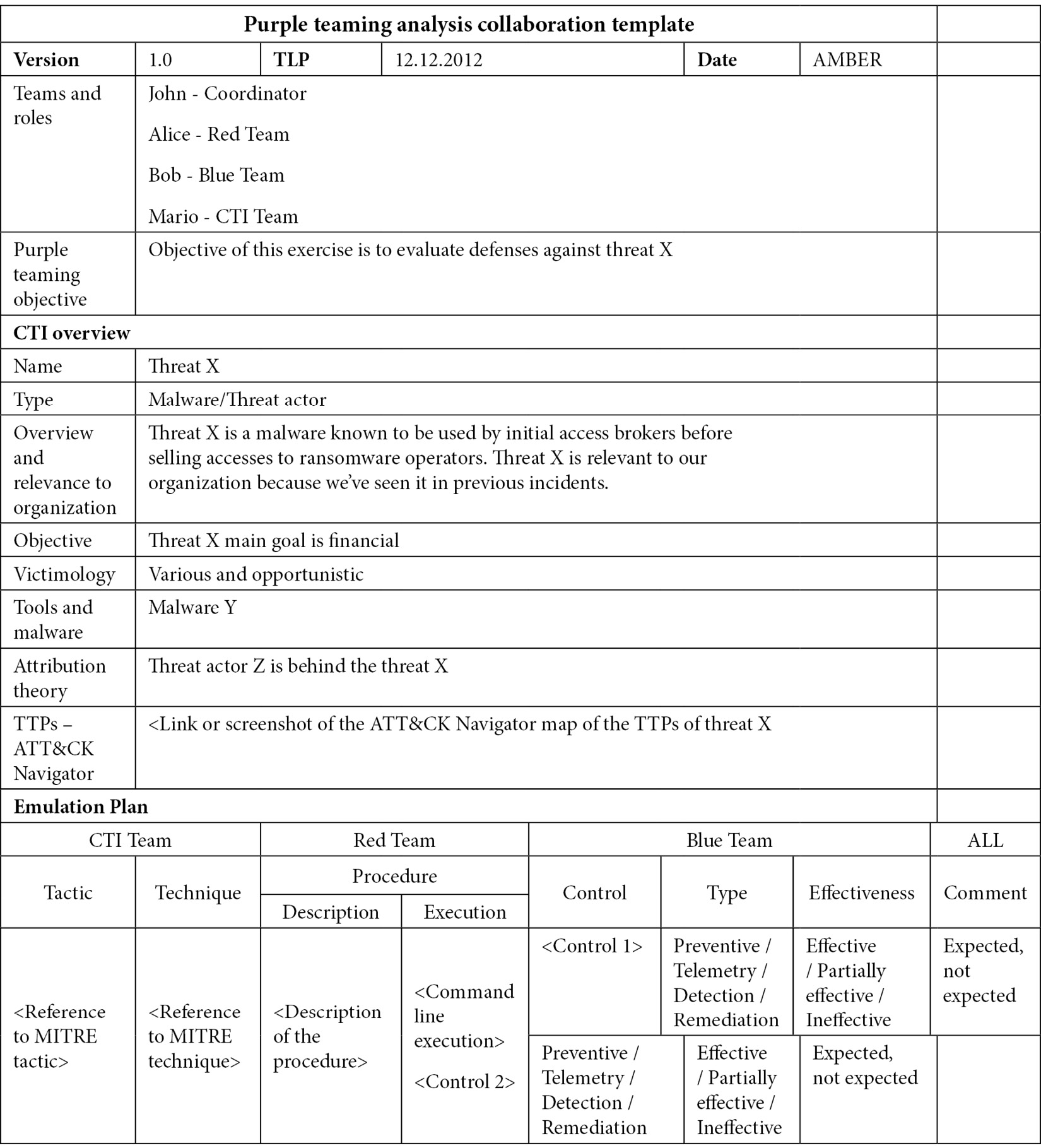

Purple teaming templates

Purple teaming is an amazing example of collaboration across teams that usually compete with each other. This is where a standardized collaborative approach and methodology becomes necessary. Let's introduce the purple teaming templates. Here, two templates are proposed. The purple teaming report template contains the intelligence overview and the emulation plan, and can be used to validate security controls and identify improvements and gaps; a low-level version of this template can be found in Chapter 14, Exercise Wrap-up and KPIs. The collaboration engineering template aims to provide a standardized methodology to guide red and blue teams through a detection engineering process.

Both can be leveraged as inspiration for a custom template that better suits everyone's needs.

Report template

This template example is intended to be a complete log of a purple teaming exercise. It describes the exercise's objective, the intelligence overview of the threat being emulated, and the adversary emulation plan. This plan lists the techniques identified by the CTI team. The red team can then explain the procedure used to execute each technique. The blue team can then identify and document each of its security controls following the four key dimensions – prevention, visibility, detection, and remediation.

Throughout an exercise, each successful and failed control can be highlighted with a dedicated color. Upon completion, the purple teaming manager can synthesize the results before all three teams sit together to discuss and prioritize the gaps and improvement opportunities identified:

Table 2.7 – The basic collaboration template

Now let's see another type of template useful for collaboration engineering.

Collaboration engineering template

This template can be used for multiple analysis activities requiring work and analysis from both the red and blue teams. We have tried to make it as standard as possible and to respect the PEIR approach to ensure security improvements and controls throughout the collaboration. The detection logic relies on pseudo-code to be product-agnostic. All the gray parts have to be filled in. Please note that interaction should still be coordinated by a manager:

Now that you have understood the concepts of how to plan, execute, identify, and remediate, the next chapter will focus on the usage of CTI as a main input for your purple teaming exercise preparation.

Summary

In this chapter, we saw that purple teaming is a process that can be applied in different kinds of assessments; nevertheless, we strongly believe that purple teaming is also a mindset that must be incorporated into an organization's culture. Purple teaming exercises help to build human cross-collaboration between red and blue teams. This is exactly what purple teaming enables within an organization – a common and shared objective: improving the organization's security. After all that, does this mean that red teaming exercises don't make sense anymore? Not at all – they do serve a purpose to test responsive capabilities in a realistic scenario where the blue team is not informed, and the red team performs actions with stealth in mind.

In the next chapter, we will introduce CTI and what it implies, and define how it should be leveraged as an input for purple teaming.