The process of cleansing and transforming big datasets in the data lake has become an increasingly popular and critical step in a modern enterprise’s data architecture. Microsoft has introduced several big data analytics and orchestration tools to serve the need for big data lake Extract-Load-Transform (ELT). Customers are seeking cloud-based services that can cleanse, transform, and aggregate extremely big datasets with ease, coupled with a low learning curve. They are seeking to understand what tools and technologies could potentially fit the bill for big data lake cleansing and transformations.

Azure Data Factory’s Mapping Data Flows has become a promising solution for big data lake cleansing and transformations. In Chapter 11, I discussed the concept of a modern data warehouse and demonstrated a practical example of Mapping Data Flows for enterprise data warehouse transformations. In this chapter, I will continue to demonstrate additional data cleansing and aggregation features of Mapping Data Flows, specifically to process big data files stored in Azure Data Lake Storage Gen2 as hierarchical files.

Add Files and Folders to Azure Data Lake Storage Gen2

Structuring a Data Lake Storage correctly by using best practices is key. When data is stored in Data Lake Storage Gen2, the file size, number of files, and folder structure have an impact on performance.

File Size

Depending on what services and workloads are using the data, a good size to consider for files is 256 MB or greater. If the file sizes cannot be batched when landing in Data Lake Storage, you can have a separate compaction job that combines these files into larger ones.

Folder Structure

The folder and file organizational structure can help some queries read only a subset of the data, which improves performance by optimizing for the larger file sizes and a reasonable number of files in each folder. Be cognizant of performance tuning and optimization techniques, along with folder and file structure recommendations.

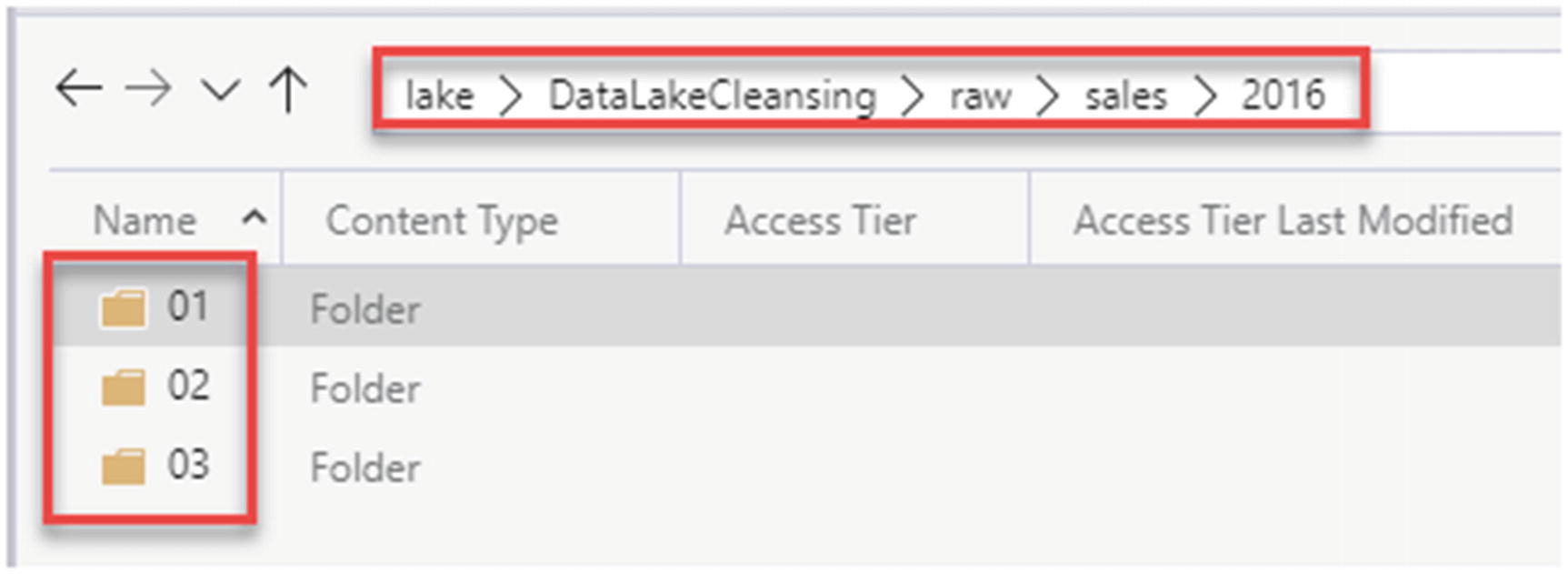

For this exercise, you will learn how to create an ADLS Gen2 container named lake, along with a few additional folders that will organize the data by the year 2016. To get started, ensure that you have an ADLS Gen2 account and ADF account set up. Next, you’ll need to upload the 2016 sales files from the following GitHub account (https://github.com/ronlesteve/sales-datasets) into the ADLS Gen2 folder structure shown in Figure 12-1.

2016 sales folders organized by month number

2016 sales folders organized by month day text files

Structure of the text files

Create Azure Data Factory Resources

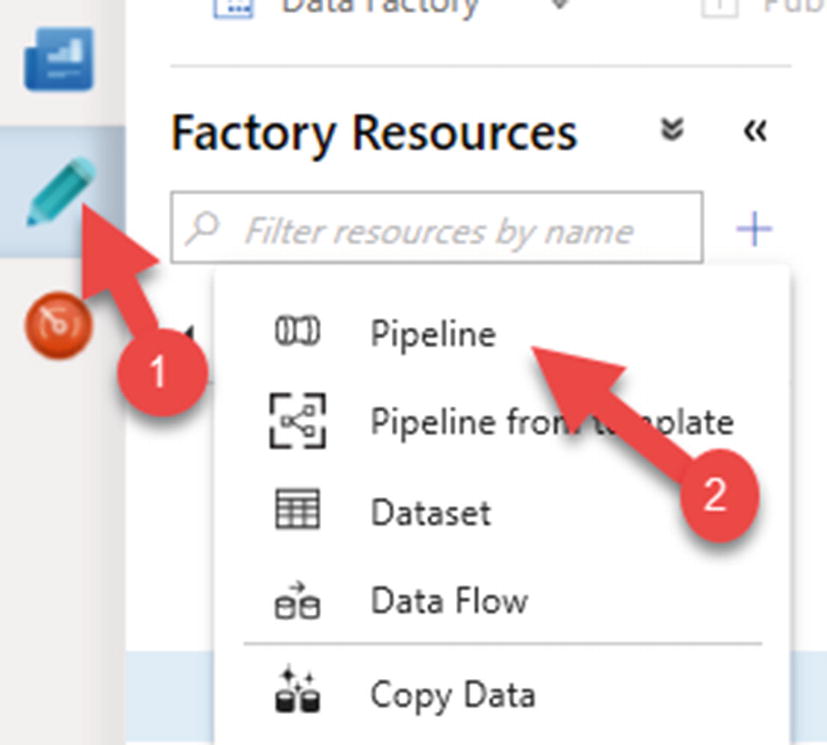

Create a new ADF pipeline

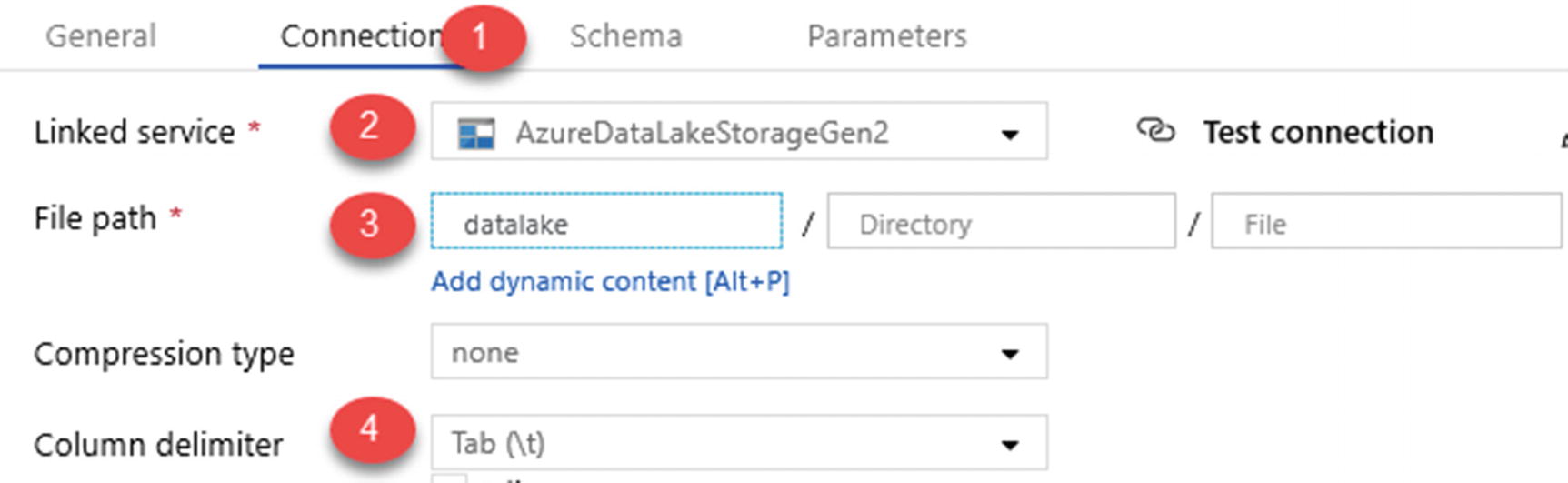

ADLS Gen2 dataset connection properties

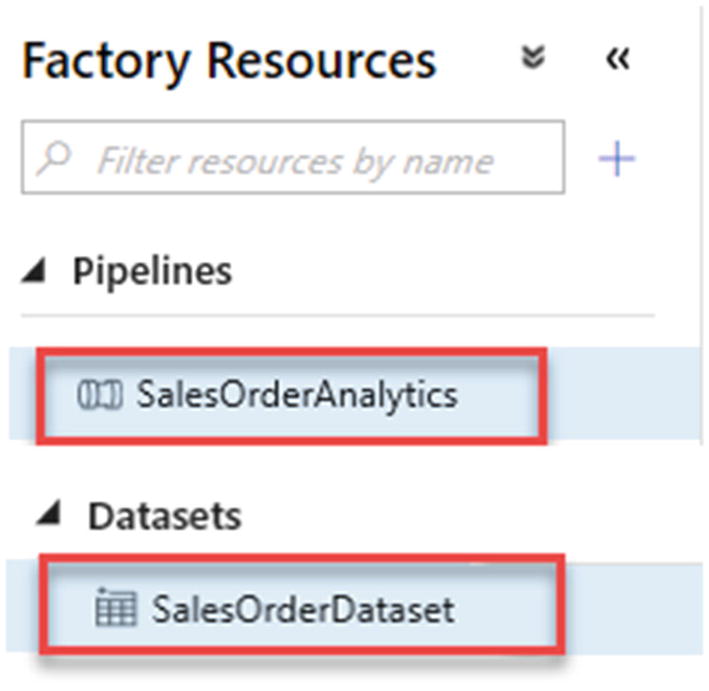

ADF pipeline and dataset resources

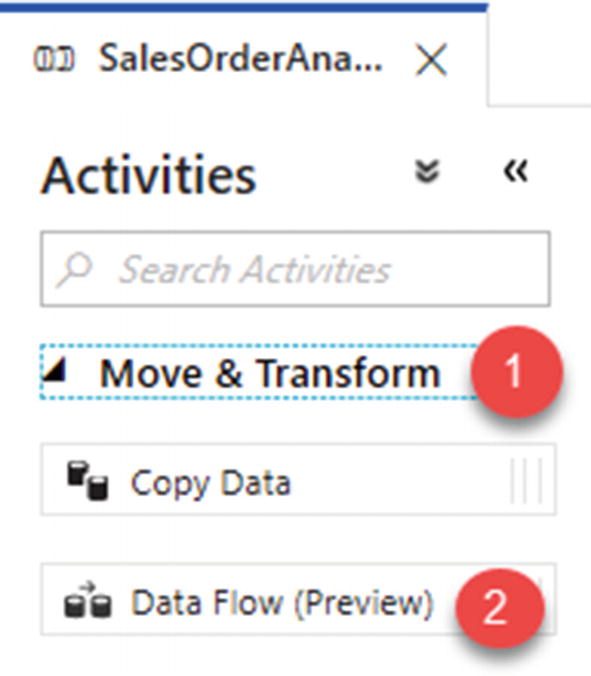

ADF Data Flow activity

Create the Mapping Data Flow

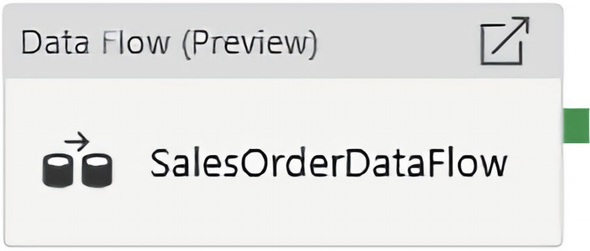

ADF SalesOrderDataFlow

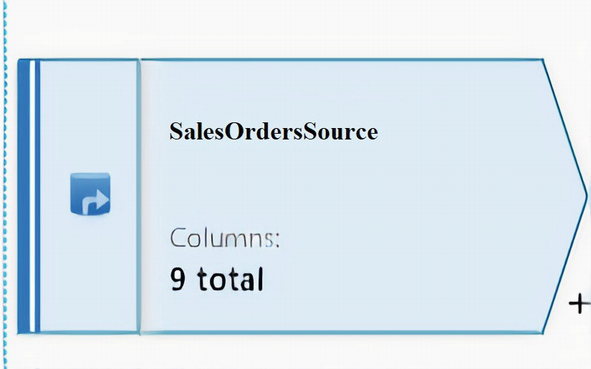

ADF data flow source connection to SalesOrderDataset

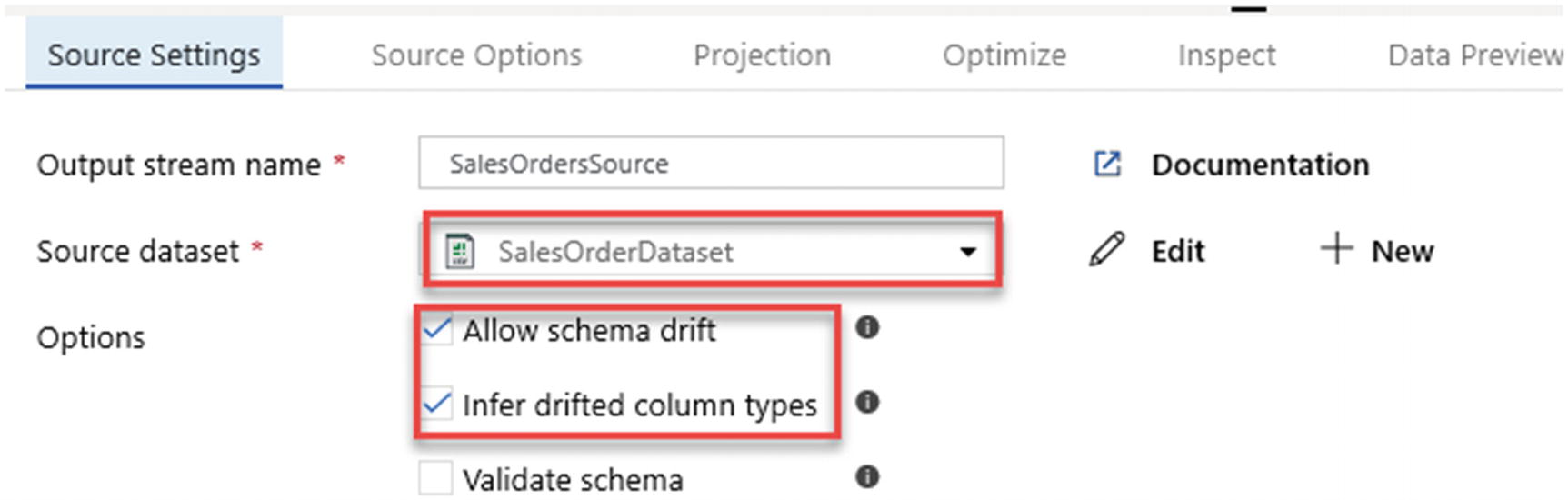

ADF source data flow settings

ADF source dataset parameters

ADF source options

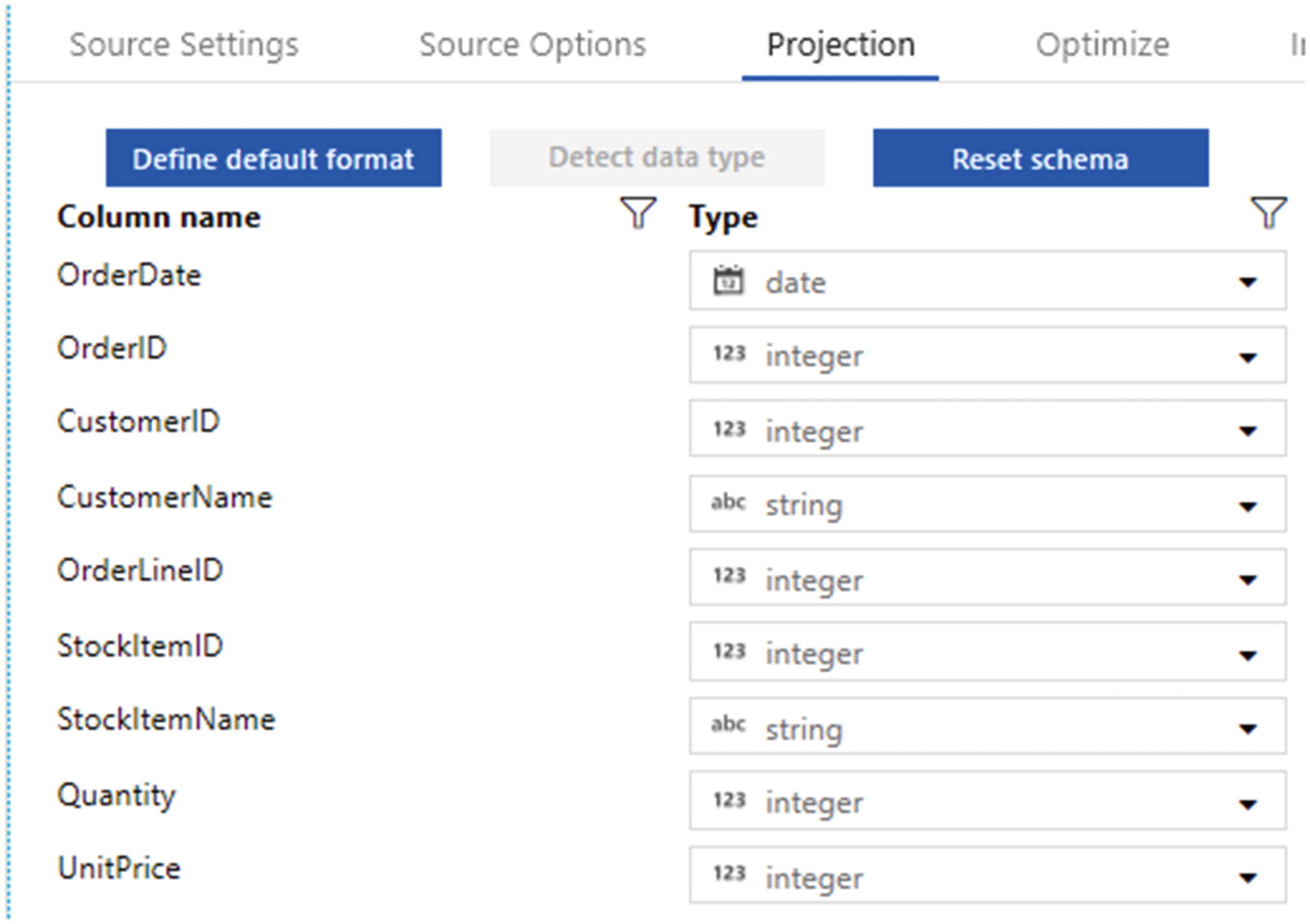

ADF data flow source projection

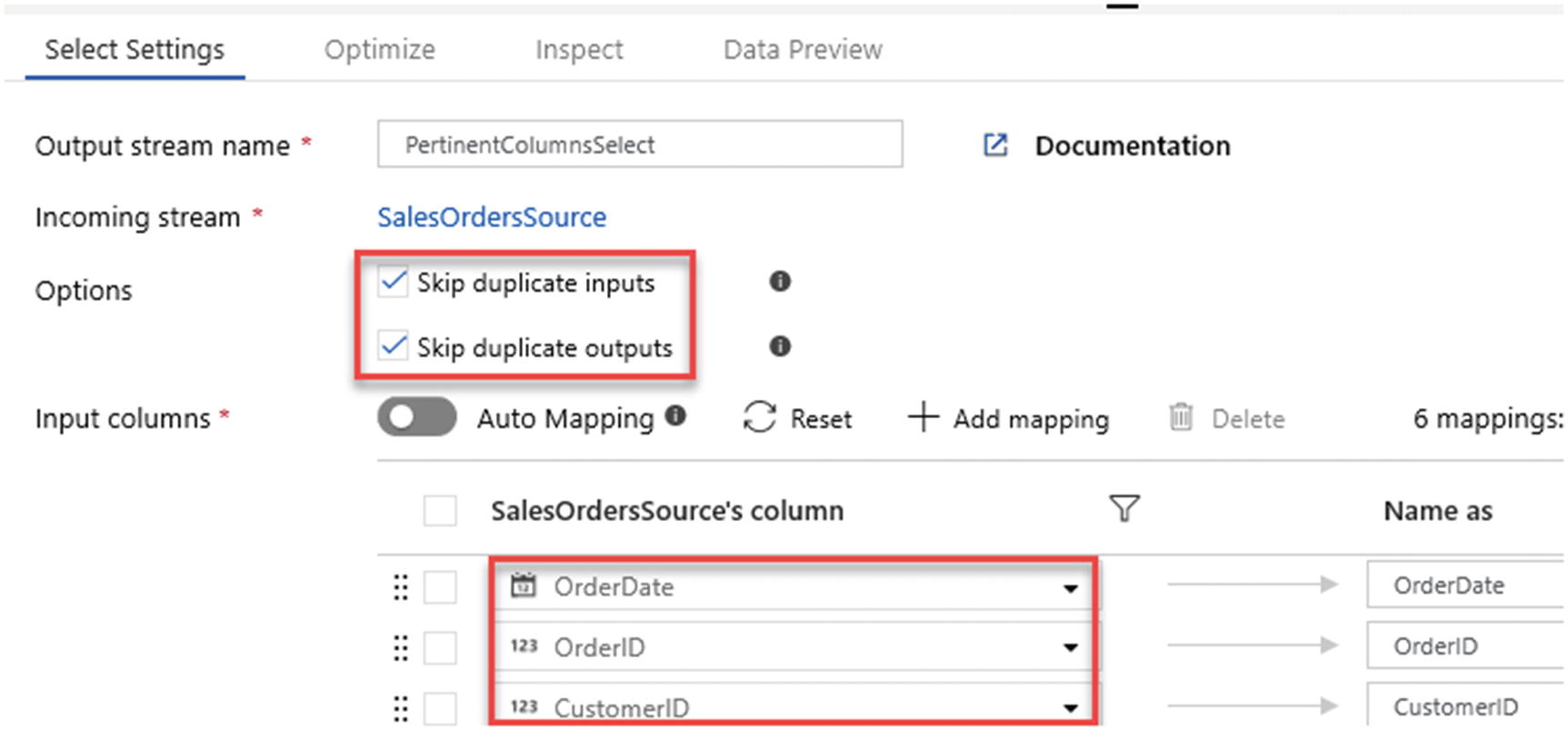

ADF Mapping Data Flows “Select” modifier activity

ADF data flow “Select Settings” to skip duplicates

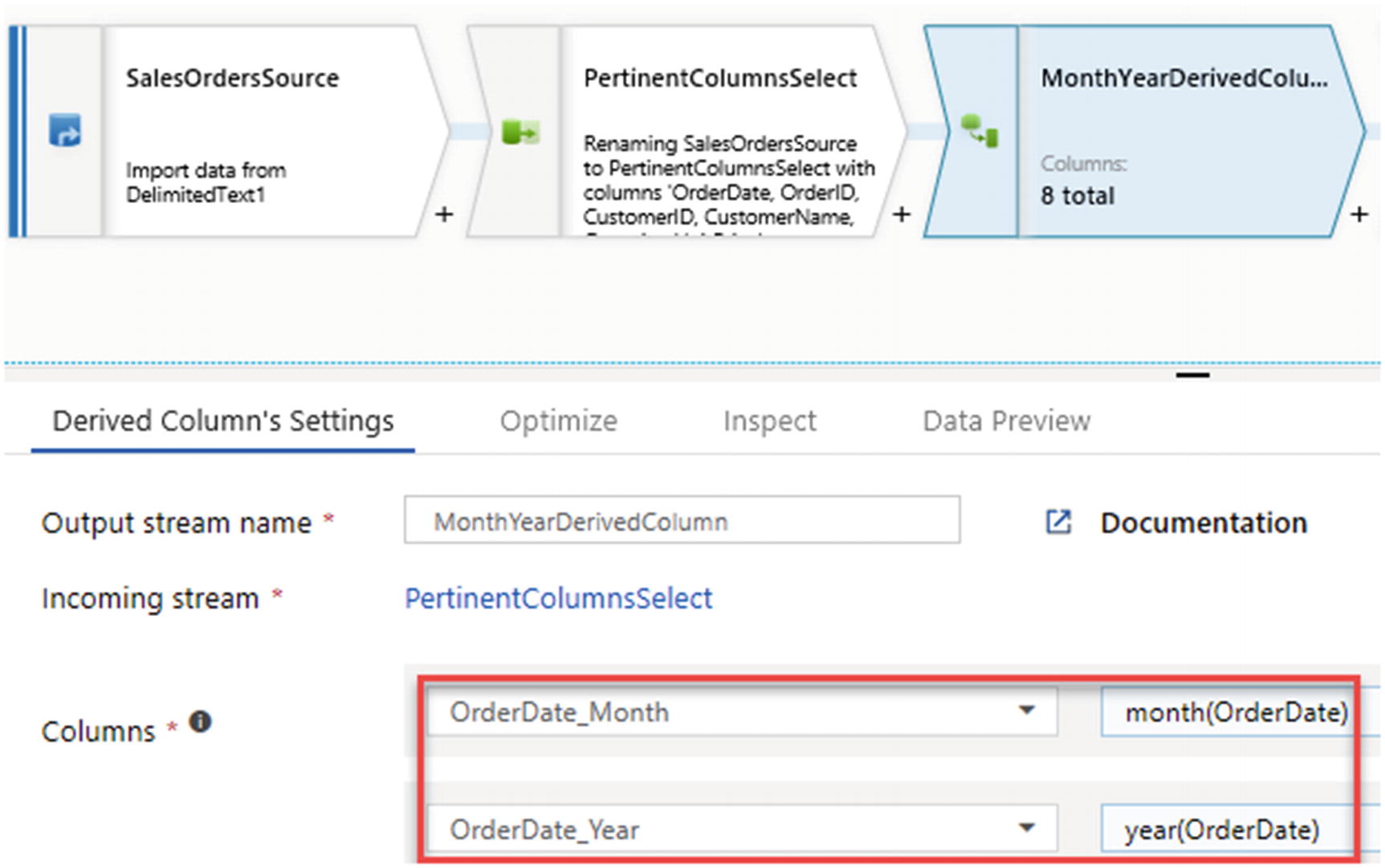

- 1.

Order month number based on OrderDate

- 2.

Year number also based on OrderDate

ADF data flow derived columns

Derived columns are great for data cleansing through the power of expressions.

Regular Expressions (Regex)

A regular expression is a sequence of characters that specifies a search pattern. Usually, such patterns are used by string-searching algorithms for “find” or “find and replace” operations on strings or for input validation. It is a technique developed in theoretical computer science and formal language theory. Regex functions are compatible with Mapping Data Flows and can be added to activities within the ADP pipelines. Here are some sample regular expressions and their intended purpose:

RegexReplace(Address,`^a-zA-Zds:`,''): Removes all non-alphanumeric characters

RegexReplace(Address,`[ ]{2}|.`,' '): Takes the Address field, which contains street address strings, and replaces any occurrence of two spaces or dots “.” with a single space.

Regex_extract(Address, `^(d+)`, 1): Uses the street address to extract just the house number.

Soundex

Soundex is a phonetic algorithm for indexing names by sound, as pronounced in English. The goal is for homophones to be encoded to the same representation so that they can be matched despite minor differences in spelling.

This can be a great expression to use when working with semi- or unstructured data in a lake to overcome issues with joining and cleansing data without keys.

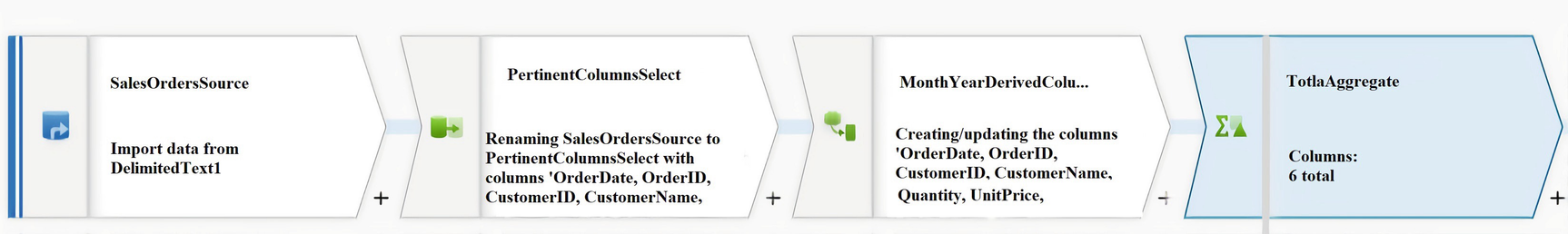

ADF data flow Aggregate schema modifier

ADF data flow Group by aggregate settings

ADF data flow Aggregates

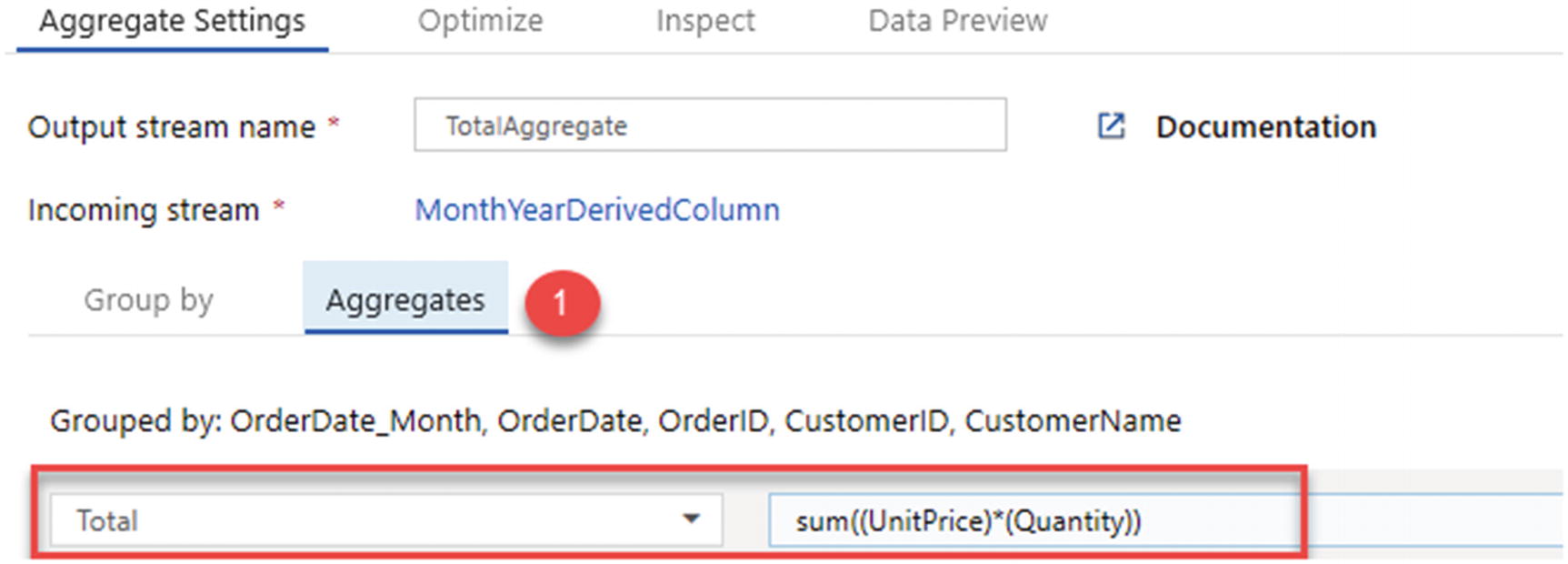

Now that the aggregation is complete, add a Windows schema modifier, as shown in Figure 12-20.

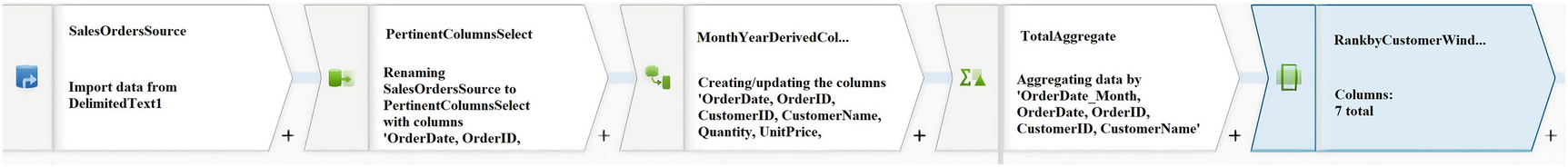

The window transformation is where you will define window-based aggregations of columns in your data streams. In the Expression Builder, you can define different types of aggregations that are based on data or time windows (SQL OVER clause such as LEAD, LAG, NTILE, CUMEDIST, RANK, etc.). A new field will be generated in your output that includes these aggregations. You can also include optional group-by fields.

ADF data flow Windows schema modifier

ADF Windows Settings for “Over”

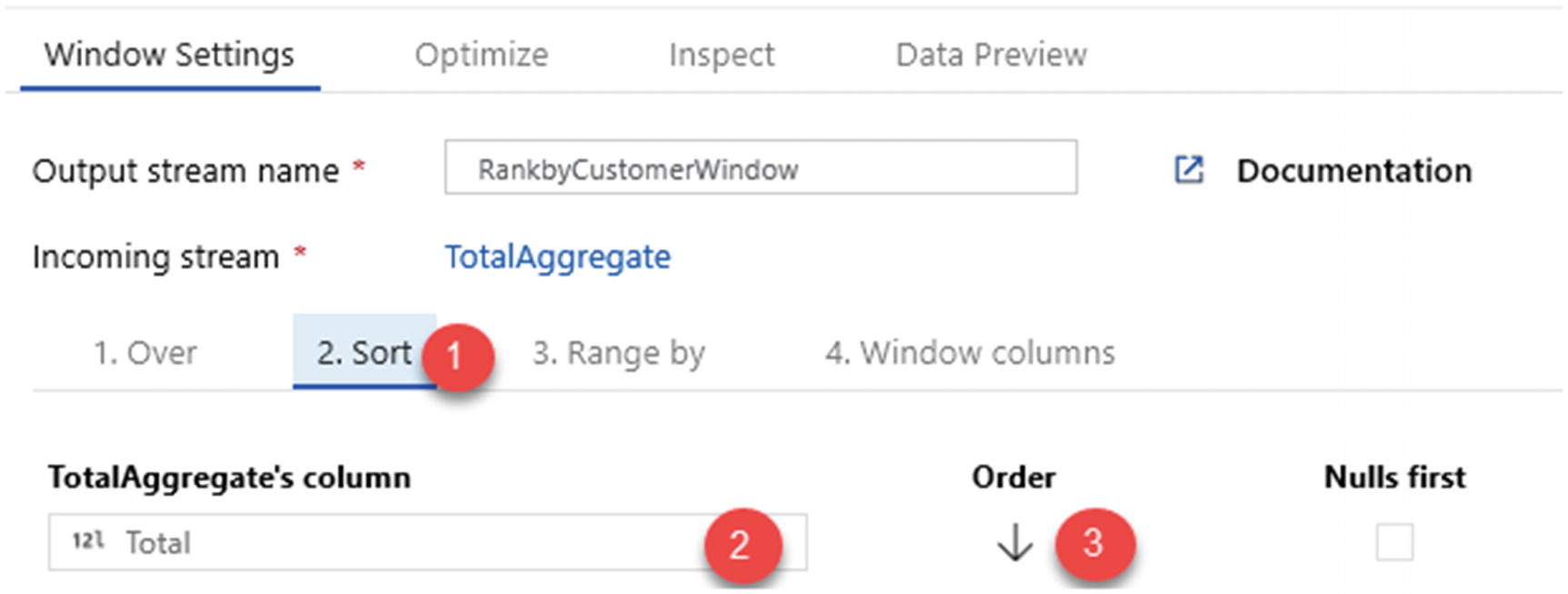

ADF Windows Settings for “Sort”

ADF Windows Settings for “Range by”

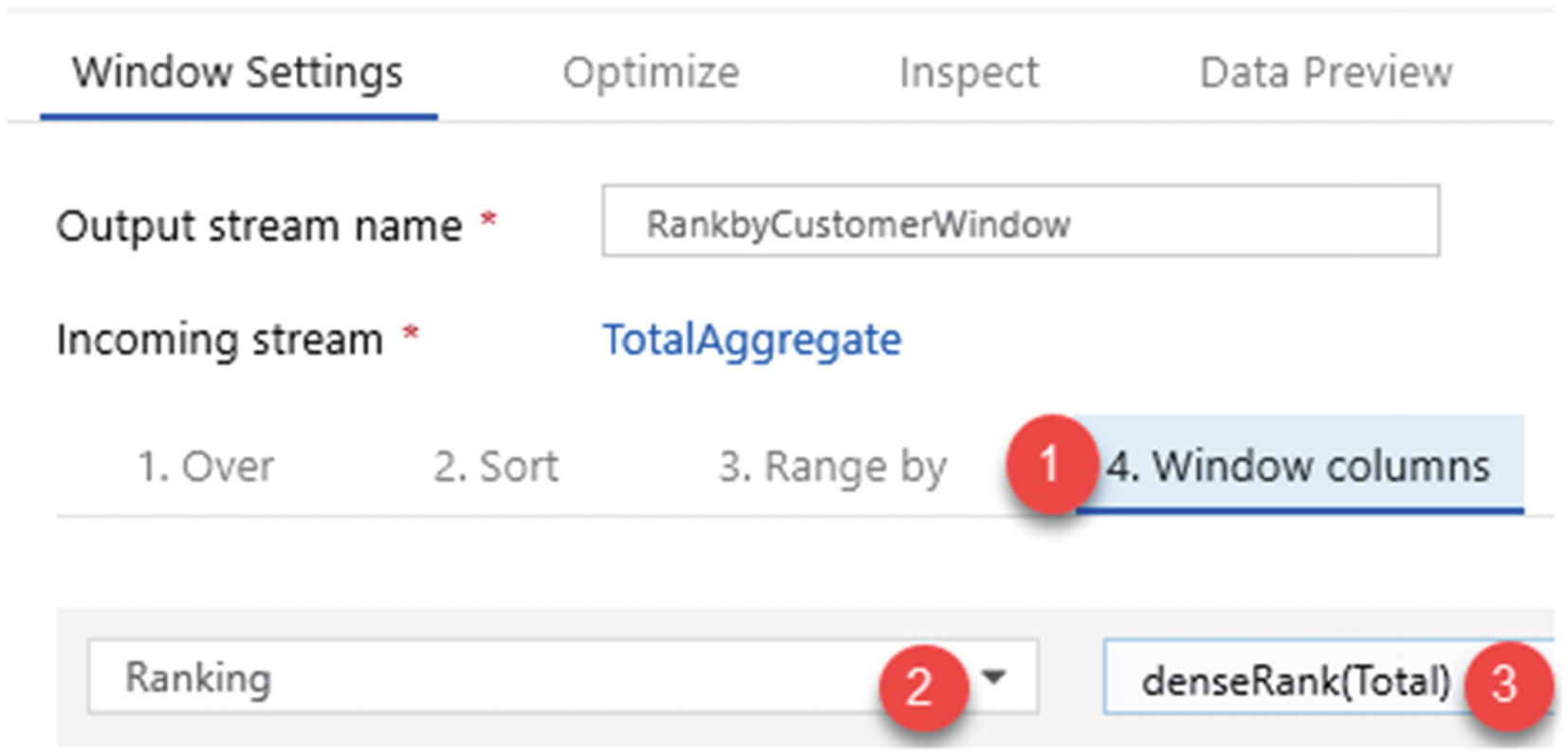

Next, add a dense rank function to the total. Note that there are a few rank and row number functions that fit specific needs and use cases .

RANK Function

RANK function sample output

ENAME | SAL | RANK |

|---|---|---|

SMITH | 800 | 1 |

JAMES | 950 | 2 |

ADAMS | 1100 | 3 |

MARTIN | 1250 | 4 |

WARD | 1250 | 4 |

TURNER | 1500 | 6 |

Table 12-1 shows a sample output for the RANK function which has been run on a sample dataset.

DENSE_RANK Function

The DENSE_RANK function is like the RANK function. However, the DENSE_RANK function does not skip any ranks if there is a tie between the ranks of the preceding records.

DENSE_RANK function sample output

ENAME | SAL | RANK |

|---|---|---|

SMITH | 800 | 1 |

JAMES | 950 | 2 |

ADAMS | 1100 | 3 |

MARTIN | 1250 | 4 |

WARD | 1250 | 4 |

TURNER | 1500 | 5 |

ROW_NUMBER Function

ADF Windows Settings for “Window columns”

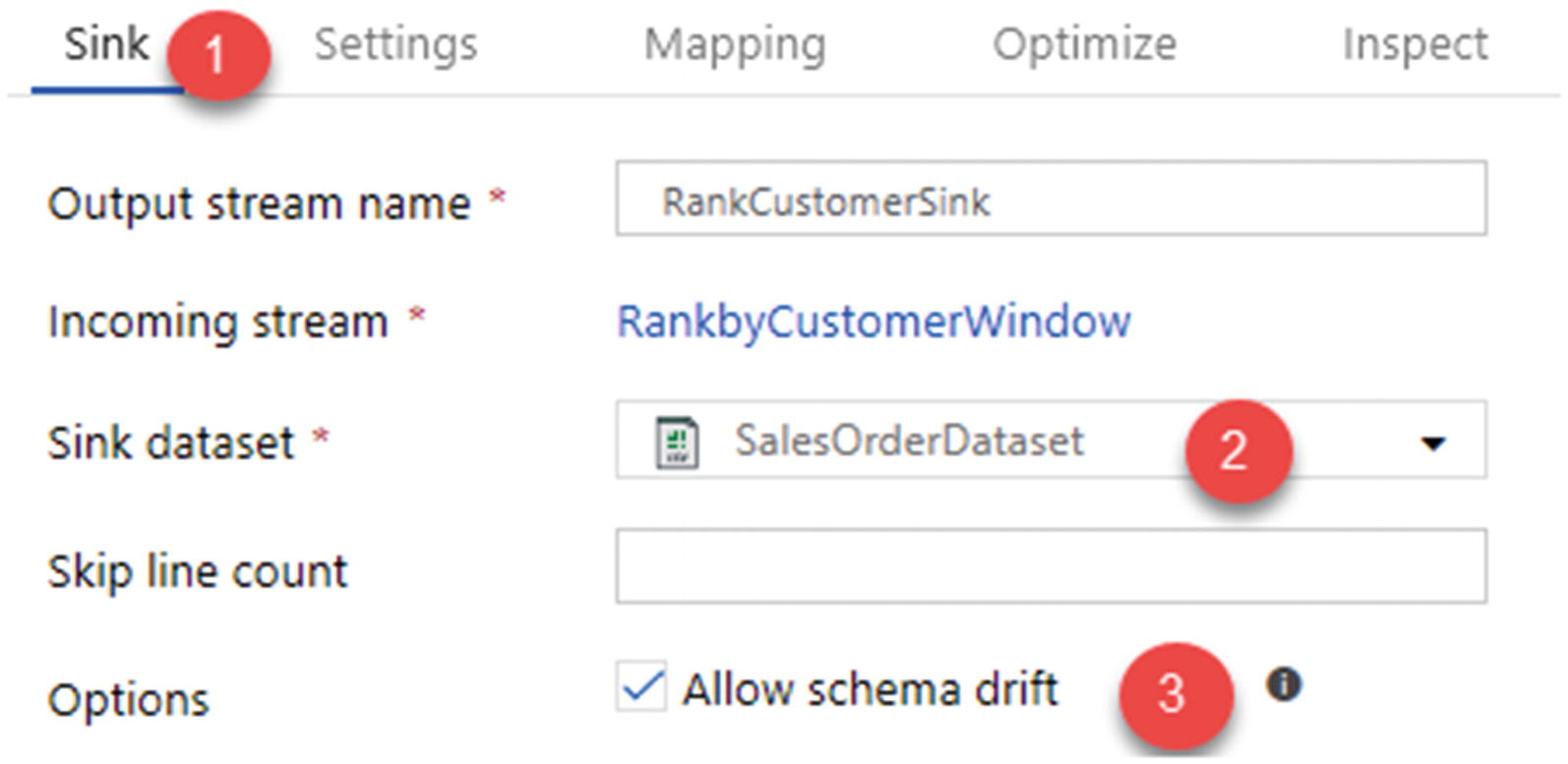

ADF data flow sink to store enriched data

Use the following sink dataset settings shown in Figure 12-26 and remember to check the option Allow schema drift. Azure Data Factory natively supports flexible schemas that change from execution to execution so that you can build generic data transformation logic without the need to recompile your data flows.

Schema drift is the case where your sources often change metadata. Fields, columns, and types can be added, removed, or changed on the fly. Without handling schema drift, your data flow becomes vulnerable to upstream data source changes. Typical ETL patterns fail when incoming columns and fields change because they tend to be tied to those source names.

Define sources that have mutable field names, data types, values, and sizes.

Define transformation parameters that can work with data patterns instead of hard-coded fields and values.

Define expressions that understand patterns to match incoming fields, instead of using named fields.

ADF data flow sink dataset connection properties

ADF data flow sink settings

One of the benefits of Mapping Data Flows is the Data Flow Debug mode, which allows for a preview of the transformed data without having to manually create clusters and run the pipeline.

ADF data flow Debug trigger

Ranked total results by customer

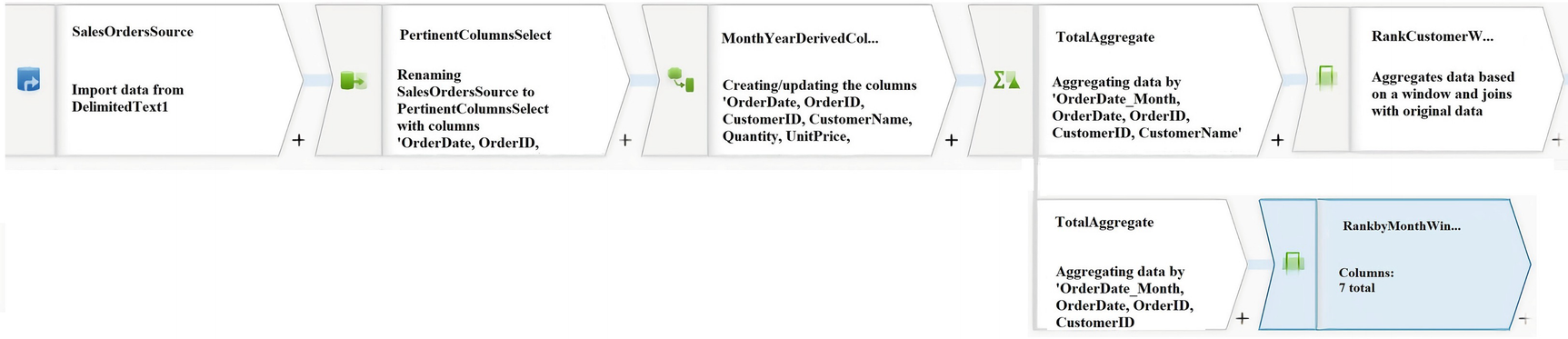

Split Total Aggregate into new branch

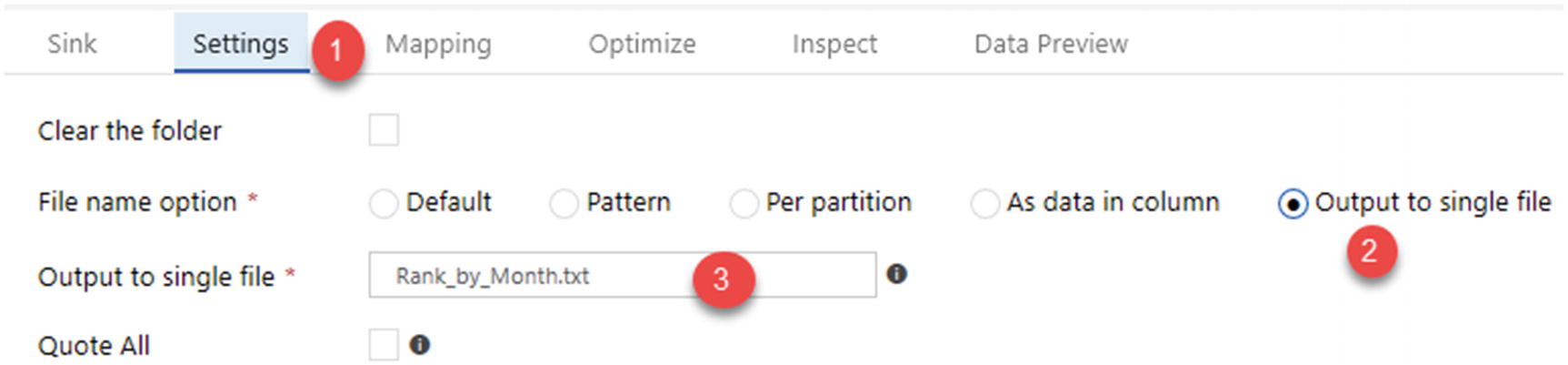

Settings to rank the totals by month and output the results to file

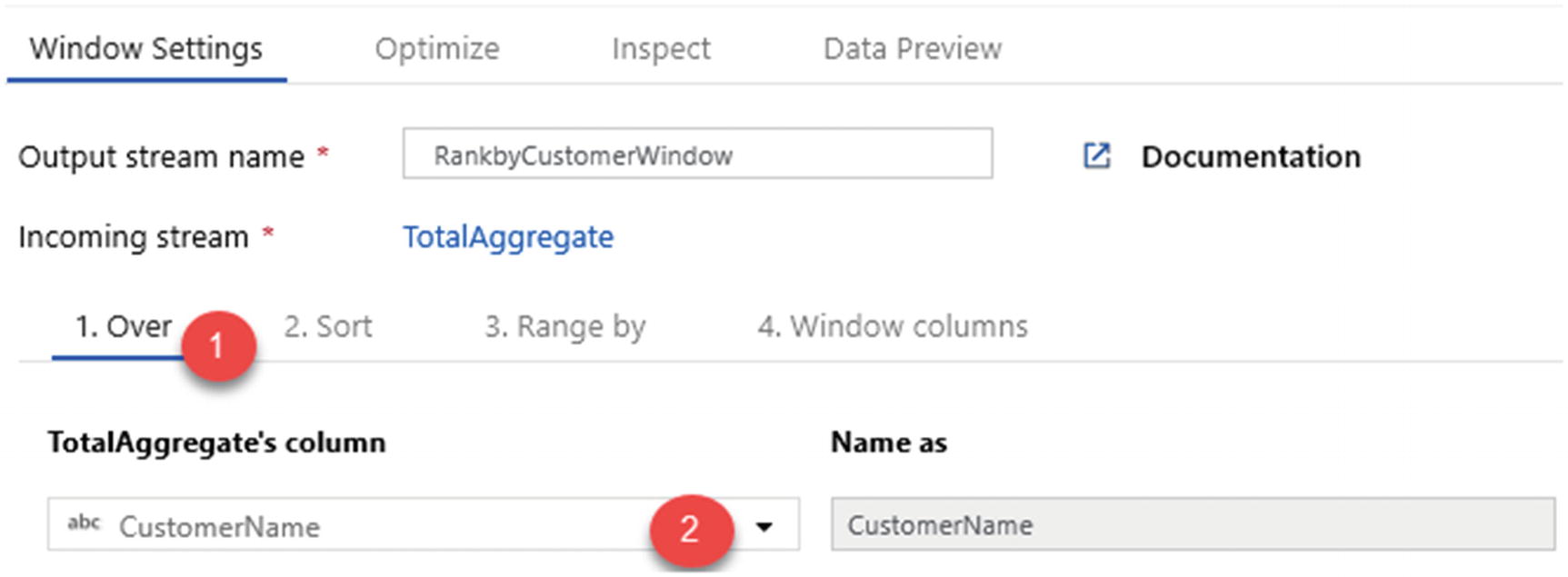

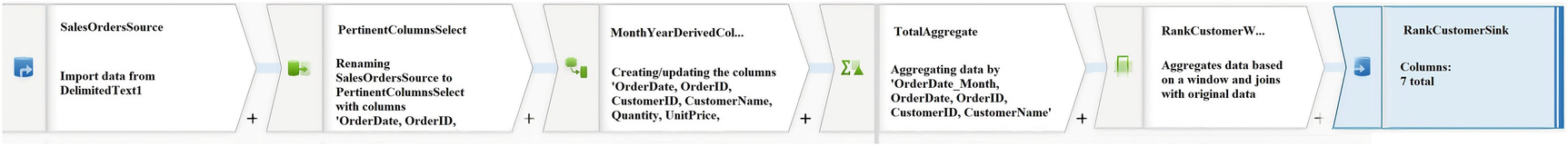

Full end-to-end ADF Mapping Data Flow

Summary

In this chapter, I demonstrated how to create a dataset pointing to a data lake container. Next, I showed you how to add a parameterized wildcard path to all text files in the 2016 sales folder. I then showed you how to select the pertinent columns, add a few key derived columns, perform aggregations, add window functions, split branches, and export the desired results to enriched text files in the data lake.

The output dataset contains aggregated and descending ranked totals (unit price * quantity) by customer name and by month. All of this was done by utilizing Azure Data Factory’s Mapping Data Flows feature and tested with the Data Flow Debug functionality.