The architecture of HBase has high-level logical components. These are as follows:

- HMaster: Responsible mainly for cluster-management operations such as failover, fallback, and primary region selector. Also, it assigns regions to different region servers. In addition, all the DDL operations go via the HMaster.

- Region server: Region servers are the workhorses of the cluster. They hold the clients' data and handle all the CRUD operations on that data. In terms of their location, region servers run on all the data nodes of HDFS. Region servers are meant to handle two types of requests:

- Read existing data

- Write data to the table

For optimal read and write operations, we need to tune the Java heap memory, and part of that is configuring the available space set aside for reads and writes.

At a broad level, there are two logical memories in HBase that are defined for read and write operations:

- Memstore: Part of the JVM heap memory store that is used by all the HBase write operations

- Block Cache: Part of the JVM heap memory that is used by all the HBase read operations

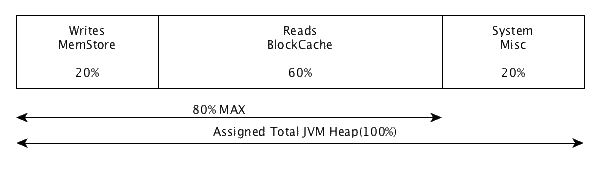

This is depicted here:

As you can see, we have allocated 60% of the total heap to Read operations and 20% to Write operations, with the remaining 20% left for System-wide heap usage. This configuration tell us that the cluster is a read-heavy cluster where the writes happen occasionally.

HBase provides us with the capability to dynamically allocate heap based on the incoming load. These are enabled via configurations. The following properties can be tuned in Hbase config files to enable a dynamic increase or decrease of heap based on incoming load:

|

Property name |

Default value |

Description |

|

hbase.regionserver.global .memstore.size.min.range

|

Unset |

The lower boundary for the size of all memstores.

|

|

hbase.regionserver.global .memstore.size.max.range

|

Unset |

The upper boundary for the size of all memstores.

|

|

hbase.regionserver.global .memstore.size

|

0.4 (40%) |

The default share of the total heap set aside for memstores.

|

|

hfile.block.cache.size .min.range

|

Unset |

The lower boundary for the block cache size.

|

|

hfile.block.cache.size .max.range

|

Unset |

The default share of the total heap assigned to the block cache.

|

|

hfile.block.cache.size |

0.4 (40%) |

The share of the total heap assigned to the block cache.

|

|

hbase.regionserver.heapmemory .tuner.period

|

Unset |

Defines how often the heap tuner runs. NOT ENABLED BY DEFAULT, See Below (when enabled the default value is in the 60s).

|

|

hbase.regionserver.global .memstore.upperLimit

|

40% |

Maximum size of all memstores in a region server before new updates are blocked and flushes are forced. Defaults to 40% of heap. Updates are blocked and flushes are forced until size of all memstores in a region server hits hbase.regionserver.global.memstore.lowerLimit.

|

|

base.regionserver.global .memstore.lowerLimit

|

35% |

Maximum size of all memstores in a region server before flushes are forced. Defaults to 35% of heap. This value equal to hbase.regionserver.global.memstore.upperLimit causes the minimum-possible flushing to occur when updates are blocked due to memstore-limiting.

|

So looking at the preceding data, if we want to create a Cluster that can handle read and write efficiently, then we can probably set the following values:

|

Property name |

Suggested value |

|

hbase.regionserver.global .memstore.size.min.range

|

0.2 |

|

hbase.regionserver.global .memstore.size.max.range

|

0.6 |

|

hbase.regionserver.global .memstore.size

|

0.4 |

|

hfile.block.cache.size .min.range

|

0.2 |

|

hfile.block.cache.size .max.range

|

0.6 |

|

hfile.block.cache.size |

0.4 |

|

hbase.regionserver.heapmemory .tuner.period

|

60s |

|

hbase.regionserver.global .memstore.upperLimit

|

0.4 |

|

base.regionserver.global .memstore.lowerLimit

|

0.35 |

What this data means is that the Minimum MemStore Heap value can never go below 20% of the total heap. But it can grow up to 60% if the reads are not heavy. Similarly, for the block cache that is responsible for the read operations, the minimum Java heap always available is 20% and it can grow dynamically to 60% if the load is not heavy on the write side. This is depicted in the following diagram:

ZooKeeper: ZooKeeper is a Coordination Service for HBase. It keeps all the meta information about the running HBase cluster and provides services such as naming, confg management, data synchronizations, leader election, and implementing consensus. A distributed HBase Cluster relies on Zookeeper for cluster configuration and cluster management. By design, HBase relies on Zookeeper only for transient data management. This includes cluster coordination and state communication. If zookeeper is not available, data can still be read from and written to an HBase cluster.