Chapter 3. Telecommunications and Network Security

This chapter covers the following topics:

• OSI model: An explanation of the functions of the seven layers of the OSI model

• TCP/IP model: A discussion of the TCP/IP model and its relationship to the OSI model

• Common TCP/UDP ports: A description of the function of port numbers and common standard ports

• IP addressing: A look at both logical and physical addressing systems and their interrelationship in routing and switching

• Network transmission: An examination of the processes used to transfer data across various media types

• Cabling: Types of bounded media, their characteristics, and proper use

• Network topologies: A survey of both logical and physical network topologies

• Network technologies: A discussion of the various technologies used to accomplish networking

• Network protocols/services: The functions of the major network protocols and services that provide network functionality

• Network routing: An explanation of how static and dynamic routing works and a discussion of the major interior and exterior routing protocols

• Network devices: Covers the function and placement of major network devices

• Network types: An explanation of network types including LAN, MAN, WAN, extranet, and intranet

• WAN technologies: A discussion of the various methods of connecting local area networks with wide area networks

• Remote connection technologies: A description of the methods of connecting remote users and networks to the LAN and the Internet

• Wireless networks: Covers the types of wireless networks and the processes required to secure them

• Network threats: An introduction to the various security threats facing networks

Sensitive data must be protected from unauthorized access when the data is at rest (on a hard drive) and in transit (moving through a network). Moreover, sensitive communications of other types such as emails, instant messages, and phone conversations must also be protected from prying eyes and ears. Many communication processes send information in a form that can be read and understood if captured with a protocol analyzer or sniffer.

In today’s communication world, assume that your communications are being captured regardless of how unlikely you think that might be. You should also take steps to protect or encrypt the transmissions so they will be useless to anyone capturing them. This chapter covers the protection of wired and wireless transmissions and of the network devices that perform the transmissions, as well as some networking fundamentals required to understand transmission security.

Foundation Topics

OSI Model

A complete understanding of networking requires an understanding of the Open Systems Interconnection (OSI) model. Created in the 1980s by the International Organization for Standardization (ISO) as a part of its mission to create a protocol set to be used as a standard for all vendors, it breaks the communication process into layers. Although the ensuing protocol set did not catch on as a standard (TCP/IP was adopted), the model has guided the development of technology since its creation. It also has helped generations of students understand the network communication process between two systems.

The OSI model breaks up the process into seven layers or modules. The benefits of doing this are

• It breaks up the communication process into layers with standardized interfaces between the layers, allowing for changes and improvements on one layer without necessitating changes on other layers.

• It provides a common framework for hardware and software developers, fostering interoperability.

The goal of this open systems architecture is that no vendor owns it and it acts as a blueprint or model for developers to work with. Various protocols operate at different layers of this model. A protocol is a set of communication rules two systems must both use and understand to communicate. Some protocols depend on other protocols for services, and as such these protocols work as a team to get transmissions done, much like the team at the post office that gets your letters delivered. Some people sort, others deliver, and still others track lost shipments.

The OSI model and the TCP/IP model, explained in the next section, are often both used to describe the process called packet creation or encapsulation. Until a packet is created to hold the data, it cannot be sent on the transmission medium.

With a modular approach it becomes possible for a change in a protocol or the addition of a new protocol to be accomplished without having to rewrite the entire protocol stack (a term for all the protocols that work together at all layers). The model has seven layers. This section discusses each layer’s function and its relationship to the layer above and below it in the model. The layers are often referred to by their number with the numbering starting at the bottom of the model at layer 1, the Physical layer.

The process of creating a packet, or encapsulation, begins at layer 7, the Application layer, rather than layer 1, so we discuss the process starting at layer 7 and work down the model to layer 1, the Physical layer, where the packet is sent out on the transmission medium.

Application Layer

The Application layer (layer 7) is where the encapsulation process begins. This layer receives the raw data from the application in use and provides services, such as file transfer and message exchange, to the application (and thus the user). An example of a protocol that operates at this layer is Hypertext Transfer Protocol (HTTP), which is used to transfer web pages across the network. Other examples of protocols that operate at this layer are DNS (name resolution queries), FTP (file transfers), and SMTP (email transfers).

The user application interfaces with these application protocols through a standard interface called an Application Programming Interface (API). The Application layer protocol receives the raw data and places it in a container called a protocol data unit (PDU). When the process gets down to layer 4 these PDUs have standard names, but at layers 5–7 we simply refer to the PDU as “data.”

Presentation Layer

The information that is developed at layer 7 is then handed to layer 6, the Presentation layer. Each layer makes no changes to the data received from the layer above it. It simply adds information to the developing packet. In the case of the Presentation layer, information is added that standardizes the formatting of the information if required.

Layer 6 is responsible for the manner in which the data from the Application layer is represented (or presented) to the Application layer on the destination device (explained more fully in the section “Encapsulation”). If any translation between formats is required, it will take care of it. It also communicates the type of data within the packet and the application that might be required to read it on the destination device.

Session Layer

The Session layer or layer 5 is responsible for adding information to the packet that makes a communication session between a service or application on the source device possible with the same service or application on the destination device. Do not confuse this process with the one that establishes a session between the two physical devices. That occurs not at this layer but at layers 3 and 4. This session is built and closed after the physical session between the computers has taken place.

The application or service in use is communicated between the two systems with an identifier called a port number. This information is passed on to the Transport layer, which also makes use of these port numbers.

Transport Layer

The protocols that operate at the Transport layer (layer 4) work to establish a session between the two physical systems. The service provided can be either connection oriented or connectionless, depending on the transport protocol in use. The “TCP/IP Model” section (TCP/IP being the most common standard networking protocol in use) discusses the specific transport protocols used by TCP/IP in detail.

The Transport layer receives all the information from layers 7, 6, and 5 and adds information that identifies the transport protocol in use and the specific port number that identifies the required layer 7 protocol. At this layer the PDU is called a segment because this layer takes a large transmission and segments it into smaller pieces for more efficient transmission on the medium.

Network Layer

At layer 3 or the Network layer, information required to route the packet is added. This is in the form of a source and destination logical address (meaning one that is assigned to a device in some manner and can be changed). In TCP/IP this is in terms of a source and destination IP address. An IP address is a number that uniquely differentiates a host from all other devices on the network. It is based on a numbering system that makes it possible for computers (and routers) to identify whether the destination device is on the local network or on a remote network. Any time a packet needs to be sent to a different network or subnet (IP addressing is covered later in the chapter), it must be routed and the information required to do that is added here. At this layer the PDU is called a packet.

Data Link Layer

The Data Link layer is responsible for determining the destination physical address. Network devices have logical addresses (IP addresses) and the network interfaces they possess have a physical address (MAC address), which is permanent in nature. When the transmission is handed off from routing device to routing device, at each stop this source and destination address pair changes, whereas the source and destination logical addresses (in most cases IP addresses) do not. This layer is responsible for determining what those MAC addresses should be at each hop (router interface) and adding them to this part of the packet. The later section “TCP/IP Model” covers how this resolution is performed in TCP/IP. After this is done, we call the PDU a frame.

Something else happens at this layer that is unique to this layer. Not only is a layer 2 header placed on the packet but also a trailer at the “end” of the frame. Information contained in the trailer is used to verify that none of the data contained has been altered or damaged en route.

Physical Layer

Finally, the packet (or frame, as it is called at layer 2) is received by the Physical layer (layer 1). Layer 1 is responsible for turning the information into bits (ones and zeros) and sending it out on the medium. The way in which this is accomplished can vary according to the media in use. For example, in a wired network, the ones and zeros are represented as electrical charges. In wireless, they are represented by altering the radio waves. In an optical network they are represented with light.

The ability of the same packet to be routed through various media types is a good example of the independence of the layers. As a PDU travels through different media types, the physical layer will change but all the information in layers 2–7 will not. Similarly, when a frame crosses routers or hops, the MAC addresses change but none of the information in layers 3–7 changes. The upper layers depend on the lower layers for various services but the lower layers leave the upper layer information unchanged.

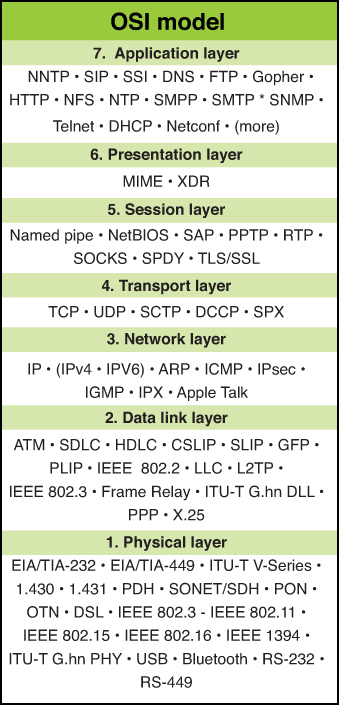

Figure 3-1 shows common protocols mapped to the OSI model.

The next section covers another model that perhaps more accurately depicts what happens in a TCP/IP network. Because TCP/IP is now the standard for transmission, comparing these two models is useful. Although they have a different number of layers and some of the layer names are different, they describe the same process of packet creation or encapsulation.

Multi-Layer Protocols

Some protocols, such as FTP and DNS, operate on a single layer of the OSI model. However, many others operate at multiple layers of the OSI model. The best example is TCP/IP, the networking protocol used on the Internet and on the vast majority of LANs. In fact, this protocol has its own model that describes the layers on which it operates and the parts of the protocol that operate on each layer. The next section covers this model and the protocol it was designed to describe.

TCP/IP Model

The protocols developed when the OSI model was developed (sometimes referred to as OSI protocols) did not become the standard for the Internet. The Internet as we know it today has its roots in a wide area network developed by the Department of Defense with TCP/IP being the protocol developed for that network. The Internet is a global network of public networks and Internet Service Providers (ISPs) throughout the world.

Although the OSI model is still often referenced, of the protocols themselves only X.400, X.500, and IS-IS have had much lasting impact. For that reason a second model exists based on TCP/IP. In a discussion of this model, the protocols that are part of what is called the TCP/IP suite can be mapped to the layer on which they perform their function.

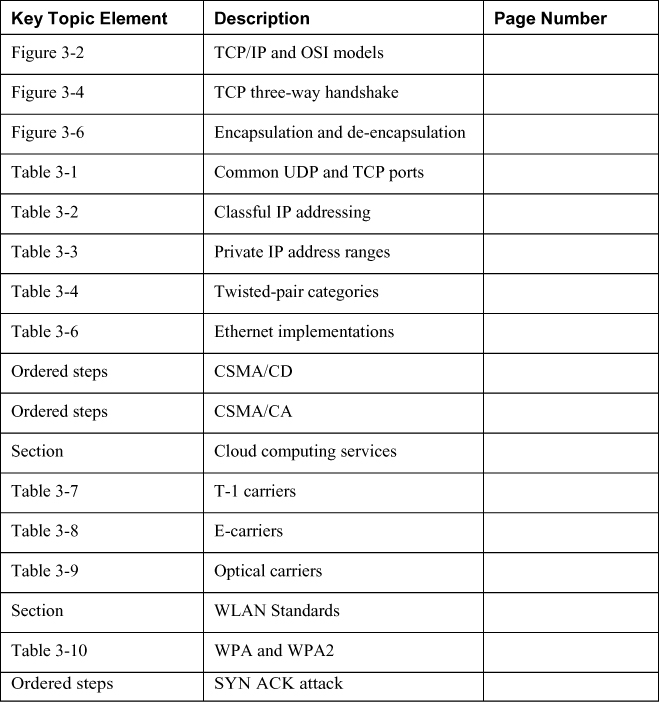

This model bears many similarities to the OSI model, which is not unexpected because they both describe the process of packet creation or encapsulation. The difference is that the OSI model breaks the process into seven layers, whereas the TCP/IP model breaks it into four. If you examine them side by side, however, it becomes apparent that many of the same functions occur at the same layers, while the TCP/IP model combines the top three layers of the OSI model into one and the bottom two layers of the OSI model into one. Figure 3-2 shows the two models next to one another.

Figure 3-2. TCP/IP and OSI Models

The TCP/IP model has only four layers and is useful to study because it focuses its attention on TCP/IP. This section explores those four layers and their functions and relationships to one another and to layers in the OSI model.

Application Layer

Although the Application layer in the TCP/IP model has the same name as the top layer in the OSI model, the Application layer in the TCP/IP model encompasses all the functions performed in layers 5–7 in the OSI model. Not all functions map perfectly because both are simply conceptual models. Within the Application layer, applications create user data and communicate this data to other processes or applications on another host. For this reason, it is sometimes also referred to as the process-to-process layer.

Examples of protocols that operate at this layer are SMTP, FTP, SSH, and HTTP. These protocols are discussed in the section “Network Protocols/Services” later in this chapter. In general, however, these are usually referred to as higher layer protocols that perform some specific function, whereas protocols in the TCP/IP suite that operate at the Transport and Internet layers perform location and delivery service on behalf of these higher layer protocols.

A port number identifies to the receiving device these upper layer protocols and the programs on whose behalf they function. The number identifies the protocol or service. Many port numbers have been standardized. For example, DNS is identified with the standard port number of 53. The “Common TCP/UDP Ports” section covers these port numbers in more detail.

Transport Layer

The Transport layers of the OSI model and the TCP/IP model perform the same function, which is to open and maintain a connection between hosts. This must occur before the session between the processes can occur as described in the Application layer section and can be done in TCP/IP in two ways: connectionless and connection-oriented. A connection-oriented transmission means that a connection will be established before any data is transferred, whereas in a connectionless transmission this is not done. One of two different transport layer protocols is used for each process. If a connection-oriented transport protocol is required, Transmission Control Protocol (TCP) will be used. If the process will be connectionless, User Datagram Protocol (UDP) is used.

Application developers can choose to use either TCP or UDP as the Transport layer protocol used with the application. Regardless of which transport protocol is used, the application or service will be identified to the receiving device by its port number and the transport protocol (UDP or TCP). Port numbers are discussed in more detail in the section “Common TCP/UDP Ports” later in this chapter.

Although TCP provides more functionality and reliability, the overhead required by this protocol is substantial when compared to UDP. This means that a much higher percentage of the packet consists of the header when using TCP than when using UDP. This is necessary to provide the fields required to hold the information needed to provide the additional services. Figure 3-3 shows a comparison of the size of the two respective headers.

Figure 3-3. TCP and UDP Headers
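To put rough numbers on that overhead, the following minimal sketch assumes the minimum header sizes of the two protocols (20 bytes for a TCP header with no options, 8 bytes for a UDP header). The helper name header_share and the 100-byte payload are hypothetical and used only for illustration.

```python
# Minimum header sizes in bytes (TCP with no options; the UDP header is fixed).
TCP_MIN_HEADER = 20
UDP_HEADER = 8


def header_share(header_len: int, payload_len: int) -> float:
    """Return the fraction of a segment that is header rather than payload."""
    return header_len / (header_len + payload_len)


if __name__ == "__main__":
    payload = 100  # hypothetical 100-byte application message
    print(f"TCP: {header_share(TCP_MIN_HEADER, payload):.1%} of the segment is header")
    print(f"UDP: {header_share(UDP_HEADER, payload):.1%} of the segment is header")
```

The smaller the payload, the larger the share of each transmission that the header consumes, which is one reason small, frequent messages are often sent with UDP.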

When an application is written to use TCP, a state of connection is established between the two hosts before any data is transferred. This occurs using a process known as the TCP three-way handshake. This process is followed exactly, and no data is transferred until it is complete. Figure 3-4 shows the steps in this process. The steps are as follows:

1. The initiating computer sends a packet with the SYN flag set (one of the fields in the TCP header), which indicates a desire to create a connection.

2. The receiving host acknowledges receiving this packet and indicates a willingness to create a state of connection by sending back a packet with both the SYN and ACK flags set.

3. The first host acknowledges completion of the connection process by sending a final packet back with only the ACK flag set.

Figure 3-4. TCP Three-Way Handshake
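The three steps can be modeled as a simple exchange of flagged segments. This is only an illustrative sketch: the Segment class and three_way_handshake function are hypothetical names, and a real TCP implementation also exchanges sequence numbers, which are omitted here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    sender: str
    flags: frozenset


def three_way_handshake(client: str = "client", server: str = "server") -> list:
    """Return the three segments exchanged before any data is transferred."""
    return [
        Segment(client, frozenset({"SYN"})),         # step 1: request a connection
        Segment(server, frozenset({"SYN", "ACK"})),  # step 2: acknowledge and agree
        Segment(client, frozenset({"ACK"})),         # step 3: confirm completion
    ]


if __name__ == "__main__":
    for number, segment in enumerate(three_way_handshake(), start=1):
        print(number, segment.sender, sorted(segment.flags))
```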

So what exactly is gained by using the extra overhead to use TCP? The following are examples of the functionality provided with TCP:

• Guaranteed delivery: If the receiving host does not specifically acknowledge receipt of each packet, the sending system will resend the packet.

• Sequencing: In today’s routed networks, the packets might take many different routes to arrive and might not arrive in the order in which they were sent. A sequence number added to each packet allows the receiving host to reassemble the entire transmission using these numbers.

• Flow control: The receiving host has the capability of sending the acknowledgement packets back to signal the sender to slow the transmission if it cannot process the packets as fast as they are arriving.

Many applications do not require the services provided by TCP or cannot tolerate the overhead required by TCP. In these cases the process will use UDP, which sends on a “best effort” basis with no guarantee of delivery. In many cases some of these functions are provided by the Application layer protocol itself rather than relying on the Transport layer protocol.

Internet Layer

The Transport layer can neither create a state of connection nor send using UDP until the location and route to the destination are determined, which occurs on the Internet layer. The four protocols in the TCP/IP suite that operate at this layer are

• Internet Protocol (IP): Responsible for putting the source and destination IP addresses in the packet and for routing the packet to its destination.

• Internet Control Message Protocol (ICMP): Used by the network devices to send messages regarding the success or failure of communications and used by humans for troubleshooting. When you use the PING or TRACEROUTE commands, you are using ICMP.

• Internet Group Management Protocol (IGMP): Used when multicasting, which is a form of communication whereby one host sends to a group of destination hosts rather than a single host (called a unicast transmission) or to all hosts (called a broadcast transmission).

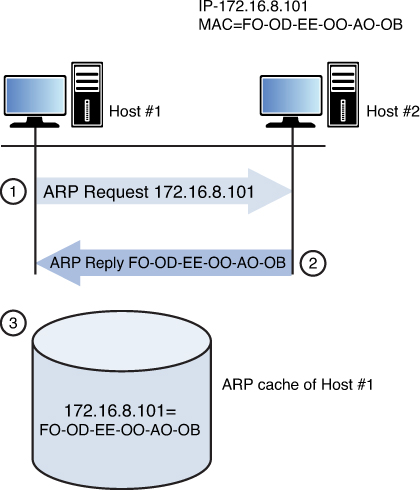

• Address Resolution Protocol (ARP): Resolves the IP address placed in the packet to a physical or layer 2 address (called a MAC address in Ethernet).

The relationship between IP and ARP is worthy of more discussion. IP places the source and destination IP addresses in the header of the packet. As we saw earlier, when a packet is being routed across a network the source and destination IP addresses never change but the layer 2 or MAC address pairs change at every router hop. ARP uses a process called the ARP broadcast to learn the MAC address of the interface that matches the IP address of the next hop. After it has done this, a new layer 2 header is created. Again, nothing else in the upper layer changes in this process, just layer 2.

That brings up a good point concerning the mapping of ARP to the TCP/IP model. Although we generally place ARP on the Internet layer, the information it derives from this process is placed in the Link layer or layer 2, the next layer in our discussion.
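The hop-by-hop behavior described above can be sketched as follows. The forward function, the IP addresses, and the MAC address pairs are all hypothetical; the MAC pairs stand in for the values that ARP would resolve on each link.

```python
def forward(packet: dict, hops: list) -> list:
    """Return the frame seen on each link; only the layer 2 header is rebuilt."""
    frames = []
    for src_mac, dst_mac in hops:
        # A new layer 2 header is created per hop; the layer 3 packet is carried unchanged.
        frames.append({"src_mac": src_mac, "dst_mac": dst_mac, "packet": packet})
    return frames


if __name__ == "__main__":
    packet = {"src_ip": "10.0.0.5", "dst_ip": "216.5.41.3", "data": "hello"}
    # Hypothetical MAC pairs for two links: host to router, then router to destination.
    hops = [
        ("aa:aa:aa:00:00:01", "bb:bb:bb:00:00:01"),
        ("bb:bb:bb:00:00:02", "cc:cc:cc:00:00:01"),
    ]
    for frame in forward(packet, hops):
        print(frame["src_mac"], "->", frame["dst_mac"], "| IP dst:", frame["packet"]["dst_ip"])
```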

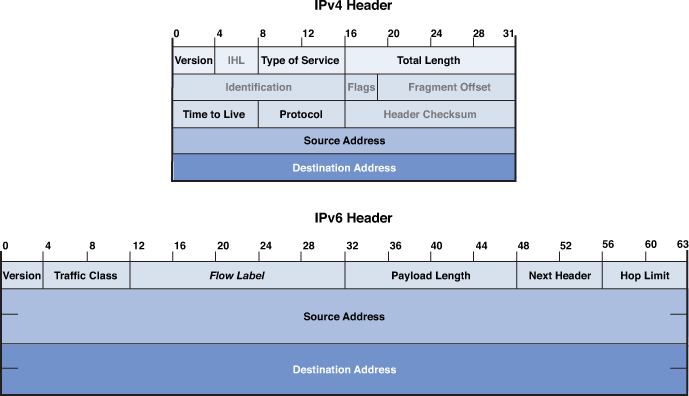

Just as the Transport layer added a header to the packet, so does the Internet layer. One of the improvements made by IPv6 is the streamlining of the IP header. Although the same information is contained in the header and the header is larger, it has a much simpler structure. Figure 3-5 shows a comparison of the two.

Figure 3-5. IPv6 and IPv4 Header

Link Layer

The Link layer of the TCP/IP model provides the services provided by both the Data Link and the Physical layers in the OSI model. The source and destination MAC addresses are placed in this layer’s header. A trailer is also placed on the packet at this layer with information in the trailer that can be used to verify the integrity of the data.

This layer is also concerned with placing the bits on the medium, as discussed in the section on the OSI model earlier in this chapter. Again, the exact method of implementation varies with the physical transmission medium. It might be in terms of electrical impulses, light waves, or radio waves.

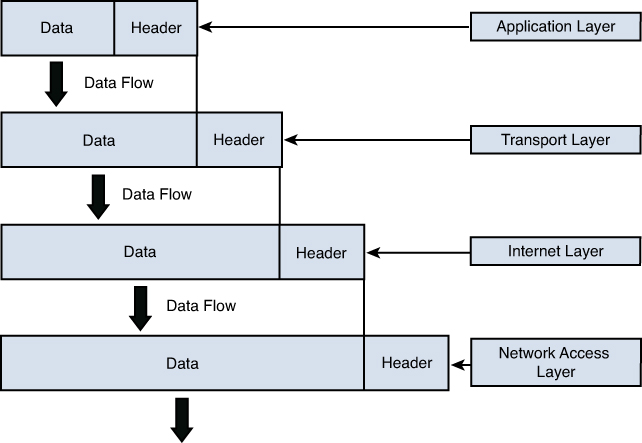

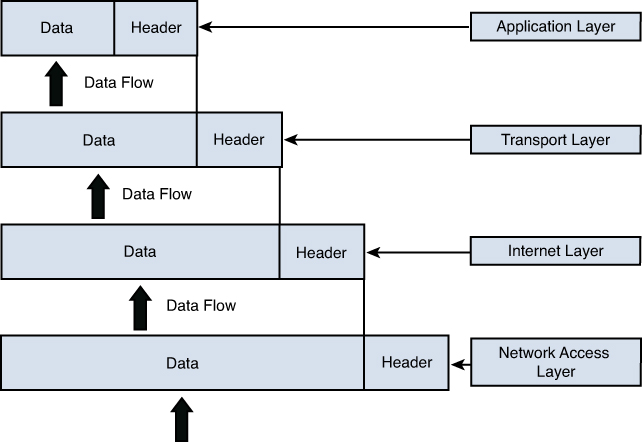

Encapsulation

In either model, as the packet is created, each layer adds its own header, and a trailer is placed on the packet at the Data Link (or Link) layer before transmission. This process is called encapsulation. Intermediate devices, such as routers and switches, only read the layers of concern to that device (layer 2 for a switch and layer 3 for a router). The ultimate receiver strips off the header at each layer, making use of the information placed in the header by the corresponding layer on the sending device. This process is called de-encapsulation. Figure 3-6 shows a visual representation of this process.

Figure 3-6. Encapsulation and De-encapsulation
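As a rough illustration of the process, the following sketch wraps application data in placeholder headers and then removes them in reverse order. The bracketed strings are stand-ins for real headers, and the function names are hypothetical.

```python
TCP_HDR, IP_HDR, ETH_HDR, ETH_TRAILER = "[TCP]", "[IP]", "[ETH]", "[TRAILER]"


def encapsulate(data: str) -> str:
    """Wrap data in transport, network, and link headers plus a link trailer."""
    segment = TCP_HDR + data                # layer 4 PDU: segment
    packet = IP_HDR + segment               # layer 3 PDU: packet
    return ETH_HDR + packet + ETH_TRAILER   # layer 2 PDU: frame


def strip(pdu: str, header: str, trailer: str = "") -> str:
    """Remove one layer's header (and trailer, if any) from a PDU."""
    if not (pdu.startswith(header) and pdu.endswith(trailer)):
        raise ValueError("PDU does not carry the expected header/trailer")
    return pdu[len(header):len(pdu) - len(trailer)]


def de_encapsulate(frame: str) -> str:
    """Strip the headers in the reverse order in which they were added."""
    packet = strip(frame, ETH_HDR, ETH_TRAILER)
    segment = strip(packet, IP_HDR)
    return strip(segment, TCP_HDR)


if __name__ == "__main__":
    frame = encapsulate("GET /")
    print(frame)
    print(de_encapsulate(frame))
```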

Common TCP/UDP Ports

When the Transport layer learns from the Application layer the port number of the service or application required on the destination device, it records that number in the header as either a TCP or UDP port number. Both UDP and TCP use 16 bits in the header to identify these ports. These port numbers are software based or logical, and there are 65,536 possible numbers (0–65535). Port numbers are assigned in various ways, based on three ranges:

• System or well-known ports (0–1023)

• User Ports (1024–49151)

• Dynamic and/or Private Ports (49152–65535)

System Ports are assigned by the Internet Engineering Task Force (IETF) for standards-track protocols, as per [RFC6335]. User ports can be registered with the Internet Assigned Numbers Authority (IANA) and assigned to the service or application using the “Expert Review” process, as per [RFC6335]. Dynamic ports are used by source devices as source ports when accessing a service or application on another machine. For example, if computer A is sending an FTP packet, the destination port will be the well-known port for FTP and the source will be selected by the computer randomly from the dynamic range.
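The three ranges listed above can be expressed as a small lookup. This is a minimal sketch; the function name port_range and its return labels are hypothetical.

```python
def port_range(port: int) -> str:
    """Classify a TCP or UDP port number into one of the three assignment ranges."""
    if not 0 <= port <= 65535:
        raise ValueError("port numbers are 16-bit values (0-65535)")
    if port <= 1023:
        return "system"   # system or well-known ports
    if port <= 49151:
        return "user"     # user (registered) ports
    return "dynamic"      # dynamic and/or private ports


if __name__ == "__main__":
    for port in (53, 1024, 49152):
        print(port, port_range(port))
```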

The combination of the destination IP address and the destination port number is called a socket. The relationship between these two values can be understood if viewed through the analogy of an office address. The office has a street address but the address also must contain a suite number as there could be thousands of suites (in this case, 65,536) in the building. Both are required to get the information where it should go.

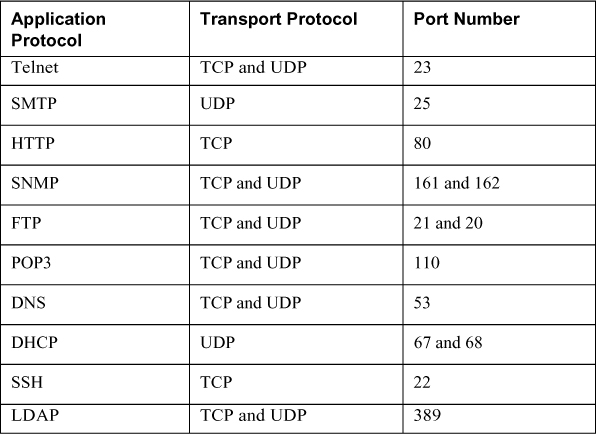

As a security professional you should be aware of well-known port numbers of common services. In many instances firewall rules and access control lists (ACLs) are written or configured in terms of the port number of what is being allowed or denied rather than the name of the service or application. Table 3-1 lists some of the more important port numbers. Some use more than one port.

Table 3-1. Common TCP/UDP Port Numbers

Logical and Physical Addressing

During the process of encapsulation at layer 3 of the OSI model, IP places source and destination IP addresses in the packet. Then at layer 2, the matching source and destination MAC addresses that have been determined by ARP are placed in the packet. IP addresses are examples of logical addressing, and MAC addresses are examples of physical addressing. IP addresses are considered logical because these addresses are administered by humans and can be changed at any time. MAC addresses on the other hand are assigned permanently to the interface cards of the devices when the interfaces are manufactured. It is important to note, however, that although these addresses are permanent they can be spoofed. When this is done, however, the hacker is not actually changing the physical address, but rather telling the interface to place a different MAC address in the layer 2 headers.

This section discusses both address types with a particular focus on how IP addresses are used to create separate networks or subnets in the larger network. It also discusses how IP addresses and MAC addresses are related and used during a network transmission.

IPv4

IPv4 addresses are 32 bits in length and can be represented in either binary or in dotted-decimal format. The number of possible IP addresses using 32 bits can be calculated by raising the number 2 (the number of possible values in the binary number system) to the 32nd power. The result is 4,294,967,296, which on the surface appears to be enough IP addresses. But with the explosion of the Internet and the increasing number of devices that require an IP address, this number has proven to be insufficient.

Due to the eventual exhaustion of the IPv4 address space, several methods of preserving public IP addresses (more on that in a bit, but for now these are addresses that are legal to use on the Internet) have been implemented, including the use of private addresses and Network Address Translation (NAT), both discussed in the following sections. The ultimate solution lies in the adoption of IPv6, a new system that uses 128 bits and allows for enough IP addresses for each man, woman, and child on the planet to have as many IP addresses as the entire IPv4 numbering space. IPv6 is discussed later in this section.

IP addresses that are written in dotted-decimal format, the format in which humans usually work with them, have four fields called octets separated by dots or periods. Each field is called an octet because when we look at the addresses in binary format, we devote 8 bits in binary to represent each decimal number that appears in the octet when viewed in dotted-decimal format. Therefore, if we look at the address 216.5.41.3, four decimal numbers are separated by dots, where each would be represented by 8 bits if viewed in binary. The following is the binary version of this same address:

11011000.00000101.00101001.00000011

There are 32 bits in the address, 8 in each octet.
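The conversion between the two notations can be checked with a few lines of code. This is a minimal sketch; the function name to_binary is hypothetical.

```python
def to_binary(dotted: str) -> str:
    """Convert a dotted-decimal IPv4 address to dotted binary, 8 bits per octet."""
    octets = [int(part) for part in dotted.split(".")]
    if len(octets) != 4 or any(not 0 <= octet <= 255 for octet in octets):
        raise ValueError("an IPv4 address has four octets in the range 0-255")
    return ".".join(f"{octet:08b}" for octet in octets)


if __name__ == "__main__":
    print(to_binary("216.5.41.3"))
    print("Possible 32-bit addresses:", 2 ** 32)
```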

The structure of IPv4 addressing lends itself to dividing the network into subdivisions called subnets. Each IP address also has a required companion value called a subnet mask. The subnet mask is used to specify which part of the address is the network part and which part is the host. The network part, on the left side of the address, determines on which network the device resides, whereas the host portion on the right identifies the device on that network. Figure 3-7 shows the network and host portion of the three default classes of IP address.

Figure 3-7. Network and Host Bits
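The way a mask separates the two parts can be shown with a bitwise AND on each octet. The sketch below assumes a mask of 255.255.255.0 for the example address; the function name split_address is hypothetical.

```python
def split_address(address: str, mask: str) -> tuple:
    """Return the (network part, host part) of an IPv4 address for a given mask."""
    addr_octets = [int(part) for part in address.split(".")]
    mask_octets = [int(part) for part in mask.split(".")]
    # Bits set in the mask select the network part; the remaining bits are the host part.
    network = [a & m for a, m in zip(addr_octets, mask_octets)]
    host = [a & (~m & 0xFF) for a, m in zip(addr_octets, mask_octets)]
    return ".".join(map(str, network)), ".".join(map(str, host))


if __name__ == "__main__":
    network, host = split_address("216.5.41.3", "255.255.255.0")
    print("network part:", network)
    print("host part:   ", host)
```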

When the IPv4 system was first created, there were only three default subnet masks. This yielded only three sizes of networks, which later proved to be inconvenient and wasteful of public IP addresses. Eventually a system called Classless Inter-Domain Routing (CIDR) was adopted. CIDR uses subnet masks that are not tied to the class boundaries, which allows you to make subnets or subdivisions out of the major classful networks that were the only option before CIDR. CIDR is beyond the scope of the exam but it is worth knowing about. You can find more information about how CIDR works at http://searchnetworking.techtarget.com/definition/CIDR.

IP Classes

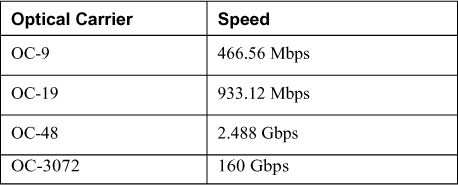

Classful subnetting (pre-CIDR) created five classes of networks. Each class represented a range of IP addresses. Table 3-2 shows the five classes. Only the first three (A, B, and C) are used for individual network devices. The other ranges are for special use.

Table 3-2. Classful Addressing

As you can see, the key value that changes as you move from one class to another is the value of the first octet (the one on the far left). What might not be immediately obvious is that as you move from one class to another, the dividing line between the host portion and network portion also changes. This is where the subnet mask value comes in. When the mask is overlaid on the IP address (thus we call it a mask), every octet in the subnet mask where there is a 255 is a network portion and every octet where there is a 0 is a host portion. Another item to mention is that each class has a distinctive pattern in the leading bits of the first octet. For example, ANY IP address whose first bit is 0 MUST be in Class A, and ANY address that begins with 10 MUST be in Class B. These patterns are also indicated in Table 3-2.

The significance of the network portion is that two devices must share the same values in the network portion to be in the same network. If they do not, they will not be able to communicate directly; traffic between them must be routed.
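The class rules and the same-network test can be sketched together. The leading-bit patterns and default masks used below are the standard classful values rather than a reproduction of Table 3-2, and the function names are hypothetical.

```python
# Standard default masks for the three classes used for individual devices.
DEFAULT_MASKS = {"A": "255.0.0.0", "B": "255.255.0.0", "C": "255.255.255.0"}


def address_class(address: str) -> str:
    """Determine the class from the leading bits of the first octet."""
    first = int(address.split(".")[0])
    if not 0 <= first <= 255:
        raise ValueError("first octet must be in the range 0-255")
    if first >> 7 == 0b0:
        return "A"
    if first >> 6 == 0b10:
        return "B"
    if first >> 5 == 0b110:
        return "C"
    if first >> 4 == 0b1110:
        return "D"
    return "E"


def same_classful_network(a: str, b: str) -> bool:
    """Return True if both addresses share the network portion of their class."""
    cls = address_class(a)
    if cls != address_class(b) or cls not in DEFAULT_MASKS:
        return False
    mask = [int(part) for part in DEFAULT_MASKS[cls].split(".")]

    def network(addr: str) -> list:
        return [int(part) & m for part, m in zip(addr.split("."), mask)]

    return network(a) == network(b)


if __name__ == "__main__":
    print(address_class("216.5.41.3"))
    print(same_classful_network("216.5.41.3", "216.5.41.77"))
    print(same_classful_network("216.5.41.3", "216.5.42.3"))
```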

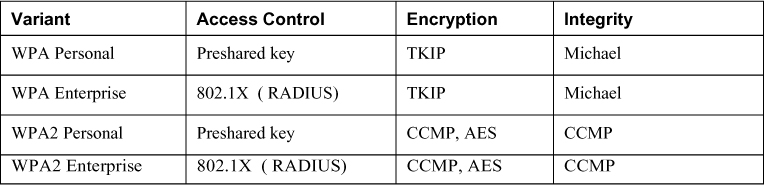

Public versus Private IP Addresses

The initial solution used (and still in use) to address the exhaustion of the IPv4 space involved the use of private addresses and Network Address Translation (NAT). Three ranges of IP addresses were set aside to be used ONLY within private networks and are NOT routable on the Internet. RFC 1918 set aside the IP address ranges in Table 3-3 to be used for this purpose. Because these addresses are not routable on the public network, they must be translated to public addresses before being sent to the Internet. This process, called Network Address Translation (NAT), is discussed in the next section.
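A quick way to test whether an address falls in one of the three RFC 1918 ranges is shown in the following sketch. It uses Python's standard ipaddress module and writes the ranges in CIDR notation (10.0.0.0/8, 172.16.0.0/12, and 192.168.0.0/16); the function name is_rfc1918 is hypothetical.

```python
import ipaddress

# The three private ranges set aside by RFC 1918, written in CIDR notation.
RFC1918_NETWORKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def is_rfc1918(address: str) -> bool:
    """Return True if the address lies in one of the RFC 1918 private ranges."""
    ip = ipaddress.ip_address(address)
    return any(ip in network for network in RFC1918_NETWORKS)


if __name__ == "__main__":
    for candidate in ("10.0.0.5", "172.32.0.1", "192.168.1.1", "216.5.41.3"):
        print(candidate, "private" if is_rfc1918(candidate) else "public")
```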

NAT

Network Address Translation (NAT) is a service that can be supplied by a router or by a server. The device that provides the service stands between the LAN and the Internet. When packets need to go to the Internet, the packets go through the NAT service first. The NAT service changes the private IP address to a public address that is routable on the Internet. When the response is returned from the Internet, the NAT service receives it, translates the address back to the original private IP address, and sends it back to the originator.

This translation can be done on a one-to-one basis (one private address to one public address), but to save IP addresses, usually the NAT service will represent the entire private network with a single public IP address. This process is called Port Address Translation (PAT). This name comes from the fact that the NAT service keeps the private clients separate from one another by recording their private address and the source port number (usually a unique number) selected when the packets were built.
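The bookkeeping that PAT performs can be sketched with a small translation table. This is an illustrative model only: the PatTable class is hypothetical, the public address comes from a range reserved for documentation, and the sketch assumes the service assigns a fresh public-side port to each private address and source port pair, which real implementations may handle differently.

```python
class PatTable:
    """Toy model of a PAT service that shares one public IP address."""

    def __init__(self, public_ip: str, first_port: int = 49152):
        self.public_ip = public_ip
        self._next_port = first_port
        self._out = {}   # (private_ip, private_port) -> public_port
        self._back = {}  # public_port -> (private_ip, private_port)

    def translate_out(self, private_ip: str, private_port: int) -> tuple:
        """Map an outgoing private socket to the shared public IP and a unique port."""
        key = (private_ip, private_port)
        if key not in self._out:
            self._out[key] = self._next_port
            self._back[self._next_port] = key
            self._next_port += 1
        return self.public_ip, self._out[key]

    def translate_in(self, public_port: int) -> tuple:
        """Map a returning packet's public port back to the original private socket."""
        return self._back[public_port]


if __name__ == "__main__":
    pat = PatTable("203.0.113.10")
    first = pat.translate_out("192.168.1.10", 50000)
    second = pat.translate_out("192.168.1.11", 50000)
    print(first, second)
    print(pat.translate_in(first[1]), pat.translate_in(second[1]))
```

Because the table is keyed on both the private address and the source port, two clients that happen to choose the same source port are still kept separate.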

Allowing NAT to represent an entire network (perhaps thousands of computers) with a single public address has been quite effective in saving public IP addresses. However, many applications do not function properly through NAT, and thus it has never been seen as a permanent solution to resolving the lack of IP addresses. That solution is IPv6.

IPv4 Versus IPv6

IPv6 was developed to more cleanly address the issue of the exhaustion of the IPv4 space. Although private addressing and the use of NAT have helped to delay the inevitable, the use of NAT introduces its own set of problems. The IPv6 system uses 128 bits so it creates such a large number of possible addresses that it is expected to suffice for many, many years.

The details of IPv6 are beyond the scope of the exam but these addresses look different than IPv4 addresses because they use a different format and use the hexadecimal number system, so there are letters and numbers in them such as you would see in a MAC address (discussed in the next section). There are eight fields separated by colons, not dots. Here is an example address:

2001:0000:4137:9e76:30ab:3035:b541:9693
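For readers who want to experiment, Python's standard ipaddress module can parse an address of this form. The short sketch below uses a well-formed eight-field address (four hexadecimal digits per field) and simply confirms the field count and the 128-bit size; the variable names are arbitrary.

```python
import ipaddress

addr = ipaddress.IPv6Address("2001:0000:4137:9e76:30ab:3035:b541:9693")

fields = addr.exploded.split(":")  # eight fields, four hex digits each
total_bits = addr.max_prefixlen    # size of an IPv6 address in bits
short_form = addr.compressed       # same address with leading zeros dropped
address_space = 2 ** total_bits    # number of possible IPv6 addresses
```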

Many of the security features that were add-ons to IPv4 (such as IPsec) have been built into IPv6, increasing its security. Moreover, while DHCP can be used with IPv6, IPv6 provides a host the ability to locate its local router, configure itself, and discover the IP addresses of its neighbors. Finally, broadcast traffic is completely eliminated in IPv6 and replaced by multicast communications.

MAC Addressing

All the discussion about addressing thus far has concerned addressing that is applied at layer 3, which is IP addressing. Physical addresses reside at layer 2. In Ethernet these are called Media Access Control (MAC) addresses. They are called physical addresses because these 48-bit addresses, expressed in hexadecimal, are permanently assigned to the network interfaces of devices. Here is an example of a MAC address:

01:23:45:67:89:ab

As a packet is transferred across a network, at every router hop and then again when it arrives at the destination network, the source and destination MAC addresses change. ARP resolves the next hop address to a MAC address using a process called the ARP broadcast. MAC addresses are unique. This comes from the fact that each manufacturer has a different set of values assigned to it at the beginning of the address called the Organizationally Unique Identifier (OUI). Each manufacturer ensures that it assigns no duplicate within its OUI. The OUI is the first three bytes of the MAC address.
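Because the OUI is simply the first three bytes, separating it from the manufacturer-assigned portion is straightforward. The helper below is a minimal sketch; the name split_mac is hypothetical, and it assumes the address is written as six two-digit groups separated by colons or hyphens.

```python
import string


def split_mac(mac):
    """Split a 48-bit MAC address into (OUI, manufacturer-assigned part)."""
    octets = mac.lower().replace("-", ":").split(":")
    valid = len(octets) == 6 and all(
        len(octet) == 2 and all(ch in string.hexdigits for ch in octet)
        for octet in octets
    )
    if not valid:
        raise ValueError("not a 48-bit MAC address: %r" % mac)
    # First three bytes identify the manufacturer; the rest identify the interface.
    return ":".join(octets[:3]), ":".join(octets[3:])
```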

Network Transmission

Data can be communicated across a variety of media types, using several possible processes. These communications can also have a number of characteristics that need to be understood. This section discusses some of the most common methods and their characteristics.

Analog versus Digital

Data can be represented in various ways on a medium. On a wired medium, the data can be transmitted in either analog or digital format. Analog represents the data as sound and is used in analog telephony. Analog signals differ from digital in that there are an infinite number of possible values. If we look at an analog signal on a graph, it looks like a wave going up and down. Figure 3-8 shows an analog waveform compared to a digital one.

Figure 3-8. Digital and Analog Signals

Digital signaling, on the other hand, which is the type used in most computer transmissions, does not have an infinite number of possible values, but only two: on and off. A digital signal shown on a graph exhibits a square-wave pattern as shown in Figure 3-8. Digital signals are usually preferable to analog because they are more reliable and less susceptible to noise on the line. Transporting more information on the same line at a higher quality over a longer distance than with analog is also possible.

Asynchronous versus Synchronous

When two systems are communicating, they not only need to represent the data in the same format (analog/digital) but they must also use the same synchronization technique. This process tells the receiver when a specific communication begins and ends so two-way conversations can happen without talking over one another. The two types of techniques are asynchronous transmission and synchronous transmission.

With asynchronous transmissions, the systems use start and stop bits to communicate when each byte is starting and stopping. This method also uses parity bits for the purpose of ensuring that each byte has not changed or been corrupted en route. This introduces additional overhead to the transmission.
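The overhead is easy to see when a single byte is framed. The sketch below assumes one common convention (a start bit of 0, eight data bits sent least significant bit first, one even-parity bit, and a stop bit of 1); real links can be configured differently, and the function names are invented for this example.

```python
def frame_byte(value):
    """Frame one byte as: start bit, 8 data bits (LSB first), parity bit, stop bit."""
    if not 0 <= value <= 0xFF:
        raise ValueError("value must fit in one byte")
    data_bits = [(value >> i) & 1 for i in range(8)]
    parity = sum(data_bits) % 2  # even parity: total number of 1 bits becomes even
    return [0] + data_bits + [parity] + [1]


def parity_ok(frame):
    """Check the even-parity bit of a frame produced by frame_byte."""
    data_bits, parity = frame[1:9], frame[9]
    return (sum(data_bits) + parity) % 2 == 0
```

Under these assumptions, 11 bits travel on the wire for every 8 bits of data, and a single flipped data bit causes the parity check to fail.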

Synchronous transmission uses a clocking mechanism to synchronize the sender and receiver. Data is transferred in a stream of bits with no start, stop, or parity bits. This clocking mechanism is embedded into the layer 2 protocol. It uses a different form of error checking (cyclic redundancy check or CRC) and is preferable for high-speed, high-volume transmissions. Figure 3-9 shows a visual comparison of the two techniques.
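To give a feel for CRC-based error checking, the sketch below uses the CRC-32 routine in Python's standard zlib module. It is only an illustration of the idea (the sender computes a checksum over the data and the receiver recomputes it), not a model of any specific layer 2 protocol; the payload and the helper name crc_matches are made up.

```python
import zlib

payload = b"synchronous frame payload"
sent_crc = zlib.crc32(payload)  # checksum the sender would attach to the data

corrupted = bytearray(payload)
corrupted[0] ^= 0x01            # simulate one bit flipped in transit


def crc_matches(data, expected_crc):
    """Recompute the CRC on the receiving side and compare."""
    return zlib.crc32(bytes(data)) == expected_crc
```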

Figure 3-9. Asynchronous versus Synchronous

Broadband versus Baseband

All data transfers use a communication channel. Multiple transmissions might need to use the same channel. Sharing this medium can be done in two different ways: broadband or baseband. The difference is in how the medium is shared.

In baseband, the entire medium is used for a single transmission, and then multiple transmission types are assigned time slots to use this single circuit. This is called Time Division Multiplexing (TDM). Multiplexing is the process of using the same medium for multiple transmissions. The transmissions take turns rather than sending at the same time.
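The turn-taking in TDM can be sketched as a simple round-robin interleave. This is a toy model that assumes fixed time slots, one unit of data per slot, and an idle marker for a source with nothing to send; the function names are hypothetical.

```python
from itertools import zip_longest


def tdm_interleave(streams, idle="-"):
    """Give each stream one time slot in turn; idle slots carry the idle marker."""
    line = []
    for slot_group in zip_longest(*streams, fillvalue=idle):
        line.extend(slot_group)
    return line


def tdm_demultiplex(line, channel, channel_count, idle="-"):
    """Recover one channel by reading every channel_count-th slot."""
    return [unit for unit in line[channel::channel_count] if unit != idle]
```

For example, interleaving the streams "AAA", "BB", and "CCC" places one unit from each source on the line per round, and the receiver recovers a channel by reading only that channel's slots.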

Broadband, on the other hand, divides the medium in different frequencies, a process called Frequency Division Multiplexing (FDM). This has the benefit of allowing true simultaneous use of the medium.

An example of broadband transmission is DSL, where the phone signals are sent at one frequency and the computer data at another. This is why you can talk on the phone and use the Web at the same time. Figure 3-10 illustrates these two processes.

Figure 3-10. Broadband versus Baseband

Unicast, Multicast, and Broadcast

When systems are communicating in a network they might send out three types of transmissions. These methods differ in the scope of their reception as follows:

• Unicast: Transmission from a single system to another single system. It is considered one-to-one.

• Multicast: A signal is received by all others in a group called a multicast group. It is considered one-to-many.

• Broadcast: A transmission sent by a single system to all systems in the network. It is considered one-to-all.

Figure 3-11 illustrates the three methods.

Figure 3-11. Unicast, Multicast, and Broadcast
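For IPv4, the three scopes can be told apart from the destination address alone. The following sketch relies on Python's standard ipaddress module; the function name classify_destination is invented, and the caller is assumed to know the local network's prefix.

```python
import ipaddress

LIMITED_BROADCAST = ipaddress.IPv4Address("255.255.255.255")


def classify_destination(dest, network):
    """Classify an IPv4 destination as unicast, multicast, or broadcast."""
    ip = ipaddress.IPv4Address(dest)
    net = ipaddress.IPv4Network(network)
    if ip.is_multicast:  # addresses in 224.0.0.0/4
        return "multicast"
    if ip == net.broadcast_address or ip == LIMITED_BROADCAST:
        return "broadcast"
    return "unicast"
```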

Wired versus Wireless

As you probably know by now, not all transmissions occur over a wired connection. Even within the category of wired connections, the way in which the ones and zeros are represented can be done in different ways. In a copper wire, the ones and zeros are represented with changes in the voltage of the signal, whereas in a fiberoptic cable, they are represented with manipulation of a light source (lasers or LEDs).

In wireless transmission, radio waves or light waves are manipulated to represent the ones and zeros. When infrared technology is used, this is done with infrared light. With WLANs, radio waves are manipulated to represent the ones and zeros. These differences in how the bits are represented occur at the physical and data link layers of the OSI model. When a packet goes from a wireless section of the network to a wired section, these two layers are the only layers that change.

When a different physical medium is used, typically a different layer 2 protocol is called for. For example, while the data is traveling over the wired Ethernet network, the 802.3 standard is used. However, when the data gets to a wireless section of the network, it needs a different layer 2 protocol. Depending on the technology in use, it could be either 802.11 (WLAN) or 802.16 (WiMAX).

The ability of the packet to traverse various media types is just another indication of the independence of the OSI layers because the information in layers 3–7 remains unchanged regardless of how many layer 2 transitions must be made to get the data to its final destination.

Cabling

Cabling resides at the physical layer of the OSI model and simply provides a medium on which data can be transferred. The vast majority of data is transferred across cables of various types, including coaxial, fiberoptic, and twisted pair. Some of these cables represent the data in terms of electrical voltages whereas fiber cables manipulate light to represent the data. This section discusses each type.

You can compare cables to one another using several criteria. One of the criteria that is important with networking is the cable's susceptibility to attenuation. Attenuation occurs when the signal meets resistance as it travels through the cable. This weakens the signal, and at some point (different in each cable type) the signal is no longer strong enough to be read properly at the destination. For this reason all cables have a maximum length. This is true regardless of whether the cable is fiberoptic or electrical.

Another important point of comparison between cable types is their data rate, which describes how much data can be sent through the cable per second. This area has seen great improvement over the years, going from rates of 10 Mbps in a LAN to 1000 Mbps in today’s networks (and even higher rates in data centers).

Another consideration when selecting a cable type is the ease of installation. Some cable types are easier than others to install, and fiberoptic cabling requires a special skill set to install, raising its price of installation.

Finally (and most importantly for our discussion), there is the security of the cable. Cables can leak or radiate information. Cables can also be tapped into by hackers if they have physical access to them. Just as the cable types can vary in allowable length and capacity, they can also vary in their susceptibility to these types of data losses.

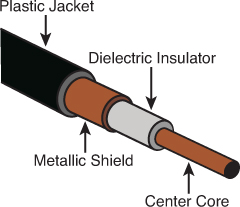

Coaxial

One of the earliest cable types to be used for networking was coaxial, the same basic type of cable that brought cable TV to millions of homes. Although coaxial cabling is still used, due to its low capacity and the adoption of other cable types, its use is almost obsolete now in LANs.

Coaxial cabling comes in two types or thicknesses. The thicker type, called Thicknet, has an official name of 10Base5. This naming system, used for other cable types as well, imparts several facts about the cable. In the case of 10Base5 it means that it is capable of transferring 10 Mbps and can go roughly 500 meters. Thicknet uses two types of connectors: a vampire tap (so named because it has a spike that pierces the cable) and N-connectors.

Thinnet or 10Base2 also operates at 10 Mbps. Although when it was named it was anticipated to be capable of running 200 meters, this was later reduced to 185 meters. Both types are used in a bus topology (more on topologies in the section “Network Topologies” later in this chapter). Thinnet uses two types of connectors: BNC connectors and T-connectors.

Coaxial has an outer cylindrical covering that surrounds either a solid core wire (Thicknet) or a braided core (Thinnet). This type of cabling has been replaced over time with more capable twisted-pair and fiberoptic cabling. Coaxial cabling can be tapped, so physical access to this cabling should be restricted or prevented if possible. It should be out of sight if it is used. Figure 3-12 shows the structure of a coaxial cable.

Another security problem with coax in a bus topology is that it is broadcast based, which means a sniffer attached anywhere in the network can capture all traffic. In switched networks (more on that topic later in this chapter in the section “Network Devices”), this is not a consideration.

Twisted Pair

The most common type of network cabling found today is called twisted-pair cabling. It is called this because inside the cable are four pairs of smaller wires that are braided or twisted. This twisting is designed to eliminate a phenomenon called crosstalk, which occurs when wires that are inside a cable interfere with one another. The number of wire pairs that are used depends on the implementation. In some implementations, only two pairs are used, and in others all four wire pairs are used. Figure 3-13 shows the structure of a twisted-pair cable.

Figure 3-13. Twisted-Pair Cabling

Twisted-pair cabling comes in shielded (STP) and unshielded (UTP) versions. The shielding provides protection from Radio Frequency Interference (RFI) and Electromagnetic Interference (EMI). RFI is interference from radio sources in the area, whereas EMI is interference from power lines. A common type of EMI is called common mode noise, which is interference that appears on both signal leads (signal and circuit return) or the terminals of a measuring circuit, and ground. If neither EMI nor RFI is a problem, nothing is gained by using STP, and it costs more.

The same naming system used with coaxial and fiber is used with twisted pair. The following are the major types of twisted pair you will encounter:

• 10BaseT: Operates at 10 Mbps

• 100BaseT: Also called Fast Ethernet; operates at 100 Mbps

• 1000BaseT: Also called Gigabit Ethernet; operates at 1000 Mbps

• 10GBaseT: Operates at 10 Gbps

Twisted-pair cabling comes in various capabilities and is rated in categories. Table 3-4 lists the major types and their characteristics. Regardless of the category, twisted-pair cabling can be run about 100 meters before attenuation degrades the signal.

Table 3-4. Twisted-Pair Categories

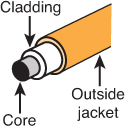

Fiberoptic

Fiberoptic cabling uses a source of light that shoots down an inner glass or plastic core of the cable. This core is covered by cladding that causes light to be confined to the core of the fiber. Figure 3-14 shows the structure of a fiberoptic cable.

Figure 3-14. Fiberoptic Cabling

Fiberoptic cabling manipulates light such that it can be interpreted as ones and zeros. Because it is not electrically based, it is totally impervious to EMI, RFI, and crosstalk. Moreover, although not impossible, tapping or eavesdropping on a fiber cable is much more difficult. In most cases, attempting to tap into it results in a failure of the cable, which then becomes quite apparent to all.

Fiber comes in single-mode and multi-mode formats. Single mode uses a single beam of light provided by a laser, goes the farther of the two, and is the more expensive. Multi-mode uses several beams of light at the same time, uses LEDs, will not go as far, and is less expensive. Either type goes much farther than electrical cabling in a single run and also typically provides more capacity. Fiber cabling has its drawbacks, however. It is the most expensive to purchase and the most expensive to install. Table 3-5 shows some selected fiber specifications and their theoretical maximum distances.

Table 3-5. Selected Fiber Specifications

Network Topologies

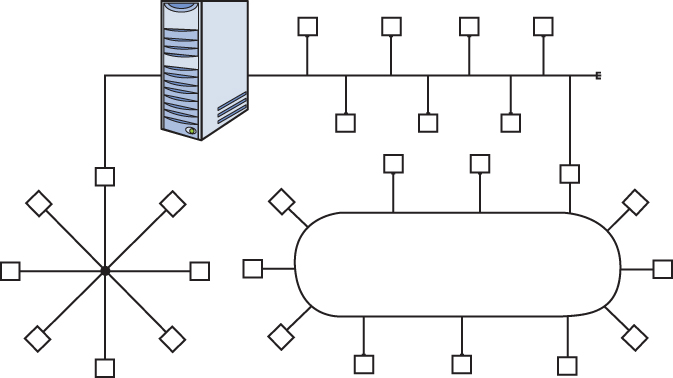

Networks can be described by their logical topology (the data path used) and by their physical topology (the way in which devices are connected to one another). In most cases the logical topology and the physical topology will be the same, but not in all. This section discusses both logical and physical network topologies.

Ring

A physical ring topology is one in which the devices are daisy-chained one to another in a circle or ring. If the network is also a logical ring, the data circles the ring from one device to another. Two technologies use this topology, Fiber Distributed Data Interface (FDDI) and Token Ring. Both of these technologies are discussed in detail in the section, “Network Technologies.” Figure 3-15 shows a typical ring topology.

One of the drawbacks of the ring topology is that if a break occurs in the line, all systems will be affected as the ring will be broken. As you will see in the section “Network Technologies,” an FDDI network addresses this issue with a double ring for fault tolerance.

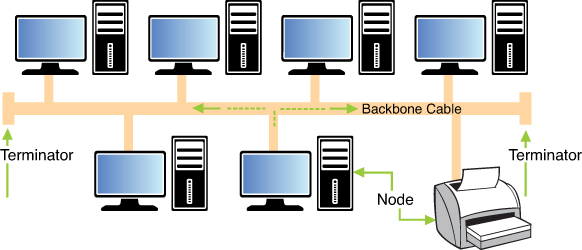

Bus

The bus topology was the earliest Ethernet topology used. In this topology all devices are connected to a single line that has two definitive endpoints. The network does NOT loop back and form a ring. This topology is broadcast based, which can be a security issue in that a sniffer or protocol analyzer connected at any point in the network will be capable of capturing all traffic. From a fault tolerance standpoint, the bus topology suffers the same danger as a ring. If a break occurs anywhere in the line all devices are affected. Moreover, a requirement specific to this topology is that each end of the bus must be terminated. This prevents signals from “bouncing” back on the line causing collisions. (More on collisions later, but collisions require the collided packets to be sent again, lowering overall throughput.) If this termination is not done properly, the network will not function correctly. Figure 3-16 shows a bus topology.

Star

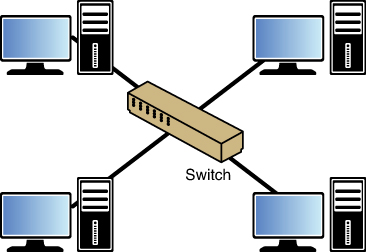

The star topology is the most common in use today. In this topology all devices are connected to a central device (either a hub or a switch). One of the advantages of this topology is that if a connection to any single device breaks, ONLY that device is affected and no others. The downside of this topology is that a single point of failure (the hub or switch) exists. If the hub or switch fails all devices are affected. Figure 3-17 shows a star topology.

Mesh

Although the mesh topology is the most fault tolerant of any discussed thus far, it is also the most expensive to deploy. In this topology all devices are connected to all other devices. This provides complete fault tolerance but also requires multiple interfaces and cables on each device. For that reason it is deployed only in rare circumstances where such an expense is warranted. Figure 3-18 shows a mesh topology.
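The expense becomes clear when you count the connections. In a full mesh of n devices, each device needs n - 1 interfaces and the network needs n(n - 1)/2 cables. The short sketch below works this arithmetic out; the function names are chosen only for illustration.

```python
def full_mesh_links(devices):
    """Number of point-to-point links needed to fully mesh the given devices."""
    if devices < 0:
        raise ValueError("device count cannot be negative")
    return devices * (devices - 1) // 2


def interfaces_per_device(devices):
    """Each device needs one interface for every other device."""
    return max(devices - 1, 0)
```

Four devices need only 6 links, but ten devices already need 45 links and nine interfaces apiece.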

Hybrid

In many cases an organization’s network is a combination of these network topologies, or a hybrid network. For example, one section might be a star that connects to a bus network or a ring network. Figure 3-19 shows an example of a hybrid network.

Network Technologies

Just as a network can be connected in various topologies, different technologies have been implemented over the years that run over those topologies. These technologies operate at layer 2 of the OSI model, and their details of operation are specified in various standards by the Institute of Electrical and Electronics Engineers (IEEE). Some of these technologies are designed for Local Area Network (LAN) applications whereas others are meant to be used in a Wide Area Network (WAN). In this section we’ll look at the main LAN technologies and some of the processes that these technologies use to arbitrate access to the network.

Ethernet 802.3

The IEEE specified the details of Ethernet in the 802.3 standard. Prior to this standardization Ethernet existed in several earlier forms, the most common of which was called Ethernet II or DIX Ethernet (DIX stands for the three companies that collaborated on its creation: DEC, Intel, and Xerox).

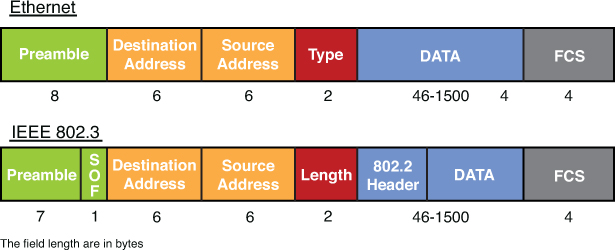

In the section on the OSI model you learned that the protocol data unit (PDU) created at layer 2 is called a frame. Because Ethernet is a layer 2 protocol we refer to the individual Ethernet packets as frames. There are small differences in the frame structures of Ethernet II and 802.3, although they are compatible in the same network. Figure 3-20 shows a comparison of the two frames. The significant difference is that during the IEEE standardization process, the EtherType field was changed to a (data) length field in the new 802.3 standard. For purposes of identifying the data type another field called the 802.2 header was inserted to contain that information.
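One reason the two frame types can coexist is that a receiver can tell them apart from the value in that two-byte field. The sketch below relies on a convention not spelled out in this chapter: values of 1500 or less are treated as a length, and values of 0x0600 (1536) or more are treated as an EtherType. It also assumes the byte string starts at the destination MAC address with no preamble; the function name is invented.

```python
import struct


def classify_frame(frame):
    """Guess the frame format from the 2-byte field after the two MAC addresses."""
    if len(frame) < 14:
        raise ValueError("frame too short to contain an Ethernet header")
    # Bytes 0-5: destination MAC, bytes 6-11: source MAC, bytes 12-13: type/length.
    (type_or_length,) = struct.unpack("!H", frame[12:14])
    if type_or_length >= 0x0600:
        return "Ethernet II"
    if type_or_length <= 1500:
        return "802.3"
    return "undefined"
```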

Figure 3-20. Ethernet II and 802.3

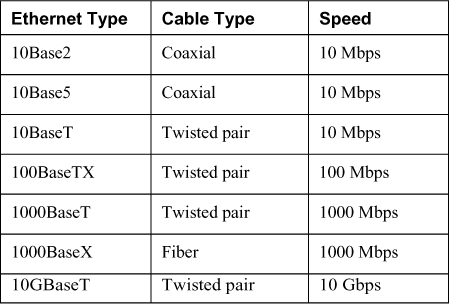

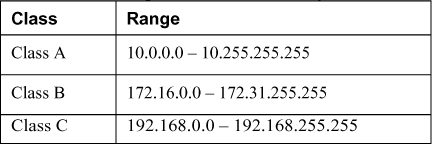

Ethernet has been implemented on coaxial, fiber, and twisted-pair wiring. Table 3-6 lists some of the more common Ethernet implementations.

Table 3-6. Ethernet Implementations

Note

Despite the fact that 1000BaseT and 1000BaseX are faster, 100BaseTX is called Fast Ethernet! Also, both 1000BaseT and 1000BaseX are usually referred to as Gigabit Ethernet.

Ethernet calls for devices to share the medium on a frame-by-frame basis. It arbitrates access to the media using a process called Carrier Sense Multiple Access with Collision Detection (CSMA/CD). This process is discussed in detail in the section “CSMA/CD versus CSMA/CA” where the process will be contrasted with the method used in 802.11 wireless networks.

Token Ring 802.5

Ethernet is the most common layer 2 protocol, but it has not always been that way. An example of a proprietary layer 2 protocol that enjoyed some small success is IBM Token Ring. This protocol operates using specific IBM connective devices and cables, and the nodes must have Token Ring network cards installed. It can operate at 16 Mbps, which at the time of its release was impressive, but the proprietary nature of the equipment and the soon-to-be faster Ethernet caused Token Ring to fall from favor.

As mentioned earlier, in most cases the physical network topology is the same as the logical topology. Token Ring is the exception to that general rule. It is logically a ring and physically a star. It is a star in that all devices are connected to a central device called a Multistation Access Unit (MAU), but the ring is formed inside the MAU. When you investigate the flow of the data, it goes from one device to another in a ring design by entering and exiting each port of the MAU, as shown in Figure 3-21.

FDDI

Another layer 2 protocol that uses a ring topology is Fiber Distributed Data Interface (FDDI). Unlike Token Ring it is both a physical and a logical ring. It is actually a double ring, each going in a different direction to provide fault tolerance. It also is implemented with fiber cabling. In many cases it is used for a network backbone and is then connected to other network types, such as Ethernet, forming a hybrid network. It is also used in Metropolitan Area Networks (MANs) because it can be deployed up to 100 kilometers.

Figure 3-22 shows an example of an FDDI ring.

Contention Methods

Regardless of the layer 2 protocol in use, there must be some method used to arbitrate the use of the shared media. Four basic processes have been employed to act as the traffic cop, so to speak:

• CSMA/CD

• CSMA/CA

• Token passing

• Polling

This section compares and contrasts each and provides examples of technologies that use each.

CSMA/CD Versus CSMA/CA

To appreciate CSMA/CD and CSMA/CA, you must understand the concept of collisions and collision domains in a shared network medium. Collisions occur when two devices send a frame at the same time causing the frames and their underlying electrical signals to collide on the wire. When this occurs both signals and the frames they represent are destroyed or at the very least corrupted such that they are discarded when they reach the destination. Frame corruption or disposal causes both devices to resend the frames, resulting in a drop in overall throughput.

Collision Domains

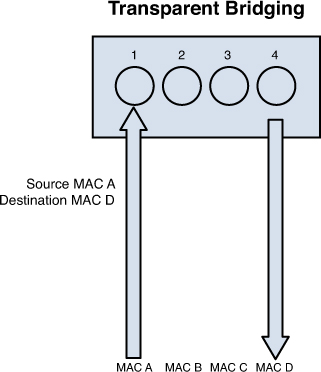

A collision domain is any segment of the network where the possibility exists for two or more devices’ signals to collide. In a bus topology, that would constitute the entire network because the entire bus is a shared medium. In a star topology, the scope of the collision domain or domains depends on the central connecting device. Central connecting devices include hubs and switches. Hubs and switches are discussed more fully in the section “Network Devices” but their differences with respect to collision domains need to be discussed here.

A hub is an unintelligent junction box into which all devices plug. All the ports in the hub are in the same collision domain because when a hub receives a frame the hub broadcasts the frame out all ports. So logically the network is still a bus.

A star topology with a switch in the center does not operate this way. A switch has the intelligence to record the MAC address of each device on every port. After all the devices’ MAC addresses are recorded, the switch sends a frame ONLY to the port on which the destination device resides. Because each device’s traffic is then segregated from any other device’s traffic, each device is considered to be in its own collision domain.
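The learning behavior can be sketched in a few lines. This toy model goes slightly beyond the description above by assuming what a switch does before it has learned a destination (it floods the frame out every other port); the class name LearningSwitch and the string MAC labels are invented for illustration.

```python
class LearningSwitch:
    """Toy model of a switch that learns which MAC address is on which port."""

    def __init__(self, port_count):
        self.ports = list(range(port_count))
        self.mac_table = {}  # MAC address -> port number

    def receive(self, in_port, src_mac, dst_mac):
        """Process one frame and return the list of ports it is sent out of."""
        self.mac_table[src_mac] = in_port  # record where the sender resides
        out_port = self.mac_table.get(dst_mac)
        if out_port is None:
            # Assumption: an unknown destination is flooded to all other ports.
            return [p for p in self.ports if p != in_port]
        if out_port == in_port:
            return []  # destination is on the arrival port; nothing to forward
        return [out_port]
```

Once both stations have sent at least one frame, traffic between them leaves the switch on a single port only, which is the segregation described above.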

This segregation provided by switches has both performance and security benefits. From a performance perspective, it greatly reduces the number of collisions, thereby significantly increasing overall throughput in the network. From a security standpoint, it means that a sniffer connected to a port in the switch will ONLY capture traffic destined for that port, not all traffic. Compare this security to a hub-centric network. When a hub is in the center of a star network, a sniffer will capture all traffic regardless of the port to which it is connected because all ports are in the same collision domain.

In Figure 3-23, a switch has several devices and a hub connected to it with each collision domain marked to show how the two devices create collision domains. Note that each port on the switch is a collision domain whereas the entire hub is a single collision domain.

Figure 3-23. Collision Domains

CSMA/CD

In 802.3 networks a mechanism called Carrier Sense Multiple Access with Collision Detection (CSMA/CD) is used when a shared medium is in use to recover from inevitable collisions. This process is a step-by-step mechanism that each station follows every time it needs to send a single frame. The steps to the process are as follows:

1. When a device needs to transmit, it checks the wire for existing traffic. This process is called carrier sense.

2. If the wire is clear, the device transmits and continues to perform carrier sense.

3. If a collision is detected, both devices issue a jam signal to all the other devices, which indicates to them to NOT transmit. Then both devices increment a retransmission counter. This is a cumulative total of the number of times this frame has been transmitted and a collision occurred. There is a maximum number at which it aborts the transmission of the frame.

4. Both devices calculate a random amount of time (called a random back off) and wait that amount of time before transmitting again.

5. In most cases because both devices choose random amounts of time to wait, another collision will not occur. If it does, the procedure repeats.
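The retransmission counter and random back off in steps 3 and 4 can be sketched as follows. The specifics here go beyond what the steps state and are assumptions taken from the classic 802.3 scheme (truncated binary exponential back off): after the nth collision a station waits a random number of slot times between 0 and 2^min(n, 10) - 1, and the frame is abandoned at the 16th attempt. The function name is invented.

```python
import random

MAX_ATTEMPTS = 16  # assumed limit at which the frame is abandoned
BACKOFF_CAP = 10   # assumed cap on the exponent used for the random range


def backoff_slots(collisions, rng=random):
    """Return a random wait, in slot times, after the given number of collisions."""
    if collisions < 1:
        raise ValueError("back off applies only after at least one collision")
    if collisions >= MAX_ATTEMPTS:
        raise RuntimeError("too many collisions; transmission aborted")
    exponent = min(collisions, BACKOFF_CAP)
    return rng.randrange(2 ** exponent)  # 0 .. 2**exponent - 1
```

Because the range doubles with each successive collision, two stations that keep colliding become steadily less likely to pick the same wait.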

CSMA/CA

In 802.11 wireless networks CSMA/CD cannot be used as an arbitration method because unlike when using bounded media, the devices cannot detect a collision. The method used is called Carrier Sense Multiple Access with Collision Avoidance (CSMA/CA). It is a much more laborious process because each station must acknowledge each frame that is transmitted.

The “Wireless Networks” section covers 802.11 network operations in more detail, but for the purposes of understanding CSMA/CA we must at least lay some groundwork. The typical wireless network contains an access point (AP) and one or more wireless stations. In this type of network (called an Infrastructure Mode wireless network), traffic never traverses directly between stations but is always relayed through the AP. The steps in CSMA/CA are as follows:

1. Station A has a frame to send to Station B. It checks for traffic in two ways. First it performs carrier sense, which means it listens to see whether any radio waves are being received on its transmitter. Secondly, after the transmission is sent it will continue to monitor the network for possible collisions.

2. If traffic is being transmitted, Station A decrements an internal countdown mechanism called the random back-off algorithm. This counter will have started counting down after the last time this station was allowed to transmit. All stations will be counting down their own individual timers. When a station’s timer expires, it is allowed to send.

3. If Station A performs carrier sense, there is no traffic, and its timer hits zero, it sends the frame.

4. The frame goes to the AP.

5. The AP sends an acknowledgment back to Station A. Until that acknowledgment is received by Station A, all other stations must remain silent. For each frame that the AP needs to relay, it must wait its turn to send using the same mechanism as the stations.

6. When its turn comes up in the cache queue, the frame from Station A is relayed to Station B.

7. Station B sends an acknowledgment back to the AP. Until that acknowledgment is received by the AP, all other stations must remain silent.

As you can see, these processes create a lot of overhead but are required to prevent collisions in a wireless network.
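The countdown timers in step 2 can be pictured with a very loose model. The sketch below assumes each station holds a counter of idle slots remaining and that the station whose counter reaches zero first is allowed to send; it ignores acknowledgments and many other details of real 802.11 operation, and the function name next_sender is hypothetical.

```python
def next_sender(backoff):
    """Given {station: idle slots remaining}, return (winners, updated counters).

    All counters tick down together; whichever reaches zero first may send.
    More than one winner means those stations would transmit at the same time.
    """
    wait = min(backoff.values())
    remaining = {station: count - wait for station, count in backoff.items()}
    winners = sorted(s for s, count in remaining.items() if count == 0)
    return winners, remaining
```

In this simplified model the stations that did not win keep their partially counted-down timers, so a station that has waited longest tends to get the next turn.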

Token Passing

Both FDDI and Token Ring networks use a process called token passing. In this process a special packet called a token is passed around the network. A station cannot send until the token comes around and is empty. Using this process, NO collisions occur because two devices are never allowed to send at the same time. The problem with this process is that the possibility exists for a single device to gain control of the token and monopolize the network.
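A single trip of the token around the ring can be sketched as follows. This toy model assumes a station may send only one frame each time it holds the token, which is one way to keep any single device from monopolizing the network; the function name and the station labels are invented.

```python
from collections import deque


def token_ring_round(stations, pending):
    """Pass the token once around the ring; each holder may send one frame."""
    sent = []
    for station in stations:  # the token visits stations in ring order
        queue = pending.get(station)
        if queue:
            sent.append((station, queue.popleft()))
    return sent
```

Because only the current token holder transmits, no two frames are ever placed on the ring at the same moment.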

Polling

The final contention method to discuss is polling. In this system a primary device polls each other device to see whether it needs to transmit. In this way each device gets a transmit opportunity. This method is common in the mainframe environment.

Network Protocols/Services

Many protocols and services have been developed over the years to add functionality to networks. In many cases these protocols reside at the Application layer of the OSI model. These Application layer protocols usually perform a specific function and rely on the lower layer protocols in the TCP/IP suite and protocols at layer 2 (like Ethernet) to perform routing and delivery services.

This section covers some of the most important of these protocols and services, including some that do NOT operate at the Application layer, focusing on the function and port number of each. Port numbers are important to be aware of from a security standpoint because in many cases port numbers are referenced when configuring firewall rules. In cases where a port or protocol number is relevant, it will be given as well.

ARP

Address Resolution Protocol (ARP), one of the protocols in the TCP/IP suite, operates at layer 3 of the OSI model. The information it derives is utilized at layer 2, however. ARP’s job is to resolve the destination IP address placed in the header by IP to a layer 2 or MAC address. Remember, when frames are transmitted on a local segment the transfer is done in terms of MAC addresses not IP addresses, so this information must be known.

Whenever a packet is sent across the network, at every router hop and again at the destination subnet, the source and destination MAC address pairs change but the source and destination IP addresses do not. The process that ARP uses to perform this resolution is called an ARP broadcast.

First an area of memory called the ARP cache is consulted. If the MAC address has been recently resolved, the mapping will be in the cache and a broadcast is not required. If the record has aged out of the cache, ARP sends a broadcast frame to the local network that all devices will receive. The device that possesses the IP address responds with its MAC address. Then ARP places the MAC address in the frame and sends the frame. Figure 3-24 illustrates this process.
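The cache-then-broadcast logic can be sketched as follows. This is a simplified model, not an implementation of ARP itself: the class and function names are invented, the 60-second aging time is an arbitrary assumption, and the broadcast is represented by a callable supplied by the caller.

```python
import time


class ArpCache:
    """Toy ARP cache whose entries age out after a fixed time."""

    def __init__(self, ttl_seconds=60.0, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._entries = {}  # IP address -> (MAC address, time learned)

    def lookup(self, ip):
        entry = self._entries.get(ip)
        if entry is None:
            return None
        mac, learned = entry
        if self.clock() - learned > self.ttl:
            del self._entries[ip]  # record has aged out of the cache
            return None
        return mac

    def learn(self, ip, mac):
        self._entries[ip] = (mac, self.clock())


def resolve(ip, cache, broadcast):
    """Return the MAC for ip, using the cache first and broadcasting on a miss."""
    mac = cache.lookup(ip)
    if mac is None:
        mac = broadcast(ip)  # stand-in for the ARP broadcast and its reply
        if mac is not None:
            cache.learn(ip, mac)
    return mac
```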

DHCP

Dynamic Host Configuration Protocol (DHCP) is a service that can be used to automate the process of assigning an IP configuration to the devices in the network. Manual configuration of an IP address, subnet mask, default gateway, and DNS server is not only time consuming but fraught with opportunity for human error. Using DHCP can not only automate this, but can also eliminate network problems from this human error.

DHCP is a client/server program. All modern operating systems contain a DHCP client, and the server component can be implemented either on a server or on a router. When a computer that is configured to be a DHCP client starts, it performs a precise four-step process to obtain its configuration. Conceptually, the client broadcasts for the IP address of the DHCP server. All devices receive this broadcast, but only DHCP servers respond. The device accepts the configuration offered by the first DHCP server from which it hears. The process uses four packets with distinctive names: DHCPDISCOVER, DHCPOFFER, DHCPREQUEST, and DHCPACK (see Figure 3-25). DHCP uses UDP ports 67 and 68. Clients send their messages to port 67 on the server, and servers send their messages to port 68 on the client.
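The four-step exchange can be modeled as a small simulation. The sketch below assumes the standard message names (DHCPDISCOVER, DHCPOFFER, DHCPREQUEST, DHCPACK); the `DhcpServer` class, the `obtain_lease` function, and the address pool are hypothetical, and no real network traffic is generated.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

SERVER_PORT = 67  # UDP port on which servers receive client messages
CLIENT_PORT = 68  # UDP port on which clients receive server messages


@dataclass
class Lease:
    ip: str
    subnet_mask: str
    gateway: str
    dns_server: str


class DhcpServer:
    """A toy DHCP server that hands out addresses from a pool."""

    def __init__(self, name: str, pool: List[str], subnet_mask: str,
                 gateway: str, dns_server: str) -> None:
        self.name = name
        self.pool = pool
        self.subnet_mask = subnet_mask
        self.gateway = gateway
        self.dns_server = dns_server

    def offer(self) -> Optional[Lease]:
        # Offer the next free address without committing it yet.
        if not self.pool:
            return None
        return Lease(self.pool[0], self.subnet_mask, self.gateway, self.dns_server)

    def acknowledge(self, lease: Lease) -> bool:
        # Commit the address only when the client formally requests it.
        if lease.ip in self.pool:
            self.pool.remove(lease.ip)
            return True
        return False


def obtain_lease(servers: List[DhcpServer]) -> Tuple[Optional[Lease], List[str]]:
    """Walk through the four messages and return the lease plus a message log."""
    log = ["DHCPDISCOVER"]  # client broadcast; only DHCP servers answer
    offers = [(server, server.offer()) for server in servers]
    offers = [(server, lease) for server, lease in offers if lease is not None]
    if not offers:
        return None, log
    server, lease = offers[0]  # the client accepts the first offer it hears
    log.append("DHCPOFFER")
    log.append("DHCPREQUEST")  # client asks for the offered configuration
    if server.acknowledge(lease):
        log.append("DHCPACK")  # server confirms the lease
        return lease, log
    return None, log
```

Calling `obtain_lease` with two servers returns the first server's offer and a log of the four message names in order; the second server's pool is left untouched.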

DNS

Just as DHCP relieves us from having to manually configure the IP configuration of each system, Domain Name System (DNS) relieves all humans from having to know the IP address of every computer with which they want to communicate. Ultimately, an IP address must be known to connect to another computer. DNS resolves a computer name (or in the case of the web a domain name) to an IP address.

DNS is another client/server program with the client included in all modern operating systems. The server part resides on a series of DNS servers located both in the local network and on the Internet. When a DNS client needs to know the IP address that goes with a particular computer name or domain name, it queries the local DNS server. If the local DNS server does not have the resolution, it contacts other DNS servers on the client’s behalf, learns the IP address, and relays that information to the DNS client. DNS uses UDP port 53 and TCP port 53. The DNS servers use TCP port 53 to exchange information, and the DNS clients use UDP port 53 for queries.
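The lookup flow (ask the local server, which asks other servers on the client's behalf and relays the answer) can be illustrated with a small in-memory model. The class names, the record data, and the `queries` counter below are hypothetical; the addresses come from the range reserved for documentation, and no real DNS traffic is sent.

```python
from typing import Dict, List, Optional


class UpstreamDnsServer:
    """Stands in for another DNS server that holds some records."""

    def __init__(self, records: Dict[str, str]) -> None:
        self.records = records
        self.queries = 0

    def lookup(self, name: str) -> Optional[str]:
        self.queries += 1
        return self.records.get(name)


class LocalDnsServer:
    """Answers from its own cache, otherwise asks upstream on the client's behalf."""

    def __init__(self, upstream: List[UpstreamDnsServer]) -> None:
        self.upstream = upstream
        self.cache: Dict[str, str] = {}

    def resolve(self, name: str) -> Optional[str]:
        name = name.lower().rstrip(".")  # DNS names are case-insensitive
        if name in self.cache:
            return self.cache[name]
        for server in self.upstream:
            address = server.lookup(name)
            if address is not None:
                self.cache[name] = address  # remember for the next client
                return address
        return None  # no server could resolve the name


internet = UpstreamDnsServer({"www.example.com": "192.0.2.80"})
local = LocalDnsServer([internet])
first_answer = local.resolve("WWW.Example.com")
second_answer = local.resolve("www.example.com")
```

The second query is answered from the local server's cache, so the upstream server is contacted only once.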

FTP, FTPS, SFTP

File Transfer Protocol (FTP), and its more secure versions FTPS and SFTP, transfers files from one system to another. FTP is insecure in that the username and password are transmitted in clear text. The original clear text version uses TCP port 20 for data and TCP port 21 as the control channel. Using FTP when security is a consideration is not recommended.

FTPS is FTP that adds support for the Transport Layer Security (TLS) and the Secure Sockets Layer (SSL) cryptographic protocols. FTPS uses TCP ports 989 and 990.

FTPS is not the same as and should not be confused with another secure version of FTP, SSH File Transfer Protocol (SFTP). This is an extension of the Secure Shell Protocol (SSH). There have been a number of different versions with version 6 being the latest. Because it uses SSH for the file transfer, it uses TCP port 22.

HTTP, HTTPS, SHTTP

One of the most frequently used protocols today is Hypertext Transfer Protocol (HTTP) and its secure versions, HTTPS and SHTTP. This protocol is used to view and transfer web pages or web content. The original version (HTTP) has no encryption so when security is a concern one of the two secure versions should be used. HTTP uses TCP port 80.

Hypertext Transfer Protocol Secure (HTTPS) layers HTTP on top of the SSL/TLS protocol, thus adding the security capabilities of SSL/TLS to standard HTTP communications. It is often used for secure websites because it requires no software or configuration changes on the web client to function securely. HTTPS does not use port 80; it uses TCP port 443.

Unlike HTTPS, which encrypts the entire communication, SHTTP encrypts only the served page data and submitted data such as POST fields, leaving the initiation of the protocol unchanged. Secure-HTTP and HTTP processing can operate on the same TCP port, port 80. This version is rarely used.

ICMP

Internet Control Message Protocol (ICMP) operates at layer 3 of the OSI model and is used by devices to transmit error messages regarding problems with transmissions. It also is the protocol used when the ping and traceroute commands are used to troubleshoot network connectivity problems. Because ICMP is carried directly in IP packets rather than over TCP or UDP, it doesn’t use a port number but is identified in the packet by its protocol number. Its protocol number is 1.
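To make the idea of a protocol number concrete, the following sketch builds a minimal 20-byte IPv4 header and reads back the protocol field, which sits at byte offset 9 of the header. The helper names are hypothetical, the checksum is left at zero for simplicity, and the header is never sent on a network.

```python
import socket
import struct

# A few well-known IP protocol numbers.
PROTOCOL_NAMES = {1: "ICMP", 6: "TCP", 17: "UDP"}


def build_ipv4_header(src: str, dst: str, protocol: int, payload_len: int = 0) -> bytes:
    """Pack a minimal IPv4 header (no options, checksum left at zero)."""
    version_ihl = (4 << 4) | 5  # version 4, header length of 5 32-bit words
    total_length = 20 + payload_len
    return struct.pack(
        "!BBHHHBBH4s4s",
        version_ihl,
        0,             # type of service
        total_length,
        0,             # identification
        0,             # flags and fragment offset
        64,            # time to live
        protocol,      # protocol number: what the payload contains
        0,             # header checksum (not computed in this sketch)
        socket.inet_aton(src),
        socket.inet_aton(dst),
    )


def protocol_of(header: bytes) -> str:
    """Return the name of the protocol carried inside the IP packet."""
    number = header[9]
    return PROTOCOL_NAMES.get(number, f"unknown ({number})")


ping_header = build_ipv4_header("192.0.2.1", "192.0.2.2", protocol=1)
web_header = build_ipv4_header("192.0.2.1", "192.0.2.2", protocol=6)
```

A firewall that filters ICMP matches on this field rather than on a port, because an ICMP message has no TCP or UDP header to carry one.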

ICMP is a protocol that can be leveraged to mount several network attacks based on its operation, and for this reason many networks choose to block ICMP. These attacks are discussed in the section “Network Threats.”

IMAP

Internet Message Access Protocol (IMAP) is an Application layer protocol for email retrieval. Its latest version is IMAP4. It is a client email protocol used to access email from a server. Unlike POP3, another client email protocol that can only download messages from the server, IMAP4 allows one to download a copy and leave a copy on the server. IMAP4 uses port 143. A secure version, IMAPS (IMAP over SSL), also exists and uses port 993.

NAT

Network Address Translation (NAT) is a service that maps private IP addresses to public IP addresses. It is discussed in the section “Logical and Physical Addressing” earlier in this chapter.

PAT

Port Address Translation (PAT) is a specific version of NAT that uses a single public IP address to represent multiple private IP addresses. Its operation is discussed in the section “Logical and Physical Addressing” earlier in this chapter.

POP

Post Office Protocol (POP) is an Application layer email retrieval protocol. POP3 is the latest version. It allows for downloading messages only and does not allow the additional functionality provided by IMAP4. POP3 uses port 110. A version that runs over SSL is also available that uses port 995.

SMTP

POP and IMAP are client email protocols used for retrieving email, but when email servers are talking to each other they use a protocol called Simple Mail Transfer Protocol (SMTP), a standard Application layer protocol. This is also the protocol used by clients to send email. SMTP uses port 25, and when it runs over SSL it uses port 465.

SNMP

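Simple Network Management Protocol (SNMP) is an Application layer protocol that is used to retrieve information from network devices and to send configuration changes to those devices. SNMP uses UDP port 161 for requests sent to managed devices and UDP port 162 for traps (notifications) sent by those devices; the same port numbers are also registered for TCP.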

SNMP devices are organized into communities, and the community name must be known to either access information from or send a change to a device. It also can be used with a password. SNMP versions 1 and 2 are susceptible to packet sniffing, and all versions are susceptible to brute-force attacks on the community strings and password used. The default community string names, which are widely known, are often left in place. The latest version, SNMPv3, is the most secure.
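Because firewall rules reference these port numbers, it can help to see the ports from this section gathered in one place and applied to a rule list. The sketch below is a simplified model, not the syntax of any real firewall: the `SERVICE_PORTS` table, the rule format, and the first-match, default-deny behavior are assumptions chosen for illustration.

```python
from typing import List, Tuple

# Service name -> (transport, port), using the ports given in this section.
SERVICE_PORTS = {
    "FTP data": ("tcp", 20),
    "FTP control": ("tcp", 21),
    "SFTP (SSH)": ("tcp", 22),
    "SMTP": ("tcp", 25),
    "DNS query": ("udp", 53),
    "DNS server exchange": ("tcp", 53),
    "DHCP server": ("udp", 67),
    "DHCP client": ("udp", 68),
    "HTTP": ("tcp", 80),
    "POP3": ("tcp", 110),
    "IMAP4": ("tcp", 143),
    "SNMP": ("udp", 161),
    "SNMP trap": ("udp", 162),
    "HTTPS": ("tcp", 443),
    "SMTP over SSL": ("tcp", 465),
    "FTPS data": ("tcp", 989),
    "FTPS control": ("tcp", 990),
    "IMAPS": ("tcp", 993),
    "POP3 over SSL": ("tcp", 995),
}

Rule = Tuple[str, str, int]  # (action, transport, port)


def rules_for(services: List[str], action: str = "allow") -> List[Rule]:
    """Build one rule per named service."""
    return [(action, *SERVICE_PORTS[name]) for name in services]


def is_allowed(rules: List[Rule], transport: str, port: int) -> bool:
    """Apply the first matching rule; anything unmatched is denied."""
    for action, rule_transport, rule_port in rules:
        if rule_transport == transport and rule_port == port:
            return action == "allow"
    return False


# Example policy: block clear-text FTP, permit HTTPS and SFTP.
policy = rules_for(["FTP control", "FTP data"], "deny") + rules_for(["HTTPS", "SFTP (SSH)"])
```

With this policy, traffic to TCP port 443 or 22 is permitted, FTP is explicitly denied, and clear-text HTTP on port 80 is denied by default because no rule mentions it.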

Network Routing

Routing occurs at layer 3 of the OSI model, which is also the layer at which IP operates and where the source and destination IP addresses are placed in the packet. Routers are devices that transfer traffic between systems in different IP networks. When computers are in different IP networks, they cannot communicate unless a router is available to route the packets to the other networks.

Routers keep information about the paths to other networks in a routing table. These tables can be populated in several ways. Administrators can enter routes manually, or dynamic routing protocols can allow routers running the same protocol to exchange routing tables and routing information. Manual configuration, also called static routing, has the advantage of avoiding the additional traffic created by dynamic routing protocols and allows for precise control of routing behavior, but requires manual intervention when link failures occur. Dynamic routing protocols create traffic but are able to react to link outages and reroute traffic without manual intervention.
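A routing table with manually entered routes can be sketched with Python's standard `ipaddress` module. The `RoutingTable` class and the example networks are hypothetical. The lookup uses longest-prefix matching (the most specific matching route wins), which is how IP routers choose among overlapping routes, although the text above does not go into that detail.

```python
import ipaddress
from typing import List, Optional, Tuple


class RoutingTable:
    """A minimal static routing table."""

    def __init__(self) -> None:
        self.routes: List[Tuple[ipaddress.IPv4Network, str]] = []

    def add_static(self, prefix: str, next_hop: str) -> None:
        # An administrator enters the destination network and the next hop.
        self.routes.append((ipaddress.ip_network(prefix), next_hop))

    def lookup(self, destination: str) -> Optional[str]:
        """Return the next hop for a destination, or None if no route exists."""
        address = ipaddress.ip_address(destination)
        matches = [(net, hop) for net, hop in self.routes if address in net]
        if not matches:
            return None
        # Prefer the most specific route (longest prefix).
        best_network, best_hop = max(matches, key=lambda route: route[0].prefixlen)
        return best_hop


table = RoutingTable()
table.add_static("0.0.0.0/0", "203.0.113.1")    # default route
table.add_static("10.0.0.0/8", "192.168.1.2")
table.add_static("10.20.0.0/16", "192.168.1.3")
```

Here a packet for 10.20.5.9 is sent to 192.168.1.3, one for 10.99.0.1 goes to 192.168.1.2, and anything else follows the default route. If the link to 192.168.1.3 fails, nothing in this table changes until an administrator edits it, which is the drawback of static routing noted above.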

From a security standpoint, routing protocols introduce the possibility that routing update traffic might be captured, allowing a hacker to gain valuable information about the layout of the network. Moreover, Cisco devices (perhaps the most widely used) also use, by default, a proprietary layer 2 protocol called Cisco Discovery Protocol (CDP) to inform each other about their capabilities. If the CDP packets are captured, additional information can be obtained that can be helpful in mapping the network in advance of an attack.

This section compares and contrasts routing protocols.

Distance Vector, Link State, or Hybrid Routing

Routing protocols have different capabilities and operational characteristics that impact when and where they are utilized. Routing protocols come in two basic types: interior and exterior. Interior routing protocols are used within an autonomous system, which is a network managed by one set of administrators, typically a single enterprise. Exterior routing protocols route traffic between autonomous systems, such as separate company networks. An example of this type of routing is what occurs on the Internet.

Routing protocols also can fall into three categories that describe their operations more than their scope: distance vector, link state, and hybrid (or advanced distance vector). The difference in these mostly revolves around the amount of traffic created and the method used to determine the best path out of possible paths to a network. The value used to make this decision is called a metric, and each has a different way of calculating the metric and thus determining the best path.

Distance vector protocols share their entire routing table with their neighboring routers on a schedule, thereby creating the most traffic of the three categories. They also use a metric called hop count. Hop count is simply the number of routers traversed to get to a network.
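The way a distance vector router processes a neighbor's table can be sketched as follows. This is a simplified model: the function name, the table layout (network mapped to a hop count and next hop), and the router names are hypothetical, and timers and loop-avoidance mechanisms are omitted.

```python
from typing import Dict, Optional, Tuple

# network -> (hop count, next-hop router or None if directly connected)
Table = Dict[str, Tuple[int, Optional[str]]]


def merge_advertisement(table: Table, neighbor: str, advertised: Dict[str, int]) -> bool:
    """Update our table from a neighbor's full table; return True if anything changed."""
    changed = False
    for network, neighbor_hops in advertised.items():
        candidate = neighbor_hops + 1  # one extra router: the neighbor itself
        current = table.get(network)
        is_new = current is None
        is_better = current is not None and candidate < current[0]
        # If we already route through this neighbor, accept its latest count.
        is_update = (current is not None and current[1] == neighbor
                     and candidate != current[0])
        if is_new or is_better or is_update:
            table[network] = (candidate, neighbor)
            changed = True
    return changed


r1: Table = {"10.0.1.0/24": (0, None)}  # directly connected network
merge_advertisement(r1, "R2", {"10.0.2.0/24": 0, "10.0.3.0/24": 1})
merge_advertisement(r1, "R3", {"10.0.3.0/24": 0})
```

After the two advertisements, R1 reaches 10.0.2.0/24 through R2 at one hop and 10.0.3.0/24 through R3 at one hop, having replaced the earlier two-hop route through R2. Note that the decision considers only the number of hops, not the speed of the links.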

Link state protocols only share network changes (link outages and recoveries) with neighbors, thereby greatly reducing the amount of traffic generated. They also use a much more sophisticated metric that is based on many factors, such as the bandwidth of each link on the path and the congestion on each link. So when using one of these protocols, a path might be chosen as best even though it has more hops because the path chosen has better bandwidth, meaning less congestion.
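The following sketch shows how a bandwidth-based metric can prefer a path with more hops. It assumes a cost equal to a reference bandwidth divided by the link bandwidth (so faster links cost less) and uses Dijkstra's shortest-path algorithm to find the lowest total cost. The reference value, topology, and function names are assumptions for illustration; congestion is not modeled.

```python
import heapq
from typing import Dict, List, Optional, Tuple

REFERENCE_MBPS = 1000  # assumed reference bandwidth for the cost formula


def link_cost(bandwidth_mbps: float) -> float:
    """Faster links get lower costs."""
    return REFERENCE_MBPS / bandwidth_mbps


def best_path(links: Dict[Tuple[str, str], float], source: str,
              target: str) -> Optional[Tuple[float, List[str]]]:
    """Return (total cost, path) for the lowest-cost path, or None if unreachable."""
    graph: Dict[str, List[Tuple[str, float]]] = {}
    for (a, b), bandwidth in links.items():
        cost = link_cost(bandwidth)
        graph.setdefault(a, []).append((b, cost))
        graph.setdefault(b, []).append((a, cost))

    queue: List[Tuple[float, str, List[str]]] = [(0.0, source, [source])]
    visited = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == target:
            return cost, path
        if node in visited:
            continue
        visited.add(node)
        for neighbor, step in graph.get(node, []):
            if neighbor not in visited:
                heapq.heappush(queue, (cost + step, neighbor, path + [neighbor]))
    return None


# Router A can reach D directly over a slow link or via B and C over fast links.
links = {
    ("A", "D"): 10,     # 10 Mbps, one hop
    ("A", "B"): 1000,   # 1000 Mbps
    ("B", "C"): 1000,
    ("C", "D"): 1000,
}
result = best_path(links, "A", "D")
```

A hop-count metric would pick the direct A-to-D link. With the bandwidth-based cost, the three-hop path through B and C has a total cost of 3 against 100 for the direct link, so it is chosen instead.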

Hybrid or advanced distance vector protocols exhibit characteristics of both types. EIGRP, discussed later in this section, is the only example of this type. In the past EIGRP has been referred to as a hybrid protocol but in the last several years Cisco (which created IGRP and EIGRP) has been calling this an advanced distance vector protocol so you might see both terms used. In the following sections several of the most common routing protocols are discussed briefly.

RIP

Routing Information Protocol (RIP) is a standards-based distance vector protocol that has two versions: RIPv1 and RIPv2. Both use hop count as a metric and share their entire routing tables every 30 seconds. Although RIP is the simplest to configure, it has a maximum hop count of 15, so it is only useful in very small networks. The biggest difference between the two versions is that RIPv1 can only perform classful routing whereas RIPv2 can route in a network where CIDR has been implemented.

OSPF

Open Shortest Path First (OSPF) is a standards-based link state protocol. It uses a metric called cost that is calculated based on many considerations. Thus it makes much more sophisticated routing decisions than a distance vector routing protocol such as RIP. It also only updates other routers with changes, greatly reducing the amount of traffic generated. To take full advantage of OSPF, a much deeper knowledge of routing and OSPF itself is required. It can scale successfully to very large networks because it has no maximum hop count.

IGRP

Interior Gateway Routing Protocol (IGRP) is an obsolete classful Cisco-proprietary routing protocol that you will not likely see in the real world because of its inability to operate in an environment where CIDR has been implemented. It has been replaced with the classless version Enhanced IGRP (EIGRP) discussed next.

EIGRP

Enhanced IGRP (EIGRP) is a classless Cisco-proprietary routing protocol that is considered a hybrid or advanced distance vector protocol. It exhibits some characteristics of both link state and distance vector operations. It supports far larger networks than RIP (its hop-count limit defaults to 100 and can be raised to 255) and is much simpler to implement than OSPF. It does, however, require that all routers be Cisco.

VRRP

When a router goes down, all hosts that use that router for routing will be unable to send traffic to other networks. Virtual Router Redundancy Protocol (VRRP) is not really a routing protocol but rather is used to provide multiple gateways to clients for fault tolerance in the case of a router going down. All hosts in a network are set with the IP address of the virtual router as their default gateway. Multiple physical routers are mapped to this address so there will be an available router even if one goes down.
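The idea of several physical routers standing behind one virtual gateway address can be sketched as follows. The classes and addresses are hypothetical, and the election is reduced to "the highest-priority router that is up answers for the virtual address"; real VRRP advertisements and timers are not modeled.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PhysicalRouter:
    name: str
    priority: int   # higher value is preferred
    up: bool = True


class VirtualRouter:
    """One gateway address shared by several physical routers."""

    def __init__(self, virtual_ip: str, members: List[PhysicalRouter]) -> None:
        self.virtual_ip = virtual_ip
        self.members = members

    def active_router(self) -> Optional[PhysicalRouter]:
        """Return the router currently answering for the virtual IP."""
        candidates = [router for router in self.members if router.up]
        if not candidates:
            return None
        return max(candidates, key=lambda router: router.priority)


primary = PhysicalRouter("R1", priority=200)
backup = PhysicalRouter("R2", priority=100)
gateway = VirtualRouter("192.168.1.1", [primary, backup])

host_default_gateway = gateway.virtual_ip  # hosts only ever know this address
before_failure = gateway.active_router().name
primary.up = False                         # R1 goes down
after_failure = gateway.active_router().name
```

The hosts' default gateway setting never changes; only the physical router behind the address does, moving from R1 to R2 when R1 fails.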

IS-IS

Intermediate System to Intermediate System (IS-IS) is a complex interior routing protocol that is based on OSI protocols rather than IP. It is a link state protocol. The TCP/IP implementation is called Integrated IS-IS. OSPF has more functionality and is much more widely implemented, but IS-IS creates less traffic than OSPF.

BGP

Border Gateway Protocol (BGP) is an exterior routing protocol considered to be a path vector protocol. It routes between autonomous systems (ASs) and is used on the Internet. It has a rich set of attributes that can be manipulated by administrators to control path selection and to control the exact way in which traffic enters and exits the AS. However, it is one of the most complex to understand and configure.
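A path vector protocol advertises the list of autonomous systems a route has crossed. The sketch below shows two uses of that list: discarding any route whose path already contains the local AS number (which prevents loops) and, among the remaining routes, preferring the shortest AS path. This is a deliberate simplification: real BGP evaluates several other attributes before path length, and the function name and AS numbers here are hypothetical (the numbers come from the range reserved for documentation).

```python
from typing import List, Optional


def select_route(local_as: int, advertisements: List[List[int]]) -> Optional[List[int]]:
    """Pick the loop-free advertisement with the shortest AS path."""
    # A path that already contains our own AS number would form a loop.
    loop_free = [path for path in advertisements if local_as not in path]
    if not loop_free:
        return None
    return min(loop_free, key=len)


LOCAL_AS = 64496
received = [
    [64497, 64498, 64499],   # three ASs away
    [64500, 64499],          # two ASs away
    [64501, 64496, 64499],   # passes through our own AS: rejected
]
chosen = select_route(LOCAL_AS, received)
```

The route through AS 64500 is selected because it is loop-free and crosses the fewest autonomous systems. An administrator who wanted traffic to leave through AS 64497 instead would adjust one of the attributes that BGP considers ahead of path length.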

Network Devices

Network devices operate at all layers of the OSI model. The layer at which they operate reveals quite a bit about their level of intelligence and about the types of information used by each device. This section covers common devices and their respective roles in the overall picture.

Patch Panel

Patch panels operate at the Physical layer (layer 1) of the OSI model and simply function as a central termination point for all the cables running through the walls from wall outlets, which in turn are connected to computers with cables. The cables running through the walls to the patch panel are permanently connected to the panel. Short cables called patch cables are then used to connect each panel port to a switch or hub.

Multiplexer