Empirical Analyses of Networks in Finance

Giulia Iori⁎,1; Rosario N. Mantegna†,‡,§ ⁎Department of Economics, City, University of London, London, United Kingdom

†Department of Physics and Chemistry, University of Palermo, Palermo, Italy

‡Department of Computer Science, University College London, London, United Kingdom

§Complexity Science Hub Vienna, Vienna, Austria

1Corresponding author. email address: [email protected]

Abstract

The recent global financial crisis has triggered a huge interest in the use of network concepts and network tools to better understand how instabilities can propagate through the financial system. The literature is today quite vast, covering both theoretical and empirical aspects. This review concentrates on empirical work, and associated methodologies, concerned with the evaluation of the fragility and resilience of financial and credit markets. The first part of the review examines the literature on systemic risk that arise from banks mutual exposures. These exposures stem primarily from interbank lending and derivative positions, but also, indirectly, from common holdings of other asset classes, that can lead to common shocks in instances of fire sales, and from widespread non-performing loans to the real sector during period of economic downturns. We survey (a) studies that characterize the structure of national interbank networks, in some cases using a multiplex representations, (b) studies that introduce novel methods to quantify systemic risk and identify systemically important institutions, such as via stress test scenarios, (c) studies that assess which regulatory measures can help mitigate the propagation of contagion and distress in the financial system, and (d) studies that explore which location advantages may arise from holding privileged positions in the interbank network, such as via preferential lending relationships, or because of occupying a more central node, and if such advantages can provide an early indication of the build up of systemic risk. The second part of the review is dedicated to the analysis of indirect networks, specifically (e) proximity based network, i.e. networks obtained starting from a proximity measure sometime filtered with a network filtering methodology, (f) association network, i.e. networks where a link between two financial actors is set if a statistical test again a null hypothesis is rejected, and (g) statistically validated networks, i.e. event or relationship networks where a subset of links is selected according to a statistical validation associated with the rejection of a random null hypothesis. The need for a joint consideration of direct and indirect channels of contagion is briefly discussed.

Keywords

Financial networks; Systemic risk; Multilayer networks; Proximity networks; Association networks; Statistically validated networks; Network reconstruction; Stress test scenarios

1 Introduction

At the end of the 90s of the last century a new multidisciplinary research area took off. This research area is today called “network science”. Network science is a multidisciplinary research area analyzing and modeling complex networks from the perspective of several disciplines. The major ones are computer science, economics, mathematics, sociology and psychology, and statistical physics.

The onset of the financial crisis of 2007 triggered an enormous interest in applying networks concepts and tools, originating from several different disciplines, to study the role of interlinkages in financial systems on financial stability. The vast literature covers today both theoretical and empirical aspects. It is out of the scope of the present review to cover all the research lines today present in the analysis and modeling of economic and financial systems with network concept and with tools designed specifically for this concept. We will focus instead on a selection of studies that perform empirical analyses of some crucial areas of the financial system.

Our review complements others that have been published in the last few years. Allen and Babus (2008) review theoretical work on networks with application to systemic risk, investment decisions, corporate finance, and microfinance. Bougheas and Kirman (2015) review theoretical and empirical studies on systemic risk that implement networks and complex analysis techniques. Iori and Porter (2016) review agent based models of asset markets, including heterogeneous agents and networks. Grilli et al. (2017) focus on recent theoretical work related to credit networks, discussing in particular agent based computational approaches. Benoit et al. (2017) review studies that identify sources of systemic risk both using regulatory data and market data to produce measures of systemic risk. Aymanns et al. (2018) in this volume review computational approaches to financial stability considering multiple channels of contagion such counterparty risk, funding risk, and common assets holding. While there is some overlap between these papers and the present review, our review has a more empirical focus, exploring a broad range of methodologies that have been applied to the study of financial networks, such as the investigation and modeling of the interbank market, network reconstruction techniques, multilayer characterization of interbank exposures, indirect channels of contagion, proximity based networks, association networks, and statistically validated networks.

Specifically, the empirical studies of the interbank market will be discussed in detail because this market is of major interest for studies of systemic risk of national financial systems and of the global financial system. Recent studies of the systemic risk of the banking system of different countries and of the global financial system have also shown the importance of indirect links present between financial actors. The intensity of these indirect links are for example associated with the degree of similarity of the portfolio of assets owned by the financial institutions. An action of a distressed bank acting on a specific asset and triggering a fire sale of the asset can in fact impact other financial actors even in the absence of direct financial contracts between financial entities. Moreover, the understanding of the dynamic of contagion of distress has promoted the study of networks of influence between financial actors. For the above reasons in finance we have empirical studies covering four distinct types of networks. They are (i) the customary event or relationship networks as, for example, networks of market transactions or networks of the interbank loans exchanged between pairs of banks, (ii) proximity based network, i.e. networks obtained starting from a proximity measure often filtered with a network filtering methodology able to highlight the most significant pairwise similarity or dissimilarity present in the system, (iii) association network, i.e. networks where a link between two financial actors is set if a statistical test against a null hypothesis is rejected for a pair of financial actors (one example of association network is a network summarizing the Granger-causality relationships between all pairs of financial actors of a given financial system), and (iv) statistically validated networks, i.e. event or relationship networks where a subset of links is selected according to a statistical validation of each link performed by considering the rejection of a random null hypothesis assuming the same heterogeneity as observed in financial complex systems.

The review is organized as follows. Section 2 provides a historical perspective of the development of the new interdisciplinary research area of network science. Section 3 discusses the network approaches to the study of the stability of financial systems with a special focus on the interbank market. The section discusses the structure of national interbank markets, typical approaches in the setting and analysis of stress test scenarios, the detection of systemically important financial institutions, and the modeling of lending relationships. The methodological aspects that are considered are related to the problem of network reconstruction and to the multilayer nature of financial networks. Section 3 discusses the classes of financial networks that are different from relationship or event networks. Specifically, it discusses proximity based networks, association networks, and statistically validated networks. Contributions originating from network science, econophysics, and econometrics are illustrated and discussed. Section 4 discusses the indirect channels of contagion with an emphasis on portfolios overlap and firm–bank credit relationships. In Section 5 we conclude with a discussion on the state of the art and perspectives of empirical investigations performed in economic and financial systems.

The review also contains two appendices written to make the chapter self-contained so that readers not directly working in the field of network science could find a guide about tools and concepts that are usually defined and used in different disciplines. Appendix A briefly describes concepts and definitions used in the study of complex networks and provide a basic vocabulary needed to understand the following sections. In Appendix B we recall some econometrics systemic risk measures that have been also used and discussed in the systemic risk studies performed using network concepts.

2 A Brief Historical Perspective About the Use of Network Science in Economics and Finance

The main driver of the development of the new research area of network science was originally the invention of the World Wide Web and the rapid development and use of it that quickly involved hundreds of millions of people. Another strong input came in 2003–2004 with the creation of the first social network Myspace and the extraordinary success of Facebook. Studies about so-called complex networks benefited from the knowledge previously accumulated in several distinct disciplines. In mathematics Erdös and Rényi (1960) discovered in the 60s of the last century that a simple random graph presents an emergent phenomenon as a function of the average degree, i.e. the average number of connections each node has in the network. The emergent phenomenon concerns the setting of an infinite spanning component comprising the majority of nodes. In an infinite network this spanning component sets up exactly when the average degree is equal to one and it is absent for values lower than one. In sociology and psychology networks were used to understand social balance (Heider, 1946), social attitude towards the setting of relationships in social networks (Wasserman and Faust, 1994), and some puzzles about social networks as the so-called small world effect (Travers and Milgram, 1967). In statistical physics a pioneering paper was the one on the small world effect (Watts and Strogatz, 1998). Another seminal paper in statistical physics was the paper of Barabási and Albert that introduced the concept of scale free network and presented the preferential attachment model (Barabási and Albert, 1999). In computer science the growing importance of the physical Internet motivated the empirical analysis of it. This analysis was showing that the degree distribution of the network has a power-law behavior (Faloutsos et al., 1999). During the same years a small group of computer scientists focused on the properties of information networks with the aim of finding new solutions for the development of efficient search engines of the World Wide Web. Prominent examples of these efforts are the papers of Marchiori (1997) and Kleinberg (1998) that paved the way to the famous PageRank algorithm (Brin and Page, 1998). An early use of statistical physics concepts in the development of models for social networks can be encountered in the development of the so-called exponential random graphs (Holland and Leinhardt, 1981; Strauss, 1986).

The use of network concept in economics and finance was sporadic and ancillary during the last century. A prominent exception to this status was the model about the process of reaching a consensus in a social network introduced by the statistician Morris H. DeGroot (DeGroot, 1974). Another classic area where the role of a social network was considered instrumental to correctly interpret empirical observations was the area of the modeling of the labor market. In fact the type of a social network that it is present among job searchers and their acquaintances turned out affecting the probability of getting a job of the different social actors (Granovetter, 1973; Boorman, 1975; Calvo-Armengol and Jackson, 2004). Another pioneering empirical study used the concept of network to describe the social structure of a stock options market. The study concluded that distinct social structural patterns of traders affected the direction and magnitude of option price volatility (Baker, 1984).

The interest about the use of network concepts in the modeling of economic and financial systems started to growth at the beginning of this century and become widespread with the onset of the 2007 financial crisis. A pioneering study was published about the stability of the financial systems (Allen and Gale, 2000). During the first years of this century the studies of economic and financial systems performed by economists with network concepts can be classified in two broad areas (Allen and Babus, 2008). The first area concerns studies primarily devoted to the theoretical and empirical investigation of network formation resulting from the rational decisions of economic actors and from their convergence to an equilibrium presenting a Paretian optimum (Goyal and Vega-Redondo, 2005; Vega-Redondo, 2007; Jackson, 2008; Goyal, 2012). Another line of research initiated by the pioneering work of DeGroot (1974) was considering the problem of learning in a distributed system. In parallel to these attempts scholars economists and financial experts in collaboration with colleagues having a statistical physics background performed a series of studies focused on the topological structure of some important financial networks. The most investigated network was the network of interbank credit relationships (Boss et al., 2004; Iori et al., 2006; Soramäki et al., 2007). Another research area focused on the development of methods able to provide proximity based networks starting from empirical financial time series (Mantegna, 1999; Onnela et al., 2004; Tumminello et al., 2005) or generated by financial models (Bonanno et al., 2003).

3 Network Approaches to Financial Stability: The Interbank Market

Financial systems have grown increasingly complex and interlinked. A number of academics and policy-makers have pointed out the strong potential of network representation1 and analysis to capture the intricate structure of connectedness between financial institutions and sectors of the economy. Understanding of the growth, structure, dynamics, and functioning of these networks and their mutual interrelationships is essential in order to monitor and control the build-up of systemic risk, and prevent and mitigate the impact of financial crises.

The recent global financial crisis has illustrated how financial networks can amplify shocks as they spill over through the financial system, by creating feedback loops that can turn relatively minor events into major crises. This feedback between the micro and macro states is typical of complex adaptive dynamical systems. The regulatory efforts to maintain financial stability and mitigate the impact of financial crises has led to a shift from micro-prudential to macro-prudential regulatory approaches. Traditional micro-prudential approaches have relied on ensuring the stability of individual financial institutions and limiting idiosyncratic risk. However, while financial market's participants have clear incentives to manage their own risk and prevent their own collapse, they have limited understanding of the potential effects of their actions on other institutions. The recent emphasis on the adoption of a macro-prudential framework for financial regulation stems from the recognition that systemic risk depends on the collective behavior of market participants acting as a negative externality that needs to be controlled by monitoring the system as a whole. The new regulatory agenda has also brought to the fore the concept that institutions may be “too interconnected to fail”, in addition of being “too big to fail”, and the need for methods and tools that can help to better identify and manage systemically important financial institutions.

Network analysis in finance has been used to address two fundamental questions: (i) what is the effect of the network structure on the propagation of shocks?, and (ii) what is the rational for financial institutions to form links?, with the ultimate goal of identifying the incentives, in the form of regulatory policies, that would induce the reorganization of financial system into network structures that are more resilient to systemic risk. The first question is the one that has been more extensively studied in the literature. Several authors have analyzed how the financial network structure affect the way contagion propagates through the banking system and how the fragility of the system depends on the location of distressed institutions in the network. Other authors have directed their efforts to develop algorithms for the reconstruction of bilateral exposures form aggregate balance sheet information. A number of papers have focused on detecting long lasting relationships among banks in an effort to understand the determinants of links formation. Others have looked at possible network location advantages. A smaller but growing number of papers have focused on how policy and regulations can influence the shape of the network in order to minimize the costs of systemic risk. Theoretical papers are briefly reviewed in Section 3.1. While these papers provide important insights, the connectivity structure of real financial networks departs significantly from the stylized structures assumed, or endogenously derived, by the seminal paper of Allen and Gale (2000) and subsequent work. Given the empirical emphasis of this review, the characterization of real, or reconstructed, networks of interbank exposure and empirical approaches to estimate the danger of contagion owing to exposures in the interbank bank is the main focus of Sections 3.2 to 3.6. Section 3.7 summarizes complementary approaches developed to quantify systemic risk based on econometric methods while potential benefit arising from location advantages are discussed in Section 3.8.

3.1 Interbank Networks Connectivity and Contagion: Theoretical Contributions

Theoretical work has produced important insights to better understand the role of markets interconnectivity on financial stability. Allen and Gale (2000) were the first to show that interbank relations may create fragility in response to unanticipated shocks. In their seminal paper Allen and Gale suggested that a more equal distribution of interbank claims enhances the resilience of the system to the insolvency of any individual bank. Their argument was that in a more densely interconnected financial network, the losses of a distressed bank are divided among more creditors, reducing the impact of negative shocks to individual institutions on the rest of the system. In contrast to this view, however, others have suggested, via computational models, that dense interconnections may function as a destabilizing force (Iori et al., 2006; Nier et al., 2007; Gai and Kapadia, 2010; Battiston et al., 2012; Anand et al., 2012; Lenzu and Tedeschi, 2012; Georg, 2013; Roukny et al., 2013). Haldane (2013) has reconciled these findings by observing that highly interconnected financial networks are “robust-yet-fragile” in the sense that connections serve as shock-absorbers within a certain range but beyond it interconnections facilitate financial distress to spread through the banking system and fragility prevails.

More recent theoretical papers have confirmed these earlier computational results. Glasserman and Young (2015) show that spillover effects are most significant when node sizes are heterogeneous and the originating node is highly leveraged and has high financial connectivity. The authors also show the importance of mechanisms that magnify shocks beyond simple spillover effects. These mechanisms include bankruptcy costs, and mark-to-market losses resulting from credit quality deterioration or a loss of confidence.

Acemoglu et al. (2015) focus on the likelihood of systemic failures due to contagion of counterparty risk. The paper shows that the extent of financial contagion exhibits a phase transition: when the magnitude of negative shocks is below a certain threshold, a more diversified pattern of interbank liabilities leads to a less fragile financial system. However, as the magnitude or the number of negative shocks crosses certain thresholds dense interconnections serve as a mechanism for the propagation of shocks, leading to a more fragile financial system. While Acemoglu et al. (2015) characterize the best and worst networks, from a social planner's perspective, for moderate and very large shocks. Elliott et al. (2014) show how the probability of cascades and their extent depend, for intermediate shocks and for a variety of networks, on integration (how much banks rely on other banks) and diversification (number of banks on which a given bank's liabilities are spread over). Their results highlight that intermediate levels of diversification and integration can be the most problematic. Cabrales et al. (2017) investigate the socially optimal design of financial networks in diverse scenarios and their relationship with individual incentives. In their paper they generalize the Acemoglu et al. (2015) results by considering a richer set of possible shocks distributions and show how the optimal financial structure varies in response to the characteristics of these distributions. The overall picture that emerges from this literature is that the density of linkages has a non-monotonic impact on systemic stability and its effect varies with the nature of the shock, the heterogeneity of the players, and the state of the economy.

A large number of theoretical papers based on Agent Based simulations have investigated how the topological structure of the matrix of direct and indirect exposures between banks affects systemic risk. For a recent review of this literature we refer the interested readers to Grilli et al. (2017).

A different branch of the theoretical literature focuses on network formation mechanisms that reproduce features of trading decisions observed empirically. Of particular relevance to interbank lending markets, are theories on the formation of core–periphery networks.2

Anufriev et al. (2016) build a model of endogenous lending/borrowing decisions, which induce a network. By extending the notion of pairwise stability of Jackson and Wolinsky (1996) they allow the banks to make binary decision to form a link, which represents a loan, jointly with the direction of the loan, its amount, and its interest rate. In equilibrium, a bipartite network is found in which borrowers and lenders form generically a unique component, which well represents interbank markets when aggregating transactions at the daily scale. van der Leij et al. (2016) provide an explanation for the emergence of core–periphery structure by using network formation theory and find that while a core–periphery network cannot be unilaterally stable when banks are homogeneous such structure can form endogenously, if allowing for heterogeneity among banks in size. Heterogeneity is indeed a common characteristics of models that generate stable core–periphery structures. In Farboodi (2015) banks are heterogeneous in their investment opportunities, and they compete for intermediation benefits. A core–periphery network is formed with investment banks taking place at the core, as they are able to offer better intermediation rates. In Bedayo et al. (2016) intermediaries bargain sequentially and bilaterally on a path between two traders. Agents are heterogeneous in their time discounting. A core–periphery network is formed with impatient agents in the core. In Castiglionesi and Navarro (2016) heterogeneity in investments arises endogenously with some banks investing in safe projects, and others in risky projects. The interbank network allows banks to coinsure future idiosyncratic liquidity needs, however, establishing a link with banks that invest in the risky project, reduces the ex-ante probability of serving its own depositors. If counterparty risk is sufficiently high, the trade off leads to a core–periphery like structure. The core includes all the banks that invest in the safe project and form a complete network structure among themselves while the periphery includes all the gambling banks. In Chang and Zhang (2016) banks are heterogeneous in the volatility of their liquidity needs. More volatile banks trade with more stable banks, creating a multi-tier financial system with the most stable banks in the core. However, banks do not have incentives to link with other banks in the same tier, and hence, their network structure is more like a multipartite network than a core–periphery network.

3.2 The Structure of National Interbank Networks

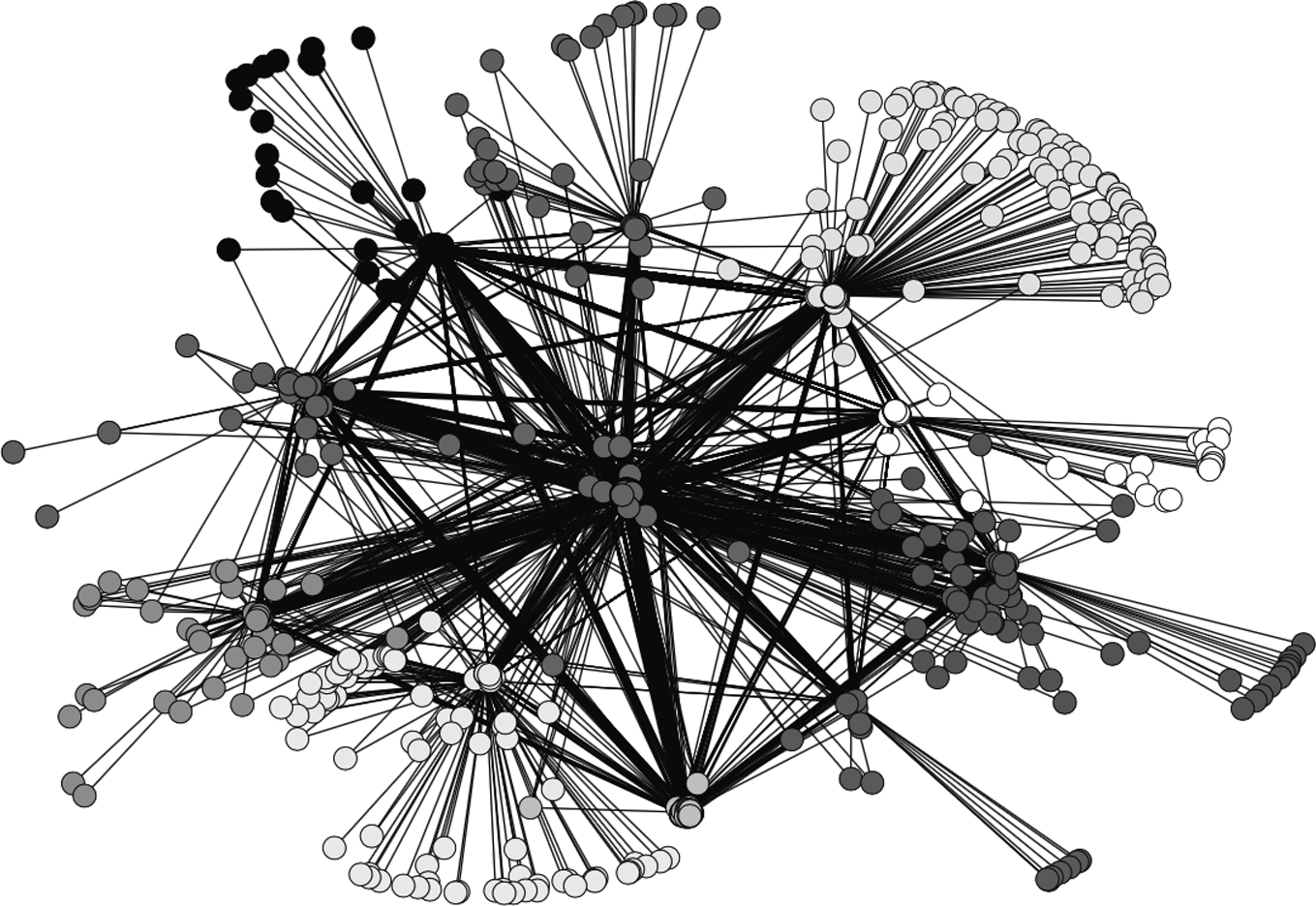

The mapping of interbank networks has been done for several countries, notably by Sheldon and Maurer (1998) for Switzerland; Inaoka et al. (2004) for Japan (BoJ-NET); Furfine (2003), Soramäki et al. (2007), and Bech and Atalay (2010) for the US Federal funds market (Fedwire); Boss et al. (2004), Elsinger et al. (2006), and Puhr et al. (2012) for Austria; Degryse and Nguyen (2007) for Belgium; van Lelyveld and Liedorp (2006) and Propper et al. (2013) for the Netherlands; Upper and Worms (2004) and Craig and von Peter (2014) for Germany; De Masi et al. (2006), Iori et al. (2008), and Finger et al. (2013) for the Italian based e-MID; Cont and Wagalath (2013), Tabak et al. (2010a) for Brazil; Wells (2004) and Langfield et al. (2014) for the United Kingdom; Martinez-Jaramillo et al. (2014) for Mexico; León (2015) for Colombia. In Fig. 1 we show an example of the typical shape of the interbank network. Specifically, we show the Austrian interbank market investigated in Boss et al. (2004). These studies of the interbank market have revealed a number of stylized facts and regularities. Interbank networks are sparse and display fat tailed degree distributions, with most banks attracting a few connections, and few banks concentrating most of the connections. While some papers identify a scale-free degree distribution (Boss et al., 2004; Inaoka et al., 2004; Soramäki et al., 2007; Propper et al., 2013; Bech and Atalay, 2010; Bargigli, 2014; León, 2015), others have reported heterogeneity but observe a departure from a strict power-law distribution of links (Martinez-Jaramillo et al., 2014; Craig and von Peter, 2014; Fricke and Lux, 2014; in't Veld and van Lelyveld, 2014) and propose to model the interbank market in terms of a core periphery network.

It might be worth noting that the fact that it is difficult to discriminate between a power-law scale free distribution and a core periphery network is not an accident of the empirical investigations performed in the interbank market. There are two important reasons explaining why it might be so difficult to discriminate between the two different models in empirical data. The first reason is that in empirical data a finite cut-off of a scale free distribution is unavoidable in finite systems and therefore empirically detected scale free distribution are typically observed only for a limited number of decades of the degree. The second reason is a theoretical reason that it is related to a mathematical property associated with a scale free system. In fact it has been proved by Chung and Lu (2002) that power-law random graphs with degree distribution proportional to ![]() with exponent β in the interval

with exponent β in the interval ![]() (including therefore scale free networks) almost surely contain a dense subgraph (i.e. a core) that has short distance to almost all other nodes. Therefore, also random scale free networks are almost surely characterized by the presence of a core in it. This fact makes the empirical discrimination between a scale free and a core periphery model difficult to asses because, due to the presence of a “core” also in the scale free network, core periphery or scale free can be quantified closely by a fitness indicator (see, for example, the comparison provided in Craig and von Peter, 2014 by using data of the German interbank market and simulations).

(including therefore scale free networks) almost surely contain a dense subgraph (i.e. a core) that has short distance to almost all other nodes. Therefore, also random scale free networks are almost surely characterized by the presence of a core in it. This fact makes the empirical discrimination between a scale free and a core periphery model difficult to asses because, due to the presence of a “core” also in the scale free network, core periphery or scale free can be quantified closely by a fitness indicator (see, for example, the comparison provided in Craig and von Peter, 2014 by using data of the German interbank market and simulations).

Interbank networks show disassortative mixing with respect to the bank size, so small banks tend to trade with large banks and vice versa; clustering coefficients are usually small and interbank networks satisfy the small-world property.3 Tabak et al. (2010a), Craig and von Peter (2014), Fricke and Lux (2014), and in't Veld and van Lelyveld (2014) point to a correlation between the size of financial institutions and their position in the interbank funds hierarchy in the respective Brazilian, German, Italian, and Dutch interbank markets. In these markets large banks tend to be in the core, whereas small banks are found in the periphery. The cores of the networks, composed of the most connected banks, processed a very high proportion of the total value of credit.

The dynamical evolution of interbank networks, as the subprime crisis unfolded, has been closely monitored in an attempt to identify early-warning signals of the building up of systemic risk. Fricke and Lux (2015) and Squartini et al. (2013) have analyzed respectively the e-MID market and the Dutch market. In both markets the networks only display an abrupt topological change in 2008, providing a clear, but unpredictable, signature of the crisis. Nonetheless, when controlling for banks' degree heterogeneity, Squartini et al. (2013) show that higher-order network properties, such as dyadic and triadic motifs, revealed a gradual transition into the crisis, starting already in 2005. Although these results provide some evidence of early warning precursors based on network properties, at least for the Dutch interbank market, a clear economic interpretation for the observed patterns is missing.

3.3 Multilayer Networks

When institutions are interconnected trough different types of financial contracts, such as loans of different maturities, derivatives, foreign exchange and other securities, it is critical to go beyond single-layer networks to properly address systemic risk. Multilayer networks explicitly incorporate multiple channels of connectivity and constitute the natural environment to describe systems interconnected through different types of exposures.

Taking into account the multilayer nature of networks can modify the conclusions on stability reached by considering individual network layers (see Boccaletti et al., 2014 and Kivela et al., 2014 for recent reviews). Contrary to what one would expect, the literature shows that the coupling of scale free networks may yield a less robust network (Buldyrev et al., 2010). In the case of single-layer networks, scale free networks are known to be much more robust to random failures than networks with a finite second moment e.g. of Poisson networks. Indeed, scale free networks continue to have a giant component even if most of the nodes are initially damaged. A finite percolation threshold in these networks is a finite size effect, i.e. the percolation threshold disappears in the limit when the network size becomes large. The robustness of multilayer networks can be evaluated by calculating the size of their mutually connected giant component (MCGC). The MCGC of a multilayer network is the largest component that remains after the random damage propagates back and forth thought the different layers. The percolation threshold for the mutually connected component is finite also for multiplex networks formed by layers of SF networks as in the case of a multiplex in which the layers are formed by Poisson networks. Exceptions to this finding would occur when the number of links (i.e. the degree) of interdependent participants coincides across the layers. That is, scale free networks robustness is likely to be preserved if positively correlated layers exist, such that a high-degree vertex in one layer likely is high-degree in the other layers as well (Kenett et al., 2014).

A number of financial markets have been characterized as multilayer networks. Montagna and Kok (2016) model interbank contagion in the Eurozone with a triple-layer multiplex network consisting of long-term direct bilateral exposures, short-term bilateral exposures, and common exposures to financial assets. Bargigli et al. (2015) examine a unique database of supervisory reports of Italian banks to the Banca d'Italia that includes all interbank bilateral exposures broken down by maturity and by the secured and unsecured nature of the contract. The authors found that layers have different topological properties and persistence over time.

Cont et al. (2013) use a set of different kinds of interbank exposures (i.e. fixed-income instruments, derivatives, borrowing, and lending) and study the potential contagion in the Brazilian market. Poledna et al. (2015) identify four layers of exposure among Mexican banks (unsecured interbank credit, securities, foreign exchange and derivative markets). Aldasoro and Alves (2015) analyze the multiplex networks of exposure among 53 large European banks. Langfield et al. (2014) analyze of different layers of the UK interbank system.

León (2015) studies the interactions of financial institutions on different financial markets in Colombia (sovereign securities market, foreign exchange market, equity, derivative, interbank funds). The approximate scale free connective structure of the Colombia interbank market is preserved across the layers in the multilayer mapping with financial institutions that are “too connected to fail” that are present across many of the network layers. This positive correlated multiplexity, coupled with the ultra-small world nature of the networks analyzed, suggest that the role of too connected financial institutions is critical for the stability of the market, not only at the single layer level, but for the market overall.

While a multilayer representation provides a more accurate characterizing the financial system, studies so far have mostly performed a comparison between the different layers. Future steps would require investigating the interconnections and interdependencies between these different layers and the implications of these interdependencies for financial stability.

3.4 Financial Regulations and Network Control

Of critical importance in macro-prudential policy is the identification of key players in the financial network. In September 2009, the G20 leaders requested the Financial Stability Board (FSB)4 to designate “Global Systemically Important Financial Institutions” (G-SIFIs). As a result, the FSB, IMF, and BIS cooperatively adopted the three valuation points – size, interconnectedness, and substitutability – as the evaluation criterion for G-SIFIs (IMF-BIS-FSB, 2009).

As we have discussed, financial networks are often characterized by skewed degree distributions, with most financial firms displaying few connections, and few financial firms concentrating many connections. In these networks the failure of a participant will have significantly different outcomes depending on which participant is selected. Those participants who are “close” (according to some measure of distance) to all other participants in the network can potentially generate widespread cascading failures if they default. A rising amount of financial literature has encouraged the usage of network metrics of centrality for identifying the institutions that are systemically important (Haldane and May, 2011; León and Murcia, 2013; Markose et al., 2012). In a broad sense, centrality refers to the importance of a node in the network. The centrality indicators typically used are constructed from measures of distance of a bank from the other banks in the network, where distance is expressed in terms of: (1) paths of length one, i.e. the number of incoming or outgoing links, for degree centrality; (2) geodesics (shortest) paths (no vertex is visited more than once), for betweenness; (3) walks (vertices and edges can be visited/traversed multiple times) for eigenvector centrality, Pagerank, Sinkrank, and Katz (see Appendix A for the mathematical definition of commonly used centrality measures). Another popular iterative centrality measure is hub and authority centrality. Acemoglu et al. (2015) introduced the notion of harmonic distance over the financial network to captures the propensity of a bank to be in distress when another bank is in distress. Markose et al. (2012) proposes a measure adapted from epidemiological studies (defined as the maximum eigenvalue for the network of liabilities expressed as a ratio of Tier 1 capital) to identify the most systemic financial institutions and determine the stability of the global OTC derivatives markets. Drehmann and Tarashev (2013) explore two different approaches to measuring systemic importance: one related to banks' participation in systemic events (PA) and another related to their contribution to systemic risk (GCA). The contribution approach is rooted in the Shapley value methodology, first proposed by Shapley (1953) for the allocation of the value created in cooperative games across individual players. Shapley values are portions of system-wide risk that are attributed to individual institutions. Because Shapley values are additive, the sum of these portions across the banks in the system equals exactly the level of system-wide risk. PA assigns a higher (lower) degree of systemic importance to an interbank lender (borrower) than GCA. The reason for this is that PA attributes the risk associated with an interbank transaction entirely to the lending counterparty, i.e. the counterparty that bears this risk and can eventually transfer it onto its creditors in a systemic event. By contrast, GCA splits this risk equally between the two counterparties. GCA is computed by focusing on each subsystem or subgroup of banks that belong to the entire system, and calculating the difference between the risk of this subsystem with and without a particular bank. Averaging such marginal risk contributions across all possible subsystems delivers the systemic importance of the bank.

A novel measure of systemic importance, based on the concept of feedback centrality, is the DebtRank (DR), introduced by Battiston et al. (2012) which measures the fraction of the total economic value that is potentially lost in the case of the distress (and not necessarily default) of a particular node. This method complements traditional approaches by providing a measure of the systemic importance of a bank even when default cascades models predict no impact at all. Applications of DR based stress test analysis (see Thiago et al., 2016 for the Brazilian interbank market, and Battiston et al., 2015 for the Italian interbank market) show that systemically important FIs do not need to be large and that traditional indicators of systemic risk underestimate contagion risk.

The identification of SIFIs is crucial to direct regulatory efforts and, for example, to assess the opportunity to limit institutions' exposures, set up some form of regulatory fees or capital surcharges, or to introduce an insurance fund financed through institution-specific insurance premia (Chan-Lau, 2010). Such an approach has recently also been taken in the IMF's Interim Report for the G20 (IMF, 2010), which outlines that an ideal levy on financial institutions should be based on a network model that would take into account all possible channels of contagion. The new Basel III rules (Basel Committee on Banking Supervision, 2013) in addition to higher capital requirements with a countercyclical component, and a framework for liquidity regulation, include limiting contagion risk as a new objective, in particular for SIFIs.

Several proposals have emerged with the purpose of creating the right incentives for institutions to control the risk they pose to the financial system. In the existing literature on prudential capital controls (Korinek, 2011), optimal policy measures are derived as tax wedges that could be implemented in a variety of equivalent ways. The opportunity cost of not receiving interest can be viewed as a Pigouvian tax. Along these lines, proposals have emerged to base capital requirements not on the risk of banks assets, but on banks' systemic importance, reflecting the impact their failure would have on the wider banking system and the likelihood of contagious losses occurring. Tarashev et al. (2010) set up a constrained optimization problem in which a policymaker equalizes banks' Shapley values subject to achieving a target level for the expected shortfall of assets to liabilities at the level of the system. Similarly Webber and Willison (2011) solve the constrained optimization problem for a policymaker who seeks to minimize the total level of capital in the UK banking system, subject to meeting its chosen systemic risk target. They show that optimal systemic capital requirements are increasing in balance sheet size (relative to other banks in the system), interconnectedness, and contagious bankruptcy costs. The paper illustrates, however, that risk-based systemic capital requirements derived in this way are procyclical and point to the need of approaches that would be explicitly countercyclical.

Gauthier et al. (2012) use different holdings-based systemic risk measures (e.g., marginal expected shortfall, ΔCoVaR, Shapley value) to reallocate capital in the banking system and to determine macroprudential capital requirements in the Canadian market using credit register data for a system of six banks. Alter et al. (2015) perform a similar exercise and compare the benchmark case, in which capital is allocated based on the risks in individual banks portfolios, and a system-based case, where capital is allocated based on some interbank network centrality metrics. Using the detailed German credit register for estimation, they find that capital rules based on eigenvectors dominate any other centrality measure, saving about 15 percent in expected bankruptcy costs.

Taxes imposed on banks in the form of contributions to a rescue fund have also been suggested. Markose et al. (2012) advocate a stabilization super-spreader fund, derived from her eigen-pair centrality measure. Like a ‘bail in’ escrow fund, the funds are deployed at the time of potential failure of a financial institution to mitigate tax payer bailouts of the failing bank. Similarly Zlatić et al. (2015) apply DebtRank to model cascade risk in the e-Mid market and determine a Pigouvian taxation to finance a rescue fund, which is used in the case of default of single financial players.

Poledna and Thurner (2016) and Leduc and Thurner (2017) have proposed to use a transaction-specific tax that discriminates among the possible transactions between different lending and borrowing banks. A regulator can use the systemic risk tax (SRT) to select an optimal equilibrium set of transactions that effectively ‘rewire’ the interbank network so as to make it more resilient to insolvency cascades. The SRT was introduced and its effect simulated using an agent-based model in Poledna and Thurner (2016), while Leduc and Thurner (2017) prove analytically that an SRT can be applied without reducing the total credit volume and thus without making the system less efficient. Using an equilibrium concept inspired by the matching markets literature the paper shows that the SRT induces a unique equilibrium matching of lenders and borrowers that is systemic-risk efficient, while without this SRT multiple equilibrium matchings exist, which are generally inefficient.

3.5 Stress-Test Scenario Analysis

A number of papers have used counterfactual simulations to test the stability of financial systems and assess the danger of contagion due to credit exposures in national interbank markets. The approach consists in simulating the breakdown of a single, or possibly more, banks and subsequently assess the scope of contagion. The baseline stress-test model runs as follows: a first bank defaults due to an exogenous shock; the credit event causes losses to other banks via direct exposures in the interbank market and one or more additional banks may default as a result; if this happens, a new round of losses is triggered. The simulation ends when no further bank defaults. Simulations are then repeated by assuming the unanticipated failure of a different bank, possibly spanning across the all system or focusing on the most important institutions.

Overall, the evidence of systemic risk from these tests is mixed. Risk of contagion has been reported, as a percentage of banking system's total assets lost, by Degryse and Nguyen (2007) for Belgium (20%), Mistrulli (2011) for Italy (16%), Upper and Worms (2004) for Germany (15%), and Wells (2004) for the UK (15%). By contrast, little possibility for contagion was found by Blavarg and Nimander (2002) for Sweden, Lubloy (2005) for Hungary, and Sheldon and Maurer (1998) for Switzerland. Furfine (2003) and Amundsen and Arnt (2005) also report only a limited possibility for contagion. Cont et al. (2013) show that in the Brazilian banking system the probability of contagion is small, however the loss resulting from contagion can be very large in some cases.

Recent studies have shown that not taking into account possible loss amplification due to indirect contagion associated with fire sales and bank exposures to the real sector can significantly underestimate the degree of fragility of financial system. We will discuss these effects in Sections 4.1 and 4.2.

3.6 Network Reconstruction

A major limitation of the stress-test analysis is that often the full network of interbank liabilities is not available to regulators. For most countries bilateral linkages are unobserved and aggregate balance sheet information are used for estimating counterparty exposures. Formally the problem is that the row and column sums of a matrix describing a financial network are known but the matrix itself is not known. To estimate bank-to-bank exposures, different network reconstruction methods have been proposed. The most popular approach for deriving individual interbank liabilities from aggregates has been to minimize the Kullback–Leibler divergence between the liabilities matrix and a previously specified input matrix (see Upper, 2011 for a review). This network reconstruction approach is essentially a constrained entropy maximization problem, where the constraints represent the available information and the maximization of the entropy ensures that the reconstructed network is maximally random, given the enforced constraints.

One drawback of the Maximum Entropy (ME) method is that the resulting interbank liabilities usually form a complete network. However, as we have seen, empirical research shows that interbank networks are sparse. The maximum entropy method might thus bias the results, in the light of the theoretical findings that better connected networks may be more resilient to the propagation of shocks. This is confirmed by Mistrulli (2011), who analyzes how contagion propagates within the Italian interbank market using actual bilateral exposures and reconstructed ME exposures. ME is found to generally underestimate the extent of contagion. Similarly Solórzano-Margain et al. (2013) showed that financial contagion arising from the failure of a financial institution in the Mexican financial system would be more widespread than from simulations based on reconstructed network based on ME algorithm.

Mastromatteo et al. (2012) have proposed an extension to the ME approach using a message-passing algorithm for estimating interbank exposures. Their aim is to fix a global level of sparsity for the network and, once this is given, allow the weights on the existing links to be distributed similarly to the ME method. Anand et al. (2014) have proposed the minimum density (MD) method which consists in minimizing the total number of edges that is consistent with the aggregated interbank assets and liabilities. They argue that the MD method tends to overestimate contagion, because minimize the number of links, and therefore can together with the ME method be used to provide upper and lower bounds for stress test results. However, increasing the number of links has a non-monotonous effect on the stability of a network, thus attempts to derive bounds on systemic risk by optimizing over the degree of completeness are unlikely to be successful. Montagna and Lux (2013) construct a Monte Carlo framework for an interbank market characterized by a power law degree distribution and disassortative link formation features via a fitness algorithm (De Masi et al., 2006).

The ME approach is deterministic in the sense that the method produces a point estimate for the financial network that is treated as the true network when performing stress tests. Probabilistic approaches to stress-testing have been attempted by Lu and Zhoua (2011), Halaj and Kok (2013), Squartini et al. (2017), Bargigli (2014), Montagna and Lux (2013), and Gandy and Veraart (2017). Such approaches consist in building an ensemble of random networks, of which the empirical network can be considered a typical sample. This allows to analyze not only the vulnerability of one particular network realization retrieved from the real data, but of many plausible alternative realizations, compatible with a set of constrains. However, to generate a realistic random sample it is crucial to impose the relevant constrains on the simulated networks, reproducing not just the observed exposures but also basic network properties. Cimini et al. (2015) introduce an analytical maximum-entropy technique to reconstruct unbiased ensembles of weighted networks from the knowledge of empirical node strengths and link density. The method directly provides the expected value of the desired reconstructed properties, in such a way that no explicit sampling of reconstructed graphs is required. Gandy and Veraart (2017) have proposed a reconstruction model, which, following a Bayesian approach, allows to condition on the observed total liabilities and assets and, if available, on observed individual liabilities. Their approach allow to construct a Gibbs sampler to generate samples from this conditional distribution that can be used in stress testing, giving probabilities for the outcomes of interest. De Masi et al. (2006) propose a fitness model, where the fitness of each bank is given by their daily trading volume. Fixing the level of heterogeneity the model reproduces remarkably well topological properties of the e-Mid interbank market such as degree distribution, clustering, and assortativity. Finally Iori et al. (2015) introduce a simple model of trading with memory that correctly reproduces features of preferential trading patterns observed in the e-Mid market.

An international study lead by several central banks has been conducted to test the goodness of network reconstruction algorithms (Anand et al., 2017). Initial results suggest that the performance of the tested methods depends strongly on the similarity measure used and the sparsity of the underlying network. This highlights that in order to avoid model risk arising from calibration algorithms, structural bilateral balance sheet, and off balance sheet, data are crucial to study systemic risk from financial interconnections.

Another common critique to stress test studies of contagion is the lack of dynamics in terms of banks' behavioral adjustments. Critics stress the importance to include indirect financial linkages, in terms of common exposures and business models, as well as fire sales or liquidity hoarding contagion driven by fear and uncertainty. To address these concerns, a few papers have explored the role of funding and liquidity risk via simulation experiments. The idea of liquidity risk as banks start fire-selling their assets, depressing overall prices in the market, was initially explored by Cifuentes et al. (2005) and has been further investigated in a simulation framework by Nier et al. (2007), Gai and Kapadia (2010), Haldane and May (2011), Tasca and Battiston (2016), Corsi et al. (2016), Cont and Wagalath (2013), Caccioli et al. (2014), and Poledna et al. (2014). The role of funding risk induced by liquidity hoarding is explored in Haldane and May (2011), Chan-Lau (2010), Espinosa-Vega and Solé (2011), Fourel et al. (2013), Roukny et al. (2013), and Gabrieli et al. (2014). Finally, simultaneous impact of market and funding liquidity risk is explored by Aikman et al. (2010) and Manna and Schiavone (2012).

While this body of work has considerably improved our understanding of the effects of the networks structure on the spreading of systemic risk unfortunately data on a range of relevant activities (securities lending, bilateral repos, and derivatives trading) remain inadequate. Moreover, the activities of non-bank market participants, such as asset managers, insurance and shadow banks, and their interconnections remain opaque. A proper assessment of systemic risk relies on a better understanding of the business models of these players and their interactions and on enhanced availability of individual transactions data.

3.7 Econometrics Systemic Risk Measures

Due to limited availability of supervisory data to capture systemic risk stemming from contagion via bilateral exposures, approaches have been suggested to derive systemic risk from available market data. A number of econometric measures, beyond Pearson correlation, have been proposed to measure the degree of correlation and concentration of risks among financial institutions, and their sensitivity to changes in market prices and economic conditions. Popular ones include Value-at-Risk (CoVaR) by Adrian and Brunnermeier (2016), CoRisk by Chan-Lau (2010), marginal and systemic expected shortfall by Acharya et al. (2012), distressed insurance premium by Huang et al. (2012), SRISK by Brownlees and Engle (2016), distance to distress by Castren and Kavonius (2009), and POD (probability that at least one bank becomes distressed) by Goodhart and Segoviano Basurto (2009). While these approaches are not network based, they are briefly mentioned here (see Appendix B for technical definitions) for completeness as they provide complementary methodologies to assess systemic risk and financial fragility. The underlying assumption behind these “market based” systemic risk measures is that the strength of relationships between different financial institutions, based on correlations between their asset values, is related to the materialization of systemic risk. The rationale is that common movements in underlying firms' asset values are typically driven by the business cycle, interbank linkages or shift in market sentiment that affects the valuation of bank assets simultaneously. By assuming that these are likely causes of contagion and common defaults, systemic risk should be captured by the correlations of observable equity returns.5 However the importance of short-term changes to market data might be overestimated, and the mechanisms that lead to the realization of systemic defaults are not well understood. In particular, when markets function poorly, market data are a poor indicator of financial environments. Correlations between distinct sectors of the financial system became higher during and after the crisis, not before, thus during non-crisis periods, correlation play little role in indicating a build-up of systemic risk using such measures. Moreover, measures based on probabilities invariably depend on market volatility, and during periods of prosperity and growth, volatility is typically lower than in periods of distress. This implies lower estimates of systemic risk until after a volatility spike occurs, which reduces the usefulness of such a measure as an early warning indicator. Overall these measures may be misleading in the build up to a crisis as they underprice risk during market booms.

3.8 Location Advantages in Interbank Networks

In addition to having implications for financial stability, holding a central position in the interbank networks, or establishing preferential relationships, may lead to funding benefit. The exploration of potential location advantages has been the focus of recent papers. Bech and Atalay (2010) analyze the topology of the Federal Funds market by looking at O/N transactions from 1997 to 2006. They show that reciprocity and centrality measures are useful predictors of interest rates, with banks gaining from their centrality. Akram and Christophersen (2013) study the Norwegian interbank market over the period 2006–2009. They observe large variations in interest rates across banks, with systemically more important banks, in terms of size and connectedness, receiving more favorable terms. Temizsoy et al. (2017) show that network measures are significant determinants of funding rates in the e-MID O/N market. Higher local measures of centrality are associated with increasing borrowing costs for borrowers and decreasing premia for lenders. However, global measures of network centrality (betweenness, Katz, PageRank, and SinkRank) benefit borrowers who receive a significant discount if they increase their intermediation activity, while lenders pay in general a premium (i.e. receive lower rates) for centrality. This effect is interpreted by the authors, as driven by the market perception that more central banks will be bailed out if in distress, because “too connected to fail”. The expectation of implicit subsidies could create moral hazard and provide incentives for banks to become systemically important, exacerbating system fragility. Thus Temizsoy et al. (2017) suggest that monitoring how funding cost advantages evolve over time can act as an effective early warning indicator of systemic risk and provide a way to measure the effectiveness of regulatory policy to reduce the market perception that systemically important institutions will not be allowed to default.

During the crisis, increased uncertainty about counterpart credit risk led banks to hoard liquidity rather than making it available in the interbank market. Money markets in most developed countries almost came to a freeze and banks were forced to borrow from Central Banks. Nonetheless there is growing empirical evidence that banks that had established long term interbank relationships had better access to liquidity both before and during the crisis (Furfine, 2001; Cocco et al., 2009; Liedorp et al., 2010; Affinito, 2012; Brauning and Fecht, 2012; Temizsoy et al., 2015).

Early papers on interbank markets focus on the existence of lending relationships in the US Federal Funds markets (Furfine, 1999, 2001). Furfine (1999) shows that larger institutions tend to have a high number of counterparties while Furfine (2001) finds that banking relationships have important effects on borrowing costs and longer relationship decreases the interest rate in the funds market. Affinito (2012) uses data from Italy to analyze interbank customer relationships. His findings are that stable relationships exist and remain strong during the financial crisis. Liedorp et al. (2010) examine bank to bank relationships in the Dutch interbank market to test whether market participants affect each other riskiness through such connections. They show that larger dependence on interbank market increases risk, but banks can reduce their risk by borrowing from stable neighbors. Brauning and Fecht (2012) show that German lenders anticipated the financial crisis by charging higher interest rates in the run-up to the crisis. By contrast, when the sub-prime crisis kicked in, lenders gave a discount to their close borrowers, thus pointing to a peer monitoring role of relationship lending. Temizsoy et al. (2015) analyze the structure of the links between financial institutions participating in the e-MID interbank market. They show that, particularly after the Lehman's collapse, when liquidity became scarce, established relationships with the same bank became an important determinant of interbank spreads. Both borrowers and lenders benefited from establishing relationship throughout the crisis by receiving better rates from and trading larger volume with their preferred counterparties.

Cocco et al. (2009) suggest that size may be the main factor behind the Portuguese interbank funds connective and hierarchical architecture. Small banks acting as borrowers are more likely to rely on lending relationship than larger banks. Thus they suggest that financial institutions do not connect to each other randomly, but rather interact based on a size-related preferential attachment process, possibly driven by too-big-to-fail implicit subsidies or market power.

Hatzopoulos et al. (2015) have investigated the matching mechanism between lenders and borrowers in the e-MID market and its evolution over time. They show that, when controlling for bank heterogeneity, the matching mechanism is fairly random. Specifically, when taking a lender who makes l transactions over a given period of time and a borrower who makes b transaction over the same period, and such that they have m trades in common over that period, Hatzopoulos et al. (2015) show that m is consistent with a random matching hypothesis for more than 90% of all lender/borrower pairs. Even though matches that occur more often than those consistent with a random null model (which they call over expressed links) exist and increase in number during the crisis, neither lenders nor borrowers systematically present several over expressed links at the same time. The picture that emerges from their study is that banks are more likely to be chosen as trading partners because they are larger and trade more often and not because they are more attractive in some dimension (such as their financial healthiness).

Overall the empirical evidence suggests that, particularly at a time of deteriorating trust towards credit rating agencies, private information acquired through repeated transactions plays an important role in mitigating asymmetric information about a borrower's creditworthiness and can ease liquidity redistribution among banks. These results show that interbank exposures are used as a peer-monitoring device. Private information acquired through frequent transactions, supported liquidity reallocation in the e-MID market during the crisis by improving the ability of banks to assess the creditworthiness of their counterparties. Relationship lending thus play a positive role for financial stability and can help policymakers to assess market discipline. Furthermore, the analysis of patterns of preferential relationships may help identifying systemically important financial institutions. If a bank, who is the preferential lender to several borrowers defaults, or stop lending, this may pose a serious funding risk for its borrowers who may find it difficult to satisfy their liquidity needs from other lenders and may be forced to accept deals at higher rates. This may eventually put them under distress and increase systemic risk in the system. Similarly if preferential borrowers exit the interbank market, such lenders may find it difficult to reallocate their liquidity surplus if they fail to find trusted counterparties. The resulting inefficient reallocation of liquidity, may in turn increase funding costs of other borrowers and again contribute to the spread of systemic risk. In this sense relationship lending provides a measure of the financial substitutability of a bank in the interbank market. Thus when establishing if a bank is too connected to fail, regulators should not only look at how connected a bank is, but also at how preferentially connected it is to other players. Finally, reliance on relationship lending is an indicator of trust evaporation in the banking system and monitoring the effect of stable relations on spreads and traded volume may help regulators to identify early warning indicator of a financial turmoil.

4 Networks and Information Filtering

So far we have primarily considered what we have called relationship or event networks. Event or relationship networks are the most common types of networks that are investigated in finance. In these networks a link between two nodes is set when a relationship or an event is occurring between them in a given period of time. For example two banks are linked when a credit relationship is present between them. In addition to this type of networks other classes of networks have been investigated in finance. We call these types of networks (i) proximity based networks, (ii) association networks, and (iii) statistically validated networks.

4.1 Proximity Based Networks

In proximity based networks a similarity or a dissimilarity measure (i.e. technically speaking a proximity measure, see a reference text of Data Mining for a formal definition as, for example, Han et al., 2011) computed between all pairs of elements of a system is used to determine a network. The network obtained highlights the most relevant proximities observed in the system. Let us make an example to clarify the concept. Let us consider two banks having no credit relationship in the interbank market connecting them in a given time period. Is this meaning that the two banks are isolated the one to the other unless a chain of bankruptcy of banks occurs? The general answer is no. We can see this by considering the fact that the two banks have both a portfolio of assets and that the two portfolios can present a given degree of similarity. Two banks characterized by a high degree of similarity of their portfolios will be impacted by market dynamics and by exogenous news in a similar way in spite of the fact that they do not present direct credit relationship. For example, the fire sale of a distressed bank of a given asset will impact all those banks having large weights of that asset in their portfolios. The interlinkages associated with different degree of similarity of the set of elements of a given system can therefore be summarized and visualized in a network. In the literature these networks are sometime called with different names. For example, in neuroscience they are called functional networks because they are obtained starting from the functional activity detected by functional magnetic resonance signals detected in different regions of the brain. The proximity can be estimated by considering features of the considered elements that can be numeric, binary or even categorical.

4.1.1 The Minimum Spanning Tree

The first example of proximity based network was introduced in Mantegna (1999). This study proposed to obtain a network starting from a dissimilarity measure estimated between pairs of stocks traded in a financial market. A dissimilarity measure between two financial stocks can be obtained by first estimating the Pearson correlation coefficient ρ between the two time series of return of stocks and then obtaining a dissimilarity d (more precisely a distance) through the relation ![]() . By following this approach, one can always associate a similarity or dissimilarity matrix to any multivariate time series and use this matrix to define a weighted complete network. The weight of each link being the value of the proximity measure.

. By following this approach, one can always associate a similarity or dissimilarity matrix to any multivariate time series and use this matrix to define a weighted complete network. The weight of each link being the value of the proximity measure.

For this type of weighted complete networks all information which is present in the proximity matrix is retained and it has associated the same degree of statistical reliability. However, in real cases this ideal condition is hardly verified. In fact, not all the information present in a proximity matrix has the same statistical reliability. The specific level of reliability depends on the way the proximity measure is obtained and limitations are always present when the estimation of the proximity measure is done with a finite number of experimental records or features. The unavoidable presence of this type of limitation is quantified by random matrix theory (Metha, 1991) in a very elegant way. For example, the eigenvalue spectrum of the correlation matrix for a random Gaussian multivariate set is given by the Marčenko–Pastur distribution (Marčenko and Pastur, 1967). Several studies have shown that the Marčenko–Pastur distribution computed for a correlation matrix of n stocks with a finite number of records widely overlaps with the ones empirically observed in financial markets although also clear deviations from this null hypothesis are observed (Laloux et al., 1999; Plerou et al., 1999).

In empirical analyses, it is therefore important and informative to perform a meaningful filtering of any proximity matrix obtained from a multivariate system described by a finite number of records or features. One of the most effective filtering procedures of a proximity matrix is the extraction from it of its minimum (for dissimilarity) or maximum (for similarity) spanning tree (Mantegna, 1999). The minimum spanning tree is a concept of graph theory and it is the minimum (or maximum) tree connecting all the nodes of a system through a path of minimal (maximal) length. The minimum spanning tree can be determined by using Prim's or Kruskal's algorithms. The information it selects from the original proximity measure is related to the hierarchical tree that can be obtained from the same proximity matrix by using the hierarchical clustering procedures known as single linkage. For more details about the extraction of minimum spanning trees from a proximity matrix one can consult (Tumminello et al., 2010).

In Fig. 2 we show an example of minimum spanning tree obtained for a set of 100 highly capitalized stocks traded in the US equity markets during the time period January 1995–December 1998. In the MST several stocks, such as, for example, BAC (Bank of America Corp.), INTC (Intel Corp.), or AEP (America Express), are linked with several stocks belonging to the same economic sector (financial, technology, and utility sector respectively) whereas others (the most notable case is General Electric Company (GE)) are linked to stocks of different sectors. In general the clustering in terms of economic sectors of the considered companies is rather evident. Since the original proposal of Mantegna (1999), minimum spanning trees have been investigated in a large number of empirical studies involving different financial markets operating worldwide or in diverse geographical areas. The classes of assets and geographically located markets investigated through the proximity based network methodology comprises6: stocks traded in equity markets geographically located in US (Mantegna, 1999; Bonanno et al., 2001; Onnela et al., 2002; Miccichè et al., 2003; Bonanno et al., 2003; Onnela et al., 2003; Precup and Iori, 2007; Eom et al., 2007; Tumminello et al., 2007; Brida and Risso, 2008; Zhang et al., 2011), in Europe (Coronnello et al., 2005), in Asia (Jung et al., 2006; Eom et al., 2007; Zhuang et al., 2008), market indices of major stock exchanges (Bonanno et al., 2000; Coelho et al., 2007; Gilmore et al., 2008; Song et al., 2011), bonds and interest rates (Di Matteo and Aste, 2002; Dias, 2012, 2013), currencies (McDonald et al., 2005; Mizuno et al., 2006; Górski et al., 2008; Jang et al., 2011; Wang et al., 2012, 2013), commodities (Sieczka and Holyst, 2009; Barigozzi et al., 2010; Tabak et al., 2010b; Kristoufek et al., 2012; Zheng et al., 2013; Kazemilari et al., 2017), interbank market (Iori et al., 2008), housing market (Wang and Xie, 2015), credit default swaps market (León et al., 2014).

As for other data mining approaches, there are multiple approaches to perform information filtering. The choice of specific approach depends on the posed scientific question and on the ability of the filtering process to highlight the information of interest. This is similar to the case of hierarchical clustering where there is no a priori recipe to select the most appropriate algorithm performing the clustering. In addition to the approach of the minimum spanning tree several other approaches have been proposed in the literature to perform information filtering on networks. We will discuss some examples in the following subsection.

4.1.2 Other Types of Proximity Based Networks and the Planar Maximally Filtered Graph

One of the first alternative approaches to the minimum spanning tree was the one proposed in Onnela et al. (2004) where a network is built starting from a correlation matrix by inserting links between two nodes when their correlation coefficient is above a given threshold. This approach is retaining a large amount of information but suffers by the arbitrariness in choosing the threshold. Moreover, the network can cover only part of the system when the correlation threshold is relatively high. When the threshold is selected by considering an appropriate null model as, for example, an uncorrelated multivariate time series characterized by the same return distributions as in real data, most of the estimated correlation coefficient are rejecting the null hypothesis of uncorrelated returns ending up with an almost complete correlation based graph.

To highlight information present in the system by selecting links in a proximity based network richer than the minimum spanning tree without inserting an arbitrarily selected threshold the use of a network topological constraint was proposed in Tumminello et al. (2005). Specifically, Tumminello et al. (2005) introduce a method to obtain a planar graph starting from a similarity or a dissimilarity matrix. The method of the network construction requires that the network remains always planar, i.e. can be embedded in a surface of genus 0, until all the nodes of the system are included in the network. The resulting network has the property of including the minimum (or maximum in case of similarity) spanning tree and of presenting also loops and cliques of 3 and 4 nodes. The planar maximally filtered graph is therefore extracting an amount of information larger than the minimum spanning tree but still linear in the number of nodes of the system. In fact the minimum spanning tree selects ![]() links among the possible

links among the possible ![]() links and the planar maximally filtered graph selects

links and the planar maximally filtered graph selects ![]() links. The approach of filtering the network under topological constraints can be generalized (Aste et al., 2005) by considering the embedding of the network into surfaces with genus larger than 0. The genus is a topological property of a surface. Roughly it is given by the integer number of handles observed in the connected, orientable surface. Unfortunately, this general approach which is very well defined from a mathematical point of view, is pretty difficult to implement computationally already for values of the genus equal to 1.

links. The approach of filtering the network under topological constraints can be generalized (Aste et al., 2005) by considering the embedding of the network into surfaces with genus larger than 0. The genus is a topological property of a surface. Roughly it is given by the integer number of handles observed in the connected, orientable surface. Unfortunately, this general approach which is very well defined from a mathematical point of view, is pretty difficult to implement computationally already for values of the genus equal to 1.

The study of proximity based networks is strongly interlinked with methodological approaches devoted to detect hierarchical structure and clustering of the considered nodes. Examples of this type of interlinkages are the clustering procedure achieved by considering Potts super-paramagnetic transitions (Kullmann et al., 2000). Within this approach, in the presence of anti-correlation, the methodology associates anti-correlation to a physical repulsion between the stocks which is reflected in the obtained clustering structure. Another approach to hierarchical clustering is using maximum likelihood procedure (Giada and Marsili, 2001, 2002), where authors define the likelihood by using a one-factor model, then varied to detect a clustering with high likelihood. In Tumminello et al. (2007) authors introduce the so-called average linkage minimum spanning tree, i.e. a tree associated with the hierarchical clustering procedure of the average linkage. An unsupervised and deterministic clustering procedure, labeled as directed bubble hierarchical tree, often finding high quality partitions based on the planar maximally filtered graph, is proposed in Song et al. (2012). Other clustering approaches have relied more on concepts originating from network science as it is the case for the approaches (i) using the concept of modularity maximization for cluster detection obtained from a correlation matrix (MacMahon and Garlaschelli, 2013), and (ii) using the concept of p-median to construct every cluster as a star network at a given level (Kocheturov et al., 2014).

4.2 Association Networks

Another class of networks is the class we name association networks. In this type of networks two nodes of a complex system are connected in a network by computing a quantity that is putting in relation node i with node j of a given system. The quantity computed can be a complex indicator as, for example, the partial correlation between the time evolution of node i with node j or an indicator of the rejection of a statistical test against a given null hypothesis.