Chapter 1

Computer Basics

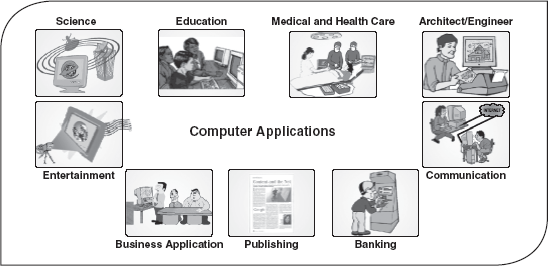

This chapter lays a foundation for one of the most influential forces available in modern times, the computer. A computer is an electronic device that operates under the control of instructions, which tell the machine what to do. It is capable of accepting data (input), processing data arithmetically and logically, producing output from the processing, and storing the results for future use. The chapter begins with the characteristics, evolution, and various generations of computers. The discussion also explores the classification of computers and their features. The chapter concludes with an overview of basic computer units and computer applications.

After reading this chapter, you will be able to understand:

The characteristics of computers that make them an essential part of every technology

The evolution of computers, from the refinement of the abacus to supercomputers

The advancement in technology that has changed the way the computers operate, leading to powerful, efficient and cheaper computers

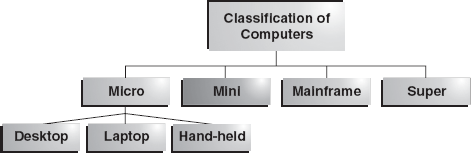

The classification of computers into micro, mini, mainframe and supercomputers

The computer system, which includes components such as the Central Processing Unit (CPU) and I/O devices

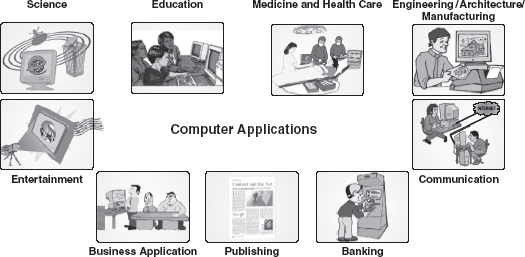

The application of computers in various fields, which increases efficiency, thus, resulting in proper utilization of resources

1.1 INTRODUCTION

At the beginning of civilization, people used fingers and pebbles for computing purposes. In fact, the Latin word digitus means finger and calculus means pebble, which gives a clue to the origin of early computing concepts. With the development of civilization, computing needs also grew. The need for a mechanism to perform lengthy calculations led to the invention of, first, the calculator and then the computer.

The term computer is derived from the word compute, which means to calculate. A computer is an electronic machine devised for performing calculations and controlling operations that can be expressed either in logical or in numerical terms. In simple words, a computer is an electronic device that performs diverse operations with the help of instructions to process the data in order to achieve desired results. Although the application domain of a computer depends totally on human creativity and imagination, it covers a huge area of applications including education, industries, government, medicine, scientific research, law, and even music and arts.

Computers are one of the most influential forces available in modern times. Harnessing the power of computers enables relatively limited and fallible human capacities for memory, logical decision making, reaction and perfection to be extended to almost infinite levels. Millions of complex calculations can be done in a mere fraction of a second; difficult decisions can be made with unerring accuracy for comparatively little cost. Computers are widely seen as instruments for future progress and as tools to achieve sustainability by way of improved access to information with the help of video-conferencing and e-mail. Indeed, computers have left such an impression on modern civilization that we call this era the “information age”.

1.1.1 Characteristics of Computers

The human race developed computers so that it could perform intricate operations, such as calculation and data processing, or simply for entertainment. Today, much of the world's infrastructure runs on computers and it has profoundly changed our lives, mostly for the better. Let us discuss some of the characteristics of computers, which make them an essential part of every emerging technology and such a desirable tool in human development.

- Speed: Computers process data at an extremely fast rate, at millions or billions of instructions per second. A computer can perform in a few seconds a huge task that a normal human being might take days or even years to complete. The clock speed of a computer is measured in megahertz (MHz), that is, millions of clock cycles per second, while instruction throughput is commonly expressed in millions of instructions per second (MIPS). At present, a powerful computer can perform billions of operations in just one second.

- Accuracy: Besides being efficient, computers are also very accurate. The level of accuracy depends on the instructions and the type of machine being used. Since the computer is capable of doing only what it is instructed to do, faulty instructions for data processing may lead to faulty results. This is known as Garbage In Garbage Out (GIGO).

- Diligence: A computer, being a machine, does not suffer from the human traits of tiredness and lack of concentration. If four million calculations have to be performed, then the computer will perform the four-millionth calculation with the same accuracy and speed as the first calculation.

- Reliability: Generally, reliability is the measurement of the performance of a computer against some predetermined standard for operation without any failure. The major reason behind the reliability of computers is that, at the hardware level, they do not require any human intervention between processing operations. Moreover, computers have built-in diagnostic capabilities, which help in the continuous monitoring of the system.

- Storage Capability: Computers can store large amounts of data and can recall the required information almost instantaneously. The main memory of the computer is relatively small and it can hold only a certain amount of data; therefore, the data are stored on secondary storage devices such as magnetic tape or disks. Small sections of data can be accessed very quickly from these storage devices and brought into the main memory, as and when required, for processing.

- Versatility: Computers are quite versatile in nature. They can perform multiple tasks with equal ease. For example, at one moment a computer can be used to draft a letter, at another moment it can be used to play music and in between, one can print a document as well. All this work is possible by changing the program (computer instructions).

- Resource Sharing: In the initial stages of development, computers used to be isolated machines. With the tremendous growth in computer technologies, computers today have the capability to connect with each other. This has made the sharing of costly resources like printers possible. Apart from device sharing, data and information can also be shared among groups of computers, thus creating a large information and knowledge base.
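The GIGO principle described under Accuracy can be illustrated with a short Python sketch. The function `average_marks` and the sample data are hypothetical, chosen only for illustration. The arithmetic is carried out faithfully in both cases, so a mistyped input produces an exact but meaningless answer.

```python
def average_marks(marks):
    """Return the arithmetic mean of a list of marks."""
    return sum(marks) / len(marks)

# Correct input: three marks out of 100.
good_input = [70, 80, 90]

# Faulty input: the second mark was mistyped as 800 instead of 80.
bad_input = [70, 800, 90]

good_result = average_marks(good_input)  # 80.0
bad_result = average_marks(bad_input)    # 320.0: exact arithmetic on bad data

print(good_result, bad_result)
```

The computer does not detect that 800 is an impossible mark unless the program is explicitly instructed to check for it.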

Although processing has become less tedious with the development of computers, it is still a time-consuming and expensive job. Sometimes, a program works properly for some period and then suddenly produces an error. This happens because of a rare combination of events or due to an error in the instruction provided by the user. Therefore, computer parts require regular checking and maintenance in order to give correct results. Furthermore, computers need to be installed in a dust-free place. Generally, some parts of computers get heated up due to heavy processing. Therefore, the ambient temperature of the computer system should be maintained.

THINGS TO REMEMBER

Limitations of a Computer

A computer can only perform what it is programmed to do.

The computer needs well-defined instructions to perform any operation. Hence, computers are unable to give any conclusion without going through intermediate steps.

A computer's use is limited in areas where qualitative considerations are important. For instance, it can make plans based on situations and information, but it cannot foresee whether they will succeed.

1.2 EVOLUTION OF COMPUTERS

The need for a device to do calculations along with the growth in commerce and other human activities explains the evolution of computers. Having the right tool to perform these tasks has always been important for human beings. In their quest to develop efficient computing devices, humankind developed many apparatuses. However, many centuries elapsed before technology was adequately advanced to develop computers.

In the beginning, when the task was simply counting or adding, people used either their fingers or pebbles along lines in the sand. To have the sand and pebbles conveniently at hand at all times, people in Asia Minor built a counting device called the abacus. This device allowed users to do calculations using a system of sliding beads arranged on a rack. The abacus was simple to operate and was used worldwide for centuries. In fact, it is still used in many countries (Figure 1.1).

Figure 1.1 Abacus

With the passage of time, humankind invented many computing devices, such as Napier's bones and the slide rule. It took many centuries, however, for the next significant advancement in computing devices. In 1642, a French mathematician, Blaise Pascal, invented the first functional automatic calculator. This brass rectangular box, also called a Pascaline, used eight movable dials to add numbers up to eight figures long (Figure 1.2).

Figure 1.2 Pascaline

In 1694, a German mathematician, Gottfried Wilhelm von Leibniz, extended Pascal's design to perform multiplication and division and to find square roots. This machine is known as the Stepped Reckoner. It was the first mass-produced calculating device, which was designed to perform multiplication by repeated addition. Like its predecessor, Leibniz's mechanical multiplier worked by a system of gears and dials. The only problem with this device was that it lacked mechanical precision in its construction and was not very reliable.
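Multiplication by repeated addition, the principle attributed to the Stepped Reckoner above, can be sketched in a few lines of Python. This is only an arithmetic illustration, not a model of the machine's gears and dials. The function name is hypothetical, and the sketch assumes non-negative integers.

```python
def multiply_by_repeated_addition(multiplicand, multiplier):
    """Multiply two non-negative integers using only addition."""
    if multiplicand < 0 or multiplier < 0:
        raise ValueError("this sketch handles non-negative integers only")
    total = 0
    # Add the multiplicand to the running total once per unit of the multiplier.
    for _ in range(multiplier):
        total += multiplicand
    return total

print(multiply_by_repeated_addition(27, 4))  # 27 + 27 + 27 + 27 = 108
```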

FACT FILE

What's in a Name?

In 1896, Hollerith founded the Tabulating Machine Company, which later became IBM (International Business Machines). IBM developed numerous mainframes and operating systems, many of which are still in use today. For example, IBM co-developed OS/2 with Microsoft, which laid the foundation for Windows operating systems.

The real beginning of computers as we know them today, however, lay with an English mathematics professor, Charles Babbage. In 1822, he proposed a machine, called a Difference Engine, to compute mathematical tables by the method of finite differences. Powered by steam and as large as a locomotive, the machine would have a stored program and could perform calculations and print the results automatically. However, Babbage never quite made a fully functional difference engine and in 1833 he stopped working on this machine to concentrate on the Analytical Engine. The basic design of this engine included input devices in the form of perforated cards containing operating instructions and a “store” (memory) holding 1,000 numbers of up to 50 decimal digits each. It also contained a control unit to allow processing instructions in any sequence and output devices to produce printed results. Babbage borrowed the idea of punch cards to encode the machine's instructions from Joseph-Marie Jacquard's loom. Although the analytical engine was never constructed, it outlined the basic elements of a modern computer.
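Difference engines are generally described as tabulating polynomial values by the method of finite differences, which needs nothing but repeated addition. The Python sketch below illustrates that method under simple assumptions. The function name, the register layout and the example polynomial are chosen for illustration and do not reproduce Babbage's actual design.

```python
def tabulate_polynomial(initial_values, count):
    """Tabulate a polynomial from its first value and its finite differences.

    initial_values: [f(0), first difference, second difference, ...]
    count: number of table entries to produce
    """
    registers = list(initial_values)
    table = []
    for _ in range(count):
        table.append(registers[0])
        # Each register absorbs the value of the register to its right,
        # so the whole table is produced with additions only.
        for i in range(len(registers) - 1):
            registers[i] += registers[i + 1]
    return table

# Example polynomial f(x) = x*x + x + 41:
# f(0) = 41, first difference f(1) - f(0) = 2, constant second difference = 2.
print(tabulate_polynomial([41, 2, 2], 5))  # [41, 43, 47, 53, 61]
```

Because the second difference of a quadratic is constant, three starting numbers are enough to generate as many table entries as required.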

In 1889, Herman Hollerith, who worked for the US Census Bureau, also applied Jacquard's loom concept to computing. Unlike Babbage's idea of using perforated cards to instruct the machine, Hollerith's method used cards to store the data, which he fed into a machine that compiled the results mechanically (Figure 1.3).

Figure 1.3 Hollerith's Tabulator

The start of World War II produced a substantial need for computer capacity, especially for military purposes. One early success was the Mark I, which was built as a partnership between Howard Aiken of Harvard University and IBM in 1944. This electromechanical calculating machine used relays and electromagnetic components to replace mechanical components. In 1946, John Eckert and John Mauchly of the Moore School of Electrical Engineering at the University of Pennsylvania developed the Electronic Numerical Integrator and Computer (ENIAC). This computer used electronic vacuum tubes for its internal parts. It embodied almost all the components and concepts of today's high-speed, electronic computers. Later on, Eckert and Mauchly also proposed the development of the Electronic Discrete Variable Automatic Computer (EDVAC). It was the first electronic computer to use the stored program concept introduced by John von Neumann. It also had the capability of conditional transfer of control, that is, the computer could stop any time and then resume operations. In 1949, at Cambridge University, a team headed by Maurice Wilkes developed the Electronic Delay Storage Automatic Calculator (EDSAC), which was also based on John von Neumann's stored program concept. This machine used mercury delay lines for memory and vacuum tubes for logic. The Eckert–Mauchly Corporation manufactured the Universal Automatic Computer (UNIVAC) in 1951 and its implementation marked the real beginning of the computer era.

In the 1960s, efforts to design and develop the fastest possible computer with the greatest capacity reached a turning point with the Livermore Advanced Research Computer (LARC), which had access time of less than 1 μs (pronounced as microsecond) and the total capacity of 100,000,000 words. During this period, the major computer manufacturers began to offer a range of capabilities and prices, as well as accessories such as card feeders, page printers and cathode ray tube displays. During the 1970s, the trend shifted towards a larger range of applications for cheaper computer systems. During this period, many business organizations adopted computers for their offices. The vacuum deposition of transistors became the norm and entire computer assemblies became available on tiny “chips”.

In the 1980s, Very Large Scale Integration (VLSI) design, in which hundreds of thousands of transistors were placed on a single chip, became increasingly common. The “shrinking” trend continued with the introduction of personal computers (PCs), which are programmable machines small enough and inexpensive enough to be purchased and used by individuals. Microprocessors equipped with read-only memory (ROM), which stores constantly used and unchanging programs, performed an increased number of functions. By the late 1980s, some PCs were run by microprocessors that were capable of handling 32 bits of data at a time and processing about 4,000,000 instructions per second. By the 1990s, PCs became part of everyday life. This transformation was the result of the invention of the microprocessor, a processor on a single integrated circuit (IC) chip. The trend continued, leading to the development of smaller and smaller microprocessors with a proportionate increase in processing power. Computer technology continues to experience huge growth. Computer networking, electronic mail and electronic publishing are just a few applications that have grown in recent years. Advances in technologies continue to produce cheaper and more powerful computers, offering the promise that in the near future, computers or terminals will reside in most, if not all, homes, offices and schools.

1.3 GENERATIONS OF COMPUTERS

The history of computer development is often discussed with reference to the different generations of computing devices. In computer terminology, the word generation is described as a stage of technological development or innovation. A major technological development that fundamentally changed the way computers operate, resulting in increasingly smaller, cheaper, more powerful, and more efficient and reliable devices, characterizes each generation of computers. According to the type of “processor” installed in a machine, there are five generations of computers.

1.3.1 First Generation (1940 to 1956): Vacuum Tubes

First-generation computers were vacuum tubes/thermionic valve-based machines. These computers used vacuum tubes for circuitry and magnetic drums for memory. A magnetic drum is a metal cylinder coated with magnetic iron oxide material on which data and programs can be stored. The input was based on punched cards and paper tape, and the output was in the form of printouts (Figure 1.4).

Figure 1.4 Vacuum Tube

First-generation computers relied on a binary-coded language, also called machine language (a language of 0s and 1s), to perform operations and were able to solve only one problem at a time. Each machine was fed with different binary codes and hence was difficult to program. This resulted in a lack of versatility and speed. In addition, to run on different types of computers, instructions had to be rewritten or recompiled.

Examples: ENIAC, EDVAC and UNIVAC.

Characteristics of First-generation Computers

- These computers were based on vacuum tube technology.

- These were the fastest computing devices of their times (computation time was in milliseconds).

- These computers were very large and required a lot of space for installation.

- Since thousands of vacuum tubes were used, they generated a large amount of heat. Therefore, air conditioning was essential.

- These were non-portable and, by later standards, very slow machines.

- They lacked versatility and speed.

- They were very expensive to operate and used a large amount of electricity.

- These machines were unreliable and prone to frequent hardware failures. Hence, constant maintenance was required.

- Since machine language was used, these computers were difficult to program and use.

- Each individual component had to be assembled manually. Hence, commercial appeal of these computers was poor.

1.3.2 Second Generation (1956 to 1963): Transistors

Second-generation computers used transistors, which were superior to vacuum tubes. A transistor is made up of semiconductor material such as germanium or silicon. It usually has three leads (see Figure 1.5) and performs electrical functions such as voltage, current or power amplification with low power requirements. Since a transistor is a small device, the physical size of computers was greatly reduced. Computers became smaller, faster, cheaper, more energy efficient and more reliable than their predecessors. In second-generation computers, magnetic cores were used as the primary memory and magnetic disks as the secondary storage devices. However, they still relied on punched cards for the input and printouts for the output.

Figure 1.5 Transistor

One of the major developments of this generation includes the progress from machine language to assembly language. Assembly language uses mnemonics (abbreviations) for instructions rather than numbers, for example, ADD for addition and MULT for multiplication. As a result, programming became less cumbersome. Early high-level programming languages such as COBOL and FORTRAN also came into existence in this period.
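The relationship between mnemonics and numeric machine instructions can be sketched with a toy assembler in Python. Everything in this sketch is an assumption made for illustration: the opcode numbers, the `LOAD` mnemonic and the single-accumulator machine are hypothetical and do not correspond to any real second-generation computer. Only `ADD` and `MULT` come from the text above.

```python
# Hypothetical opcode table: the numeric codes are invented for this sketch.
OPCODES = {"LOAD": 1, "ADD": 2, "MULT": 3}

def assemble(source):
    """Translate mnemonic lines such as 'ADD 5' into (opcode, operand) pairs."""
    program = []
    for line in source.strip().splitlines():
        mnemonic, operand = line.split()
        program.append((OPCODES[mnemonic], int(operand)))
    return program

def run(program):
    """Execute the numeric program on a single accumulator."""
    accumulator = 0
    for opcode, operand in program:
        if opcode == 1:      # LOAD: place the operand in the accumulator
            accumulator = operand
        elif opcode == 2:    # ADD: add the operand to the accumulator
            accumulator += operand
        elif opcode == 3:    # MULT: multiply the accumulator by the operand
            accumulator *= operand
    return accumulator

source = """
LOAD 2
ADD 3
MULT 4
"""
machine_code = assemble(source)
print(machine_code)       # [(1, 2), (2, 3), (3, 4)]
print(run(machine_code))  # (2 + 3) * 4 = 20
```

The programmer writes the readable mnemonic form, while the machine executes only the numeric form, which is why assembly language made programming less cumbersome.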

Examples: PDP-8, IBM 1401 and IBM 7090.

Characteristics of Second-generation Computers

- These machines were based on transistor technology.

- These were smaller as compared to the first-generation computers.

- The computational time of these computers was reduced to microseconds from milliseconds.

- These were more reliable and less prone to hardware failure. Hence, they required less frequent maintenance.

- These were more portable and generated less heat.

- Assembly language was used to program computers. Hence, programming became more time-efficient and less cumbersome.

- Second-generation computers still required air conditioning.

- Manual assembly of individual components into a functioning unit was still required.

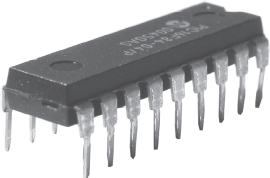

1.3.3 Third Generation (1964 to Early 1970s): Integrated Circuits

The development of the integrated circuit, also called an IC, was the defining trait of third-generation computers. An IC consists of a single chip (usually silicon) with many components such as transistors and resistors fabricated on it. ICs replaced several individually wired transistors. This development made computers smaller, more reliable and more efficient (Figure 1.6).

Figure 1.6 Integrated Circuit

Instead of punched cards and printouts, users interacted with third-generation computers through keyboards and monitors, and interfaced with the operating system. This allowed the device to run many different applications simultaneously with a central program that monitored the memory. For the first time, computers became accessible to mass audience because they were smaller and cheaper than their predecessors.

Examples: NCR 395 and B6500.

Characteristics of Third-generation Computers

- These computers were based on IC technology.

- These were able to reduce the computational time from microseconds to nanoseconds.

- These were easily portable and more reliable than the second-generation computers.

- These devices consumed less power and generated less heat. In some cases, air conditioning was still required.

- The size of these computers was smaller as compared to previous-generation computers.

- Since hardware rarely failed, the maintenance cost was quite low.

- Extensive use of high-level languages became possible.

- Manual assembling of individual components was not required, so it reduced the large requirement of labour and cost. However, highly sophisticated technologies were required for the manufacturing of IC chips.

- Commercial production became easier and cheaper.

1.3.4 Fourth Generation (Early 1970s to Till Date): Microprocessors

The fourth generation is an extension of third-generation technology. Although the technology of this generation is still based on the IC, computers have been made readily available to us because of the development of the microprocessor (circuits containing millions of transistors). The Intel 4004 chip, which was developed in 1971, took the IC one step further by locating all the components of a computer (CPU, memory and I/O controls) on a minuscule chip. A microprocessor is built on a single piece of silicon, known as a chip. It is about 0.5 cm along one side and no more than 0.05 cm thick.

The fourth-generation computers led to an era of Large Scale Integration (LSI) and VLSI technology. LSI technology allowed thousands of transistors to be constructed on one small slice of silicon material, whereas VLSI squeezed hundreds of thousands of components on to a single chip. Ultra Large Scale Integration (ULSI) increased that number to the millions. This way computers became smaller and cheaper than ever before (Figure 1.7).

Figure 1.7 Microprocessor

The fourth-generation computers became more powerful, compact, reliable and affordable. As a result, they gave rise to the PC revolution. During this period, magnetic core memories were replaced by semiconductor memories, which resulted in faster random access main memories. Moreover, secondary memories such as hard disks became more economical, physically smaller and larger in capacity. The other significant development of this era was that these computers could be linked together to form networks, which eventually led to the development of the Internet. This generation also saw the development of Graphical User Interfaces (GUIs), the mouse and handheld devices. Despite many advantages, this generation required complex and sophisticated technology for the manufacturing of the CPU and the other components.

Examples: Apple II, Altair 8800 and CRAY-1.

Characteristics of Fourth-generation Computers

- These computers are microprocessor-based systems.

- These are very small in size.

- These are the cheapest of all the generations of computers.

- These are portable and quite reliable.

- These machines generate a negligible amount of heat and hence do not require air conditioning.

- Hardware failure is negligible so minimum maintenance is required.

- The production cost is very low.

- The GUI and pointing devices enabled users to learn to use the computer quickly.

- Interconnection of computers led to better communication and resource sharing.

1.3.5 Fifth Generation (Present and Beyond): Artificial Intelligence

The dream of creating a human-like computer that would be capable of reasoning and reaching a decision through a series of “what-if-then” analyses has existed since the beginning of computer technology. Such a computer would learn from its mistakes and possess the skill of experts. These are the objectives for creating the fifth generation of computers. The starting point for the fifth generation of computers was set in the early 1990s. Fifth-generation computers are still under development. However, the expert system concept is already in use. The expert system is defined as a computer system that attempts to mimic the thought process and reasoning of experts in specific areas. Three characteristics can be identified with the fifth-generation computers. These are:

- Mega Chips: Fifth-generation computers will use Super Large Scale Integrated (SLSI) chips, which will result in the production of microprocessors having millions of electronic components on a single chip. In order to store instructions and information, fifth-generation computers require a great amount of storage capacity. Mega chips may enable the computer to approximate the memory capacity of the human mind.

- Parallel Processing: Computers with one processor access and execute only one instruction at a time. This is called serial processing. However, fifth-generation computers will use multiple processors and perform parallel processing, thereby accessing several instructions at once and working on them at the same time.

- Artificial Intelligence (AI): It refers to a series of related technologies that try to simulate and reproduce human behavior, including thinking, speaking and reasoning. AI comprises a group of related technologies: expert systems (ES), natural language processing (NLP), speech recognition, vision recognition and robotics.
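The contrast between serial and parallel processing drawn in the list above can be sketched in Python using the standard `concurrent.futures` module. The `square` function and the list of numbers are hypothetical stand-ins for independent units of work. Note that in the standard CPython interpreter, threads generally take turns executing Python code, so this sketch shows how work is divided among several workers rather than demonstrating a real speed-up.

```python
from concurrent.futures import ThreadPoolExecutor

def square(n):
    """A stand-in for one independent unit of work."""
    return n * n

numbers = [1, 2, 3, 4, 5, 6, 7, 8]

# Serial processing: one task is completed before the next one starts.
serial_results = [square(n) for n in numbers]

# Parallel-style processing: the tasks are handed to a pool of four workers.
with ThreadPoolExecutor(max_workers=4) as pool:
    parallel_results = list(pool.map(square, numbers))

print(serial_results == parallel_results)  # True: same answers, different scheduling
```

Both approaches produce the same results. The difference lies only in how many tasks can be in progress at the same time.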

1.4 CLASSIFICATION OF COMPUTERS

These days, computers are available in many sizes and types. Some computers can fit in the palm of the hand, while some can occupy the entire room. Computers also differ based on their data-processing abilities. Based on the physical size, performance and application areas, we can generally divide computers into four major categories: micro, mini, mainframe and supercomputers (Figure 1.8).

1.4.1 Microcomputers

A microcomputer is a small, low-cost digital computer, which usually consists of a microprocessor, a storage unit, an input channel and an output channel, all of which may be on one chip inserted into one or several PC boards. The addition of power supply and connecting cables, appropriate peripherals (keyboard, monitor, printer, disk drives and others), an operating system and other software programs can provide a complete microcomputer system. The microcomputer is generally the smallest of the computer family. Originally, these were designed for individual users only, but nowadays they have become powerful tools for many businesses that, when networked together, can serve more than one user. IBM-PC Pentium 100, IBM-PC Pentium 200 and Apple Macintosh are some of the examples of microcomputers. Microcomputers include desktop, laptop and hand-held models such as Personal Digital Assistants (PDAs).

Desktop Computer: The desktop computer, also known as the PC, is principally intended for stand-alone use by an individual. These are the most common type of microcomputers. These microcomputers typically consist of a system unit, a display monitor, a keyboard, internal hard disk storage and other peripheral devices. The main reason behind the importance of PCs is that they are not very expensive for individuals or small businesses. Some of the major PC manufacturers are Apple, IBM, Dell and Hewlett-Packard (Figure 1.9).

Figure 1.9 Desktop Computer

Laptop: A laptop is a portable computer that a user can carry around. Since the laptop resembles a notebook, it is also known as the notebook computer. Laptops are small computers enclosing all the basic features of a normal desktop computer. The biggest advantage of laptops is that they are lightweight and one can use them anywhere and at any time, especially when one is travelling. Moreover, they do not need an external power supply while in use, as they contain a rechargeable battery. However, they are expensive as compared to desktop computers (Figure 1.10).

Figure 1.10 Laptop

Hand-held Computers: A hand-held computer such as a PDA is a portable computer that can conveniently be stored in a pocket (of sufficient size) and used while the user is holding it. PDAs are essentially small portable computers and are slightly bigger than common calculators. A PDA user generally uses a pen or electronic stylus, instead of a keyboard, for input. As shown in Figure 1.11, the monitor is very small and is the only apparent form of output. Since these computers fit easily in the palm of the hand, they are also known as palmtop computers. Hand-held computers usually have no disk drive; rather, they use small cards to store programs and data. However, they can be connected to a printer or a disk drive to generate output or store data. They have limited memory and are less powerful as compared to desktop computers. Some examples of hand-held computers are Apple Newton, Casio Cassiopeia and Franklin eBookMan.

1.4.2 Minicomputers

In the early 1960s, Digital Equipment Corporation (DEC) started shipping its PDP series computer, which the press described and referred to as minicomputers. A minicomputer is a small digital computer, which normally is able to process and store less data than a mainframe but more than a microcomputer, while doing so less rapidly than a mainframe but more rapidly than a microcomputer. It is about the size of a two-drawer filing cabinet. Generally, these computers are used as desktop devices that are often connected to a mainframe in order to perform the auxiliary operations (Figure 1.12).

Figure 1.12 Minicomputer

A minicomputer (sometimes called a mid-range computer) is designed to meet the computing needs of several people simultaneously in a small- to medium-sized business environment. It is capable of supporting from four to about 200 simultaneous users. It serves as a centralized storehouse for a cluster of workstations or as a network server. Minicomputers are usually multi-user systems so these are used in interactive applications in industries, research organizations, colleges and universities. They are also used for real-time controls and engineering design work. Some of the widely used minicomputers are PDP 11, IBM (8000 series) and VAX 7500.

1.4.3 Mainframes

A mainframe is an ultra-high performance computer made for high-volume, processor-intensive computing. It consists of a high-end computer processor, with related peripheral devices, capable of supporting large volumes of data processing, high-performance online transaction processing, and extensive data storage and retrieval. Normally, it is able to process and store more data than a minicomputer and far more than a microcomputer. Moreover, it is designed to perform at a faster rate than a minicomputer and at an even faster rate than a microcomputer. Mainframes are the second largest (in capability and size) of the computer family, the largest being the supercomputers. However, mainframes can usually execute many programs simultaneously at a high speed, whereas supercomputers are designed for a single process (Figure 1.13).

Figure 1.13 Mainframe

The mainframe allows its users to maintain a large amount of data storage at a centralized location and to access and process these data from different computers located at different locations. It is typically used by large businesses and for scientific purposes. Some examples of the mainframe are IBM's ES000, VAX 8000 and CDC 6600.

1.4.4 Supercomputers

Supercomputers are special-purpose machines designed to maximize the number of floating point operations per second (FLOPS). Any computer below one gigaflops is not considered a supercomputer. A supercomputer has the highest processing speed at a given time for solving scientific and engineering problems. Essentially, it contains a number of CPUs that operate in parallel to make it faster. Its processing speed lies in the range 400–10,000 MFLOPS (millions of floating point operations per second). Due to this feature, supercomputers help in many applications including information retrieval and computer-aided designing (Figure 1.14).
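To give a feel for what the FLOPS figures above mean in practice, the following Python sketch estimates how long a machine would need for a fixed amount of work at the two ends of the quoted 400–10,000 MFLOPS range. The workload of one trillion floating point operations is a hypothetical figure chosen for illustration, and the estimate ignores memory access and every other real-world overhead.

```python
MFLOPS = 10**6   # one million floating point operations per second
GFLOPS = 10**9   # one billion floating point operations per second

def seconds_to_complete(operations, rate_flops):
    """Idealized running time: total operations divided by operations per second."""
    return operations / rate_flops

workload = 10**12  # hypothetical job: one trillion floating point operations

slow = seconds_to_complete(workload, 400 * MFLOPS)     # 2500.0 seconds
fast = seconds_to_complete(workload, 10_000 * MFLOPS)  # 100.0 seconds

print(slow, fast)
print(10_000 * MFLOPS == 10 * GFLOPS)  # True: 10,000 MFLOPS equals 10 GFLOPS
```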

Figure 1.14 Supercomputer

A supercomputer can process a great deal of data and make extensive calculations very quickly. It can solve complex mathematical equations in a few hours that would take many years with paper and pencil or a hand calculator. It is the fastest, costliest and most powerful computer available today. Typically, supercomputers are used to solve multivariate mathematical problems of real physical processes, such as aerodynamics, meteorology and plasma physics. They are also required by military strategists to simulate defence scenarios. Cinematic specialists use them to produce sophisticated movie animations. Scientists build complex models and simulate them on a supercomputer. However, a supercomputer has limited broad-spectrum use because of its price and limited market. The largest commercial uses of supercomputers are in the entertainment/advertising industry. CRAY-3, Cyber 205 and PARAM are some well-known supercomputers.
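To get a feel for the FLOPS figures quoted above, the short Python sketch below estimates how long a fixed amount of arithmetic would take at different processing speeds. The workload of one trillion operations and the function name `seconds_needed` are assumptions chosen for illustration only; real programs rarely reach a machine's peak rate.

```python
# Unit prefixes for floating point operations per second (FLOPS).
MFLOPS = 10**6    # million operations per second
GFLOPS = 10**9    # billion operations per second
TFLOPS = 10**12   # trillion operations per second


def seconds_needed(operations, rate_flops):
    """Return the time in seconds to perform `operations` at `rate_flops`."""
    return operations / rate_flops


# Hypothetical workload: one trillion floating point operations.
workload = 10**12

rates = [
    ("400 MFLOPS", 400 * MFLOPS),
    ("1 GFLOPS", 1 * GFLOPS),
    ("10,000 MFLOPS", 10_000 * MFLOPS),
    ("1 TFLOPS", 1 * TFLOPS),
]

for label, rate in rates:
    print(f"{label:>14}: {seconds_needed(workload, rate):8.1f} s")
```

Under these assumptions, the same job that needs 2,500 seconds at 400 MFLOPS needs 100 seconds at 10,000 MFLOPS and about one second at 1 teraflops.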

FACT FILE

India's Super Achievement

In 2003, India developed the PARAM Padma supercomputer, which marks an important step towards high-performance computing. The PARAM Padma computer was developed by India's Centre for Development of Advanced Computing (C-DAC) and promises processing speeds of up to 1 teraflops (1 trillion floating point operations per second).

1.5 THE COMPUTER SYSTEM

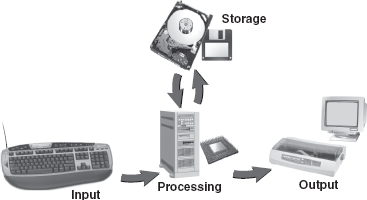

A computer can be viewed as a system, which consists of a number of interrelated components that work together with the aim of converting data into information. In a computer system, processing is carried out electronically, usually with little or no intervention from the user.

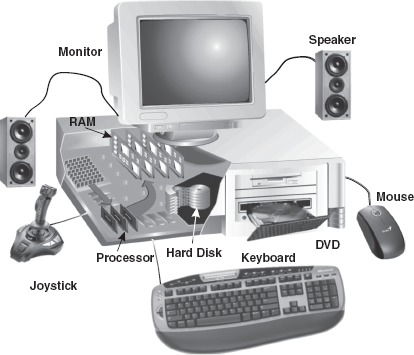

The general perception of people regarding the computer is that it is an “intelligent thinking machine”. However, this is not true. Every computer needs to be instructed exactly what to do and how to do it. The instructions given to computers are called programs. Without programs, computers would be useless. The physical parts that make up a computer (the CPU, input, output and storage unit) are known as hardware. Any hardware device connected to the computer or any part of the computer outside the CPU and working memory is known as a peripheral. Some examples of peripherals are keyboards, mice and monitors.

1.5.1 Components of a Computer System

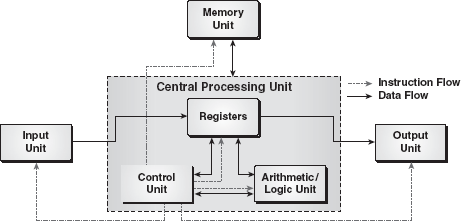

There are several computer systems in the market with a wide variety of makes, models and peripherals. In general, a computer system comprises the following components:

- CPU: This unit performs processing of instructions and data inside the computer.

- Input Unit: This unit accepts instructions and data.

- Output Unit: This unit communicates the results to the user.

- Storage Unit: This unit stores temporary and final results (Figure 1.15).

Figure 1.15 Components of a Computer System

Central Processing Unit: The CPU, also known as a processor, is the brain of the computer system that processes data (input) and converts it into meaningful information (output). It is referred to as the administrative section of the computer system that interprets the data and instructions, coordinates the operations, and supervises the instructions. The CPU works with data in discrete form, that is, either 1 or 0. It counts, lists, compares and rearranges the binary digits of data in accordance with the detailed program instructions stored within the memory. Eventually, the results of these operations are translated into characters, numbers and symbols that can be understood by the user. The CPU itself has three parts:

- Arithmetic Logic Unit (ALU): This unit performs the arithmetic (add, subtract) and logical operations (and, or) on the data made available to it. Whenever an arithmetic or logical operation is to be performed, the required data are transferred from the memory unit to the ALU, the operation is performed and the result is returned to the memory unit. Before the completion of the processing, data may need to be transferred back and forth several times between these two sections.

- Control Unit: This unit checks the correctness of the sequence of operations. It fetches the program instructions from the memory unit, interprets them and ensures correct execution of the program. It also controls the I/O devices and directs the overall functioning of the other units of the computer.

- Registers: These are the special-purpose, high-speed temporary memory units that can hold varied information such as data, instructions, addresses and intermediate results of calculations. Essentially, they hold the information that the CPU is currently working on. The registers can be considered as the CPU's working memory, an additional storage location that provides the advantage of speed.

Note: The circuits necessary to create a CPU for a PC are fabricated on a microprocessor.
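The division of work among the ALU, the control unit and the registers can be sketched with a toy model in Python. This is a simplified illustration, not a description of any real processor: the instruction names (`LOAD`, `STORE`, `ADD`, `AND`, `HALT`), the two registers `R0` and `R1`, and the dictionary used as memory are all assumptions made for the example.

```python
def alu(op, a, b):
    """ALU: arithmetic (add, subtract) and logical (and, or) operations."""
    if op == "ADD":
        return a + b
    if op == "SUB":
        return a - b
    if op == "AND":
        return a & b
    if op == "OR":
        return a | b
    raise ValueError(f"unknown operation: {op}")


def run(program, memory):
    """Control unit: fetch each instruction, interpret it and direct its execution."""
    registers = {"R0": 0, "R1": 0}        # fast temporary storage inside the CPU
    pc = 0                                # address of the next instruction
    while pc < len(program):
        opcode, *operands = program[pc]   # fetch and decode
        pc += 1
        if opcode == "LOAD":              # memory -> register
            reg, addr = operands
            registers[reg] = memory[addr]
        elif opcode == "STORE":           # register -> memory
            reg, addr = operands
            memory[addr] = registers[reg]
        elif opcode == "HALT":
            break
        else:                             # arithmetic/logic work goes to the ALU
            dst, src = operands
            registers[dst] = alu(opcode, registers[dst], registers[src])
    return memory


memory = {"x": 6, "y": 3, "sum": 0, "both": 0}
program = [
    ("LOAD", "R0", "x"),
    ("LOAD", "R1", "y"),
    ("ADD", "R0", "R1"),      # R0 = 6 + 3
    ("STORE", "R0", "sum"),
    ("LOAD", "R0", "x"),
    ("AND", "R0", "R1"),      # R0 = 6 & 3 (bitwise and)
    ("STORE", "R0", "both"),
    ("HALT",),
]
result = run(program, memory)
print(result["sum"], result["both"])   # prints: 9 2
```

Note how the data move from memory into the registers, through the ALU and back to memory, which mirrors the back-and-forth transfer described for the ALU above.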

Input, Output and Storage Unit: The user must enter instructions and data into the computer system before any operation can be performed on the given data. Similarly, after processing the data, the information must go out from the computer system to the user. For this, every computer system incorporates the I/O unit that serves as a communication medium between the computer system and the user.

An input unit accepts instructions and data from the user with the help of input devices such as a keyboard, mouse or light pen. Since the data and instructions entered through different input devices will be in different forms, the input unit converts them into the form that the computer can understand. After this, the input unit supplies the converted instructions and data to the computer for further processing.

The output unit performs the opposite function to that of the input unit. It accepts the output (which is in machine-coded form) produced by the computer, converts it into a user-understandable form and supplies the converted results to the user with the help of an output device such as a printer, monitor or plotter.
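The conversions performed by the input and output units can be illustrated with a small Python sketch. It assumes, purely for illustration, that each typed character is represented by its 8-bit ASCII code; the function names `to_machine_form` and `to_readable_form` are hypothetical.

```python
def to_machine_form(text):
    """Input side: turn each character into an 8-bit binary string."""
    return [format(byte, "08b") for byte in text.encode("ascii")]


def to_readable_form(bits):
    """Output side: turn 8-bit binary strings back into characters."""
    return bytes(int(b, 2) for b in bits).decode("ascii")


typed = "Hi"
encoded = to_machine_form(typed)
print(encoded)                    # ['01001000', '01101001']
print(to_readable_form(encoded))  # Hi
```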

Besides, a computer system incorporates a storage unit to store the input entered through the input unit before processing starts and to store the results produced by the computer before supplying them to the output unit. The storage unit of a computer comprises two types of memory/storage: primary and secondary. The primary memory, also called the main memory, is the part of a computer that holds the instructions and data currently being processed by the CPU, the intermediate results produced during the course of calculations and the recently processed data. While the instructions and data remain in the main memory, the CPU can access them directly and quickly. However, the primary memory is quite expensive and has a limited storage capacity.

Due to the limited size of the primary memory, a computer employs the secondary memory, which is extensively used for storing data and instructions. It supplies the stored information to the other units of the computer as and when required. It is less expensive and has higher storage capacity than the primary memory. Some commonly used secondary storage devices are floppy disks, hard disks and tape drives (Figure 1.16).
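The difference between the two kinds of storage can be seen in a short Python sketch. Here an ordinary variable stands in for data held in primary memory, and a file in the system's temporary directory stands in for secondary storage; the file name `total_marks.txt` and the sample marks are assumptions made for the example.

```python
import os
import tempfile

# Primary memory: an ordinary variable lives in main memory while the program runs.
marks = [72, 85, 91]
total = sum(marks)             # the CPU works on these values directly

# Secondary memory: write the result to a file so that it outlives the variable.
path = os.path.join(tempfile.gettempdir(), "total_marks.txt")  # hypothetical file name
with open(path, "w") as f:
    f.write(str(total))

del marks, total               # the values held in main memory are gone

# Later, the stored result is read back from secondary storage.
with open(path) as f:
    restored = int(f.read())
print(restored)                # prints: 248

os.remove(path)                # clean up the example file
```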

1.5.2 How Does a Computer Work?

A computer performs three basic steps to complete any task: input, processing and output. A task is assigned to a computer in a set of step-by-step instructions, which is known as a program. These instructions tell the computer what to do with the input in order to produce the required output. A computer functions in the following manner:

| Step | Description |
| --- | --- |
| Step 1 | The computer accepts the input. The computer input is whatever is entered or fed into a computer system. The input can be supplied by the user (such as by using a keyboard) or by another computer or device (such as a diskette or CD-ROM). Some examples of input include the words and symbols in a document, numbers for a calculation, instructions for completing a process, and so on. |
| Step 2 | The computer processes the data. During this stage, the computer follows the instructions using the data that have been input. Examples of processing include calculations, sorting lists of words or numbers and modifying documents according to user instructions. |
| Step 3 | The computer produces output. Computer output is the information that has been produced by a computer. Some examples of computer output include reports, documents and graphs. Output can be in several formats, such as printouts, or displayed on the screen (Figure 1.17). |
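The three steps can be sketched as a tiny Python program. This is only an illustration: a fixed list of words stands in for data typed at a keyboard, sorting is the processing step (one of the examples mentioned above), and the function names are hypothetical.

```python
def accept_input():
    """Step 1: input. A fixed list stands in for data typed at a keyboard."""
    return ["pear", "apple", "mango"]


def process(words):
    """Step 2: processing. Sort the words alphabetically."""
    return sorted(words)


def produce_output(words):
    """Step 3: output. Format the result as a short numbered report."""
    return "\n".join(f"{i}. {w}" for i, w in enumerate(words, start=1))


report = produce_output(process(accept_input()))
print(report)
```

Running the program prints the three words in alphabetical order as a numbered list, which is the output produced from the given input by following the program's instructions.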

1.6 APPLICATIONS OF COMPUTERS

In the last few decades, computer technology has revolutionized business and other aspects of human life all over the world. Practically every company, large or small, is now directly or indirectly dependent on computers for data processing. Computer systems also help in the efficient operation of railway and airway reservation, hospital records, accounts, electronic banking and so on. Computers not only save time, but also reduce paperwork. Some of the areas where computers are being used are listed below.

- Science: Scientists have been using computers to develop theories and to analyse and test data. The high speed and accuracy of the computer allow different scientific analyses to be carried out. Computers can be used to generate detailed studies of how earthquakes affect buildings or how pollution affects weather patterns. Satellite-based applications would not have been possible without the use of computers. It would also not be possible to get information about our solar system and the cosmos without computers.

- Education: Computers have also revolutionized the whole process of education. Currently, the classrooms, libraries and museums are utilizing computers to make the education much more interesting. Unlike recorded television shows, computer-aided education (CAE) and computer-based training (CBT) packages are making learning much more interactive.

- Medicine and Healthcare: There has been an increasing use of computers in the field of medicine. Now, doctors are using computers right from diagnosing the illness to monitoring a patient's status during complex surgery. By using automated imaging techniques (such as CAT scans or MRI scans), doctors are able to look inside a person's body and study each organ in detail, which was not possible a few years ago. There are several examples of special-purpose computers that can operate within the human body, such as a cochlear implant, a special kind of hearing aid that makes it possible for deaf people to hear.

- Engineering/Architecture/Manufacturing: Architects and engineers are extensively using computers in designing and drawing. Computers can create objects that can be viewed in all three dimensions. By using techniques like virtual reality, architects can explore houses that have been designed but not built. Manufacturing factories are using computerized robotic arms to perform hazardous jobs. Besides, computer-aided manufacturing (CAM) can be used in designing the product, ordering the parts and planning production. Thus, computers help in coordinating the entire manufacturing process.

- Entertainment: Computers are finding greater use in the entertainment industry. They are used to control the images and sounds. The special effects, which mesmerize the audience, would not have been possible without the computers. In addition, computerized animation and colourful graphics have modernized the film industry.

- Communication: E-mail or electronic mail is one of the communication media in which computers are used. Through e-mail, messages and reports are passed from one person to one or more persons with the aid of computers and telephone lines. The advantage of this service is that it saves time, avoids wastage of paper, and so on. Moreover, the person receiving a message can read it at any convenient time and can save it, reply to it, forward it or delete it from the computer.

- Business Application: This is one of the important uses of the computer. Initially, computers were used for batch processing jobs, where one does not require an immediate response from the computer. Currently, computers are mainly used for real-time applications (like at the sales counter) that require an immediate response from the computer. Businesses use computers for various purposes, such as business forecasting, preparing pay bills and personal records, banking operations and data storage, various types of life insurance business, and as an aid to management. Businesses are also using the networking of computers, where a number of computers are connected together to share data and information. The use of e-mail and the Internet has changed the ways of doing business.

- Publishing: Computers have created a field known as Desktop Publishing (DTP). In DTP, with the help of a computer and a laser printer one can perform the publishing job all by oneself. Many of the tasks requiring long manual hours, such as making a table of contents and an index, can be automatically performed using the computers and DTP software.

- Banking: In the field of banking and finance, computers are extensively used. People can use the Automated Teller Machine (ATM) services 24 hours a day in order to deposit and withdraw cash. When the different branches of the bank are connected through the computer networks, the inter-branch transactions, such as drawing cheques and making drafts, can be performed by the computers without any delay (Figure 1.18).

Let Us Summarize

- A computer is an electronic device that performs diverse operations with the help of instructions to process the data in order to achieve desired results. Speed, accuracy, reliability, versatility, diligence, storage capability and resource sharing characterize computers.

- Many devices, which humans developed for their computing requirements, preceded computers. Some of those devices were Sand Tables, Abacus, Napier Bones, Slide Rule, Pascaline, Stepped Reckoner, Difference Engine, Analytical Engine and Hollerith's Tabulator.

- Computer development is divided into five main generations. With every generation, computer technology has fundamentally changed, resulting in increasingly smaller, cheaper, more powerful, more efficient and more reliable devices.

- First-generation computers were vacuum tube based machines. These computers were very large, required a lot of space for installation, generated a large amount of heat, were non-portable and had very slow equipment. In addition, these machines were unreliable and prone to frequent hardware failures.

- Second-generation computers used transistors in place of vacuum tubes. Since a transistor is a small device, the physical size of computers was greatly reduced. Computers became smaller, faster, cheaper, energy-efficient and more reliable than their predecessors.

- Third-generation computers were IC-based machines. The IC replaced several individually wired transistors, making computers smaller, more reliable and more efficient.

- Fourth-generation computers use microprocessors (circuits containing millions of transistors) as their basic processing device. These computers are the most powerful, compact, reliable and affordable as compared to their predecessors.

- Fifth-generation computers are still in the development stage. These computers will use megachips, which will result in the production of microprocessors having millions of electronic components on a single chip. They will use intelligent programming (AI) and knowledge-based problem-solving techniques.

- A microcomputer is a small, low-cost digital computer, which usually consists of a microprocessor, a storage unit, and an input and output channel, all of which may be on one chip inserted into one or several PC boards. Microcomputers include desktop, laptop and hand-held models, such as PDAs.

- A minicomputer is a small digital computer, which normally is able to process and store less data than a mainframe but more than a microcomputer, while doing so less rapidly than a mainframe but more rapidly than a microcomputer.

- A mainframe is an ultrahigh performance computer made for high-volume, processor-intensive computing. It is capable of supporting large volumes of data processing, high-performance online transaction processing, and extensive data storage and retrieval.

- Supercomputers are special-purpose machines that are specifically designed to maximize the number of FLOPS. Any computer that performs below one gigaflops is not considered a supercomputer.

- A computer can be viewed as a system that comprises several units (CPU, input unit, output unit and storage unit). These individual units work together to convert data into information.

- The CPU interprets, coordinates the operations and supervises the instructions. It has three parts: ALU, CU and registers. The ALU performs arithmetic (add, subtract) and logical operations (and, or) on the stored numbers. The CU checks the correctness of the sequence of operations, controls the I/O devices and directs the overall functioning of the other units of the computer. The registers are special-purpose, high-speed temporary memory units that can hold varied information such as data, instructions, addresses and intermediate results of calculations.

- The input unit involves the receipt of data or instructions from the user, in a computer acceptable form. The computer takes in the data through input devices like keyboard, mouse, light pen, etc.

- The output unit supplies the resulting data obtained from the data processing to the user. Monitors, printers and plotters are some of the examples of output devices.

- During processing, the intermediate and final results are held by the storage unit until the manipulation of the data is completed. When the data to be processed or the results produced by the processing are in large volumes, they are stored on various storage media like floppies, hard disks and tapes.

- A computer performs three basic steps to complete any task: input, processing and output. A computer receives data as input, processes it, stores it and then produces output.

- Computers have entered every sphere of human life and found applications in various fields, such as medicine and healthcare, business, education, science, technology, government, entertainment, engineering and architecture.

Exercises

Fill in the Blanks

- The basic component of first-generation computers was ..................

- The speed of a computer is calculated in ..................

- Third-generation computers were .................. based machines.

- Keyboard is an .................. device.

- Laptops are also known as ..................

- Computers can be classified as .................., .................., .................. and .................. computers.

- A computer performs three basic steps to complete any task, which are .................., .................. and ..................

- PDA stands for ...................................................

- The CPU consists of .................., .................. and ..................

- Physical components on which the data are stored permanently are called ..................

Multiple-choice Questions

- The development of computers can be divided into ............ generations.

  - (a) 3
  - (b) 4
  - (c) 5
  - (d) 6

- Choose the odd one out.

  - (a) Microcomputer
  - (b) Minicomputer
  - (c) Supercomputer
  - (d) Digital computer

- .................. is a very small computer that can be held in the palm of the hand.

  - (a) PDA
  - (b) PC
  - (c) Laptop
  - (d) Minicomputer

- Analytical engine was developed by ..................

  - (a) Gottfried Wilhelm von Leibniz
  - (b) Charles Babbage
  - (c) Herman Hollerith
  - (d) Joseph-Marie Jacquard

- The main distinguishing feature of fifth-generation computers will be ..................

  - (a) Liberal use of microprocessors
  - (b) Artificial Intelligence
  - (c) Extremely low cost
  - (d) Versatility

- The computer that is not considered as a portable computer is ..................

  - (a) Laptop
  - (b) PDA
  - (c) Minicomputer
  - (d) None of these

- The CPU stands for ..................

  - (a) Central protection unit
  - (b) Central processing unit
  - (c) Central power unit
  - (d) Central prerogative unit

- UNIVAC is an example of ..................

  - (a) First-generation computer
  - (b) Second-generation computer
  - (c) Third-generation computer
  - (d) Fourth-generation computer

- The unit that performs the arithmetic and logical operations on the stored numbers is known as ..................

  - (a) Arithmetic logic unit
  - (b) Control unit
  - (c) Memory unit
  - (d) Both (a) and (b)

- The .................. is the “administrative” section of the computer system.

  - (a) Input unit
  - (b) Output unit
  - (c) Memory unit
  - (d) Central processing unit

State True or False

- The ALU is responsible for performing the arithmetic and logical operations.

- Microcomputers are more powerful than minicomputers.

- Laptop is also known as a notebook.

- Vacuum tubes were a part of third-generation computers.

- EDVAC was a second-generation computer.

- LSI and VLSI technology are part of fifth-generation computers.

- A laptop is a portable computer.

- Primary memory and main memory are synonyms.

- Computer development is divided into four main generations.

- PARAM is an example of a portable computer.

Descriptive Questions

- Discuss the characteristics of computers.

- What are the advantages of transistors over vacuum tubes?

- Discuss various types of computers in detail.

- List out various applications of computers.

- Discuss various computer generations along with the key characteristics of the computers of each generation.

- Discuss the basic organization of a computer system and explain the functions of various units of a computer system.

ANSWERS

Fill in the Blanks

- Vacuum tube

- Megahertz

- Integrated circuits

- Input

- Notebook computers

- Micro, Mini, Mainframe, Super

- Input, Processing, Output

- Personal digital assistant

- ALU, CU, registers

- Secondary storage devices

Multiple-choice Questions

- (c)

- (d)

- (a)

- (b)

- (b)

- (c)

- (b)

- (a)

- (a)

- (d)

State True or False

- True

- False

- True

- False

- False

- False

- True

- True

- False

- False