As you did earlier, you should grab the code from https://github.com/mlwithtf/MLwithTF/.

We will be focusing on the chapter_05 subfolder that has the following three files:

- data_utils.py

- translate.py

- seq2seq_model.py

The first file handles our data, so let's start with that. The prepare_wmt_dataset function handles that. It is fairly similar to how we grabbed image datasets in the past, except now we're grabbing two data subsets:

- giga-fren.release2.fr.gz

- giga-fren.release2.en.gz

Of course, these are the two languages we want to focus on. The beauty of our soon-to-be-built translator will be that the approach is entirely generalizable, so we can just as easily create a translator for, say, German or Spanish.

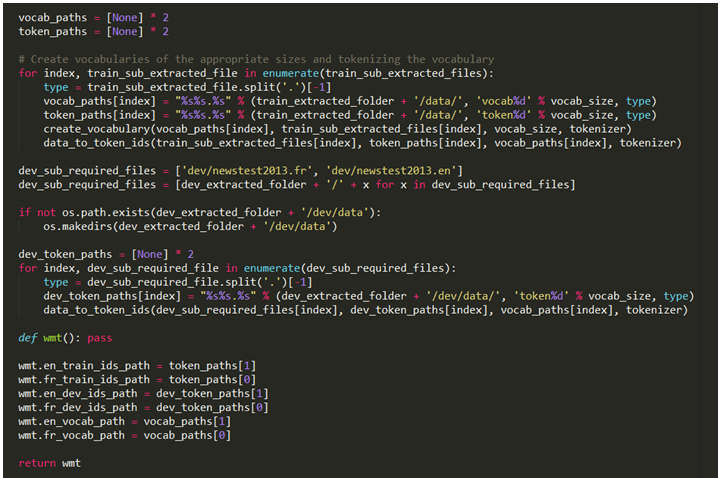

The following screenshot is the specific subset of code:

Next, we will run through the two files of interest from earlier line by line and do two things—create vocabularies and tokenize the individual words. These are done with the create_vocabulary and data_to_token_ids functions, which we will get to in a moment. For now, let's observe how to create the vocabulary and tokenize on our massive training set as well as a small development set, newstest2013.fr and dev/newstest2013.en:

We created a vocabulary earlier using the following create_vocabulary function. We will start with an empty vocabulary map, vocab = {}, and run through each line of the data file and for each line, create a bucket of words using a basic tokenizer. (Warning: this is not to be confused with the more important token in the following ID function.)

If we encounter a word we already have in our vocabulary, we will increment it as follows:

vocab[word] += 1

Otherwise, we will initialize the count for that word, as follows:

vocab[word] += 1

We will keep doing this until we run out of lines on our training dataset. Next, we will sort our vocabulary by order of frequency using sorted(vocab, key=vocab.get, and reverse=True).

This is important because we won't keep every single word, we'll only keep the k most frequent words, where k is the vocabulary size we defined (we had defined this to 40,000 but you can choose different values and see how the results are affected):

While working with sentences and vocabularies is intuitive, this will need to get more abstract at this point—we'll temporarily translate each vocabulary word we've learned into a simple integer. We will do this line by line using the sequence_to_token_ids function:

We will apply this approach to the entire data file using the data_to_token_ids function, which reads our training file, iterates line by line, and runs the sequence_to_token_ids function, which then uses our vocabulary listing to translate individual words in each sentence to integers:

Where does this leave us? With two datasets of just numbers. We have just temporarily translated our English to French problem to a numbers to numbers problem with two sequences of sentences consisting of numbers mapping to vocabulary words.

If we start with ["Brooklyn", "has", "lovely", "homes"] and generate a {"Brooklyn": 1, "has": 3, "lovely": 8, "homes": 17"} vocabulary, we will end up with [1, 3, 8, 17].

What does the output look like? The following typical file downloads:

ubuntu@ubuntu-PC:~/github/mlwithtf/chapter_05$: python translate.py

Attempting to download http://www.statmt.org/wmt10/training-giga-

fren.tar

File output path:

/home/ubuntu/github/mlwithtf/datasets/WMT/training-giga-fren.tar

Expected size: 2595102720

File already downloaded completely!

Attempting to download http://www.statmt.org/wmt15/dev-v2.tgz

File output path: /home/ubuntu/github/mlwithtf/datasets/WMT/dev-

v2.tgz

Expected size: 21393583

File already downloaded completely!

/home/ubuntu/github/mlwithtf/datasets/WMT/training-giga-fren.tar

already extracted to

/home/ubuntu/github/mlwithtf/datasets/WMT/train

Started extracting /home/ubuntu/github/mlwithtf/datasets/WMT/dev-

v2.tgz to /home/ubuntu/github/mlwithtf/datasets/WMT

Finished extracting /home/ubuntu/github/mlwithtf/datasets/WMT/dev-

v2.tgz to /home/ubuntu/github/mlwithtf/datasets/WMT

Started extracting

/home/ubuntu/github/mlwithtf/datasets/WMT/train/giga-

fren.release2.fixed.fr.gz to

/home/ubuntu/github/mlwithtf/datasets/WMT/train/data/giga-

fren.release2.fixed.fr

Finished extracting

/home/ubuntu/github/mlwithtf/datasets/WMT/train/giga-

fren.release2.fixed.fr.gz to

/home/ubuntu/github/mlwithtf/datasets/WMT/train/data/giga-

fren.release2.fixed.fr

Started extracting

/home/ubuntu/github/mlwithtf/datasets/WMT/train/giga-

fren.release2.fixed.en.gz to

/home/ubuntu/github/mlwithtf/datasets/WMT/train/data/giga-

fren.release2.fixed.en

Finished extracting

/home/ubuntu/github/mlwithtf/datasets/WMT/train/giga-

fren.release2.fixed.en.gz to

/home/ubuntu/github/mlwithtf/datasets/WMT/train/data/giga-

fren.release2.fixed.en

Creating vocabulary

/home/ubuntu/github/mlwithtf/datasets/WMT/train/data/vocab40000.fr

from

data /home/ubuntu/github/mlwithtf/datasets/WMT/train/data/giga-

fren.release2.fixed.fr

processing line 100000

processing line 200000

processing line 300000

...

processing line 22300000

processing line 22400000

processing line 22500000

Tokenizing data in

/home/ubuntu/github/mlwithtf/datasets/WMT/train/data/giga-

fren.release2.fr

tokenizing line 100000

tokenizing line 200000

tokenizing line 300000

...

tokenizing line 22400000

tokenizing line 22500000

Creating vocabulary

/home/ubuntu/github/mlwithtf/datasets/WMT/train/data/vocab

40000.en from data

/home/ubuntu/github/mlwithtf/datasets/WMT/train/data/giga-

fren.release2.en

processing line 100000

processing line 200000

...

I won't repeat the English section of the dataset processing as it is exactly the same. We will read the gigantic file line by line, create a vocabulary, and tokenize the words line by line for each of the two language files.