Computers are now woven into nearly every part of modern society, and almost everyone has some link with a computer or computer network. Personal, financial, and government-related data, or even an account at an Internet shop, is stored on highly advanced computer systems. These systems must serve millions of people without running out of resources or slowing down, and much of their data must be available at any time and from any place. Downtime and poorly performing systems are not an option in a 24-hour economy.

So, what could fulfill all these needs? You have probably guessed the answer already: clustering.

Your company, FinanceFiction, also has to keep all of its customers satisfied, which is why you have introduced Oracle WebLogic Server's clustering solution.

An Oracle WebLogic server cluster consists of one or more Oracle WebLogic Managed Server instances running simultaneously and working together to provide increased scalability and reliability. A cluster appears to clients as one Oracle WebLogic Server instance. The server instances that constitute a cluster can run on one machine or on different machines.

By replicating the services provided by one instance, an enterprise system achieves a fail-safe and scalable environment. It is good practice to set all the servers in a cluster to provide the same services.

You can increase a cluster's capacity by adding server instances to the cluster on an existing machine, or by adding machines to the cluster to host the incremental server instances.

The clustering support for different types of applications is as follows:

- For Web applications, the cluster architecture enables replicating the HTTP session state of clients. You can balance the Web application load across a cluster by using an Oracle WebLogic server proxy plug-in or an external load-balancer.

- For Enterprise JavaBeans (EJBs) and Remote Method Invocation (RMI) objects, clustering uses the object's replica-aware stub. When a client makes a call through a replica-aware stub to a service that has failed, the stub detects the failure and retries the call on another replica.

- For JMS applications, clustering supports cluster-wide transparent access to destinations from any member of the cluster.
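The failover behavior of a replica-aware stub described above can be illustrated with a small Python sketch. This is a toy model, not WebLogic's implementation: the class name `ReplicaAwareStub`, the two server functions, and the use of `ConnectionError` to signal a failed replica are all assumptions made for illustration. It also ignores the question of whether a retry is safe for a given method.

```python
class ReplicaAwareStub:
    """Toy stand-in for a replica-aware stub: it knows every replica of a service."""

    def __init__(self, replicas):
        # replicas: list of callables, one per server instance hosting the service
        self.replicas = list(replicas)

    def call(self, *args):
        last_error = None
        for replica in self.replicas:
            try:
                return replica(*args)
            except ConnectionError as err:
                # This replica is unreachable; retry the call on the next one.
                last_error = err
        raise ConnectionError("all replicas failed") from last_error


def failed_server(amount):
    # Hypothetical replica on a server that has gone down.
    raise ConnectionError("app01 is down")


def healthy_server(amount):
    # Hypothetical replica on a server that is still running.
    return "app02 processed %d" % amount


stub = ReplicaAwareStub([failed_server, healthy_server])
result = stub.call(100)
print(result)
```

The client only ever talks to `stub.call`; which replica actually served the request stays hidden, which is the point of the stub.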

A WebLogic Server cluster provides the following benefits:

- Scalability: The capacity of a cluster is not limited to one server or one machine. Servers can be added to the cluster dynamically to increase capacity. If more hardware is needed, a new server instance can be added on a new host.

- Load balancing: Jobs and their associated communications can be distributed evenly across the computing and networking resources in your environment. Algorithms that give an even distribution include round-robin and random.

- Failover ability of applications: Distribution of applications and their objects on multiple servers enables easier failover of the applications.

- Availability: A cluster uses the redundancy of multiple servers to insulate clients from failures. The same service can be provided on multiple servers in a cluster. If one server fails, another can take over. The capability to fail over from a failed server to a functioning server increases the availability of the application to clients.

Together, these benefits make Oracle WebLogic a redundant, scalable, and reliable system to host applications and their resources.

Clustering serves all the earlier mentioned capabilities for the following components that run in a WebLogic server environment:

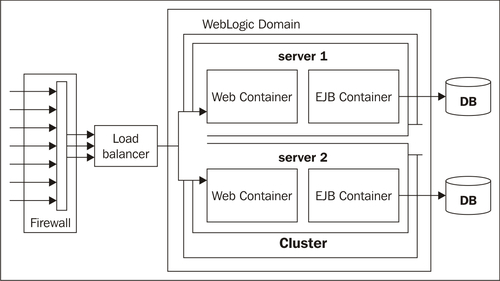

The basic cluster architecture combines all Web application tiers and puts the related services (static HTTP, presentation logic, and objects) into one cluster.

This architecture has the following advantages:

- Single point of administration: Because one cluster hosts static HTTP pages, servlets, and EJBs, you can configure the entire Web application and deploy or undeploy objects using one administration console. You need not maintain a separate layer of Web servers (and configure Oracle WebLogic proxy plug-ins) to benefit from clustered servlets.

- Load balancing: Using load-balancing hardware directly in front of the Oracle WebLogic cluster enables you to use advanced load-balancing policies for access to both HTML and servlet content.

- Better security: Putting a firewall in front of your load-balancing hardware enables you to set up a demilitarized zone (DMZ) for your web application using minimal firewall policies.

- Better performance: The combined-tier architecture gives the best performance for applications in which most or all the servlets or JSPs in the presentation layer typically access objects in the object layer, such as EJBs or JDBC objects.

Look at the following diagram to see this type of architecture:

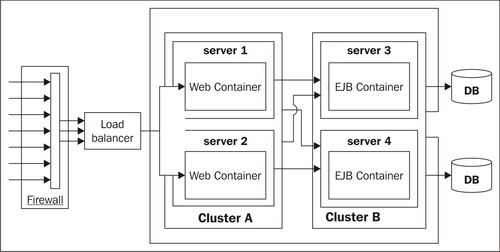

- Multi-Tier Architecture: The application layer is deployed on two clusters: one WebLogic server cluster for the Web layer and presentation tier, and another WebLogic server cluster for the object layer (EJB, RMI).

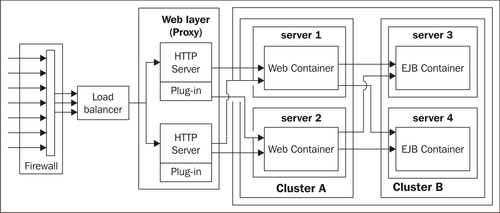

- Basic Cluster Proxy Architecture: This is similar to the basic cluster architecture, except that static content is hosted on HTTP servers. The two-tier proxy architecture has two layers: the Web layer and the Servlet/Object layer.

The proxy architecture uses a layer of hardware and software that is dedicated to the task of providing the application's Web layer.

The physical layer of the Web servers should only provide static Web pages. Dynamic content such as servlets and JSPs is proxied via the proxy plug-in or HttpClusterServlet to an Oracle WebLogic server cluster that hosts servlets and JSPs for the presentation tier.

The basic cluster proxy architecture hosts the presentation and object tiers on a cluster of Oracle WebLogic server instances. This cluster can be deployed either on a single machine or on multiple separate machines. The Servlet/Object layer differs from the combined-tier cluster in that it does not provide static HTTP content to application clients.

- Multi-Tier Proxy Architecture: This is like the multi-tier architecture, but here the Web tier is hosted on a separate layer of Web servers that use the WebLogic proxy plug-in to forward requests to the WebLogic Server cluster.

If you require more load-balancing options (for example, for EJB calls) or higher availability, then a multi-tier solution could be the best option to choose. By placing servlets and EJBs on separate clusters, the servlet method calls to the EJBs can be load-balanced across multiple servers.

Separating the presentation and object tiers onto separate clusters provides you with more options for distributing the load of the Web application. For example, if the application accesses HTTP and servlet content more often than EJB content, you can use a large number of Oracle WebLogic instances in the presentation tier cluster to concentrate access to a smaller number of servers that host the EJBs. Also, if your Web clients make heavy use of servlets and JSPs but access a relatively smaller set of clustered objects, then the multi-tier architecture enables you to concentrate the load of servlets and EJB objects appropriately. You may configure a servlet cluster of 10 Oracle WebLogic server instances and an object cluster of three Managed Servers, while still fully using each server's hardware resources.

WebLogic server instances in a cluster communicate with one another using two basic network technologies:

- IP sockets, which are the conduits for peer-to-peer communication between clustered server instances.

- IP unicast or multicast, which is used by server instances to broadcast availability of services and heartbeats that indicate continued availability.

When creating a new cluster, it is recommended that you use unicast for messaging within a cluster. It is much easier to configure because it does not require cross-network configuration that multicast requires. It also reduces potential network errors that can occur from multicast address conflicts.

Consider the following if you are going to use unicast to handle cluster communications:

- All members of a cluster must use the same message type. Mixing between multicast and unicast messaging is not allowed.

- You must use multicast if you need to support a previous version of WebLogic server within your cluster.

- Individual cluster members cannot override the cluster messaging type.

- The entire cluster must be restarted to change the messaging type.

Unicast uses TCP socket communication and multicast uses UDP communication. On networks where UDP is not supported, unicast must be used.

For backwards compatibility with previous versions of Oracle WebLogic, you must use multicast for communications between clusters.

Multicast broadcasts one-to-many communications between clustered instances. WebLogic server uses IP multicast for all one-to-many communications among server instances in a cluster. Each WebLogic server instance in a cluster uses multicast to broadcast regular "heartbeat" messages. By monitoring heartbeat messages, server instances in a cluster determine when a server instance has failed (clustered server instances also monitor IP sockets as a more immediate method of determining when a server instance has failed).
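Heartbeat-based failure detection, as described above, can be sketched in a few lines of Python. The class `HeartbeatMonitor` is hypothetical, and the interval of 10 seconds and the limit of three missed heartbeats are illustrative assumptions, not values stated in this text. Timestamps are passed in explicitly so that the example is deterministic.

```python
class HeartbeatMonitor:
    """Tracks the last heartbeat seen from each cluster member."""

    def __init__(self, interval_seconds=10.0, missed_limit=3):
        # Both defaults are illustrative assumptions, not taken from the text.
        self.timeout = interval_seconds * missed_limit
        self.last_seen = {}

    def record_heartbeat(self, server, now):
        # Called whenever a heartbeat message from `server` arrives.
        self.last_seen[server] = now

    def failed_servers(self, now):
        # A server counts as failed once it has been silent longer than the timeout.
        return sorted(
            server
            for server, seen in self.last_seen.items()
            if now - seen > self.timeout
        )


monitor = HeartbeatMonitor()
monitor.record_heartbeat("app01", now=0.0)
monitor.record_heartbeat("app02", now=0.0)
monitor.record_heartbeat("app01", now=30.0)  # app02 has stayed silent since t=0
failed = monitor.failed_servers(now=35.0)
print(failed)
```

Because detection depends on a timeout, it is inherently slower than noticing a broken IP socket, which is why the two methods complement each other.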

IP multicast is a broadcast technology that enables multiple applications to subscribe to an IP address and port number and listen for messages. A multicast address is an IP address in the range 224.0.0.0 to 239.255.255.255.
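If you want to check a candidate address before putting it into a cluster configuration, the range above is easy to test with Python's standard `ipaddress` module. The helper name `is_valid_multicast_address` is hypothetical; the network `224.0.0.0/4` covers exactly 224.0.0.0 to 239.255.255.255.

```python
import ipaddress

# 224.0.0.0/4 spans 224.0.0.0 through 239.255.255.255
MULTICAST_RANGE = ipaddress.ip_network("224.0.0.0/4")


def is_valid_multicast_address(address):
    """Return True if `address` is an IPv4 address inside the multicast range."""
    try:
        return ipaddress.IPv4Address(address) in MULTICAST_RANGE
    except ipaddress.AddressValueError:
        # Not a well-formed IPv4 address at all.
        return False


for candidate in ["237.0.0.101", "192.168.1.10", "240.0.0.1"]:
    print(candidate, is_valid_multicast_address(candidate))
```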

If your cluster is divided over multiple subnets, your network must be configured to reliably transmit messages. A firewall can break IP multicast transmissions, and the multicast address should not be shared with other applications. Also, multicast storms may occur when server instances in a cluster do not process incoming messages in a timely manner: the delay generates more network traffic, which slows processing further, and the cycle keeps feeding itself.

You can use different ways to configure a cluster:

- Administration Console: If you have an operational domain within which you want to configure a cluster, you can use the Administration Console.

- Configuration Wizard: The Configuration Wizard is the recommended tool for creating a new domain with the cluster.

- WebLogic Scripting Tool (WLST): You can use the WebLogic Scripting Tool in a command-line scripting interface to monitor and manage clusters.

- Java Management Extensions (JMX): WebLogic server provides MBeans, that you can use to configure, monitor, and manage WebLogic server resources through JMX.

- WebLogic Server Application Programming Interface (API): You can write a program that modifies the configuration attributes through the configuration APIs.

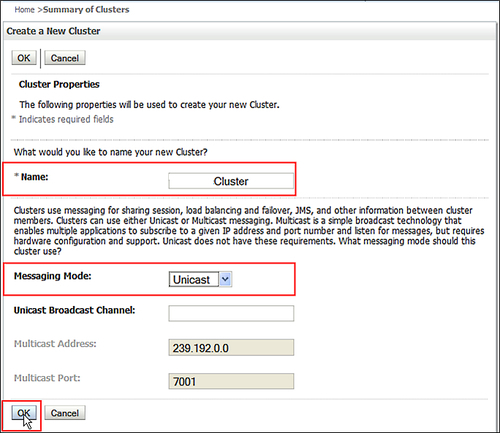

To configure a cluster with the Admin Console, perform the following steps:

- In the Administration Console, expand Environment and click on Clusters, and then New.

- Enter the name of the new cluster and select the messaging mode that you want to use for this cluster:

- Unicast is the default messaging mode. Unicast requires less network configuration than multicast.

- Multicast messaging mode is also available and may be appropriate in environments that use previous versions of Oracle WebLogic server. However, unicast is the preferred mode considering the simplicity of configuration and flexibility.

- If you are using Unicast message mode, enter the Unicast broadcast channel. This channel is used to transmit messages within the cluster. If you anticipate high volume of traffic and your applications use session replication, you may prefer defining a separate channel for cluster messaging mode. If you do not specify a channel, the default channel is used.

Each ListenAddress:ListenPort combination in the cluster address corresponds to the Managed Server and network channel that received the request. The order in which the ListenAddress:ListenPort combinations appear in the cluster address is random; the order varies from request to request.

If no algorithm is specified for a particular service, round-robin is used for load balancing between replicated services. The round-robin algorithm cycles in order through a list of Oracle WebLogic server instances. Weight-based load balancing improves on the round-robin algorithm by taking into account a pre-assigned weight for each server. In random load balancing, requests are routed to servers at random. This setting is only used for EJB clustering; it defines the global default, which an individual EJB's configuration can override.
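The three algorithms can be compared side by side in a short Python sketch. The server names are hypothetical, and `weight_based` shows just one simple way to honor weights (each server gets a number of slots proportional to its weight); how WebLogic schedules weighted requests internally is not described here.

```python
import itertools
import random
from functools import reduce
from math import gcd


def round_robin(servers):
    """Cycle through the servers in order, forever."""
    return itertools.cycle(servers)


def weight_based(weights):
    """Cycle through the servers, giving each a share proportional to its weight."""
    divisor = reduce(gcd, weights.values())
    slots = [name for name, weight in weights.items() for _ in range(weight // divisor)]
    return itertools.cycle(slots)


def random_pick(servers, rng=random):
    """Route a request to a server chosen at random."""
    return rng.choice(servers)


servers = ["app01", "app02", "app03"]  # hypothetical Managed Server names
rr_order = list(itertools.islice(round_robin(servers), 5))
weighted_order = list(itertools.islice(weight_based({"app01": 100, "app02": 50}), 6))
print(rr_order)
print(weighted_order)
print(random_pick(servers))
```

With weights of 100 and 50, `app01` receives two requests for every one that goes to `app02`, which is the kind of skew you would want when one host has noticeably more capacity than another.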

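In WLST online mode, a cluster can be created with cmo.createCluster. In offline mode, the create command does the same job. The following offline snippet creates a cluster, assigns two Managed Servers to it, and sets its multicast address and port: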

………

#Create and Configure a Cluster and assign the Managed Servers to that cluster

cd('/')

create('appcluster','Cluster')

assign('Server', 'app01,app02','Cluster','appcluster')

cd('Clusters/appcluster')

set('MulticastAddress','237.0.0.101')

set('MulticastPort',7204)

#Write the domain and Close the domain template

updateDomain()

closeDomain()

exit()

When configuring a domain, you can choose to create a cluster in the domain creation process. After the domain has been created, you can use the pack and unpack commands to pack the domain configuration, transfer it to a second physical host, and deploy it on every server where you want a Managed Server to run.

pack -domain=path_of_domain -template=path_of_jar_file_to_create -template_name="template_name" [-template_author="author"] [-template_desc="description"] [-managed=true|false] [-log=log_file] [-log_priority=log_priority]

unpack -template=path_of_jar_file -domain=path_of_domain_to_be_created [-user_name=username] [-password=password] [-app_dir=application_directory] [-java_home=java_home_directory] [-server_start_mode=dev|prod] [-log=log_file] [-log_priority=log_priority]

Run pack.sh on Machine1 to bundle the domain into a template (this creates a jar file). Copy that jar file from Machine1 to Machine2. Then use unpack.sh to create the domain and Managed Server on Machine2.
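If you script this procedure, it helps to build the two command lines in one place so that the jar path used by pack and unpack cannot drift apart. The following Python sketch only assembles the argument lists using flags from the syntax above; the function names and all paths are hypothetical, and actually running the commands (for example with `subprocess.run` from the directory that contains the scripts) is left out.

```python
import shlex


def pack_command(domain_dir, template_jar, template_name, managed=True):
    """Arguments for pack.sh, to be run on the first machine."""
    return [
        "pack.sh",
        "-domain=" + domain_dir,
        "-template=" + template_jar,
        "-template_name=" + template_name,
        "-managed=" + ("true" if managed else "false"),
    ]


def unpack_command(template_jar, domain_dir):
    """Arguments for unpack.sh, to be run on the second machine."""
    return ["unpack.sh", "-template=" + template_jar, "-domain=" + domain_dir]


# Hypothetical locations; adjust them to your own installation.
domain_dir = "/u01/domains/finance"
template_jar = "/tmp/finance_managed.jar"

pack_args = pack_command(domain_dir, template_jar, "finance_managed")
unpack_args = unpack_command(template_jar, domain_dir)

# Print the commands in a form that can be pasted into a shell.
print(" ".join(shlex.quote(arg) for arg in pack_args))
print(" ".join(shlex.quote(arg) for arg in unpack_args))
```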

There are a lot of best practices about how to deal with a WebLogic cluster setup. Of course, you want to have the benefit of the cluster and not get stressed about all kinds of issues that can occur.

You can set up a cluster on a single computer for demonstration or development, but not for production environments. Also, each physical host in a cluster should have a static IP address.

There is no built-in limit for the number of server instances in a cluster. Large multiprocessor servers can host clusters with numerous servers. The recommendation is one server instance for every two CPUs.

The Administration Server should not join a cluster. It is dedicated to the process of administering servers, and if it joins a cluster, there is a risk of delays in accomplishing administration tasks.

Also, do not create a separate configuration file for each server in the cluster. Keep the configuration with the Administration Server, which pushes it to the other servers.

For a production environment, use the hostname resolved at DNS rather than IP addresses. Each server should have a unique name. The multicast address should not be used for anything other than cluster communications.

If the internal and external DNS names of a WebLogic server instance are not identical, use the ExternalDNSName attribute for the server instance, to define the server's external DNS name. Outside the firewall, ExternalDNSName should translate to the external IP address of the server.

If clients access Oracle WebLogic server over the default channel and T3, then do not set the ExternalDNSName, even if the internal and external DNS names of a WebLogic server instance are not identical, so as to avoid unnecessary DNS lookups.

The cluster address is used to communicate with entity and session beans by constructing the hostname portion of the request URLs. You can explicitly define the address of a cluster, or use the dynamically generated one. The cluster address should be a DNS name that maps to the IP addresses or the DNS name of each Oracle WebLogic server instance in the cluster.

You can also have Oracle WebLogic server dynamically generate an address for each new request. This gives you less configuration and ensures an accurate cluster address.
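To picture what a dynamically generated cluster address looks like, here is a minimal Python sketch. It assumes the address is a comma-separated list of ListenAddress:ListenPort pairs picked in random order from the cluster members; the function name, the host names, and the ports are hypothetical, and this is not WebLogic's own code.

```python
import random


def dynamic_cluster_address(members, number_of_servers, rng=random):
    """Build a cluster address from a random selection of cluster members."""
    count = min(number_of_servers, len(members))
    chosen = rng.sample(members, count)  # order varies from call to call
    return ",".join("%s:%d" % (host, port) for host, port in chosen)


# Hypothetical (ListenAddress, ListenPort) pairs of the Managed Servers
members = [
    ("app01.example.com", 7003),
    ("app02.example.com", 7003),
    ("app03.example.com", 7005),
    ("app04.example.com", 7005),
]
address = dynamic_cluster_address(members, number_of_servers=3)
print(address)
```

Each call can return a different selection and order, which spreads clients over the members without any manual maintenance of the address.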

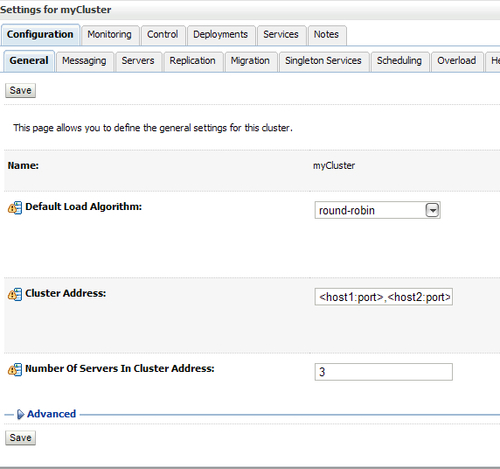

In the following screenshot, you can see where you should set the cluster address.

While choosing the cluster architecture, be aware of the position in the network. If you choose a multi-tier architecture or even a proxy variant, firewalls should be configured for the various components in the architecture.

If you place a firewall between the Web layer and object layer in a multi-tier architecture, then it is better to use public DNS names rather than IP addresses. Binding those servers with IP addresses can cause address translation problems and prevent the servlet cluster from accessing individual server instances. For proxy architectures, you could have a single firewall between untrusted clients and the web server layer and one between the proxy layer and the cluster.

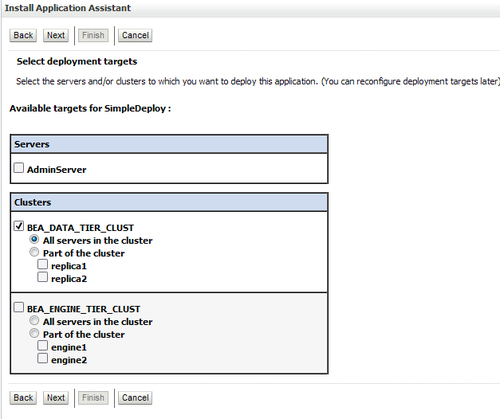

When you initiate the deployment process, you specify the components to be deployed and the targets to which they will be deployed. The main difference between the way you deploy an application to a single server and a cluster lies in your choice of the target. When you intend to deploy an application to the cluster, you select the target from the list of clusters and not from the list of servers.

Ideally, all servers in a cluster should be running and available during the deployment process. Deploying applications when some members of the cluster are unavailable is not recommended.

WebLogic clusters use the concept of two-phase deployment:

- Phase 1: During the first phase of deployment, components are distributed to the server instances and the deployment is validated to ensure that the application components are successfully deployed. During this phase, user requests to the application being deployed are not allowed. If failures are encountered during the distribution and validation processes, the deployment is aborted on all server instances, including those on which the validation succeeded. Files that have been staged are not removed; however, container-side changes performed during the preparation are reverted.

- Phase 2: After the components are distributed to targets and validated, they are fully deployed on the target server instances, and the deployed application is made available to the clients. If a failure occurs during this process, deployment to that server instance is canceled. However, a failure on one server of a cluster does not prevent successful deployment on other clustered servers.
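The control flow of the two phases can be simulated in a few lines of Python. This is a simplified model under stated assumptions: `prepare` and `activate` are hypothetical callbacks that return True on success, and the rollback of container-side changes is reduced to simply returning an "aborted" result.

```python
def two_phase_deploy(servers, prepare, activate):
    """Simulate two-phase deployment across the members of a cluster."""
    # Phase 1: distribute and validate on every target.
    prepared = []
    for server in servers:
        if not prepare(server):
            # Any failure aborts the deployment everywhere,
            # including on servers where validation succeeded.
            return {"aborted": True, "active": [], "failed": [server]}
        prepared.append(server)

    # Phase 2: activate on each target; a failure stays local to that server.
    active, failed = [], []
    for server in prepared:
        if activate(server):
            active.append(server)
        else:
            failed.append(server)
    return {"aborted": False, "active": active, "failed": failed}


cluster = ["app01", "app02"]  # hypothetical Managed Servers
ok = two_phase_deploy(cluster, lambda s: True, lambda s: True)
phase1_failure = two_phase_deploy(cluster, lambda s: s != "app02", lambda s: True)
phase2_failure = two_phase_deploy(cluster, lambda s: True, lambda s: s != "app02")
print(ok)
print(phase1_failure)
print(phase2_failure)
```

The asymmetry is the important part: a validation problem stops the whole rollout, while an activation problem only affects the server on which it occurs.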

A cluster-deployed application that fails to deploy on a Managed Server causes that Managed Server to abort its startup. This ensures that the cluster remains consistent.

Two-phase deployment helps you avoid situations in which an application is successfully deployed on one node but not on another, which is referred to as partial deployment. One potential problem with partial deployment arises during synchronization: when the other servers in the cluster reestablish communications with the previously partitioned server instance, user requests to the deployed applications and attempts to create secondary sessions on that server instance may fail, causing inconsistencies in cached objects.

You can configure Oracle WebLogic server to disallow relaxed or partial deployments by using the enforceClusterConstraints option with weblogic.Deployer, WLST, or the Administration Console.

A technique to maintain availability can be arranged at the highest layer, the HTTP layer.

Web applications use HTTP sessions to track information in server memory for each client.

To provide high availability of Web applications, shared access to one HttpSession object must be provided. HttpSession objects can be replicated within Oracle WebLogic server by storing their data using in-memory replication, filesystem persistence, or in a database.

In a cluster, the load-balancing hardware or the proxy plug-in in Web server redirects the client requests to any available server in the WebLogic cluster. The cluster member that serves the request obtains a replica of the client's HTTP session state from the available secondary Managed Server in the cluster.

Oracle WebLogic supports several session replication strategies to recover sessions from failed servers:

- In-memory replication

- JDBC replication

Replication should be configured for each Web application within its weblogic.xml file.

Oracle WebLogic copies a session state from one server instance to another, using in-memory replication. The primary server creates a primary session state on the server to which the client first connects and also creates a secondary replica on another Oracle WebLogic server instance in the cluster. The replica is kept up-to-date so that it can be used, if the server that hosts the Web application fails.
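The primary/secondary mechanism can be modeled with two plain Python objects. This is a conceptual sketch only: the class `ManagedServer`, the helper functions, and the session contents are hypothetical, and real replication happens over the network rather than through a shared process.

```python
class ManagedServer:
    """Minimal model of a server instance holding HTTP session state in memory."""

    def __init__(self, name):
        self.name = name
        self.alive = True
        self.sessions = {}  # session id -> dict of attributes


def set_attribute(primary, secondary, session_id, key, value):
    """Write to the primary copy, then bring the secondary replica up to date."""
    primary.sessions.setdefault(session_id, {})[key] = value
    secondary.sessions[session_id] = dict(primary.sessions[session_id])


def read_session(primary, secondary, session_id):
    """Serve from the primary if it is alive, otherwise from the replica."""
    source = primary if primary.alive else secondary
    return source.sessions[session_id]


app01, app02 = ManagedServer("app01"), ManagedServer("app02")
set_attribute(app01, app02, "s1", "cart", ["book"])
set_attribute(app01, app02, "s1", "user", "alice")

app01.alive = False  # simulate a crash of the primary
recovered = read_session(app01, app02, "s1")
print(recovered)
```

Because every write is copied to the secondary, the session survives the loss of the primary without the client having to log in or rebuild its state.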

The WebLogic proxy plug-ins maintain a list of Oracle WebLogic server instances that host a clustered servlet or JSP and forward HTTP requests to these instances by using a simple round-robin strategy.

Oracle HTTP Server with the mod_wl_ohs module configured and Oracle WebLogic server with HttpClusterServlet are supported; Apache with the Apache Server (proxy) plug-in (mod_weblogic) provides an open source solution.

For in-memory replication, the application should have a descriptor in the weblogic.xml.

<session-descriptor> <persistent-store-type>replicated</persistent-store-type> </session-descriptor>

HTTP session replication can also be configured with JDBC, which stores session-persistent data in a database, or by using Coherence*Web.

If you choose to use load-balancing hardware instead of a proxy plug-in, you must use hardware that supports SSL persistence and passive cookie persistence. Passive cookie persistence enables Oracle WebLogic to write cookies through the load balancer to the client. The load balancer, in turn, interprets an identifier in the client's cookie to maintain the relationship between the client and the primary Oracle WebLogic that hosts the HTTP session state.
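To make the idea of passive cookie persistence more concrete, here is a minimal Python sketch of what a load balancer does with the identifier in the cookie. It assumes a cookie value of the form `sessionid!primary_server_id!secondary_server_id`; the server IDs, the lookup table, and the function name `route_request` are hypothetical.

```python
def route_request(cookie_value, id_to_server, alive):
    """Pick the server for a request based on the identifiers in the session cookie."""
    parts = cookie_value.split("!")
    # parts[0] is the session id; parts[1] and parts[2] identify the
    # primary and secondary servers (assumed format, see above).
    for server_id in parts[1:3]:
        server = id_to_server.get(server_id)
        if server in alive:
            return server
    return None  # no known server is available; the caller must pick a new one


id_to_server = {"111": "app01", "222": "app02"}  # hypothetical mapping
cookie = "abc123!111!222"

print(route_request(cookie, id_to_server, alive={"app01", "app02"}))
print(route_request(cookie, id_to_server, alive={"app02"}))
```

The load balancer never writes the cookie itself. It only reads what the server wrote, which is why this style of persistence is called passive.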

EJBs that are based on the 3.0 specification can be configured using annotations and deployment plans:

weblogic-ejb-jar.xml:

<stateless-clustering> <stateless-bean-is-clusterable>True</stateless-bean-is-clusterable> <stateless-bean-load-algorithm>random</stateless-bean-load-algorithm> </stateless-clustering>

This is a typical example for a stateless EJB, but you can also configure clustering for stateful EJBs. You should discuss these options with your development team.

The replication type for EJBs is In-Memory or None in the Admin Console.

You can configure the default EJB cluster settings for your domain using the following steps:

- Select Environment | Clusters within the Domain Structure panel of the console. Then select a specific cluster.

- On the General tab, update any of the fields described as follows:

- Default Load Algorithm: The algorithm used for load balancing between replicated services, such as EJBs, if none is specified for a particular service.

- The round-robin algorithm cycles through a list of Oracle WebLogic server instances in order.

- Weight-based load balancing improves on the round-robin algorithm by taking into account a preassigned weight for each server.

- In random load balancing, requests are routed to servers at random.

- Cluster Address: The address used by EJB clients to connect to this cluster. This address may be either the DNS host's name that maps to multiple IP addresses or a comma-separated list of single address hostnames or IP addresses.

- Number of Servers in Cluster Address: The number of servers listed from this cluster when generating a cluster address automatically.

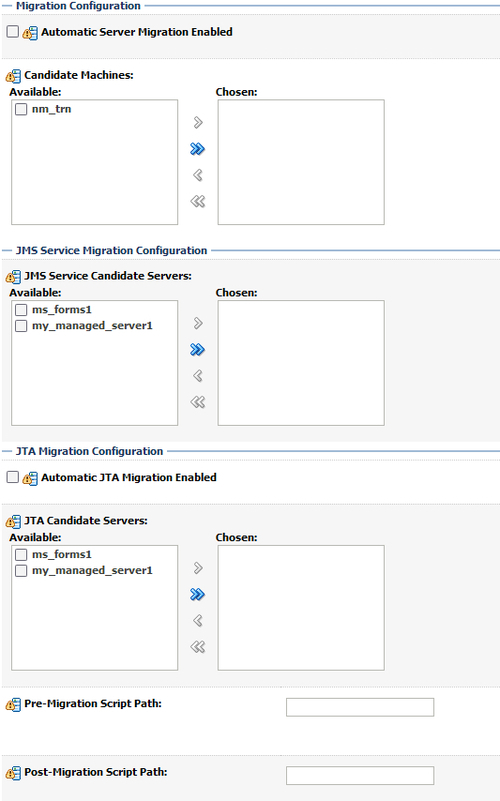

Pinned services are services that are attached to a single Managed Server, such as JMS. A pinned service is available in the cluster's JNDI tree, but it has no clustering, failover, or load-balancing options, so if the Managed Server instance that hosts the service fails, the service is lost as well. For these services you can configure migratable targets.

A migratable target defines a list of server instances in the cluster that can potentially host a migratable service, such as a JMS server or the Java Transaction API (JTA) transaction recovery service. If you want to use a migratable target, configure the target server list before deploying or activating the service in the cluster.

By default, WebLogic can migrate the JTA transaction recovery service or a JMS server to any other Managed Server in the cluster. You can optionally restrict this to a list of servers in the cluster; that list is the migratable target, and it controls the servers to which you can migrate a service. In the case of JMS, the migratable target also defines the list of servers to which you can deploy a JMS server.

WebLogic lets you create separate migratable targets for the JTA transaction recovery service and JMS servers. This allows you to always keep each service running on a different server in the cluster, if necessary. You can also configure the same selection of servers as the migratable target for both JTA and JMS, to ensure that the services remain co-located on the same server in the cluster. For each server instance, you can set up migration on the Migration tab.
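The role of the migratable target as a list of candidate hosts can be sketched as follows. The function `choose_migration_host` and the server names are hypothetical, and the selection rule (first live candidate in list order) is an assumption made for illustration rather than WebLogic's documented policy.

```python
def choose_migration_host(current_host, migratable_target, alive):
    """Return the first live server in the migratable target other than the current host."""
    for server in migratable_target:
        if server != current_host and server in alive:
            return server
    return None  # nowhere to migrate to


# Hypothetical migratable target for a JMS server currently hosted on app01
jms_target = ["app01", "app02", "app03"]

print(choose_migration_host("app01", jms_target, alive={"app02", "app03"}))
print(choose_migration_host("app01", jms_target, alive={"app03"}))
```

Using the same list for both JTA and JMS keeps the two services moving together, while two different lists keep them apart.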

The following screenshot shows you the Migration Configuration screen:

These tips could be helpful to prevent some common problems.

A problem with the multicast address is one of the most common reasons a cluster does not start or a server fails to join a cluster.

A multicast address is required for each cluster. The multicast address can be an IP number between 224.0.0.0 and 239.255.255.255, or a hostname with an IP address within that range.

You can check a cluster's multicast address and port on its Configuration | Multicast tab in the Administration Console.

For each cluster on a network, the combination of multicast address and port must be unique. If two clusters on a network use the same multicast address, they should use different ports. If the clusters use different multicast addresses, they can use the same port or accept the default port, 7001.

Before booting the cluster, make sure the cluster's multicast address and port are correct and do not conflict with the multicast address and port of any other clusters on the network.
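A quick way to run this check before booting is to list every cluster with its multicast address and port and look for duplicates. The following Python sketch does that; the cluster names, addresses, and ports are hypothetical, and `find_conflicts` is an illustrative helper rather than a WebLogic utility.

```python
from collections import defaultdict


def find_conflicts(clusters):
    """Return the address/port combinations that are used by more than one cluster."""
    by_endpoint = defaultdict(list)
    for name, (address, port) in clusters.items():
        by_endpoint[(address, port)].append(name)
    return {
        endpoint: names
        for endpoint, names in by_endpoint.items()
        if len(names) > 1
    }


# Hypothetical clusters on one network
clusters = {
    "appcluster": ("237.0.0.101", 7204),
    "webcluster": ("237.0.0.101", 7205),    # same address, different port: fine
    "batchcluster": ("237.0.0.102", 7204),  # different address, same port: fine
    "testcluster": ("237.0.0.101", 7204),   # clashes with appcluster
}
conflicts = find_conflicts(clusters)
print(conflicts)
```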

The errors you are most likely to see if the multicast address is bad are:

- Unable to create a multicast socket for clustering

- Multicast socket send error

- Multicast socket receive error

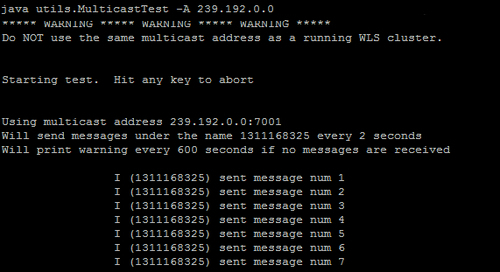

You can use the multicast test tool for multicast problems when configuring a WebLogic cluster.

Syntax:

java utils.MulticastTest -n name -a address [-p portnumber] [-t timeout] [-s send]

The following screenshot shows an example of a running multicast test: