Chapter 9

The Role of Vibronic Coupling for Intersystem Crossing and Reverse Intersystem Crossing Rates in TADF Molecules

Thomas J. Penfold and Jamie Gibson

Newcastle University, School of Chemistry, NE1 7RU Newcastle upon Tyne, UK

9.1 Introduction

Since the first report of electroluminescence (EL) in poly(para‐phenylenevinylene) [1], there has been an enormous amount of effort dedicated to the development of organic light‐emitting diodes (OLEDs). However, early attempts to implement OLEDs based solely upon organic materials were restricted by an intrinsically low EL efficiency. This arose from the limit imposed by the statistics of the spin states formed under electrical excitation. Indeed, following electrical excitation only 25% of the excitons are formed in singlet states, while the remaining 75% form triplet excitons [2]. In organic species, these triplet states are not (strongly) dipole coupled to the molecular ground state owing to the absence of strong spin–orbit coupling (SOC). Consequently, their energy cannot be harvested and is dissipated nonradiatively.

This limitation was overcome by doping the emitting layer with metal–organic emitters [3], yielding the so‐called phosphorescence‐based organic light‐emitting diodes (PhOLEDs). For these devices, the presence of a metal–organic complex containing heavy atoms opens the potential for significant SOC and gives rise to a radiative T$_1 \rightarrow$ S$_0$ transition (triplet harvesting) [4]. The radiative rate of this transition, $k_{\mathrm{P}}$, crucial to device efficiency [5], is formally zero but gains intensity through spin–orbit mixing with the close‐lying singlet states S$_n$:

$$k_{\mathrm{P}} \propto \left| \sum_{n} \frac{\langle \mathrm{S}_0|\hat{\mu}|\mathrm{S}_n\rangle \langle \mathrm{S}_n|\hat{H}_{\mathrm{SO}}|\mathrm{T}_1\rangle}{E_{\mathrm{T}_1}-E_{\mathrm{S}_n}} + \sum_{m} \frac{\langle \mathrm{S}_0|\hat{H}_{\mathrm{SO}}|\mathrm{T}_m\rangle \langle \mathrm{T}_m|\hat{\mu}|\mathrm{T}_1\rangle}{E_{\mathrm{S}_0}-E_{\mathrm{T}_m}} \right|^2 \tag{9.1}$$

where $\langle \mathrm{S}_0|\hat{\mu}|\mathrm{S}_n\rangle$ is the transition dipole moment between the ground state (S$_0$) and excited singlet states, S$_n$. The ratio $\langle \mathrm{S}_n|\hat{H}_{\mathrm{SO}}|\mathrm{T}_1\rangle/(E_{\mathrm{T}_1}-E_{\mathrm{S}_n})$ describes the first‐order coefficient mixing the singlet states into the triplet state (T$_1$), which besides stimulating $k_{\mathrm{P}}$ also promotes intersystem crossing (ISC) and reverse intersystem crossing (rISC). The second sum in the brackets describes the contribution to the phosphorescence rate owing to the perturbation of the electronic ground state, i.e. singlet ground state gaining triplet character, in contrast to triplet excited states mixing with singlet states. It is often argued that the energy gap between the electronic ground state and the excited triplet states is so much larger than the energy difference between the T$_1$ state and the excited singlet states that the second sum can be neglected. However, this disregards the fact that the sum over $n$ includes $n=0$, i.e. the electronic ground state, and that the term for $m=1$ in the second perturbation sum partially cancels this contribution. To avoid artefacts in the calculation, both perturbation sums should always be considered.

However, although this approach can be extremely effective, until now the only phosphorescent materials found practically useful are iridium and platinum complexes, which are unappealing for commercial applications due to their high cost and low abundance [6]. Indeed, as iridium is the fourth most scarce naturally occurring element on the planet, it is unwise to base large‐scale technologies such as lighting and displays on it.

An exciting new avenue for effective harvesting of both the singlet and triplet excitons can be achieved by using materials that emit through thermally activated delayed fluorescence (TADF) [7]. Here, the triplet excitons (75%) are not emitted through the T$_1 \rightarrow$ S$_0$ radiative transition, but use thermal energy to promote upconversion via rISC so that they can emit as singlet excitons. This means that effective TADF requires the emitters to have both a small energy gap $\Delta E_{\mathrm{ST}}$ between the emitting singlet and triplet states, preferably $<$ 0.1 eV, and a nonzero SOC that mixes their spin character and permits efficient rISC, as shown in Eq. (9.1). The size of the former ($\Delta E_{\mathrm{ST}}$) is two times the electron exchange interaction ($2J$) between the ground and excited states [8]:

$$\Delta E_{\mathrm{ST}} = 2J, \qquad J = \iint \phi(\mathbf{r}_1)\,\psi(\mathbf{r}_2)\,\frac{e^2}{\varepsilon\,|\mathbf{r}_1-\mathbf{r}_2|}\,\phi(\mathbf{r}_2)\,\psi(\mathbf{r}_1)\,\mathrm{d}\mathbf{r}_1\,\mathrm{d}\mathbf{r}_2 \tag{9.2}$$

where $\phi$ and $\psi$ represent ground‐ and excited‐state wave functions, respectively; $e$ is the charge; and $\varepsilon$ is the dielectric constant. This can usually be well approximated using the spatial overlap between the highest occupied molecular orbital (HOMO) and lowest unoccupied molecular orbital (LUMO), and consequently, the most promising TADF emitters have exploited charge‐transfer (CT) states that minimize the spatial overlap and therefore the exchange energy [9], [10]. Crucially, as shown in Eq. (9.1), this small energy gap is able to promote significant mixing between the singlet and triplet states, which depends inversely on the energy gap provided SOC is nonzero. This relationship reduces the emphasis upon the magnitude of SOC and opens the possibility for devices that contain exclusively organic materials [11], [12]. The challenge of this approach is that reducing the spatial overlap between the HOMO and LUMO also reduces the radiative rate of the emitting S$_1$ state. While high fluorescence efficiencies are important for high‐performing devices, it is more important that the radiative decay rate is much faster than all nonradiative rates ($k_{\mathrm{nr}}$), and therefore during the design of TADF emitters, it is important to understand the processes contributing to $k_{\mathrm{nr}}$ and reduce them as much as possible.
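The inverse dependence of the singlet–triplet mixing on the energy gap can be made concrete with a short numerical sketch. The Python snippet below evaluates a first‐order mixing coefficient as the ratio of an SOC matrix element to the energy gap. The function name, the unit conversion constant, and the SOC value of 1 cm$^{-1}$ are illustrative assumptions and are not taken from this chapter.

```python
# Minimal sketch: first-order singlet-triplet mixing ~ |H_SO| / gap.
# All numerical inputs below are hypothetical, chosen only for illustration.

CM1_TO_EV = 1.239841984e-4  # energy of 1 cm^-1 expressed in eV


def first_order_mixing(soc_cm1: float, gap_ev: float) -> float:
    """Return the dimensionless ratio |H_SO| / gap (perturbative estimate)."""
    if gap_ev <= 0.0:
        raise ValueError("gap must be positive for a perturbative estimate")
    return soc_cm1 * CM1_TO_EV / gap_ev


soc = 1.0  # hypothetical SOC matrix element in cm^-1
for gap in (1.0, 0.5, 0.1, 0.05):
    mixing = first_order_mixing(soc, gap)
    print(f"gap = {gap:4.2f} eV   mixing coefficient = {mixing:.2e}")
```

Under these assumptions, reducing the gap from 1.0 to 0.1 eV increases the mixing coefficient tenfold without any change in the SOC itself.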

As illustrated in the previous paragraph, for third‐generation TADF OLED devices, the communication between low‐lying singlet and triplet excited states is of great importance and plays a fundamental role in determining key molecular and material properties relevant in a device context. Consequently, a thorough understanding of the basic principles governing the interplay between these manifolds of spin states is essential if one is to achieve systematic material design. Computationally, the most common approach for elucidating mechanistic information about processes occurring in electronically excited states is to compute energy profiles along viable reaction and/or decay pathways. These are usually represented as one‐dimensional curves along a generalized nuclear coordinate, as in Figure 9.1, which may contain displacements along multiple nuclear degrees of freedom. Important regions of the potential surface, such as crossings between states of the same or different multiplicities, potentially contributing to internal conversion (IC) and ISC, respectively (Figure 9.1), can also be identified. While useful, the resulting picture is static and lacks the dynamical information important for obtaining a complete understanding, especially given the complexity that can arise from dynamics occurring on a multidimensional potential energy surface (PES). This is especially pertinent in cases that exhibit a breakdown of the Born–Oppenheimer approximation, giving rise to nonadiabatic coupling [13]. Indeed, these nonadiabatic interactions arise from nuclear motion and occur via a dynamic effect in the sense that they become strong when a system traverses a region where coupled states are close in energy or degenerate, leading to a more efficient crossing. In this chapter an overview of dynamical methods used for understanding excited‐state processes is provided. We then summarize our recent research using quantum nuclear dynamics to understand the role of vibronic coupling for ISC and rISC rates in two molecules with implications for TADF.

Figure 9.1 A schematic representation of the adiabatic electronic energies against an arbitrary nuclear configuration. Coupling between states of the same multiplicity originating from nonadiabatic coupling gives rise to internal conversion (IC). Transitions between states of different multiplicities, intersystem crossing (ISC), arise from spin–orbit coupling.

9.1.1 Background to Delayed Fluorescence

Molecular fluorescence is usually a two‐step process, i.e. an initial absorption giving rise to an electronically excited state followed by the radiative decay of that state or another one of lower energy (Kasha's rule) into the electronic ground state. This is known as prompt fluorescence (PF). However, if the fluorescence and nonradiative decay rates from this state are much smaller than the rate of ISC, i.e. $k_{\mathrm{F}} + k_{\mathrm{nr}} \ll k_{\mathrm{ISC}}$ (see Figure 9.2), then fluorescence may occur by a more complicated route via the triplet manifold. In this case, the S$_1$ state decays via ISC into the T$_1$ state. Subsequently, if the phosphorescence and nonradiative decay of the triplet state is slow, as expected, and the energy gap between the singlet and triplet states is small enough (normally $<$ 0.2 eV), then after vibrational thermalization, a second ISC, often called rISC, back to the S$_1$ state can occur followed by emission. This is known as delayed fluorescence (DF). If all radiative and nonradiative processes are very slow, this crossing can occur multiple times before DF actually occurs. The system can therefore be said to be cycling between the two states [15].

Figure 9.2 A simplified energy diagram representing a general schematic of the upconversion of triplet states to a higher‐energy singlet state. $k_{\mathrm{rISC}}$ is approximately equal to $k_{\mathrm{ISC}}$ multiplied by a Boltzmann term, which determines the number of states with sufficient energy to overcome the barrier ($\Delta E_{\mathrm{ST}}$). Deviations from this relationship arise from a higher density of final states expected for the direct intersystem crossing case.

Source: Ref. [14]. Reproduced with permission of American Chemical Society.

The first observation of DF was by Perrin and coworkers [16], who reported two long‐lived emission bands in solid uranyl salts, naming them true phosphorescence and fluorescence of long duration. This was later characterized in more detail by Lewis et al. [17] in rigid media and by Parker and Hatchard [18] using eosin. The latter study is responsible for the original name, E‐type DF, which is now most commonly referred to as TADF.

The most common description of TADF is the equilibrium model, first proposed by Parker and Hatchard [18] and later used by Kirchhoff et al. [19] to describe the TADF of Cu(I) metal–organic complexes. This assumes that $k_{\mathrm{ISC}}, k_{\mathrm{rISC}} \gg k_{\mathrm{F}}, k_{\mathrm{P}}, k_{\mathrm{nr}}$, and in this limit the steady‐state populations of the emitting singlet and triplet states are determined by Boltzmann statistics, as the molecule spends sufficient time in the excited state for an equilibrium to form before emission eventually occurs. The relative population of the two states can be expressed using an equilibrium constant:

$$K = \frac{[\mathrm{S}_1]}{[\mathrm{T}_1]} = \frac{1}{3}\exp\left(-\frac{\Delta E_{\mathrm{ST}}}{k_{\mathrm{B}}T}\right) \tag{9.3}$$

making it possible to express the rate of the whole TADF process ($k_{\mathrm{TADF}}$), i.e. rISC followed by fluorescence, as the product of the amount of population in the S$_1$ state and the rate‐limiting step, i.e. $k_{\mathrm{F}}$:

$$k_{\mathrm{TADF}} = \frac{1}{3}\,k_{\mathrm{F}}\exp\left(-\frac{\Delta E_{\mathrm{ST}}}{k_{\mathrm{B}}T}\right) \tag{9.4}$$

Here, the prefactor of $\tfrac{1}{3}$ is a consequence of the three triplet substates (i.e. $M_S = 0, \pm 1$). This approach, adopted by Adachi and coworkers [12], [20] for organic emitters, defines the TADF process as depending exclusively on the energy gap between the singlet and triplet states and crucially, as shown in Eq. (9.4), independent of the coupling between them, i.e. the rates of ISC ($k_{\mathrm{ISC}}$) and rISC ($k_{\mathrm{rISC}}$), provided they are not zero. This motivates design procedures that focus upon minimizing this energy gap using CT states and suppressing large‐amplitude molecular vibrations to reduce nonradiative pathways and ensure a maximum emission quantum yield, despite the potentially low radiative rates associated with the CT states.
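The equilibrium picture described above can be turned into a quick numerical estimate. The following Python sketch assumes the commonly used form in which the TADF rate is one third of the fluorescence rate multiplied by a Boltzmann factor in the singlet–triplet gap. The function name, the fluorescence rate of $10^7$ s$^{-1}$, and the gap values are hypothetical inputs chosen for illustration only.

```python
import math

K_B_EV = 8.617333262e-5  # Boltzmann constant in eV/K


def k_tadf_equilibrium(k_f: float, gap_ev: float, temperature: float = 300.0) -> float:
    """Equilibrium-model estimate: (1/3) * k_F * exp(-gap / (k_B * T)).

    Assumes ISC and rISC are fast enough for a Boltzmann equilibrium to form
    between S1 and T1 before emission (the equilibrium-model assumption).
    """
    if temperature <= 0.0:
        raise ValueError("temperature must be positive")
    return (1.0 / 3.0) * k_f * math.exp(-gap_ev / (K_B_EV * temperature))


k_f = 1.0e7  # hypothetical fluorescence rate in s^-1
for gap in (0.05, 0.10, 0.20):
    rate = k_tadf_equilibrium(k_f, gap)
    print(f"gap = {gap:4.2f} eV   k_TADF = {rate:.2e} s^-1")
```

In this sketch the estimate depends only on the fluorescence rate, the gap, and the temperature, which mirrors the equilibrium model's independence from the ISC and rISC rates.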

While this represents a convenient approach for the analysis of photophysical data, the key assumption, i.e. $k_{\mathrm{ISC}}, k_{\mathrm{rISC}} \gg k_{\mathrm{F}}, k_{\mathrm{P}}, k_{\mathrm{nr}}$, must be and often is broken to support new emitters with stronger fluorescence yields [20], [21]. Within this regime, TADF is cast in terms of a kinetic process [22], for which the population of the emitting states in the long‐time limit, assuming nonradiative and phosphorescence channels are small, can be expressed as

Solving Eqs. (9.5a) and (9.5b) yields

This illustrates the importance of not only tuning the energy gap, as is the focus of the equilibrium representation presented in Eq. (9.4), but also determining and optimizing other factors that influence $k_{\mathrm{rISC}}$, for which a wide variety of values exist in the literature. This was recently affirmed by Inoue et al. [23], who demonstrated a significant reduction in device efficiency roll‐off for complexes exhibiting larger $k_{\mathrm{rISC}}$, as the device is less susceptible to quenching effects, such as triplet–triplet annihilation.
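To illustrate the kinetic picture, the following Python sketch solves a generic two‐state rate model for the S$_1$ and T$_1$ populations. The rate equations used (fluorescence and ISC deplete S$_1$, rISC repopulates it, and triplet decay channels are neglected) are a standard textbook form assumed here for illustration. They are not claimed to reproduce Eqs. (9.5a), (9.5b), or (9.6), and all rate constants are hypothetical.

```python
import math


def decay_rates(k_f: float, k_isc: float, k_risc: float) -> tuple[float, float]:
    """Return (prompt, delayed) decay rates of an assumed two-state model.

    Assumed rate equations (illustrative, triplet decay neglected):
        d[S1]/dt = -(k_f + k_isc) [S1] + k_risc [T1]
        d[T1]/dt =   k_isc [S1]        - k_risc [T1]
    The two rates are the negated eigenvalues of the 2x2 rate matrix.
    """
    a = k_f + k_isc
    b = k_risc
    disc = math.sqrt((a - b) ** 2 + 4.0 * k_isc * k_risc)
    prompt = 0.5 * (a + b + disc)
    delayed = 0.5 * (a + b - disc)
    return prompt, delayed


k_f, k_isc = 1.0e7, 1.0e8  # hypothetical rates in s^-1
for k_risc in (1.0e5, 1.0e6):
    prompt, delayed = decay_rates(k_f, k_isc, k_risc)
    print(f"k_rISC = {k_risc:.1e} s^-1   prompt = {prompt:.2e} s^-1   delayed = {delayed:.2e} s^-1")
```

Within this assumed model, the delayed decay rate grows with the rISC rate at a fixed gap‐independent fluorescence rate, which is the qualitative point made above about the importance of the rISC rate itself.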

9.1.2 The Mechanism of rISC

In Section 9.1.1 the core elements of TADF and the importance of $k_{\mathrm{rISC}}$ were established. However, despite the significant amount of research undertaken in this area, the mechanism for rISC, and especially the efficient rISC recently reported in organic emitters [20], [21], has not been fully established.

Transitions between two states of different spin multiplicities usually occur via the SOC interaction [24]. SOC between the lowest singlet and triplet metal–ligand CT states ($^1$MLCT and $^3$MLCT) of Cu(I) complexes [25] is small but usually sufficient to explain the values of $k_{\mathrm{ISC}}$ and $k_{\mathrm{rISC}}$ reported [26]. For Cu(I) complexes that exhibit significant conformational freedom within the excited states, it can be important to also consider the SOC beyond the Franck–Condon geometry and incorporate how vibrational motion affects its magnitude, i.e. spin–vibronic coupling; see Refs. [27]–[32]. However, the situation is not quite so simple for donor–acceptor (D–A) complexes, which form the majority of organic TADF emitters. Indeed, Lim et al. [33] showed that SOC between $^1$CT and $^3$CT in these intramolecular charge transfer (ICT) complexes is formally zero. This is because spin–orbit coupling matrix elements (SOCMEs) between a singlet and triplet state with equal spatial orbital occupations are formally forbidden. The origin of this is analogous to El‐Sayed's law [34] regarding conservation of total angular momentum during spin–flip transitions.

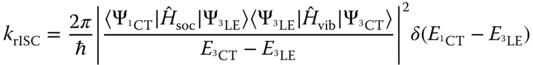

This issue, limiting our understanding of the mechanisms driving rISC, would appear to be eased by the observation that the lowest triplet state for many complexes exhibiting efficient rISC is a locally excited triplet state ($^3$LE) [21], [35]. In this case $^3$LE $\rightarrow$ $^1$CT conversion could have a finite, if small, SOCME permitting rISC. However, using a Fermi's golden rule (FGR) approach, Chen et al. [36] showed that the rate of $^3$LE $\rightarrow$ $^1$CT conversion in a series of TADF molecules was four to five orders of magnitude less than the rates reported experimentally, and consequently a key component leading to complete understanding is clearly lacking.

To address this, Ogiwara et al. [37] used electron paramagnetic resonance (EPR) spectroscopy to probe the population of the $^3$LE and $^3$CT states. By fitting the transient experimental signals, they reported that complexes showing the largest rISC exhibited an EPR signal consistent with a mixture of both the $^3$LE and $^3$CT states. The authors used this to propose that efficient rISC includes not only the SOC pathway ($^3$LE $\rightarrow$ $^1$CT) but also a hyperfine coupling‐induced ISC pathway ($^3$CT $\rightarrow$ $^1$CT). This mechanism arises from interactions between an electron's spin and the magnetic nuclei of its molecule. It is therefore completely local and, unlike SOC, is not quenched by the significant electron–hole separation experienced in CT states [38]. This conclusion is consistent with the proposal of Adachi and coworkers [20], who rationalized efficient rISC from $^3$LE to $^1$CT as proceeding via reverse internal conversion (rIC) to the $^3$CT and then using hyperfine coupling‐induced ISC to cross to the $^1$CT. However, crucially the hyperfine coupling constants are very small, usually in the range of 10$^{-4}$ meV, and it therefore appears highly unlikely that such coupling accounts for efficient rISC.

Recently, D–A and D–A–D complexes reported by Ward et al. [39] point to a strong dynamical component to the rISC mechanism. Indeed, their work found that complexes including steric hindrance around the D–A dihedral angle switch off the main TADF pathway and make the molecule phosphorescent at room temperature. This dynamical aspect, i.e. motion along the D–A dihedral coordinate, which appears to promote rISC, is consistent with the recent simulations of Marian [40], who used multireference quantum chemistry methods to show, in agreement with Ref. [36], that direct SOC was indeed too small to explain efficient rISC. Marian proposed that it is mediated by mixing with an energetically close‐lying $^3$LE state along a carbonyl stretching mode, making spin–vibronic mixing between multiple excited states crucial to efficient rISC. Similar mechanisms have been widely reported for the ISC rate [27]–[32]. However, while hugely informative, these calculations are inherently static and therefore do not provide mechanistic understanding of the TADF process. For this detail, simulations with temporal resolution are required and are the focus of the present work.

9.2 Beyond a Static Description

The rate of population transfer between two states can be described using an FGR approach as described in Ref. [28]. This was illustrated using high‐level quantum chemistry calculations of the rISC rates for both Cu(I) and organic TADF molecules. This first‐order perturbative approach is very effective in describing excited‐state kinetics, as demonstrated on numerous occasions by Marian and coworkers [27]–[29], provided that the coupling between the two states is small compared with their energy difference. If this is not the case, the validity of this approach, i.e. perturbation theory, becomes questionable, although it has still been used with some success [41].
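For orientation, the FGR rate is commonly written in the generic form below. The symbols are assumptions introduced here for illustration ($\hat{H}'$ for the coupling operator, $\Psi_i$ and $\Psi_f$ for the initial and final states, $\rho(E_f)$ for the density of final states) and are not taken from Ref. [28].

```latex
k_{i \rightarrow f} \;=\; \frac{2\pi}{\hbar}\,
  \left| \langle \Psi_f | \hat{H}' | \Psi_i \rangle \right|^{2} \rho(E_f),
\qquad \text{valid when} \quad
  \left| \langle \Psi_f | \hat{H}' | \Psi_i \rangle \right| \ll \left| E_i - E_f \right| .
```

The condition on the right restates the requirement above that the coupling be small compared with the energy difference between the two states.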

Importantly, as described in the previous section, recent work demonstrates a dynamical mechanism for efficient rISC [39], [42]. This strongly motivates computations that go beyond inherently static quantum chemistry calculations and solve the time‐dependent motion of the nuclei over a PES to elucidate the fine mechanistic details. In the following subsections we describe a variety of approaches for achieving this.

The starting point for any attempt to elucidate fine details about excited‐state dynamics is the time‐dependent Schrödinger equation (TDSE):

$$i\hbar\frac{\partial}{\partial t}\Psi(\mathbf{r},\mathbf{R},t) = \hat{H}(\mathbf{r},\mathbf{R})\,\Psi(\mathbf{r},\mathbf{R},t) \tag{9.7}$$

To derive working equations from this, the Born–Huang ansatz [43] for the total wave function is applied:

$$\Psi(\mathbf{r},\mathbf{R},t) = \sum_{J}\phi_J(\mathbf{r};\mathbf{R})\,\chi_J(\mathbf{R},t) \tag{9.8}$$

where $\{\phi_J(\mathbf{r};\mathbf{R})\}$ describes a complete set of electronic states that are solutions of the time‐independent electronic Schrödinger equation:

$$\hat{H}_{\mathrm{el}}(\mathbf{r};\mathbf{R})\,\phi_J(\mathbf{r};\mathbf{R}) = E^{\mathrm{el}}_J(\mathbf{R})\,\phi_J(\mathbf{r};\mathbf{R}) \tag{9.9}$$

Here $\hat{H}_{\mathrm{el}}$ is the electronic Hamiltonian at fixed nuclear positions, i.e. $\hat{H}$ without the nuclear kinetic energy operator. In practice solutions for $E^{\mathrm{el}}_J(\mathbf{R})$ are achieved using a quantum chemistry method at various configurations in nuclear coordinate space, $\mathbf{R}$, which then provides the PES. Approaches for obtaining an accurate representation of the PES are discussed in Section 9.2.1. Inserting the Born–Huang ansatz into Eq. (9.7) and multiplying from the left by $\phi^{*}_I(\mathbf{r};\mathbf{R})$ yields, after integration over the electronic coordinates $\mathbf{r}$, the equation describing the temporal evolution of the nuclear wave function $\chi_I(\mathbf{R},t)$:

$$i\hbar\frac{\partial}{\partial t}\chi_I(\mathbf{R},t) = \left[-\sum_{\gamma}\frac{\hbar^2}{2M_\gamma}\nabla^2_\gamma + E^{\mathrm{el}}_I(\mathbf{R})\right]\chi_I(\mathbf{R},t) + \sum_{J}\hat{\Lambda}_{IJ}(\mathbf{R})\,\chi_J(\mathbf{R},t) \tag{9.10}$$

where $M_\gamma$ is the mass of nucleus $\gamma$ and

$$\hat{\Lambda}_{IJ}(\mathbf{R}) = -\sum_{\gamma}\frac{\hbar^2}{2M_\gamma}\left[2\,\mathbf{F}^{\gamma}_{IJ}(\mathbf{R})\cdot\nabla_\gamma + G^{\gamma}_{IJ}(\mathbf{R})\right] \tag{9.11}$$

$\mathbf{F}^{\gamma}_{IJ}(\mathbf{R})$ are the first‐order nonadiabatic coupling vectors, defined

$$\mathbf{F}^{\gamma}_{IJ}(\mathbf{R}) = \langle \phi_I(\mathbf{r};\mathbf{R}) | \nabla_\gamma | \phi_J(\mathbf{r};\mathbf{R}) \rangle_{\mathbf{r}} \tag{9.12}$$

and $G^{\gamma}_{IJ}(\mathbf{R})$ are the second‐order nonadiabatic coupling elements given

$$G^{\gamma}_{IJ}(\mathbf{R}) = \langle \phi_I(\mathbf{r};\mathbf{R}) | \nabla^2_\gamma | \phi_J(\mathbf{r};\mathbf{R}) \rangle_{\mathbf{r}} \tag{9.13}$$

As before, $\{\phi_J(\mathbf{r};\mathbf{R})\}$ describes a complete set of electronic basis functions that are solutions of the time‐independent Schrödinger equation, Eq. (9.9). Neglecting the latter two terms, Eqs. (9.12) and (9.13), represents the Born–Oppenheimer approximation. This assumes complete decoupling between the electronic and nuclear degrees of freedom. In this scenario, a wave packet excited onto state $I$ stays on state $I$ indefinitely. To perform excited‐state dynamics simulations, it is the solution of Eqs. (9.9) and (9.10) that is required. This is the subject of the following sections.

9.2.1 Obtaining the Potential Energy Surfaces

The Born–Oppenheimer approximation allows us to visualize the nuclei evolving over a PES generated by the electrons. Consequently, excited‐state nuclear dynamics require an accurate potential. Given that the number of nuclear degrees of freedom in a nonlinear molecule of $N_{\mathrm{at}}$ atoms is $3N_{\mathrm{at}}-6$, calculating a full PES for a system containing more than five atoms is challenging. Indeed, if the potential is represented on a grid of points, the number of points, and therefore of quantum chemistry calculations, required to define the potential scales as $N^{f}$, where $N$ is the number of grid points along each of the $f$ degrees of freedom.
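The severity of this scaling is easy to see numerically. The short sketch below simply evaluates the $3N_{\mathrm{at}}-6$ and $N^{f}$ expressions; the function names and the choice of 10 grid points per mode are illustrative assumptions, not values taken from the chapter.

```python
def internal_dof(n_atoms: int, linear: bool = False) -> int:
    """Number of internal nuclear degrees of freedom (3N-6, or 3N-5 if linear)."""
    return 3 * n_atoms - (5 if linear else 6)


def grid_points(points_per_mode: int, n_modes: int) -> int:
    """Total grid points (one quantum chemistry calculation each) for a full grid."""
    return points_per_mode ** n_modes


if __name__ == "__main__":
    # Hypothetical choice: 10 grid points along every degree of freedom.
    for n_atoms in (3, 4, 5, 6):
        f = internal_dof(n_atoms)
        print(f"{n_atoms} atoms: f = {f:2d}, grid points = {grid_points(10, f):.1e}")
```

Even with this coarse grid, a six‐atom molecule already requires $10^{12}$ single‐point calculations, which illustrates why full‐dimensional grids are restricted to very small systems.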

This dimensionality problem has led to two distinct groups of excited‐state dynamics simulations. The first retains a description of the potential and wave function upon a grid of points. Here the number of degrees of freedom that can be included is restricted, but significant effort is placed upon the development of the potential, i.e. the model Hamiltonian, with the aim of capturing the key physics of the problem under study. Models adopting this approach are typically limited to a few tens of nuclear degrees of freedom, and therefore the excited‐state dynamics occurring on this potential have traditionally been performed using grid‐based quantum nuclear wavepacket dynamics [13]. Consequently, these simulations usually provide a rigorous description of the nuclear dynamics within the constraints imposed by the model PES. Provided the approximations are appropriate to capture the core elements of the dynamical process under study, this approach can be extremely powerful. Indeed, such simulations have contributed to our understanding of a wide variety of fundamental photophysical and photochemical processes [32], [44]–[47].

The alternative is to adopt an on‐the‐fly approach, i.e. calculate the potential as and when it is required during the nuclear dynamics. The distinct advantage of this is that it removes the need to preparametrize a model PES and subsequently makes it possible, unlike the model approach, to follow the nuclear dynamics in unconstrained nuclear configuration space. This field was born from the development of ab initio molecular dynamics, which emerged following the pioneering work of Car and Parrinello [48], for studying dynamics and properties in the electronic ground state. However, more recently it has been extended to enable the study of excited‐state dynamics [49]–[51].

Here the nuclear motion is described using either a Gaussian wavepacket basis or semiclassical trajectories [50], [52], [53], and does not require a grid of points to be defined. However, despite the advantages of full configuration space simulations, they still require a large computational effort to reach statistical convergence, i.e. the point at which the dynamics no longer change when increasing the number of trajectories or Gaussian functions. Indeed, a large number of these basis functions are usually required, and therefore the rigorous applicability of this approach remains limited to relatively small molecular systems, ![]() 100 atoms. In comparison with grid‐based methods, one can roughly state that in on‐the‐fly methods the approximations are shifted more to the nuclear dynamics themselves, rather than to the potential as is the case for grid‐based methods. Of course in both cases, the accuracy of the potential depends upon the underlying accuracy of the quantum chemistry method used.

Independent of the method adopted for simulating the PES, computations of excited‐state dynamics are complicated by the breakdown of the Born–Oppenheimer approximation and the subsequent presence of nonadiabatic coupling. The challenge of these terms can be seen by rewriting Eq. (9.12) as

where ![]() and ![]() represent the potential energy of states I and J, respectively. This clearly shows that within the adiabatic basis of Eqs. (9.10)–(9.13), which is standard for quantum chemistry programs, the nonadiabatic corrections necessary for describing the coupling between two different excited states depend inversely on the energy gap between surfaces. When this gap becomes small, the coupling between the nuclear motions on different surfaces increases. If two surfaces become degenerate, the coupling becomes infinite, leading to a strong likelihood of numerical instabilities during the simulations. These singularities can be removed by switching to a diabatic electronic basis (Figure 9.3), which is therefore the method of choice for quantum dynamics simulations. In contrast to the adiabatic picture, which provides sets of energy‐ordered PES and nonlocal coupling elements via nuclear momentum‐like operators, Eqs. (9.12) and (9.13), the diabatic picture provides PES that are related to an electronic configuration, and the couplings are provided by local multiplicative ![]()‐dependent potential‐like operators. Importantly, as the surfaces in the diabatic picture are smooth, they can often be described by a low‐order Taylor expansion, as described in the following section.
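The inverse dependence on the energy gap can be illustrated with a toy two‐state model. The sketch below assumes a hypothetical diabatic matrix with two linear potentials of slope $\pm k$ crossing at $Q = 0$ and a constant diabatic coupling $c$; none of these parameters come from the chapter. Diagonalizing it gives the adiabatic energies, and the derivative coupling is evaluated in the Hellmann–Feynman form, $\langle 1 | \partial W / \partial Q | 2 \rangle / (V_2 - V_1)$.

```python
import numpy as np


def adiabatic_coupling(q, k=1.0, c=0.05):
    """Adiabatic energies, gap and |derivative coupling| for a toy two-state model.

    Diabatic matrix (an illustrative assumption): W(q) = [[k*q, c], [c, -k*q]].
    """
    w = np.array([[k * q, c], [c, -k * q]])
    energies, vecs = np.linalg.eigh(w)          # adiabatic energies and states
    dw_dq = np.array([[k, 0.0], [0.0, -k]])     # derivative of W with respect to q
    gap = energies[1] - energies[0]
    # Hellmann-Feynman form of the first-order coupling; diverges as gap -> 0.
    coupling = vecs[:, 0] @ dw_dq @ vecs[:, 1] / gap
    return energies, gap, abs(coupling)


if __name__ == "__main__":
    for c in (0.1, 0.05, 0.005):
        _, gap, f12 = adiabatic_coupling(0.0, c=c)
        print(f"c = {c:6.3f}  gap = {gap:6.3f}  |F_12| = {f12:8.2f}")
```

For this model the coupling at the crossing point equals $k/(2c)$, so it grows without bound as the minimum gap $2c$ closes, whereas the diabatic matrix elements themselves remain smooth functions of $Q$. The function is not defined for $c = 0$ at $q = 0$, where the gap vanishes exactly.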

Figure 9.3 Schematic of the adiabatic (black solid) and diabatic (red dashed) representations of the electronic states along an arbitrary nuclear configuration.

9.2.1.1 Vibronic Coupling Model Hamiltonian

One approach for obtaining an appropriate PES within the diabatic basis suitable for quantum dynamics simulations, and the method of choice for the case studies presented below, is the vibronic coupling model [54]. This approach exploits two key aspects. Firstly, in contrast to adiabatic PES, the diabatic potential is usually smooth and can be expressed as a low‐order Taylor expansion around a geometry of interest defined as $Q_0$. Secondly, while transformations from the adiabatic to the diabatic representation are normally difficult to perform, the opposite way (i.e. diabatic to adiabatic) is relatively simple using a unitary transformation [55]. Consequently, starting from an initial guess, the diabatic potential can be refined using a least‐squares fit to the adiabatic potential computed using standard quantum chemical methods. At each iteration of the fit, the diabatic Hamiltonian is transformed into the adiabatic basis to assess the quality of the fit. This approach is often referred to as diabatization by ansatz. It is noted that this can also be achieved without fitting, using only a few points near $Q_0$; see Refs. [44], [56]. While quick and computationally inexpensive, this approach can lack important information about the potential away from $Q_0$ and can lead to unrealistic potential curves at distorted geometries.

The starting point for obtaining the vibronic coupling Hamiltonian is a Taylor series expansion around $Q_0$, using dimensionless (mass–frequency scaled) normal mode coordinates:

Truncation at first order, as shown here, is referred to as the linear vibronic coupling (LVC) model, while truncation at second order is referred to as the quadratic vibronic coupling (QVC) model. The zeroth‐order term is the ground‐state harmonic oscillator approximation:

with the vibrational frequencies ![](). The zeroth‐order coupling matrix contains the adiabatic state energies at ![](). The adiabatic potential surfaces are equal to the diabatic surfaces at this point, so ![]() is diagonal and is expressed as

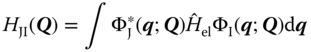

where $H_{\mathrm{el}}$ is the standard clamped nucleus electronic Hamiltonian and ![]() are the diabatic electronic functions. The first‐order linear coupling matrix elements are written as

where the on‐diagonal and off‐diagonal terms are written as

![]() and ![]() are the expansion coefficients corresponding to the on‐ and off‐diagonal matrix elements. The on‐diagonal elements are the forces acting within an electronic surface and are responsible for structural changes of excited‐state potentials compared with the ground state. The off‐diagonal elements are the nonadiabatic couplings responsible for transferring wavepacket population between different excited states. The second‐order terms, ![](), are expressed as

where the specific on‐diagonal and off‐diagonal terms are written as

The off‐diagonal terms (![]()) are rarely used; however the on‐diagonal terms can often be very important. The quadratic terms (i.e. ![]()) are responsible for changes in frequency of the excited‐state potential compared with the ground state, while the bilinear terms (i.e. ![]()) are responsible for mixing the normal modes in the excited state, i.e. the so‐called Duschinsky rotation effect (DRE). Higher‐order terms can be important and are included in the same manner [31], [57].
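As a compact summary of the expansion described in this section, the model Hamiltonian up to second order can be sketched as follows. The notation ($\kappa$, $\lambda$, $\gamma$, $\mu$, state indices $i,j$, mode indices $\alpha,\beta$, $\hbar = 1$) follows a convention common in the vibronic coupling literature and is an assumption here; it may differ from the symbols used in the chapter's own equations.

```latex
\begin{align}
\mathbf{H} &= H^{(0)}\mathbf{1} + \mathbf{W}^{(0)} + \mathbf{W}^{(1)} + \mathbf{W}^{(2)} + \dots \\
H^{(0)} &= \sum_{\alpha} \frac{\omega_{\alpha}}{2}\left(-\frac{\partial^{2}}{\partial Q_{\alpha}^{2}} + Q_{\alpha}^{2}\right) \\
W^{(0)}_{ij} &= E_{i}\,\delta_{ij} \\
W^{(1)}_{ii} &= \sum_{\alpha} \kappa^{(i)}_{\alpha} Q_{\alpha}, \qquad
W^{(1)}_{ij} = \sum_{\alpha} \lambda^{(i,j)}_{\alpha} Q_{\alpha} \quad (i \neq j) \\
W^{(2)}_{ii} &= \frac{1}{2}\sum_{\alpha,\beta} \gamma^{(i)}_{\alpha\beta} Q_{\alpha} Q_{\beta}, \qquad
W^{(2)}_{ij} = \frac{1}{2}\sum_{\alpha,\beta} \mu^{(i,j)}_{\alpha\beta} Q_{\alpha} Q_{\beta} \quad (i \neq j)
\end{align}
```

In this notation the terms with $\alpha = \beta$ in $W^{(2)}_{ii}$ are the quadratic terms that change the excited‐state frequencies, and those with $\alpha \neq \beta$ are the bilinear terms that mix the normal modes.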

Figure 9.4 provides an illustration, up to second order, of the stepwise fitting procedure often used. The first two steps involve defining ![]() and ![]() using the frequencies of the normal modes and the excited‐state energies at $Q_0$, respectively, both obtained from quantum chemistry calculations. Subsequently, the linear model is refined (![](), ![]()), while the remaining parameters are set to zero. Lower‐order terms, i.e. ![]() and ![](), are fixed to the values determined during the previous step. Next the second‐order terms (![]()) are refined. In this case only the quadratic terms are relevant, and the representative potentials shown only include displacements along one normal mode. The inclusion of bilinear terms requires calculation of the potential simultaneously along two normal modes (![]() and ![]()). Again, parameters arising from lower‐order terms are kept fixed.

Figure 9.4 Schematic showing the stepwise fitting procedure used to obtain the vibronic coupling Hamiltonian. Initially, the zeroth‐order terms are defined using a frequency and excited‐state calculation at $Q_0$. Subsequently, the linear model and the quadratic terms are fit. This is done in a stepwise manner, always keeping parameters at lower orders fixed. It is noted that all states (red, green, and blue lines) are present in all of the fits but may not be observed as they overlap.
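The idea of fitting a diabatic model to adiabatic data, with lower‐order parameters held fixed, can be sketched for a two‐state, one‐mode LVC model. Everything in the example is an illustrative assumption: the parameter values, the synthetic "reference" energies that stand in for quantum chemistry results, and the brute‐force scan that replaces a proper least‐squares optimizer. Each trial diabatic matrix is diagonalized before it is compared with the adiabatic reference.

```python
import numpy as np


def lvc_adiabatic(q, e1, e2, omega, kappa1, kappa2, lam):
    """Adiabatic energies of a two-state, one-mode LVC model at displacement q."""
    v0 = 0.5 * omega * q**2                      # ground-state harmonic term
    w = np.array([[e1 + kappa1 * q, lam * q],
                  [lam * q, e2 + kappa2 * q]])   # diabatic coupling matrix
    return v0 + np.linalg.eigvalsh(w)            # diabatic -> adiabatic


def fit_offdiagonal(q_grid, reference, fixed, trial_lams):
    """Scan trial off-diagonal couplings; return the one with the lowest squared error."""
    best_lam, best_err = None, np.inf
    for lam in trial_lams:
        model = np.array([lvc_adiabatic(q, *fixed, lam) for q in q_grid])
        err = float(np.sum((model - reference) ** 2))
        if err < best_err:
            best_lam, best_err = lam, err
    return best_lam, best_err


# Hypothetical fixed lower-order parameters: e1, e2, omega, kappa1, kappa2.
FIXED = (3.0, 3.2, 0.1, 0.05, -0.08)
Q_GRID = np.linspace(-4, 4, 17)
# Synthetic reference data generated with lam = 0.10 (stands in for ab initio points).
REFERENCE = np.array([lvc_adiabatic(q, *FIXED, 0.10) for q in Q_GRID])
BEST_LAM, BEST_ERR = fit_offdiagonal(Q_GRID, REFERENCE, FIXED, np.linspace(0, 0.3, 31))

if __name__ == "__main__":
    print(f"recovered coupling = {BEST_LAM:.2f}, squared error = {BEST_ERR:.1e}")
```

Only non‐negative trial values are scanned because the adiabatic energies of this model depend on the square of the off‐diagonal coupling, so its sign cannot be determined from the energies alone.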

An important consideration when setting up a model Hamiltonian for large polyatomic molecules is which nuclear degrees of freedom are required in the model to ensure an accurate description of the dynamics of interest. In addition, it is clear that for large systems the number of relevant terms, especially at second order, can rapidly become very large, making it challenging to refine all of them. The former can be addressed by identifying important excited‐state structures, such as energy minima and conical intersections, and expressing them as a function of the normal mode displacements from $Q_0$. This helps to clearly identify the modes responsible for significant structural changes in the excited state, but will not necessarily identify nonadiabatic coupling modes, which do not always induce structural changes in the excited states. Here additional help is provided by molecular symmetry, if present. Indeed, an LVC matrix element will only be nonzero if the product of the symmetries of the vibrational mode and of the two states contains the totally symmetric representation, written as

where ![]() and ![]() are the symmetries of the two states and ![]() is the symmetry of the mode. Therefore off‐diagonal elements (nonadiabatic coupling elements) will only be nonzero if the product of the symmetries of the two states gives the symmetry of the specific mode. On‐diagonal elements can only be nonzero for totally symmetric modes. While establishing an accurate Hamiltonian is still possible within low symmetry molecules, the use of such rules does significantly simplify the fitting process, and many of the coupling terms in the Hamiltonian can be excluded as they are known to be zero.
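This selection rule is simple to automate for Abelian point groups, where every irreducible representation is one‐dimensional and a direct product is just the element‐wise product of characters. The sketch below uses the $C_{2v}$ character table as an example; the function names and the choice of $C_{2v}$ are illustrative assumptions, and the approach as written does not extend to groups with degenerate representations.

```python
# C2v characters for the operations (E, C2, sigma_v(xz), sigma_v'(yz)).
C2V = {
    "A1": (1, 1, 1, 1),
    "A2": (1, 1, -1, -1),
    "B1": (1, -1, 1, -1),
    "B2": (1, -1, -1, 1),
}


def direct_product(*irreps):
    """Characters of the direct product of one-dimensional irreps."""
    chars = [1, 1, 1, 1]
    for name in irreps:
        chars = [a * b for a, b in zip(chars, C2V[name])]
    return tuple(chars)


def lvc_allowed(state_i, mode, state_j):
    """True if state_i x mode x state_j is totally symmetric (A1)."""
    return direct_product(state_i, mode, state_j) == C2V["A1"]


def coupling_mode_symmetry(state_i, state_j):
    """Symmetry a mode must have to couple two states at first order."""
    target = direct_product(state_i, state_j)
    return next(name for name, chars in C2V.items() if chars == target)


if __name__ == "__main__":
    print(coupling_mode_symmetry("A2", "B1"))   # mode symmetry coupling A2 and B1 states
    print(lvc_allowed("B1", "A1", "B1"))        # on-diagonal term, totally symmetric mode
```

Setting both state labels equal reproduces the statement that on‐diagonal linear terms survive only for totally symmetric modes.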

9.2.2 Solving for the Motion of the Nuclei

Motivated primarily by the desire to overcome the exponential scaling of computational effort with the number of degrees of freedom outlined in Section 9.2, a large number of approaches for addressing the nuclear dynamics over excited‐state PES have been developed. These range from full quantum approaches, which explicitly address the nuclear motion according to the TDSE, to mixed quantum–classical approaches, such as Tully's trajectory surface hopping (TSH). This latter approach replaces the wave packet with classical trajectories and incorporates nonadiabatic transitions using a stochastic algorithm [52], [53]. The appeal of mixed quantum–classical approaches is driven largely by the relative simplicity of applying them within a framework of on‐the‐fly excited‐state dynamics [49], [51], [58], as discussed above.

Developments of more sophisticated trajectory approaches based upon Gaussian wave packets instead of independent point trajectories have seen a revival in recent years and include multiple spawning [59], [60], coupled coherent states (CCS) [61], multiconfigurational Ehrenfest (MCE) [62], variational multiconfigurational Gaussian wave packet (vMCG) [50], [63], and most recently the multiple cloning method [64]. All of these can be applied to on‐the‐fly excited‐state dynamics but, unlike TSH, converge to the correct quantum description in the limit of a complete basis set. For larger molecules, ![]() 100 atoms, this limit will be very computationally expensive to reach, and consequently, despite significant recent growth in on‐the‐fly methodologies, there remains a strong place for grid‐based quantum dynamics based upon model vibronic coupling Hamiltonians as described above. In the following two sections, we outline the details of one of the most efficient ways of doing this, the multiconfigurational time‐dependent Hartree (MCTDH) method, which we have used for both of the case studies discussed below.

9.2.2.1 Multiconfigurational Time‐Dependent Hartree Approach

The simplest way of solving the TDSE is to expand the nuclear wave function into a time‐independent product basis set, with time‐dependent coefficients:

where ![]() is an orthonormal basis, such as the eigenfunctions of the harmonic oscillator, and ![]() is the nuclear coordinate. The analogous approach in electronic structure theory is full configuration interaction (full CI). Consequently, while it rigorously describes the motion of a nuclear wave packet, like full CI it suffers from a severe scaling problem. Indeed, it scales exponentially with the number of degrees of freedom, making it difficult to describe nuclear dynamics in systems containing more than five to six degrees of freedom; hence, exactly as in electronic structure theory, approximate methods are required.

One such approximate scheme is the time‐dependent Hartree (TDH) method [65], also known as time‐dependent self‐consistent field (TDSCF). Here one assumes the total wave function of the system can be approximated as a single Hartree product of single‐particle functions or orbitals (![]()), and consequently the nuclear wave function is defined as

The effort required is clearly significantly reduced as the sum over ![]() configurations and ![]() degrees of freedom is absent. However, this comes at the cost that the correlation between the degrees of freedom is no longer treated correctly. In this case, in contrast to electronic structure theory, this is the correlation between the nuclear degrees of freedom. It can be shown that the error introduced by the TDH approach is small if the variation in the potential function over the width of the wave function is small. This is usually the case for dynamics around the equilibrium geometry but will clearly be insufficient when considering excited‐state dynamics, owing to the strong variations in the potential.

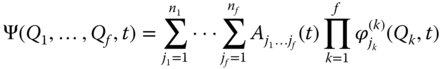

As the performance of the TDH method is usually rather poor, an obvious way of improving it is to move beyond a single configuration and take multiple configurations into account [66], i.e. the multiconfigurational time‐dependent self‐consistent field (MC‐TDSCF) approach. A particularly powerful variant of the MC‐TDSCF family, which has become extensively used, is the MCTDH method [67]. In this approach the nuclear wave function ansatz takes the form

where ![]() are the nuclear coordinates, ![]() are the MCTDH expansion coefficients, and ![]() are the ![]() expansion functions for each degree of freedom ![](), known as single‐particle functions. Note that setting ![]() retrieves the TDH formulation.
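For comparison, the three wave function forms discussed in this section can be set side by side. The notation below follows that commonly used in the MCTDH literature ($f$ degrees of freedom, $N_\kappa$ fixed basis functions $\chi$, $n_\kappa$ time‐dependent single‐particle functions $\varphi$) and is an assumption here; the chapter's own symbols may differ.

```latex
\begin{align}
\text{standard:}\quad
\Psi(Q_1,\dots,Q_f,t) &= \sum_{j_1=1}^{N_1}\cdots\sum_{j_f=1}^{N_f}
  C_{j_1\dots j_f}(t)\prod_{\kappa=1}^{f}\chi^{(\kappa)}_{j_\kappa}(Q_\kappa) \\
\text{TDH:}\quad
\Psi(Q_1,\dots,Q_f,t) &= a(t)\prod_{\kappa=1}^{f}\varphi_{\kappa}(Q_\kappa,t) \\
\text{MCTDH:}\quad
\Psi(Q_1,\dots,Q_f,t) &= \sum_{j_1=1}^{n_1}\cdots\sum_{j_f=1}^{n_f}
  A_{j_1\dots j_f}(t)\prod_{\kappa=1}^{f}\varphi^{(\kappa)}_{j_\kappa}(Q_\kappa,t)
\end{align}
```

Written this way, MCTDH reduces to TDH when every $n_\kappa = 1$, and approaches the standard method when $n_\kappa$ reaches $N_\kappa$, where the time dependence of the basis no longer provides any saving.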

The ansatz for the MCTDH nuclear wave function appears rather similar to the standard nuclear wavepacket approach, Eq. (9.31); crucially, however, the basis functions are time dependent. This means that fewer basis functions are required to converge the calculations, as they adapt to provide the best possible basis for the description of the evolving wave packet. In addition, the coordinate for each set of single‐particle functions, ![](), can be a composite coordinate of one or more system coordinates. Thus the basis functions are ![]()‐dimensional, where ![]() is the number of system coordinates, usually between 1 and 4. This reduces the effective number of degrees of freedom for the purpose of the simulations. The memory required by the standard method is proportional to $N^{f}$, where $N$ is the number of grid points for each of the $f$ degrees of freedom. In contrast, the memory needed by the MCTDH method scales as

where the first term is due to the (single‐mode) single‐particle function representation and the second term is the wavefunction coefficient vector ![](). As ![](), often by a factor of 5 or more, the MCTDH method needs much less memory than the standard method, allowing larger systems to be treated. Indeed the standard implementation of MCTDH can treat, depending on the exact details of the calculations, ![]() 50 nuclear degrees of freedom. A detailed discussion of all of these aspects, beyond the remit of the present chapter, can be found in Ref. [68].
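The memory saving can be made concrete with a small calculation. The sketch assumes the scaling commonly quoted for MCTDH without mode combination, $f\,n\,N + n^{f}$, with the same number of grid points $N$ and single‐particle functions $n$ for every degree of freedom; the function names and the numerical values are illustrative assumptions, and the counts are numbers of stored coefficients rather than bytes.

```python
def standard_memory(n_grid: int, n_dof: int) -> int:
    """Coefficients stored by the standard method: N**f."""
    return n_grid ** n_dof


def mctdh_memory(n_grid: int, n_spf: int, n_dof: int) -> int:
    """Coefficients stored by MCTDH: f*n*N for the SPFs plus n**f for the A-vector."""
    return n_dof * n_spf * n_grid + n_spf ** n_dof


if __name__ == "__main__":
    # Hypothetical example: 32 grid points and 6 single-particle functions per mode.
    for f in (3, 6, 9):
        std = standard_memory(32, f)
        mc = mctdh_memory(32, 6, f)
        print(f"f = {f}: standard = {std:.2e}, MCTDH = {mc:.2e}, ratio = {std / mc:.1e}")
```

The second term still grows exponentially with the number of degrees of freedom, but with the much smaller base $n$, which is why combining several coordinates into one composite mode extends the reach of the method further.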

9.2.2.2 Density Matrix Formalism of MCTDH: ρMCTDH

The standard MCTDH wavefunction approach, outlined in the previous section, describes the evolution of a particular well‐defined initial state, and therefore calculations are effectively performed at 0 K. However, given the importance of thermal effects for TADF, it is clearly important to address the temperature of the system explicitly, which calls for a density matrix approach. In density operator form the 0 K state is expressed as

which describes a pure state. However, a system at finite temperature is an incoherent mixture of very many thermally excited states, ![](), and therefore the correct description is in the form of a density matrix:

where ![]() is the probability, not the amplitude, of finding the system in state ![](). So in addition to the probability of finding a particle described by a wave function at a specific location, there is now also a probability of the system being in each of the different states [69].
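The distinction between a pure state and a thermal mixture can be illustrated with a single harmonic mode. The sketch below builds a Boltzmann‐weighted density matrix in the vibrational eigenbasis and evaluates its purity, $\mathrm{Tr}(\rho^{2})$, which equals 1 only for a pure state. The 200 cm$^{-1}$ frequency, the truncation at 20 levels, and the function names are illustrative assumptions.

```python
import numpy as np

KB_CM = 0.6950348  # Boltzmann constant in cm^-1 per kelvin


def thermal_density_matrix(omega_cm, temperature, n_levels=20):
    """Diagonal density matrix of a harmonic mode with Boltzmann populations.

    Energies are measured from the zero-point level; the basis is truncated
    at n_levels (an assumption that is adequate when the top levels are empty).
    """
    energies = omega_cm * np.arange(n_levels)
    weights = np.exp(-energies / (KB_CM * temperature))
    populations = weights / weights.sum()       # probabilities, not amplitudes
    return np.diag(populations)


def purity(rho):
    """Tr(rho^2): 1 for a pure state, below 1 for an incoherent mixture."""
    return float(np.trace(rho @ rho).real)


if __name__ == "__main__":
    for temperature in (1.0, 77.0, 300.0):
        rho = thermal_density_matrix(200.0, temperature)
        print(f"T = {temperature:5.1f} K  p0 = {rho[0, 0]:.3f}  purity = {purity(rho):.3f}")
```

At very low temperature essentially all population sits in the lowest level and the purity approaches 1, recovering the 0 K wavefunction picture, whereas at room temperature a low‐frequency mode of this kind is a substantially mixed state.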

The equation of motion for the density matrix follows naturally from the definition of ![]() and the TDSE, leading to the well‐known Liouville–von Neumann equation:

This applies to a so‐called closed quantum system, i.e. one in which the system is not in contact with a dissipative environment. When used to describe an open quantum system, the equation has an additional term used to describe dissipation or population decay; however this was not considered for the examples discussed below.
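The step from the TDSE to the equation of motion for a closed system can be sketched in a few lines. The notation is an assumption for illustration: time‐independent probabilities $p_n$, a Hermitian Hamiltonian $H$, and states $|\Psi_n(t)\rangle$ that each obey the TDSE.

```latex
\begin{align}
\rho(t) &= \sum_n p_n\,|\Psi_n(t)\rangle\langle\Psi_n(t)|, \qquad
i\hbar\,\frac{\partial}{\partial t}|\Psi_n\rangle = H|\Psi_n\rangle, \qquad
-i\hbar\,\frac{\partial}{\partial t}\langle\Psi_n| = \langle\Psi_n|H \\
i\hbar\,\frac{\partial\rho}{\partial t}
 &= \sum_n p_n\left(H|\Psi_n\rangle\langle\Psi_n| - |\Psi_n\rangle\langle\Psi_n|H\right)
 = [H,\rho]
\end{align}
```

Because the evolution is generated by a commutator, the trace and the purity of $\rho$ are conserved; population decay or dephasing therefore requires the additional dissipative term mentioned above.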

In common with the multiconfiguration wavefunction expansion used in the MCTDH scheme for wave functions, a similar expansion can be used in the case of density operators, in this case, expressed as

where ![]() denotes the MCTDH coefficients and ![]() represents the single‐particle density operators (SPDOs), analogous to the single‐particle functions of the conventional scheme. The implementation of this approach is described in detail in Refs. [70], [71]. It is important to bear in mind that, compared with the wavefunction approaches described in the previous section, the numerical treatment of density operators is more difficult since the dimensionality of the system formally doubles [69].

9.3 Case Studies

In the following subsections two recent examples of using quantum wavepacket dynamics simulations to reveal information about the fine details of excited‐state processes, in particular ISC and rISC, are discussed. The first is a study of the ultrafast relaxation in a prototypical Cu(I)–phenanthroline complex, [Cu(dmp)$_2$]$^+$ (dmp = 2,9‐dimethyl‐1,10‐phenanthroline) [25], [32], [72], [73], as a representative example of the excited‐state dynamics of Cu(I) complexes, which were among the first emitters to be applied in TADF OLED devices [74]. In the second example, we illustrate the critical contribution of vibronic coupling for achieving efficient rISC within organic D–A molecules [42], which have become the most popular area of research for TADF.

9.3.1 Ultrafast Dynamics of a Cu(I)–phenanthroline Complex

The necessity to harvest the 75% of excitons that form as triplets following electrical excitation has placed a great emphasis upon the heavier transition metals. Consequently, metal–organic complexes based upon first‐row transition metal ions, such as Cu(I), were largely excluded as the absence of any significant heavy atom effect meant that the triplet lifetime of these complexes was often too long for devices [75]. However, while this is true when focusing upon the triplet harvesting mechanism, Cu(I) emitters have been known, since the pioneering work of McMillin and coworkers [19], to exhibit TADF, and therefore if one is able to optimize this singlet harvesting pathway, Cu(I) complexes become attractive, earth‐abundant emitters. Indeed, as previously stated, Cu(I) complexes were the first to be used for TADF OLED devices [74].

Within the plethora of Cu(I) complexes that exist [76], a subset that has received significant attention, especially regarding their ultrafast dynamics and ISC rate, is the mononuclear Cu(I)–phenanthroline complexes [77], [78], among which [Cu(dmp)$_2$]$^+$ is a prototypical example. In general, the ground state of these complexes adopts a pseudotetrahedral geometry with the two ligands being orthogonal; see Figure 9.5c [79]. Upon excitation into the singlet metal‐to‐ligand charge transfer (MLCT) states, the oxidation state of copper becomes Cu(II), and a flattening of the complex occurs, because the copper d$^9$ electronic configuration is susceptible to pseudo‐Jahn–Teller (PJT) distortions [80], as shown in Figure 9.5c.

![Illustrations of cuts through the PES along (left) ν8, a breathing mode acting on the CuN distances and (middle) ν21 responsible for the pseudo-Jahn-Teller distortion. The dots and lines are the results of calculations for the singlet and triplet states. (Right) DFT-optimised geometry of the ground state (upper) and excited state (lower) of [Cu(dmp)2]+.](http://images-20200215.ebookreading.net/4/1/1/9783527339006/9783527339006__highly-efficient-oleds__9783527339006__images__c09f005.jpg)

Figure 9.5 Cuts through the PES along (a) $\nu_8$, a breathing mode acting on the Cu–N distances, and (b) $\nu_{21}$, responsible for the pseudo‐Jahn–Teller distortion and therefore affecting the dihedral between the two ligands. The dots are results from the quantum chemistry calculations for the singlet (black) and triplet (green) states. The lines correspond to their fit from which the expansion coefficients are determined. (c) DFT‐optimized geometry of the ground state (upper) and lowest triplet state (lower) of [Cu(dmp)$_2$]$^+$. The latter clearly shows the reduction of the dihedral angle between the two ligands arising from the pseudo‐Jahn–Teller effect.

Over the past decade, numerous time‐resolved spectroscopic studies of [Cu(dmp)$_2$]$^+$ have been performed to provide a detailed picture of the kinetic processes occurring within the excited state [80]–[86]. Interestingly, while these studies reach a consensus on the time scales observed, with three principal dynamical processes (50–100 fs, 500–900 fs, and 10–20 ps) consistently reported, the conclusions drawn from these kinetics differ: some groups assign subpicosecond ISC followed by a slow structural distortion, while others attribute the processes the other way around. To address this, we performed a quantum dynamics study, which is now described.

Importantly, as outlined in the previous section, we must first obtain a description of the PES. As [Cu(dmp)$_2$]$^+$ has 57 atoms, and therefore 165 nuclear degrees of freedom, calculating a full PES is unrealistic, and we therefore adopt the model vibronic coupling Hamiltonian approach outlined above. Our model Hamiltonian is based upon a reduced subspace of the full configuration space, comprising the eight vibrational degrees of freedom most important for the first picosecond of excited‐state dynamics [32], [73]. These modes were identified using the magnitude of the linear coupling constants defined earlier, calculated for the 60 lowest‐frequency normal modes, and by expressing the excited‐state structural changes in normal mode coordinates. Although this clearly represents a significant reduction in the dimensionality of the potential, the modes included closely correspond to those identified from the femtosecond transient absorption study of Ref. [84], as shown in Figure 9.6. Consequently, although the present Hamiltonian will be unable to capture longer time effects, such as vibrational cooling, it will provide accurate insight into the femtosecond dynamics.
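The mode count and the screening step described above can be sketched as follows. This is only a minimal illustration and not the authors' workflow: the function names and the coupling constants in `example_kappa` are hypothetical placeholders, and ranking purely by the absolute value of the linear coupling constant is an assumed simplification of the selection criterion.

```python
def vibrational_dof(n_atoms, linear=False):
    """Internal (vibrational) degrees of freedom: 3N - 6, or 3N - 5 if linear."""
    return 3 * n_atoms - (5 if linear else 6)


def rank_modes_by_coupling(kappa, n_keep):
    """Return the labels of the n_keep modes with the largest |kappa|.

    kappa maps a mode label to its linear coupling constant (any consistent
    energy unit). Ranking by |kappa| alone is an assumed simplification.
    """
    ranked = sorted(kappa, key=lambda mode: abs(kappa[mode]), reverse=True)
    return ranked[:n_keep]


# Hypothetical placeholder values (eV); NOT taken from the chapter or its references.
example_kappa = {"mode_a": 0.031, "mode_b": -0.002, "mode_c": -0.012, "mode_d": 0.001}

print(vibrational_dof(57))                       # 57 atoms, nonlinear molecule
print(rank_modes_by_coupling(example_kappa, 2))  # two most strongly coupled modes
```

In practice the screening would also use the projection of the excited‐state structural change onto the normal modes, as stated in the text; that second criterion is not reproduced in this sketch.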

![Illustration displaying reproduction of the Fourier transform power spectrum of the oscillatory components within the excited state dynamics of [Cu(dmp)2]+. This is overplayed with the vibrational frequencies included in the model Hamiltonian.](http://images-20200215.ebookreading.net/4/1/1/9783527339006/9783527339006__highly-efficient-oleds__9783527339006__images__c09f006.jpg)

Figure 9.6 Reproduction of the Fourier transform power spectrum of the oscillatory components within the excited‐state dynamics of [Cu(dmp)$_2$]$^+$ reported in Ref. [84] (filled with gray). This is overlaid with the vibrational frequencies (red) included in the model Hamiltonian.

Source: Ref. [84]. Reproduced with permission of John Wiley & Sons.

Figure 9.5 shows the potential energy curves along the two vibrational degrees of freedom identified as most important during the excited‐state dynamics. The first ($\nu_8$) is a totally symmetric breathing mode acting on the Cu–N distances of the first coordination sphere. The excited‐state potentials are slightly shifted from the minimum energy geometry of the ground state and indicate that the Cu–N distance is slightly contracted in the excited state, consistent with previous experimental analysis [72]. The second ($\nu_{21}$) corresponds to the mode responsible for the PJT distortion, as illustrated by the double‐well potential shape in both the lowest singlet and triplet states. This potential shape arises from strong nonadiabatic coupling between the low‐lying excited states and will therefore be crucial for describing the population dynamics in the excited state.
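The link between strong nonadiabatic coupling and a double‐well shape can be illustrated with a generic two‐state, one‐mode linear vibronic coupling model. This is a textbook sketch under stated assumptions and not the eight‐mode Hamiltonian of Refs. [32], [73]: two diabatic states share one harmonic frequency `omega`, are separated by `2*delta` at `q = 0`, and are coupled by `lam*q`. All parameter values are illustrative. In this model the lower adiabatic surface develops two minima only when `lam**2 > omega*delta`.

```python
import math


def lower_adiabatic_energy(q, omega, delta, lam):
    """Lower eigenvalue of the diabatic matrix
    [[omega*q^2/2 - delta, lam*q], [lam*q, omega*q^2/2 + delta]]."""
    return 0.5 * omega * q * q - math.sqrt(delta * delta + lam * lam * q * q)


def pjt_minima(omega, delta, lam):
    """Positions of the minima of the lower adiabatic surface along q.

    A double well appears only if lam^2 > omega*delta; otherwise the single
    minimum stays at the undistorted geometry q = 0.
    """
    if lam * lam <= omega * delta:
        return [0.0]
    q_min = math.sqrt((lam / omega) ** 2 - (delta / lam) ** 2)
    return [-q_min, q_min]


# Illustrative parameters (eV, dimensionless q); assumptions, not fitted values.
omega, delta = 0.01, 0.10
for lam in (0.02, 0.05):
    minima = pjt_minima(omega, delta, lam)
    depth = (lower_adiabatic_energy(0.0, omega, delta, lam)
             - lower_adiabatic_energy(minima[-1], omega, delta, lam))
    print(f"lambda = {lam:.2f} eV: minima at {minima}, stabilization {depth:.3f} eV")
```

With the weaker coupling the surface keeps a single minimum at `q = 0`, whereas the stronger coupling produces two symmetric minima, which is the qualitative signature seen along the PJT mode.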

Finally, in terms of generation of the model potential, it is noted that in Figure 9.5, the singlet and triplet states were calculated and fitted separately yielding a set of two spin‐free PES. In order to probe the role of ISC, the singlet and triplet manifolds are coupled by SOC. The SOCMEs computed along the important normal modes were included to generate a complete Hamiltonian, enabling direct insight into the ultrafast spin–vibronic dynamics.

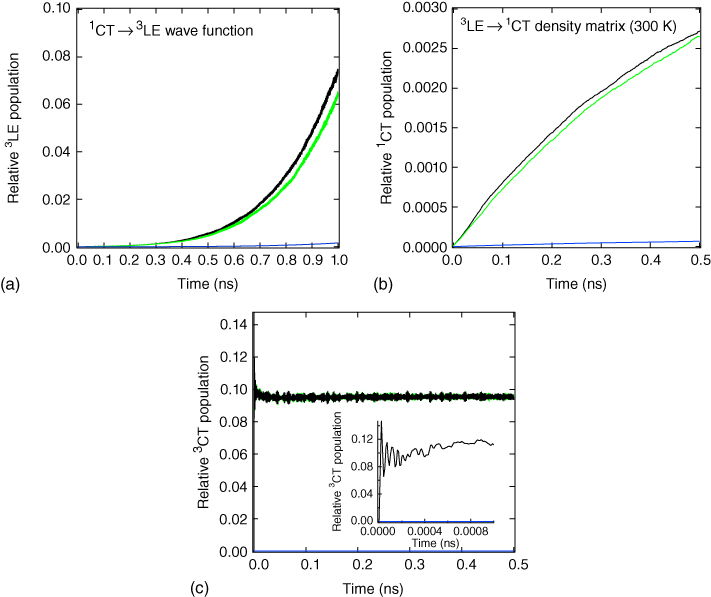

Using the Hamiltonian outlined above and described in detail in Refs. [32], [73], Figure 9.7 shows the relative diabatic state populations during the first picosecond after photoexcitation into the S$_3$ state for the model Hamiltonian, without (Figure 9.7a) and with (Figure 9.7b) SOC. In both cases the dynamics begins with an ultrafast IC from S$_3$ to the S$_2$ and S$_1$ states. The electronic energy of the wave packet on S$_3$ is then converted into kinetic energy on the lower states, resulting in an incoherent distribution of vibrationally hot levels. Initially (up to 200 fs) the two states (S$_2$ and S$_1$) can be said to be in equilibrium; however, as the dynamics proceeds, the population resides increasingly in the S$_1$ state. When fitted with a biexponential, the first kinetic component of the S$_3$ state is $\sim$100 fs, in good agreement with experiment [82], [84].

Figure 9.7 (a) Relative diabatic state populations of S$_1$ (cyan), S$_2$ (purple), and S$_3$ (black) for 1 ps following photoexcitation (no spin–orbit coupling). (b) Relative diabatic state populations of S$_1$ (cyan), S$_2$ (purple), S$_3$ (black), and the triplet (T$_{1\text{–}4}$) states (red), for 1 ps following photoexcitation (with spin–orbit coupling).

Source: Ref. [32]. Reproduced with permission of American Chemical Society.

Figure 9.7b shows that when SOCMEs between the low‐lying singlet and triplet states are included, we observe $\sim$80% of the wave packet populating the triplet states, consistent with ultrafast ISC. Importantly, this cannot occur via SOC between S$_1$ and T$_1$, as previously assumed in the literature, due to a relatively large energy gap ($\sim$0.2 eV) and small SOCMEs. Instead, ISC initially occurs via S$_1 \rightarrow$ T$_2$ and S$_1 \rightarrow$ T$_3$, due to the strong SOC and degeneracies of these three states along the PJT ($\nu_{21}$) mode (see Figure 9.5b) [73]. Here ISC occurs via a dynamical effect, by traversing a region where the coupled singlet and triplet states are degenerate, leading to multiple efficient ultrafast ISC channels. The excited‐state vibrational motion therefore plays a crucial part in this process.
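Why degeneracy matters so much for the transfer efficiency can be seen from a static two‐level estimate. The sketch below uses the standard Rabi formula for two levels separated by an energy gap and coupled by a constant matrix element; it is not the spin–vibronic wave packet dynamics discussed above, which includes nuclear motion. The coupling value `soc` is a hypothetical placeholder, and the same value is used for both gaps purely to isolate the effect of the gap.

```python
import math

HBAR_EV_FS = 0.6582119569  # reduced Planck constant in eV*fs


def max_transfer(coupling, gap):
    """Maximum population transferred between two coupled levels (Rabi formula)."""
    return 4.0 * coupling**2 / (gap**2 + 4.0 * coupling**2)


def transferred_population(t_fs, coupling, gap):
    """Population of the initially empty level at time t_fs (energies in eV)."""
    rabi = math.sqrt(gap**2 + 4.0 * coupling**2) / (2.0 * HBAR_EV_FS)
    return max_transfer(coupling, gap) * math.sin(rabi * t_fs) ** 2


soc = 0.03  # hypothetical coupling in eV, chosen only for illustration
for gap in (0.0, 0.2):  # degenerate levels versus a 0.2 eV gap
    print(f"gap = {gap:.1f} eV: maximum transfer = {max_transfer(soc, gap):.3f}")
```

For degenerate levels the transfer can be complete, whereas a 0.2 eV gap limits it to a small fraction for the same coupling. In the real system the wave packet passes through the degeneracy region only transiently, so this estimate indicates the trend rather than the actual ISC yield.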

In the context of TADF, and especially rISC, it is stressed that the present dynamics describing ultrafast ISC would not influence the $k_{\mathrm{rISC}}$ rate, as it derives from dynamics in the S$_1$ state. For rISC, the system would not only be in the triplet state but also in the relaxed geometry, and therefore the rate of rISC can be described using the SOCME between S$_1$ and T$_1$ at this geometry. However, these findings of ultrafast ISC have significant implications if one wishes to apply these complexes for solar energy conversion. Indeed, Huang et al. [87] demonstrated that when attached to TiO$_2$, charge injection for a related Cu(I)–phenanthroline complex from the $^1$MLCT is two orders of magnitude faster than from the $^3$MLCT. Consequently, for efficient charge injection one needs to restrict ISC.