Performance Bounds and Statistical Analysis of DOA Estimation

Jean Pierre Delmas, TELECOM SudParis, Département CITI, CNRS UMR 5157, Evry Cedex, France, [email protected]

Abstract

The aim of this chapter is to provide a unified methodology to study the theoretical statistical performance of arbitrary DOA estimation and source number detection methods and to tackle the resolvability of closely space sources. A particular attention is given to the asymptotic distribution, mean and covariance of DOA estimates and to the Cramer Rao and asymptotically minimum variance bounds. To illustrate this general framework, several examples are detailed such as the conventional MUSIC algorithm, the MDL criterion and the angular resolution limit based on the detection theory. Furthermore robustness with respect to the Gaussian distribution, the independence and narrow band assumptions, and array modeling errors are also considered.

Keywords

Performance analysis; Cramer Rao Bound (CRB); Maximum likelihood (ML) estimation; Subspace-based algorithms; Second-order algorithms; Minimum description length (MDL) criterion; Angular resolution limit

3.16.1 Introduction

Over the last three decades, many direction of arrival (DOA) estimation and source number detection methods have been proposed in the literature. Early studies on statistical performance were only based on extensive Monte Carlo experiments. Analytical performance evaluations, that allow one to evaluate the expected performance, as pioneering by Kaveh and Barabell [1], have since attracted much excellent research.

The earlier works were devoted to the statistical performance analysis of subspace-based algorithms. In particular the celebrated MUSIC algorithm has been extensively investigated (see, e.g., [2–5] among many others). But curiously, these works were based on first-order perturbations of the eigenvectors and eigenvalues of the sample covariance matrix, and thus involved very complicated derivations. Subsequently, Krim et al. [6] carried out a performance analysis of two eigenstructure-based DOA estimation algorithms, using a series expansion of the orthogonal projectors on the signal and noise subspaces, allowing considerable simplification of the previous approaches. Motivated by this point of view, several unified analyses of subspace-based algorithms have been presented (see, e.g., [7–9]). In parallel to these works, a particular attention has been paid to the statistical performance of the exact and approximative maximum likelihood algorithms (ML), in relation to the celebrated Cramer-Rao bound (see, e.g., [10,11], and the tutorial [12] with the references therein).

The statistical performance analysis of the difficult and critical problem of the detection of the number of sources impinging on an array, has been based on principally standard techniques of the statistical detection literature. In particular, the information theoretical criteria and especially the minimum description length (MDL), as popularized in the signal processing literature by [13], have been analyzed (see, e.g., [14–16]). Related to the DOA estimation accuracy and to the detection of the number of sources, the resolvability of closely spaced signals in terms of their parameters of interest have been also extensively studied (see, e.g., [17,18]).

The aim of this chapter is not to give a survey of all performance analysis of DOA estimation and source detection methods that have appeared in the literature, but rather, to provide a unified methodology introduced in [19] and then specialized to second-order in [20] to study the theoretical statistical performance of arbitrary DOA estimation and source number detection methods and to tackle the resolvability of closely space sources. To illustrate this framework, several examples are detailed such as the conventional MUSIC algorithm, the MDL criterion and the angular resolution limit based on the detection theory.

This chapter is organized as follows. Section 3.16.2 presents the mathematical model of the array output and introduce the basic assumptions. General statistical tools for performance bounds and statistical analysis of DOA estimation algorithms are given in Section 3.16.3 based on a functional approach providing a common unifying framework. Then, Section 3.16.4 embarks on statistical performance analysis of beamforming-based, maximum likelihood and second-order algorithms with a particular attention paid to the subspace-based algorithms. In particular the robustness w.r.t. the Gaussian distribution, the independence and narrowband assumptions, and array modeling errors are considered. Finally some elements of statistical performance analysis of high-order algorithms complete this section. A glimpse into the detection of the number of sources is given in Section 3.16.5 where a performance analysis of the minimum description length (MDL) criterion is derived. Finally, Section 3.16.6 is devoted to criteria for resolving two closely spaced sources.

The following notations are used throughout this chapter: ![]() and

and ![]() denote quantities such that

denote quantities such that ![]() and

and ![]() is bounded in the neighborhood of

is bounded in the neighborhood of ![]() , respectively.

, respectively.

3.16.2 Models and basic assumption

3.16.2.1 Parametric array model

Consider an array of ![]() sensors arranged in an arbitrary geometry that receives the waveforms generated by

sensors arranged in an arbitrary geometry that receives the waveforms generated by ![]() point sources (electromagnetic or acoustic). The output of each sensor is modeled as the response of a linear time-invariant bandpass system of bandwidth

point sources (electromagnetic or acoustic). The output of each sensor is modeled as the response of a linear time-invariant bandpass system of bandwidth ![]() . The impulse response of each sensor to a signal impinging on the array depends on the physical antenna structure, the receiver electronics and other antennas in the array through mutual coupling. The complex amplitudes

. The impulse response of each sensor to a signal impinging on the array depends on the physical antenna structure, the receiver electronics and other antennas in the array through mutual coupling. The complex amplitudes ![]() of these sources w.r.t. a carrier frequency

of these sources w.r.t. a carrier frequency ![]() are assumed to vary very slowly relative to the propagation time across the array (more precisely, the array aperture measured in wavelength, is much less than the inverse relative bandwidth

are assumed to vary very slowly relative to the propagation time across the array (more precisely, the array aperture measured in wavelength, is much less than the inverse relative bandwidth ![]() ). This so-called narrowband assumption allows the time delays

). This so-called narrowband assumption allows the time delays ![]() of the

of the ![]() th source at the

th source at the ![]() th sensor, relative to some fixed reference point, to be modeled as a simple phase-shift of the carrier frequency. If

th sensor, relative to some fixed reference point, to be modeled as a simple phase-shift of the carrier frequency. If ![]() is the complex envelope of the additive noise, the complex envelope of the signals collected at the output of the sensors is given by applying the superposition principle for linear sensors by:

is the complex envelope of the additive noise, the complex envelope of the signals collected at the output of the sensors is given by applying the superposition principle for linear sensors by:

(16.1)

(16.1)

where ![]() and

and ![]() may include generally azimuth, elevation, range and polarization of the

may include generally azimuth, elevation, range and polarization of the ![]() th source. However, we will here assume that there is only one parameter per source, referred as the direction of arrival (DOA)

th source. However, we will here assume that there is only one parameter per source, referred as the direction of arrival (DOA) ![]() .

. ![]() is the steering vector associated with the

is the steering vector associated with the ![]() th source. The array manifold, defined as the set

th source. The array manifold, defined as the set ![]() for some region

for some region ![]() in DOA space, is perfectly known, either analytically or by measuring it in the field. It is further required for performance analysis that

in DOA space, is perfectly known, either analytically or by measuring it in the field. It is further required for performance analysis that ![]() be continuously twice differentiable w.r.t.

be continuously twice differentiable w.r.t. ![]() .

. ![]() is the

is the ![]() steering matrix with

steering matrix with ![]() .

.

To illustrate the parameterization of the steering vector ![]() , assume that the sources are in the far field of the array, and that the medium is non-dispersive, so that the waveforms can be approximated as planar. In this case, the

, assume that the sources are in the far field of the array, and that the medium is non-dispersive, so that the waveforms can be approximated as planar. In this case, the ![]() th component of

th component of ![]() is simply

is simply ![]() where

where ![]() is the directivity gain of the

is the directivity gain of the ![]() th sensor,

th sensor, ![]() ,

, ![]() represents the speed of propagation,

represents the speed of propagation, ![]() is a unit vector pointing in the direction of propagation and

is a unit vector pointing in the direction of propagation and ![]() is the position of the

is the position of the ![]() th sensor relative the origin of the different delays.

th sensor relative the origin of the different delays.

The by far most studied sensor geometry is that of uniform linear array (ULA), where the ![]() sensors are assumed to be identical and omnidirectional over the DOA range of interest. Referenced w.r.t. the first sensor that is used as the origin,

sensors are assumed to be identical and omnidirectional over the DOA range of interest. Referenced w.r.t. the first sensor that is used as the origin, ![]() and

and ![]() , where

, where ![]() is the wavelength. To avoid any ambiguity,

is the wavelength. To avoid any ambiguity, ![]() must be less than or equal to

must be less than or equal to ![]() . The standard ULA has

. The standard ULA has ![]() that ensures a maximum accuracy on the estimation of

that ensures a maximum accuracy on the estimation of ![]() . In this case

. In this case

![]() (16.2)

(16.2)

3.16.2.2 Signal assumptions and problem formulation

Each vector observation ![]() is called a snapshot of the array output. Let the process

is called a snapshot of the array output. Let the process ![]() be observed at

be observed at ![]() time instants

time instants ![]() .

. ![]() is often sampled at a slow sampling frequency

is often sampled at a slow sampling frequency ![]() compared to the bandwidth of

compared to the bandwidth of ![]() for which

for which ![]() are independent. Temporal correlation between successive snapshots is generally not a problem, but implies that a larger number

are independent. Temporal correlation between successive snapshots is generally not a problem, but implies that a larger number ![]() of snapshots is needed for the same performance. We will prove in Section 3.16.4.3 that the parameter that fixes the performance is not

of snapshots is needed for the same performance. We will prove in Section 3.16.4.3 that the parameter that fixes the performance is not ![]() , but the observation interval

, but the observation interval ![]() . The signals

. The signals ![]() and

and ![]() are assumed independent.1 For well calibrated arrays,

are assumed independent.1 For well calibrated arrays, ![]() is often assumed to be dominated by thermal noise in the receivers, which can be well modeled as zero-mean temporally and spatially white circular Gaussian random process. In this case,

is often assumed to be dominated by thermal noise in the receivers, which can be well modeled as zero-mean temporally and spatially white circular Gaussian random process. In this case, ![]() and

and ![]() , for which the spatial covariance and spatial complementary covariance matrices are given by

, for which the spatial covariance and spatial complementary covariance matrices are given by ![]() and

and ![]() , respectively. A common, alternative model assumes that

, respectively. A common, alternative model assumes that ![]() is spatially correlated where

is spatially correlated where ![]() is known up to a scalar multiplicative term

is known up to a scalar multiplicative term ![]() , i.e.,

, i.e., ![]() where

where ![]() is a known definite positive matrix. In this case,

is a known definite positive matrix. In this case, ![]() can be pre-multiplied by an inverse square-root factor

can be pre-multiplied by an inverse square-root factor ![]() of

of ![]() , which renders the resulting noise spatially white and preserves model (16.1) by replacing the steering vectors

, which renders the resulting noise spatially white and preserves model (16.1) by replacing the steering vectors ![]() by

by ![]() .

.

Two kind of assumptions are used for ![]() . In the first one, called stochastic or unconditional model (see, e.g., [10,11]),

. In the first one, called stochastic or unconditional model (see, e.g., [10,11]), ![]() are assumed to be zero-mean random variables for which the most commonly used distribution is the circular Gaussian one with spatial covariance

are assumed to be zero-mean random variables for which the most commonly used distribution is the circular Gaussian one with spatial covariance ![]() and spatial complementary covariance

and spatial complementary covariance ![]() .

. ![]() is nonsingular for not fully correlated sources (called also noncoherent) or near-singular for highly correlated sources. In the case of coherent sources (specular multipath or smart jamming, where some signals impinging on the array of sensors can be sums of scaled and delayed versions of the others),

is nonsingular for not fully correlated sources (called also noncoherent) or near-singular for highly correlated sources. In the case of coherent sources (specular multipath or smart jamming, where some signals impinging on the array of sensors can be sums of scaled and delayed versions of the others), ![]() is singular. In this chapter

is singular. In this chapter ![]() is usually assumed nonsingular. For these assumptions, the snapshots

is usually assumed nonsingular. For these assumptions, the snapshots ![]() are zero-mean complex circular Gaussian distributed with covariance matrix

are zero-mean complex circular Gaussian distributed with covariance matrix

![]() (16.3)

(16.3)

This circular Gaussian assumption lies not only in the fact that circular Gaussian data are rather frequently encountered in applications, but also because optimal detection and estimation algorithms are much easier to deduce under this assumption. Furthermore, as will be discussed in Section 3.16.4, under rather general conditions and in large samples [21], the Gaussian CRB is the largest of all CRB matrices corresponding to different distributions of the sources of identical covariance matrix ![]() . This stochastic model can be extended by assuming that

. This stochastic model can be extended by assuming that ![]() is arbitrarily distributed with finite fourth-order moments [20] including the case where

is arbitrarily distributed with finite fourth-order moments [20] including the case where ![]() associated with the second-order noncircular distributions.

associated with the second-order noncircular distributions.

A common alternative assumption, called deterministic or conditional model (see, e.g., [10,11]) is used when the distribution of ![]() is unknown or/and clearly non-Gaussian, for example in radar and radio communications. Here

is unknown or/and clearly non-Gaussian, for example in radar and radio communications. Here ![]() is nonrandom, i.e., the sequence

is nonrandom, i.e., the sequence ![]() is frozen in all realizations of the random snapshots

is frozen in all realizations of the random snapshots ![]() . Consequently,

. Consequently, ![]() is considered as a complex unknown parameter in

is considered as a complex unknown parameter in ![]() . For this assumption, the snapshots

. For this assumption, the snapshots ![]() are complex circular Gaussian distributed with mean

are complex circular Gaussian distributed with mean ![]() and covariance matrix

and covariance matrix ![]() .

.

With these preliminaries, the main DOA problem can now be formulated as follows: Given the observations, ![]() and the described model (16.1), detect the number

and the described model (16.1), detect the number ![]() of incoming sources and estimate their DOAs

of incoming sources and estimate their DOAs ![]() .

.

3.16.2.3 Parameter identifiability

Once the distribution of the observations ![]() has been fixed, the question of the identifiability of the parameters (including the DOA

has been fixed, the question of the identifiability of the parameters (including the DOA ![]() ) must be raised. For example, under the assumption of independent, zero-mean circular Gaussian distributed observations, all information in the measured data is contained in the covariance matrix

) must be raised. For example, under the assumption of independent, zero-mean circular Gaussian distributed observations, all information in the measured data is contained in the covariance matrix ![]() (16.3). The question of parameter identifiability is thus reduced to investigating under which conditions

(16.3). The question of parameter identifiability is thus reduced to investigating under which conditions ![]() determines the unknown parameters. Thus, if no a priori information on

determines the unknown parameters. Thus, if no a priori information on ![]() is available, the unknown parameter

is available, the unknown parameter ![]() of

of ![]() contains the following

contains the following ![]() real-valued parameters:

real-valued parameters:

(16.4)

(16.4)

and the parameter ![]() is identifiable if and only if

is identifiable if and only if ![]() . To ensure this identifiability, it is necessary that

. To ensure this identifiability, it is necessary that ![]() be full column rank for any collection of

be full column rank for any collection of ![]() , distinct

, distinct ![]() . An array satisfying this assumption is said to be unambiguous. Notice that this requirement is problem-dependent and, therefore, has to be established for the specific array under study. For example, due to the Vandermonde structure of

. An array satisfying this assumption is said to be unambiguous. Notice that this requirement is problem-dependent and, therefore, has to be established for the specific array under study. For example, due to the Vandermonde structure of ![]() in the ULA case (16.2), it is straightforward to prove that the ULA is unambiguous if

in the ULA case (16.2), it is straightforward to prove that the ULA is unambiguous if ![]() . In the case where the rank of

. In the case where the rank of ![]() , that is the dimension of the linear space spanned by

, that is the dimension of the linear space spanned by ![]() is known and equal to

is known and equal to ![]() , different conditions of identifiability has been given in the literature. In particular, the condition

, different conditions of identifiability has been given in the literature. In particular, the condition

![]() (16.5)

(16.5)

has been proved to be sufficient [22] and practically necessary [23].

When ![]() are not circularly Gaussian distributed, the identifiability condition is generally much more involved. For example, when

are not circularly Gaussian distributed, the identifiability condition is generally much more involved. For example, when ![]() is noncircularly Gaussian distributed,

is noncircularly Gaussian distributed, ![]() is noncircularly Gaussian distributed as well with complementary covariance

is noncircularly Gaussian distributed as well with complementary covariance

![]() (16.6)

(16.6)

and the distribution of the observations are now characterized by both ![]() and

and ![]() . Consequently, the condition of identifiability will be modified w.r.t. the circular case given in (16.5). This condition has not been presented in the literature, except for the particular case of uncorrelated and rectilinear (called also maximally improper) sources impinging on a ULA for which, the augmented covariance matrix

. Consequently, the condition of identifiability will be modified w.r.t. the circular case given in (16.5). This condition has not been presented in the literature, except for the particular case of uncorrelated and rectilinear (called also maximally improper) sources impinging on a ULA for which, the augmented covariance matrix ![]() with

with ![]() is given by

is given by

(16.7)

(16.7)

where ![]() with

with ![]() is the second-order phase of noncircularity defined by

is the second-order phase of noncircularity defined by

![]() (16.8)

(16.8)

Due to the Vandermonde-like structure of the extended steering matrix ![]() , the condition of identifiability is now here

, the condition of identifiability is now here ![]() .

.

Note that when ![]() is discrete distributed (for example when

is discrete distributed (for example when ![]() are symbols

are symbols ![]() of a digital modulation taking

of a digital modulation taking ![]() different values), the condition of identifiability is nontrivial despite the distribution of

different values), the condition of identifiability is nontrivial despite the distribution of ![]() is a mixture of

is a mixture of ![]() circular Gaussian distributions of mean

circular Gaussian distributions of mean ![]() and covariance

and covariance ![]() .

.

3.16.3 General statistical tools for performance analysis of DOA estimation

3.16.3.1 Performance analysis of a specific algorithm

3.16.3.1.1 Functional analysis

To study the statistical performance of any DOA’s estimator (often called an algorithm as a succession of different steps), it is fruitful to adopt a functional analysis that consists in recognizing that the whole process of constructing the estimate ![]() is equivalent to defining a functional relation linking this estimate to the measurements from which it is inferred. As generally

is equivalent to defining a functional relation linking this estimate to the measurements from which it is inferred. As generally ![]() are functions of some statistics

are functions of some statistics ![]() (assumed complex-valued vector in

(assumed complex-valued vector in ![]() ) deduced from

) deduced from ![]() , we have the following mapping:

, we have the following mapping:

![]() (16.9)

(16.9)

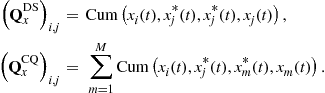

Many often, the statistics ![]() are sample moments or cumulants of

are sample moments or cumulants of ![]() . The most common ones are second-order sample moments of

. The most common ones are second-order sample moments of ![]() deduced from the sample covariance and complementary covariance matrices

deduced from the sample covariance and complementary covariance matrices ![]() and

and ![]() , respectively. For non-Gaussian symmetric sources distributions, even sample high-order cumulants of

, respectively. For non-Gaussian symmetric sources distributions, even sample high-order cumulants of ![]() are also used, in particular the fourth-order sample cumulants deduced from the sample quadrivariance matrices

are also used, in particular the fourth-order sample cumulants deduced from the sample quadrivariance matrices ![]() ,

, ![]() and

and ![]() where

where ![]() ,

, ![]() and

and ![]() , estimated through the associated fourth and second-order sample moments. In these cases, the algorithms are called second-order, high-order and fourth-order algorithms, respectively.

, estimated through the associated fourth and second-order sample moments. In these cases, the algorithms are called second-order, high-order and fourth-order algorithms, respectively.

The statistic ![]() generally satisfies two conditions:

generally satisfies two conditions:

i. ![]() converges almost surely (from the strong law of large numbers) to

converges almost surely (from the strong law of large numbers) to ![]() when

when ![]() tends to infinity, that is a function of the DOAs and other parameters denoted

tends to infinity, that is a function of the DOAs and other parameters denoted ![]() .

.

ii. The DOAs ![]() are identifiable from

are identifiable from ![]() , i.e., there exists a mapping

, i.e., there exists a mapping ![]() .

.

Furthermore, we assume that the algorithm ![]() satisfies

satisfies ![]() for all

for all ![]() . Consequently the functional dependence

. Consequently the functional dependence ![]() constitutes a particular extension of the mapping

constitutes a particular extension of the mapping ![]() in the neighborhood of

in the neighborhood of ![]() that characterizes all algorithm based on the statistic

that characterizes all algorithm based on the statistic ![]() .

.

Note that for circular Gaussian stochastic and deterministic models of the sources, the likelihood functions of the measurements depend on ![]() through only the sample covariance

through only the sample covariance ![]() , and therefore the algorithms called respectively stochastic maximum likelihood (SML) and deterministic maximum likelihood (DML) algorithms are second-order algorithms [12]. The SML algorithm has been extended to noncircular Gaussian sources, for which the ML algorithm is built from both

, and therefore the algorithms called respectively stochastic maximum likelihood (SML) and deterministic maximum likelihood (DML) algorithms are second-order algorithms [12]. The SML algorithm has been extended to noncircular Gaussian sources, for which the ML algorithm is built from both ![]() and

and ![]() [24].

[24].

However, due to their complexity, many suboptimal algorithms with much lower computational requirements have been proposed in the literature. Among them, many algorithms are based on the noise (or signal) orthogonal projector ![]() onto the noise (or signal) subspace associated with the sample covariance

onto the noise (or signal) subspace associated with the sample covariance ![]() . These algorithms are called subspace-based algorithms. The most celebrated is the MUSIC algorithm that offers a good trade-off between performance and computational costs. Its statistical performance has been thoroughly studied in the literature (see, e.g., [1,3,25,26]). In these cases, the mapping (16.9) becomes

. These algorithms are called subspace-based algorithms. The most celebrated is the MUSIC algorithm that offers a good trade-off between performance and computational costs. Its statistical performance has been thoroughly studied in the literature (see, e.g., [1,3,25,26]). In these cases, the mapping (16.9) becomes

![]() (16.10)

(16.10)

where the mapping ![]() characterizes the specific subspace-based algorithm. Some of these algorithms have been extended for noncircular sources through subspace-based algorithms based on

characterizes the specific subspace-based algorithm. Some of these algorithms have been extended for noncircular sources through subspace-based algorithms based on ![]() or

or ![]() where

where ![]() and

and ![]() are the orthogonal projectors onto the noise subspace associated with the sample complementary covariance

are the orthogonal projectors onto the noise subspace associated with the sample complementary covariance ![]() and the sample augmented covariance

and the sample augmented covariance ![]()

![]() with

with ![]() , respectively [27].

, respectively [27].

3.16.3.1.2 Asymptotic distribution of statistics

Due to the nonlinearity of model (16.1) w.r.t. the DOA’s parameter, the performance analysis of detectors for the number of sources and the DOA’s estimation procedures are not possible for a finite number ![]() of snapshots. But in many cases, asymptotic performance analyses are available when the number

of snapshots. But in many cases, asymptotic performance analyses are available when the number ![]() of measurements, the signal-to-noise ratio (SNR) (see, e.g., [28]) or the number of sensors

of measurements, the signal-to-noise ratio (SNR) (see, e.g., [28]) or the number of sensors ![]() converges to infinity (see, e.g., [29]). In practice

converges to infinity (see, e.g., [29]). In practice ![]() , SNR and

, SNR and ![]() are naturally finite and thus available results in the asymptotic regime are approximations, whose domain of validity are specified through Monte Carlo simulations. We will consider in this chapter, only asymptotic properties w.r.t.

are naturally finite and thus available results in the asymptotic regime are approximations, whose domain of validity are specified through Monte Carlo simulations. We will consider in this chapter, only asymptotic properties w.r.t. ![]() and thus, the presented results will be only valid in practice when

and thus, the presented results will be only valid in practice when ![]() . When

. When ![]() is of the same order of magnitude than

is of the same order of magnitude than ![]() , although very large, the approximations given by the asymptotic regime w.r.t.

, although very large, the approximations given by the asymptotic regime w.r.t. ![]() are generally very bad.

are generally very bad.

To derive the asymptotic distribution, covariance and bias of estimated DOAs w.r.t. the number ![]() of measurements, we first need to specify the asymptotic distribution of some statistics

of measurements, we first need to specify the asymptotic distribution of some statistics ![]() .

.

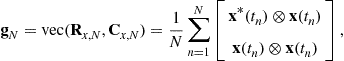

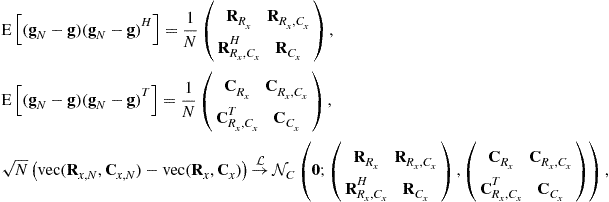

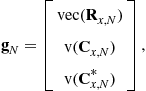

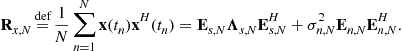

For the second-order statistics

where ![]() and

and ![]() denote, respectively, the vectorization operator that turns a matrix into a vector by stacking the columns of the matrix one below another and the standard Kronecker product of matrices, closed-form expressions of the covariance

denote, respectively, the vectorization operator that turns a matrix into a vector by stacking the columns of the matrix one below another and the standard Kronecker product of matrices, closed-form expressions of the covariance ![]() and complementary covariance

and complementary covariance ![]() matrices (where

matrices (where ![]() for short), and their asymptotic distributions2 have been given [30] for independent measurements, fourth-order arbitrary distributed sources and Gaussian distributed noise:

for short), and their asymptotic distributions2 have been given [30] for independent measurements, fourth-order arbitrary distributed sources and Gaussian distributed noise:

(16.11)

(16.11)

with

![]() (16.12)

(16.12)

where ![]() for short and

for short and ![]() denotes the vec-permutation matrix which transforms

denotes the vec-permutation matrix which transforms ![]() to

to ![]() for any square matrix

for any square matrix ![]() .

. ![]() ,

, ![]() , and

, and ![]() are defined as for

are defined as for ![]() defined previously and

defined previously and ![]() ,

, ![]() ,

, ![]() . Note that the asymptotic distribution of

. Note that the asymptotic distribution of ![]() has been extended to non independent measurements with arbitrary distributed sources and noise of finite fourth-order moments with

has been extended to non independent measurements with arbitrary distributed sources and noise of finite fourth-order moments with ![]() arbitrarily structured in [20,31].

arbitrarily structured in [20,31].

Consider now the noise orthogonal projector ![]() . Its asymptotic distribution is deduced from the standard first-order perturbation for orthogonal projectors [32] (see also [6]):

. Its asymptotic distribution is deduced from the standard first-order perturbation for orthogonal projectors [32] (see also [6]):

![]() (16.13)

(16.13)

where ![]() ,

, ![]() and

and ![]() is the Moore-Penrose inverse of

is the Moore-Penrose inverse of ![]() . The remainder in (16.13) is a standard

. The remainder in (16.13) is a standard ![]() for a realization of the random matrix

for a realization of the random matrix ![]() , but an

, but an ![]() if

if ![]() is considered as random. The relation (16.13) proves that

is considered as random. The relation (16.13) proves that ![]() is differentiable w.r.t.

is differentiable w.r.t. ![]() in the neighborhood of

in the neighborhood of ![]() and its differential matrix (called also Jacobian matrix) evaluated at

and its differential matrix (called also Jacobian matrix) evaluated at ![]() is

is

![]() (16.14)

(16.14)

Then using the standard theorem of continuity (see, e.g., [33, Theorem B, p. 124]) on regular functions of asymptotically Gaussian statistics, the asymptotic behaviors of ![]() and

and ![]() are directly related:

are directly related:

![]() (16.15)

(16.15)

where ![]() is given for independent measurements, fourth-order arbitrary distributed sources and Gaussian distributed noise, using (16.12) by

is given for independent measurements, fourth-order arbitrary distributed sources and Gaussian distributed noise, using (16.12) by

![]() (16.16)

(16.16)

with ![]() . We see that

. We see that ![]() does not depend on

does not depend on ![]() and the quadrivariances of the sources. Consequently, all subspace-based algorithms are robust to the distribution and to the noncircularity of the sources; i.e., the asymptotic performances are those of the standard complex circular Gaussian case. Note that the asymptotic distribution of

and the quadrivariances of the sources. Consequently, all subspace-based algorithms are robust to the distribution and to the noncircularity of the sources; i.e., the asymptotic performances are those of the standard complex circular Gaussian case. Note that the asymptotic distribution of ![]() and

and ![]() have also been derived under the same assumptions in [27], where it is proved that they do not depend on the quadrivariances of the sources, as well. The asymptotic distributions of

have also been derived under the same assumptions in [27], where it is proved that they do not depend on the quadrivariances of the sources, as well. The asymptotic distributions of ![]() ,

, ![]() and

and ![]() will allow us to derive the statistical performance of arbitrary subspace-based algorithms based on these orthogonal projectors in Section 3.16.4.4.

will allow us to derive the statistical performance of arbitrary subspace-based algorithms based on these orthogonal projectors in Section 3.16.4.4.

Note that the second-order expansion of ![]() w.r.t.

w.r.t. ![]() has been used in [6] to analyze the behavior of the root-MUSIC and root-min-norm algorithms dedicated to ULA, but is useless as far as we are concerned by the asymptotic distribution of the DOAs alone, as it has been specified in [27], where an extension of the root-MUSIC algorithm to noncircular sources has been proposed.

has been used in [6] to analyze the behavior of the root-MUSIC and root-min-norm algorithms dedicated to ULA, but is useless as far as we are concerned by the asymptotic distribution of the DOAs alone, as it has been specified in [27], where an extension of the root-MUSIC algorithm to noncircular sources has been proposed.

Finally, consider now the asymptotic distribution of the signal eigenvalues of ![]() that is useful for the statistical performance analysis of information theoretic criteria (whose MDL criterion popularized by Wax and Kailath [13] is one of the most successful), for the detection of the number

that is useful for the statistical performance analysis of information theoretic criteria (whose MDL criterion popularized by Wax and Kailath [13] is one of the most successful), for the detection of the number ![]() of sources. Let

of sources. Let ![]() denote the eigenvalues of

denote the eigenvalues of ![]() , ordered in decreasing order and

, ordered in decreasing order and ![]() the associated eigenvectors (defined up to a multiplicative unit modulus complex number) of the signal subspace. Then, suppose that for a “small enough” perturbation

the associated eigenvectors (defined up to a multiplicative unit modulus complex number) of the signal subspace. Then, suppose that for a “small enough” perturbation ![]() , the largest

, the largest ![]() associated eigenvalues of the sample covariance

associated eigenvalues of the sample covariance ![]() are

are ![]() . It is proved in [14], extending the work by Kaveh and Barabell [1] to arbitrary distributed independent measurements (16.1) with finite fourth-order moment, not necessarily circular and Gaussian, the following convergence in distribution:

. It is proved in [14], extending the work by Kaveh and Barabell [1] to arbitrary distributed independent measurements (16.1) with finite fourth-order moment, not necessarily circular and Gaussian, the following convergence in distribution:

![]() (16.17)

(16.17)

with ![]() ,

, ![]() , and

, and ![]() for

for ![]() ,

, ![]() is the Kronecker delta,

is the Kronecker delta, ![]() and

and ![]() . In contrast to the circular Gaussian distribution [1], we see that the estimated eigenvalues

. In contrast to the circular Gaussian distribution [1], we see that the estimated eigenvalues ![]() are no longer asymptotically mutually independent. Furthermore, it is proved in [14] that for

are no longer asymptotically mutually independent. Furthermore, it is proved in [14] that for ![]() :

:

(16.18)

(16.18)

![]() (16.19)

(16.19)

We note that these results are also valid for the augmented covariance matrix ![]() where

where ![]() and

and ![]() are replaced by

are replaced by ![]() and the rank of

and the rank of ![]() , respectively.

, respectively.

3.16.3.1.3 Asymptotic distribution of estimated DOA

In the following, we consider arbitrary DOA algorithms that are in practice “regular” enough.3 More specifically, we assume that the mapping ![]() is

is ![]() -differentiable w.r.t.

-differentiable w.r.t. ![]() in the neighborhood of

in the neighborhood of ![]() , i.e.,

, i.e.,

![]() (16.20)

(16.20)

with ![]() and

and ![]() matrix

matrix ![]() is the

is the ![]() -differential matrix (Jacobian) of the mapping

-differential matrix (Jacobian) of the mapping ![]() evaluated at

evaluated at ![]() . In practice, this matrix is derived from the chain rule by decomposing the algorithm as successive simpler mappings, and in each of these mapping, this matrix is simply deduced from first-order expansions. Then, applying a simple extension of the standard theorem of continuity [33, Theorem B, p. 124] (also called

. In practice, this matrix is derived from the chain rule by decomposing the algorithm as successive simpler mappings, and in each of these mapping, this matrix is simply deduced from first-order expansions. Then, applying a simple extension of the standard theorem of continuity [33, Theorem B, p. 124] (also called ![]() -method), it is straightforwardly proved the following convergence in distribution:

-method), it is straightforwardly proved the following convergence in distribution:

![]() (16.21)

(16.21)

where ![]() and

and ![]() are the covariance and the complementary covariance matrices of the asymptotic distribution of the statistics

are the covariance and the complementary covariance matrices of the asymptotic distribution of the statistics ![]() . We note that for subspace-based algorithms and second-order algorithms based on

. We note that for subspace-based algorithms and second-order algorithms based on ![]() or

or ![]() ,

, ![]() (because the orthogonal projector matrices and the covariance matrices are Hermitian structured), and generally for statistics

(because the orthogonal projector matrices and the covariance matrices are Hermitian structured), and generally for statistics ![]() that contain all conjugate of its components, the mapping

that contain all conjugate of its components, the mapping ![]() is

is ![]() -differentiable w.r.t.

-differentiable w.r.t. ![]() in the neighborhood of

in the neighborhood of ![]() and (16.20) and (16.21) become respectively:

and (16.20) and (16.21) become respectively:

![]() (16.22)

(16.22)

where now, ![]() is the

is the ![]() -differential matrix of the mapping

-differential matrix of the mapping ![]() evaluated at

evaluated at ![]() and

and

![]() (16.23)

(16.23)

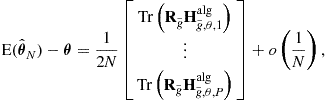

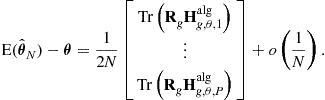

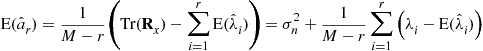

3.16.3.1.4 Asymptotic covariance and bias

Under additional regularities of the algorithm ![]() , that are generally satisfied, the covariance of

, that are generally satisfied, the covariance of ![]() is given by

is given by

![]() (16.24)

(16.24)

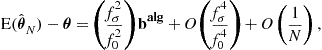

Using a second-order expansion of ![]() and

and ![]() -calculus, where

-calculus, where ![]() is assumed to be twice-

is assumed to be twice-![]() -differentiable, the bias is given by

-differentiable, the bias is given by

(16.25)

(16.25)

where  is the complex augmented Hessian matrix [34, A2.3] of the

is the complex augmented Hessian matrix [34, A2.3] of the ![]() th component of the function

th component of the function ![]() at point

at point ![]() and

and  is the augmented covariance of the asymptotic distribution of

is the augmented covariance of the asymptotic distribution of ![]() . In the particular case where

. In the particular case where ![]() is twice-

is twice-![]() -differentiable (see, e.g., the examples given for

-differentiable (see, e.g., the examples given for ![]() -differentiable algorithms (16.22)), i.e.,

-differentiable algorithms (16.22)), i.e.,

(16.26)

(16.26)

(16.25) reduces to

(16.27)

(16.27)

We note that relations (16.24), (16.25) and (16.27) are implicitly used in the signal processing literature by simple first and second-order expansions of the estimate ![]() w.r.t. the involved statistics without checking any necessary mathematical conditions concerning the remainder terms of the first and second-order expansions. In fact these conditions are very difficult to prove for the involved mappings

w.r.t. the involved statistics without checking any necessary mathematical conditions concerning the remainder terms of the first and second-order expansions. In fact these conditions are very difficult to prove for the involved mappings ![]() . For example, the following necessary conditions are given in [35, Theorem 4.2.2] for second-order algorithms: (i) the measurements

. For example, the following necessary conditions are given in [35, Theorem 4.2.2] for second-order algorithms: (i) the measurements ![]() are independent with finite eighth moments, (ii) the mapping

are independent with finite eighth moments, (ii) the mapping ![]() is four times

is four times ![]() -differentiable, (iii) the fourth derivative of this mapping and those of its square are bounded. These assumptions that do not depend on the distribution of the measurements are very strong, but fortunately (16.24), (16.25) and (16.27) continue to hold in many cases in which these assumptions are not satisfied, in particular for Gaussian distributed data (see, e.g., [35, Example 4.2.2]).

-differentiable, (iii) the fourth derivative of this mapping and those of its square are bounded. These assumptions that do not depend on the distribution of the measurements are very strong, but fortunately (16.24), (16.25) and (16.27) continue to hold in many cases in which these assumptions are not satisfied, in particular for Gaussian distributed data (see, e.g., [35, Example 4.2.2]).

In practice, (16.24), (16.25), and (16.27) show that the mean square error (MSE)

![]() (16.28)

(16.28)

is then also of order ![]() . Its main contribution comes from the variance term, since the square of the bias is of order

. Its main contribution comes from the variance term, since the square of the bias is of order ![]() . But as empirically observed, this bias contribution may be significant when SNR or

. But as empirically observed, this bias contribution may be significant when SNR or ![]() is not sufficiently large. However, there are very few contributions in the literature, that have derived closed-form bias expressions. Among them, Xu and Cave [36] has considered the bias of the MUSIC algorithm, whose derivation ought to be simplified by using the asymptotic distribution of the orthogonal projector

is not sufficiently large. However, there are very few contributions in the literature, that have derived closed-form bias expressions. Among them, Xu and Cave [36] has considered the bias of the MUSIC algorithm, whose derivation ought to be simplified by using the asymptotic distribution of the orthogonal projector ![]() , rather than those of the sample signal eigenvectors

, rather than those of the sample signal eigenvectors ![]() .

.

3.16.3.2 Cramer-Rao bounds (CRB)

The accuracy measures of performance in terms of covariance and bias of any algorithm, described in the previous section may be of limited interest, unless one has an idea of what the best possible performance is. An important measure of how well a particular DOA finding algorithm performs is the mean square error (MSE) matrix ![]() of the estimation error

of the estimation error ![]() . Among the lower bounds on this matrix, the celebrated Cramer-Rao bound (CRB) is by far the most commonly used. We note that this CRB is indeed deduced from the CRB on the complete unknown parameter

. Among the lower bounds on this matrix, the celebrated Cramer-Rao bound (CRB) is by far the most commonly used. We note that this CRB is indeed deduced from the CRB on the complete unknown parameter ![]() of the parametrized DOA model, for example, given by (16.4) for the circular Gaussian stochastic model. Furthermore, rigorously speaking, this CRB ought to be only used for unbiased estimators and under sufficiently regular distributions of the measurements. Fortunately, these technical conditions are satisfied in practice and due to the property that the bias contribution is often weak w.r.t. the variance term in the mean square error (16.28) for

of the parametrized DOA model, for example, given by (16.4) for the circular Gaussian stochastic model. Furthermore, rigorously speaking, this CRB ought to be only used for unbiased estimators and under sufficiently regular distributions of the measurements. Fortunately, these technical conditions are satisfied in practice and due to the property that the bias contribution is often weak w.r.t. the variance term in the mean square error (16.28) for ![]() , the CRB that lower bounds the covariance matrix of any unbiased estimators is used to lower bound the MSE matrix of any asymptotically unbiased estimator4

, the CRB that lower bounds the covariance matrix of any unbiased estimators is used to lower bound the MSE matrix of any asymptotically unbiased estimator4

![]() (16.29)

(16.29)

with ![]() is given under weak regularity conditions by:

is given under weak regularity conditions by:

![]() (16.30)

(16.30)

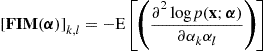

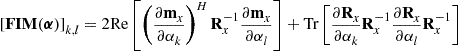

where ![]() is the Fisher information matrix (FIM) given elementwise by

is the Fisher information matrix (FIM) given elementwise by

(16.31)

(16.31)

associated with the probability density function ![]() of the measurements

of the measurements ![]() .

.

The main reason for the interest of this CRB is that it is often asymptotically (when the amount ![]() of data is large) tight, i.e., there exist algorithms, such that the stochastic maximum likelihood (ML) estimator (see 3.16.4.2), whose covariance matrices asymptotically achieve this bound. Such estimators are said to be asymptotically efficient. However, at low SNR and/or at low number

of data is large) tight, i.e., there exist algorithms, such that the stochastic maximum likelihood (ML) estimator (see 3.16.4.2), whose covariance matrices asymptotically achieve this bound. Such estimators are said to be asymptotically efficient. However, at low SNR and/or at low number ![]() of snapshots, the CRB is not achieved and is overly optimistic. This is due to the fact that estimators are generally biased in such non-asymptotic cases. For these reasons, other lower bounds are available in the literature, that are more relevant to lower bound the MSE matrices. But unfortunately, their closed-form expressions are much more complex to derive and are generally non interpretable (see, e.g., the Weiss-Weinstein bound in [38]).

of snapshots, the CRB is not achieved and is overly optimistic. This is due to the fact that estimators are generally biased in such non-asymptotic cases. For these reasons, other lower bounds are available in the literature, that are more relevant to lower bound the MSE matrices. But unfortunately, their closed-form expressions are much more complex to derive and are generally non interpretable (see, e.g., the Weiss-Weinstein bound in [38]).

In practice, closed-form expressions of the FIM (16.31) are difficult to obtain for arbitrary distributions of the sources and noise. In general, the involved integrations of (16.31) are solved numerically by replacing the expectations by arithmetical averages over a large number of computer generated measurements. But for Gaussian distributions, there are a plethora of closed-form expressions of ![]() in the literature. And the reason of the popularity of this CRB is the simplicity of the FIM for Gaussian distributions of

in the literature. And the reason of the popularity of this CRB is the simplicity of the FIM for Gaussian distributions of ![]() .

.

3.16.3.2.1 Gaussian stochastic case

On way to derive closed-form expressions of ![]() is to use the extended Slepian-Bangs [39,40] formula, where the FIM (16.31) is given elementwise by

is to use the extended Slepian-Bangs [39,40] formula, where the FIM (16.31) is given elementwise by

(16.32)

(16.32)

for a circular5 Gaussian ![]() distribution of

distribution of ![]() . But there are generally difficulties to derive compact matrix expressions of the CRB for DOA parameters alone given by

. But there are generally difficulties to derive compact matrix expressions of the CRB for DOA parameters alone given by

![]()

with ![]() where

where ![]() gathers all the nuisance parameters (in many applications, only the DOAs are of interest). Another way, based on the asymptotic efficiency of the ML estimator (under certain regularity conditions) has been used to indirectly derive the CRB on the DOA parameter alone (see 3.16.4.2).

gathers all the nuisance parameters (in many applications, only the DOAs are of interest). Another way, based on the asymptotic efficiency of the ML estimator (under certain regularity conditions) has been used to indirectly derive the CRB on the DOA parameter alone (see 3.16.4.2).

For the circular Gaussian stochastic model of the sources introduced in Section 3.16.2.2, compact matrix expressions of ![]() have been given in the literature, when no a priori information is available on the structure of the spatial covariance

have been given in the literature, when no a priori information is available on the structure of the spatial covariance ![]() of the sources. For example, Stoica et al. [41] have derived the following expression for one parameter per source and uniform white noise (i.e.,

of the sources. For example, Stoica et al. [41] have derived the following expression for one parameter per source and uniform white noise (i.e., ![]() )

)

![]() (16.33)

(16.33)

where ![]() denotes the Hadamard product (i.e., element-wise multiplication),

denotes the Hadamard product (i.e., element-wise multiplication), ![]() is the orthogonal projector on the noise subspace, i.e.,

is the orthogonal projector on the noise subspace, i.e., ![]() and

and ![]() . We note the surprising fact that when the sources are known to be coherent (i.e.,

. We note the surprising fact that when the sources are known to be coherent (i.e., ![]() singular), the associated Gaussian CRB

singular), the associated Gaussian CRB ![]() that includes this prior, keeps the same expression (16.33)[43].

that includes this prior, keeps the same expression (16.33)[43].

As is well known, the importance of this Gaussian CRB formula lies in the fact that circular Gaussian data are rather frequently encountered in applications. Another important point is that under rather general conditions that will be specified in Section 3.16.4.2, the circular complex Gaussian CRB matrix (16.33) is the largest of all CRB matrices among the class of arbitrary complex distributions of the sources with given covariance matrix ![]() (see, e.g., [21, p. 293]). Note that many extensions of (16.33) have been given. For example this formula has been extended to several parameters per source (see, e.g., [44, Appendix D]), to nonuniform white noise (i.e.,

(see, e.g., [21, p. 293]). Note that many extensions of (16.33) have been given. For example this formula has been extended to several parameters per source (see, e.g., [44, Appendix D]), to nonuniform white noise (i.e., ![]() ) and unknown parameterized noise field (i.e.,

) and unknown parameterized noise field (i.e., ![]() ) in [45–47], respectively. Due to the domination of the Gaussian distribution, these bounds have often been denoted in the literature as stochastic CRB (e.g., in [10]) or unconditional CRB (e.g., in [11]), without specifying the involved distribution.

) in [45–47], respectively. Due to the domination of the Gaussian distribution, these bounds have often been denoted in the literature as stochastic CRB (e.g., in [10]) or unconditional CRB (e.g., in [11]), without specifying the involved distribution.

Furthermore, all these closed-form expressions of the CRB have been extended to the noncircular Gaussian stochastic model of the sources in [42,44,48, Appendix D], given associated ![]() expressions satisfying

expressions satisfying

![]()

corresponding to the same covariance matrix ![]() . For example, for a single source, with one parameter

. For example, for a single source, with one parameter ![]() ,

, ![]() decreases monotonically as the second-order noncircularity rate

decreases monotonically as the second-order noncircularity rate ![]() (defined by

(defined by ![]() and satisfying

and satisfying ![]() ) increases from 0 to 1, for which we have, respectively,

) increases from 0 to 1, for which we have, respectively,

(16.34)

(16.34)

where ![]() is the purely geometrical factor

is the purely geometrical factor ![]() with

with ![]() .

.

If the source covariance ![]() is constrained to have a specific structure, (i.e., if a prior on

is constrained to have a specific structure, (i.e., if a prior on ![]() is taken into account), a specific expression of

is taken into account), a specific expression of ![]() , which integrates this prior ought to be derived, to assess the performance of an algorithm that uses this prior. But unfortunately, the derivation of

, which integrates this prior ought to be derived, to assess the performance of an algorithm that uses this prior. But unfortunately, the derivation of ![]() is very involved and lacks any engineering insight. For example, when it is known that the sources are uncorrelated, the expression given in [49, Theorem 1] of

is very involved and lacks any engineering insight. For example, when it is known that the sources are uncorrelated, the expression given in [49, Theorem 1] of ![]() includes a matrix

includes a matrix ![]() , defined as any matrix, whose columns span the null space of

, defined as any matrix, whose columns span the null space of ![]() . And to the best of our knowledge no closed-form expression of

. And to the best of our knowledge no closed-form expression of ![]() has been published in the important case of coherent sources, when the rank of

has been published in the important case of coherent sources, when the rank of ![]() is fixed strictly smaller than

is fixed strictly smaller than ![]() .

.

Finally, note that the scalar field modeling one component of electromagnetic field or acoustic pressure (16.1) has been extended to vector fields with vector sensors, where associated stochastic CRBs for the DOA (azimuth and elevation) alone have been derived and analyzed for a single source. In particular, the electromagnetic (six electric and magnetic field components) and acoustic (three velocity components and pressure) fields have been considered in [50,51], respectively.

3.16.3.2.2 Gaussian deterministic case

For the deterministic model of the sources introduced in Section 3.16.2.2, the unknown parameter ![]() of

of ![]() is now

is now

![]() (16.35)

(16.35)

Applying the extended Slepian-Bangs formula (16.32) to the circular Gaussian  distribution of

distribution of ![]() , Stoica and Nehorai [11] have obtained the following CRB for the DOA alone:

, Stoica and Nehorai [11] have obtained the following CRB for the DOA alone: ![]() , where

, where ![]() . Furthermore, it was proved in [3] that

. Furthermore, it was proved in [3] that ![]() decreases monotonically with increasing

decreases monotonically with increasing ![]() (and

(and ![]() ). This implies, that if the sources

). This implies, that if the sources ![]() are second-order ergodic sequences,

are second-order ergodic sequences, ![]() has a limit

has a limit ![]() when

when ![]() tends to infinity, and we obtain for large

tends to infinity, and we obtain for large ![]() , the following expression denoted in the literature as deterministic CRB or conditional CRB (e.g., in [11])

, the following expression denoted in the literature as deterministic CRB or conditional CRB (e.g., in [11])

![]() (16.36)

(16.36)

Finally, we remark that the CRB for near-field DOA localization has been much less studied than the far-field one. To the best of our knowledge, only papers [52–54] have given and analyzed closed-form expressions of the stochastic and deterministic CRB, and furthermore in the particular case of a single source for specific arrays. For a ULA where the DOA parameters are the azimuth ![]() and the range

and the range ![]() , based on the DOA algorithms, the steering vector (16.2) has been approximated in [53] by

, based on the DOA algorithms, the steering vector (16.2) has been approximated in [53] by

![]()

where ![]() and

and ![]() are the so-called electric angles connected to the physical parameters

are the so-called electric angles connected to the physical parameters ![]() and

and ![]() by

by ![]() and

and ![]() . Then in [52], the exact propagation model

. Then in [52], the exact propagation model

![]()

has been used, that has revealed interesting features and interpretations not shown in [53]. Very recently, the uniform circular array (UCA) has been investigated in [54] in which the exact propagation model is now:

where ![]() and

and ![]() denote the radius of the UCA, the azimuth and the elevation of the source. Note that in contrast to the closed-form expressions given in [53] and [52], the ones given in [54] relate the near and far-field CRB on the azimuth and elevation by very simple expressions.

denote the radius of the UCA, the azimuth and the elevation of the source. Note that in contrast to the closed-form expressions given in [53] and [52], the ones given in [54] relate the near and far-field CRB on the azimuth and elevation by very simple expressions.

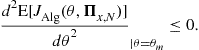

3.16.3.2.3 Non Gaussian case

The stochastic CRB for the DOA appears to be prohibitive to compute for non-Gaussian sources. To cope with this difficulty, the deterministic model for the sources has been proposed for its simplicity. But in contrast to the stochastic ML estimator, the corresponding deterministic (or conditional) ML method does not asymptotically achieve this deterministic CRB, because the deterministic likelihood function does not meet the required regularity conditions (see Section 3.16.4.2). Consequently, this deterministic CRB is only a nonattainable lower bound on the covariance of any unbiased DOA estimator for arbitrary non-Gaussian distributions of the sources. So, it is useful to have explicit expressions of the stochastic CRB under non-Gaussian distributions.

To the best of our knowledge, such stochastic CRBs have only been given in the case of binary phase-shift keying (BPSK), quaternary phase-shift keying (QPSK) signal waveforms [55] and then, to arbitrary ![]() -ary square QAM constellation [56], and for a single source only. In these works, it is assumed Nyquist shaping and ideal sample timing apply so that the intersymbol interference at each symbol spaced sampling instance can be ignored. In the absence of frequency offset but with possible phase offset, the signals at the output of the matched filter can be represented as

-ary square QAM constellation [56], and for a single source only. In these works, it is assumed Nyquist shaping and ideal sample timing apply so that the intersymbol interference at each symbol spaced sampling instance can be ignored. In the absence of frequency offset but with possible phase offset, the signals at the output of the matched filter can be represented as ![]() , where

, where ![]() are independent identically distributed random symbols taking values

are independent identically distributed random symbols taking values ![]() for BPSK symbols and

for BPSK symbols and ![]() with

with ![]() for

for ![]() -ary square QAM symbols, where

-ary square QAM symbols, where ![]() is the intersymbol distance in the I/Q plane, which is adjusted such that

is the intersymbol distance in the I/Q plane, which is adjusted such that ![]() . For these discrete sources, the unknown parameter of this stochastic model is

. For these discrete sources, the unknown parameter of this stochastic model is

![]()

and it has been proved in [55,56] that the parameters ![]() and

and ![]() are decoupled in the associated FIM. This allows one to derive closed-form expressions of the so called non-data-aided (NDA) CRBs on the parameter

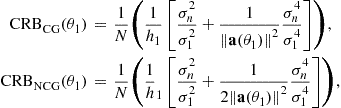

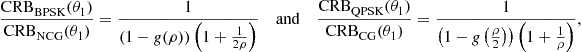

are decoupled in the associated FIM. This allows one to derive closed-form expressions of the so called non-data-aided (NDA) CRBs on the parameter ![]() alone. In particular, it has been proved [55] that for a BPSK and QPSK source, that is respectively rectilinear and second-order circular, we have

alone. In particular, it has been proved [55] that for a BPSK and QPSK source, that is respectively rectilinear and second-order circular, we have

(16.37)

(16.37)

where ![]() and

and ![]() are given by (16.34) and with

are given by (16.34) and with ![]() and

and ![]() is the following decreasing function of

is the following decreasing function of ![]() :

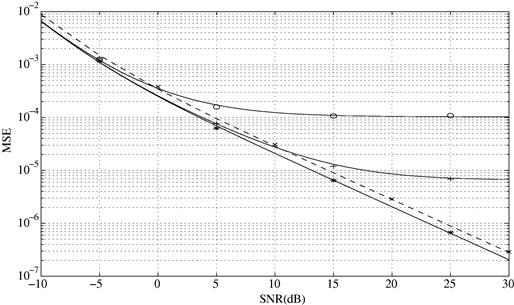

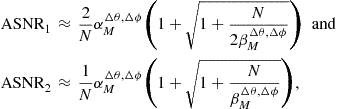

: ![]() . Equation (16.37) is illustrated in Figure 16.1 for a ULA of

. Equation (16.37) is illustrated in Figure 16.1 for a ULA of ![]() sensors spaced a half-wavelength apart. We see from this figure that the CRBs under the non-circular [resp. circular] complex Gaussian distribution are tight upper bounds on the CRBs under the BPSK [resp. QPSK] distribution at very low and very high SNRs only. Finally, note that among the numerous results of [55,56], these stochastic NDA CRBs have been compared with those obtained with different a priori knowledge. In particular, it has been proved that in the presence of any unknown phase offset (i.e., non-coherent estimation), the ultimate achievable performance on the NDA DOA estimates holds almost the same irrespectively of the modulation order

sensors spaced a half-wavelength apart. We see from this figure that the CRBs under the non-circular [resp. circular] complex Gaussian distribution are tight upper bounds on the CRBs under the BPSK [resp. QPSK] distribution at very low and very high SNRs only. Finally, note that among the numerous results of [55,56], these stochastic NDA CRBs have been compared with those obtained with different a priori knowledge. In particular, it has been proved that in the presence of any unknown phase offset (i.e., non-coherent estimation), the ultimate achievable performance on the NDA DOA estimates holds almost the same irrespectively of the modulation order ![]() . However, the NDA CRBs obtained in the absence of phase offset (i.e., coherent estimation) vary, in the high SNR region, from one modulation order to another.

. However, the NDA CRBs obtained in the absence of phase offset (i.e., coherent estimation) vary, in the high SNR region, from one modulation order to another.

Finally note that the ML estimation of the DOAs of these discrete sources has been proposed [57], where the maximization of the ML criterion (which is rather involved) is iteratively carried out by the expectation maximization (EM) algorithm. Adapted to the distribution of these sources, this approach allows one to account for any arbitrary noise covariance ![]() as soon as

as soon as ![]() is Gaussian distributed.

is Gaussian distributed.

3.16.3.3 Asymptotically minimum variance bounds (AMVB)

To assess the performance of an algorithm based on a specific statistic ![]() built on

built on ![]() , it is interesting to compare the asymptotic covariance

, it is interesting to compare the asymptotic covariance ![]() (16.21) or (16.23) to an attainable lower bound that depends on the statistic

(16.21) or (16.23) to an attainable lower bound that depends on the statistic ![]() only. The asymptotically minimum variance bound (AMVB) is such a bound. Furthermore, we note that the CRB appears to be prohibitive to compute for non-Gaussian sources and noise, except in simple cases and consequently this AMVB can be used as an useful benchmark against which potential estimates

only. The asymptotically minimum variance bound (AMVB) is such a bound. Furthermore, we note that the CRB appears to be prohibitive to compute for non-Gaussian sources and noise, except in simple cases and consequently this AMVB can be used as an useful benchmark against which potential estimates ![]() are tested. To extend the derivations of Porat and Friedlander [58] concerning this AMVB to complex-valued measurements, two additional conditions to those introduced in Section 3.16.3.1.1 must be satisfied:

are tested. To extend the derivations of Porat and Friedlander [58] concerning this AMVB to complex-valued measurements, two additional conditions to those introduced in Section 3.16.3.1.1 must be satisfied:

iii. The involved function ![]() that defines the considered algorithm must be

that defines the considered algorithm must be ![]() -differentiable, i.e., must satisfy (16.22). In practice, it is sufficient to add conjugate components to all complex-valued components of

-differentiable, i.e., must satisfy (16.22). In practice, it is sufficient to add conjugate components to all complex-valued components of ![]() , as in example (16.41);

, as in example (16.41);

iv. The covariance ![]() of the asymptotic distribution of

of the asymptotic distribution of ![]() must be nonsingular. To satisfy this latter condition, the components of

must be nonsingular. To satisfy this latter condition, the components of ![]() that are random variables, must be asymptotically linearly independent. Consequently the redundancies in

that are random variables, must be asymptotically linearly independent. Consequently the redundancies in ![]() must be withdrawn.

must be withdrawn.

Under these four conditions, the covariance matrix ![]() of the asymptotic distribution of any estimator

of the asymptotic distribution of any estimator ![]() built on the statistics

built on the statistics ![]() is bounded below by

is bounded below by ![]() :

:

![]() (16.38)

(16.38)

where ![]() is the

is the ![]() matrix

matrix ![]() .

.

Furthermore, this lowest bound ![]() is asymptotically tight, i.e., there exists an algorithm

is asymptotically tight, i.e., there exists an algorithm ![]() whose covariance of its asymptotic distribution satisfies (16.38) with equality. The following nonlinear least square algorithm is an AMV second-order algorithm:

whose covariance of its asymptotic distribution satisfies (16.38) with equality. The following nonlinear least square algorithm is an AMV second-order algorithm:

![]() (16.39)

(16.39)

where we have emphasized here the dependence of ![]() on the unknown DOA

on the unknown DOA ![]() . In practice, it is difficult to optimize the nonlinear function (16.39), where it involves the computation of

. In practice, it is difficult to optimize the nonlinear function (16.39), where it involves the computation of ![]() . Porat and Friedlander proved for the real case in [59] that the lowest bound (16.38) is also obtained if an arbitrary weakly consistent estimate

. Porat and Friedlander proved for the real case in [59] that the lowest bound (16.38) is also obtained if an arbitrary weakly consistent estimate ![]() of

of ![]() is used in (16.39), giving the simplest algorithm:

is used in (16.39), giving the simplest algorithm:

![]() (16.40)

(16.40)

This property has been extended to the complex case in [60].

This AMVB and AMV algorithm have been applied to second-order algorithms that exploit both ![]() and

and ![]() in [24]. In this case, to fulfill the previously mentioned conditions (i)–(iv), the second-order statistics

in [24]. In this case, to fulfill the previously mentioned conditions (i)–(iv), the second-order statistics ![]() are given by

are given by

(16.41)

(16.41)

where ![]() denotes the operator obtained from

denotes the operator obtained from ![]() by eliminating all supradiagonal elements of a matrix. Finally, note that these AMVB and AMV DOA finding algorithm have been also derived for fourth-order statistics by splitting the measurements and statistics

by eliminating all supradiagonal elements of a matrix. Finally, note that these AMVB and AMV DOA finding algorithm have been also derived for fourth-order statistics by splitting the measurements and statistics ![]() into its real and imaginary parts in [60].

into its real and imaginary parts in [60].

3.16.3.4 Relations between AMVB and CRB: projector statistics

The AMVB based on any statistics is generally lower bounded by the CRB because this later bound concerns arbitrary functions of the measurements ![]() . But it has been proved in [44], that the AMVB associated with the different estimated projectors

. But it has been proved in [44], that the AMVB associated with the different estimated projectors ![]() ,

, ![]() and

and ![]() introduced in Section 3.16.3.1.2, which are functions of the second-order statistics of the measurements, attains the stochastic CRB in the case of circular or noncircular Gaussian signals. Consequently, there always exist asymptotically efficient subspace-based DOA algorithms in the Gaussian context.

introduced in Section 3.16.3.1.2, which are functions of the second-order statistics of the measurements, attains the stochastic CRB in the case of circular or noncircular Gaussian signals. Consequently, there always exist asymptotically efficient subspace-based DOA algorithms in the Gaussian context.

To prove this asymptotic efficiency, i.e.,

![]() (16.42)

(16.42)

and

![]() (16.43)

(16.43)

the condition (iv) of Section 3.16.3.3 that is not satisfied [61] for these statistics ought to be extended and consequently the results (16.38) and (16.39) must be modified as well, because here ![]() is singular.

is singular.

In this singular case, it has been proved [61] that if the condition (iv) in the necessary conditions (i)–(iv) is replaced by the new condition ![]() , (16.38) and (16.39) becomes respectively

, (16.38) and (16.39) becomes respectively

![]() (16.44)

(16.44)

and

![]() (16.45)

(16.45)

And it is proved that the three statistics ![]() ,

, ![]() , and

, and ![]() satisfy the conditions (i)–(iii) and (v) and thus satisfy results (16.44) and (16.45).

satisfy the conditions (i)–(iii) and (v) and thus satisfy results (16.44) and (16.45).

Finally, note that this efficiency property of the orthogonal projectors extends to the model of spatially correlated noise, for which ![]() where

where ![]() is a known positive definite matrix. In this case, for example, the orthogonal projector

is a known positive definite matrix. In this case, for example, the orthogonal projector ![]() defined after whitening

defined after whitening

satisfies

![]()

where ![]() is insensitive to the choice of the square root

is insensitive to the choice of the square root ![]() of

of ![]() , and is no longer a projection matrix.

, and is no longer a projection matrix.

3.16.4 Asymptotic distribution of estimated DOA

We are now specifying in this section the asymptotic statistical performances of the main DOA algorithms that may be classified into three main categories, namely beamforming-based, maximum like-lihood and moments-based algorithms.

3.16.4.1 Beamforming-based algorithms

Among the so-called beamforming-based algorithms, also referred to as low-resolution, compared to the parametric algorithms, the conventional (Bartlett) beamforming and Capon beamforming are the most referenced representatives of this family. These algorithms do not make any assumption on the covariance structure of the data, but the functional form of the steering vector ![]() is assumed perfectly known. These estimators

is assumed perfectly known. These estimators ![]() are given by the

are given by the ![]() highest (supposed isolated) maximizer and minimizer in

highest (supposed isolated) maximizer and minimizer in ![]() of the respective following criteria:

of the respective following criteria:

![]() (16.46)

(16.46)

where ![]() is the unbiased sample estimate

is the unbiased sample estimate ![]() and

and ![]() is either the biased estimate

is either the biased estimate ![]() or the unbiased estimate

or the unbiased estimate ![]() (that both give the same estimate

(that both give the same estimate ![]() ). Note that these algorithms extend to

). Note that these algorithms extend to ![]() parameters per source, where

parameters per source, where ![]() is replaced by

is replaced by ![]() in (16.46).

in (16.46).

For arbitrary noise field (i.e., arbitrary noise covariance ![]() ) and/or an arbitrary number

) and/or an arbitrary number ![]() of sources, the estimate

of sources, the estimate ![]() given by these two algorithms are non-consistent, i.e.,

given by these two algorithms are non-consistent, i.e.,

![]()

and asymptotically biased. The asymptotic bias ![]() can be straightforwardly derived by a second-order expansion of the criterion

can be straightforwardly derived by a second-order expansion of the criterion ![]() around each true values

around each true values ![]() (with

(with ![]() [resp.,

[resp., ![]() ] for the conventional [resp. Capon] algorithm), but noting that

] for the conventional [resp. Capon] algorithm), but noting that ![]() is a maximizer or minimizer

is a maximizer or minimizer ![]() of

of ![]() or

or ![]() , respectively. The following value is obtained [62]

, respectively. The following value is obtained [62]

(16.47)

(16.47)

with ![]() and

and ![]() .

.

Following the methodology of Section 3.16.3.1.2, the additional bias for finite value of ![]() , that is of order

, that is of order ![]() can be derived, which gives

can be derived, which gives

![]()

see, e.g., the involved expression of ![]() for the Capon algorithm [62, rel. (35)].

for the Capon algorithm [62, rel. (35)].

In the same way, the covariance ![]() which is of order

which is of order ![]() can be derived. It is obtained with

can be derived. It is obtained with ![]()

![]()

see, e.g., the involved expression [50, rel. (24)] of ![]() associated with a source for several parameters per source. The relative values of the asymptotic bias, additional bias and standard deviation depend on the SNR,

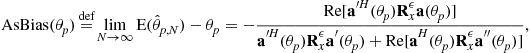

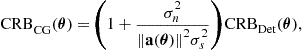

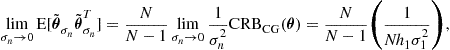

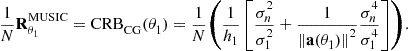

associated with a source for several parameters per source. The relative values of the asymptotic bias, additional bias and standard deviation depend on the SNR, ![]() and