Chapter 18. Advanced ADO.NET Techniques

IN THIS CHAPTER

- Working with the New Improved

DataTable - Accessing Data Asynchronously

- Updating Data in Batches

- Using the new

System.TransactionsNamespace

Undoubtedly, ADO.NET has made life a lot easier for developers. It provided a unified means for communicating with relational data sources, whether the source was SQL Server, Oracle, Access, or even XML. In this chapter, you will see some extremely powerful techniques that you can use in your ADO.NET 2.0 programming. You will see some of the improvements made to the DataTable class, as well as how to access data asynchronously, update data in batches, and even how to use the new unified transaction model provided by the System.Transactions namespace.

Working with the New Improved DataTable

The DataSet has always been at the core of previous versions of ADO.NET, providing an in-memory representation of relational data including keys, constraints, data relations, and even limited querying capability. The problem many developers had with the DataSet is that quite often they only needed to work with a single table at a time. In these situations, the DataSet provided too much functionality. The problem with this was that quite often, developers couldn’t get around the fact that they needed to use the DataSet; the DataTable class didn’t work very well outside the context of a DataSet.

The new version of ADO.NET takes this into consideration by promoting the DataTable to a first-class citizen. The DataTable can read and write its own data to files without having to reside within a DataSet. In addition, you can create a DataReader on top of the data contained within a single table.

Loading and Saving DataTables Using XML

With ADO.NET 2.0, you can now read and write the contents of a DataTable using XML. In previous versions of ADO.NET, you had to place a table in a DataSet and then call the ReadXml() and WriteXml() methods on the DataSet to get XML persistence.

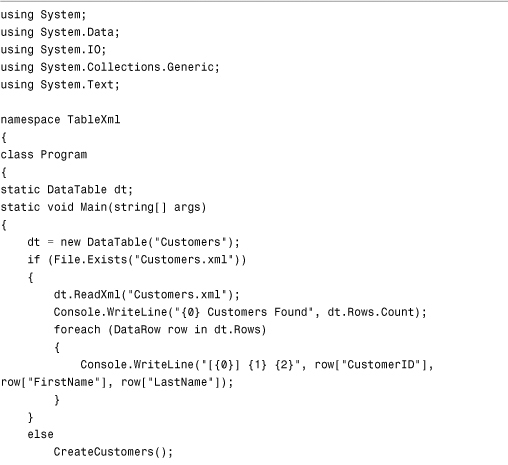

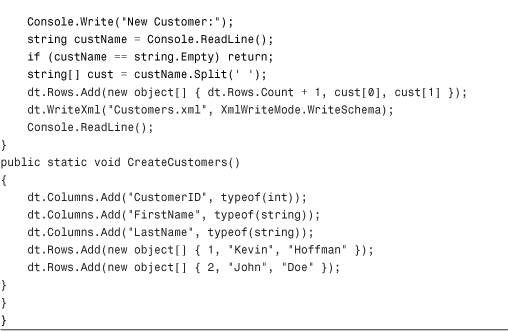

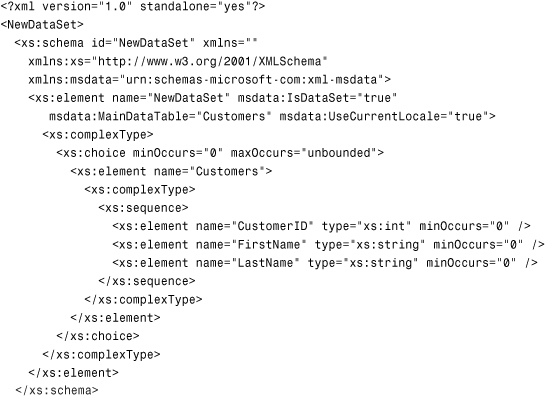

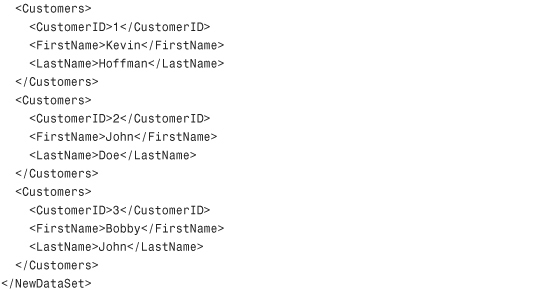

Now the DataTable class has its own ReadXml() and WriteXml() methods. One thing you might have to watch out for is that although the current documentation on MSDN indicates that schema inference is possible on a DataTable from XML data, attempting to infer schema at runtime from XML throws an exception indicating that it is not supported on the DataTable class. To get around this, the sample shown in Listing 18.1 uses the XmlWriteMode.WriteSchema option to include the schema in the top of the document.

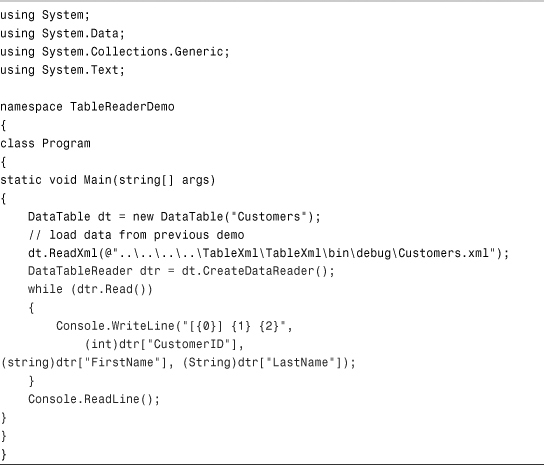

Listing 18.1 Reading and Writing XML DataTable Contents

The preceding code looks for the Customers.xml file. If it finds the file, it loads the contents into a DataTable; otherwise, it creates a new file with a couple of sample rows. Then the code prompts the user for a new customer, adds that customer as a row, and saves the data back out to the Customers.xml file with the schema information included. If you have any experience using the WriteXml() and ReadXml() methods on the DataSet class, the preceding code should seem pretty straightforward. It produces XML in the following format:

Using the New DataTableReader Class

The new DataTableReader class works just like any of the other DataReader classes that are available in ADO.NET. Like a SqlDataReader, the DataTableReader exposes fast, forward-only, read-only access to the underlying data. Whereas the SqlDataReader exposes data from a SQL Server database, the DataTableReader simply exposes data from an underlying DataTable.

The code in Listing 18.2 is a quick illustration of how to use a DataTableReader. Fortunately, if you know how to use any of the data readers provided by ADO.NET, you will be familiar with how to use the DataTableReader class.

Listing 18.2 Using the DataTableReader

Accessing Data Asynchronously

One of the biggest problems with ADO.NET in previous versions is that no matter how responsive your application was, everything still had to wait for a command to execute. In other words, all command executions were done synchronously, whether the command took .2 or 200 seconds to execute.

Most developers got around this by wrapping the command execution in a method that was spawned in a background thread to allow the execution to take place while the rest of the application remained responsive.

With ADO.NET 2.0, that workaround is no longer necessary. You can use the standard Begin/End asynchronous method pattern that is prevalent throughout the .NET Framework. The SqlCommand class now has a corresponding Begin/End pair for some of its execute methods: ExecuteNonQuery(), ExecuteReader(), and ExecuteXmlReader().

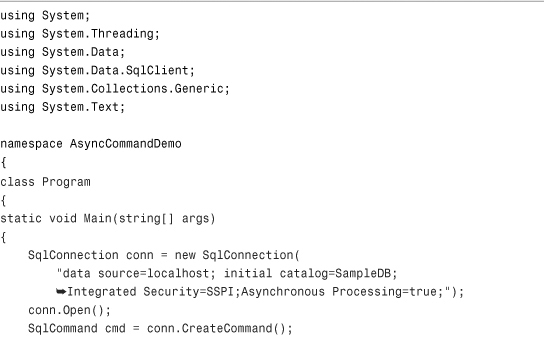

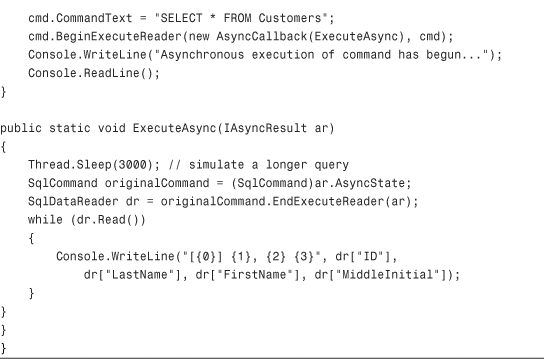

Using these new methods, you can initiate an asynchronous command and then continue responding to events from the rest of the application. When the results are available, they will be returned to your application using one of the appropriate End methods, as shown in Listing 18.3.

Listing 18.3 Asynchronous Command Execution

Note that you have to include the Asynchronous Processing=true option in the connection string in order to enable asynchronous command execution in SQL Server. Also keep in mind that asynchronous processing is not part of the DbCommand abstract base class; it is part of the SqlCommand class only.

Updating Data in Batches

In previous versions of ADO.NET, batch updates using DataSets weren’t very efficient. The sequence of events using previous versions was as follows:

- Retrieve data from the database into a

DataSetusing the adapter’sFillmethod. - Data in existing rows is modified, new rows are added, and yet other rows might be deleted.

- The adapter’s

Update()method is called. The adapter then runs through the entire list of rows in the given table(s). For each row that has been inserted, deleted, or updated since the last time theDataSet’sAcceptChanges()method was called (or since creation), a single SQL statement is executed to perform the change on the server.

The problem with this scenario is in the case where there are large numbers of changed records in a DataSet. When this happens, the process of creating a new SQL statement, executing it, and then moving to the next updated record in the list adds unnecessary overhead to the update operation.

With the new ADO.NET 2.0 data adapters, you can specify the size of the command batch. If you specify a size of 0, the new data adapter will use the maximum batch size available. If you specify a value greater than 1, the data adapter will perform its update in batches containing the number of statements you indicated.

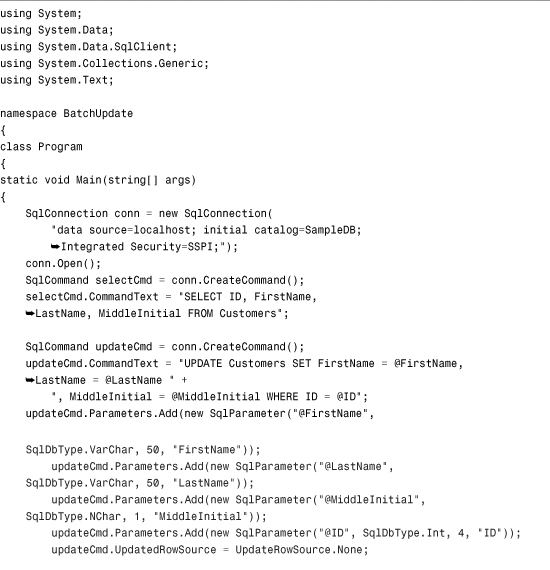

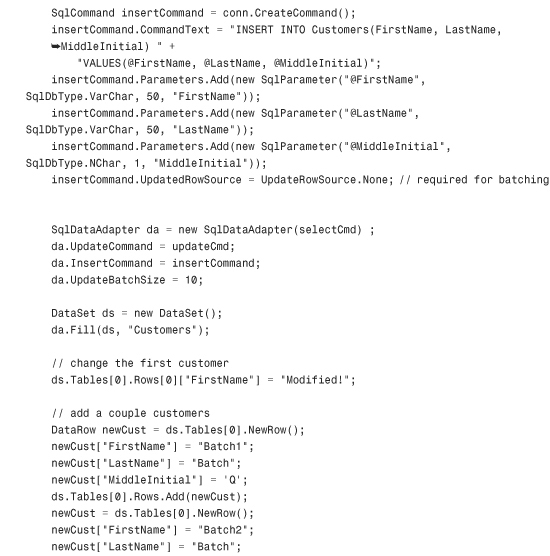

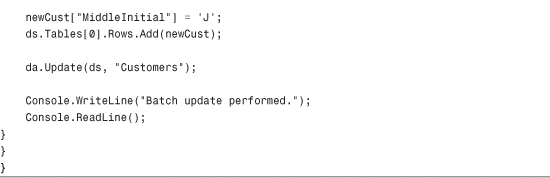

The only tricky thing to remember about batch updating is that you need to specify UpdateRowSource.None for the UpdatedRowSource property on all batch-involved commands, as shown in Listing 18.4.

Listing 18.4 Performing Batch Updates using a SqlDataAdapter

The preceding code modifies an existing row and then creates two additional rows. In previous versions of ADO.NET, this would generate three separate command executions, including the latency involved with starting and finishing each command. In ADO.NET 2.0, the preceding code will only issue one command to SQL Server. The command issued to SQL Server will contain one UPDATE statement and two INSERT statements. If you want to see how this works, you can easily watch how the statements are sent to SQL Server using SQL administration tools.

Using the New System.Transactions Namespace

The new System.Transactions namespace provides a single, unified means by which your code can take advantage of transactional resources, such as SQL Server databases and Microsoft Message Queues (MSMQ). In addition to providing a new way of consuming transactions as a client, the System.Transactions namespace also allows you to create your own resource manager, allowing other developers and code to take advantage of your proprietary resources in a transactional manner using the same client code that they would use for SQL, DTC, ADO.NET, or MSMQ transactions.

The new transaction manager automatically takes care of transaction escalation. Before System.Transactions, developers had to decide at design time if they needed their transactions to be local or distributed. The new transaction manager can automatically escalate local transactions to distributed transactions based on the resources being consumed and the resource managers involved in the transaction. What this means is that without having to change your code, your application can use low-cost local transactions during one execution, and then autoescalate to using distributed transactions the next time it is run, based on which resources the end user is accessing. The benefits of having a single set of transactional code to write that can be applied to any supported resource manager are too numerous to count.

The new transaction system provides two ways of making use of transactions as a consumer: explicitly using derivates of the Transaction class, and implicitly using transaction scopes.

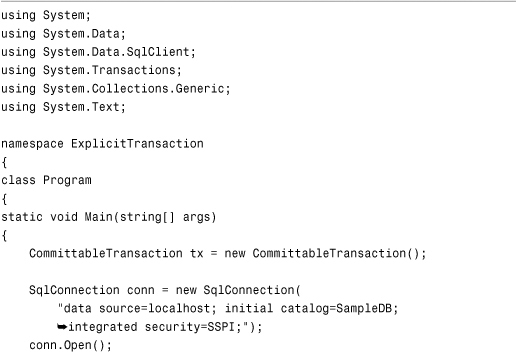

Using Explicit Transactions

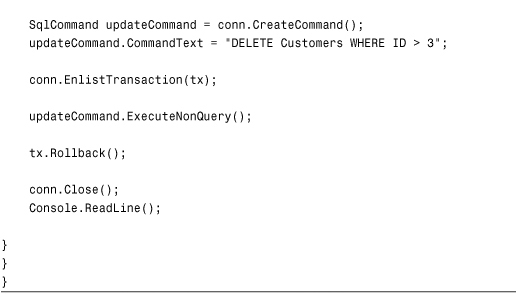

When you explicitly create a transaction, you need to manually enlist resource managers in that transaction. In the example shown in Listing 18.5, the resource manager being enlisted is a SqlConnection instance. The CommittableTransaction hosts the Commit() and Rollback() methods. This makes it so that whether you’re working with MSMQ, DTC, SQL, or any other resource manager, your transactional code can remain identical.

Listing 18.5 Using Explicit Transactions

The preceding sample is reusing the SampleDB database used in many chapters throughout this book. It’s a simple database with a Customers table that contains a few columns and an ID column. When you run the preceding code, no changes to the table should be visible because the transaction was explicitly rolled back. If you change the Rollback() in the preceding code to Commit(), the transaction will complete and the changes made within the context of the transaction will be saved to the database, effectively deleting all customers with an ID greater than 3. Also note that you need to add a reference to the System.Transactions.dll assembly for the code in Listing 18.5 to work properly.

Using Implicit Transactions

Implicit transactions are much easier to manage by their very nature. As such, Microsoft actually recommends that you use implicit transactions for all of your transactional code unless you really need the explicit transactions shown in the preceding example. The main reason for this is that when using implicit transactions, transactions from multiple resource managers can coexist and operate with each other according to some predefined rules. For example, if you use implicit transactions, your code can simply automatically enlist in a parent transaction if one exists, or your code can create a new transaction if necessary. Even more useful is that if your code invokes other code that is also using implicit transactions, your implicit transaction may not commit until all nested transactions have voted to commit as well. This type of transaction nesting and the notion of submitting a vote on whether the transaction commits should be very familiar to anyone who has worked with COM+ before. In fact, System.Transactions transactions can actually interoperate with COM+ transactions, as you’ll see in Chapter 40, “Using Enterprise Services.”

Implicit transactions are accomplished through the use of the TransactionScope class, which has a usage very similar to the using keyword. Code that resides within a transaction scope is inherently transactional, and access to any supported resource managers (such as SQL 2005) from within a transaction scope will use transactions implicitly. If your code doesn’t get to the line where the Complete() method is called on a TransactionScope, any transactions created within the scope will be rolled back, and any parent or ambient transactions utilized through nesting will receive a vote to roll back.

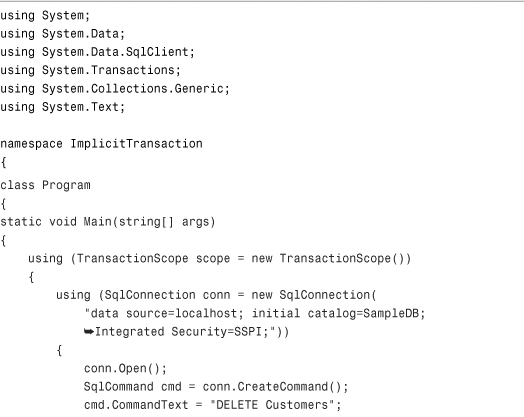

The code in Listing 18.6 illustrates the simple use of implicit transactions using transaction scopes.

Listing 18.6 Using Implicit Transactions

The preceding code tricks the compiler into allowing the programmer to create a divide-by-zero situation. Division by zero is never good and is guaranteed to throw an exception. As mentioned earlier, if your code can’t execute the scope.Complete() line, the transaction won’t be committed. If the scope created the transaction, it will be rolled back immediately. If the scope is part of a parent scope, it will vote to roll back the parent scope by virtue of its inability to complete. If you remove the four lines of code that create the divide-by-zero exception from Listing 18.6, the transaction will commit and the Customers table will be emptied.

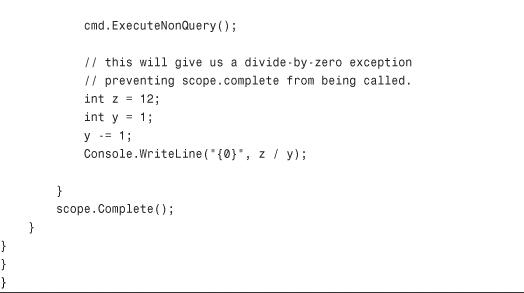

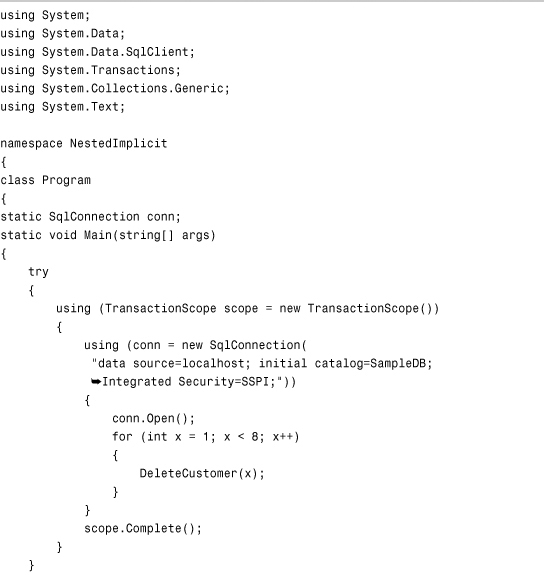

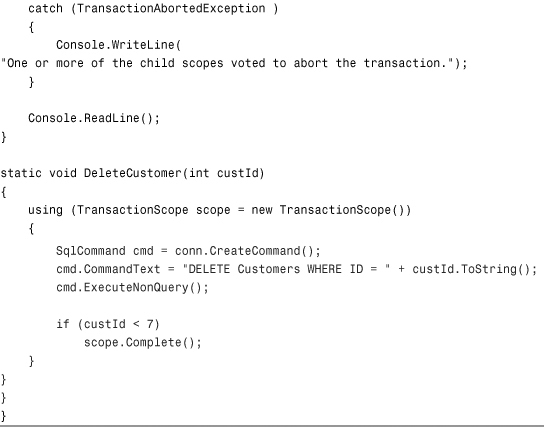

Take a look at the code in Listing 18.7. It shows how you can nest TransactionScope instances and have the Complete() method affect the parent scope. The main thing to remember is this: Every time a scope finishes without the Complete() method being called, a TransactionAbortedException is thrown.

Listing 18.7 Using Nested Transaction Scopes

The preceding code creates a parent scope. Inside the scope, a loop is iterated seven times; each iteration attempts to delete a customer. The DeleteCustomer() method will properly delete all customers, except those with an ID of seven or greater. This simulates a potential problem with the database, showing that six out of seven loop iterations called the Complete() method on their respective transaction scopes. The seventh iteration fails to call the Complete() method, and this is the iteration that causes the TransactionAbortedException to be thrown. The presence of this exception causes the parent transaction scope block to stop—its Complete() method is never called.

Summary

This chapter has shown you some of the more powerful things that you can accomplish with ADO.NET and with the System.Transactions namespace. The chapter started out by showing you some of the powerful new features that have been added to the ADO.NET DataTable class, such as the ability to read and write XML and to create a DataTableReader. Next, you saw how to update data in batches and how to perform asynchronous data updates using the appropriate Begin/End methods on the SqlCommand class. Finally, the chapter concluded with a discussion of how to use the System.Transactions namespace to create transactional code that will work with any supported resource manager, such as SQL 2005, MSMQ, DTC, and so on. Having read and understood this chapter, you should feel pretty good about your ADO.NET skills and your ability to access and manipulate data using C# 2.0.