BDD doesn't require that we use any particular tool. Instead, it is focused on the approach to testing. That is why it's possible to use Python doctests to write BDD test scenarios. Doctests aren't restricted to the module's code. In this recipe, we will explore creating independent text files and running them through Python's doctest library.

If this is doctest, why wasn't it included in the previous chapter's recipes? Because writing up a set of tests in a separate test document fits more naturally into the philosophy of BDD than testable docstrings, which are available for introspection when working with a library.

For this recipe, we will be using the shopping cart application shown at the beginning of this chapter.
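The cart code itself is not reproduced in this recipe. For readers who want to run the scenarios, the following is a minimal stand-in, inferred only from the calls the scenarios make (`add`, `item`, `price`, `total`). It is an assumption, not the chapter's actual implementation: the tuple storage, the 1-based positions, and the reading of `sales_tax` as a percentage are all guesses that happen to be consistent with the expected outputs.

```python
class ShoppingCart(object):
    """Hypothetical stand-in for the chapter's cart module (an assumption)."""

    def __init__(self):
        self.items = []  # list of (description, price) pairs

    def add(self, item, price):
        self.items.append((item, price))
        return self  # returning the cart is why the scenario matches the repr

    def item(self, index):
        return self.items[index - 1][0]  # positions are assumed to be 1-based

    def price(self, index):
        return self.items[index - 1][1]

    def total(self, sales_tax):
        # sales_tax is assumed to be a percentage, so 0.0 leaves the sum as-is
        subtotal = sum(price for _, price in self.items)
        return subtotal * (1.0 + sales_tax / 100.0)


cart = ShoppingCart()
cart.add("tuna sandwich", 15.00)
print(cart.item(1), cart.price(1), cart.total(0.0))
```

Saved as `cart.py` next to the scenario files, a class of this shape would let `from cart import *` succeed, and out-of-range positions would raise `IndexError` from the underlying list.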

With the following steps, we will explore capturing various test scenarios in doctest files and then running them.

- Create a file called

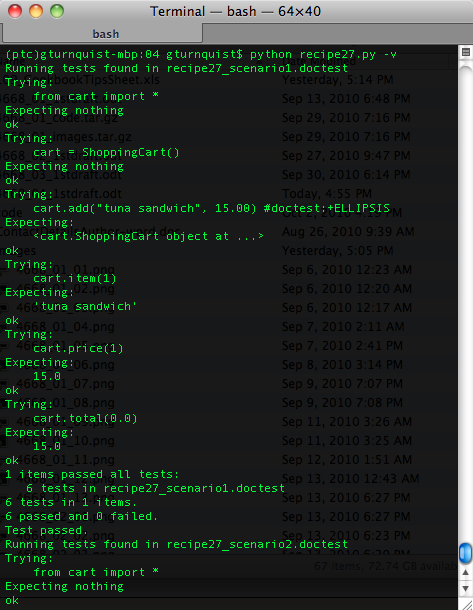

recipe27_scenario1.doctestthat contains doctest-style type tests to exercise the shopping cart.This is a way to exercise the shopping cart from a pure text file containing tests. First, we need to import the modules >>> from cart import * Now, we can create an instance of a cart >>> cart = ShoppingCart() Here we use the API to add an object. Because it returns back the cart, we have to deal with the output >>> cart.add("tuna sandwich", 15.00) #doctest:+ELLIPSIS <cart.ShoppingCart object at ...> Now we can check some other outputs >>> cart.item(1) 'tuna sandwich' >>> cart.price(1) 15.0 >>> cart.total(0.0) 15.0 - Create another scenario in the file

recipe27_scenario2.doctestthat tests the boundaries of the shopping cart.This is a way to exercise the shopping cart from a pure text file containing tests. First, we need to import the modules >>> from cart import * Now, we can create an instance of a cart >>> cart = ShoppingCart() Now we try to access an item out of range, expecting an exception. >>> cart.item(5) Traceback (most recent call last): ... IndexError: list index out of range We also expect the price method to fail in a similar way. >>> cart.price(-2) Traceback (most recent call last): ... IndexError: list index out of range

- Create a file called

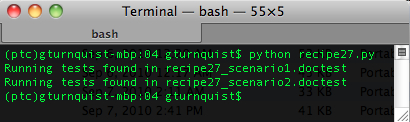

recipe27.pyand put in the test runner code that finds files ending in.doctestand runs them through doctest'stestfilemethod.if __name__ == "__main__": import doctest from glob import glob for file in glob("recipe27*.doctest"): print "Running tests found in %s" % file doctest.testfile(file) - Run the test suite.

- Run the test suite with

-v.
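The runner in the steps above discards the value that `doctest.testfile` returns. As a hedged variation (not the recipe's own code), the sketch below adds up the `failed` and `attempted` counts from each returned `TestResults` so a single summary line can be printed at the end. The function name `run_scenarios` is hypothetical.

```python
import doctest
from glob import glob


def run_scenarios(pattern="recipe27*.doctest"):
    """Run every scenario file matching pattern; return (failed, attempted)."""
    failed = attempted = 0
    for path in sorted(glob(pattern)):
        # module_relative=False treats the path as an ordinary OS path
        result = doctest.testfile(path, module_relative=False)
        failed += result.failed
        attempted += result.attempted
    return failed, attempted


failed, attempted = run_scenarios()
print("%d of %d examples failed" % (failed, attempted))
```

No extra code is needed for the `-v` step: when its `verbose` argument is left at the default, `doctest.testfile` switches to verbose output if `-v` appears in `sys.argv`.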

Doctest provides the convenient testfile function, which exercises a block of pure text as if it were contained inside a docstring. This is why no quotation marks are needed here, unlike when we had doctests inside docstrings. The text files aren't docstrings.
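To make this concrete, here is a small self-contained sketch, independent of the shopping cart. It writes a scenario to a temporary file and hands it to `doctest.testfile`. The helper name `run_scenario_text` and the scenario contents are illustrative assumptions. The Python string below is only a convenient way to create the file; the file on disk holds plain text with no surrounding quotes.

```python
import doctest
import os
import tempfile

# Contents of the scenario file: prose plus two interpreter examples.
SCENARIO = (
    "Plain prose is ignored; only the prompt lines are executed.\n"
    "\n"
    ">>> total = sum([15.0, 4.5])\n"
    ">>> total\n"
    "19.5\n"
)


def run_scenario_text(text):
    """Write text to a temporary file and run it through doctest.testfile."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "scenario.doctest")
        with open(path, "w") as handle:
            handle.write(text)
        # module_relative=False treats the path as an ordinary OS path
        return doctest.testfile(path, module_relative=False)


results = run_scenario_text(SCENARIO)
print(results.failed, results.attempted)
```

Each `>>>` line counts as one attempted example, so this scenario reports two attempts and no failures.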

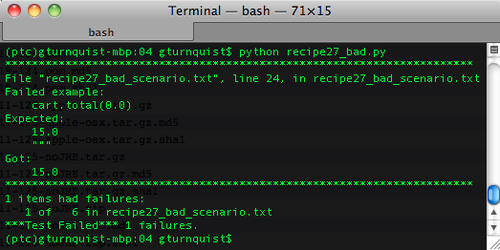

In fact, if we include triple quotes around the text, the tests won't work correctly. Let's take the first scenario, put """ at the top and bottom of the file, and save it as recipe27_bad_scenario.txt. Then let's create a file called recipe27_bad.py containing an alternate test runner that runs our bad scenario.

    if __name__ == "__main__":
        import doctest
        doctest.testfile("recipe27_bad_scenario.txt")

We get the following error message:

Doctest has treated the trailing triple quotes as part of the expected output of the last example. It's best to just leave them out.
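The effect can be reproduced without the cart. In this sketch (the helper name `count_failures` and the one-line scenario are assumptions made for illustration), the same example is run twice, once as plain text and once wrapped in triple quotes. In the wrapped version the closing quotes sit directly under the expected output, so doctest reads them as one more line of expected output.

```python
import doctest
import os
import tempfile

# The same one-example scenario, without and with wrapping triple quotes.
GOOD = ">>> 1 + 1\n2\n"
BAD = '"""\n' + GOOD + '"""\n'


def count_failures(text):
    # Write the text to a temporary file and return doctest's failure count.
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "scenario.txt")
        with open(path, "w") as handle:
            handle.write(text)
        return doctest.testfile(path, module_relative=False).failed


good_failures = count_failures(GOOD)  # no failures
bad_failures = count_failures(BAD)    # one failure; doctest prints its report
print(good_failures, bad_failures)
```

The failure report that doctest prints for the wrapped version shows the closing quotes listed under the expected output, which is the confusion described above.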

What is so great about moving docstrings into separate files? Isn't this the same thing that we were doing in the Creating testable documentation with doctest recipe discussed in Chapter 3? Yes and no. Yes, it's technically the same thing: doctest exercising blocks of code embedded in text.

But BDD is more than simply a technical solution. It is driven by the philosophy of customer-readable scenarios. BDD aims to test the behavior of the system, and that behavior is often defined by customer-oriented scenarios. Gathering these scenarios is much easier when our customer can readily understand the ones we have captured. It is easier still when the customer can see what passes and fails and, in turn, gets a realistic picture of what has been accomplished.

By decoupling our test scenarios from the code and putting them into separate files, we have the key ingredient to making readable tests for our customers using doctest.

In Chapter 3, there are several recipes that show how convenient it is to embed examples of code usage in docstrings. They are convenient because we can read the docstrings from an interactive Python shell. What do you think is different about pulling some of this out of the code into separate scenario files? Do you think there are some doctests that would be useful in docstrings and others that may serve us better in separate scenario files?