5.2 Orthogonal Subspaces

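Let $A$ be an $m \times n$ matrix and let $\mathbf{x} \in N(A)$, the null space of $A$. Since $A\mathbf{x} = \mathbf{0}$, we have

$$a_{i1}x_1 + a_{i2}x_2 + \cdots + a_{in}x_n = 0 \tag{1}$$

for $i = 1, \ldots, m$. Equation (1) says that $\mathbf{x}$ is orthogonal to the $i$th column vector of $A^T$ for $i = 1, \ldots, m$. Since $\mathbf{x}$ is orthogonal to each column vector of $A^T$, it is orthogonal to any linear combination of the column vectors of $A^T$. So if $\mathbf{y}$ is any vector in the column space of $A^T$, then $\mathbf{x}^T\mathbf{y} = 0$. Thus, each vector in $N(A)$ is orthogonal to every vector in the column space of $A^T$. When two subspaces $X$ and $Y$ of $\mathbb{R}^n$ have this property, that is, when $\mathbf{x}^T\mathbf{y} = 0$ for every $\mathbf{x} \in X$ and every $\mathbf{y} \in Y$, we say that they are orthogonal and write $X \perp Y$.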

Example 1

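Let $X$ be the subspace of $\mathbb{R}^3$ spanned by $\mathbf{e}_1$, and let $Y$ be the subspace spanned by $\mathbf{e}_2$. If $\mathbf{x} \in X$ and $\mathbf{y} \in Y$, these vectors must be of the form

$$\mathbf{x} = \begin{bmatrix} x_1 \\ 0 \\ 0 \end{bmatrix} \quad\text{and}\quad \mathbf{y} = \begin{bmatrix} 0 \\ y_2 \\ 0 \end{bmatrix}$$

Thus,

$$\mathbf{x}^T\mathbf{y} = x_1 \cdot 0 + 0 \cdot y_2 + 0 \cdot 0 = 0$$

Therefore, $X \perp Y$.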

The concept of orthogonal subspaces does not always agree with our intuitive idea of perpendicularity. For example, the floor and wall of the classroom “look” orthogonal, but the $xy$-plane and the $yz$-plane are not orthogonal subspaces. Indeed, we can think of the vectors $\mathbf{x}_1 = (1, 1, 0)^T$ and $\mathbf{x}_2 = (0, 1, 1)^T$ as lying in the $xy$- and $yz$-planes, respectively. Since

$$\mathbf{x}_1^T\mathbf{x}_2 = 1 \cdot 0 + 1 \cdot 1 + 0 \cdot 1 = 1$$

the subspaces are not orthogonal. The next example shows that the subspace corresponding to the $z$-axis is orthogonal to the subspace corresponding to the $xy$-plane.

Example 2

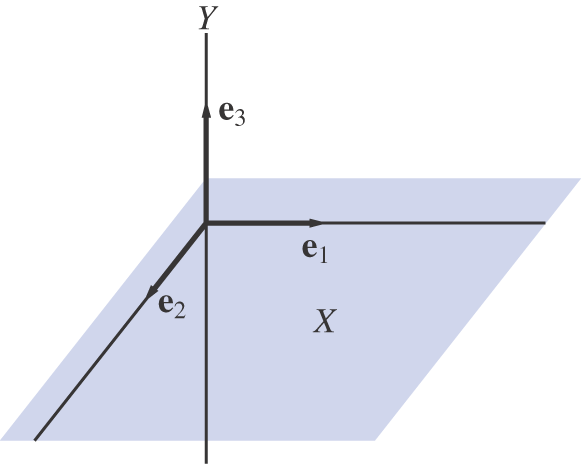

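Let $X$ be the subspace of $\mathbb{R}^3$ spanned by $\mathbf{e}_1$ and $\mathbf{e}_2$, and let $Y$ be the subspace spanned by $\mathbf{e}_3$. If $\mathbf{x} \in X$ and $\mathbf{y} \in Y$, then

$$\mathbf{x}^T\mathbf{y} = x_1 \cdot 0 + x_2 \cdot 0 + 0 \cdot y_3 = 0$$

Thus, $X \perp Y$. Furthermore, if $\mathbf{z}$ is any vector in $\mathbb{R}^3$ that is orthogonal to every vector in $Y$, then $\mathbf{z} \perp \mathbf{e}_3$, and hence

$$z_3 = \mathbf{z}^T\mathbf{e}_3 = 0$$

But if $z_3 = 0$, then $\mathbf{z} \in X$. Therefore, $X$ is the set of all vectors in $\mathbb{R}^3$ that are orthogonal to every vector in $Y$ (see Figure 5.2.1). The set of all vectors in $\mathbb{R}^n$ that are orthogonal to every vector in a subspace $Y$ is called the orthogonal complement of $Y$ and is denoted $Y^\perp$; here $X = Y^\perp$.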

Figure 5.2.1.
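The orthogonality checks in Examples 1 and 2 can be reproduced numerically. The following is a minimal sketch, not part of the original text: it relies on the fact that two subspaces are orthogonal exactly when every vector in a spanning set of one is orthogonal to every vector in a spanning set of the other. The helper names `dot` and `subspaces_orthogonal` are hypothetical, chosen for illustration.

```python
def dot(u, v):
    """Scalar product u^T v of two vectors of equal length."""
    return sum(a * b for a, b in zip(u, v))


def subspaces_orthogonal(span_x, span_y):
    """True when every spanning vector of X is orthogonal to every spanning vector of Y.

    By linearity of the scalar product, this is enough to conclude X is orthogonal to Y.
    """
    return all(dot(x, y) == 0 for x in span_x for y in span_y)


# Standard basis vectors of R^3.
e1, e2, e3 = (1, 0, 0), (0, 1, 0), (0, 0, 1)

x_axis_vs_y_axis = subspaces_orthogonal([e1], [e2])            # two coordinate axes
xy_vs_yz_plane = subspaces_orthogonal([e1, e2], [e2, e3])      # two coordinate planes
xy_plane_vs_z_axis = subspaces_orthogonal([e1, e2], [e3])      # a plane and an axis

print(x_axis_vs_y_axis, xy_vs_yz_plane, xy_plane_vs_z_axis)
```

The two coordinate planes fail the test because they share the vector $\mathbf{e}_2$, which is not orthogonal to itself.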

Note

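The subspaces $X = \operatorname{Span}(\mathbf{e}_1)$ and $Y = \operatorname{Span}(\mathbf{e}_2)$ of $\mathbb{R}^3$ given in Example 1 are orthogonal, but they are not orthogonal complements. Indeed,

$$X^\perp = \operatorname{Span}(\mathbf{e}_2, \mathbf{e}_3) \quad\text{and}\quad Y^\perp = \operatorname{Span}(\mathbf{e}_1, \mathbf{e}_3)$$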

Remarks

1. If $X$ and $Y$ are orthogonal subspaces of $\mathbb{R}^n$, then $X \cap Y = \{\mathbf{0}\}$.

2. If $Y$ is a subspace of $\mathbb{R}^n$, then $Y^\perp$ is also a subspace of $\mathbb{R}^n$.

Proof of (1)

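If $\mathbf{x} \in X \cap Y$ and $X \perp Y$, then $\|\mathbf{x}\|^2 = \mathbf{x}^T\mathbf{x} = 0$ and hence $\mathbf{x} = \mathbf{0}$.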

∎

Proof of (2)

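If $\mathbf{x} \in Y^\perp$ and $\alpha$ is a scalar, then for any $\mathbf{y} \in Y$,

$$(\alpha\mathbf{x})^T\mathbf{y} = \alpha(\mathbf{x}^T\mathbf{y}) = \alpha \cdot 0 = 0$$

Therefore, $\alpha\mathbf{x} \in Y^\perp$. If $\mathbf{x}_1$ and $\mathbf{x}_2$ are elements of $Y^\perp$, then

$$(\mathbf{x}_1 + \mathbf{x}_2)^T\mathbf{y} = \mathbf{x}_1^T\mathbf{y} + \mathbf{x}_2^T\mathbf{y} = 0 + 0 = 0$$

for each $\mathbf{y} \in Y$. Hence, $\mathbf{x}_1 + \mathbf{x}_2 \in Y^\perp$. Therefore, $Y^\perp$ is a subspace of $\mathbb{R}^n$.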

∎

Fundamental Subspaces

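Let $A$ be an $m \times n$ matrix. We saw in Chapter 3 that a vector $\mathbf{b} \in \mathbb{R}^m$ is in the column space of $A$ if and only if $\mathbf{b} = A\mathbf{x}$ for some $\mathbf{x} \in \mathbb{R}^n$. If we think of $A$ as a linear transformation mapping $\mathbb{R}^n$ into $\mathbb{R}^m$, then the column space of $A$ is the same as the range of $A$. Let us denote the range of $A$ by $R(A)$. Thus,

$$R(A) = \{\mathbf{b} \in \mathbb{R}^m \mid \mathbf{b} = A\mathbf{x} \text{ for some } \mathbf{x} \in \mathbb{R}^n\} = \text{the column space of } A$$

The column space of $A^T$, $R(A^T)$, is a subspace of $\mathbb{R}^n$:

$$R(A^T) = \{\mathbf{y} \in \mathbb{R}^n \mid \mathbf{y} = A^T\mathbf{x} \text{ for some } \mathbf{x} \in \mathbb{R}^m\}$$

The column space of $A^T$ is essentially the same as the row space of $A$, except that it consists of vectors in $\mathbb{R}^n$ ($n \times 1$ matrices) rather than $n$-tuples. Thus, $\mathbf{y} \in R(A^T)$ if and only if $\mathbf{y}^T$ is in the row space of $A$. We have seen that $R(A^T) \perp N(A)$. The following theorem shows that $N(A)$ is actually the orthogonal complement of $R(A^T)$.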

Theorem 5.2.1 Fundamental Subspaces Theorem

If $A$ is an $m \times n$ matrix, then $N(A) = R(A^T)^\perp$ and $N(A^T) = R(A)^\perp$.

Proof

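On the one hand, we have already seen that $N(A) \perp R(A^T)$, and this implies that $N(A) \subset R(A^T)^\perp$. On the other hand, if $\mathbf{x}$ is any vector in $R(A^T)^\perp$, then $\mathbf{x}$ is orthogonal to each of the column vectors of $A^T$ and, consequently, $A\mathbf{x} = \mathbf{0}$. Thus, $\mathbf{x}$ must be an element of $N(A)$ and hence $N(A) = R(A^T)^\perp$. This proof does not depend on the dimensions of $A$. In particular, the result will also hold for the matrix $B = A^T$. Consequently,

$$N(A^T) = N(B) = R(B^T)^\perp = R(A)^\perp$$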

∎

Example 3

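Let

$$A = \begin{bmatrix} 1 & 0 \\ 2 & 0 \end{bmatrix}$$

The column space of $A$ consists of all vectors of the form

$$\begin{bmatrix} \alpha \\ 2\alpha \end{bmatrix} = \alpha \begin{bmatrix} 1 \\ 2 \end{bmatrix}$$

Note that if $\mathbf{x}$ is any vector in $\mathbb{R}^2$ and $\mathbf{b} = A\mathbf{x}$, then

$$\mathbf{b} = \begin{bmatrix} 1 & 0 \\ 2 & 0 \end{bmatrix} \begin{bmatrix} x_1 \\ x_2 \end{bmatrix} = \begin{bmatrix} x_1 \\ 2x_1 \end{bmatrix} = x_1 \begin{bmatrix} 1 \\ 2 \end{bmatrix}$$

The null space of $A^T$ consists of all vectors of the form $\beta(-2, 1)^T$. Since $(1, 2)^T$ and $(-2, 1)^T$ are orthogonal, it follows that every vector in $R(A)$ will be orthogonal to every vector in $N(A^T)$. The same relationship holds between $R(A^T)$ and $N(A)$. $R(A^T)$ consists of vectors of the form $\alpha\mathbf{e}_1$, and $N(A)$ consists of all vectors of the form $\beta\mathbf{e}_2$. Since $\mathbf{e}_1$ and $\mathbf{e}_2$ are orthogonal, it follows that each vector in $R(A^T)$ is orthogonal to every vector in $N(A)$.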

Theorem 5.2.1 is one of the most important theorems in this chapter. In Section 5.3, we will see that the result provides a key to solving least squares problems. For the present, we will use Theorem 5.2.1 to prove the following theorem, which, in turn, will be used to establish two more important results about orthogonal subspaces.

Theorem 5.2.2

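If $S$ is a subspace of $\mathbb{R}^n$, then $\dim S + \dim S^\perp = n$. Furthermore, if $\{\mathbf{x}_1, \ldots, \mathbf{x}_r\}$ is a basis for $S$ and $\{\mathbf{x}_{r+1}, \ldots, \mathbf{x}_n\}$ is a basis for $S^\perp$, then $\{\mathbf{x}_1, \ldots, \mathbf{x}_r, \mathbf{x}_{r+1}, \ldots, \mathbf{x}_n\}$ is a basis for $\mathbb{R}^n$.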

Proof

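If $S = \{\mathbf{0}\}$, then $S^\perp = \mathbb{R}^n$ and

$$\dim S + \dim S^\perp = 0 + n = n$$

If $S \neq \{\mathbf{0}\}$, then let $\{\mathbf{x}_1, \ldots, \mathbf{x}_r\}$ be a basis for $S$ and define $X$ to be an $r \times n$ matrix whose $i$th row is $\mathbf{x}_i^T$ for each $i$. By construction, the matrix $X$ has rank $r$ and $R(X^T) = S$. By Theorem 5.2.1,

$$S^\perp = R(X^T)^\perp = N(X)$$

It follows from Theorem 3.6.5 that

$$\dim S^\perp = \dim N(X) = n - r$$

To show that $\{\mathbf{x}_1, \ldots, \mathbf{x}_r, \mathbf{x}_{r+1}, \ldots, \mathbf{x}_n\}$ is a basis for $\mathbb{R}^n$, it suffices to show that the $n$ vectors are linearly independent. Suppose that

$$c_1\mathbf{x}_1 + \cdots + c_r\mathbf{x}_r + c_{r+1}\mathbf{x}_{r+1} + \cdots + c_n\mathbf{x}_n = \mathbf{0}$$

Let $\mathbf{y} = c_1\mathbf{x}_1 + \cdots + c_r\mathbf{x}_r$ and $\mathbf{z} = c_{r+1}\mathbf{x}_{r+1} + \cdots + c_n\mathbf{x}_n$. We then have

$$\mathbf{y} + \mathbf{z} = \mathbf{0}, \qquad \mathbf{y} = -\mathbf{z}$$

Thus, $\mathbf{y}$ and $\mathbf{z}$ are both elements of $S \cap S^\perp$. But $S \cap S^\perp = \{\mathbf{0}\}$. Therefore,

$$c_1\mathbf{x}_1 + \cdots + c_r\mathbf{x}_r = \mathbf{0}, \qquad c_{r+1}\mathbf{x}_{r+1} + \cdots + c_n\mathbf{x}_n = \mathbf{0}$$

Since $\mathbf{x}_1, \ldots, \mathbf{x}_r$ are linearly independent,

$$c_1 = c_2 = \cdots = c_r = 0$$

Similarly, $\mathbf{x}_{r+1}, \ldots, \mathbf{x}_n$ are linearly independent and hence

$$c_{r+1} = c_{r+2} = \cdots = c_n = 0$$

So $\mathbf{x}_1, \mathbf{x}_2, \ldots, \mathbf{x}_n$ are linearly independent and form a basis for $\mathbb{R}^n$.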

∎

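Given a subspace $S$ of $\mathbb{R}^n$, we will use Theorem 5.2.2 to prove that each $\mathbf{x} \in \mathbb{R}^n$ can be expressed uniquely as a sum $\mathbf{y} + \mathbf{z}$, where $\mathbf{y} \in S$ and $\mathbf{z} \in S^\perp$. When every vector can be written uniquely in this way, we say that $\mathbb{R}^n$ is the direct sum of $S$ and $S^\perp$ and write $\mathbb{R}^n = S \oplus S^\perp$.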

Theorem 5.2.3

If $S$ is a subspace of $\mathbb{R}^n$, then

$$\mathbb{R}^n = S \oplus S^\perp$$

Proof

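The result is trivial if either $S = \{\mathbf{0}\}$ or $S = \mathbb{R}^n$. In the case where $\dim S = r$, $0 < r < n$, it follows from Theorem 5.2.2 that each vector $\mathbf{x} \in \mathbb{R}^n$ can be represented in the form

$$\mathbf{x} = c_1\mathbf{x}_1 + \cdots + c_r\mathbf{x}_r + c_{r+1}\mathbf{x}_{r+1} + \cdots + c_n\mathbf{x}_n$$

where $\{\mathbf{x}_1, \ldots, \mathbf{x}_r\}$ is a basis for $S$ and $\{\mathbf{x}_{r+1}, \ldots, \mathbf{x}_n\}$ is a basis for $S^\perp$. If we let

$$\mathbf{u} = c_1\mathbf{x}_1 + \cdots + c_r\mathbf{x}_r \quad\text{and}\quad \mathbf{v} = c_{r+1}\mathbf{x}_{r+1} + \cdots + c_n\mathbf{x}_n$$

then $\mathbf{u} \in S$, $\mathbf{v} \in S^\perp$, and $\mathbf{x} = \mathbf{u} + \mathbf{v}$. To show uniqueness, suppose that $\mathbf{x}$ can also be written as a sum $\mathbf{y} + \mathbf{z}$, where $\mathbf{y} \in S$ and $\mathbf{z} \in S^\perp$. Thus,

$$\mathbf{u} + \mathbf{v} = \mathbf{x} = \mathbf{y} + \mathbf{z}, \qquad \mathbf{u} - \mathbf{y} = \mathbf{z} - \mathbf{v}$$

But $\mathbf{u} - \mathbf{y} \in S$ and $\mathbf{z} - \mathbf{v} \in S^\perp$, so each is in $S \cap S^\perp$. Since

$$S \cap S^\perp = \{\mathbf{0}\}$$

it follows that

$$\mathbf{u} = \mathbf{y} \quad\text{and}\quad \mathbf{v} = \mathbf{z}$$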

∎
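The construction in the proof can be carried out numerically: expand $\mathbf{x}$ in the combined basis, then group the terms belonging to $S$ and to $S^\perp$. The sketch below is illustrative only. The bases `basis_s` and `basis_s_perp`, the vector `x`, and the helper names `solve` and `decompose` are assumptions introduced here, and exact rational arithmetic from Python's `fractions` module is used to avoid rounding.

```python
from fractions import Fraction


def solve(columns, x):
    """Solve c_1*columns[0] + ... + c_n*columns[n-1] = x by Gauss-Jordan elimination.

    Assumes the n given vectors form a basis for R^n, so a unique solution exists.
    """
    n = len(x)
    # Augmented matrix [x_1 ... x_n | x], stored row by row.
    m = [[Fraction(columns[j][i]) for j in range(n)] + [Fraction(x[i])]
         for i in range(n)]
    for c in range(n):
        # Bring a row with a nonzero entry in column c into pivot position.
        p = next(i for i in range(c, n) if m[i][c] != 0)
        m[c], m[p] = m[p], m[c]
        pivot = m[c][c]
        m[c] = [v / pivot for v in m[c]]
        # Clear column c in every other row.
        for i in range(n):
            if i != c:
                factor = m[i][c]
                m[i] = [a - factor * b for a, b in zip(m[i], m[c])]
    return [row[n] for row in m]


def decompose(basis_s, basis_s_perp, x):
    """Return (u, v) with u in S, v in S-perp, and x = u + v."""
    basis = basis_s + basis_s_perp
    coeffs = solve(basis, x)
    r, n = len(basis_s), len(x)
    u = [sum(coeffs[k] * basis[k][i] for k in range(r)) for i in range(n)]
    v = [sum(coeffs[k] * basis[k][i] for k in range(r, n)) for i in range(n)]
    return u, v


# Assumed example data: a plane S in R^3 and the line orthogonal to it.
basis_s = [[1, 0, 1], [0, 1, 1]]
basis_s_perp = [[-1, -1, 1]]
x = [3, 0, 0]

u, v = decompose(basis_s, basis_s_perp, x)
print(u, v)
```

For this data the coefficients are $c_1 = 2$, $c_2 = -1$, $c_3 = -1$, so $\mathbf{u} = (2, -1, 1)^T$ and $\mathbf{v} = (1, 1, -1)^T$, and one can check directly that $\mathbf{u}^T\mathbf{v} = 0$.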

Theorem 5.2.4

If $S$ is a subspace of $\mathbb{R}^n$, then $(S^\perp)^\perp = S$.

Proof

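On the one hand, if $\mathbf{x} \in S$, then $\mathbf{x}$ is orthogonal to each $\mathbf{y}$ in $S^\perp$. Therefore, $\mathbf{x} \in (S^\perp)^\perp$ and hence $S \subset (S^\perp)^\perp$. On the other hand, suppose that $\mathbf{z}$ is an arbitrary element of $(S^\perp)^\perp$. By Theorem 5.2.3, we can write $\mathbf{z}$ as a sum $\mathbf{u} + \mathbf{v}$, where $\mathbf{u} \in S$ and $\mathbf{v} \in S^\perp$. Since $\mathbf{v} \in S^\perp$, it is orthogonal to both $\mathbf{u}$ and $\mathbf{z}$. It then follows that

$$0 = \mathbf{v}^T\mathbf{z} = \mathbf{v}^T\mathbf{u} + \mathbf{v}^T\mathbf{v} = \mathbf{v}^T\mathbf{v}$$

and, consequently, $\mathbf{v} = \mathbf{0}$. Therefore, $\mathbf{z} = \mathbf{u} \in S$ and hence $S = (S^\perp)^\perp$.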

∎

It follows from Theorem 5.2.4 that if $T$ is the orthogonal complement of a subspace $S$, then $S$ is the orthogonal complement of $T$, and we may say simply that $S$ and $T$ are orthogonal complements. In particular, it follows from Theorem 5.2.1 that $N(A)$ and $R(A^T)$ are orthogonal complements of each other and that $N(A^T)$ and $R(A)$ are orthogonal complements. Hence, we may write

$$N(A)^\perp = R(A^T) \quad\text{and}\quad N(A^T)^\perp = R(A)$$

Recall that the system $A\mathbf{x} = \mathbf{b}$ is consistent if and only if $\mathbf{b} \in R(A)$. Since $R(A) = N(A^T)^\perp$, we have the following result, which may be considered a corollary to Theorem 5.2.1.

Corollary 5.2.5

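If $A$ is an $m \times n$ matrix and $\mathbf{b} \in \mathbb{R}^m$, then either there is a vector $\mathbf{x} \in \mathbb{R}^n$ such that $A\mathbf{x} = \mathbf{b}$ or there is a vector $\mathbf{y} \in \mathbb{R}^m$ such that $A^T\mathbf{y} = \mathbf{0}$ and $\mathbf{y}^T\mathbf{b} \neq 0$.

Corollary 5.2.5 is illustrated in Figure 5.2.2 for the case where $R(A)$ is a two-dimensional subspace of $\mathbb{R}^3$. The angle $\theta$ in the figure will be a right angle if and only if $\mathbf{b} \in R(A)$.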

Figure 5.2.2.
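The two alternatives in Corollary 5.2.5 can be told apart once a basis for $N(A^T)$ is known: the system is consistent exactly when $\mathbf{b}$ is orthogonal to every basis vector. The following sketch is an illustration under stated assumptions, not a general-purpose solver. The matrix `A`, the basis `left_null_basis`, and the function names are hypothetical; the basis is supplied by hand and only verified, not computed.

```python
def dot(u, v):
    """Scalar product u^T v."""
    return sum(a * b for a, b in zip(u, v))


def mat_t_vec(A, y):
    """Compute A^T y without forming the transpose explicitly."""
    return [sum(A[i][j] * y[i] for i in range(len(A))) for j in range(len(A[0]))]


def alternative(A, left_null_basis, b):
    """Decide which case of the corollary applies to b.

    Returns ("consistent", None) when b is orthogonal to N(A^T), so b is in R(A).
    Otherwise returns ("inconsistent", y) with A^T y = 0 and y^T b != 0.
    """
    for y in left_null_basis:
        if any(c != 0 for c in mat_t_vec(A, y)):
            raise ValueError("supplied vector is not in N(A^T)")
        if dot(y, b) != 0:
            return "inconsistent", y
    return "consistent", None


# Assumed example: a 3 x 3 matrix of rank 2, so N(A^T) is one-dimensional.
A = [[1, 1, 2],
     [0, 1, 1],
     [1, 3, 4]]
left_null_basis = [[-1, -2, 1]]   # assumed basis for N(A^T), checked inside alternative()

in_range = alternative(A, left_null_basis, [2, 1, 4])       # sum of the first two columns of A
not_in_range = alternative(A, left_null_basis, [1, 0, 0])

print(in_range)
print(not_in_range)
```

In the second call the returned vector $\mathbf{y}$ acts as a certificate that no solution exists: any $\mathbf{b} = A\mathbf{x}$ would satisfy $\mathbf{y}^T\mathbf{b} = (A^T\mathbf{y})^T\mathbf{x} = 0$.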

Example 4

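Let

$$A = \begin{bmatrix} 1 & 1 & 2 \\ 0 & 1 & 1 \\ 1 & 3 & 4 \end{bmatrix}$$

Find bases for $N(A)$, $R(A^T)$, $N(A^T)$, and $R(A)$.

SOLUTION

We can find bases for $N(A)$ and $R(A^T)$ by transforming $A$ into reduced row echelon form:

$$\begin{bmatrix} 1 & 1 & 2 \\ 0 & 1 & 1 \\ 1 & 3 & 4 \end{bmatrix} \to \begin{bmatrix} 1 & 1 & 2 \\ 0 & 1 & 1 \\ 0 & 2 & 2 \end{bmatrix} \to \begin{bmatrix} 1 & 0 & 1 \\ 0 & 1 & 1 \\ 0 & 0 & 0 \end{bmatrix}$$

Since $(1, 0, 1)$ and $(0, 1, 1)$ form a basis for the row space of $A$, it follows that $(1, 0, 1)^T$ and $(0, 1, 1)^T$ form a basis for $R(A^T)$. If $\mathbf{x} \in N(A)$, it follows from the reduced row echelon form of $A$ that

$$x_1 + x_3 = 0, \qquad x_2 + x_3 = 0$$

Thus,

$$x_1 = x_2 = -x_3$$

Setting $x_3 = \alpha$, we see that $N(A)$ consists of all vectors of the form $\alpha(-1, -1, 1)^T$. Note that $(-1, -1, 1)^T$ is orthogonal to $(1, 0, 1)^T$ and $(0, 1, 1)^T$.

To find bases for $R(A)$ and $N(A^T)$, transform $A^T$ to reduced row echelon form.

$$A^T = \begin{bmatrix} 1 & 0 & 1 \\ 1 & 1 & 3 \\ 2 & 1 & 4 \end{bmatrix} \to \begin{bmatrix} 1 & 0 & 1 \\ 0 & 1 & 2 \\ 0 & 1 & 2 \end{bmatrix} \to \begin{bmatrix} 1 & 0 & 1 \\ 0 & 1 & 2 \\ 0 & 0 & 0 \end{bmatrix}$$

Thus, $(1, 0, 1)^T$ and $(0, 1, 2)^T$ form a basis for $R(A)$. If $\mathbf{x} \in N(A^T)$, then $x_1 = -x_3$ and $x_2 = -2x_3$. Hence, $N(A^T)$ is the subspace of $\mathbb{R}^3$ spanned by $(-1, -2, 1)^T$. Note that $(-1, -2, 1)^T$ is orthogonal to $(1, 0, 1)^T$ and $(0, 1, 2)^T$.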
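The row reduction in this example can be automated. The sketch below is a minimal illustration using exact rational arithmetic; the matrix `A` is an assumption, chosen so that its reduced row echelon form has the nonzero rows $(1, 0, 1)$ and $(0, 1, 1)$ quoted in the solution, and the function names `rref`, `null_space_basis`, and `row_space_basis` are hypothetical helpers rather than calls into any library.

```python
from fractions import Fraction


def rref(rows):
    """Return (R, pivots): the reduced row echelon form and its pivot column indices."""
    m = [[Fraction(v) for v in row] for row in rows]
    n_rows, n_cols = len(m), len(m[0])
    pivots, r = [], 0
    for c in range(n_cols):
        if r == n_rows:
            break
        # Look for a row at or below r with a nonzero entry in column c.
        p = next((i for i in range(r, n_rows) if m[i][c] != 0), None)
        if p is None:
            continue  # column c is a free column
        m[r], m[p] = m[p], m[r]
        pivot = m[r][c]
        m[r] = [v / pivot for v in m[r]]
        for i in range(n_rows):
            if i != r:
                factor = m[i][c]
                m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
    return m, pivots


def null_space_basis(rows):
    """One basis vector of N(A) per free column, read off from the RREF."""
    R, pivots = rref(rows)
    n_cols = len(rows[0])
    basis = []
    for free in (c for c in range(n_cols) if c not in pivots):
        v = [Fraction(0)] * n_cols
        v[free] = Fraction(1)
        for row_idx, pc in enumerate(pivots):
            v[pc] = -R[row_idx][free]
        basis.append(v)
    return basis


def row_space_basis(rows):
    """Nonzero rows of the RREF; as column vectors they form a basis for R(A^T)."""
    R, pivots = rref(rows)
    return R[:len(pivots)]


def transpose(rows):
    return [list(col) for col in zip(*rows)]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


# Assumed matrix for illustration.
A = [[1, 1, 2],
     [0, 1, 1],
     [1, 3, 4]]

row_basis = row_space_basis(A)                     # basis for R(A^T)
null_basis = null_space_basis(A)                   # basis for N(A)
col_basis = row_space_basis(transpose(A))          # basis for R(A)
left_null_basis = null_space_basis(transpose(A))   # basis for N(A^T)

# Theorem 5.2.1: N(A) is orthogonal to R(A^T), and N(A^T) is orthogonal to R(A).
checks = [dot(x, y) for x in null_basis for y in row_basis]
checks += [dot(x, y) for x in left_null_basis for y in col_basis]
print(null_basis, left_null_basis, checks)
```

The dimension counts also agree with Theorem 5.2.2: each pair of bases contains $2 + 1 = 3$ vectors in total.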

We saw in Chapter 3 that the row space and the column space have the same dimension. If $A$ has rank $r$, then

$$r = \dim R(A) = \dim R(A^T)$$

Actually, $A$ can be used to establish a one-to-one correspondence between $R(A^T)$ and $R(A)$.

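We can think of an $m \times n$ matrix $A$ as a linear transformation from $\mathbb{R}^n$ to $\mathbb{R}^m$:

$$\mathbf{x} \in \mathbb{R}^n \to A\mathbf{x} \in \mathbb{R}^m$$

Since $R(A^T)$ and $N(A)$ are orthogonal complements in $\mathbb{R}^n$,

$$\mathbb{R}^n = R(A^T) \oplus N(A)$$

Each vector $\mathbf{x} \in \mathbb{R}^n$ can be written as a sum

$$\mathbf{x} = \mathbf{y} + \mathbf{z}, \qquad \mathbf{y} \in R(A^T), \quad \mathbf{z} \in N(A)$$

It follows that

$$A\mathbf{x} = A\mathbf{y} + A\mathbf{z} = A\mathbf{y} \qquad \text{for each } \mathbf{x} \in \mathbb{R}^n$$

and hence

$$R(A) = \{A\mathbf{x} \mid \mathbf{x} \in \mathbb{R}^n\} = \{A\mathbf{y} \mid \mathbf{y} \in R(A^T)\}$$

Thus, if we restrict the domain of $A$ to $R(A^T)$, then $A$ maps $R(A^T)$ onto $R(A)$. Furthermore, the mapping is one-to-one. Indeed, if $\mathbf{x}_1, \mathbf{x}_2 \in R(A^T)$ and

$$A\mathbf{x}_1 = A\mathbf{x}_2$$

then

$$A(\mathbf{x}_1 - \mathbf{x}_2) = \mathbf{0}$$

and hence

$$\mathbf{x}_1 - \mathbf{x}_2 \in R(A^T) \cap N(A)$$

Since $R(A^T) \cap N(A) = \{\mathbf{0}\}$, it follows that $\mathbf{x}_1 = \mathbf{x}_2$. Therefore, we can think of $A$ as determining a one-to-one correspondence between $R(A^T)$ and $R(A)$. Since each $\mathbf{b} \in R(A)$ corresponds to exactly one $\mathbf{y} \in R(A^T)$, we can define an inverse transformation from $R(A)$ to $R(A^T)$. Indeed, every $m \times n$ matrix $A$ is invertible when viewed as a linear transformation from $R(A^T)$ to $R(A)$.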

Example 5

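Let

$$A = \begin{bmatrix} 2 & 0 & 0 \\ 0 & 3 & 0 \end{bmatrix}$$

$R(A^T)$ is spanned by $\mathbf{e}_1$ and $\mathbf{e}_2$, and $N(A)$ is spanned by $\mathbf{e}_3$. Any vector $\mathbf{x} \in \mathbb{R}^3$ can be written as a sum

$$\mathbf{x} = \mathbf{y} + \mathbf{z}$$

where

$$\mathbf{y} = (x_1, x_2, 0)^T \in R(A^T) \quad\text{and}\quad \mathbf{z} = (0, 0, x_3)^T \in N(A)$$

If we restrict ourselves to vectors $\mathbf{y} \in R(A^T)$, then

$$\mathbf{y} = \begin{bmatrix} x_1 \\ x_2 \\ 0 \end{bmatrix} \to A\mathbf{y} = \begin{bmatrix} 2x_1 \\ 3x_2 \end{bmatrix}$$

In this case, $R(A) = \mathbb{R}^2$ and the inverse transformation from $R(A)$ to $R(A^T)$ is defined by

$$\mathbf{b} = \begin{bmatrix} b_1 \\ b_2 \end{bmatrix} \to \begin{bmatrix} \tfrac{1}{2}b_1 \\ \tfrac{1}{3}b_2 \\ 0 \end{bmatrix}$$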
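The restricted correspondence between $R(A^T)$ and $R(A)$ can be traced step by step in code. This sketch is illustrative only and assumes a $2 \times 3$ matrix `A` whose row space is $\operatorname{Span}(\mathbf{e}_1, \mathbf{e}_2)$ and whose null space is $\operatorname{Span}(\mathbf{e}_3)$; the helpers `apply`, `split`, and `restricted_inverse` are hypothetical names written for this particular matrix, not a general construction.

```python
from fractions import Fraction

# Assumed matrix for illustration: R(A^T) = Span(e1, e2), N(A) = Span(e3).
A = [[2, 0, 0],
     [0, 3, 0]]


def apply(A, x):
    """Matrix-vector product A x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


def split(x):
    """Split x in R^3 into y in R(A^T) and z in N(A), so that x = y + z."""
    return [x[0], x[1], 0], [0, 0, x[2]]


def restricted_inverse(b):
    """Send b in R(A) = R^2 to the unique y in R(A^T) with A y = b (for this A only)."""
    return [Fraction(b[0], 2), Fraction(b[1], 3), 0]


x = [4, 9, 7]
y, z = split(x)

b = apply(A, x)                 # image of x
b_from_y = apply(A, y)          # image of its R(A^T) component
recovered = restricted_inverse(b)

print(b, b_from_y, recovered)
```

The null space component $\mathbf{z}$ contributes nothing to $A\mathbf{x}$, so the inverse transformation recovers $\mathbf{y}$ rather than the original $\mathbf{x}$.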