Chapter 3

Synchronization Primitives

3.1 Introduction

All of our previous solutions to the mutual exclusion problem were wasteful in one regard. If a process is unable to enter the critical section, it repeatedly checks for the entry condition to be true. While a process is doing this, no useful work is accomplished. This way of waiting is called busy wait. Instead of checking the entry condition repeatedly, if the process checked the condition only when it could have become true, it would not waste CPU cycles. Accomplishing this requires support from the operating system.

In this chapter we introduce synchronization primitives that avoid busy wait. Synchronization primitives are used for mutual exclusion as well as to provide order between various operations by different threads. Although there are many types of synchronization constructs in various programming languages, two of them are most prevalent: semaphores and monitors. We discuss these constructs in this chapter.

3.2 Semaphores

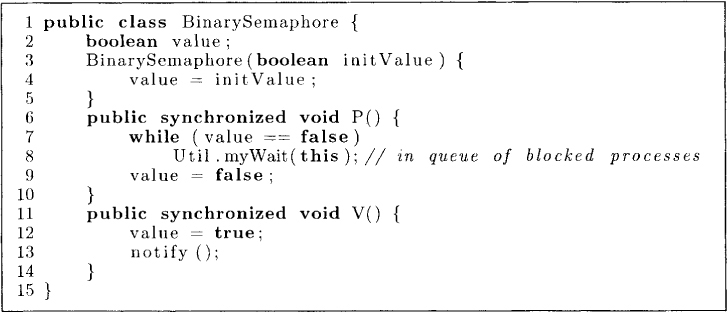

Dijkstra proposed the concept of semaphore that solves the problem of busy wait. A semaphore has two fields, its value and a queue of blocked processes, and two operations associated with it—P() and V(). The semantics of a binary semaphore is shown in Figure 3.1. The value of a semaphore (or a binary semaphore) can be only false or true. The queue of blocked processes is initially empty and a process may add itself to the queue when it makes a call to P(). When a process calls P() and value is true, then the value of the semaphore becomes false. However, if the value of the semaphore is false, then the process gets blocked at line 7 until it becomes true. The invocation of Util.myWait() at line 8 achieves this. The class Util is shown in the appendix, but for now simply assume that this call inserts the caller process into the queue of blocked processes.

When the value becomes true, the process can make it false at, line 9 and return from P(). The call to V() makes the value true and also notifies a process if the queue of processes sleeping on that semaphore is nonempty.

Figure 3.1: Binary semaphore

Now, mutual exclusion is almost trivial to implement:

BinarySemaphore mutex = new BinarySemaphore(true) ;

mutex.P() ;

criticalSection() ;

mutex.V() ;

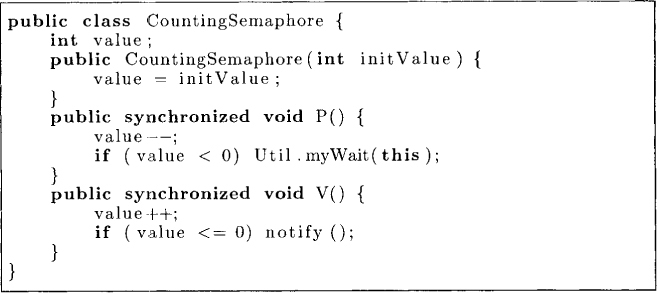

Another variant of semaphore allows it to take arbitrary integer as its value. These semaphores are called counting semaphores. Their semantics is shown in Figure 3.2.

Semaphores can be used to solve a wide variety of synchronization problems. Note that Java does not provide semaphores as basic language construct, but they can easily be implemented in Java using the idea of monitors, which we will cover later. For now we simply assume that semaphores are available to us and solve synchronization problems using them.

Figure 3.2: Counting semaphore

3.2.1 The Producer-Consumer Problem

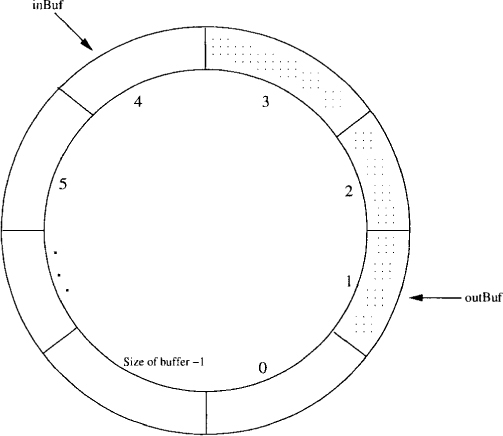

We first consider the producer-consumer problem. In this problem, there is a shared buffer between two processes called the producer and the consumer. The producer produces items that are deposited in the buffer and the consumer fetches items from the buffer and consumes them. For simplicity, we assume that our items are of type double. Since the buffer is shared, each process must access the buffer in a mutually exclusive fashion. We use an array of double of size size as our buffer. The buffer has two pointers, inBuf and outBuf, which point to the indices in the array for depositing an item and fetching an item, respectively. The variable count keeps track of the number of items currently in the buffer. Figure 3.3 shows the buffer as a circular array in which inBuf and outBuf are incremented modulo size to keep track of the slots for depositing and fetching items.

In this problem, we see that besides mutual exclusion, there are two additional synchronization constraints that need to be satisfied:

1. The consumer should not fetch any item from an empty buffer.

2. The producer should not deposit any item in the buffer if it is full. The buffer can become full if the producer is producing items at a greater rate than the rate at which the items are consumed by the consumer.

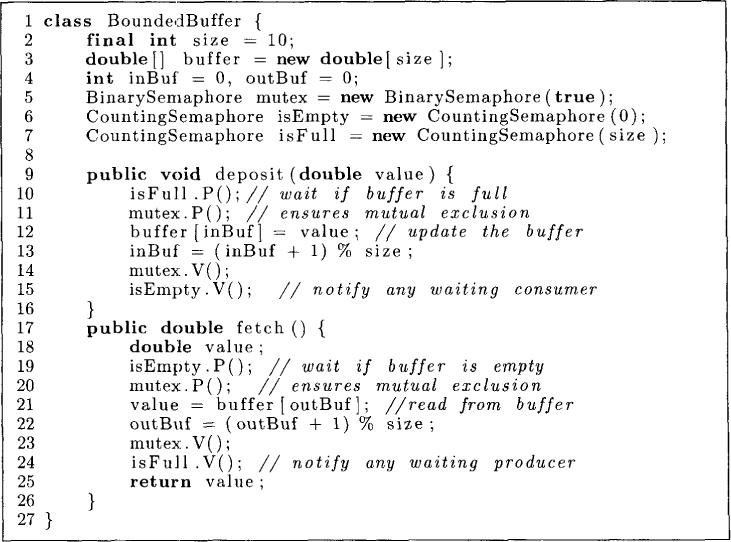

Such form of synchronization is called conditional synchronization. It requires a process to wait for some condition to become true (such as the buffer to become nonempty) before continuing its operations. The class BoundedBuffer is shown in Figure 3.4. It uses mutex semaphore to ensure that all shared variables are accessed in mutually exclusive fashion. The counting semaphore isFull is used for making a producer wait in case the buffer is full, and the semaphore isEmpty is used to make a consumer wait when the buffer is empty.

Figure 3.3: A shared buffer implemented with a circular array

In the method deposit, line 10 checks whether the buffer is full. If it is, the process making call waits using the semaphore isFull. Note that this semaphore has been initialized to the value size, and therefore in absence of a consumer, first size calls to isFull.P() do not block. At this point, the buffer would be full and any call to isFull.P() will block. If the call to isFull.P() does not block, then we enter the critical section to access the shared buffer. The call mutex.P() at line 11 serves as entry to the critical section, and mutex.V() serves as the exit from the critical section. Once inside the critical section, we deposit the value in buffer using the pointer inBuf at line 12 (see Figure 3.4). Line 15 makes a call to isEmpty.V() to wake up any consumer that may be waiting because the buffer was empty. The method fetch is dual of the method deposit.

Figure 3.4: Bounded buffer using semaphores

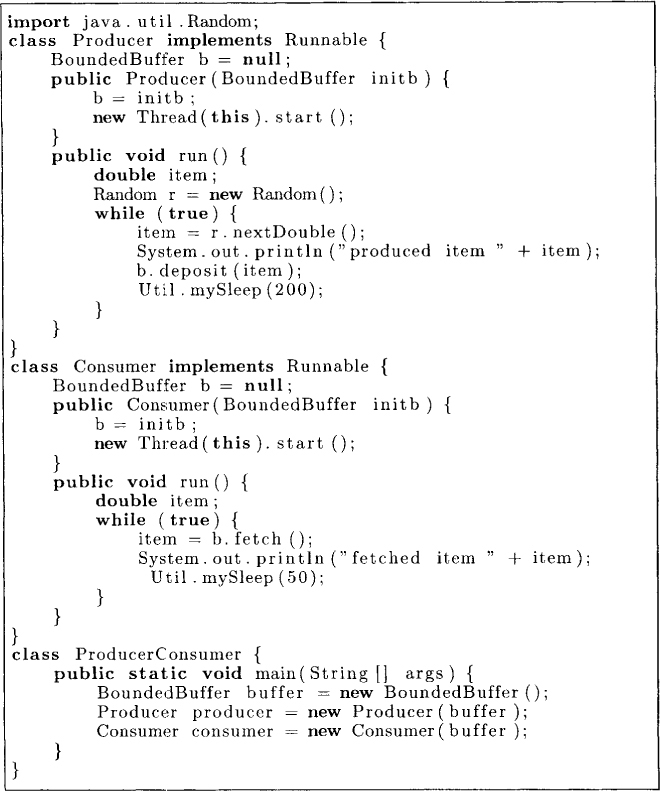

The class BoundedBuffer can be exercised through the producer-consumer program shown in Figure 3.5. This program starts a Producer thread and a Consumer thread, repeatedly making calls to deposit and fetch, respectively.

3.2.2 The Reader-Writer Problem

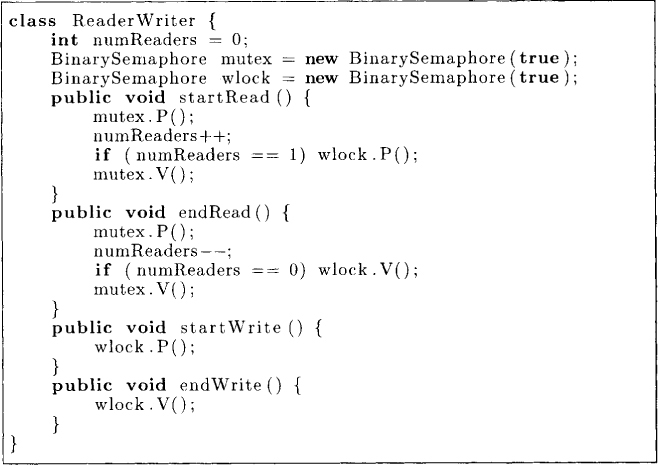

Next we show the solution to the reader-writer problem. This problem requires us to design a protocol to coordinate access to a shared database. The requirements are as follows:

1. No read-write conflict: The protocol should ensure that a reader and a writer do not access the database concurrently.

2. No write-write conflict: The protocol should ensure that two writers do not access the database concurrently.

Further, we would like multiple readers to be able to access the database concurrently. A solution using semaphores is shown in Figure 3.6. We assume that the readers follow the protocol that they call startRead before reading the database and call endRead after finishing the read. Writers follow a similar protocol. We use the wlock semaphore to ensure that either there is a single writer accessing the database or only readers are accessing it. To count the number of readers accessing the database, we use the variable numReaders.

The methods startWrite and endWrite are quite simple. Any writer that wants to use the database locks it using wlock.P(). If the database is not locked, this writer gets the access. Now no other reader or writer can access the database until this writer releases the lock using endWrite().

Now let us look at the startRead and the endRead methods. In startRead, a reader first increments numReaders. If it is the first reader (numReaders equals 1), then it needs to lock the database; otherwise, there are already other readers accessing the database and this reader can also start using it. In endRead, the variable numReaders is decremented and the last reader to leave the database unlocks it using the call wlock.V().

This protocol has the disadvantage that a writer may starve in the presence of continuously arriving readers. A starvation-free solution to the reader-writer problem is left as an exercise.

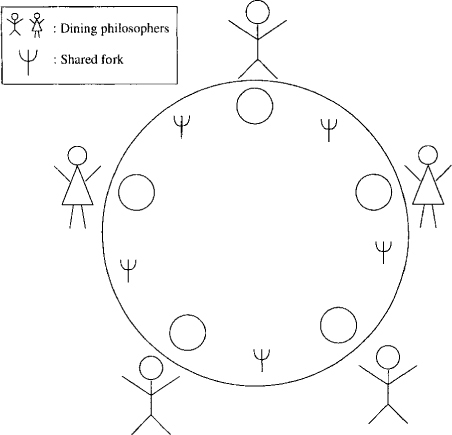

3.2.3 The Dining Philosopher Problem

This problem, first posed and solved by Dijkstra, is useful in bringing out issues associated with concurrent programming and symmetry. The dining problem consists of multiple philosophers who spend their time thinking and eating spaghetti. However, a philosopher requires shared resources, such as forks, to eat spaghetti (see Figure 3.7). We are required to devise a protocol to coordinate access to the shared resources. A computer-minded reader may substitute processes for philosophers and files for forks. The task of eating would then correspond to an operation that requires access to shared files.

Figure 3.5: Producer-consumer algorithm using semaphores

Figure 3.6: Reader-writer algorithm using semaphores

Figure 3.7: The dining philosopher problem

Figure 3.8: Dining Philosopher

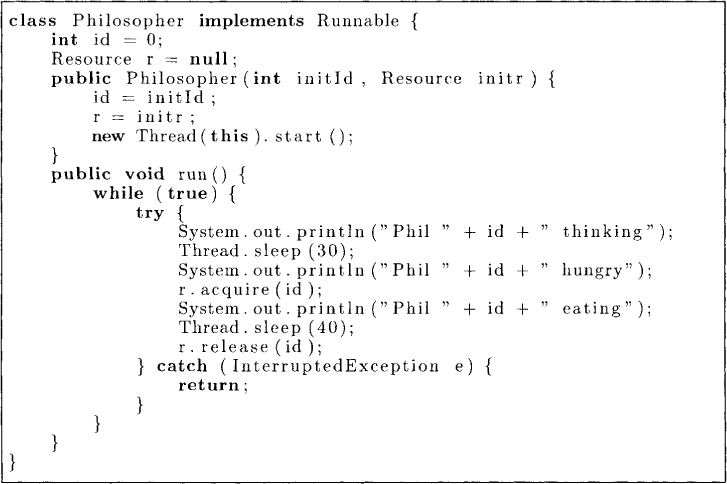

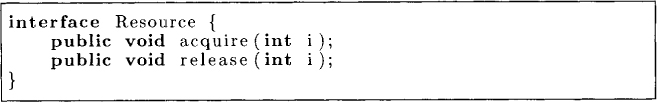

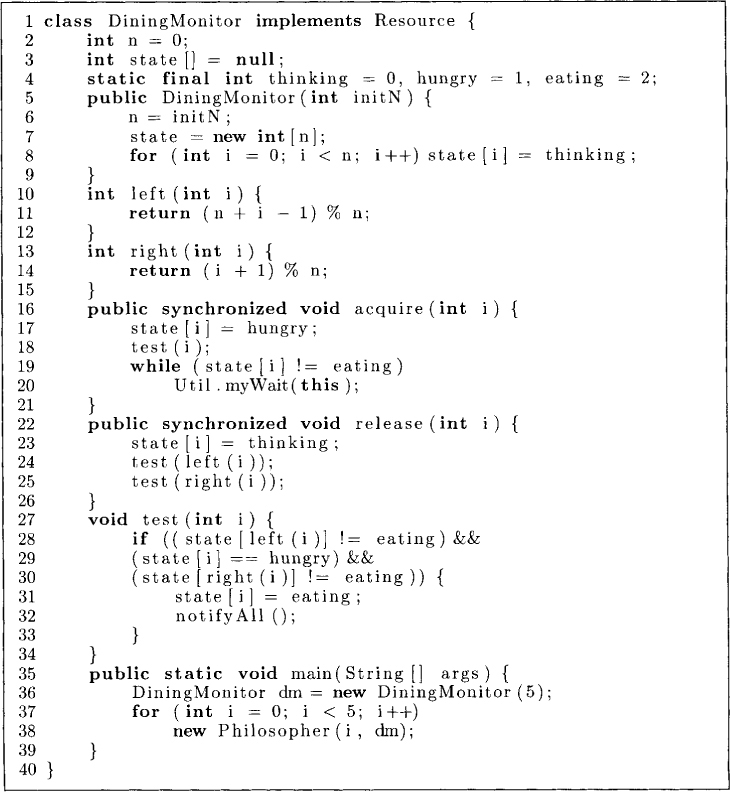

Let us first model the process of a philosopher. The class Philosopher is shown in Figure 3.8. Each philosopher Pi repeatedly cycles through the following states—thinking, hmgry, and eating. To eat, a philosopher requires resources (forks) for which it makes call to acquire(i). Thus, the protocol to acquire resources is abstracted as an interface Resource shown in Figure 3.9.

The first attempt to solve this problem is shown in Figure 3.10. It uses a binary semaphore for each of the forks. To acquire the resources for eating, a philosopher i grabs the fork on its left by using fork[i] .P() at line 12, and the fork on the right by using fork[(i+l) % n] .P() at line 13. To release the resources, the philosopher invokes V() on both the forks at lines 16 and 17.

This attempt illustrates the dangers of symmetry in a distributed system. This protocol can result in deadlock when each philosopher is able to grab its left fork and then waits for its right neighbor to release its fork.

Figure 3.9: Resource Interface

Figure 3.10: Dining philosopher using semaphores

There are many ways that one can extend the solution to ensure freedom from deadlock. For example:

1. We can introduce asymmetry by requiring one of the philosophers to grab forks in a different order (i.e., the right fork followed by the left fork instead of vice versa).

2. We can require philosophers to grab both the forks at the same time.

3. Assume that a philosopher has to stand before grabbing any fork. Allow at most four philosophers to be standing at any given time.

It is left as an exercise for the reader to design a protocol that is free from deadlocks.

The dining philosopher problem also illustrates the distinction between deadlock freedom and starvation freedom. Assume that we require a philosopher to grab both the forks at the same time. Although this eliminates deadlock, we still have the problem of a philosopher being starved because its neighbors continuously alternate in eating. The reader is invited to come up with a solution that is free from deadlock as well as starvation.

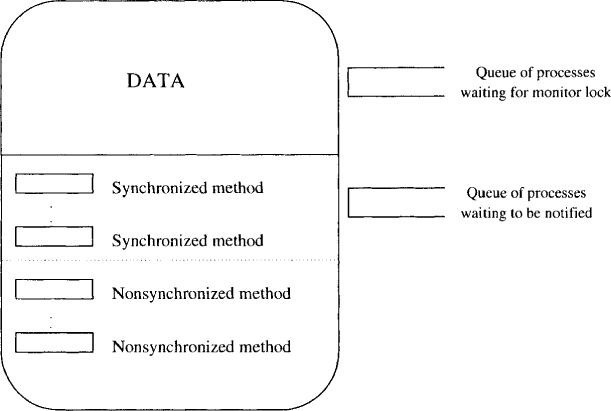

3.3 Monitors

The Monitor is a high-level object-oriented construct for synchronization in concurrent programming. A monitor can be viewed as a class that can be used in concurrent programs. As any class, a monitor has data variables and methods to manipulate that data. Because multiple threads can access the shared data at the same time, monitors support the notion of entry methods to guarantee mutual exclusion. It is guaranteed that at most one thread can be executing in any entry method at any time. Sometimes the phrase “thread t is inside the monitor” is used to denote that thread t is executing an entry method. It is clear that at most one thread can be in the monitor at any time. Thus associated with every monitor object is a queue of threads that are waiting to enter the monitor.

As we have seen before, concurrent programs also require conditional synchronization when a thread must wait for a certain condition to become true. To address conditional synchronization, the monitor construct, supports the notion of condition variables. A condition variable has two operations defined on it: wait and notify (also called a signal). For any condition variable x, any thread, say, t1, that makes a call to x.wait() is blocked and put into a queue associated with x. When another thread, say, t2, makes a call to x.notify(), if the queue associated with x is nonempty, a thread is removed from the queue and inserted into the queue of threads that are eligible to run. Since at most one thread can be in the monitor, this immediately poses a problem: which thread should continue after the notify operation—the one that called the notify method or the thread that was waiting. There are two possible answers:

1. One of the threads that was waiting on the condition variable continues execution. Monitors that follow this rule are called Hoare monitors.

2. The thread that made the notify call continues its execution. When this thread goes out of the monitor, then other threads can enter the monitor. This is the semantics followed in Java.

One advantage of Hoare’s monitor is that the thread that was notified on the condition starts its execution without intervention of any other thread. Therefore, the state in which this thread starts executing is the same as when the notify was issued. On waking up, it can assume that the condition is true. Therefore, using Hoare’s mointor, a thread’s code may be

if (!B) x.wait();

Assuming that t2 notifies only when B is true, we know that t1 can assume B on waking up. In Java-style monitor, even though t2 issues the notify, it continues its execution. Therefore, when t1 gets its turn to execute, the condition B may not be true any more. Hence, when using Java, the threads usually wait for the condition as

while (!B) x.wait():

The thread t1 can take a notify() only as a hint that B may be true. Therefore, it explicitly needs to check for truthness of B when it wakes up. If B is actually false, it issues the wait() call again.

In Java, we specify an object to be a monitor by using the keyword synchronized with its methods. To get conditional synchronization, Java provides

1. wait(): which inserts the thread in the wait queue. For simplicity, we use Util.myWait() instead of wait() in Java. The only difference is that myWait catches the InterruptedException.

2. notify(): which wakes up a thread in the wait queue.

3. notifyAll(): which wakes up all the threads in the wait queue.

Java does not have condition variables. Thus, associated with each object there is a single wait queue for conditions. This is sufficient for most programming needs. If one needs, it is also easy to simulate condition variables in Java. A pictorial representation of a Java monitor is shown in Figure 3.11. There are two queues associated with an object- a queue of threads waiting for the lock associated with the monitor and another queue of threads waiting for some condition to become true.

Figure 3.11: A pictorial view of a Java monitor

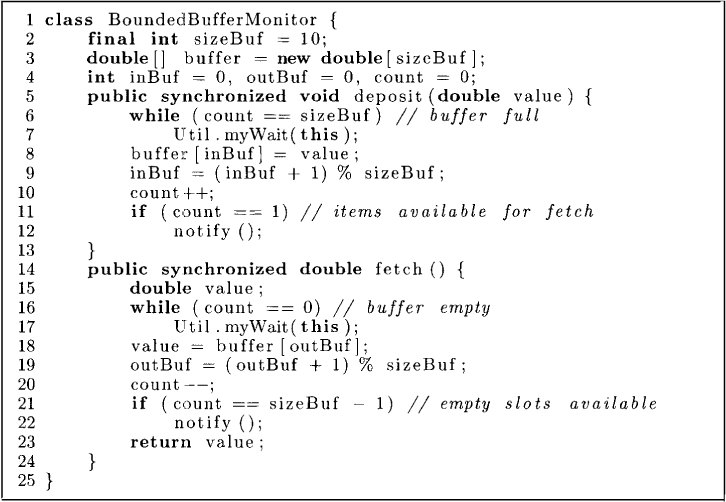

Let us solve some synchronization problems with Java monitors. We first look at the producer-consumer problem. The BoundedBufferMonitor shown in Figure 3.12 has two entry methods: deposit and fetch. This means that if a thread is executing the method deposit or fetch, then no other thread can execute deposit or fetch. The synchronized keyword at lines 5 and 14 allows mutual exclusion in access of shared variables and corresponds to acquiring the monitor lock. Let us now look at the method deposit. At line 6, if the buffer is full, (i.e., count is equal to sizeBuf), then the thread that called deposit must wait for a slot in the buffer to be consumed. Therefore, it invokes the method myWait(). When a thread waits for the condition, it goes in a queue waiting to be notified by some other thread. It also has to release the monitor lock so that other threads can enter the monitor and make the condition on which this thread is waiting true. When this thread is notified, it has to acquire the monitor lock again before continuing its execution.

Now assume that the condition in the while statement at line 6 is false. Then the value can be deposited in the buffer. The variable inBuf points to the tail of the circular buffer. It is advanced after the insertion at line 9 and the count of the number of items in the buffer is incremented at line 10. We are not really done yet. While designing a monitor, one also needs to ensure that if some thread may be waiting for a condition that may have become true, then that thread must be notified. In this case, a consumer thread may be waiting in the method fetch for some item to become available. Therefore, if count is 1, we notify any waiting thread at line 12.

The method fetch is very similar to deposit.

Figure 3.12: Bounded buffer monitor

Now let us revisit the dining philosophers problem. In the solution shown in Figure 3.13, a philosopher i uses the method test at line 18 to determine if any of neighboring philosophers is eating. If not, then this philosopher can start eating. Otherwise the philosopher must wait for the condition (state[i] == eating) at line 19 to begin eating. This condition can become true when one of the neighboring philosophers finishes eating. After eating, the philosopher invokes the method release to check at lines 24 and 25, whether the left neighbor or the right neighbor can now eat. If any of them can eat, this philosopher wakes up all the waiting philosophers by invoking notifyAll() at line 32. This solution guarantees mutual exclusion of neighboring philosophers and is also free from deadlock. However, it does not guarantee freedom from starvation. The reader should devise a protocol for starvation freedom.

3.4 Other Examples

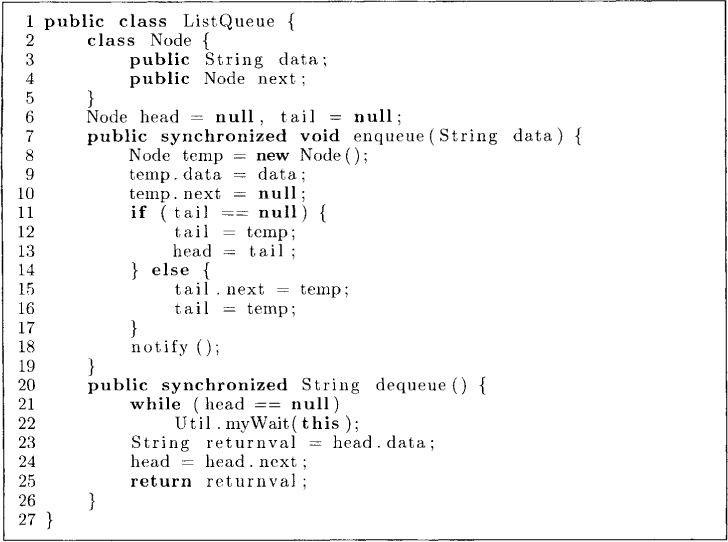

In this section we give another example of concurrent programming in Java. Figure 3.14 shows a thread-safe implementation of a queue that is based on a linked list. The class Node declared at line 2 contains a String as data and the reference to the next node in the linked list. To enqueue data, we first create a temp node at line 8. This node is inserted at the tail. If the linked list is empty, this is the only node in the linked list and both head and tail are made to point to this node at lines 11-13. To dequeue a node, a thread must wait at line 22 if head is null (the linked list is empty). Otherwise, the data in the head node is returned and head is moved to the next node.

As mentioned earlier, whenever a thread needs to execute a synchronized method, it needs to get the monitor lock. The keyword synchronized can also be used with any statement as synchronized (expr) statement. The expression expr must result in a reference to an object on evaluation. The semantics of the above construct is that the statement can be executed only when the thread has the lock for the object given by the expr. Thus a synchronized method

public synchronized void method() {

body() ;

}

can simply be viewed as a short form for

public void method() {

synchronized (this) {

body() ;

{

}

Just as nonstatic methods can be synchronized, so can the static methods. A synchronized static method results in a classwide lock.

Figure 3.13: Dining philosopher using monitors

Figure 3.14: Linked list

One also needs to be careful with inheritance. When an extended class overrides a synchronized method with an unsynchronized method, the method of the original class stays synchronized. Thus, any call to super.method() will result in synchronization.

3.5 Dangers of Deadlocks

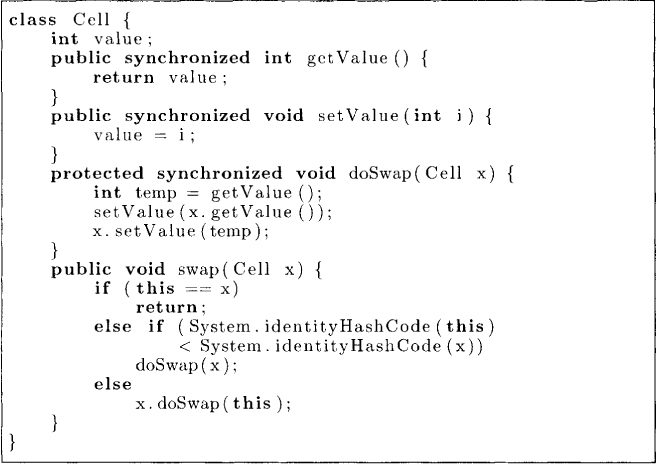

Since every synchronized call requires a lock, a programmer who is not careful can introduce deadlocks. For example, consider the following class that allows a cell to be swapped with the other cell. An object of class BCell provides three methods: getValue, setValue and swap. Although the implementation appears correct at first glance, it suffers from deadlock. Assume that we have two objects, p and q, as instances of class BCell. What happens if a thread t1 invokes p.swap(q) and another thread, say, t2, invokes q.swap(p) concurrently? Thread t1 acquires the lock for the monitor object p and t2 acquires the lock for the monitor object q. Now, thread t1 invokes q.getValue() as part of the swap method. This invocation has to wait because object q is locked by t2. Similarly, t2 has to wait for the lock for p, and we have a deadlock!

class BCell { // can result in deadlocks

int value;

public synchronized int getvalue () {

return value ;

}

public synchronized void setvalue ( int i ) {

value = i ;

}

public synchronized void swap(BCel1 x) {

int temp = getvalue ();

setvalue (x. getvalue ());

x. setvalue (temp);

}

}

The program that avoids the deadlock is given below. It employs a frequently used strategy of totally ordering all the objects in a system and then acquiring locks only in increasing order. In this program, both p.swap(q) and q.swap(p) result in either p.doSwap(q) or q.doSwap(p), depending on the identityHashCode value of the objects p and q.

Some other useful methods in Java Thread class are as follows:

1. The interrupt() method allows a thread to be interrupted. If thread t1 calls t2.interrupt(), then t2 gets an InterruptedException.

2. The yield() method allows a thread to yield the CPU to other threads temporarily. It does not require any interaction with other threads, and a program without yield() would be functionally equivalent to yield() call. A thread may choose to yield() if it is waiting for some data to become available from say InputStream.

3. The method holdsLock(x) returns true if the current thread holds the monitor lock of the object x.

3.6 Problems

3.1. Show that if the P() and V() operations of a binary semaphore are not executed atomically, then mutual exclusion may be violated.

3.2. Show that a counting semaphore can be implemented using binary semaphores. (Hint: Use a shared variable of type integer and two binary semaphores)

3.3. Give a starvation-free solution to the reader-writer problem using semaphores.

3.4. The following problem is known as the sleeping barber problem. There is one thread called barber. The barber cuts the hair of any waiting customer. If there is no customer, the barber goes to sleep. There are multiple customer threads. A customer waits for the barber if there is any chair left in the barber room. Otherwise, the customer leaves immediately. If there is a chair available, then the customer occupies it. If the barber is sleeping, then the customer wakes the barber. Assume that there are n chairs in the barber shop. Write a Java class for SleepingBarber using semaphores that allows the following methods:

runBarber() // called by the barber thread; runs forever

hairCut() // called by the customer thread

How will you extend your algorithm to work for the barber shop with multiple barbers.

3.5. Give a deadlock-free solution to the dining philosophers problem using semaphores. Assume that one of the philosophers picks forks in a different order.

3.6. Assume that there are three threads—P, Q, and R—that repeatedly print “P”, “Q”, and “R’ respectively. Use semaphores to coordinate the printing such that the number of “R” printed is always less than or equal to the sum of “P” and “Q” printed.

3.7. Write a monitor for the sleeping barber problem.

3.8. Show how condition variables of a monitor can be implemented in Java.

3.9. Write a monitor class counter that allows a process to sleep until the counter reaches a certain value. The counter class allows two operations: increment() and sleepUntil(int x).

3.10. Write a Java class for BoundedCounter with a minimum and a maximum value. This class provides two methods: increment() and decrement(). Decrement at the minimum value and increment at the maximum value result in the calling thread waiting until the operation can be performed without violating the bounds on the counter.

3.7 Bibliographic Remarks

The semaphores were introduced by Dijkstra [Dij65a]. The monitor concept was introduced by Brinch Hansen [Han72] and the Hoare-style monitor, by Hoare [Hoa74]. Solutions to classical synchronization problems in Java are also discussed in the book by Hartley [Har98]. The example of deadlock and its resolution based on resource ordering is discussed in the book by Lea [Lea99].