Once a search service application is configured, it needs data for indexing. In this recipe, we will add a new content source for our search service application.

For this recipe, we need the search service application created in the Provisioning a search service application recipe.

Follow these steps to add a new content source to our search service application:

- Navigate to Central Administration in your preferred web browser.

- Click on Manage service applications from the Application Management section.

- Click on the Search Service Application link we created in the previous recipe.

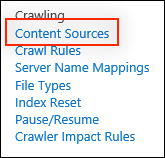

- In the quick launch, click on Content Sources from the Crawling section as shown in the following screenshot:

- Click on New Content Source.

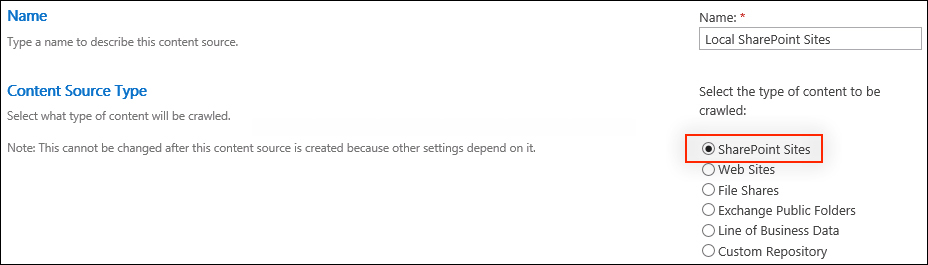

- Provide a name for the content source in the Name field, such as Local SharePoint Sites.

- Select SharePoint Sites for the Content Source Type as shown in the following screenshot:

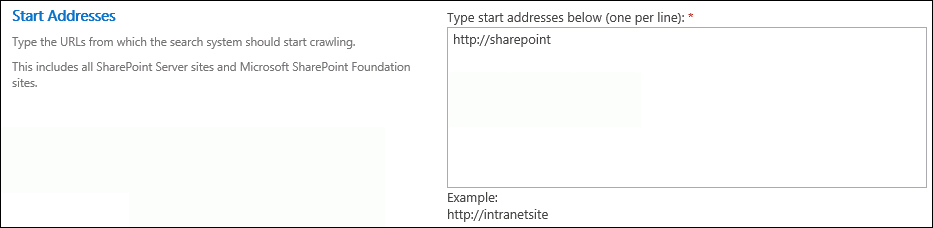

- Add the URL of the root SharePoint site to index, for instance http://sharepoint/, to the Start Addresses section. Multiple SharePoint sites may be indexed as a single content source. To add more SharePoint sites, enter each URL on a new line in the Start Addresses field.

- Select Crawl Everything Under the Hostname for Each Start Address in the Crawl Settings section.

Tip

The content source can be configured to index only the site collection that matches each URL provided, or to index everything under the host name of that URL. For instance, with Crawl Everything Under the Hostname for Each Start Address selected, adding http://sharepoint/ to the Start Addresses field also indexes http://sharepoint/site.

- Select Enable Continuous Crawl in the Crawl Schedules section.

- Click on OK.
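To confirm the result of the steps above from PowerShell, the new content source can be retrieved and its settings inspected. The following is a minimal sketch, assuming it runs on a farm server where the SharePoint 2013 PowerShell snap-in is available, and that the service application and content source use the names from this recipe (Search Service Application and Local SharePoint Sites). Adjust both names to match your farm.

```powershell
# Load the SharePoint cmdlets if this is not the SharePoint 2013 Management Shell
if ($null -eq (Get-PSSnapin Microsoft.SharePoint.PowerShell -ErrorAction SilentlyContinue)) {
    Add-PSSnapin Microsoft.SharePoint.PowerShell
}

# Assumed names: change these to match your own service application and content source
$ssaName = "Search Service Application"
$csName  = "Local SharePoint Sites"

$ssa = Get-SPEnterpriseSearchServiceApplication $ssaName
$cs  = Get-SPEnterpriseSearchCrawlContentSource -SearchApplication $ssa -Identity $csName

# Display the settings chosen on the New Content Source page
$cs | Format-List Name, StartAddresses, SharePointCrawlBehavior, EnableContinuousCrawls, CrawlStatus
```

If the steps were followed as written, SharePointCrawlBehavior should read CrawlVirtualServers (the PowerShell name for Crawl Everything Under the Hostname for Each Start Address) and EnableContinuousCrawls should be True.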

Search crawls in SharePoint are conducted on a per-content-source basis. Content sources define what is crawled and how often. They can include SharePoint sites, websites, file shares, Microsoft Exchange public folders, line-of-business data from Business Data Connectivity service connections, and custom repositories. Each content source can include multiple start addresses of the same content type. For instance, a content source could include multiple, different websites. A content source, however, cannot include both a website and a line-of-business data connection.
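To illustrate one content source holding several start addresses of the same type, the following minimal sketch creates a website content source with two start addresses. The content source name Partner Websites and both URLs are hypothetical placeholders, and the search service application name is assumed to match this recipe.

```powershell
# Load the SharePoint cmdlets if this is not the SharePoint 2013 Management Shell
if ($null -eq (Get-PSSnapin Microsoft.SharePoint.PowerShell -ErrorAction SilentlyContinue)) {
    Add-PSSnapin Microsoft.SharePoint.PowerShell
}

$ssa = Get-SPEnterpriseSearchServiceApplication "Search Service Application"

# Hypothetical websites: both are of the same type (Web), so they can share one content source.
# -StartAddresses takes a comma-separated list of URLs.
$webCs = New-SPEnterpriseSearchCrawlContentSource -SearchApplication $ssa `
    -Name "Partner Websites" `
    -Type Web `
    -StartAddresses "http://www.contoso.com/,http://www.fabrikam.com/"

# Line-of-business data (-Type Business) would need its own, separate content source.
$webCs | Format-List Name, StartAddresses
```

The -Type value is fixed when the content source is created, which is why mixing websites and line-of-business connections requires two content sources.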

Content sources can also be created and configured with PowerShell.

Follow these steps to configure a content source using PowerShell:

- Assign our search service application to a variable using the `Get-SPEnterpriseSearchServiceApplication` cmdlet:

  ```powershell
  $ssa = Get-SPEnterpriseSearchServiceApplication "Search Service Application"
  ```

- Create a new content source with the `New-SPEnterpriseSearchCrawlContentSource` cmdlet and assign it to a variable:

  ```powershell
  $cs = New-SPEnterpriseSearchCrawlContentSource -Name "SharePoint Sites" -SearchApplication $ssa -Type SharePoint -SharePointCrawlBehavior CrawlVirtualServers -StartAddresses "http://sharepoint/"
  ```

  The `SharePointCrawlBehavior` parameter is the equivalent of the Crawl Settings section in the web interface. `CrawlVirtualServers` instructs the indexer to index all content under the URL provided, and `CrawlSites` instructs the indexer to index only the site collection at the URL provided.

- Enable Continuous Crawl and then update the content source using the following commands:

  ```powershell
  $cs.EnableContinuousCrawls = $true
  $cs.Update()
  ```
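The PowerShell steps above can be combined into one script that is safe to run more than once: it creates the content source only when no content source with that name exists yet, then enables continuous crawls. This is a sketch under the same assumptions as the steps above (service application name, content source name, and start address); the variable names are illustrative, and setting `$crawlBehavior` to `CrawlSites` would limit the crawl to the site collection at the start address.

```powershell
# Load the SharePoint cmdlets if this is not the SharePoint 2013 Management Shell
if ($null -eq (Get-PSSnapin Microsoft.SharePoint.PowerShell -ErrorAction SilentlyContinue)) {
    Add-PSSnapin Microsoft.SharePoint.PowerShell
}

# Values taken from the steps above; adjust for your farm
$ssaName       = "Search Service Application"
$csName        = "SharePoint Sites"
$startAddress  = "http://sharepoint/"
$crawlBehavior = "CrawlVirtualServers"   # or "CrawlSites" for the site collection only

$ssa = Get-SPEnterpriseSearchServiceApplication $ssaName

# Look for an existing content source with the same name
$cs = Get-SPEnterpriseSearchCrawlContentSource -SearchApplication $ssa |
    Where-Object { $_.Name -eq $csName }

if ($null -eq $cs) {
    # Create it only when it does not exist yet
    $cs = New-SPEnterpriseSearchCrawlContentSource -Name $csName -SearchApplication $ssa `
        -Type SharePoint -SharePointCrawlBehavior $crawlBehavior -StartAddresses $startAddress
}

# Continuous crawls apply only to SharePoint Sites content sources
$cs.EnableContinuousCrawls = $true
$cs.Update()
```

Checking by name before calling `New-SPEnterpriseSearchCrawlContentSource` keeps a second run from failing on a duplicate name, while the final two lines reapply the continuous crawl setting either way.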

For more information, see the following resources:

- The Add, Edit, or Delete a content source in SharePoint 2013 article on TechNet at http://technet.microsoft.com/en-us/library/jj219808.aspx

- The Get-SPEnterpriseSearchServiceApplication topic on TechNet at http://technet.microsoft.com/en-us/library/ff608050.aspx

- The New-SPEnterpriseSearchCrawlContentSource topic on TechNet at http://technet.microsoft.com/en-us/library/ff607867.aspx