Chapter 3. Dictionary Data Structures

To improve the efficiency of A*, this chapter discusses different dictionary data structures. Priority queues are provided for integer and totally ordered keys. For duplicate elimination, hashing is studied, including provably constant access time. Moreover, maintaining partial states in the form of substrings or subsets is considered.

Keywords: priority queue, 1-level bucket, 2-level bucket, Radix heap, van-Emde-Boas queue, heap, pairing heap, weak-heap, Fibonacci heap, relaxed weak queue, hash function, hash table, incremental hashing, universal hashing, perfect hashing, ranking and unranking, cuckoo hashing, suffix list, bitstate hashing, hash compaction, collapse compression, subset dictionary, unlimited branching tree, substring dictionary, suffix tree.

The exploration efficiency of algorithms like A* is often measured with respect to the number of expanded/generated problem graph nodes, but the actual runtimes depend crucially on how the Open and Closed lists are implemented. In this chapter we look closer at efficient data structures to represent these sets.

For the Open list, different options for implementing a priority queue data structure are considered. We distinguish between integer and general edge costs, and introduce bucket and advanced heap implementations.

For efficient duplicate detection and removal we also look at hash dictionaries. We devise a variety of hash functions that can be computed efficiently and that minimize the number of collisions by approximating uniformly distributed addresses, even if the set of chosen keys is not (which is almost always the case). Next we explore memory-saving dictionaries, the space requirements of which come close to the information-theoretic lower bound and provide a treatment of approximate dictionaries.

Subset dictionaries address the problem of finding partial state vectors in a set. Searching the set is referred to as the Subset Query or the Containment Query problem. The two problems are equivalent to the Partial Match retrieval problem for retrieving a partially specified input query word from a file of k-letter words with k being fixed. A simple example is the search for a word in a crossword puzzle. In a state space search, subset dictionaries are important to store partial state vectors like dead-end patterns in Sokoban that generalize pruning rules (see Ch. 10).

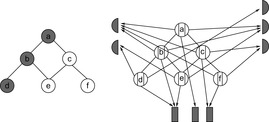

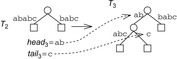

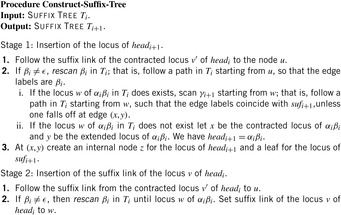

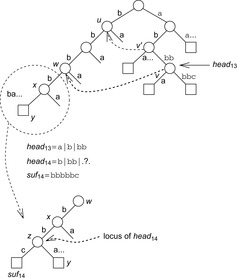

In state space search, string dictionaries are helpful to exclude a set of forbidden action sequences, like UD or RL in the $(n^2 - 1)$-Puzzle, from being generated. Such sets of excluded words are formed by concatenating move labels on paths that can be learned and generalized (see Ch. 10). Therefore, string dictionaries provide an option to detect and eliminate duplicates without hashing and can help to reduce the effort of storing all visited states. Besides the efficient insertion (and deletion) of strings, the task of determining whether a query string is a substring of a stored string is most important and has to be executed very efficiently. The main application of string dictionaries is web search engines. The most flexible data structure for efficiently solving this Dynamic Dictionary Matching problem is the Generalized Suffix Tree.

3.1. Priority Queues

When applying the A* algorithm to explore a problem graph, we rank all generated but not expanded nodes $u$ in list Open by their priority $f(u) = g(u) + h(u)$. As basic operations we need to find the element with the minimal $f$-value, to insert a node together with its $f$-value, and to update the structure if a node obtains a better $f$-value due to a shorter path. An abstract data structure for the three operations Insert, DeleteMin, and DecreaseKey is a priority queue.

In Dijkstra's original implementation, the Open list is a plain array of nodes together with a bitvector indicating if elements are currently open or not. The minimum is found through a complete scan, yielding quadratic execution time in the number of nodes. More refined data structures have been developed since, which are suitable for different classes of weight functions. We will discuss integer and general weights; for integer cost we look at bucket structures and for general weights we consider refined heap implementations.

3.1.1. Bucket Data Structures

In many applications, edge weights can only be positive integers (sometimes for fractional values it is also possible and beneficial to achieve this by rescaling). As a general assumption we state that the difference between the largest key and the smallest key is less than or equal to a constant C.

Buckets

A simple implementation for the priority queues is a 1-Level Bucket. This priority queue implementation consists of an array of C + 1 buckets, each of which is the first link in a linked list of elements. With the array we associate the three numbers minValue, minPos, and n: minValue denotes the smallest f value in the queue, minPos fixes the index of the bucket with the smallest key, and n is the number of stored elements. The i th bucket  contains all elements v with

contains all elements v with  ,

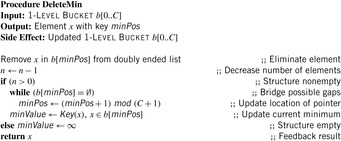

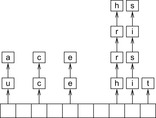

,  . Figure 3.1 illustrates an example for the set of keys {16, 16, 18, 20, 23, 25}. The implementations for the four main priority queue operations Initialize, Insert, DeleteMin, and DecreaseKey are shown in Algorithm 3.1, Algorithm 3.2, Algorithm 3.3 and Algorithm 3.4.

. Figure 3.1 illustrates an example for the set of keys {16, 16, 18, 20, 23, 25}. The implementations for the four main priority queue operations Initialize, Insert, DeleteMin, and DecreaseKey are shown in Algorithm 3.1, Algorithm 3.2, Algorithm 3.3 and Algorithm 3.4.

With doubly linked lists (each element has a predecessor and successor pointer) we achieve constant runtimes for the Insert and DecreaseKey operations, while the DeleteMin operation consumes $O(C)$ time in the worst case for searching a nonempty bucket. For DecreaseKey we generally assume that a pointer to the element to be updated is available. Consequently, Dijkstra's algorithm and A* run in $O(e + nC)$ time, where $e$ is the number of edges (generated) and $n$ is the number of nodes (expanded).

Given that the $f$-value can often be bounded by a constant $f_{\max}$ in a practical state space search, authors usually omit the modulo operation mod $(C + 1)$, which reduces the space for array $b$ to $O(C)$, and take a plain array addressed by $f$ instead. If $f_{\max}$ is not known in advance, a doubling strategy can be applied.
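The four operations can be sketched in a few lines of Python. This is a minimal illustration rather than a transcription of Algorithms 3.1 through 3.4: the class name `OneLevelBucket`, the `key` dictionary, and the use of Python sets in place of doubly linked lists are assumptions made for brevity. An element with key k is stored in bucket k mod (C + 1), and the caller is assumed to keep all stored keys within a range of C.

```python
import math


class OneLevelBucket:
    """1-Level Bucket sketch for integer keys whose spread is at most C."""

    def __init__(self, C):
        self.C = C
        # Sets stand in for the doubly linked lists (assumption of this sketch).
        self.b = [set() for _ in range(C + 1)]
        self.key = {}              # item -> current key
        self.min_value = math.inf  # smallest key in the queue
        self.min_pos = 0           # index of the bucket holding min_value
        self.n = 0                 # number of stored elements

    def insert(self, item, k):
        self.n += 1
        if k < self.min_value:
            self.min_value = k
            self.min_pos = k % (self.C + 1)
        self.b[k % (self.C + 1)].add(item)
        self.key[item] = k

    def delete_min(self):
        item = self.b[self.min_pos].pop()
        k = self.key.pop(item)
        self.n -= 1
        if self.n > 0:
            # Worst case O(C): walk circularly to the next nonempty bucket.
            while not self.b[self.min_pos]:
                self.min_pos = (self.min_pos + 1) % (self.C + 1)
                self.min_value += 1
        else:
            self.min_value = math.inf
        return item, k

    def decrease_key(self, item, k):
        # Constant time: unlink from the old bucket, then reinsert.
        old = self.key.pop(item)
        self.b[old % (self.C + 1)].discard(item)
        self.n -= 1
        self.insert(item, k)
```

Items must be hashable and distinct in this sketch; a linked-list implementation would instead keep a pointer to each element's list cell.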

Multilayered Buckets

In state space search, we often have edge weights that are of moderate size, say realized by a 32-bit integer for which a bucket array $b$ of size $2^{32}$ is too large, whereas $2^{16}$ can be afforded.

The space complexity and the worst-case time complexity $O(C)$ for DeleteMin can be reduced to an amortized complexity of $O(\sqrt{C})$ operations by using a 2-Level Bucket data structure with one top and one bottom level, both of length $\lceil \sqrt{C + 1} \rceil + 1$.

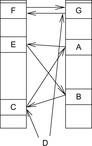

In this structure we have two pointers for the minimum position, minPosTop and minPosBottom, and a number nbot of bottom elements. Although each bucket in the bottom array holds a list of elements with the same key as before, the top layer points to lower-level arrays. If after a DeleteMin operation that yields a minimum key $k$ no insertion is performed with a key less than $k$ (as is the case for a consistent heuristic in A*), it is sufficient to maintain only one bottom bucket (at minPosTop), and collect elements in higher buckets in the top level; the lower-level buckets can be created only when the current bucket at minPosTop becomes empty and minPosTop moves on to a higher one. One advantage is that in the case of maximum distance between keys, DeleteMin has to inspect only the $\lceil \sqrt{C + 1} \rceil + 1$ buckets of the top level; moreover, it saves space if only a small fraction of the available range $C$ is actually filled.

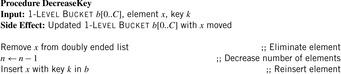

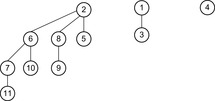

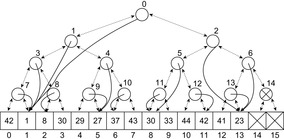

As an example, take $C = 80$, minPosTop = 2, minPosBottom = 1, and the set of element keys $\{7, 7, 11, 13, 26, 35, 48, 57, 63, 85, 86\}$. The intervals and elements in the top buckets are $b[2]: [6, 15] = \{7, 7, 11, 13\}$, $b[3]: [16, 25] = \emptyset$, $b[4]: [26, 35] = \{26, 35\}$, $b[5]: [36, 45] = \emptyset$, $b[6]: [46, 55] = \{48\}$, $b[7]: [56, 65] = \{57, 63\}$, $b[8]: [66, 75] = \emptyset$, $b[9]: [76, 85] = \{85\}$, $b[0]: [86, 95] = \{86\}$, and $b[1]: [96, 105] = \emptyset$. Bucket $b[2]$ is expanded with nonempty bottom buckets 1, 5, and 7 containing the elements 7, 11, and 13, respectively. Figure 3.2 illustrates the example.

Since DeleteMin reuses the bottom bucket in case it becomes empty, in some cases it is fast and in other cases it is slow. In our case of the 2-Level Bucket, let $\Phi_l$ be the number of elements in the top-level buckets for the $l$th operation; then DeleteMin uses $O(\sqrt{C} + m_l)$ time in the worst case, where $m_l$ is the number of elements that move from top to bottom. The term $O(\sqrt{C})$ is the worst-case distance passed by in the top level, and $m_l$ is the effort for the reassignment, whose cost is equivalent to the number of elements that move from top to bottom. Having to wait until all moved elements in the bottom layer are dealt with, the worst-case work is amortized over a longer time period. By amortization we have $O(\sqrt{C} + m_l + (\Phi_l - \Phi_{l-1})) = O(\sqrt{C})$ operations. Both operations Insert and DecreaseKey run in real and amortized constant time.
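The lazy refill of the bottom level can be sketched as follows. This is a simplified illustration under stated assumptions, not the book's algorithm: keys are nonnegative integers, no key is ever inserted below the start of the current bottom block (the monotone case mentioned above), the bottom block is aligned to multiples of the level length `L` (so the layout differs from the one in Figure 3.2), and Python sets replace linked lists. The names `TwoLevelBucket`, `base`, and `pos` are hypothetical.

```python
import math


class TwoLevelBucket:
    """2-Level Bucket sketch with one bottom array that is refilled lazily."""

    def __init__(self, C):
        self.L = math.isqrt(C) + 2        # equals ceil(sqrt(C + 1)) + 1
        self.top = [set() for _ in range(self.L)]
        self.bottom = [set() for _ in range(self.L)]
        self.key = {}                     # item -> key
        self.base = 0                     # smallest key covered by the bottom level
        self.pos = 0                      # scan position in the bottom level
        self.n = 0

    def insert(self, item, k):
        if self.n == 0:                   # empty structure: realign the bottom block
            self.base, self.pos = (k // self.L) * self.L, 0
        assert k >= self.base, "sketch assumes monotone insertions"
        self.key[item] = k
        self.n += 1
        if k < self.base + self.L:
            self.bottom[k - self.base].add(item)
            self.pos = min(self.pos, k - self.base)
        else:
            self.top[(k // self.L) % self.L].add(item)

    def _remove(self, item):
        k = self.key.pop(item)
        self.n -= 1
        if k < self.base + self.L:
            self.bottom[k - self.base].discard(item)
        else:
            self.top[(k // self.L) % self.L].discard(item)
        return k

    def decrease_key(self, item, k):
        self._remove(item)
        self.insert(item, k)

    def delete_min(self):
        if self.n == 0:
            raise IndexError("delete_min from an empty queue")
        while self.pos < self.L and not self.bottom[self.pos]:
            self.pos += 1
        if self.pos == self.L:
            # Bottom level exhausted: find the next nonempty top bucket ...
            block, step = self.base // self.L, 1
            while not self.top[(block + step) % self.L]:
                step += 1
            slot = (block + step) % self.L
            self.base = (block + step) * self.L
            # ... and move its m_l elements down to the bottom level.
            for item in self.top[slot]:
                self.bottom[self.key[item] - self.base].add(item)
            self.top[slot] = set()
            self.pos = 0
            while not self.bottom[self.pos]:
                self.pos += 1
        item = next(iter(self.bottom[self.pos]))
        return item, self._remove(item)
```

The refill loop is where the amortization argument applies: the scan over the top level costs at most `L` steps, and each moved element is charged to its earlier insertion into the top level.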

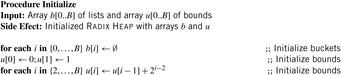

Radix Heaps

For achieving an even better amortized runtime, namely $O(\lg C)$, a so-called Radix Heap maintains a list of $\lceil \lg (C + 1) \rceil + 2$ buckets of sizes 1, 1, 2, 4, 8, 16, and so on (see Fig. 3.3). The main difference to layered buckets is to use buckets of exponentially increasing sizes instead of a hierarchy. Therefore, only $O(\lg C)$ buckets are needed.

For the implementation we maintain buckets $b[0..B]$ and bounds $u[0..B + 1]$ with $B = \lceil \lg (C + 1) \rceil + 1$ and $u[B + 1] = \infty$. Furthermore, the bucket number $\phi(k)$ denotes the index of the actual bucket for key $k$. The invariants of the algorithms are (1) all keys in $b[i]$ are in $[u[i], u[i + 1])$; (2) $u[1] = u[0] + 1$; and (3) for all $i \in \{1, \ldots, B - 1\}$ we have $0 \le u[i + 1] - u[i] \le 2^{i-1}$.

Figure 3.3

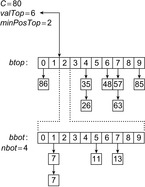

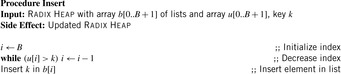

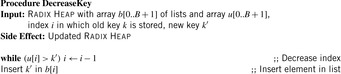

The operations are as follows. Initialize generates an empty Radix Heap according to the invariants (2) and (3). The pseudo code is shown in Algorithm 3.5.

To insert an element with key $k$, in a linear scan a bucket $i$ is searched, starting from the largest one ($i = B$). Then the new element with key $k$ is inserted into the bucket $b[i]$ with $i = \max\{j \mid u[j] \le k\}$. The pseudo-code implementation is depicted in Algorithm 3.6.

For DecreaseKey, bucket $i$ for an element with key $k$ is searched linearly. The difference is that the search starts from the actual bucket $i$ for key $k$ as stored in $\phi(k)$. The implementation is shown in Algorithm 3.7.

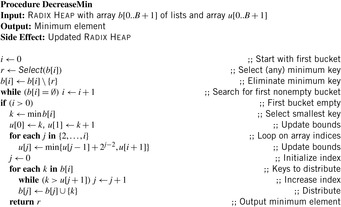

For DeleteMin we first search for the first nonempty bucket $i = \min\{j \mid b[j] \ne \emptyset\}$ and identify the element with minimum key $k$ therein. If the smallest bucket contains an element it is returned. For the other case $u[0]$ is set to $k$ and the bucket bounds are adjusted according to the invariants; that is, $u[1]$ is set to $k + 1$ and for $2 \le j \le i$ bound $u[j]$ is set to $\min\{u[j - 1] + 2^{j-2}, u[i + 1]\}$. Lastly, the elements of $b[i]$ are distributed to buckets $b[0], b[1], \ldots, b[i - 1]$ and the minimum element is extracted from the nonempty smallest bucket. The implementation is shown in Algorithm 3.8.

As a short example for DeleteMin consider the following configuration (written as $[u[i]] : b[i]$) of a Radix Heap: $[0] : \{0\}$, $[1] : \emptyset$, $[2] : \emptyset$, $[4] : \{6, 7\}$, $[8] : \emptyset$, $[16] : \emptyset$ (see Fig. 3.4). Extracting key 0 from bucket 1 yields $[6] : \{6, 7\}$, $[7] : \emptyset$, $[8] : \emptyset$, $[8] : \emptyset$, $[8] : \emptyset$, $[16] : \emptyset$. Now, keys 6 and 7 are distributed. If $i \ge 1$ then the interval size of $b[i]$ is at most $2^{i-1}$. In $b[0], \ldots, b[i - 1]$ we have $i$ buckets available. Since all keys in $b[i]$ are in $[k, \min\{k + 2^{i-1} - 1, u[i + 1] - 1\}]$, all elements fit into $b[0], \ldots, b[i - 1]$.

The amortized analysis of the costs of maintaining a Radix Heap uses the potential $\Phi_l = \sum_{x} \phi_l(x)$ for operation $l$, where the sum ranges over all stored elements $x$ and $\phi_l(x)$ is the bucket number of $x$ after operation $l$. We have that Initialize runs in $O(B)$, and Insert runs in $O(B)$. DecreaseKey has an amortized time complexity in $O(1)$, and DeleteMin runs in time $O(1)$ amortized. In total we have a running time of $O(m \lg C + l)$ for $m$ Insert and $l$ DecreaseKey and ExtractMin operations.
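The bound updates and the redistribution step are easier to follow in code. The sketch below is an illustration under assumptions, not a copy of Algorithms 3.5 through 3.8: it takes $B = \lceil \lg (C + 1) \rceil + 1$ with buckets `b[0..B]` and bounds `u[0..B+1]`, treats bucket $i$ as covering the half-open range from `u[i]` to `u[i+1]`, and uses Python sets and the dictionaries `key` and `phi` (hypothetical names) for bookkeeping. Keys are assumed to be inserted monotonically, that is, never below `u[0]`.

```python
import math


class RadixHeap:
    """Radix Heap sketch with buckets b[0..B] and bounds u[0..B+1]."""

    def __init__(self, C):
        self.B = C.bit_length() + 1           # ceil(lg(C + 1)) + 1
        self.b = [set() for _ in range(self.B + 1)]
        self.u = [0, 1] + [0] * (self.B - 1) + [math.inf]
        for i in range(2, self.B + 1):
            self.u[i] = self.u[i - 1] + 2 ** (i - 2)
        self.key = {}                         # item -> key
        self.phi = {}                         # item -> current bucket index

    def _place(self, item, k, start):
        assert k >= self.u[0], "sketch assumes monotone keys"
        i = start
        while self.u[i] > k:                  # linear scan toward smaller buckets
            i -= 1
        self.b[i].add(item)
        self.key[item], self.phi[item] = k, i

    def insert(self, item, k):
        self._place(item, k, self.B)

    def decrease_key(self, item, k):
        i = self.phi[item]
        self.b[i].discard(item)
        self._place(item, k, i)               # the scan starts at the old bucket

    def delete_min(self):
        i = next((j for j in range(self.B + 1) if self.b[j]), None)
        if i is None:
            raise IndexError("delete_min from an empty heap")
        if i > 0:
            k = min(self.key[x] for x in self.b[i])
            self.u[0], self.u[1] = k, k + 1
            for j in range(2, i + 1):
                self.u[j] = min(self.u[j - 1] + 2 ** (j - 2), self.u[i + 1])
            moved, self.b[i] = self.b[i], set()
            for x in moved:                   # every element drops to a smaller bucket
                self._place(x, self.key[x], i - 1)
        item = self.b[0].pop()
        del self.phi[item]
        return item, self.key.pop(item)
```

Because every redistributed element moves to a strictly smaller bucket, each element can be moved at most `B` times, which is the source of the $O(\lg C)$ charge per insertion.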

Van Emde Boas Priority Queues

A Van Emde Boas Priority Queue is efficient when $n > \lg N$ for a universe $U = \{0, \ldots, N - 1\}$ of keys. In this implementation, all priority queue operations reduce to successor computation, which takes $O(\lg \lg N)$ time. The space requirements are $O(N \lg \lg N)$.

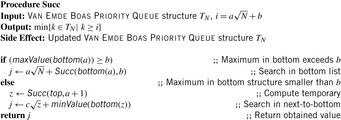

We start by considering a data structure $T_N$ on the elements $\{0, \ldots, N - 1\}$ defining only three operations: Insert($x$), Delete($x$), and Succ($x$), where the first two have obvious semantics and the last one returns the smallest item in $T_N$ that is larger than or equal to $x$. All priority queue operations use the recursive operation Succ($x$) that finds the smallest $y$ in the structure $T_N$ with $y \ge x$. For the priority queue data structure, DeleteMin is simply implemented as Delete(Succ(0)), assuming positive key values, and DecreaseKey is a combination of a Delete and an Insert operation.

Using an ordinary bitvector, Insert and Delete are constant-time operations, but Succ is inefficient. Using balanced trees, all operations run in time $O(\lg N)$. A better solution is to implement a recursive representation with $\sqrt{N}$ distinct versions of $T_{\sqrt{N}}$. The latter trees are called bottom, and an element $i = a \cdot \sqrt{N} + b$ is represented by the entry $b$ in bottom($a$). The conversion from $i$ to $a$ and $b$ in bitvector representation is simple, since $a$ and $b$ refer to the most and least significant half of the bits. Moreover, we have another version $T_{\sqrt{N}}$ called top that contains $a$ only if bottom($a$) is nonempty.

Algorithm 3.9 depicts a pseudo-code implementation of Succ. The recursion for the runtime is $T(N) = T(\sqrt{N}) + O(1)$. If we set $N = 2^k$ then $T(2^k) = T(2^{k/2}) + O(1)$ so that $T(2^k) = O(\lg k)$ and $T(N) = O(\lg \lg N)$. The subsequent implementations for Insert and Delete are shown in Algorithm 3.10 and Algorithm 3.11. Inserting element $x$ in $T_N$ locates a possible place by first seeking the successor Succ($x$) of $x$. This leads to a running time of $O(\lg \lg N)$. Deletion uses the doubly linked structure and the successor relation. It also runs in $O(\lg \lg N)$ time.

A Van Emde Boas Priority Queue $k$-structure is recursively defined. Consider the example $k = 4$ (implying $N = 16$) with the set of five elements $S = \{2, 3, 7, 10, 13\}$. Set top is a 2-structure on $\{0, 1, 2, 3\}$ based on the set of possible prefixes in the binary encoding of the values in $S$. Set bottom is a vector of 2-structures (based on the suffixes of the binary state encodings in $S$) with bottom$(0) = \{2, 3\}$, bottom$(1) = \{3\}$, bottom$(2) = \{2\}$, and bottom$(3) = \{1\}$, since $(2)_2 = 00|10$, $(3)_2 = 00|11$, $(7)_2 = 01|11$, $(10)_2 = 10|10$, and $(13)_2 = 11|01$. Representing top as a 2-structure implies $k = 2$ and $N = 4$, such that the representation of $\{0, 1, 2, 3\}$ with $(0)_2 = 0|0$, $(1)_2 = 0|1$, $(2)_2 = 1|0$, and $(3)_2 = 1|1$ leads to a sub-top structure on $\{0, 1\}$ and two sub-bottom structures bottom$(0) = \{0, 1\}$ and bottom$(1) = \{0, 1\}$.

To realize the structures in practice, a mixed representation of the element set is appropriate. On one hand, a doubly connected linked list contains the elements sorted according to the values they have in the universe. On the other hand, a bitvector $b$ is devised, with bit $i$ denoting whether an element with value $i$ is contained in the list. The two structures are connected via links that point from each nonzero bit to an item in the doubly connected list. The mixed representation (bitvector and doubly ended leaf list) for the earlier 4-structure (without unrolling the references to the single top and four bottom structures) is shown in Figure 3.5.
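The top/bottom recursion can be sketched compactly in Python. Note the swapped-in technique: instead of the mixed bitvector and linked-list representation described above, this sketch uses the common variant that stores the minimum and maximum in every node and allocates bottom structures lazily in a dictionary, so it is not the implementation of Algorithms 3.9 through 3.11. The class name `VEB`, the attribute `summary` (playing the role of top), and the lazy allocation are assumptions of this illustration. A $k$-structure covers the universe $\{0, \ldots, 2^k - 1\}$, and `delete` assumes that the element is present.

```python
class VEB:
    """Van Emde Boas k-structure sketch over the universe {0, ..., 2**k - 1}."""

    def __init__(self, k):
        self.k, self.min, self.max = k, None, None
        if k > 1:
            self.lo = k // 2                  # number of low-order bits (b)
            self.summary = VEB(k - self.lo)   # "top": indices of nonempty bottoms
            self.bottom = {}                  # a -> VEB(lo), created lazily

    def _split(self, x):
        return x >> self.lo, x & ((1 << self.lo) - 1)

    def insert(self, x):
        if self.min is None:
            self.min = self.max = x
            return
        if x == self.min:
            return
        if x < self.min:
            x, self.min = self.min, x         # the minimum is kept outside the bottoms
        if self.k > 1:
            a, b = self._split(x)
            c = self.bottom.get(a)
            if c is None:
                c = self.bottom[a] = VEB(self.lo)
            if c.min is None:
                self.summary.insert(a)
            c.insert(b)
        if x > self.max:
            self.max = x

    def successor(self, x):
        """Smallest stored element strictly larger than x, or None."""
        if self.min is not None and x < self.min:
            return self.min
        if self.k == 1:
            return 1 if x == 0 and self.max == 1 else None
        a, b = self._split(x)
        c = self.bottom.get(a)
        if c is not None and c.max is not None and b < c.max:
            return (a << self.lo) | c.successor(b)
        nxt = self.summary.successor(a)
        if nxt is None:
            return None
        return (nxt << self.lo) | self.bottom[nxt].min

    def succ(self, x):
        """Smallest stored element y with y >= x, as in the text."""
        return self.min if x == 0 else self.successor(x - 1)

    def delete(self, x):
        if self.min == self.max:
            self.min = self.max = None
            return
        if self.k == 1:
            self.min = self.max = 1 - x
            return
        if x == self.min:                     # pull the next element up as new minimum
            a = self.summary.min
            x = (a << self.lo) | self.bottom[a].min
            self.min = x
        a, b = self._split(x)
        c = self.bottom[a]
        c.delete(b)
        if c.min is None:
            self.summary.delete(a)
            if x == self.max:
                s = self.summary.max
                self.max = self.min if s is None else (s << self.lo) | self.bottom[s].max
        elif x == self.max:
            self.max = (a << self.lo) | c.max

    def delete_min(self):
        m = self.succ(0)                      # DeleteMin = Delete(Succ(0))
        self.delete(m)
        return m
```

Each operation makes at most one non-trivial recursive call on a structure with half as many key bits, which matches the recurrence $T(2^k) = T(2^{k/2}) + O(1)$.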

3.1.2. Heap Data Structures

Let us now assume that we can have arbitrary (e.g., floating-point) keys. Each operation in a priority queue then divides into compare-exchange steps. For this case, the most common implementation of a priority queue (besides a plain list) is a Binary Search Tree or a Heap.

Binary Search Trees

A Binary Search Tree is a binary tree implementation of a priority queue in which each internal node $x$ stores an element. The keys in the left subtree of $x$ are smaller than or equal to the one of $x$, and keys in the right subtree of $x$ are larger than the one of $x$. Operations on a binary search tree take time proportional to the height of the tree. If the tree is a linear chain of nodes, a linear number of comparisons might be induced in the worst case. If the tree is balanced, a logarithmic number of operations for insertion and deletion suffices. Because balancing can be involved, in the following we discuss more flexible and faster data structures for implementing a priority queue.

Heaps

A Heap is a complete binary tree; that is, all levels are completely filled except possibly the lowest one, which is filled from the left. This means that the depth of the tree (and every path length from the root to a leaf) is $O(\lg n)$. Each internal node $v$ satisfies the heap property: The key of $v$ is smaller than or equal to the key of either of its two children.

Complete binary trees can be embedded in an array $A$ as follows. The elements are stored levelwise from left to right in ascending cells of the array; $A[1]$ is the root; the left and right child of $A[i]$ are $A[2i]$ and $A[2i + 1]$, respectively; and its parent is $A[\lfloor i/2 \rfloor]$. On most current microprocessors, the operation of multiplication by two can be realized as a single shift instruction. An example of a Heap (including its array embedding) is provided in Figure 3.6.

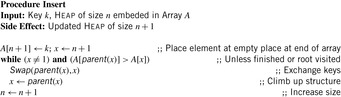

To insert an element into a Heap, we first tentatively place it in the next available leaf. As this might violate the heap property, we restore the heap property by swapping the element with its parent, if the parent's key is larger; then we check for the grandparent key, and so on, until the heap property is valid or the element reaches the root. Thus, Insert needs at most $O(\lg n)$ time. In the array embedding we start with the last unused index $n + 1$ in array $A$ and place key $k$ into $A[n + 1]$. Then, we climb up the ancestors until a correct Heap is constructed. An implementation is provided in Algorithm 3.12.

DecreaseKey starts at the node x that has changed its value. This reference has to be maintained with the elements that are stored. Algorithm 3.13 shows a possible implementation.

Extracting the minimum key is particularly easy: It is always stored at the root. However, we have to delete it and guarantee the heap property afterward. First, we tentatively fill the gap at the root with the last element on the bottom level of the tree. Then we restore the heap property using two comparisons per node while going down. This operation is referred to as SiftDown. That is, at a node we determine the minimum of the current key and that of the children; if the node is actually the minimum of the three, we are done, otherwise it is exchanged with the minimum, and the balancing continues at its previous position. Hence, the running time for DeleteMin is again $O(\lg n)$ in the worst case. The implementation is displayed in Algorithm 3.14. Different SiftDown procedures are known: (1) top-down (as in Alg. 3.15); (2) bottom-up (first following the special path of smaller children to the leaf, then sifting up the root element as in Insert); or (3) with binary search (on the special path).

An implementation of the priority queue using a Heap leads to an $O((e + n) \lg n)$ algorithm for A*, where $n$ (resp. $e$) is the number of generated problem graph nodes (resp. edges). The data structure is fast in practice if $n$ is small, say a few million elements (an accurate number depends on the efficiency of the implementation).
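The array embedding and the three operations can be sketched as follows. This is a minimal illustration, not Algorithms 3.12 through 3.15: the class name `BinaryHeap` and the position dictionary `pos`, which plays the role of the reference maintained for DecreaseKey, are assumptions. Cell 0 of the Python list is left unused so that the root sits at index 1 as in the text, and SiftDown is the top-down variant.

```python
class BinaryHeap:
    """Array-embedded binary min-heap with DecreaseKey via a position map."""

    def __init__(self):
        self.a = [None]   # a[0] is unused so that a[1] is the root
        self.pos = {}     # item -> index in a

    def _set(self, i, entry):
        self.a[i] = entry
        self.pos[entry[1]] = i

    def _sift_up(self, i):
        entry = self.a[i]
        while i > 1 and self.a[i // 2][0] > entry[0]:
            self._set(i, self.a[i // 2])      # move the parent down
            i //= 2
        self._set(i, entry)

    def _sift_down(self, i):
        entry, n = self.a[i], len(self.a) - 1
        while 2 * i <= n:
            c = 2 * i                          # left child
            if c < n and self.a[c + 1][0] < self.a[c][0]:
                c += 1                         # right child has the smaller key
            if entry[0] <= self.a[c][0]:
                break
            self._set(i, self.a[c])
            i = c
        self._set(i, entry)

    def insert(self, item, key):
        self.a.append((key, item))             # next available leaf
        self._sift_up(len(self.a) - 1)

    def decrease_key(self, item, key):
        i = self.pos[item]
        self.a[i] = (key, item)
        self._sift_up(i)

    def delete_min(self):
        key, item = self.a[1]
        last = self.a.pop()                    # last element on the bottom level
        del self.pos[item]
        if len(self.a) > 1:
            self.a[1] = last
            self._sift_down(1)
        return item, key
```

Only keys are compared, so items need to be hashable but not ordered.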

Pairing Heaps

A Pairing Heap is a heap-ordered (not necessarily binary) self-adjusting tree. The basic operation on a Pairing Heap is pairing, which combines two Pairing Heaps by attaching the root with the larger key to the other root as its left-most child. More precisely, for two Pairing Heaps with respective root values $k_1$ and $k_2$, pairing inserts the first as the left-most subtree of the second if $k_1 > k_2$, and otherwise inserts the second into the first as its left-most subtree. Pairing takes constant time and the minimum is found at the root.

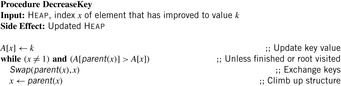

In a multiway tree representation, realizing the priority queue operations is simple. Insertion pairs the new node with the root of the heap. DecreaseKey splits the node and its subtree from the heap (if the node is not the root), decreases the key, and then pairs it with the root of the heap. Delete splits the node to be deleted and its subtree, performs a DeleteMin on the subtree, and pairs the resulting tree with the root of the heap. DeleteMin removes and returns the root and then pairs the remaining trees two at a time. Then, the resulting trees are incrementally paired from right to left (see Alg. 3.16).

Since the multiple-child representation is difficult to maintain, the child-sibling binary tree representation for Pairing Heaps is often used, in which siblings are connected as follows. The left link of a node accesses its first child, and the right link of a node accesses its next sibling, so that the value of a node is less than or equal to all the values of nodes in its left subtree. It has been shown that in this representation Insert takes $O(1)$ and DeleteMin takes $O(\lg n)$ amortized, and DecreaseKey takes at least $\Omega(\lg \lg n)$ and at most $O(2^{2\sqrt{\lg \lg n}})$ steps.
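A child-sibling Pairing Heap can be sketched as follows. The names `PNode`, `pair`, and `PairingHeap` and the extra `prev` link (pointing to the left sibling, or to the parent for a first child) are assumptions of this illustration, chosen so that DecreaseKey can cut a subtree in constant time; the code is not a transcription of Algorithm 3.16.

```python
class PNode:
    __slots__ = ("key", "item", "child", "sibling", "prev")

    def __init__(self, key, item):
        self.key, self.item = key, item
        self.child = self.sibling = self.prev = None


def pair(a, b):
    """Link two roots: the larger one becomes the left-most child of the smaller."""
    if a is None:
        return b
    if b is None:
        return a
    if b.key < a.key:
        a, b = b, a
    b.prev, b.sibling = a, a.child
    if a.child is not None:
        a.child.prev = b
    a.child = b
    return a


class PairingHeap:
    def __init__(self):
        self.root = None

    def insert(self, item, key):
        node = PNode(key, item)
        self.root = pair(self.root, node)
        return node                        # handle needed for decrease_key

    def decrease_key(self, node, key):
        node.key = key
        if node is self.root:
            return
        # Cut the node together with its subtree out of the sibling list.
        if node.prev.child is node:
            node.prev.child = node.sibling
        else:
            node.prev.sibling = node.sibling
        if node.sibling is not None:
            node.sibling.prev = node.prev
        node.sibling = node.prev = None
        self.root = pair(self.root, node)

    def delete_min(self):
        root, trees, c = self.root, [], self.root.child
        while c is not None:               # detach all children of the root
            nxt = c.sibling
            c.sibling = c.prev = None
            trees.append(c)
            c = nxt
        # First pass: pair neighbouring trees from left to right.
        paired = [pair(trees[i], trees[i + 1] if i + 1 < len(trees) else None)
                  for i in range(0, len(trees), 2)]
        # Second pass: pair the results incrementally from right to left.
        new_root = None
        for t in reversed(paired):
            new_root = pair(t, new_root)
        self.root = new_root
        return root.item, root.key
```

Callers keep the node returned by `insert` as the handle for later `decrease_key` calls.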

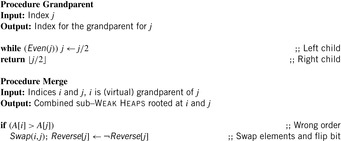

Weak Heaps

A Weak Heap is obtained by relaxing the Heap requirements. It satisfies three conditions: the key of a node is smaller than or equal to all elements to its right, the root has no left child, and leaves are found on the last two levels only.

The array representation uses extra bits Reverse$[i] \in \{0, 1\}$, $i \in \{0, \ldots, n - 1\}$. The left child is located at $2i + \mathit{Reverse}[i]$ and the right child is found at $2i + 1 - \mathit{Reverse}[i]$. By flipping Reverse$[i]$ the locations of the left child and the right child are exchanged. As an example take $A = [1, 4, 6, 2, 7, 5, 3, 8, 15, 11, 10, 13, 14, 9, 12]$ and $\mathit{Reverse} = [0, 1, 1, 1, 1, 1, 1, 0, 1, 1, 1, 1, 1, 1, 1]$ as an array representation of a Weak Heap. Its binary tree equivalent is shown in Figure 3.7.

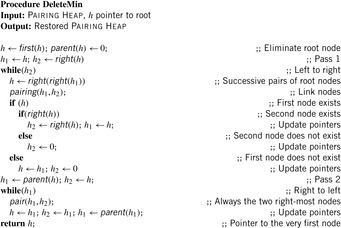

The function Grandparent is defined as Grandparent$(i)$ = Grandparent(parent$(i)$) in case $i$ is a left child, and parent$(i)$ if $i$ is a right one. In a Weak Heap, Grandparent$(i)$ refers to the index of the deepest element known to be smaller than or equal to the one at $i$. An illustration is given in Figure 3.8.

Let node $v$ be the root of a balanced tree $T$ and let node $u$ with the left subtree of $T$ and $v$ with the right subtree of $T$ each form a Weak Heap. Merging $u$ and $v$ yields a new Weak Heap. If $A[u] \le A[v]$ then the tree with root $u$ and right child $v$ is a Weak Heap. If, however, $A[v] < A[u]$ we swap $A[v]$ with $A[u]$ and reflect the subtrees in $T$ (see Fig. 3.9, right). Algorithm 3.17 provides the pseudo-code implementation for Merge and Grandparent.

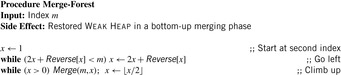

To restore the Weak Heap all subtrees corresponding to grandchildren of the root are combined. Algorithm 3.18 shows the implementation of this Merge-Forest procedure. The element at position m serves as a root node. We traverse the grandchildren of the root in which the second largest element is located. Then, in a bottom-up traversal, the Weak Heap property is restored by a series of Merge operations.

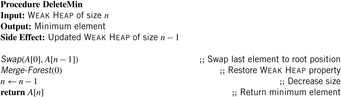

For DeleteMin we restore the Weak Heap property after exchanging the root element with the last one in the underlying array. Algorithm 3.19 gives an implementation.

To construct a Weak Heap from scratch all nodes at index i for decreasing i are merged to their grandparents, resulting in the minimal number of n − 1 comparisons.

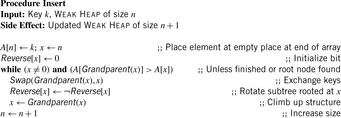

For Insert given a key $k$, we start with the last unused index $x$ in array $A$ and place $k$ into $A[x]$. Then we climb up the grandparents until the Weak Heap property is satisfied (see Alg. 3.20). On average, the path length of grandparents from a leaf node to the root is approximately half of the depth of the tree.

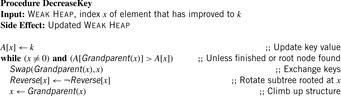

For the DecreaseKey operation we start at the node x that has changed its value. Algorithm 3.21 shows an implementation.
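The Weak Heap operations fit into a short Python sketch. It is an illustration under assumptions rather than a copy of Algorithms 3.17 through 3.21: the class name `WeakHeap` and the list names `a` and `r` (for the array and the Reverse bits) are hypothetical, the root is stored at index 0, and DecreaseKey is omitted because it follows the same grandparent climb as Insert.

```python
class WeakHeap:
    """Array-based Weak Heap sketch with reverse bits r (root at index 0)."""

    def __init__(self, keys=()):
        self.a = list(keys)
        self.r = [0] * len(self.a)
        # Bottom-up construction: n - 1 merges, one comparison each.
        for j in range(len(self.a) - 1, 0, -1):
            self._merge(self._grandparent(j), j)

    def _grandparent(self, j):
        # j is a left child of p exactly if j == 2p + r[p]; climb while that holds.
        while (j & 1) == self.r[j >> 1]:
            j >>= 1
        return j >> 1

    def _merge(self, i, j):
        """Ensure a[i] <= a[j]; on a swap, reflect the subtrees of j."""
        if self.a[j] < self.a[i]:
            self.a[i], self.a[j] = self.a[j], self.a[i]
            self.r[j] ^= 1
            return True
        return False

    def insert(self, key):
        n = len(self.a)
        self.a.append(key)
        self.r.append(0)
        if n % 2 == 0:
            self.r[n >> 1] = 0        # a single child is made a left child
        j = n
        while j != 0:                 # climb up the grandparents
            i = self._grandparent(j)
            if not self._merge(i, j):
                break
            j = i

    def delete_min(self):
        a, r = self.a, self.r
        a[0], a[-1] = a[-1], a[0]     # exchange the root with the last element
        smallest = a.pop()
        r.pop()
        n = len(a)
        if n > 1:
            x = 1
            while 2 * x + r[x] < n:   # descend along left children
                x = 2 * x + r[x]
            while x != 0:             # merge bottom-up into the root
                self._merge(0, x)
                x >>= 1
        return smallest
```

Repeated calls to `delete_min` after the constructor yield the keys in sorted order, which is the idea behind sorting with Weak Heaps.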

Fibonacci Heaps

A Fibonacci Heap is an involved data structure with a detailed presentation that exceeds the scope of this book. In the following, therefore, we only motivate Fibonacci Heaps.

Intuitively, Fibonacci Heaps are relaxed versions of Binomial Queues, which themselves are extensions to Binomial Trees. A Binomial Tree $B_n$ is a tree of height $n$ with $2^n$ nodes in total and $\binom{n}{i}$ nodes in depth $i$. The structure of $B_n$ is found by unifying two structures $B_{n-1}$, where one is added as an additional successor to the root of the second.

Binomial Queues are unions of heap-ordered Binomial Trees. An example is shown in Figure 3.10. Tree $B_i$ is represented in queue $Q$ if the $i$th bit in the binary representation of $n$, the number of stored elements, is set. The partition of a Binomial Queue structure $Q$ into trees $B_i$ is unique as there is only one binary representation of a given number. Since the minimum is always located at the root of one $B_i$, operation Min takes $O(\lg n)$ time. Binomial Queues $Q_1$ and $Q_2$ of sizes $n_1$ and $n_2$ are melded by simulating binary addition of $n_1$ and $n_2$. This corresponds to a parallel scan of the root lists of $Q_1$ and $Q_2$. If $n = n_1 + n_2$ then meld can be performed in time $O(\lg n)$. Having to meld the queues $Q_1 = (B_2, B_1, B_0)$ and $Q_2 = (B_0)$ leads to a queue $Q_3 = (B_3)$.
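The correspondence between melding and binary addition can be sketched in a few lines of Python. The sketch only tracks which ranks are present, not the trees themselves; the function names `tree_ranks` and `meld_ranks` are hypothetical and chosen for illustration.

```python
def tree_ranks(n):
    """Ranks i of the Binomial Trees B_i in a queue that stores n elements."""
    return [i for i in range(n.bit_length()) if (n >> i) & 1]


def meld_ranks(n1, n2):
    """Ranks present after melding queues of sizes n1 and n2.

    Simulates binary addition: two trees of rank i (plus a possible carry)
    are linked into one tree of rank i + 1, which is the carry bit.
    """
    ranks, carry, i = [], 0, 0
    while (n1 >> i) or (n2 >> i) or carry:
        total = ((n1 >> i) & 1) + ((n2 >> i) & 1) + carry
        if total & 1:          # one tree of rank i remains in the result
            ranks.append(i)
        carry = total >> 1     # a linked pair moves on to rank i + 1
        i += 1
    return ranks
```

Melding a queue of 7 elements with a queue of 1 element corresponds to 111 + 1 = 1000 in binary, so only a single tree of rank 3 remains.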

Binomial Queues are themselves priority queues. Operations Insert and DeleteMin both use procedure meld as a subroutine. The former creates a tree $B_0$ with one element, and the latter extracts tree $B_i$ containing the minimal element and splits it into its subtrees $B_0, \ldots, B_{i-1}$. In both cases the resulting trees are merged with the remaining queue to perform the update. DecreaseKey for element $v$ updates the Binomial Tree $B_i$ in which $v$ is located by propagating the element change bottom-up. All operations run in $O(\lg n)$.

A Fibonacci Heap is a collection of heap-ordered Binomial Trees, maintained in the form of a circular doubly linked unordered list of root nodes. In contrast to Binomial Queues, more than one Binomial Tree of rank $i$ may be represented in one Fibonacci Heap. Consolidation traverses the linear list and merges trees of the same rank, so that afterward each rank is unique. For this purpose, an additional array is used that supports finding the trees of the same rank in the root list. The minimum element in a Fibonacci Heap is accessible in $O(1)$ time through a pointer in the root list. Insert performs a meld operation with a singleton tree.

For the critical operation consolidate (see Algorithm 3.22) a node is marked if it loses a child. Before it is marked twice, a cut is performed, which separates the node from its parent. The subtree of the node is inserted in the root list (where the node becomes unmarked again). The cut may cascade as it is propagated to the parent node. An example for a cascading cut is shown in Figure 3.11. Nodes with the keys 3, 6, and 8 are already marked. Now we decrease the key 9 to 1, so that 3, 6, and 8 will lose their second child.

DecreaseKey performs the update on the element in the heap-ordered tree. It removes the updated node from the child list of its parent and inserts it into the root list while updating the minimum. DeleteMin extracts the minimum and includes all subtrees into the root list and consolidates it.

A heuristic parameter can be set to call consolidation less frequently. Moreover, a bitvector can improve the performance of consolidation, as it avoids additional links and provides faster access to the trees of the same rank that are to be merged. In an eager variant, Fibonacci Heaps maintain the consolidation heap store at any time.

Relaxed Weak Queues

Relaxed Weak Queues are worst-case efficient priority queues, meaning that all running times of Fibonacci Heaps are achieved in the worst case instead of amortized.

Weak Queues build on the observation that Perfect Weak Heaps are in one-to-one correspondence to Binomial Queues when taking only the edges that are defined by the Grandparent relation. Note that in Perfect Weak Heaps the right subtree of the root is a complete binary tree. A Weak Queue stores $n$ elements and is a collection of disjoint (nonembedded) Perfect Weak Heaps based on the binary representation of $n = \sum_{i=0}^{\lfloor \lg n \rfloor} c_i 2^i$. In its basic form, a Weak Queue contains a Perfect Weak Heap $H_i$ of size $2^i$ if and only if $c_i = 1$.

Relaxed Weak Queues relax the requirement of having exactly one Weak Heap of a given rank in the Weak Queue and allow some inconsistent elements that violate the Weak Heap property. A structure (of logarithmic size) called the heap store maintains Perfect Weak Heaps of the same rank similar to Fibonacci Heaps. At most two heaps per rank suffice to efficiently realize injection and ejection of the heaps. To keep the worst-case complexity bounds, merging Weak Heaps of the same rank is delayed by maintaining the following structural property on the sequence of numbers of Perfect Weak Heaps of the same rank.

The rank sequence $(r_0, r_1, \ldots, r_k)$ with $r_i \in \{0, 1, 2\}$ is regular if any digit 2 is preceded by a digit 0, possibly having some digits 1 in between. A subsequence of the form $(0\,1^l\,2)$, $l \ge 0$, is called a block. That is, every digit 2 must be part of a block, but there can be digits, 0's and 1's, that are not part of a block. For example, the rank sequence (1011202012) contains three blocks. For injecting a Weak Heap, we join the first two Weak Heaps that are of the same size, if there are any. They are found by scanning the rank sequence. For $O(1)$ access, a stack of pending joins, the so-called join schedule, implements the rank sequence of pending joins. Then we insert the new Weak Heap, which will preserve the regularity of the rank sequence. For ejection, the smallest Weak Heap is eliminated from the heap sequence and, if this Perfect Weak Heap forms a pair with some other Perfect Weak Heap, the top of the join schedule is also popped.
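The regularity condition can be checked with a single left-to-right scan. The following Python sketch is only meant to illustrate the definition of blocks; it is not the join schedule itself, and the function name `count_blocks` is hypothetical.

```python
def count_blocks(rank_sequence):
    """Return the number of blocks of a rank sequence over {0, 1, 2},
    or None if the sequence is not regular.

    A block is a digit 0, followed by any number of digits 1, followed by
    a digit 2. Every digit 2 has to close such a block.
    """
    open_zero = False   # is there a 0 that is not yet used by a block?
    blocks = 0
    for digit in rank_sequence:
        if digit == 0:
            open_zero = True
        elif digit == 2:
            if not open_zero:
                return None      # a 2 without a preceding free 0
            blocks += 1
            open_zero = False    # the 0 is consumed by this block
        elif digit != 1:
            raise ValueError("digits must be 0, 1, or 2")
    return blocks
```

For the sequence (1011202012) the scan finds the blocks 0112, 02, and 012.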

To keep the complexity for DecreaseKey constant, resolving Weak Heap order violations is also delayed. The primary purpose of a node store is to keep track of and reduce the number of potential violation nodes, at which the key may be smaller than the key of its grandparent. A node that is a potential violation node is marked. A marked node is tough if it is the left child of its parent and also the parent is marked. A chain of consecutive tough nodes followed by a single nontough marked node is called a run. All tough nodes of a run are called its members; the single nontough marked node of that run is called its leader. A marked node that is neither a member nor a leader of a run is called a singleton. To summarize, we can divide the set of all nodes into four disjoint node type categories: unmarked nodes, run members, run leaders, and singletons.

A pair (type, height) with (type) being either unmarked, member, leader, or singleton and (height) being a value in $\{0, 1, \ldots, \lfloor \lg n \rfloor - 1\}$ denotes the state of a node. Transformations induce a constant number of state transitions. A simple example of such a transformation is a join, where the height of the new root must be increased by one. Other operations are cleaning, parent, sibling, and pair transformations (see Fig. 3.12). A cleaning transformation rotates a marked left child to a marked right one, provided its neighbor and parent are unmarked. A parent transformation reduces the number of marked nodes or pushes the marking one level up. A sibling transformation reduces the markings by eliminating two markings in one level, while generating a new marking one level up. A pair transformation has a similar effect, but also operates on disconnected trees.

All transformations run in constant time. The node store consists of different list items containing the type of the node marking, which can either be a fellow, a chairman, a leader, or a member of a run, where fellows and chairmen refine the concept of singletons. A fellow is a marked node, with an unmarked parent, if it is a left child. If more than one fellow has a certain height, one of them is elected a chairman. The list of chairmen is required for performing a singleton transformation. Nodes that are left children of a marked parent are members, while the parent of such runs is entitled the leader. The list of leaders is needed for performing a run transformation.

Figure 3.12

The four primitive transformations are combined to a λ-reduction, which invokes either a singleton or run transformation (see Alg. 3.23). A singleton transformation reduces the number of markings in a given level by one, not producing a marking in the level above; or it reduces the number of markings in a level by two, producing a marking in the level above. A similar observation applies to a run transformation, so that in both transformations the number of markings is reduced by at least one in a constant amount of work and comparisons. A λ-reduction is invoked once for each DecreaseKey operation. It invokes either a singleton or a run transformation and is enforced once the number of marked nodes exceeds $\lfloor \lg n \rfloor - 1$.

Table 3.1 reports the time in microseconds (per operation) for inserting $n$ integers (randomly assigned to values from $n$ to $2n - 1$). Next, their values are decreased by 10, and then the minimum element is deleted $n$ times. (The lack of results in one row is due to the fact that Fibonacci Heaps ran out of space.)

3.2. Hash Tables

Duplicate detection is essential for state space search to avoid redundant expansions. As no access to all states is given in advance, a dynamically growing dictionary to represent sets of states has to be provided. For the Closed list, we memorize nodes that have been expanded and for each generated state we check whether it is already stored. We also have to search for duplicates in the Open list, so another dictionary is needed to assist lookups in the priority queue. The Dictionary problem consists of providing a data structure with the operations Insert, Lookup, and Delete. In search applications, deletion is not always necessary. The slightly easier membership problem neglects any associated information. However, many implementations of membership data structures can be easily generalized to dictionary data structures by adding a pointer. Instead of maintaining two dictionaries for Open and Closed individually, more frequently, the Open and Closed lists are maintained together in a combined dictionary.

There are two major techniques for implementing dictionaries: (balanced) search trees and hashing. The former class of algorithms can achieve all operations in $O(\lg n)$ worst-case time and $O(n)$ storage space, where $n$ is the number of stored elements. For hashing, by contrast, constant time for lookup operations is generally required, so we concentrate on hash dictionaries. We first introduce different hash functions and algorithms. Incremental hashing will be helpful to enhance the efficiency of computing hash addresses. In perfect hashing we consider bijective mapping of states to addresses. In universal hashing, we consider a class of hash functions that will be useful for more general perfect hashing strategies. Because memory is a big concern in state space search we will also address memory-saving dictionary data structures. At the end of this section, we show how to save additional space by being imprecise (reporting that a state is in the dictionary when it is not).

3.2.1. Hash Dictionaries

Hashing serves as a method to store and retrieve states $u \in S$ efficiently. A dictionary over a universe $U = \{0, \ldots, N-1\}$ of possible keys is a partial function from a subset $R \subseteq U$ (the stored keys) to some set $I$ (the associated information). In state space hashing, every state $x \in S$ is assigned to a key $k(x)$, which is a part of the representation that uniquely identifies $x$. Note that every state representation can be interpreted as a binary integer number. Then not all integers in the universe will correspond to valid states. For simplicity, in the following we will identify states with their keys.

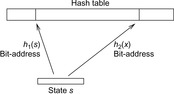

The keys are mapped into a linear array $T[0..m-1]$, called the hash table. The mapping $h: U \to \{0, \ldots, m-1\}$ is called the hash function (see Fig. 3.13). The lack of injectiveness yields address collisions; that is, different states that are mapped to the same table location. Roughly speaking, hashing is all about computing keys and detecting collisions. The overall time complexity for hashing depends on the time to compute the hash function, the collision strategy, and the ratio between the number of stored keys and the hash table size, but usually not on the size of the keys.

The choice of a good hash function is the central problem for hashing. In the worst case, all keys are mapped to the same address; for example, for all $x \in U$ we have $h(x) = c$, with $0 \le c < m$. In the best case, we have no collisions and the access time to an element is constant. A special case is that of a fixed stored set $R$, and a hash table of at least $m$ entries; then a suitable hash function is $h(x_i) = i$ with $x_i \in R$ and $0 \le i < m$.

These two extreme cases are more of theoretical interest. In practice, we can avoid the worst-case by a proper design of the hash function.

3.2.2. Hash Functions

A good hash function is one that can be computed efficiently and minimizes the number of address collisions. The returned addresses for given keys should be uniformly distributed, even if the set of chosen keys in S is not, which is almost always the case.

Given a hash table of size $m$ and the sequence $k_1, \ldots, k_n$ of keys to be inserted, for each pair $(k_i, k_j)$ of keys, $i, j \in \{1, \ldots, n\}$, we define a random variable

$$X_{ij} = \begin{cases} 1 & \text{if } h(k_i) = h(k_j), \\ 0 & \text{otherwise.} \end{cases}$$

Then $X = \sum_{i<j} X_{ij}$ is the sum of collisions. Assuming a random hash function with uniform distribution, the expected value of $X$ is

$$E(X) = E\Big(\sum_{i<j} X_{ij}\Big) = \sum_{i<j} E(X_{ij}) = \sum_{i<j} \frac{1}{m} = \binom{n}{2} \cdot \frac{1}{m}.$$

Using a hash table of size $m = 10^7$, for 1 million elements, we expect about $\binom{10^6}{2} / 10^7 \approx 50{,}000$ address collisions.
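The expected number of colliding pairs, $\binom{n}{2}/m$, is easy to evaluate numerically. The following Python sketch does so for freely chosen values; the function name and the sample sizes are assumptions made for illustration.

```python
from math import comb


def expected_collisions(n, m):
    """Expected number of colliding key pairs when n keys are hashed
    uniformly at random into a table with m addresses."""
    return comb(n, 2) / m


# Illustration: one million keys in a table with ten million addresses.
print(expected_collisions(10**6, 10**7))
```

The value grows quadratically in the number of keys, so doubling the number of stored states roughly quadruples the expected number of collisions for a fixed table size.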

Remainder Method

If we can extend $S$ to $\mathbb{Z}$, then $T = \mathbb{Z}/m\mathbb{Z}$ is the quotient space with equivalence classes $[0], \ldots, [m-1]$ induced by the relation

$$z \sim w \iff z \bmod m = w \bmod m.$$

Therefore, a mapping $h: S \to \{0, 1, \ldots, m-1\}$ with $h(x) = x \bmod m$ distributes $S$ on $T$. For the uniformity, the choice of $m$ is important; for example, if $m$ is even then $h(x)$ is even if and only if $x$ is.

The choice $m = r^w$, for some $w \in \mathbb{N}$, is also not appropriate, since for $x = \sum_{i=0}^{l} a_i r^i$ we have

$$x \bmod m = \Big(\sum_{i=w}^{l} a_i r^i + \sum_{i=0}^{w-1} a_i r^i\Big) \bmod m = \Big(\sum_{i=0}^{w-1} a_i r^i\Big) \bmod m.$$

This means that the distribution takes only the last $w$ digits into account.
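The effect of a poorly chosen modulus can be observed directly. In the following Python sketch all keys share their last two decimal digits; the key set and the prime 97 are arbitrary choices made for illustration.

```python
def remainder_hash(x, m):
    """Remainder method: h(x) = x mod m."""
    return x % m


# 1,000 keys that all end in the decimal digits 56.
keys = [i * 100 + 56 for i in range(1000)]

# With m = 10^2 only the last two digits count: every key collides.
addresses_power = {remainder_hash(x, 10**2) for x in keys}

# With a prime modulus the same keys spread over the whole table.
addresses_prime = {remainder_hash(x, 97) for x in keys}

print(len(addresses_power), len(addresses_prime))
```

With $m = 10^2$ all keys are sent to address 56, whereas the prime modulus uses all 97 addresses.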

Multiplicative Hashing

In this approach the product of the key and an irrational number $\phi$ is computed and the fractional part is preserved, resulting in a mapping into $[0, 1) \subset \mathbb{R}$. This can be used for a hash function that maps the key $x$ to $\{0, \ldots, m-1\}$ as follows:

$$h(x) = \lfloor m \cdot (x\phi - \lfloor x\phi \rfloor) \rfloor.$$

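One of the best choices for $\phi$ for multiplicative hashing is $(\sqrt{5} - 1)/2 \approx 0.6180339887$, the golden ratio. As an example take $k = 123{,}456$ and $m = 10{,}000$; then $h(k) = \lfloor 10{,}000 \cdot (123{,}456 \cdot \phi - \lfloor 123{,}456 \cdot \phi \rfloor) \rfloor = \lfloor 10{,}000 \cdot 0.0041151\ldots \rfloor = 41$.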
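Multiplicative hashing takes only a few lines of Python. This is a minimal sketch using floating-point arithmetic, which is precise enough for moderately sized keys; a production implementation would more likely use fixed-point integer arithmetic. The names `PHI` and `multiplicative_hash` are chosen for illustration.

```python
from math import floor, sqrt

PHI = (sqrt(5) - 1) / 2   # approximately 0.6180339887


def multiplicative_hash(x, m, phi=PHI):
    """Map key x to {0, ..., m-1} via the fractional part of x * phi."""
    fractional_part = (x * phi) % 1.0
    return floor(m * fractional_part)


print(multiplicative_hash(123456, 10000))  # prints 41
```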

Rabin and Karp Hashing

For incremental hashing based on the idea of Rabin and Karp, states are interpreted as strings over a fixed alphabet. In case no natural string representation exists it is possible to interpret the binary representation of a state as a string over the alphabet {0, 1}. To increase the effectiveness of the method the string of bits may be divided into blocks. For example, a state vector consisting of bytes yields 256 different characters.

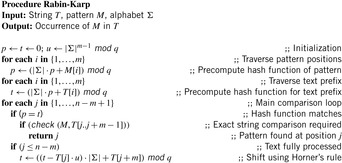

The idea of Rabin and Karp originates in matching a pattern string $M[1..m] \in \Sigma^m$ to a text $T[1..n] \in \Sigma^n$. For a certain hash function $h$, pattern $M$ is mapped to the number $h(M)$, assuming that $h(M)$ fits into a single memory cell and can be processed in constant time. For $1 \le j \le n - m + 1$ the algorithm checks if $h(M) = h(T[j..j+m-1])$. Due to possible collisions, this check is a necessary but not a sufficient condition for a valid match of $M$ and $T[j..j+m-1]$. To validate that the match is indeed valid in case $h(M) = h(T[j..j+m-1])$, a character-by-character comparison has to be performed.

To compute $h(T[j+1..j+m])$ in constant time, the value is calculated incrementally: the algorithm takes the known value $h(T[j..j+m-1])$ into account to determine $h(T[j+1..j+m])$ with a few CPU operations. The hash function has to be chosen carefully to be suited to the incremental computation; for example, linear hash functions based on a radix number representation such as $h(M) = \big(\sum_{i=1}^{m} M[i] \cdot r^{m-i}\big) \bmod q$ are suitable, where $q$ is a prime and the radix $r$ is equal to $|\Sigma|$.

Algorithmically, the approach works as follows. Let $q$ be a sufficiently large prime with $q > m$. We assume that numbers of size $q \cdot |\Sigma|$ fit into a memory cell, so that all operations can be performed with single precision arithmetic. To ease notation, we identify characters in $\Sigma$ with their order. The algorithm of Rabin and Karp as presented in Algorithm 3.24 performs the matching process.
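The matching process can be sketched in Python as follows. This is an illustrative sketch, not a transcription of Algorithm 3.24: it assumes that the alphabet consists of the decimal digits, uses 0-based positions, and the function name `rabin_karp` as well as the returned count of spurious hits are additions for illustration.

```python
def rabin_karp(pattern, text, radix=10, q=13):
    """Return (match positions, number of spurious hits) for digit strings.

    Positions are 0-based. A spurious hit is a window whose hash value
    equals that of the pattern although the characters differ.
    """
    m, n = len(pattern), len(text)
    if m == 0 or m > n:
        return [], 0
    high = pow(radix, m - 1, q)          # radix^(m-1) mod q
    hp = ht = 0
    for i in range(m):                   # hash of pattern and first window
        hp = (radix * hp + int(pattern[i])) % q
        ht = (radix * ht + int(text[i])) % q
    matches, spurious = [], 0
    for j in range(n - m + 1):
        if hp == ht:                     # necessary condition only
            if text[j:j + m] == pattern: # character-by-character check
                matches.append(j)
            else:
                spurious += 1
        if j < n - m:                    # shift the window by one character
            ht = (radix * (ht - int(text[j]) * high) + int(text[j + m])) % q
    return matches, spurious
```

Each shift removes the leading character, multiplies by the radix, and appends the new character, so that the hash value of the next window is obtained in constant time.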

The algorithm is correct due to the following observation.

Theorem 3.1

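(Correctness Rabin-Karp) Let the steps of Algorithm 3.24 be numbered wrt. the loop counter $j$. At the start of the $j$th iteration the maintained hash value $t_j$ of the current text window satisfies

$$t_j = \Big(\sum_{i=j}^{m+j-1} T[i] \cdot |\Sigma|^{m-i+j-1}\Big) \bmod q.$$

Proof

Certainly, $t_1 = \big(\sum_{i=1}^{m} T[i] \cdot |\Sigma|^{m-i}\big) \bmod q$ and inductively we have

$$\begin{aligned} t_j &= \Big(\big(t_{j-1} - T[j-1] \cdot |\Sigma|^{m-1}\big) \cdot |\Sigma| + T[j+m-1]\Big) \bmod q \\ &= \Big(\Big(\sum_{i=j-1}^{m+j-2} T[i] \cdot |\Sigma|^{m-i+j-2} - T[j-1] \cdot |\Sigma|^{m-1}\Big) \cdot |\Sigma| + T[j+m-1]\Big) \bmod q \\ &= \Big(\sum_{i=j}^{m+j-1} T[i] \cdot |\Sigma|^{m-i+j-1}\Big) \bmod q. \end{aligned}$$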

As an example take $\Sigma = \{0, \ldots, 9\}$ and $q = 13$. Furthermore, let $M = 31415$ and $T = 2359023141526739921$. The application of the mapping $h$ is illustrated in Figure 3.14.

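We see that $h$ produces collisions. The incremental computation works as follows.

$$h(14152) \equiv (h(31415) - 3 \cdot 10000) \cdot 10 + 2 \equiv (7 - 3 \cdot 3) \cdot 10 + 2 \equiv 8 \pmod{13}.$$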

Incremental Hashing

In a state space search, we often have the case that a state transition changes only a part of the representation. In this case, the computation of the hash function can be executed incrementally. We refer to this approach as incremental state space hashing. The alphabet $\Sigma$ denotes the set of characters in the string to be hashed. In the state space search, the set $\Sigma$ will be used for denoting the domain(s) of the state variables.

Take, for example, the Fifteen-Puzzle. With $\Sigma = \{0, \ldots, 15\}$, a natural vector representation for state $u$ is $(t_0, \ldots, t_{15}) \in \Sigma^{16}$, where $t_i = l$ means that the tile labeled with $l$ is located at position $i$, and $l = 0$ is the blank. Because successor generation is fast and the Manhattan distance heuristic can be computed incrementally in constant time (using a table addressed by the tile's label $l \in \Sigma \setminus \{0\}$, the tile's move direction $d \in \{U, D, L, R\}$, and the position $p \in \{0, \ldots, 15\}$ of the tile that is being moved), the computational burden is on computing the hash function.

One hash value of a Fifteen-Puzzle state $u$ is $h(u) = \big(\sum_{i=0}^{15} t_i \cdot 16^i\big) \bmod q$. Let state $u'$ with representation $(t'_0, \ldots, t'_{15})$ be a successor of $u$. We know that there is only one transposition in the vectors $t$ and $t'$. Let $j$ be the position of the blank in $u$ and $k$ be the position of the blank in $u'$. We have $t'_j = t_k$, $t'_k = 0$, and for all $0 \le i \le 15$, with $i \ne j$, $i \ne k$, it holds that $t'_i = t_i$. Therefore,

$$\begin{aligned} h(u') &= \Big(\sum_{i=0}^{15} t_i \cdot 16^i - t_j \cdot 16^j + t'_j \cdot 16^j - t_k \cdot 16^k + t'_k \cdot 16^k\Big) \bmod q \\ &= \big(h(u) - 0 \cdot 16^j + t'_j \cdot 16^j - t_k \cdot 16^k + 0 \cdot 16^k\big) \bmod q \\ &= \big(h(u) + (t'_j \cdot 16^j) \bmod q - (t_k \cdot 16^k) \bmod q\big) \bmod q. \end{aligned}$$

To save time, we may precompute $(k \cdot 16^l) \bmod q$ for each $k$ and $l$ in $\{0, \ldots, 15\}$ (here $k$ ranges over the tile labels and $l$ over the positions). If we were to store $(k \cdot 16^j) \bmod q - (k \cdot 16^l) \bmod q$ for each value of $j$, $k$, and $l$, we would save another addition. As $h(u) \in \{0, \ldots, q-1\}$ and $(k \cdot 16^j) \bmod q - (k \cdot 16^l) \bmod q \in \{-(q-1), \ldots, q-1\}$, we may further substitute the last mod by faster arithmetic operations, such as a comparison followed by one addition or subtraction of $q$.

As a particular case, we look at an instance of the Fifteen-Puzzle, where the tile 12 is to be moved downward from its position 11 to position 15. We have $h(u') = \big(h(u) - (12 \cdot 16^{11}) \bmod q + (12 \cdot 16^{15}) \bmod q\big) \bmod q$.
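The incremental update for the Fifteen-Puzzle can be sketched in Python as follows. The prime modulus `Q` is an arbitrary choice made for illustration, and the names `TABLE`, `full_hash`, and `incremental_hash` are hypothetical.

```python
Q = 1_000_003                      # assumed prime modulus (illustrative choice)

# TABLE[l][i] = (l * 16^i) mod Q for tile label l at position i.
TABLE = [[(l * pow(16, i, Q)) % Q for i in range(16)] for l in range(16)]


def full_hash(t):
    """Nonincremental hash of a state vector t, where t[i] is the tile at i."""
    return sum(TABLE[t[i]][i] for i in range(16)) % Q


def incremental_hash(h, tile, j, k):
    """Hash of the successor, given the hash h of the current state.

    The blank moves from position j to position k, so the tile moves
    from position k to position j. Only two table lookups are needed.
    """
    return (h + TABLE[tile][j] - TABLE[tile][k]) % Q


# Tile 12 moves downward from position 11 to position 15 (blank at 15).
u = list(range(1, 16)) + [0]
v = u[:]
v[15], v[11] = v[11], v[15]
print(incremental_hash(full_hash(u), 12, 15, 11) == full_hash(v))
```

The nonincremental computation touches all 16 positions, whereas the update needs two lookups regardless of the size of the state vector.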

Next we generalize our observations. The savings are larger when the state vector grows. For the $(n^2 - 1)$-Puzzle nonincremental hashing results in $\Omega(n^2)$ time, whereas in incremental hashing the efforts remain constant. Moreover, incremental hashing is available for many search problems that obey a static vector representation. Hence, we assume that state $u$ is a vector $(u_1, \ldots, u_k)$ with $u_i$ in finite domain $\Sigma_i = \{0, \ldots, m_i - 1\}$, $i \in \{1, \ldots, k\}$.

Theorem 3.2

(Efficiency of Incremental Hashing) Let $I(a)$ be the set of indices in the state vector that change when applying $a$, and $I_{\max} = \max_{a \in A} |I(a)|$. Given the hash value of $u$, the hash value of the successor $v$ of $u$ via $a$ is available in time:

1. $O(|I(a)|)$, using an $O(k)$-size table.

2. $O(1)$, using an $O\big(\binom{k}{I_{\max}} \cdot (\Sigma_{\max})^{I_{\max}}\big)$-size table,

where $\Sigma_{\max} = \max_{1 \le i \le k} |\Sigma_i|$.

Proof

We define $h(u) = \big(\sum_{i=1}^{k} u_i \cdot M_i\big) \bmod q$ as the hash function, with $M_1 = 1$ and $M_i = |\Sigma_1| \cdots |\Sigma_{i-1}|$ for $1 < i \le k$. For case 1 we store $M_i \bmod q$ for all $1 \le i \le k$ in a precomputed table, so that $|I(a)|$ lookups are needed. For case 2 we compute $\sum_{j \in I(a)} (-u_j M_j + v_j M_j) \bmod q$ for all possible actions $a = (u, v)$. The number of possible actions is bounded by $\binom{k}{I_{\max}} \cdot (\Sigma_{\max})^{I_{\max}}$, since at most $\binom{k}{I_{\max}}$ index sets may change to at most $(\Sigma_{\max})^{I_{\max}}$ different values.
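Case 1 of the theorem can be sketched in Python for a state vector with mixed finite domains. The helper names `make_weights`, `full_hash`, and `update_hash`, as well as the representation of an action as a dictionary of changed indices, are assumptions made for illustration.

```python
def make_weights(domain_sizes, q):
    """Precompute M_i mod q with M_1 = 1 and M_i = |S_1| * ... * |S_(i-1)|."""
    weights, product = [], 1
    for size in domain_sizes:
        weights.append(product % q)
        product *= size
    return weights


def full_hash(u, weights, q):
    """Nonincremental hash: sum of u_i * M_i, taken mod q."""
    return sum(ui * w for ui, w in zip(u, weights)) % q


def update_hash(h, u, changes, weights, q):
    """Hash of the successor of u, given h = hash(u).

    `changes` maps each changed index to its new value, so the loop
    performs one table lookup per changed state variable.
    """
    for i, new_value in changes.items():
        h += (new_value - u[i]) * weights[i]
    return h % q
```

As a usage example, with domain sizes (3, 4, 5, 2) and $q = 101$, changing the second and fourth variable of $u = (2, 3, 4, 1)$ to 0 requires two lookups and yields the same value as rehashing the successor from scratch.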

Note that the number of possible actions is much smaller in practice. The effectiveness of incremental hashing relies on two factors: on the state vector's locality (i.e., how many state variables are affected by a state transition) and on the node expansion efficiency (i.e., the running time of all other operations to generate one successor). In the Rubik's Cube, exploiting locality is limited. If we represent position and orientation of each subcube as a number in the state vector, then for each twist, 8 of the 20 entries will be changed. In contrast, for Sokoban the node expansion efficiency is low: during move execution, the set of pushable balls has to be determined in time linear in the size of the board layout, and the (incremental) computation of the minimum matching heuristic requires at least quadratic time in the number of balls.

For incremental hashing the resulting technique is very efficient. However, it can also have drawbacks, so take care. For example, as with ordinary hashing, the suggested schema induces collisions, which have to be resolved.

Universal Hash Functions

Universal hashing requires a set of hash functions to have on average a good distribution for any subset of stored keys. It is the basis for FKS and cuckoo hashing and has a lot of nice properties. Universal hashing is often used in a state space search, when restarting a randomized incomplete algorithm with a different hash function.

Let $\{0, \ldots, m-1\}$ be the set of hash addresses and $S \subseteq \mathbb{N}$ be the set of possible keys. A set of hash functions $H$ is universal, if for all $x, y \in S$ with $x \ne y$,

$$\frac{|\{h \in H \mid h(x) = h(y)\}|}{|H|} \le \frac{1}{m}.$$

The intuition in the design of universal hash functions is to include a suitable random number generator inside the hash computation. For example, the Lehmer generator refers to linear congruences. It is one of the most common methods for generating random numbers. With respect to a triple of constants $a$, $b$, and $c$, a sequence of pseudo-random numbers $x_i$ is generated according to the recursion

$$x_{i+1} = (a \cdot x_i + b) \bmod c.$$

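Universal hash functions lead to a good distribution of values on the average. If $h$ is drawn randomly from $H$ and $S$ is the set of keys to be inserted in the hash table, the expected cost of each Lookup, Insert, and Delete operation is bounded by $1 + |S|/m$. We give an example of a class of universal hash functions. Let $S = \{0, \ldots, n-1\}$, $p$ be prime with $p \ge n$. For $1 \le a \le p-1$, $0 \le b \le p-1$, define

$$h_{a,b}(x) = ((a x + b) \bmod p) \bmod m.$$

Then $H = \{h_{a,b} \mid 1 \le a \le p-1,\ 0 \le b \le p-1\}$ is a set of universal hash functions. As an example, take $m = 3$ and $p = 5$. Then we have 20 functions in $H$:

$$\begin{array}{llll} x + 0 & 2x + 0 & 3x + 0 & 4x + 0 \\ x + 1 & 2x + 1 & 3x + 1 & 4x + 1 \\ x + 2 & 2x + 2 & 3x + 2 & 4x + 2 \\ x + 3 & 2x + 3 & 3x + 3 & 4x + 3 \\ x + 4 & 2x + 4 & 3x + 4 & 4x + 4 \end{array}$$

all taken mod 5 mod 3. Hashing 1 and 4 yields the following address collisions:

$$\begin{aligned} (1 \cdot 1 + 0) \bmod 5 \bmod 3 &= 1 = (1 \cdot 4 + 0) \bmod 5 \bmod 3 \\ (1 \cdot 1 + 4) \bmod 5 \bmod 3 &= 0 = (1 \cdot 4 + 4) \bmod 5 \bmod 3 \\ (4 \cdot 1 + 0) \bmod 5 \bmod 3 &= 1 = (4 \cdot 4 + 0) \bmod 5 \bmod 3 \\ (4 \cdot 1 + 4) \bmod 5 \bmod 3 &= 0 = (4 \cdot 4 + 4) \bmod 5 \bmod 3 \end{aligned}$$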
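For small parameters the class of functions $x \mapsto ((ax + b) \bmod p) \bmod m$ with $1 \le a \le p-1$ and $0 \le b \le p-1$ can be enumerated exhaustively. The following Python sketch lists the parameter pairs under which two given keys collide; the function names are hypothetical.

```python
def family(p, m):
    """All functions x -> ((a*x + b) mod p) mod m, indexed by (a, b)."""
    return {
        (a, b): (lambda x, a=a, b=b: ((a * x + b) % p) % m)
        for a in range(1, p)
        for b in range(p)
    }


def colliding(x, y, p, m):
    """Parameter pairs (a, b) under which keys x and y share an address."""
    return [ab for ab, h in family(p, m).items() if h(x) == h(y)]


print(len(family(5, 3)))       # prints 20
print(colliding(1, 4, 5, 3))   # prints [(1, 0), (1, 4), (4, 0), (4, 4)]
```

For $m = 3$ and $p = 5$ the keys 1 and 4 collide under 4 of the 20 functions, which is below the bound of $20/3$ functions that universality allows.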

To prove that $H$ is universal, let us look at the probability that two keys $x \ne y$ are mapped to locations $r$ and $s$ by the inner part of the hash function,

$$P\big([(a x + b) \equiv r \pmod p] \text{ and } [(a y + b) \equiv s \pmod p]\big).$$

This means that $a(x - y) \equiv r - s \pmod p$, which has exactly one solution $a \pmod p$ since $\mathbb{Z}_p = \mathbb{Z}/p\mathbb{Z}$ is a field (we needed all keys to be smaller than $p$ to ensure that $x \not\equiv y \pmod p$). Value $r$ cannot be equal to $s$, since this would imply $a = 0$, contrary to the definition of the hash function. Therefore, we now assume $r \ne s$. Then there is a 1 in $(p - 1)$ chance that $a$ has the right value. Given this value of $a$, we need $b \equiv r - a x \pmod p$, and there is a $1/p$ chance that $b$ gets this value. Consequently, the overall probability that the inner function maps $x$ to $r$ and $y$ to $s$ is $1/(p(p-1))$.

Now, the probability that $x$ and $y$ collide is equal to this $1/(p(p-1))$, times the number of pairs $r, s \in \{0, \ldots, p-1\}$, $r \ne s$, such that $r \equiv s \pmod m$. We have $p$ choices for $r$, and subsequently at most $\lceil p/m \rceil - 1$ choices for $s$ (the $-1$ is for disallowing $s = r$). Using $\lceil v/w \rceil \le v/w + 1 - 1/w$ for integers $v$ and $w$, the product is at most $p(p-1)/m$.

Putting this all together, we obtain for the probability of a collision between $x$ and $y$,

$$P\big(((a x + b) \bmod p) \bmod m = ((a y + b) \bmod p) \bmod m\big) \le \frac{p(p-1)}{m} \cdot \frac{1}{p(p-1)} = \frac{1}{m}.$$

Perfect Hash Functions

Can we find a hash function $h$ such that (besides the efforts to compute the hash function) all lookups require constant time? The answer is yes; this leads to perfect hashing. An injective mapping of $R$ with $|R| = n \le m$ to $\{0, \ldots, m-1\}$ is called a perfect hash function; it allows an access without collisions. If $n = m$ we have a minimal perfect hash function. The design of perfect hashing yields an optimal worst-case performance of $O(1)$ accesses. Since perfect hashing uniquely determines an address, a state $S$ can often be reconstructed given $h(S)$.

If we invest enough space, perfect (and incremental) hash functions are not difficult to obtain. In the example of the Eight-Puzzle for a state $u$ in vector representation $(t_0, \ldots, t_8)$ we may choose $(\ldots((t_0 \cdot 9 + t_1) \cdot 9 + t_2) \ldots) \cdot 9 + t_8$ for $9^9 = 387{,}420{,}489$ different hash addresses (equivalent to about 46 megabytes of space, at one bit per address). Unfortunately, this approach leaves most hash addresses vacant. A better hash function is to compute the rank of the permutation in some given ordering, resulting in $9!$ states or about 44 kilobytes.

Lexicographic Ordering

The lexicographic rank of permutation π (of size N) is defined as $\mathit{rank}(\pi) = d_0 \cdot (N-1)! + d_1 \cdot (N-2)! + \cdots + d_{N-2} \cdot 1! + d_{N-1} \cdot 0!$, where the coefficients $d_i$ are called the inverted index or factorial base.

By looking at a permutation tree it is easy to see that such a hash function exists. Leaves in the tree are all permutations and at each node in level i, the i-th vector value is selected, reducing the range of available values in level i + 1. This leads to an $O(N^2)$ algorithm. A linear algorithm for this maps a permutation $(t_0, \ldots, t_{N-1})$ to its factorial base $\sum_{i=0}^{N-1} d_i \cdot (N-1-i)!$ with $d_i$ being equal to $t_i$ minus the number of elements $t_j$, $j < i$, that are smaller than $t_i$; that is, $d_i = t_i - c_i$ with the number of inversions $c_i$ being set to $|\{\, l \mid 0 \le l < t_i,\ l \in \{t_0, \ldots, t_{i-1}\} \,\}|$. For example, the lexicographic rank of permutation $(1, 0, 3, 2)$ is equal to $3! \cdot (1 - 0) + 2! \cdot (0 - 0) + 1! \cdot (3 - 2) + 0! \cdot (2 - 2) = 7$, corresponding to $c = (0, 0, 2, 2)$ and $d = (1, 0, 1, 0)$.

The values $c_i$ are computed in linear time using a table lookup in a $2^{N-1}$-size table T. In the table T we store the number of ones in the binary representation of a value, $T(x) = \sum_{i=0}^{m} b_i$ with $(x)_2 = (b_m, \ldots, b_0)$. For computing the hash value, while processing vector position $t_i$ we mark bit $t_i$ in bitvector x (initially set to 0). Thus, x denotes the tiles we have seen so far and we can take $T(x_{t_i - 1}, \ldots, x_0)$, the table entry for the bits of x below position $t_i$, as the value for $c_i$. Since this approach consumes exponential space, time-space trade-offs have been discussed.
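The table-lookup scheme can be sketched as follows. This is a minimal illustration under stated assumptions, not the book's algorithm: the names `build_bitcount_table` and `lex_rank` are hypothetical, the bitvector is a plain Python integer, and the factorial weights are accumulated with a Horner scheme so that no factorials have to be computed.

```python
from itertools import permutations

def build_bitcount_table(n):
    """T[x] = number of one bits in x, for all x below 2**(n-1)."""
    size = 1 << max(n - 1, 0)
    table = [0] * size
    for x in range(1, size):
        table[x] = table[x >> 1] + (x & 1)
    return table

def lex_rank(perm, table):
    """Lexicographic rank of a permutation of 0..n-1 via bit-count lookups."""
    n = len(perm)
    seen = 0      # bitvector x: bit t is set once value t has been processed
    rank = 0
    for i, t in enumerate(perm):
        c = table[seen & ((1 << t) - 1)]   # earlier values smaller than t
        d = t - c                          # factorial-base digit d_i
        rank = rank * (n - i) + d          # Horner form of sum d_i * (n-1-i)!
        seen |= 1 << t
    return rank

T = build_bitcount_table(4)
print(lex_rank((1, 0, 3, 2), T))   # 7
```

Masking with `(1 << t) - 1` keeps only the bits below position t, so the lookup index never exceeds the table size of 2^(N-1).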

For the design of a minimal perfect hash function for the sliding-tile puzzles we observe that in a lexicographic ordering the two permutations with ranks $2k$ and $2k + 1$ differ by exactly one transposition (of their last two elements) and therefore have opposite signatures (parity of the number of inversions). For minimal perfect hashing of an $(n^2 - 1)$-Puzzle state to $\{0, \ldots, (n^2)!/2 - 1\}$ we consequently compute the lexicographic rank and divide it by 2. For unranking, we now have to determine which one of the two uncompressed permutations of the puzzle is reachable. This amounts to finding the signature of the permutation, which allows us to separate solvable from unsolvable states. It is computed as $\mathit{sign}(\pi) = \left(\sum_{i=0}^{N-1} d_i\right) \bmod 2$. For example, with $N = 4$ and rank $17 = 2 \cdot 3! + 2 \cdot 2! + 1 \cdot 1! + 0 \cdot 0!$ we have $\mathit{sign} = (2 + 2 + 1 + 0) \bmod 2 = 1$.
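A small experiment illustrates the halving idea. The sketch takes the signature to be the parity of the number of inversions, which equals the digit sum of the factorial base modulo 2. For brevity the digits are computed in quadratic time, and all function names are illustrative.

```python
from itertools import permutations

def factorial_base(perm):
    """d_i = number of later entries that are smaller than perm[i]."""
    return [sum(1 for later in perm[i + 1:] if later < t)
            for i, t in enumerate(perm)]

def signature(perm):
    """Parity of the number of inversions (0 = even, 1 = odd)."""
    return sum(factorial_base(perm)) % 2

def lex_rank(perm):
    n = len(perm)
    rank = 0
    for i, d in enumerate(factorial_base(perm)):
        rank = rank * (n - i) + d
    return rank

def compressed_rank(perm):
    """Index within one parity class: lexicographic rank divided by 2."""
    return lex_rank(perm) // 2

perms = list(permutations(range(4)))      # generated in lexicographic order
even = [p for p in perms if signature(p) == 0]
print(sorted(compressed_rank(p) for p in even) == list(range(12)))   # True
print(perms[17], factorial_base(perms[17]), signature(perms[17]))
```

Because the permutations ranked 2k and 2k + 1 always have opposite signatures, each parity class is mapped bijectively onto half of the index range.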

There is one subtle problem with the blank. Simply taking the minimal perfect hash value for the alternating group in $S_{n^2}$ does not suffice, since swapping a tile with the blank does not necessarily toggle the solvability status (e.g., it may be a move). To resolve this problem, we partition state space along the position of the blank. Let $B_0, \ldots, B_{n^2 - 1}$ denote the sets of blank-projected states. Then each $B_i$ contains $(n^2 - 1)!/2$ elements. Given index i and the rank inside $B_i$, it is simple to reconstruct the state.

Myrvold Ruskey Ordering

We next turn to alternative permutation indices proposed by Myrvold and Ruskey. The basic motivation is the generation of a random permutation according to swapping $\pi_i$ with $\pi_r$, where r is a random number uniformly chosen in $\{0, \ldots, i\}$, and i decreases from N − 1 down to 1.

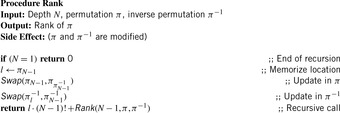

One (recursive) algorithm Rank is shown in Algorithm 3.25. The permutation π and its inverse $\pi^{-1}$ are initialized according to the permutation for which a rank has to be determined.

The inverse  of π can be computed by setting

of π can be computed by setting  , for all

, for all  . Take as an example permutation

. Take as an example permutation  . Then its rank is

. Then its rank is  . This unrolls to

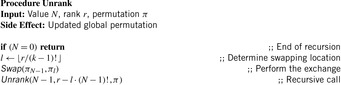

. This unrolls to  . It is also possible to compile a rank back into a permutation in linear time. The inverse procedure Unrank, initialized with the identity permutation, is shown in Algorithm 3.26. The depth value N is initialized with the size of the permutation, and the rank r is the value computed with Algorithm 3.25. (As a side effect, if the algorithm is terminated at the N th step, the positions

. It is also possible to compile a rank back into a permutation in linear time. The inverse procedure Unrank, initialized with the identity permutation, is shown in Algorithm 3.26. The depth value N is initialized with the size of the permutation, and the rank r is the value computed with Algorithm 3.25. (As a side effect, if the algorithm is terminated at the N th step, the positions  hold a random l-permutation of the numbers

hold a random l-permutation of the numbers  .)

.)
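A runnable sketch of such a rank/unrank pair is given below. It is written iteratively and weights the digit extracted at depth i by (i − 1)!; this weighting is an assumption chosen because it reproduces the example rank 16 for (1, 0, 3, 2), and Algorithms 3.25 and 3.26 may differ in detail. The names `mr_rank` and `mr_unrank` are illustrative.

```python
from itertools import permutations
from math import factorial

def mr_rank(perm):
    """Rank a permutation of 0..n-1 by undoing one swap per depth."""
    pi = list(perm)
    inv = [0] * len(pi)
    for pos, value in enumerate(pi):
        inv[value] = pos                   # inverse permutation
    rank = 0
    for i in range(len(pi), 1, -1):
        j = inv[i - 1]                     # position currently holding value i-1
        l = pi[i - 1]                      # digit for this depth
        pi[i - 1], pi[j] = pi[j], pi[i - 1]
        inv[l], inv[i - 1] = j, i - 1      # keep the inverse in sync
        rank += l * factorial(i - 1)
    return rank

def mr_unrank(n, rank):
    """Inverse of mr_rank, starting from the identity permutation."""
    pi = list(range(n))
    for i in range(n, 1, -1):
        l, rank = divmod(rank, factorial(i - 1))
        pi[i - 1], pi[l] = pi[l], pi[i - 1]
    return pi

print(mr_rank((1, 0, 3, 2)))    # 16
print(mr_unrank(4, 16))         # [1, 0, 3, 2]
```

Each depth performs a constant number of array operations; a production version would precompute the factorials instead of calling `factorial` inside the loop.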

Algorithm 3.27 shows another (in this case nonrecursive) unrank algorithm proposed by Myrvold and Ruskey. It also determines the parity of the number of inversions (the signature of the permutation) efficiently and matches the ranking function in Algorithm 3.28. Table 3.2 lists all permutations of size N = 4, ranked according to the two approaches, together with their signatures.

| Index | Unrank (Alg. 3.26) | Signature | Unrank (Alg. 3.27) | Signature |
|---|---|---|---|---|
| 0 | (1,2,3,0) | 0 | (1,2,3,0) | 0 |
| 1 | (2,1,3,0) | 1 | (3,2,0,1) | 0 |
| 2 | (2,3,1,0) | 0 | (1,3,0,2) | 0 |
| 3 | (3,2,1,0) | 1 | (1,2,0,3) | 1 |
| 4 | (1,3,2,0) | 1 | (2,3,1,0) | 0 |
| 5 | (3,1,2,0) | 0 | (2,0,3,1) | 0 |
| 6 | (3,2,0,1) | 0 | (3,0,1,2) | 0 |
| 7 | (2,3,0,1) | 1 | (2,0,1,3) | 1 |
| 8 | (2,0,3,1) | 0 | (1,3,2,0) | 1 |
| 9 | (0,2,3,1) | 1 | (3,0,2,1) | 1 |
| 10 | (3,0,2,1) | 1 | (1,0,3,2) | 1 |
| 11 | (0,3,2,1) | 0 | (1,0,2,3) | 0 |
| 12 | (1,3,0,2) | 0 | (2,1,3,0) | 1 |
| 13 | (3,1,0,2) | 1 | (2,3,0,1) | 1 |
| 14 | (3,0,1,2) | 0 | (3,1,0,2) | 1 |
| 15 | (0,3,1,2) | 1 | (2,1,0,3) | 0 |
| 16 | (1,0,3,2) | 1 | (3,2,1,0) | 1 |
| 17 | (0,1,3,2) | 0 | (0,2,3,1) | 1 |
| 18 | (1,2,0,3) | 1 | (0,3,1,2) | 1 |
| 19 | (2,1,0,3) | 0 | (0,2,1,3) | 0 |
| 20 | (2,0,1,3) | 1 | (3,1,2,0) | 0 |
| 21 | (0,2,1,3) | 0 | (0,3,2,1) | 0 |
| 22 | (1,0,2,3) | 0 | (0,1,3,2) | 0 |
| 23 | (0,1,2,3) | 1 | (0,1,2,3) | 1 |

In this table a signature of 1 marks a permutation with an even number of inversions and 0 one with an odd number.

Theorem 3.3

(Myrvold-Ruskey Permutation Signature) Given the Myrvold-Ruskey rank (as computed by Alg. 3.28), the signature of a permutation can be computed in O(N) time within Algorithm 3.27.

Proof

In the unrank function we always have N − 1 element exchanges. For swapping two elements u and v at respective positions i and j with $i \ne j$ (say $i < j$) we count $2(j - i - 1) + 1$ adjacent transpositions: $u\,x \cdots y\,v \to x\,u \cdots y\,v \to \cdots \to x \cdots y\,u\,v \to x \cdots y\,v\,u \to \cdots \to v\,x \cdots y\,u$. As $(2(j - i - 1) + 1) \bmod 2 = 1$ and each adjacent transposition changes the number of inversions by exactly one, the parity toggles in each iteration. The only exception is if $i = j$, where no change occurs. Hence, the sign of the permutation can be determined by executing the Myrvold-Ruskey algorithm in O(N) time.

Theorem 3.4

(Compression of Alternating Group) Let π(i) denote the value returned by Myrvold and Ruskey's Unrank function (Alg. 3.27) for index i. Then π(i) matches $\pi(i + N!/2)$ except for transposing $\pi_0$ and $\pi_1$.

Proof

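The last call for $\mathit{Swap}(\pi_{n-1}, \pi_{r \bmod n})$ in Algorithm 3.27 is $\mathit{Swap}(\pi_1, \pi_{r \bmod 2})$, which resolves to either $\mathit{Swap}(\pi_1, \pi_1)$ or $\mathit{Swap}(\pi_1, \pi_0)$. Only the latter one induces a change. If $r_1, \ldots, r_{N-1}$ denote the swap indices $r \bmod n$ in the iterations $1, \ldots, N-1$ of Myrvold and Ruskey's Unrank function, then $r_{N-1} = \lfloor \cdots \lfloor \lfloor r/N \rfloor / (N-1) \rfloor \cdots / 3 \rfloor = \lfloor r / (N!/2) \rfloor$, which is 1 for $r \ge N!/2$ and 0 for $r < N!/2$. For $N \ge 3$ the earlier indices $r_1, \ldots, r_{N-2}$ are identical for $r$ and $r + N!/2$, so the two permutations differ only by this last exchange.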
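Both theorems can be checked experimentally with a nonrecursive unrank in the style of Myrvold and Ruskey. The sketch below is an assumption about the shape of Algorithm 3.27 (swap position n − 1 with position r mod n, then continue with r div n). It returns the parity of the number of effective swaps, with 0 standing for an even permutation, which need not be the encoding used in Table 3.2.

```python
from math import factorial

def mr_unrank_with_parity(n, r):
    """Return (permutation, parity of the number of effective swaps)."""
    pi = list(range(n))
    parity = 0
    for i in range(n, 1, -1):
        j = r % i
        r //= i
        if j != i - 1:                 # swapping a position with itself changes nothing
            pi[i - 1], pi[j] = pi[j], pi[i - 1]
            parity ^= 1                # every effective swap toggles the signature
    return pi, parity

n = 4
half = factorial(n) // 2
for i in range(half):
    p, sign_p = mr_unrank_with_parity(n, i)
    q, sign_q = mr_unrank_with_parity(n, i + half)
    # Theorem 3.4: same permutation up to exchanging the first two entries.
    assert p == [q[1], q[0]] + q[2:] and sign_p != sign_q

print(mr_unrank_with_parity(4, 10))    # ([1, 0, 3, 2], 0)
```

Since indices i and i + N!/2 always carry opposite signatures, storing only the indices below N!/2 suffices to address one parity class.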

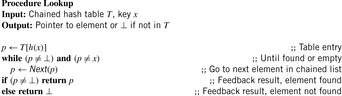

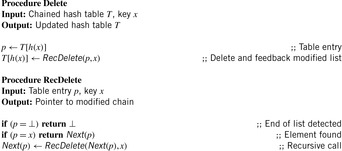

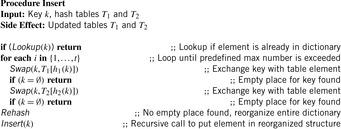

3.2.3. Hashing Algorithms

There are two standard options for dealing with colliding items: chaining or open addressing. In hashing with chaining, keys x are kept in linked overflow lists. The dictionary operations Lookup, Insert, and Delete amount to computing h(x) and then performing pure list manipulations in $T[h(x)]$. Their pseudo-code implementation is provided in Algorithm 3.29, Algorithm 3.30, and Algorithm 3.31. They assume a null pointer ⊥ and a link Next to the successor in the chained list. Operations Insert and Delete suggest a call to Lookup prior to their invocation to determine whether or not the element is contained in the hash table. An example for hashing the characters in *heuristic search* in a table of 10 elements with respect to their lexicographical order modulo 10 is depicted in Figure 3.15.
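The three operations can be sketched as follows. This is an illustrative rendering, not a transcription of Algorithms 3.29 to 3.31: the class names are hypothetical, `None` plays the role of the null pointer ⊥, and the example hash function (alphabet position with 'a' mapped to 0, taken modulo 10) is an assumption about the numbering behind Figure 3.15.

```python
class Node:
    """One element of an overflow list; `next` links to the successor."""
    def __init__(self, key, next=None):
        self.key = key
        self.next = next

class ChainedHashTable:
    def __init__(self, m, h):
        self.table = [None] * m        # T[0..m-1], each entry a list head
        self.h = h

    def lookup(self, x):
        node = self.table[self.h(x)]
        while node is not None:
            if node.key == x:
                return node
            node = node.next
        return None

    def insert(self, x):
        if self.lookup(x) is None:     # avoid duplicates
            i = self.h(x)
            self.table[i] = Node(x, self.table[i])

    def delete(self, x):
        i = self.h(x)
        prev, node = None, self.table[i]
        while node is not None and node.key != x:
            prev, node = node, node.next
        if node is None:
            return False
        if prev is None:
            self.table[i] = node.next  # unlink the list head
        else:
            prev.next = node.next
        return True

table = ChainedHashTable(10, lambda c: (ord(c) - ord("a")) % 10)
for ch in "heuristicsearch":
    table.insert(ch)
print(table.lookup("r") is not None, table.lookup("z") is None)
```

Inserting at the head of the list keeps Insert at constant cost once the preceding Lookup has failed.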

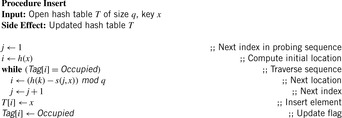

Hashing with open addressing integrates the colliding elements at free locations in the hash table; that is, if $T[h(x)]$ is occupied, it searches for an alternative location for x. Searching a key x starts at h(x) and continues in the probing sequence until either x or an empty table entry is found. When deleting an element, some keys may have to be moved back to fill the hole in the lookup sequence.

The linear probing strategy considers $(h(x) - j) \bmod m$ for $0 \le j < m$. In general, we have the sequence

$$(h(x) - s(j, x)) \bmod m, \quad j = 0, \ldots, m-1,$$

for probing function $s(j, x)$. There is a broad spectrum of suitable probing sequences, for example,

$$
\begin{aligned}
s(j, x) &= j && \text{(linear probing)} \\
s(j, x) &= (-1)^j \cdot \lceil j/2 \rceil^2 && \text{(quadratic probing)} \\
s(j, x) &= j \cdot h'(x) && \text{(double hashing)} \\
s(j, x) &= r_x && \text{(ideal hashing)}
\end{aligned}
$$

where $r_x$ is a random number depending on x, and h′ is a second function, which determines the step size of the probing sequence in double hashing.

To exploit the whole table, $(h(x) - s(0, x)) \bmod m$, $(h(x) - s(1, x)) \bmod m$, …, $(h(x) - s(m-2, x)) \bmod m$, and $(h(x) - s(m-1, x)) \bmod m$ should be a permutation of $\{0, \ldots, m-1\}$.
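Whether a probing function visits every table cell is easy to test empirically. The sketch below assumes the probe position is taken as (h(x) − s(j)) mod m; the sign convention, the quadratic formula, and the helper names are assumptions made for illustration.

```python
def probe_positions(m, hx, s):
    """Table positions (h(x) - s(j)) mod m for j = 0, ..., m-1."""
    return [(hx - s(j)) % m for j in range(m)]

def visits_whole_table(m, hx, s):
    return sorted(probe_positions(m, hx, s)) == list(range(m))

def linear(j):
    return j

def quadratic(j):
    return (-1) ** j * ((j + 1) // 2) ** 2     # (-1)^j * ceil(j/2)^2

def double_hashing(step):
    """Probing function j * h'(x) for a fixed step size h'(x) = step."""
    return lambda j: j * step

print(visits_whole_table(10, 3, linear))              # True
print(visits_whole_table(7, 3, quadratic))            # True
print(visits_whole_table(5, 3, quadratic))            # False
print(visits_whole_table(7, 3, double_hashing(4)))    # True
print(visits_whole_table(10, 3, double_hashing(4)))   # False
```

The failing cases show why the table size matters: double hashing needs a step size coprime to m, which a prime m guarantees for every nonzero step, while this quadratic scheme covers the table for m = 7 but not for m = 5.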

An implementation of the procedure Lookup for a generic probing function s is provided in Algorithm 3.32. The implementation assumes an additional array Tag that associates one of the values Empty, Occupied, and Deleted with each element. Deletions (see Alg. 3.33) are handled by setting the Deleted tag for the cell of the deleted key. Lookups skip over deleted cells, and insertions (see Alg. 3.34) overwrite them.
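The tag-based scheme can be sketched as below. The class and method names are hypothetical, the probe position is assumed to be (h(x) − s(j, x)) mod m, and linear probing is used as the default probing function; Algorithms 3.32 to 3.34 may differ in detail.

```python
EMPTY, OCCUPIED, DELETED = range(3)

class OpenAddressingTable:
    def __init__(self, m, h, s=lambda j, x: j):
        self.m, self.h, self.s = m, h, s
        self.keys = [None] * m
        self.tag = [EMPTY] * m

    def _probe(self, x):
        for j in range(self.m):
            yield (self.h(x) - self.s(j, x)) % self.m

    def lookup(self, x):
        for i in self._probe(x):
            if self.tag[i] == EMPTY:
                return None                      # end of the probe sequence
            if self.tag[i] == OCCUPIED and self.keys[i] == x:
                return i
            # DELETED cells and other keys are skipped
        return None

    def insert(self, x):
        if self.lookup(x) is not None:
            return False
        for i in self._probe(x):
            if self.tag[i] != OCCUPIED:          # EMPTY or DELETED cell is reused
                self.keys[i], self.tag[i] = x, OCCUPIED
                return True
        raise OverflowError("hash table is full")

    def delete(self, x):
        i = self.lookup(x)
        if i is None:
            return False
        self.tag[i] = DELETED                    # keep the probe sequence intact
        return True

t = OpenAddressingTable(7, lambda x: x % 7)
for key in (3, 10, 17):                          # all three hash to address 3
    t.insert(key)
t.delete(10)
print(t.lookup(17), t.insert(24), t.lookup(24))
```

Marking a cell as Deleted instead of Empty is what allows the lookup of 17 to pass over the former cell of 10.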

When the hash table is nearly full, unsuccessful searches lead to long probe sequences. An optimization is ordered hashing, which maintains all probe sequences sorted. Thus, we can abort a Lookup operation as soon as we reach a larger key in the probe sequence. The corresponding algorithm for inserting a key x is depicted in Algorithm 3.35. It consists of a search phase and an insertion phase. First, the probe sequence is followed up to a table slot that is either empty or contains an element that is larger than x. The insertion phase restores the sorting condition to make the algorithm work properly. If x replaces an element $T[i]$, the latter has to be reinserted into its respective probe sequence, in turn. This leads to a sequence of updates that ends when an empty bin is found. It can be shown that the average number of probes to insert a key into the hash table is the same as in ordinary hashing.
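The search and insertion phases of ordered hashing can be sketched as follows. The example restricts itself to linear probing with step (i − 1) mod m, where a displaced key simply continues from the current cell; the class name and this simplification are assumptions, and Algorithm 3.35 covers general probing functions.

```python
class OrderedHashTable:
    def __init__(self, m, h):
        self.m, self.h = m, h
        self.slots = [None] * m
        self.n = 0

    def lookup(self, x):
        i = self.h(x)
        for _ in range(self.m):
            y = self.slots[i]
            if y is None or y > x:     # empty cell or larger key: x is absent
                return False
            if y == x:
                return True
            i = (i - 1) % self.m
        return False

    def insert(self, x):
        if self.n >= self.m:
            raise OverflowError("hash table is full")
        i = self.h(x)
        while self.slots[i] is not None:
            if self.slots[i] == x:
                return False
            if self.slots[i] > x:
                # x takes the cell; the displaced larger key is reinserted
                self.slots[i], x = x, self.slots[i]
            i = (i - 1) % self.m
        self.slots[i] = x
        self.n += 1
        return True

t = OrderedHashTable(11, lambda x: x % 11)
for key in (14, 3, 25, 13):
    t.insert(key)
print(t.slots[3], t.slots[2], t.slots[1], t.slots[0])   # 3 13 14 25
print(t.lookup(14), t.lookup(2))
```

In the usage example the lookup of 2 stops at the first probed cell, because that cell already holds the larger key 13.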

For a hash table of size m that stores n keys, the quotient  is called the load factor. The load factor crucially determines the efficiency of hash table operations. The analysis assumes uniformness of h; that is,

is called the load factor. The load factor crucially determines the efficiency of hash table operations. The analysis assumes uniformness of h; that is,  for all

for all  and

and  . Under this precondition, the expected number of memory probes for insertion and unsuccessful lookup is

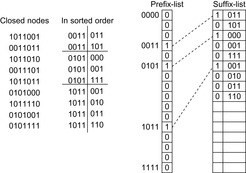

. Under this precondition, the expected number of memory probes for insertion and unsuccessful lookup is Thus, for any