We discussed virtual IP addresses earlier; now it's time to put them to proper use. A virtual IP is not a service in the traditional sense, but it does provide functionality that we need in a highly available configuration. In cases where we also have control over DNS resolution, we can even assign a name to the virtual IP address to insulate applications from future changes.

For now, this recipe will limit itself to outlining the steps required to add a transitory IP address to Pacemaker.

As we're continuing to configure Pacemaker, make sure you've followed all the previous recipes.

We will assume that the 192.168.56.30 IP address exists as a predefined target for our PostgreSQL cluster. Users and applications will connect to it instead of the actual addresses of pg1 or pg2.

Perform these steps on any Pacemaker node as the root user:

- Add an IP address

primitiveto Pacemaker withcrm:crm configure primitive pg_vip ocf:heartbeat:IPaddr2 params ip="192.168.56.30" iflabel="pgvip" op monitor interval="5"

- Try to view the IP allocation on

pg1andpg2:ifconfig | grep -A3 :pgvip - Clean up any errors that might have accumulated with

crm:crm resource cleanup pg_vip - Display the status of our new IP address with

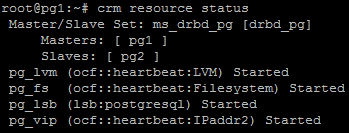

crm:crm resource status

This call to crm with configure primitive allows us to associate an arbitrary IP address with our Pacemaker cluster. Once again, we follow the simple naming scheme and label our resource pg_vip. As we always require a resource agent, we need one that is designed to handle network interfaces. There are actually two that fit this role: IPaddr and IPaddr2. Though we can use either, the IPaddr2 agent is designed specifically for Linux hosts, so we might as well use it for maximum compatibility.
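To compare the two agents before committing to one, crmsh can list and describe resource agents. The following is a minimal sketch, assuming the crm shell is installed on the node; the helper name describe_ip_agents is hypothetical and only wraps the `crm ra list` and `crm ra info` subcommands.

```shell
# Hypothetical helper: show the IP address agents shipped by the
# ocf:heartbeat provider, then print the documented parameters of IPaddr2.
describe_ip_agents() {
    # "crm ra list" prints agent names in columns; put one name per line
    # and keep only those that start with IPaddr.
    crm ra list ocf heartbeat | tr -s ' \t' '\n' | grep '^IPaddr'

    # "crm ra info" prints the agent's description and parameter list.
    crm ra info ocf:heartbeat:IPaddr2
}

# Usage (on a Pacemaker node, as root):
#   describe_ip_agents
```

The parameter list printed by the second command is a convenient place to confirm the spelling of settings such as ip and iflabel before creating the primitive.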

The parameters (params) we supply to this resource agent are the IP address (ip) and a label for network management (iflabel). We set ip to the address that we set aside earlier (192.168.56.30) and chose a descriptive label to associate with the interface (pgvip). Because the address is active on only one node at a time, it's a good idea to check the interface on both machines to see where it is listed. Our test system looks like this:

As our test system has a second interface attached to the 192.168.56.x network (broadcast address 192.168.56.255), pgvip was attached to eth1 instead of the usual eth0. We check both pg1 and pg2 because Pacemaker still starts resources independently, and the new IP address might be on either node. We'll be resolving this soon, so don't worry if the IP address is allocated to the wrong node.
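Checking each node by hand is easy to script. The sketch below assumes password-less SSH from the current host to pg1 and pg2 and that the iproute2 `ip` command is available on them; the function name find_vip_node is hypothetical. It reports the first node whose interface list contains the virtual address.

```shell
# Hypothetical helper: print the name of the first node that currently
# holds the given IP address, or return non-zero if none of them does.
find_vip_node() {
    local vip="$1"
    shift
    local node
    for node in "$@"; do
        # "ip -o -4 addr show" prints one IPv4 address per line in the
        # form "... inet ADDRESS/PREFIX ..."; match the address exactly.
        if ssh "$node" 'ip -o -4 addr show' </dev/null | grep -qF " ${vip}/"; then
            echo "$node"
            return 0
        fi
    done
    return 1
}

# Usage:
#   find_vip_node 192.168.56.30 pg1 pg2
```

This is only a convenience for inspection; it does not influence where Pacemaker places the resource.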

As usual, we run a resource cleanup and then display the resource status of the cluster. No matter where pg_vip is running, we should see output similar to this:

As expected, the pg_vip Pacemaker resource is Started and part of our growing list of resources.
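For scripts that need a yes-or-no answer rather than a status listing, Pacemaker's own crm_resource tool can locate a single resource. This is a rough sketch, assuming crm_resource reports a running resource with the phrase "is running on" (wording that may differ between Pacemaker versions); the function name vip_is_started is hypothetical.

```shell
# Hypothetical helper: succeed if Pacemaker reports the named resource
# as running somewhere in the cluster.
vip_is_started() {
    # ASSUMPTION: a started resource is reported as
    # "resource NAME is running on: NODE".
    crm_resource --resource "$1" --locate 2>/dev/null | grep -q 'is running on'
}

# Usage (on a Pacemaker node, as root):
#   vip_is_started pg_vip && echo "pg_vip is up"
```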