We discussed earlier how to convert a signal into the frequency domain. In most modern speech recognition systems, people use frequency-domain features. After you convert a signal into the frequency domain, you need to convert it into a usable form. Mel Frequency Cepstral Coefficients (MFCC) is a good way to do this. MFCC takes the power spectrum of a signal and then uses a combination of filter banks and discrete cosine transform to extract features. If you need a quick refresher, you can check out http://practicalcryptography.com/miscellaneous/machine-learning/guide-mel-frequency-cepstral-coefficients-mfccs. Make sure that the python_speech_features package is installed before you start. You can find the installation instructions at http://python-speech-features.readthedocs.org/en/latest. Let's take a look at how to extract MFCC features.

- Create a new Python file, and import the following packages:

import numpy as np import matplotlib.pyplot as plt from scipy.io import wavfile from features import mfcc, logfbank

- Read the

input_freq.wavinput file that is already provided to you:# Read input sound file sampling_freq, audio = wavfile.read("input_freq.wav") - Extract the MFCC and filter bank features:

# Extract MFCC and Filter bank features mfcc_features = mfcc(audio, sampling_freq) filterbank_features = logfbank(audio, sampling_freq)

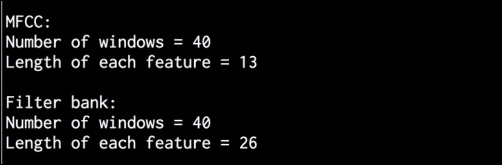

- Print the parameters to see how many windows were generated:

# Print parameters print ' MFCC: Number of windows =', mfcc_features.shape[0] print 'Length of each feature =', mfcc_features.shape[1] print ' Filter bank: Number of windows =', filterbank_features.shape[0] print 'Length of each feature =', filterbank_features.shape[1]

- Let's visualize the MFCC features. We need to transform the matrix so that the time domain is horizontal:

# Plot the features mfcc_features = mfcc_features.T plt.matshow(mfcc_features) plt.title('MFCC') - Let's visualize the filter bank features. Again, we need to transform the matrix so that the time domain is horizontal:

filterbank_features = filterbank_features.T plt.matshow(filterbank_features) plt.title('Filter bank') plt.show() - The full code is in the

extract_freq_features.pyfile. If you run this code, you will get the following figure for MFCC features:

- The filter bank features will look like the following:

- You will get the following output on your Terminal: