7.6 Multiple Eigenvalue Solutions

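In Section 7.3 we saw that if the n×n matrix A has n distinct (real or complex) eigenvalues λ1, λ2, …, λn with respective associated eigenvectors v1, v2, …, vn, then a general solution of the system

$$\mathbf{x}'=\mathbf{A}\mathbf{x} \tag{1}$$

is given by

$$\mathbf{x}(t)=c_1\mathbf{v}_1e^{\lambda_1t}+c_2\mathbf{v}_2e^{\lambda_2t}+\cdots+c_n\mathbf{v}_ne^{\lambda_nt} \tag{2}$$

with arbitrary constants c1, c2, …, cn. In this section we discuss the situation when the characteristic equation

$$|\mathbf{A}-\lambda\mathbf{I}|=0 \tag{3}$$

does not have n distinct roots, and thus has at least one repeated root.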

An eigenvalue is of multiplicity k if it is a k-fold root of Eq. (3). For each eigenvalue λ, the eigenvector equation

$$(\mathbf{A}-\lambda\mathbf{I})\mathbf{v}=\mathbf{0} \tag{4}$$

has at least one nonzero solution v, so there is at least one eigenvector associated with λ. But an eigenvalue of multiplicity k>1 may have fewer than k linearly independent associated eigenvectors. In this case we are unable to find a “complete set” of n linearly independent eigenvectors of A, as needed to form the general solution in (2).

Let us call an eigenvalue of multiplicity k complete if it has k linearly independent associated eigenvectors. If every eigenvalue of the matrix A is complete, then—because eigenvectors associated with different eigenvalues are linearly independent—it follows that A does have a complete set of n linearly independent eigenvectors v1, v2, …, vn associated with the eigenvalues λ1, λ2, …, λn (each repeated with its multiplicity). In this case a general solution of x′=Ax is still given by the usual combination in (2).

Example 1

Find a general solution of the system

Solution

The characteristic equation of the coefficient matrix A in Eq. (5) is

Thus A has the distinct eigenvalue λ1=5 and the repeated eigenvalue λ2=3 of multiplicity k=2.

Case 1: λ1=5. The eigenvector equation (A−λI)v=0, where $\mathbf{v}=[a\ \ b\ \ c]^T$, is

Each of the first two equations, 4a+4b=0 and −6a−6b=0, yields b=−a. Then the third equation reduces to 2a−2c=0, so that c=a. The choice a=1 then yields the eigenvector

associated with the eigenvalue λ1=5.

Case 2: λ2=3. Now the eigenvector equation is

so the nonzero vector $\mathbf{v}=[a\ \ b\ \ c]^T$ is an eigenvector if and only if

$$6a+4b=0; \tag{6}$$

that is, $b=-\tfrac{3}{2}a$. The fact that Eq. (6) does not involve c means that c is arbitrary, subject to the condition v≠0. If c=1, then we may choose a=b=0; this gives the eigenvector

associated with λ2=3. If c=0, then we must choose a to be nonzero. For instance, if a=2 (to avoid fractions), then b=−3, so

is a second linearly independent eigenvector associated with the multiplicity 2 eigenvalue λ2=3.

Thus we have found a complete set v1, v2, v3 of three eigenvectors associated with the eigenvalues 5, 3, 3. The corresponding general solution of Eq. (5) is

with scalar component functions given by

Remark

Our choice in Example 1 of the two eigenvectors

associated with the repeated eigenvalue λ2=3 bears comment. The fact that $b=-\tfrac{3}{2}a$ for any eigenvector associated with λ2=3 means that any such eigenvector can be written as

and thus is a linear combination of v2 and v3. Therefore, given a and c not both zero, we could choose v rather than v3 as our third eigenvector, and the new general solution

would be equivalent to the one in Eq. (7). Thus we need not worry about making the “right” choice of independent eigenvectors associated with a multiple eigenvalue. Any choice will do; we generally make the simplest one we can.
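The eigenvector claims of Example 1 and of the Remark can be checked with a few lines of exact integer arithmetic. The sketch below is only an illustration: the coefficient matrix is not displayed in this text, so the matrix `A` is an assumed reconstruction from the scalar equations quoted in the solution (4a+4b=0, −6a−6b=0, and 2a−2c=0 when b=−a), and the helper names `matvec` and `is_eigenpair` are ours.

```python
# Coefficient matrix of Example 1, reconstructed (an assumption) from the
# scalar equations quoted in the solution.
A = [[9, 4, 0],
     [-6, -1, 0],
     [6, 4, 3]]

def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def is_eigenpair(M, lam, v):
    """True when v is nonzero and M v equals lam * v."""
    return any(v) and matvec(M, v) == [lam * x for x in v]

v1, v2, v3 = [1, -1, 1], [0, 0, 1], [2, -3, 0]
print(is_eigenpair(A, 5, v1), is_eigenpair(A, 3, v2), is_eigenpair(A, 3, v3))

# The Remark: v = (a/2) v3 + c v2 is again an eigenvector for lambda = 3
# whenever a and c are not both zero (a is taken even to stay in integers).
a, c = 4, 7
v = [a // 2 * x3 + c * x2 for x2, x3 in zip(v2, v3)]
print(v, is_eigenpair(A, 3, v))
```

Any other nonzero choice of a and c can be substituted in the last lines; the check succeeds because every such v lies in the two-dimensional eigenspace spanned by v2 and v3.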

Defective Eigenvalues

The following example shows that—unfortunately—not all multiple eigenvalues are complete.

Example 2

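The matrix

$$\mathbf{A}=\begin{bmatrix}1&-3\\3&7\end{bmatrix} \tag{8}$$

has characteristic equation

$$(1-\lambda)(7-\lambda)+9=\lambda^2-8\lambda+16=(\lambda-4)^2=0.$$

Thus A has the single eigenvalue λ1=4 of multiplicity 2. The eigenvector equation

$$(\mathbf{A}-4\mathbf{I})\mathbf{v}=\begin{bmatrix}-3&-3\\3&3\end{bmatrix}\begin{bmatrix}a\\b\end{bmatrix}=\begin{bmatrix}0\\0\end{bmatrix}$$

then amounts to the equivalent scalar equations

$$-3a-3b=0,\qquad 3a+3b=0.$$

Hence b=−a if $\mathbf{v}=[a\ \ b]^T$ is to be an eigenvector of A. Therefore, any eigenvector associated with λ1=4 is a nonzero multiple of $\mathbf{v}=[1\ \ {-1}]^T$. Thus the multiplicity 2 eigenvalue λ1=4 has only one independent eigenvector, and hence is incomplete.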

An eigenvalue λ of multiplicity k>1 is called defective if it is not complete. If λ has only p<k linearly independent eigenvectors, then the number

$$d=k-p \tag{9}$$

of “missing” eigenvectors is called the defect of the defective eigenvalue λ. Thus the defective eigenvalue λ1=4 in Example 2 has multiplicity k=2 and defect d=1, because we saw that it has only p=1 associated eigenvector.
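Since the number p of independent eigenvectors is the dimension of the solution space of (A−λI)v=0, it equals n minus the rank of A−λI, and the defect follows at once. The following sketch computes it with exact rational arithmetic for the matrix of Example 2; the function names `rank` and `defect` are ours, and the eigenvalue and its multiplicity are supplied by hand rather than computed.

```python
from fractions import Fraction

def rank(M):
    """Rank of a matrix (list of rows) via exact Gaussian elimination."""
    rows = [[Fraction(x) for x in row] for row in M]
    r = 0
    for col in range(len(rows[0])):
        pivot = next((i for i in range(r, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(len(rows)):
            if i != r and rows[i][col] != 0:
                f = rows[i][col] / rows[r][col]
                rows[i] = [a - f * b for a, b in zip(rows[i], rows[r])]
        r += 1
    return r

def defect(A, lam, multiplicity):
    """Defect d = k - p, where p = n - rank(A - lam*I)."""
    n = len(A)
    shifted = [[A[i][j] - (lam if i == j else 0) for j in range(n)]
               for i in range(n)]
    p = n - rank(shifted)          # number of independent eigenvectors
    return multiplicity - p

A = [[1, -3], [3, 7]]              # matrix of Example 2
print(defect(A, 4, 2))
```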

If the eigenvalues of the n×n matrix A are not all complete, then the eigenvalue method as yet described will produce fewer than the needed n linearly independent solutions of the system x′=Ax. We therefore need to discover how to find the “missing solutions” corresponding to a defective eigenvalue λ of multiplicity k>1.

The Case of Multiplicity k=2

Let us begin with the case k=2, and suppose that we have found (as in Example 2) that there is only a single eigenvector v1 associated with the defective eigenvalue λ. Then at this point we have found only the single solution

$$\mathbf{x}_1(t)=\mathbf{v}_1e^{\lambda t} \tag{10}$$

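of x′=Ax. By analogy with the case of a repeated characteristic root for a single linear differential equation (Section 5.3), we might hope to find a second solution of the form

$$\mathbf{x}_2(t)=\mathbf{v}_2te^{\lambda t}. \tag{11}$$

When we substitute $\mathbf{x}=\mathbf{v}_2te^{\lambda t}$ in x′=Ax, we get the equation

$$\mathbf{v}_2e^{\lambda t}+\lambda\mathbf{v}_2te^{\lambda t}=\mathbf{A}\mathbf{v}_2te^{\lambda t}.$$

But because the coefficients of both $e^{\lambda t}$ and $te^{\lambda t}$ must balance, and the only $e^{\lambda t}$ term is $\mathbf{v}_2e^{\lambda t}$, it follows that v2=0, and hence that x2(t)≡0. This means that, contrary to our hope, the system x′=Ax does not have a nontrivial solution of the form assumed in (11).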

Instead of simply giving up on the idea behind Eq. (11), let us extend it slightly and replace v2t with v1t+v2. Thus we explore the possibility of a second solution of the form

$$\mathbf{x}_2(t)=(\mathbf{v}_1t+\mathbf{v}_2)e^{\lambda t}, \tag{12}$$

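where v1 and v2 are nonzero constant vectors. When we substitute $\mathbf{x}=\mathbf{v}_1te^{\lambda t}+\mathbf{v}_2e^{\lambda t}$ in x′=Ax, we get the equation

$$\mathbf{v}_1e^{\lambda t}+\lambda\mathbf{v}_1te^{\lambda t}+\lambda\mathbf{v}_2e^{\lambda t}=\mathbf{A}\mathbf{v}_1te^{\lambda t}+\mathbf{A}\mathbf{v}_2e^{\lambda t}. \tag{13}$$

We equate coefficients of $e^{\lambda t}$ and $te^{\lambda t}$ here, and thereby obtain the two equations

$$(\mathbf{A}-\lambda\mathbf{I})\mathbf{v}_1=\mathbf{0} \tag{14}$$

and

$$(\mathbf{A}-\lambda\mathbf{I})\mathbf{v}_2=\mathbf{v}_1 \tag{15}$$

that the vectors v1 and v2 must satisfy in order for (12) to give a solution of x′=Ax.

Note that Eq. (14) merely confirms that v1 is an eigenvector of A associated with the eigenvalue λ. Then Eq. (15) says that the vector v2 satisfies the equation

$$(\mathbf{A}-\lambda\mathbf{I})^2\mathbf{v}_2=(\mathbf{A}-\lambda\mathbf{I})\mathbf{v}_1=\mathbf{0}.$$

It follows that, in order to solve simultaneously the two equations in (14) and (15), it suffices to find a solution v2 of the single equation $(\mathbf{A}-\lambda\mathbf{I})^2\mathbf{v}_2=\mathbf{0}$ such that the resulting vector $\mathbf{v}_1=(\mathbf{A}-\lambda\mathbf{I})\mathbf{v}_2$ is nonzero. It turns out that this is always possible if the defective eigenvalue λ of A is of multiplicity 2. Consequently, the procedure described in the following algorithm always succeeds in finding two independent solutions associated with such an eigenvalue.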
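The procedure can be summarized in two steps. The display below is a restatement assembled from the surrounding discussion, not a quotation; the tags (16) through (19) follow the equation numbers that the later text cites, and their exact placement is an assumption.

```latex
\begin{align}
&\text{Step 1. Find a nonzero solution } \mathbf{v}_2 \text{ of}
  & (\mathbf{A}-\lambda\mathbf{I})^2\mathbf{v}_2 &= \mathbf{0} \tag{16}\\
&\text{such that the vector}
  & (\mathbf{A}-\lambda\mathbf{I})\mathbf{v}_2 &= \mathbf{v}_1 \tag{17}\\
&\text{is nonzero, and hence is an eigenvector associated with } \lambda. \notag\\
&\text{Step 2. Form the two independent solutions}
  & \mathbf{x}_1(t) &= \mathbf{v}_1e^{\lambda t} \tag{18}\\
&\text{and}
  & \mathbf{x}_2(t) &= (\mathbf{v}_1t+\mathbf{v}_2)e^{\lambda t}. \tag{19}
\end{align}
```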

Example 3

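Find a general solution of the system

$$\mathbf{x}'=\begin{bmatrix}1&-3\\3&7\end{bmatrix}\mathbf{x}. \tag{20}$$

Solution

In Example 2 we found that the coefficient matrix A in Eq. (20) has the defective eigenvalue λ=4 of multiplicity 2. We therefore begin by calculating

$$(\mathbf{A}-4\mathbf{I})^2=\begin{bmatrix}-3&-3\\3&3\end{bmatrix}\begin{bmatrix}-3&-3\\3&3\end{bmatrix}=\begin{bmatrix}0&0\\0&0\end{bmatrix}.$$

Hence Eq. (16) is

$$\begin{bmatrix}0&0\\0&0\end{bmatrix}\mathbf{v}_2=\mathbf{0},$$

and therefore is satisfied by any choice of v2. In principle, it could happen that (A−4I)v2 is nonzero (as desired) for some choices of v2 though not for others. If we try $\mathbf{v}_2=[1\ \ 0]^T$ we find that

$$(\mathbf{A}-4\mathbf{I})\mathbf{v}_2=\begin{bmatrix}-3&-3\\3&3\end{bmatrix}\begin{bmatrix}1\\0\end{bmatrix}=\begin{bmatrix}-3\\3\end{bmatrix}=\mathbf{v}_1$$

is nonzero, and therefore is an eigenvector associated with λ=4. (It is −3 times the eigenvector found in Example 2.) Therefore, the two solutions of Eq. (20) given by Eqs. (18) and (19) are

$$\mathbf{x}_1(t)=\mathbf{v}_1e^{4t}=\begin{bmatrix}-3\\3\end{bmatrix}e^{4t},\qquad \mathbf{x}_2(t)=(\mathbf{v}_1t+\mathbf{v}_2)e^{4t}=\begin{bmatrix}-3t+1\\3t\end{bmatrix}e^{4t}.$$

The resulting general solution

$$\mathbf{x}(t)=c_1\mathbf{x}_1(t)+c_2\mathbf{x}_2(t)$$

has scalar component functions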

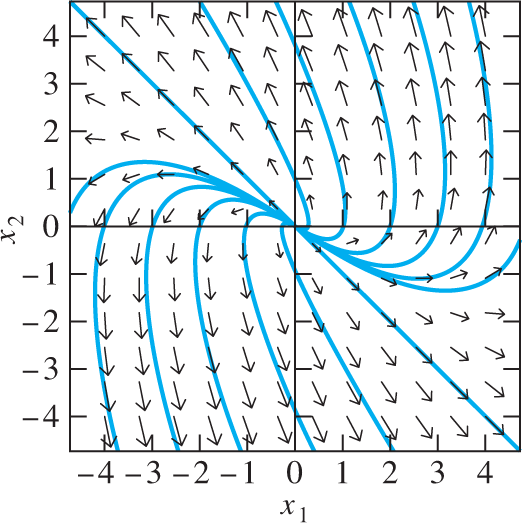

With c2=0 these solution equations reduce to the equations $x_1(t)=-3c_1e^{4t}$, $x_2(t)=3c_1e^{4t}$, which parametrize the line x1=−x2 in the x1x2-plane. The point (x1(t),x2(t)) then recedes along this line away from the origin as t→+∞, to the northwest if c1>0 and to the southeast if c1<0. As indicated in Fig. 7.6.1, each solution curve with c2≠0 is tangent to the line x1=−x2 at the origin; the point (x1(t),x2(t)) approaches the origin as t→−∞ and recedes to infinity along the solution curve as t→+∞.

FIGURE 7.6.1.

Direction field and solution curves for the linear system x′1=x1−3x2, x′2=3x1+7x2 of Example 3.
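As a numerical sanity check on Example 3, the sketch below evaluates the general solution $c_1\mathbf{v}_1e^{4t}+c_2(\mathbf{v}_1t+\mathbf{v}_2)e^{4t}$, whose components work out to $x_1(t)=(-3c_1+c_2-3c_2t)e^{4t}$ and $x_2(t)=(3c_1+3c_2t)e^{4t}$, and compares a central-difference estimate of x′ with Ax. The function names, the sample point, and the step size h are illustrative choices of ours.

```python
import math

A = [[1, -3], [3, 7]]          # system of Example 3
v1, v2 = [-3, 3], [1, 0]       # chain: (A - 4I) v2 = v1, (A - 4I) v1 = 0

def x(t, c1, c2):
    """General solution c1*v1*e^{4t} + c2*(v1*t + v2)*e^{4t}."""
    e = math.exp(4 * t)
    return [(c1 * a + c2 * (a * t + b)) * e for a, b in zip(v1, v2)]

def residual(t, c1, c2, h=1e-6):
    """Largest entry of x'(t) - A x(t), with x' from a central difference."""
    xp = [(p - m) / (2 * h)
          for p, m in zip(x(t + h, c1, c2), x(t - h, c1, c2))]
    xt = x(t, c1, c2)
    Ax = [sum(a * s for a, s in zip(row, xt)) for row in A]
    return max(abs(d - r) for d, r in zip(xp, Ax))

# The residual should be tiny compared with the size of x(t) itself.
print(residual(0.3, 2.0, -1.5))
```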

Generalized Eigenvectors

The vector v2 in Eq. (16) is an example of a generalized eigenvector. If λ is an eigenvalue of the matrix A, then a rank r generalized eigenvector associated with λ is a vector v such that

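$$(\mathbf{A}-\lambda\mathbf{I})^r\mathbf{v}=\mathbf{0}\quad\text{but}\quad(\mathbf{A}-\lambda\mathbf{I})^{r-1}\mathbf{v}\neq\mathbf{0}. \tag{21}$$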

If r=1, then (21) simply means that v is an eigenvector associated with λ (recalling the convention that the 0th power of a square matrix is the identity matrix). Thus a rank 1 generalized eigenvector is an ordinary eigenvector. The vector v2 in (16) is a rank 2 generalized eigenvector (and not an ordinary eigenvector).

The multiplicity 2 method described earlier boils down to finding a pair {v1,v2} of generalized eigenvectors, one of rank 1 and one of rank 2, such that (A−λI)v2=v1. Higher multiplicity methods involve longer “chains” of generalized eigenvectors. A length k chain of generalized eigenvectors based on the eigenvector v1 is a set {v1,v2,…,vk} of k generalized eigenvectors such that

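$$\begin{aligned}(\mathbf{A}-\lambda\mathbf{I})\mathbf{v}_k&=\mathbf{v}_{k-1},\\(\mathbf{A}-\lambda\mathbf{I})\mathbf{v}_{k-1}&=\mathbf{v}_{k-2},\\&\ \ \vdots\\(\mathbf{A}-\lambda\mathbf{I})\mathbf{v}_2&=\mathbf{v}_1.\end{aligned} \tag{22}$$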

Because v1 is an ordinary eigenvector, (A−λI)v1=0. Therefore, it follows from (22) that

$$(\mathbf{A}-\lambda\mathbf{I})^k\mathbf{v}_k=\mathbf{0}. \tag{23}$$

If {v1,v2,v3} is a length 3 chain of generalized eigenvectors associated with the multiple eigenvalue λ of the matrix A, then it is easy to verify that three linearly independent solutions of x′=Ax are given by

For instance, the equations in (22) give

so

Therefore, x3(t) in (24) does, indeed, define a solution of x′=Ax.
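For reference, the verification for the third solution can be written out in full. The display below is a sketch that assumes the three solutions have the standard form $\mathbf{x}_1=\mathbf{v}_1e^{\lambda t}$, $\mathbf{x}_2=(\mathbf{v}_1t+\mathbf{v}_2)e^{\lambda t}$, $\mathbf{x}_3=(\tfrac12\mathbf{v}_1t^2+\mathbf{v}_2t+\mathbf{v}_3)e^{\lambda t}$ and uses only the chain relations $\mathbf{A}\mathbf{v}_1=\lambda\mathbf{v}_1$, $\mathbf{A}\mathbf{v}_2=\mathbf{v}_1+\lambda\mathbf{v}_2$, $\mathbf{A}\mathbf{v}_3=\mathbf{v}_2+\lambda\mathbf{v}_3$.

```latex
\begin{align*}
\mathbf{x}_3'(t)
  &= (\mathbf{v}_1t+\mathbf{v}_2)e^{\lambda t}
     +\lambda\left(\tfrac12\mathbf{v}_1t^2+\mathbf{v}_2t+\mathbf{v}_3\right)e^{\lambda t}\\
  &= \left[\tfrac12\lambda\mathbf{v}_1t^2
     +(\mathbf{v}_1+\lambda\mathbf{v}_2)t
     +(\mathbf{v}_2+\lambda\mathbf{v}_3)\right]e^{\lambda t}\\
  &= \left[\tfrac12(\mathbf{A}\mathbf{v}_1)t^2
     +(\mathbf{A}\mathbf{v}_2)t
     +\mathbf{A}\mathbf{v}_3\right]e^{\lambda t}
   = \mathbf{A}\mathbf{x}_3(t).
\end{align*}
```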

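Consequently, in order to “handle” a multiplicity 3 eigenvalue λ, it suffices to find a length 3 chain {v1,v2,v3} of generalized eigenvectors associated with λ. Looking at Eq. (23), we see that we need only find a solution v3 of

$$(\mathbf{A}-\lambda\mathbf{I})^3\mathbf{v}_3=\mathbf{0}$$

such that the vectors

$$\mathbf{v}_2=(\mathbf{A}-\lambda\mathbf{I})\mathbf{v}_3\quad\text{and}\quad\mathbf{v}_1=(\mathbf{A}-\lambda\mathbf{I})\mathbf{v}_2$$

are both nonzero (although, as we will see, this is not always possible).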

Example 4

Find three linearly independent solutions of the system

Solution

The characteristic equation of the coefficient matrix in Eq. (25) is

and thus A has the eigenvalue λ=−1 of multiplicity 3. The eigenvector equation (A−λI)v=0 for an eigenvector $\mathbf{v}=[a\ \ b\ \ c]^T$ is

The third row a+c=0 gives c=−a; then the first row a+b+2c=0 gives b=a. Thus, to within a constant multiple, the eigenvalue λ=−1 has only the single associated eigenvector $\mathbf{v}=[a\ \ a\ \ {-a}]^T$ with a≠0, and so the defect of λ=−1 is 2.

To apply the method described here for triple eigenvalues, we first calculate

and

Thus any nonzero vector v3 will be a solution of the equation $(\mathbf{A}+\mathbf{I})^3\mathbf{v}_3=\mathbf{0}$. Beginning with $\mathbf{v}_3=[1\ \ 0\ \ 0]^T$, for instance, we calculate

Note that v1 is the previously found eigenvector v with a=−2; this agreement serves as a check of the accuracy of our matrix computations.

Thus we have found a length 3 chain {v1,v2,v3} of generalized eigenvectors associated with the triple eigenvalue λ=−1. Substitution in (24) now yields the linearly independent solutions

of the system x′=Ax.
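The chain construction of Example 4 is easy to mechanize: start from a top vector and apply A−λI repeatedly. The sketch below does this in exact integer arithmetic. The matrix entries are not displayed in this text, so `A` is an assumed reconstruction that is consistent with the rows a+b+2c=0 and a+c=0 quoted above and with the triple eigenvalue λ=−1; the function `build_chain` is a hypothetical helper of ours.

```python
# Assumed coefficient matrix for Example 4 (a reconstruction, see above).
A = [[0, 1, 2],
     [-5, -3, -7],
     [1, 0, 0]]

def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def build_chain(M, lam, top, length):
    """Return ([v1, ..., v_length], (M - lam*I) v1) starting from v_length = top."""
    n = len(M)
    B = [[M[i][j] - (lam if i == j else 0) for j in range(n)] for i in range(n)]
    chain = [top]
    for _ in range(length - 1):
        chain.append(matvec(B, chain[-1]))   # next vector down the chain
    chain.reverse()                          # put the eigenvector v1 first
    return chain, matvec(B, chain[0])

(v1, v2, v3), check = build_chain(A, -1, [1, 0, 0], 3)
print(v1, v2, v3)   # v1 should be the eigenvector with a = -2
print(check)        # the zero vector if v1 is an ordinary eigenvector
```

The chain is valid only if v1 is nonzero and `check` is the zero vector; for a different top vector either condition may fail, which is why the choice of v3 matters in general.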

The General Case

A fundamental theorem of linear algebra states that every n×n matrix A has n linearly independent generalized eigenvectors. These n generalized eigenvectors may be arranged in chains, with the sum of the lengths of the chains associated with a given eigenvalue λ equal to the multiplicity of λ. But the structure of these chains depends on the defect of λ, and can be quite complicated. For instance, a multiplicity 4 eigenvalue can correspond to

Four length 1 chains (defect 0);

Two length 1 chains and a length 2 chain (defect 1);

Two length 2 chains (defect 2);

A length 1 chain and a length 3 chain (defect 2); or

A length 4 chain (defect 3).

Note that, in each of these cases, the length of the longest chain is at most d+1, where d is the defect of the eigenvalue. Consequently, once we have found all the ordinary eigenvectors associated with a multiple eigenvalue λ, and therefore know the defect d of λ, we can begin with the equation

$$(\mathbf{A}-\lambda\mathbf{I})^{d+1}\mathbf{u}=\mathbf{0} \tag{26}$$

to start building the chains of generalized eigenvectors associated with λ.
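The five cases listed above are exactly the ways of writing 4 as a sum of positive chain lengths. As a small sketch (the generator name `partitions` is ours), the following enumerates the possible chain structures for an eigenvalue of multiplicity k together with the defect d = k − (number of chains), using the fact that each chain contributes exactly one ordinary eigenvector.

```python
def partitions(k, largest=None):
    """Yield the partitions of k as non-increasing lists of chain lengths."""
    largest = k if largest is None else largest
    if k == 0:
        yield []
        return
    for first in range(min(k, largest), 0, -1):
        for rest in partitions(k - first, first):
            yield [first] + rest

k = 4
structures = list(partitions(k))
for chains in structures:
    d = k - len(chains)            # one ordinary eigenvector per chain
    print(chains, "defect", d, "longest chain", max(chains))
```

In every line of the output the longest chain has length at most d+1, in agreement with the observation that follows the list.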

Each length k chain {v1,v2,…,vk} of generalized eigenvectors (with v1 an ordinary eigenvector associated with λ) determines a set of k independent solutions of x′=Ax corresponding to the eigenvalue λ:

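$$\begin{aligned}\mathbf{x}_1(t)&=\mathbf{v}_1e^{\lambda t},\\\mathbf{x}_2(t)&=(\mathbf{v}_1t+\mathbf{v}_2)e^{\lambda t},\\\mathbf{x}_3(t)&=\left(\tfrac12\mathbf{v}_1t^2+\mathbf{v}_2t+\mathbf{v}_3\right)e^{\lambda t},\\&\ \ \vdots\\\mathbf{x}_k(t)&=\left(\frac{\mathbf{v}_1t^{k-1}}{(k-1)!}+\cdots+\frac{\mathbf{v}_{k-2}t^2}{2!}+\mathbf{v}_{k-1}t+\mathbf{v}_k\right)e^{\lambda t}.\end{aligned} \tag{27}$$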

Note that (27) reduces to Eqs. (18) through (19) and (24) in the cases k=2 and k=3, respectively.

To ensure that we obtain n generalized eigenvectors of the n×n matrix A that are actually linearly independent, and therefore produce a complete set of n linearly independent solutions of x′=Ax when we amalgamate all the “chains of solutions” corresponding to different chains of generalized eigenvectors, we may rely on the following two facts:

Any chain of generalized eigenvectors constitutes a linearly independent set of vectors.

If two chains of generalized eigenvectors are based on linearly independent eigenvectors, then the union of these two chains is a linearly independent set of vectors (whether the two base eigenvectors are associated with different eigenvalues or with the same eigenvalue).

Example 5

Suppose that the 6×6 matrix A has two multiplicity 3 eigenvalues λ1=−2 and λ2=3 with defects 1 and 2, respectively. Then λ1 must have an associated eigenvector u1 and a length 2 chain {v1,v2} of generalized eigenvectors (with the eigenvectors u1 and v1 being linearly independent), whereas λ2 must have a length 3 chain {w1,w2,w3} of generalized eigenvectors based on its single eigenvector w1. The six generalized eigenvectors u1, v1, v2, w1, w2, and w3 are then linearly independent and yield the following six independent solutions of x′=Ax:

As Example 5 illustrates, the computation of independent solutions corresponding to different eigenvalues and chains of generalized eigenvectors is a routine matter. The determination of the chain structure associated with a given multiple eigenvalue can be more interesting (as in Example 6).

An Application

Figure 7.6.2 shows two railway cars that are connected with a spring (permanently attached to both cars) and with a damper that exerts opposite forces on the two cars, of magnitude c(x′1−x′2) proportional to their relative velocity. The two cars are also subject to frictional resistance forces c1x′1 and c2x′2 proportional to their respective velocities. An application of Newton’s law ma=F (as in Example 1 of Section 7.1) yields the equations of motion

FIGURE 7.6.2.

The railway cars of Example 6.

In terms of the position vector $\mathbf{x}(t)=[x_1(t)\ \ x_2(t)]^T$, these equations can be written in the matrix form

where M and K are mass and stiffness matrices (as in Eqs. (2) and (3) of Section 7.4), and

is the resistance matrix. Unfortunately, because of the presence of the term involving x′, the methods of Section 7.4 cannot be used.

Instead, we write (28) as a first-order system in the four unknown functions x1(t), x2(t), x3(t)=x′1(t), and x4(t)=x′2(t). If m1=m2=1 we get

where now $\mathbf{x}=[x_1\ \ x_2\ \ x_3\ \ x_4]^T$ and

Example 6

Two railway cars. With m1=m2=c=1 and k=c1=c2=2, the system in Eq. (30) is

It is not too tedious to calculate manually—although a computer algebra system such as Maple, Mathematica, or Matlab is useful here—the characteristic equation

of the coefficient matrix A in Eq. (32). Thus A has the distinct eigenvalue λ0=0 and the triple eigenvalue λ1=−2.
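The coefficient matrix of the first-order system can be assembled directly from the physical parameters. The sketch below assumes unit masses and the usual Newton's-law form $x_1''=k(x_2-x_1)-c_1x_1'-c(x_1'-x_2')$, $x_2''=k(x_1-x_2)-c_2x_2'-c(x_2'-x_1')$; these equations of motion are not displayed in this text, so both the sign conventions and the function name `railway_matrix` are assumptions.

```python
def railway_matrix(k, c, c1, c2):
    """4x4 matrix for x = [x1, x2, x1', x2'] with unit masses (assumed model)."""
    return [
        [0, 0, 1, 0],
        [0, 0, 0, 1],
        [-k, k, -(c + c1), c],
        [k, -k, c, -(c + c2)],
    ]

def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

A = railway_matrix(k=2, c=1, c1=2, c2=2)   # parameters of Example 6
for row in A:
    print(row)

# lambda = 0: both cars at rest at the same displacement.
print(matvec(A, [1, 1, 0, 0]))
# lambda = -2: A u = -2 u for the two eigenvectors found in Case 2.
print(matvec(A, [1, 0, -2, 0]), matvec(A, [0, 1, 0, -2]))
```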

Case 1: λ0=0. The eigenvector equation (A−λI)v=0 for the eigenvector $\mathbf{v}=[a\ \ b\ \ c\ \ d]^T$ is

The first two rows give c=d=0; then the last two rows yield a=b. Thus

is an eigenvector associated with λ0=0.

Case 2: λ1=−2. The eigenvector equation (A−λI)v=0 is

The third and fourth scalar equations here are the differences of the first and second equations, and therefore are redundant. Hence v is determined by the first two equations,

We can choose a and b independently, then solve for c and d. Thereby we obtain two eigenvectors associated with the triple eigenvalue λ1=−2. The choice a=1, b=0 yields c=−2, d=0 and thereby the eigenvector

The choice a=0, b=1 yields c=0, d=−2 and thereby the eigenvector

Because λ1=−2 has defect 1, we need a generalized eigenvector of rank 2, and hence a nonzero solution v2 of the equation

Obviously,

is such a vector, and we find that

is nonzero, and therefore is an eigenvector associated with λ1=−2. Then {v1,v2} is the length 2 chain we need.

The eigenvector v1 just found is neither of the two eigenvectors u1 and u2 found previously, but we observe that v1=u1−u2. For a length 1 chain w1 to complete the picture, we can choose any linear combination of u1 and u2 that is independent of v1. For instance, we could choose either w1=u1 or w1=u2. However, we will see momentarily that the particular choice

yields a solution of the system that is of physical interest.

Finally, the chains {v0}, {w1}, and {v1,v2} yield the four independent solutions

of the system x′=Ax in (32).
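The length 2 chain used here can be confirmed by direct multiplication. In the sketch below the matrix is the one obtained from the stated parameters of Example 6, and the vectors are those named in the text (u1 and u2 from Case 2, and the generalized eigenvector [0 0 1 −1]ᵀ that reappears in Example 8); the helper `shift_apply` is ours.

```python
A = [[0, 0, 1, 0],
     [0, 0, 0, 1],
     [-2, 2, -3, 1],
     [2, -2, 1, -3]]

def shift_apply(M, lam, v):
    """Return (M - lam*I) v."""
    return [sum(m * x for m, x in zip(row, v)) - lam * v[i]
            for i, row in enumerate(M)]

u1, u2 = [1, 0, -2, 0], [0, 1, 0, -2]    # eigenvectors found in Case 2
v2 = [0, 0, 1, -1]                       # rank 2 generalized eigenvector
v1 = shift_apply(A, -2, v2)              # (A + 2I) v2

print(v1)                                # the base eigenvector of the chain
print(shift_apply(A, -2, v1))            # zero vector: v1 is an eigenvector
print(v1 == [a - b for a, b in zip(u1, u2)])   # v1 = u1 - u2
```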

The four scalar components of the general solution

are described by the equations

Recall that x1(t) and x2(t) are the position functions of the two masses, whereas x3(t)=x′1(t) and x4(t)=x′2(t) are their respective velocity functions.

For instance, suppose that x1(0)=x2(0)=0 and that x′1(0)=x′2(0)=v0. Then the equations

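are readily solved for $c_1=\tfrac12v_0$, $c_2=-\tfrac12v_0$, and c3=c4=0, so

$$x_1(t)=x_2(t)=\tfrac12v_0\left(1-e^{-2t}\right),\qquad x_3(t)=x_4(t)=v_0e^{-2t}.$$

In this case the two railway cars continue in the same direction with equal but exponentially damped velocities, approaching the displacements $x_1=x_2=\tfrac12v_0$ as t→+∞.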

It is of interest to interpret physically the individual generalized eigenvector solutions given in (33). The degenerate (λ0=0) solution

describes the two masses at rest with position functions x1(t)≡1 and x2(t)≡1. The solution

corresponding to the carefully chosen eigenvector w1 describes damped motions $x_1(t)=e^{-2t}$ and $x_2(t)=e^{-2t}$ of the two masses, with equal velocities in the same direction. Finally, the solutions x3(t) and x4(t) resulting from the length 2 chain {v1,v2} both describe damped motion with the two masses moving in opposite directions.

The methods of this section apply to complex multiple eigenvalues just as to real multiple eigenvalues (although the necessary computations tend to be somewhat lengthy). Given a complex conjugate pair α±βi of eigenvalues of multiplicity k, we work with one of them (say, α−βi) as if it were real to find k independent complex-valued solutions. The real and imaginary parts of these complex-valued solutions then provide 2k real-valued solutions associated with the two eigenvalues $\lambda=\alpha-\beta i$ and $\overline{\lambda}=\alpha+\beta i$, each of multiplicity k. See Problems 33 and 34.

The Jordan Normal Form

In Section 6.2 we saw that if the n×n matrix A has a complete set v1,v2,…,vn of n linearly independent eigenvectors, then it is similar to a diagonal matrix. In particular,

where P=[v1v2⋯vn] and the diagonal elements of the diagonal matrix D are the corresponding eigenvalues λ1,λ2,…,λn (not necessarily distinct). This result is a special case of the following general theorem, a proof of which can be found in Appendix B of Gilbert Strang, Linear Algebra and Its Applications (4th edition, Brooks Cole, 2006).

If the Jordan block Ji in (37) is of size k×k, then it corresponds to a length k chain of generalized eigenvectors based on the (ordinary) eigenvector vi. If all these generalized eigenvectors are arranged as column vectors in proper order corresponding to the appearance of the Jordan blocks in (36), the result is a nonsingular n×n matrix Q such that

The block-diagonal matrix J such that A=QJQ−1 is called the Jordan normal form of the matrix A and is unique [except for the order of appearance of the Jordan blocks in (36)].

Example 7

In Example 3 we saw that the matrix

has the single eigenvalue λ=4 and the associated length 2 chain of generalized eigenvectors {v1,v2}, where $\mathbf{v}_1=[-3\ \ 3]^T$ and $\mathbf{v}_2=[1\ \ 0]^T$, based on the eigenvector $\mathbf{v}_1=(\mathbf{A}-4\mathbf{I})\mathbf{v}_2$. If we define

then we find that the Jordan normal form of A is

Here J=J1 is a single 2×2 Jordan block corresponding to the single eigenvalue λ=4 of A.

Example 8

In Example 6 we saw that the matrix

has the distinct eigenvalue λ1=0 corresponding to the eigenvector

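and the triple eigenvalue λ2=−2 corresponding both to the eigenvector $\mathbf{v}_2=[1\ \ 0\ \ {-2}\ \ 0]^T$ and to the length 2 chain of generalized eigenvectors {v3,v4} with $\mathbf{v}_3=[1\ \ {-1}\ \ {-2}\ \ 2]^T$ and $\mathbf{v}_4=[0\ \ 0\ \ 1\ \ {-1}]^T$, such that $\mathbf{v}_3=(\mathbf{A}+2\mathbf{I})\mathbf{v}_4$. If we define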

then (using a computer algebra system to ease the labor of calculation) we find that the Jordan normal form of A is

Here we see the 1×1 Jordan block $\mathbf{J}_1=[0]$ corresponding to the eigenvalue λ1=0 of A, as well as the two Jordan blocks $\mathbf{J}_2=[-2]$ and $\mathbf{J}_3=\begin{bmatrix}-2&1\\0&-2\end{bmatrix}$ corresponding to the two linearly independent eigenvectors v2 and v3 associated with the eigenvalue λ2=−2.
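The relation $\mathbf{J}=\mathbf{Q}^{-1}\mathbf{A}\mathbf{Q}$ is equivalent to $\mathbf{A}\mathbf{Q}=\mathbf{Q}\mathbf{J}$, which can be checked without inverting anything. The sketch below does so for Examples 7 and 8, taking the columns of Q to be the chain vectors in the order stated in the text; the matrices A are those of Examples 3 and 6, and the helper names are ours.

```python
def matmul(A, B):
    """Matrix product of two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)]
            for row in A]

def from_columns(cols):
    """Build a matrix (list of rows) from a list of column vectors."""
    return [list(row) for row in zip(*cols)]

# Example 7: single 2x2 Jordan block for lambda = 4.
A7 = [[1, -3], [3, 7]]
Q7 = from_columns([[-3, 3], [1, 0]])            # columns v1, v2
J7 = [[4, 1], [0, 4]]
print(matmul(A7, Q7) == matmul(Q7, J7))

# Example 8: blocks [0], [-2], and a 2x2 block for lambda = -2.
A8 = [[0, 0, 1, 0], [0, 0, 0, 1], [-2, 2, -3, 1], [2, -2, 1, -3]]
Q8 = from_columns([[1, 1, 0, 0], [1, 0, -2, 0],
                   [1, -1, -2, 2], [0, 0, 1, -1]])   # columns v1, v2, v3, v4
J8 = [[0, 0, 0, 0], [0, -2, 0, 0], [0, 0, -2, 1], [0, 0, 0, -2]]
print(matmul(A8, Q8) == matmul(Q8, J8))
```

Checking AQ=QJ column by column is the same as checking the chain relations: each column of Q that starts a block satisfies Av=λv, and each later column of a block satisfies Av=λv plus the preceding column.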

The General Cayley-Hamilton Theorem

In Section 6.3, we showed that every diagonalizable matrix A satisfies its characteristic equation p(λ)=|A−λI|=0, that is, p(A)=0. We can now use the Jordan normal form to show that this is true whether or not A is diagonalizable.

If $\mathbf{J}=\mathbf{Q}^{-1}\mathbf{A}\mathbf{Q}$ is the Jordan normal form of the matrix A, then $p(\mathbf{A})=\mathbf{Q}\,p(\mathbf{J})\,\mathbf{Q}^{-1}$, just as in the conclusion to the proof of Theorem 1 in Section 6.3. It therefore suffices to show that p(J)=0. If the Jordan blocks J1,J2,…,Js in (36) have sizes k1,k2,…,ks (that is, Ji is a ki×ki matrix) and corresponding eigenvalues λ1,λ2,…,λs (respectively), then

and so

Now p(J) has the same block-diagonal structure as J itself, and we see from (39) that the ith block of p(J) involves the factor

where the ki×ki matrix λiI−Ji has all elements zero except on its first superdiagonal. It is easily seen that $(\lambda_i\mathbf{I}-\mathbf{J}_i)^2$ then has nonzero elements only on its second superdiagonal; $(\lambda_i\mathbf{I}-\mathbf{J}_i)^3$ has nonzero elements only on its third superdiagonal; and so on, in turn, until we see that $(\lambda_i\mathbf{I}-\mathbf{J}_i)^{k_i}=\mathbf{0}$. Hence it follows from (39) that the ith block of p(J) is the ki×ki zero matrix. This being true for each i=1,2,…,s, we conclude that p(J)=0, as desired. This completes the proof of the general case of the Cayley-Hamilton theorem.
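The superdiagonal pattern used in this argument is easy to watch in a small case. The sketch below forms λI−J for a single k×k Jordan block (entries −1 on the first superdiagonal, zero elsewhere) and records which diagonals carry nonzero entries in each power; the block size k=4 and the helper names are illustrative choices of ours.

```python
def matmul(A, B):
    """Product of two square matrices given as lists of rows."""
    n = len(A)
    return [[sum(A[i][m] * B[m][j] for m in range(n)) for j in range(n)]
            for i in range(n)]

def shifted_block(k):
    """lambda*I - J for a k-by-k Jordan block J: -1 on the first superdiagonal."""
    return [[-1 if j == i + 1 else 0 for j in range(k)] for i in range(k)]

def nonzero_diagonals(M):
    """Set of offsets j - i at which M has a nonzero entry."""
    return {j - i for i, row in enumerate(M) for j, x in enumerate(row) if x}

k = 4
N = shifted_block(k)
P = N
for power in range(1, k + 1):
    # Offset m means the m-th superdiagonal; an empty list means P is zero.
    print(power, sorted(nonzero_diagonals(P)))
    P = matmul(P, N)
```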

7.6 Problems

Find general solutions of the systems in Problems 1 through 22. In Problems 1 through 6, use a computer system or graphing calculator to construct a direction field and typical solution curves for the given system.

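1. $\mathbf{x}'=\begin{bmatrix}-2&1\\-1&-4\end{bmatrix}\mathbf{x}$

2. $\mathbf{x}'=\begin{bmatrix}3&-1\\1&1\end{bmatrix}\mathbf{x}$

3. $\mathbf{x}'=\begin{bmatrix}1&-2\\2&5\end{bmatrix}\mathbf{x}$

4. $\mathbf{x}'=\begin{bmatrix}3&-1\\1&5\end{bmatrix}\mathbf{x}$

5. $\mathbf{x}'=\begin{bmatrix}7&1\\-4&3\end{bmatrix}\mathbf{x}$

6. $\mathbf{x}'=\begin{bmatrix}1&-4\\4&9\end{bmatrix}\mathbf{x}$

7. $\mathbf{x}'=\begin{bmatrix}2&0&0\\-7&9&7\\0&0&2\end{bmatrix}\mathbf{x}$

8. $\mathbf{x}'=\begin{bmatrix}25&12&0\\-18&-5&0\\6&6&13\end{bmatrix}\mathbf{x}$

9. $\mathbf{x}'=\begin{bmatrix}-19&12&84\\0&5&0\\-8&4&33\end{bmatrix}\mathbf{x}$

10. $\mathbf{x}'=\begin{bmatrix}-13&40&-48\\-8&23&-24\\0&0&3\end{bmatrix}\mathbf{x}$

11. $\mathbf{x}'=\begin{bmatrix}-3&0&-4\\-1&-1&-1\\1&0&1\end{bmatrix}\mathbf{x}$

12. $\mathbf{x}'=\begin{bmatrix}-1&0&1\\0&-1&1\\1&-1&-1\end{bmatrix}\mathbf{x}$

13. $\mathbf{x}'=\begin{bmatrix}-1&0&1\\0&1&-4\\0&1&-3\end{bmatrix}\mathbf{x}$

14. $\mathbf{x}'=\begin{bmatrix}0&0&1\\-5&-1&-5\\4&1&-2\end{bmatrix}\mathbf{x}$

15. $\mathbf{x}'=\begin{bmatrix}-2&-9&0\\1&4&0\\1&3&1\end{bmatrix}\mathbf{x}$

16. $\mathbf{x}'=\begin{bmatrix}1&0&0\\-2&-2&-3\\2&3&4\end{bmatrix}\mathbf{x}$

17. $\mathbf{x}'=\begin{bmatrix}1&0&0\\18&7&4\\-27&-9&-5\end{bmatrix}\mathbf{x}$

18. $\mathbf{x}'=\begin{bmatrix}1&0&0\\1&3&1\\-2&-4&-1\end{bmatrix}\mathbf{x}$

19. $\mathbf{x}'=\begin{bmatrix}1&-4&0&-2\\0&1&0&0\\6&-12&-1&-6\\0&-4&0&-1\end{bmatrix}\mathbf{x}$

20. $\mathbf{x}'=\begin{bmatrix}2&1&0&1\\0&2&1&0\\0&0&2&1\\0&0&0&2\end{bmatrix}\mathbf{x}$

21. $\mathbf{x}'=\begin{bmatrix}-1&-4&0&0\\1&3&0&0\\1&2&1&0\\0&1&0&1\end{bmatrix}\mathbf{x}$

22. $\mathbf{x}'=\begin{bmatrix}1&3&7&0\\0&-1&-4&0\\0&1&3&0\\0&-6&-14&1\end{bmatrix}\mathbf{x}$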

In Problems 23 through 32 the eigenvalues of the coefficient matrix A are given. Find a general solution of the indicated system x′=Ax. Especially in Problems 29 through 32, use of a computer algebra system (as in the application material for this section) may be useful.

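23. $\mathbf{x}'=\begin{bmatrix}39&8&-16\\-36&-5&16\\72&16&-29\end{bmatrix}\mathbf{x}$; λ=−1, 3, 3

24. $\mathbf{x}'=\begin{bmatrix}28&50&100\\15&33&60\\-15&-30&-57\end{bmatrix}\mathbf{x}$; λ=−2, 3, 3

25. $\mathbf{x}'=\begin{bmatrix}-2&17&4\\-1&6&1\\0&1&2\end{bmatrix}\mathbf{x}$; λ=2, 2, 2

26. $\mathbf{x}'=\begin{bmatrix}5&-1&1\\1&3&0\\-3&2&1\end{bmatrix}\mathbf{x}$; λ=3, 3, 3

27. $\mathbf{x}'=\begin{bmatrix}-3&5&-5\\3&-1&3\\8&-8&10\end{bmatrix}\mathbf{x}$; λ=2, 2, 2

28. $\mathbf{x}'=\begin{bmatrix}-15&-7&4\\34&16&-11\\17&7&5\end{bmatrix}\mathbf{x}$; λ=2, 2, 2

29. $\mathbf{x}'=\begin{bmatrix}-1&1&1&-2\\7&-4&-6&11\\5&-1&1&3\\6&-2&-2&6\end{bmatrix}\mathbf{x}$; λ=−1, −1, 2, 2

30. $\mathbf{x}'=\begin{bmatrix}2&1&-2&1\\0&3&-5&3\\0&-13&22&-12\\0&-27&45&-25\end{bmatrix}\mathbf{x}$; λ=−1, −1, 2, 2

31. $\mathbf{x}'=\begin{bmatrix}35&-12&4&30\\22&-8&3&19\\-10&3&0&-9\\-27&9&-3&-23\end{bmatrix}\mathbf{x}$; λ=1, 1, 1, 1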

32. $\mathbf{x}'=\begin{bmatrix}11&-1&26&6&-3\\0&3&0&0&0\\-9&3&-24&-6&3\\3&0&9&5&-1\\-48&-3&-138&-30&18\end{bmatrix}\mathbf{x}$; λ=2, 2, 3, 3, 3

33. The characteristic equation of the coefficient matrix A of the system

$$\mathbf{x}'=\begin{bmatrix}3&-4&1&0\\4&3&0&1\\0&0&3&-4\\0&0&4&3\end{bmatrix}\mathbf{x}$$

is

$$\phi(\lambda)=(\lambda^2-6\lambda+25)^2=0.$$

Therefore, A has the repeated complex conjugate pair 3±4i of eigenvalues. First show that the complex vectors

$$\mathbf{v}_1=[1\ \ i\ \ 0\ \ 0]^T\quad\text{and}\quad\mathbf{v}_2=[0\ \ 0\ \ 1\ \ i]^T$$

form a length 2 chain {v1,v2} associated with the eigenvalue λ=3−4i. Then calculate the real and imaginary parts of the complex-valued solutions

$$\mathbf{v}_1e^{\lambda t}\quad\text{and}\quad(\mathbf{v}_1t+\mathbf{v}_2)e^{\lambda t}$$

to find four independent real-valued solutions of x′=Ax.

34. The characteristic equation of the coefficient matrix A of the system

$$\mathbf{x}'=\begin{bmatrix}2&0&-8&-3\\-18&-1&0&0\\-9&-3&-25&-9\\33&10&90&32\end{bmatrix}\mathbf{x}$$

is

$$\phi(\lambda)=(\lambda^2-4\lambda+13)^2=0.$$

Therefore, A has the repeated complex conjugate pair 2±3i of eigenvalues. First show that the complex vectors

$$\mathbf{v}_1=[-i\ \ \ 3+3i\ \ \ 0\ \ \ {-1}]^T,\qquad\mathbf{v}_2=[3\ \ \ {-10+9i}\ \ \ {-i}\ \ \ 0]^T$$

form a length 2 chain {v1,v2} associated with the eigenvalue λ=2+3i. Then calculate (as in Problem 33) four independent real-valued solutions of x′=Ax.

35. Railway cars. Find the position functions x1(t) and x2(t) of the railway cars of Fig. 7.6.2 if the physical parameters are given by

$$m_1=m_2=c_1=c_2=c=k=1$$

and the initial conditions are

$$x_1(0)=x_2(0)=0,\qquad x_1'(0)=x_2'(0)=v_0.$$

How far do the cars travel before stopping?

36. Railway cars. Repeat Problem 35 under the assumption that car 1 is shielded from air resistance by car 2, so now c1=0. Show that, before stopping, the cars travel twice as far as those of Problem 35.

37–46. Use the method of Examples 7 and 8 to find the Jordan normal form J of each coefficient matrix A given in Problems 23 through 32 (respectively).

7.6 Application Defective Eigenvalues and Generalized Eigenvectors

A typical computer algebra system can calculate both the eigenvalues of a given matrix A and the linearly independent (ordinary) eigenvectors associated with each eigenvalue. For instance, consider the 4×4 matrix

of Problem 31 in this section. When the matrix A has been entered, the Maple calculation

with(linalg): eigenvectors(A);

[1, 4, {[-1, 0, 1, 1], [0, 1, 3, 0]}]

or the Mathematica calculation

Eigensystem[A]

{{1,1,1,1},

{{-3,-1,0,3}, {0,1,3,0}, {0,0,0,0}, {0,0,0,0}}}

reveals that the matrix A in Eq. (1) has the single eigenvalue λ=1 of multiplicity 4 with only two independent associated eigenvectors v1 and v2. The Matlab command

[V, D] = eig(sym(A))

provides the same information. The eigenvalue λ=1 therefore has defect d=2. If B=A−(1)I, you should find that $\mathbf{B}^2\neq\mathbf{0}$ but $\mathbf{B}^3=\mathbf{0}$. If

then {u1,u2,u3} should be a length 3 chain of generalized eigenvectors based on the ordinary eigenvector u3 (which should be a linear combination of the original eigenvectors v1 and v2). Use your computer algebra system to carry out this construction, and finally write four linearly independent solutions of the linear system x′=Ax.
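For readers without a computer algebra system at hand, the same construction can be sketched in plain Python with exact integers. The matrix below is the coefficient matrix of Problem 31, with the (1,3) entry taken as 4 so that the eigenvectors printed above satisfy Av=v. The starting vector `u1` is a hypothetical choice of ours, since the vectors intended here are not displayed in this text; any u1 with B²u1≠0 would serve.

```python
A = [[35, -12, 4, 30],
     [22, -8, 3, 19],
     [-10, 3, 0, -9],
     [-27, 9, -3, -23]]
n = len(A)
B = [[A[i][j] - (1 if i == j else 0) for j in range(n)] for i in range(n)]  # A - I

def matmul(X, Y):
    """Matrix product of two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*Y)]
            for row in X]

def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

B2 = matmul(B, B)
B3 = matmul(B2, B)
print(any(any(row) for row in B2), any(any(row) for row in B3))  # B^2 != 0, B^3 == 0

u1 = [1, 0, 0, 0]          # hypothetical top of the chain
u2 = matvec(B, u1)
u3 = matvec(B, u2)         # should be an ordinary eigenvector
print(u2, u3, matvec(B, u3))
```

With this choice, u3 equals −42 times [−1, 0, 1, 1] plus 7 times [0, 1, 3, 0], so it is indeed a combination of the two eigenvectors reported by the Maple calculation.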

For a more exotic matrix to investigate, consider Matlab’s gallery(5) example matrix

Use appropriate commands like those illustrated here to show that A has a single eigenvalue λ=0 of multiplicity 5 and defect 4. Noting that A−(0)I=A, you should find that $\mathbf{A}^4\neq\mathbf{0}$ but that $\mathbf{A}^5=\mathbf{0}$. Hence calculate the vectors

You should find that u5 is a nonzero vector such that Au5=0, and is therefore an (ordinary) eigenvector of A associated with the eigenvalue λ=0. Thus {u1,u2,u3,u4,u5} is a length 5 chain of generalized eigenvectors of the matrix A in Eq. (2), and you can finally write five linearly independent solutions of the linear system x′=Ax.