In ERP systems, handling high-volume integrations asynchronously is a common and important practice. Dynamics 365 for Finance and Operations lets us export or import data in files on a recurring schedule. This integration pattern is built on data entities, the data management platform, and RESTful batch data APIs.

The following diagram shows the batch data API conceptual architecture in Dynamics 365 for Finance and Operations:

As we can see, there are two sets of APIs at the top: the recurring integration API and the data package API.

The following table summarizes the key differences between the two APIs so that you can decide which one works best for your integration scenario:

| Key point | Recurring integration API | Data package API |
| --- | --- | --- |
| Scheduling | Scheduling in Finance and Operations | Scheduling outside Finance and Operations |
| Format | Files and data packages | Only data packages |
| Transformation | XSLT support in Finance and Operations | Transformations outside of Finance and Operations |
| Supported protocols | SOAP and REST | REST |
| Availability | Cloud only | Cloud and on-premise |

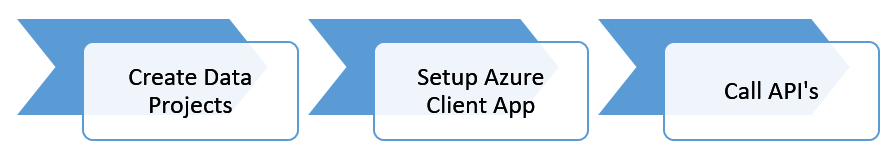

The following diagram describes the process of setting up and consuming the recurring integration using RESTful services:

As highlighted in the preceding diagram, setting up and consuming the batch data APIs in Dynamics 365 for Finance and Operations involves the following steps:

- Create data projects: To use the batch data APIs, we first need to set up data projects. This step involves creating the data project for export or import, adding the required data entities with the appropriate source file format, and defining the mapping.

- Set up the client application: The next step is to set up a client application. Both the recurring integration and package APIs use the OAuth 2.0 authentication model. Before the client application can consume these endpoints, it must be registered in Microsoft Azure AD, granted permission, and whitelisted in Dynamics 365 for Finance and Operations.

- Call the APIs: Now, the third-party application or middleware system can use the RESTful APIs to send and receive messages.
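To make the client application step more concrete, the following is a minimal sketch of how a service-to-service client might prepare an OAuth 2.0 token request. It assumes the client credentials grant and the Azure AD v1 token endpoint, neither of which is prescribed by the steps above; `build_token_request` and `bearer_header` are hypothetical helper names, and the tenant, client ID, and secret are placeholders. The request is only built here, not sent.

```python
from urllib.parse import urlencode
from urllib.request import Request


def build_token_request(tenant: str, client_id: str,
                        client_secret: str, resource: str) -> Request:
    """Build (but do not send) a client-credentials token request.

    Assumption: the app is registered in Azure AD and uses the v1
    token endpoint with a `resource` parameter.
    """
    url = f"https://login.microsoftonline.com/{tenant}/oauth2/token"
    form = urlencode({
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
        # Assumed: the resource is the environment's base URL without a trailing slash.
        "resource": resource.rstrip("/"),
    }).encode("ascii")
    return Request(
        url,
        data=form,
        method="POST",
        headers={"Content-Type": "application/x-www-form-urlencoded"},
    )


def bearer_header(access_token: str) -> dict:
    """Return the Authorization header to attach to every API call."""
    return {"Authorization": f"Bearer {access_token}"}
```

Passing the built request to `urllib.request.urlopen` should return a JSON document whose `access_token` field is then supplied to `bearer_header` for the subsequent API calls.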

The following table describes the integration APIs that are available for recurring integration:

| Type | API name | Description |
| --- | --- | --- |
| Import | Enqueue | Submit the files for import |
| Import | Status | Get the status of import operations |
| Export | Dequeue | Get the file's content for export activities |
| Export | Ack | Acknowledge the dequeue operation |
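As an illustration of how a client might address these four operations, here is a small sketch of URL builders. The `/api/connector/...` paths, the `entity` and `jobId` query parameters, and the idea that each recurring job is addressed by an activity ID are assumptions based on Microsoft's public documentation rather than on the table above, so verify them against your environment. All function names are hypothetical.

```python
from typing import Optional
from urllib.parse import quote, urlencode

# Assumed path prefix of the recurring integration endpoints.
CONNECTOR_PATH = "/api/connector"


def _activity_url(base_url: str, operation: str, activity_id: str) -> str:
    """Join the environment URL, the operation name, and the activity ID."""
    return f"{base_url.rstrip('/')}{CONNECTOR_PATH}/{operation}/{quote(activity_id)}"


def enqueue_url(base_url: str, activity_id: str, entity: Optional[str] = None) -> str:
    """URL to POST a file to for import; `entity` names the target data entity."""
    url = _activity_url(base_url, "enqueue", activity_id)
    if entity:
        # quote_via=quote encodes spaces as %20 instead of '+'.
        url += "?" + urlencode({"entity": entity}, quote_via=quote)
    return url


def status_url(base_url: str, activity_id: str, job_id: str) -> str:
    """URL to GET the status of an import; `job_id` is the ID returned by enqueue."""
    query = urlencode({"jobId": job_id}, quote_via=quote)
    return f"{_activity_url(base_url, 'jobstatus', activity_id)}?{query}"


def dequeue_url(base_url: str, activity_id: str) -> str:
    """URL to GET the next exported file from."""
    return _activity_url(base_url, "dequeue", activity_id)


def ack_url(base_url: str, activity_id: str) -> str:
    """URL to POST the dequeue response to, acknowledging receipt."""
    return _activity_url(base_url, "ack", activity_id)
```

On the export side, the client would typically call the dequeue URL, download the file, and then post to the ack URL so that the same message is not delivered again.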

The following table describes the list of APIs that are available when using package APIs:

| Type | API name | Description |
| --- | --- | --- |
| Import | GetAzureWriteUrl | Gets a writable blob URL. |
| Import | ImportFromPackage | Initiates an import from the data package that is uploaded to the blob storage. |
| Import | GetImportStagingErrorFileUrl | Gets the URL of the error file containing the input records that failed at the source-to-staging step of the import for a single entity. |
| Import | GenerateImportTargetErrorKeysFile | Generates an error file containing the keys of the import records that failed at the staging-to-target step of the import for a single entity. |
| Import | GetImportTargetErrorKeysFileUrl | Gets the URL of the error file that contains the keys of the import records that failed at the staging-to-target step of the import for a single entity. |
| Export | ExportToPackage | Exports a data package. |
| Export | GetExportedPackageUrl | Gets the URL of the data package that was exported by a call to ExportToPackage. |
| Status check | GetExecutionSummaryStatus | Checks the status of a data project execution job for both export and import APIs. |
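Because scheduling lives outside Finance and Operations when we use the package APIs, the caller has to orchestrate the calls itself. The following sketch shows one possible export flow: start the export, poll the status, and then fetch the package URL. The OData action path, the payload field names, and the status values are assumptions taken from Microsoft's public documentation, not from the table above. `post` is a hypothetical callable that sends an authenticated POST with a JSON payload and returns the response value, which keeps the sketch independent of any HTTP library.

```python
import time
from typing import Any, Callable

# Assumed OData action prefix for the package APIs.
ACTION_PREFIX = "/data/DataManagementDefinitionGroups/Microsoft.Dynamics.DataEntities."

# Assumed status values that mean the job has stopped running.
TERMINAL_STATUSES = {"Succeeded", "PartiallySucceeded", "Failed", "Canceled"}


def action_url(base_url: str, action: str) -> str:
    """Build the URL of a package API action such as ExportToPackage."""
    return f"{base_url.rstrip('/')}{ACTION_PREFIX}{action}"


def export_package(post: Callable[[str, dict], Any], base_url: str,
                   data_project: str, package_name: str, legal_entity: str,
                   poll_seconds: float = 5.0, max_polls: int = 60) -> str:
    """Run an export and return the download URL of the resulting package."""
    # 1. Start the export; the call is assumed to return an execution ID.
    execution_id = post(action_url(base_url, "ExportToPackage"), {
        "definitionGroupId": data_project,
        "packageName": package_name,
        "executionId": "",  # empty: let the service generate one
        "reExecute": False,
        "legalEntityId": legal_entity,
    })

    # 2. Poll until the job reaches a terminal status or we give up.
    for _ in range(max_polls):
        status = post(action_url(base_url, "GetExecutionSummaryStatus"),
                      {"executionId": execution_id})
        if status in TERMINAL_STATUSES:
            break
        time.sleep(poll_seconds)
    else:
        raise TimeoutError(f"Export {execution_id} did not finish in time")

    if status != "Succeeded":
        raise RuntimeError(f"Export {execution_id} ended with status {status}")

    # 3. Ask for the blob URL of the exported package.
    return post(action_url(base_url, "GetExportedPackageUrl"),
                {"executionId": execution_id})
```

An import could follow the same shape in reverse: call GetAzureWriteUrl, upload the package to the returned blob URL, call ImportFromPackage, and poll GetExecutionSummaryStatus in the same way.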

The Microsoft product team has published a sample console application on GitHub that showcases the data import and data export methods. For more information, go to https://github.com/Microsoft/Dynamics-AX-Integration/tree/master/FileBasedIntegrationSamples/ConsoleAppSamples.