We proceed similarly to create the object for the table, which is also approximated by a box. Therefore, we simply remove any previous object and add the table. In this case, the object name is table:

# Retrieve params:

self._table_object_name = rospy.get_param('~table_object_name',

'table')

# Clean the scene: self._scene.remove_world_object(self._table_object_name) # Add table and Coke can objects to the planning scene: self._pose_table = self._add_table(self._table_object_name)

The _add_table method adds the table to the planning scene:

def _add_table(self, name):

p = PoseStamped()

p.header.frame_id = self._robot.get_planning_frame()

p.header.stamp = rospy.Time.now()

p.pose.position.x = 1.0

p.pose.position.y = 0.0

p.pose.position.z = 1.0

q = quaternion_from_euler(0.0, 0.0, numpy.deg2rad(90.0))

p.pose.orientation = Quaternion(*q)

self._scene.add_box(name, p, (1.5, 0.8, 0.03))

returnp.pose

We can visualize the planning scene objects in RViz running the following commands:

$ roslaunch rosbook_arm_gazebo rosbook_arm_grasping_world.launch

$ roslaunch rosbook_arm_moveit_config moveit_rviz.launch config:=true

$ roslaunch rosbook_arm_pick_and_place grasp_generator_server.launch

$ rosrun rosbook_arm_pick_and_place pick_and_place.py

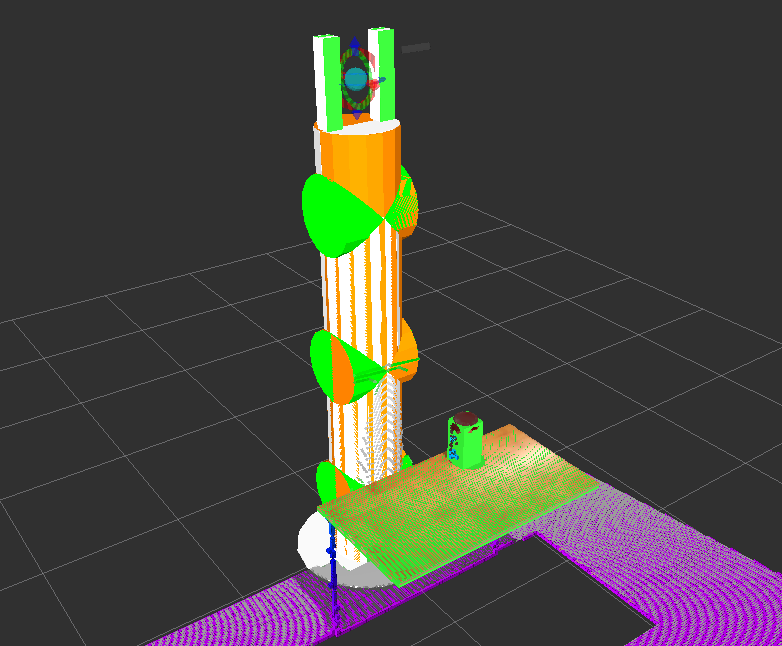

This actually runs the whole pick and place task, which we will continue to explain later. Right after starting the pick_and_place.py program, you will see the boxes that model the table and the can of Coke in green, matching perfectly with the point cloud seen by the RGB-D sensor, as shown in the following figure:

Point cloud seen by the RGB-D sensor of the environment