Let's go through how we can run the image recognition node.

First, plug in a UVC webcam, such as the one we used in Chapter 20, Face Detection and Tracking Using ROS, OpenCV and Dynamixel Servos. Then run roscore:

$ roscore

Run the webcam driver:

$ rosrun cv_camera cv_camera_node

Run the image recognition node with the following command, which remaps the node's image topic to the camera driver's image topic:

$ python image_recognition.py image:=/cv_camera/image_raw
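The `image:=/cv_camera/image_raw` argument is a ROS name remapping. It connects the topic the node calls `image` to the `/cv_camera/image_raw` topic published by the camera driver.

The sketch below uses only the standard library to show how arguments of the form `name:=value` can be separated from ordinary command-line arguments. The helper `split_remappings` is hypothetical. It follows the same rule ROS applies to remapping arguments but is not rospy's implementation. It also shows why the remapping must be a single token with no space after `:=`.

```python
import shlex


def split_remappings(argv):
    """Split argv into (remappings, other_args).

    Hypothetical helper: a token of the form 'name:=value' is treated as a
    remapping. A token with an empty side (e.g. 'image:=') is dropped.
    """
    remappings = {}
    other_args = []
    for arg in argv:
        if ":=" in arg:
            src, dst = (part.strip() for part in arg.split(":=", 1))
            if src and dst:
                remappings[src] = dst
            # An incomplete remapping such as 'image:=' is silently ignored.
        else:
            other_args.append(arg)
    return remappings, other_args


if __name__ == "__main__":
    good = shlex.split("python image_recognition.py image:=/cv_camera/image_raw")
    bad = shlex.split("python image_recognition.py image:= /cv_camera/image_raw")
    # Skip 'python' and the script name, then inspect the remaining arguments.
    print(split_remappings(good[2:]))
    print(split_remappings(bad[2:]))
```

With the space, the shell produces two separate tokens, `image:=` and `/cv_camera/image_raw`. The remapping is therefore lost, and the topic name ends up as a stray positional argument.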

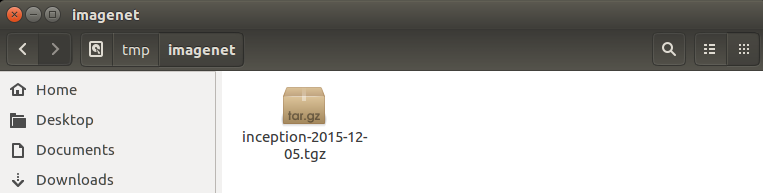

When we run the recognition node, it downloads the Inception model and extracts it into the /tmp/imagenet folder. You can also do this manually by downloading Inception-v3 from the following link:

http://download.tensorflow.org/models/image/imagenet/inception-2015-12-05.tgz

You can copy this file into the /tmp/imagenet folder:

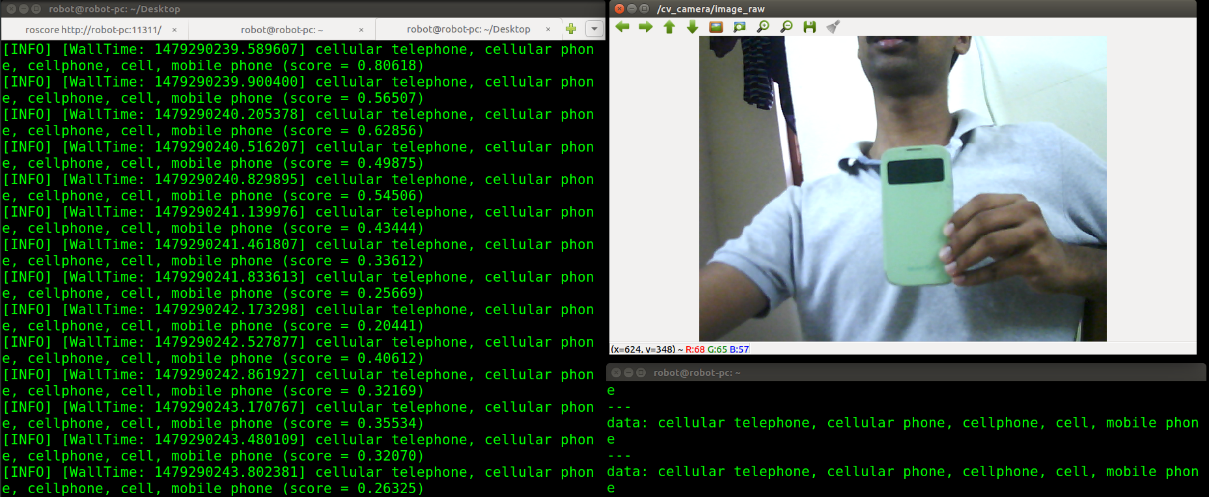
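The download-or-reuse step can be sketched in a few lines of standard-library Python. This version is modeled on the `maybe_download_and_extract()` function in TensorFlow's `classify_image.py` tutorial script. It assumes the recognition node behaves the same way: it downloads the archive only when it is not already in the model directory, then extracts it in place. The function name and parameters here are illustrative, not taken from the node's source.

```python
import os
import tarfile
import urllib.request

# URL and folder taken from the text above.
DATA_URL = "http://download.tensorflow.org/models/image/imagenet/inception-2015-12-05.tgz"
MODEL_DIR = "/tmp/imagenet"


def maybe_download_and_extract(url=DATA_URL, model_dir=MODEL_DIR):
    """Download the model archive unless it is already in model_dir, then extract it.

    Returns the path of the archive.
    """
    os.makedirs(model_dir, exist_ok=True)
    filename = url.split("/")[-1]
    filepath = os.path.join(model_dir, filename)
    if not os.path.exists(filepath):
        # Only use the network when the archive has not been copied in manually.
        urllib.request.urlretrieve(url, filepath)
    # Unpack the gzipped tarball into the same directory.
    with tarfile.open(filepath, "r:gz") as archive:
        archive.extractall(model_dir)
    return filepath
```

Calling `maybe_download_and_extract()` with its defaults fetches the archive into /tmp/imagenet only if it is missing. A copy you placed there by hand is reused and simply extracted.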

You can see the result by echoing the following topic:

$ rostopic echo /result
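The text that appears on /result is a human-readable class label chosen from the model's output scores. The sketch below shows one way to do that post-processing. It assumes the model yields one score per class and that a lookup table maps each class index to a label. It then ranks the classes and keeps the best ones above a confidence threshold.

The function `top_predictions`, its `k` and `min_score` parameters, and the toy labels are all hypothetical. They are not taken from the node's source.

```python
def top_predictions(scores, labels, k=5, min_score=0.1):
    """Return up to k (label, score) pairs, best first, keeping only scores >= min_score.

    scores: sequence of per-class scores (e.g. softmax outputs)
    labels: dict mapping class index -> human-readable label
    """
    # Class indices sorted from highest to lowest score.
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    results = []
    for idx in ranked[:k]:
        if scores[idx] < min_score:
            break  # every remaining entry scores lower still
        results.append((labels.get(idx, "unknown"), scores[idx]))
    return results


if __name__ == "__main__":
    # Made-up scores and labels, for illustration only.
    toy_scores = [0.05, 0.80, 0.15]
    toy_labels = {0: "water bottle", 1: "cellular telephone", 2: "remote control"}
    for label, score in top_predictions(toy_scores, toy_labels):
        print("%s (score = %.5f)" % (label, score))
```

A node built this way would publish the first label (or each label) returned by `top_predictions` as a string message on its result topic.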

You can view the camera images using the following command:

$ rosrun image_view image_view image:=/cv_camera/image_raw

Here is the output from the recognizer; it identifies the device as a cell phone.

In the next detection, the object is detected as a water bottle: